Parakeet CTC Riva 0.6b

Important

NVIDIA NIM is currently in limited availability. Sign up here to get notified when the latest NIMs are available to download.

Parakeet CTC Riva NIM provides state-of-the-art automatic speech recognition (ASR) models, capable of transcribing spoken English with exceptional accuracy. Parakeet CTC Riva 0.6b en-US is a speech-to-text model that transcribes input speech into English-alphabet text. It is an XL version of the FastConformer CTC model.

Key benefits of Parakeet:

State-of-the-art accuracy: Superior WER performance across diverse audio sources and domains with strong robustness to non-speech segments.

Open-source and extensibility: Built on NVIDIA NeMo, allowing for seamless integration and customization.

Pre-trained checkpoints: Ready-to-use model for inference or fine-tuning.

Permissive license: Released under CC-BY-4.0 license, model checkpoints can be used in any commercial application.

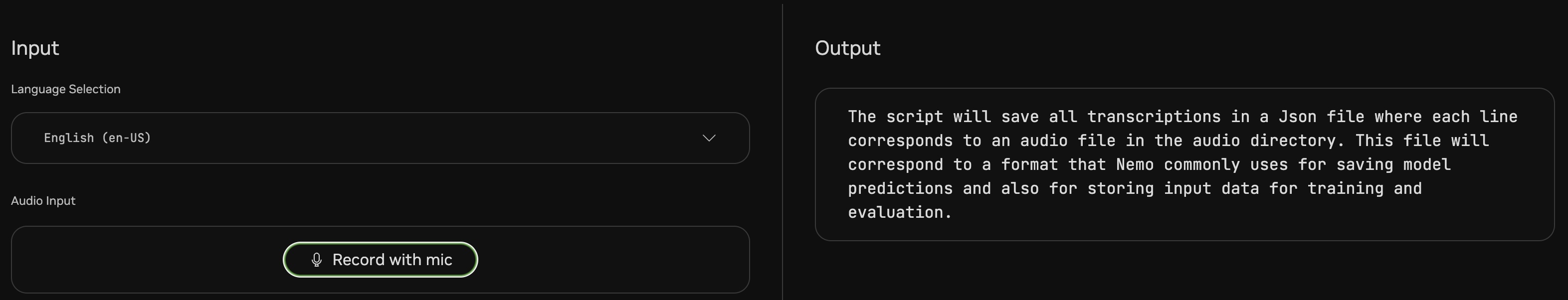

An example of input speech for AI-generated transcription

Note

A more detailed description of the model can be found in the Model Card.

Model Specific Requirements

The following are specific requirements for Parakeet CTC Riva NIM.

Important

Please refer to NVIDIA NIM documentation for necessary hardware, operating system, and software prerequisites if you have not done so already.

Hardware

H100 or A100 or L40 GPU

Software

Minimum NVIDIA Driver Version: 535
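The driver requirement can be checked before pulling anything by comparing the major version reported by nvidia-smi against 535. The following is a minimal sketch, not part of the NIM tooling: the helper name driver_meets_minimum is hypothetical, and it assumes nvidia-smi is on the PATH and reports versions in the form 535.104.05.

```shell
# Hypothetical helper: succeeds when the major driver version is >= the minimum.
# $1 = driver version string (e.g. 535.104.05), $2 = minimum major version (default 535)
driver_meets_minimum() {
  major="${1%%.*}"          # "535.104.05" -> "535"
  minimum="${2:-535}"
  case "$major" in
    ''|*[!0-9]*) return 1 ;;   # empty or non-numeric: treat as not satisfied
  esac
  [ "$major" -ge "$minimum" ]
}

# Query the installed driver only when nvidia-smi is available.
if command -v nvidia-smi >/dev/null 2>&1; then
  installed="$(nvidia-smi --query-gpu=driver_version --format=csv,noheader | head -n 1)"
  if driver_meets_minimum "$installed"; then
    echo "Driver $installed meets the minimum of 535"
  else
    echo "Driver '$installed' does not meet the minimum of 535" >&2
  fi
fi
```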

Once the above requirements have been met, use the Quickstart Guide to pull the NIM container, pull the GPU-specific model, and run the NIM.

Quickstart Guide

Note

This page assumes Prerequisite Software (Docker, NGC CLI, NGC registry access) is installed and set up.

Pull the NIM container.

docker pull nvcr.io/nvidia/nim/speech_nim:24.03

Set GPU_TYPE to indicate your GPU. For an H100 GPU, use h100x1. For an A100 GPU, use a100x1. For an L40 GPU, use l40x1.

export GPU_TYPE=h100x1

Prepare a directory for model download.

export MODEL_REPOSITORY=~/nim_model
mkdir -p ${MODEL_REPOSITORY}

Pull GPU-specific model.

ngc registry model download-version nvidia/nim/parakeet-ctc-riva-0-6b:en-us_${GPU_TYPE}_fp16_24.03 --dest ${MODEL_REPOSITORY}

Run the NIM container in detached mode.

docker run -d --rm --name riva-speech \
--runtime=nvidia -e CUDA_VISIBLE_DEVICES=0 \
--shm-size=1G \
-v ${MODEL_REPOSITORY}/parakeet-ctc-riva-0-6b_ven-us_${GPU_TYPE}_fp16_24.03:/config/models/parakeet-ctc-riva-0-6b-en-us \
-e MODEL_REPOS="--model-repository /config/models/parakeet-ctc-riva-0-6b-en-us" \
-p 50051:50051 \
nvcr.io/nvidia/nim/speech_nim:24.03 start-riva

Run the gRPC health check. It returns "status": "SERVING" when the service is ready for inference. It may take a few minutes for the service to start completely.

docker run --rm --net host fullstorydev/grpcurl -plaintext -H "content-type: application/grpc" 0.0.0.0:50051 grpc.health.v1.Health/Check

Once the service is ready, run inference with the provided sample speech file in the container.

docker exec riva-speech python3 /opt/riva/examples/transcribe_file.py --server 0.0.0.0:50051 --input-file /opt/riva/wav/en-US_sample.wav

Available Models

| Version | GPU Model | Number of GPUs | Precision | Memory Footprint | File Size |
|---|---|---|---|---|---|
| en-us_h100x1_fp16_24.03 | H100 | 1 | FP16 | 6 GB | 2 GB |
| en-us_a100x1_fp16_24.03 | A100 | 1 | FP16 | 6 GB | 2 GB |
| en-us_l40x1_fp16_24.03 | L40 | 1 | FP16 | 6 GB | 2 GB |

Detailed Instructions

This section provides additional details outside of the scope of the Quickstart Guide.

Throughout these instructions, we will define bash variables that we will reuse:

MODEL_DIRECTORY=~/nim_model
mkdir -p ${MODEL_DIRECTORY}

# Set GPU_TYPE to indicate your GPU. For H100 GPU, use "h100x1". For A100 GPU, use "a100x1". For L40, use "l40x1".
export GPU_TYPE=h100x1
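Instead of setting GPU_TYPE by hand, the value can be derived from the GPU name that nvidia-smi reports. This is a sketch under assumptions: the helper gpu_type_from_name is hypothetical, the name patterns are guesses at how the product names appear, and only the single-GPU values listed in this guide are covered.

```shell
# Hypothetical helper: map a GPU product name to the GPU_TYPE values used in this guide.
gpu_type_from_name() {
  case "$1" in
    *H100*) echo "h100x1" ;;
    *A100*) echo "a100x1" ;;
    # Note: this pattern also matches "L40S"; whether the l40x1 build suits
    # an L40S is not confirmed by this guide.
    *L40*)  echo "l40x1" ;;
    *)      return 1 ;;
  esac
}

# Detect the first GPU only when nvidia-smi is available.
if command -v nvidia-smi >/dev/null 2>&1; then
  gpu_name="$(nvidia-smi --query-gpu=name --format=csv,noheader | head -n 1)"
  if detected="$(gpu_type_from_name "$gpu_name")"; then
    export GPU_TYPE="$detected"
    echo "GPU_TYPE=$GPU_TYPE"
  else
    echo "No model build listed in this guide for: $gpu_name" >&2
  fi
fi
```

If the detection fails, GPU_TYPE is left unchanged, so a value exported manually still applies.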

Pull Container Image

Container image tags follow the versioning of YY.MM, similar to other container images on NGC. You may see different values under “Tags:”. These docs were written based on the latest available at the time.

ngc registry image info nvcr.io/nvidia/nim/speech_nim

Image Repository Information
Name: speech_nim
Display Name: speech_nim
Short Description: RIVA NIM
Built By:
Publisher:
Multinode Support: False
Multi-Arch Support: False
Logo:
Labels: NVIDIA AI Enterprise Supported
Public: No
Access Type:
Associated Products: []
Last Updated: Mar 14, 2024
Latest Image Size: 6.38 GB
Signed Tag?: False
Latest Tag: 24.03
Tags:
    24.03
    24.03nightly

Pull the container image using either Docker or the NGC CLI.

docker pull nvcr.io/nvidia/nim/speech_nim:24.03

ngc registry image pull nvcr.io/nvidia/nim/speech_nim:24.03

Pull GPU-specific Model

Model tags follow the versioning of repository:version. The model is called parakeet-ctc-riva-0-6b and the version follows the naming pattern <LANG_CODE>_<GPU_TYPE>x<NUM_GPUS>_<precision>_YY.MM.x. Additional versions are available and can be listed with the following NGC CLI command:

ngc registry model list nvidia/nim/parakeet-ctc-riva-0-6b:*

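Pull the model optimized for your specific GPU. Make sure you have set GPU_TYPE as described in the previous section. The --dest option places the download in MODEL_DIRECTORY, which is where the launch command expects to find it.

ngc registry model download-version nvidia/nim/parakeet-ctc-riva-0-6b:en-us_${GPU_TYPE}_fp16_24.03 --dest ${MODEL_DIRECTORY}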
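The version tag and the directory that the launch command mounts are built from the same pieces, so composing both in one place helps avoid typos. The sketch below uses hypothetical helper names (model_version, model_download_dir). The <model>_v<version> directory layout is inferred from the mount path used in this guide, not taken from NGC documentation.

```shell
# Hypothetical helpers for composing the names used in this guide.
MODEL_NAME="parakeet-ctc-riva-0-6b"

# Build a version tag such as en-us_h100x1_fp16_24.03.
# $1 = language code, $2 = GPU_TYPE (e.g. h100x1), $3 = precision, $4 = release (YY.MM)
model_version() {
  printf '%s_%s_%s_%s\n' "$1" "$2" "$3" "$4"
}

# Directory name mounted by the launch command in this guide: <model>_v<version>.
model_download_dir() {
  printf '%s_v%s\n' "$MODEL_NAME" "$1"
}

version="$(model_version en-us "${GPU_TYPE:-h100x1}" fp16 24.03)"
echo "Model tag:     nvidia/nim/${MODEL_NAME}:${version}"
echo "Download dir:  $(model_download_dir "$version")"
```

After the download, checking that the printed directory exists under MODEL_DIRECTORY confirms that the volume mount in the next step will resolve.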

Launch Microservice

Launch the container. Start-up may take a couple of minutes until the service is available.

docker run -d --rm --name riva-speech \
--runtime=nvidia -e CUDA_VISIBLE_DEVICES=0 \
--shm-size=1G \
-v ${MODEL_DIRECTORY}/parakeet-ctc-riva-0-6b_ven-us_${GPU_TYPE}_fp16_24.03:/config/models/parakeet-ctc-riva-0-6b-en-us \
-e MODEL_REPOS="--model-repository /config/models/parakeet-ctc-riva-0-6b-en-us" \
-p 50051:50051 \
nvcr.io/nvidia/nim/speech_nim:24.03 start-riva

This starts the service with a gRPC endpoint in a detached container named riva-speech. Refer to Riva ASR APIs for API documentation.

(Optional): Container logs can be checked using the command below.

docker logs riva-speech

You should see a log similar to the one shown below, which indicates the successful deployment of the service.

I0306 10:40:55.868216 253 riva_server.cc:171] Riva Conversational AI Server listening on 0.0.0.0:50051
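For scripted deployments, the same log line can be detected with grep rather than by eye. This is a small sketch with a hypothetical helper name (riva_is_listening). It assumes the log message text matches the sample above, and the gRPC health check remains the more reliable readiness signal.

```shell
# Hypothetical helper: reads log text on stdin and succeeds if the
# server has reported that it is listening.
riva_is_listening() {
  grep -q "Riva Conversational AI Server listening on"
}

# Example (requires the riva-speech container from this guide):
#   docker logs riva-speech 2>&1 | riva_is_listening && echo "server is listening"
```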

Health and Liveness Checks

The container exposes a gRPC health service for integration into existing systems such as Kubernetes. Remember, it may take a few minutes to load the model and initialize the service completely.

docker run --rm --net host fullstorydev/grpcurl -plaintext -H "content-type: application/grpc" 0.0.0.0:50051 grpc.health.v1.Health/Check

Once the service is ready for inference, the above command will show the below output.

{
  "status": "SERVING"
}
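Because start-up can take a few minutes, a script usually needs to repeat the health check until it reports SERVING. The following is one possible sketch: wait_for_serving is a hypothetical helper, and the attempt count and delay are arbitrary values to tune for your environment.

```shell
# Hypothetical helper: run a health-check command repeatedly until its
# output contains "status": "SERVING".
# $1 = max attempts, $2 = seconds between attempts, remaining args = command to run
wait_for_serving() {
  attempts="$1"; delay="$2"; shift 2
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # The quote before SERVING keeps "NOT_SERVING" from matching.
    if "$@" 2>/dev/null | grep -Eq '"status":[[:space:]]*"SERVING"'; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}

# Example with the health check from this guide (up to 60 tries, 5 seconds apart):
#   wait_for_serving 60 5 docker run --rm --net host fullstorydev/grpcurl -plaintext \
#     -H "content-type: application/grpc" 0.0.0.0:50051 grpc.health.v1.Health/Check
```

The helper returns a non-zero status if the service never reports SERVING, so it can gate later steps such as running inference.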

Run Inference

Interacting with the Parakeet CTC Riva NIM requires a Python client that sends audio data to the NIM. The NIM container includes the Python client, sample code, and sample data, which you can use to test the NIM by running python3 /opt/riva/examples/transcribe_file.py --server 0.0.0.0:50051 --input-file /opt/riva/wav/en-US_sample.wav inside the container. The following instructions describe how to install and use the Riva Python client outside of the container in your local environment.

Install the Riva Python client package

Install pip if required

sudo apt-get install python3-pip

Install Riva client package

pip install nvidia-riva-client

Download Riva sample client

cd ${MODEL_DIRECTORY}
git clone https://github.com/nvidia-riva/python-clients.git

Run Speech to Text inference

If you do not have a speech file available, you can use the sample speech file provided in the container. The Riva server supports mono, 16-bit audio in WAV, OPUS, and FLAC formats.

cd ${MODEL_DIRECTORY}

# Copy sample speech file from container to host
docker cp riva-speech:/opt/riva/wav/en-US_sample.wav .
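Before sending your own WAV recording, it can help to confirm that it is mono and 16-bit, since those are the properties the server expects. The sketch below reads the two relevant header fields directly. The helpers wav_u16 and is_mono_16bit_wav are hypothetical, and the byte offsets assume a canonical 44-byte PCM header with the fmt chunk immediately after RIFF/WAVE. Files with extra chunks, and OPUS or FLAC files, need a dedicated audio tool instead.

```shell
# Hypothetical helper: print the little-endian 16-bit value at byte offset $2 of file $1.
wav_u16() {
  bytes="$(od -An -tu1 -j"$2" -N2 "$1" 2>/dev/null)"
  set -- $bytes                      # split into two decimal byte values
  [ "$#" -eq 2 ] || return 1         # file too short or unreadable
  echo $(( $1 + $2 * 256 ))
}

# Hypothetical helper: succeeds if the file looks like a mono, 16-bit WAV.
# Assumes a canonical header: channels at offset 22, bits per sample at offset 34.
is_mono_16bit_wav() {
  [ "$(head -c 4 "$1" 2>/dev/null)" = "RIFF" ] || return 1
  channels="$(wav_u16 "$1" 22)" || return 1
  bits="$(wav_u16 "$1" 34)" || return 1
  [ "$channels" -eq 1 ] && [ "$bits" -eq 16 ]
}

# Example:
#   is_mono_16bit_wav en-US_sample.wav && echo "format looks compatible"
```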

Run the streaming inference client. The input speech file is streamed to the service chunk by chunk, and the transcript returned by the service is printed on the terminal.

python3 python-clients/scripts/asr/transcribe_file.py --server 0.0.0.0:50051 --input-file en-US_sample.wav --language-code en-US

The above command prints the transcript as shown below.

## what is natural language processing

Stopping the Container

When you’re done testing the endpoint, you can bring down the container by running the below command.

docker stop riva-speech

More Information

This NIM supports only the Parakeet CTC 0.6b model on H100, A100, and L40S. We will be expanding support for models and GPUs in the future. For deployment on other GPUs or deployment of other ASR models, refer to NVIDIA Riva, which is a production-grade, GPU-accelerated inferencing solution for ASR available today.