Distributed Training with PyTorch

Learn how to run distributed training with PyTorch in DGX Cloud Lepton.

For batch jobs, some frameworks like PyTorch, TensorFlow, and Horovod support distributed training. DGX Cloud Lepton also supports distributed training for these frameworks.

Here is an example of running a distributed PyTorch job with two workers on DGX Cloud Lepton.

Prepare the Python script for distributed training

As an example, this script implements distributed training of a convolutional neural network (CNN) on the MNIST dataset using PyTorch's DistributedDataParallel (DDP) to leverage multiple GPUs in parallel.

The file has been saved in the GitHub repository here.

Create Job through Dashboard

Head over to the Batch Jobs page, and follow the steps below to create a job.

Set up the job

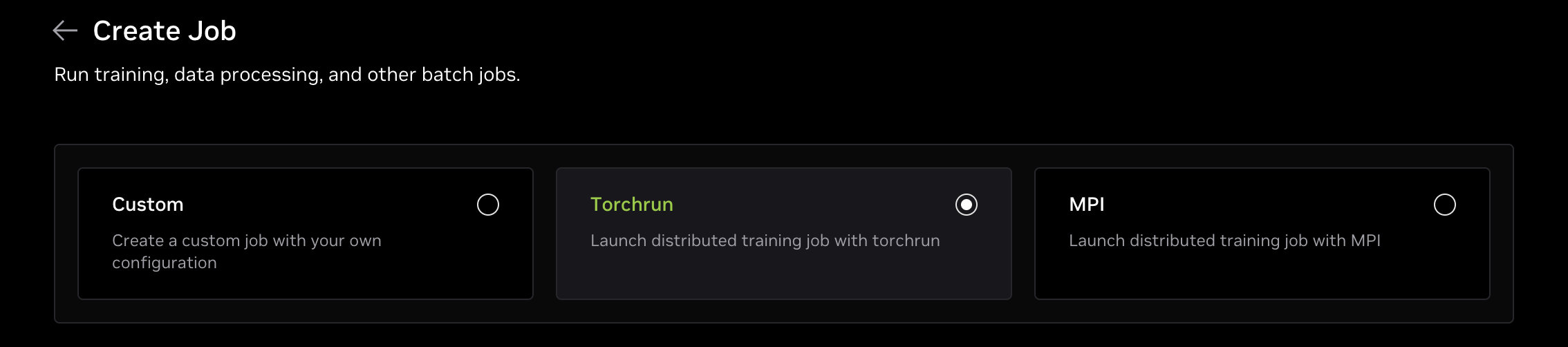

Select Job Type

Select Torchrun on the top of the create job page.

Resource

In the resource section, first select which node group you want to use.

Select the resource type you want to use, for example, gpu.8xh100-sxm, and set the number of workers to the desired number.

In this guide, we want to use 2 replicas, so we set the number of workers to 2.

Container

In the container section, use the image (nvcr.io/nvidia/pytorch:25.08-py3) and paste the following command as the Torchrun Command to run the job:

Create and Monitor

Now you can click the Create button to create and run the job. After that, you can check the job logs or details to monitor the job.

Within the job details page, you can see the status of each worker and the logs for each worker. You can also use the Web Terminal to connect to the worker node and check the worker's status. Once the job is finished, you will see the job with a Completed state.