Note: SW Release Applicability: This sample is available in both NVIDIA DriveWorks and NVIDIA DRIVE Software releases.

Description

The Structure from Motion (SFM) sample demonstrates the triangulation functionality of the SFM module; a car pose is estimated entirely from CAN data using the NVIDIA® DriveWorks egomotion module. The car has a 4-fisheye camera rig that is pre-calibrated. Features are detected and tracked using the features module. Points are triangulated for each frame by using the estimated pose and tracked features.
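To illustrate the triangulation step described above, here is a minimal sketch of midpoint triangulation from two known camera positions and two viewing rays toward the same tracked feature. It is a generic textbook method, not necessarily the one the SFM module uses, and the function name, inputs, and example values are hypothetical.

```python
def dot(u, v):
    """Dot product of two 3-vectors."""
    return sum(a * b for a, b in zip(u, v))


def triangulate_midpoint(c1, d1, c2, d2, eps=1e-9):
    """Triangulate a 3D point from two rays (assumed inputs, not a DriveWorks API).

    c1, c2: camera centers in world coordinates (from the estimated pose).
    d1, d2: ray directions in world coordinates (from the tracked feature).
    Returns the midpoint of the shortest segment joining the rays, or None
    if the rays are nearly parallel or the point lies behind a camera.
    """
    w = [p - q for p, q in zip(c1, c2)]
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w), dot(d2, w)
    denom = a * c - b * b
    if denom <= eps * a * c:
        return None  # rays are (nearly) parallel: no reliable depth
    s = (b * e - c * d) / denom  # distance parameter along ray 1
    t = (a * e - b * d) / denom  # distance parameter along ray 2
    if s <= 0 or t <= 0:
        return None  # intersection would be behind one of the cameras
    p1 = [ci + s * di for ci, di in zip(c1, d1)]
    p2 = [ci + t * di for ci, di in zip(c2, d2)]
    return [(u + v) / 2 for u, v in zip(p1, p2)]


# Hypothetical example: the car moved 1 m along x between two frames.
point = triangulate_midpoint(
    (0.0, 0.0, 0.0), (2.0, 1.0, 5.0),
    (1.0, 0.0, 0.0), (1.0, 1.0, 5.0),
)
print(point)
```

The parallel-ray check matters in practice: when the car barely moves between frames, the baseline is short and the triangulated depth becomes unreliable.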

Running the Sample

The Structure from Motion sample, sample_sfm, accepts the following optional parameters. If none are specified, it processes the four supplied pre-recorded videos.

./sample_sfm --baseDir=[path/to/rig/dir]
             --rig=[rig.json]
             --dbc=[canbus_file]
             --dbcSpeed=[signal_name]
             --dbcSteering=[signal_name]
             --maxFeatureCount=[integer]

where

--baseDir=[path/to/rig/dir]

Path to the folder containing the rig.json file.

Default value: path/to/data/samples/sfm/triangulation

--rig=[rig.json]

A `rig.json` file as serialized by the DriveWorks rig module, or as produced by the DriveWorks calibration tool.

The rig must include the 4 camera sensors and one CAN sensor.

The rig sensors must contain valid protocol and parameter properties to open the virtual sensors.

The video files must be H.264 streams.

Video containers such as MP4, AVI, and MKV are not supported.

The rig file also points to a video timestamps text file where each row contains the frame index (starting at 1) and the timestamp for all cameras. It is read by the camera virtual sensor.

Default value: rig.json

--dbc=[canbus_file]

The CAN bus file that is required by the canbus virtual sensor.

Default value: canbus.dbc

--dbcSpeed=[signal_name]

Name of the signal corresponding to the car speed in the given dbc file.

Default value: M_SPEED.CAN_CAR_SPEED

--dbcSteering=[signal_name]

Name of the signal corresponding to the steering angle in the given dbc file.

Default value: M_STEERING.CAN_CAR_STEERING

--maxFeatureCount=[integer]

The maximum number of features stored for tracking.

Default value: 2000
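As a usage illustration, the following sketch assembles a sample_sfm command line from the parameters listed above and rejects names the sample does not document. The helper name `build_command` and the path `/data/my_rig` are hypothetical; only the parameter names come from this page.

```python
import shlex

# Parameter names documented for sample_sfm on this page.
KNOWN_PARAMS = (
    "baseDir",
    "rig",
    "dbc",
    "dbcSpeed",
    "dbcSteering",
    "maxFeatureCount",
)


def build_command(executable="./sample_sfm", **overrides):
    """Return an argv list; parameters left out keep the sample's defaults."""
    unknown = sorted(set(overrides) - set(KNOWN_PARAMS))
    if unknown:
        raise ValueError(f"unknown parameter(s): {unknown}")
    return [executable] + [f"--{name}={value}" for name, value in overrides.items()]


# Hypothetical rig directory; replace with the folder holding your rig.json.
cmd = build_command(baseDir="/data/my_rig", maxFeatureCount=4000)
print(shlex.join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd)` from the directory that contains the sample binary.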

If a mouse is available, the left button rotates the 3D view, the right button translates it, and the mouse wheel zooms.

While the sample is running, the following commands are available:

- Press V to enable / disable pose estimation.

- Press F to enable / disable feature position prediction.

- Press Space to pause / resume execution.

- Press Q to switch between different camera views.

- Press R to restart playback.

Output

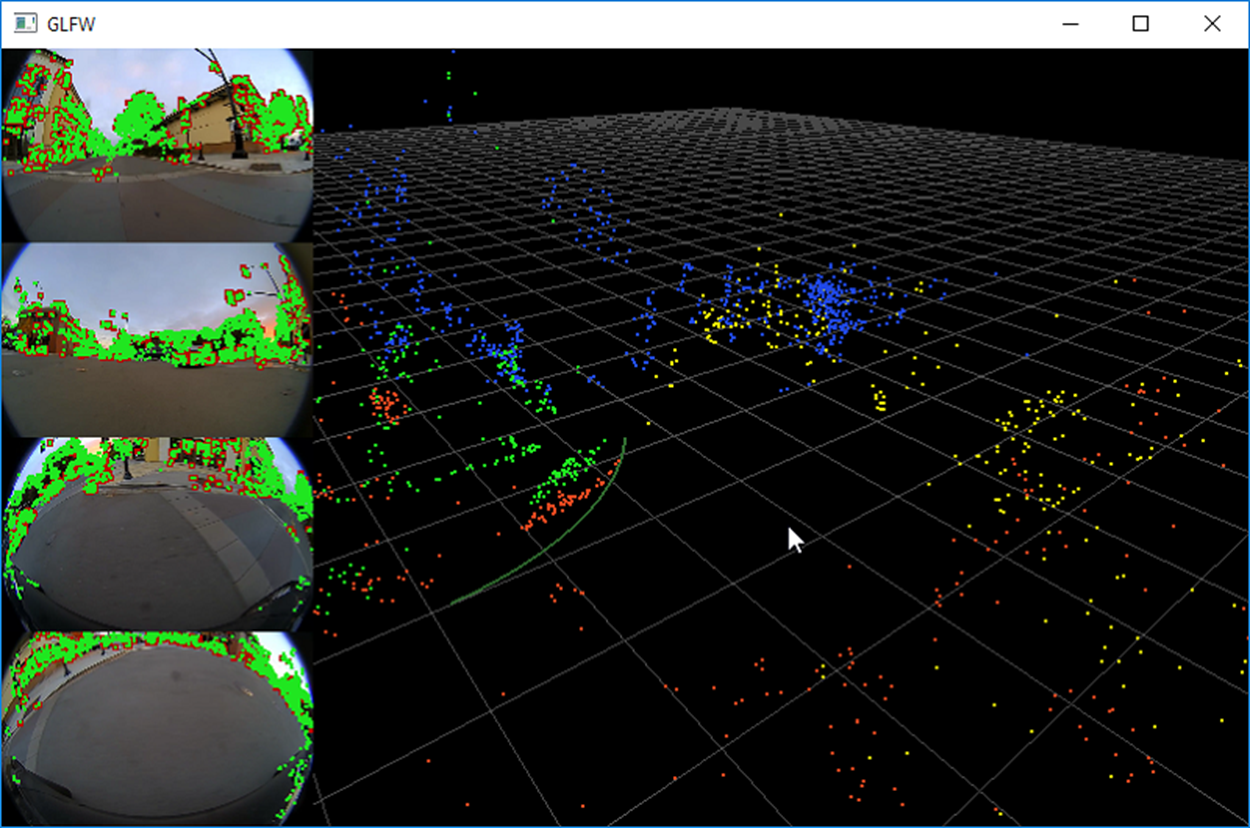

The left side of the screen shows the 4 input images; tracked features are shown in green. Triangulated points are reprojected back onto the camera and shown in red. The right side shows a 3D view of the triangulated point cloud.

In 3D, the colors are:

- Red points = points from front camera

- Green points = points from rear camera

- Blue points = points from left camera

- Yellow points = points from right camera

- Green/red line = path of the car
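The path of the car drawn in the 3D view comes from the pose estimated from CAN speed and steering data. As a rough illustration of how such a path could be integrated, the sketch below uses a kinematic bicycle model with explicit Euler steps. This is an assumption for illustration only: the egomotion module may use a different model, and the wheelbase value, the function name, and the interpretation of the steering signal as a front-wheel angle in radians are all hypothetical.

```python
import math


def integrate_path(samples, wheelbase_m=2.8):
    """Dead-reckon a 2D path from (dt_s, speed_mps, steering_rad) samples.

    Uses a kinematic bicycle model (an assumed model, not the DriveWorks one):
        x'       = v * cos(heading)
        y'       = v * sin(heading)
        heading' = v / wheelbase * tan(steering)
    Returns a list of (x, y, heading) tuples, starting at the origin.
    """
    x = y = heading = 0.0
    path = [(x, y, heading)]
    for dt, speed, steering in samples:
        # Explicit Euler step: position uses the heading from before the update.
        x += speed * math.cos(heading) * dt
        y += speed * math.sin(heading) * dt
        heading += speed / wheelbase_m * math.tan(steering) * dt
        path.append((x, y, heading))
    return path


# Hypothetical CAN samples: 1 s straight at 10 m/s, then 1 s turning slightly left.
samples = [(0.1, 10.0, 0.0)] * 10 + [(0.1, 10.0, 0.05)] * 10
for x, y, heading in integrate_path(samples)[::5]:
    print(f"x={x:6.2f} m  y={y:5.2f} m  heading={math.degrees(heading):5.1f} deg")
```

Because the pose comes from integration alone, small errors in speed or steering accumulate over time, which is one reason the triangulated points can drift on long sequences.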

Additional Information

For more details, see Structure from Motion (SFM).