- Note

- SW Release Applicability: This sample is available in NVIDIA DRIVE Software releases.

Description

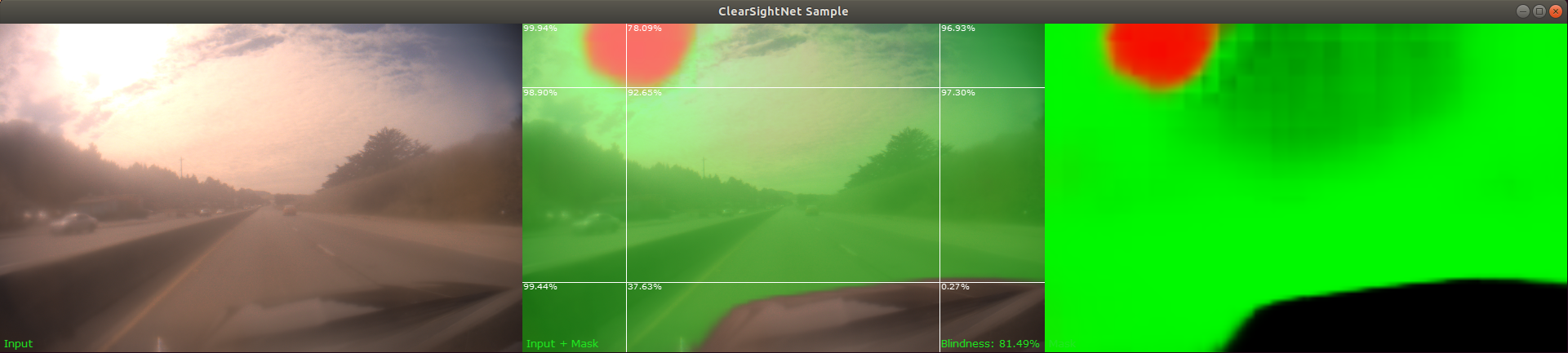

The ClearSightNet sample streams frames from a live camera or recorded video. It runs ClearSightNet DNN inference on each frame to generate a mask. The mask marks each image region as blurred, blocked, sky, or otherwise clean.

Running the Sample

The ClearSightNet sample, sample_clearsightnet, accepts the following optional parameters. If none are specified, it performs detections on a supplied pre-recorded video.

./sample_clearsightnet --input-type=[video,camera]

--video=[path/to/video]

--camera-type=[camera]

--camera-group=[a|b|c|d]

--camera-index=[0|1|2|3]

--stopFrame=[number]

--customModelPath=[customModelPath]

--filter-window=[window]

--numRegionsX=[numRegionsX]

--numRegionsY=[numRegionsY]

--dividersX=[regionDividersX]

--dividersY=[regionDividersY]

--precision=[int8|fp16|fp32]

--dla=[0|1]

--dlaEngineNo=[0|1]

where

--input-type=[video,camera]

Specifies whether the input is from a live camera or a recorded video.

Live camera input is supported only on NVIDIA DRIVE™ platforms.

It is not supported on Linux (x86 architecture) host systems.

Default value: video

--video=[path/to/video]

Specifies the absolute or relative path of a raw or h264 recording.

Only applicable if --input-type=video.

Default value: path/to/data/samples/clearsightnet/sample.mp4.

--camera-type=[camera]

Specifies a supported AR0231 `RCCB` sensor.

Only applicable if --input-type=camera.

Default value: ar0231-rccb-bae-sf3324

--camera-group=[a|b|c|d]

Specifies the group to which the camera is connected.

Only applicable if --input-type=camera.

Default value: a

--camera-index=[0|1|2|3]

Indicates the camera index on the given port.

Default value: 0

--stopFrame=[number]

Runs ClearSightNet only on the first <number> frames and then exits the application.

If `--stopFrame` is 0, the sample runs endlessly.

Default value: 0

--customModelPath=[customModelPath]

Name of a non-default model, or the path to a custom or non-default model.

Default value: "" (loads default model)

--filter-window=[window]

Temporal filter window. The overall blindness ratio output is median-filtered over this many frames. This sets the dwBlindnessDetectorParams.temporalFilterWindow parameter.

Default value: 5
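The effect of a temporal median window can be illustrated with a small sliding-window filter. This is only a sketch of the general technique, not the DriveWorks implementation: the class name `TemporalMedianFilter` is hypothetical, and returning the median of a partially filled window during the first frames is an assumption.

```python
from collections import deque
from statistics import median


class TemporalMedianFilter:
    """Sliding-window median over the most recent `window` values (illustrative only)."""

    def __init__(self, window=5):
        if window < 1:
            raise ValueError("window must be at least 1")
        # A deque with maxlen discards the oldest value automatically.
        self._values = deque(maxlen=window)

    def update(self, blindness_ratio):
        """Add one per-frame ratio and return the median of the current window."""
        self._values.append(blindness_ratio)
        # Assumption: before the window is full, use the values seen so far.
        return median(self._values)


if __name__ == "__main__":
    filt = TemporalMedianFilter(window=5)
    # The isolated spike (0.90) is suppressed by the median.
    for ratio in [0.10, 0.12, 0.90, 0.11, 0.13]:
        print(filt.update(ratio))
```

A larger window suppresses longer transient spikes but also delays the response to a genuine change in blindness.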

--numRegionsX=[numRegionsX]

Number of image sub-regions in the X direction. The maximum allowed number of regions is 8. This sets the dwBlindnessDetectorParams.numRegionsX parameter.

Default value: 3

--numRegionsY=[numRegionsY]

Number of image sub-regions in the Y direction. The maximum allowed number of regions is 8. This sets the dwBlindnessDetectorParams.numRegionsY parameter.

Default value: 3

--dividersX=[regionDividersX]

Comma-separated list of sub-region divider locations in the X direction, expressed as image fractions. The number of dividers must equal numRegionsX-1. This sets the dwBlindnessDetectorParams.regionDividersX parameter.

Default value: 0.2,0.8

--dividersY=[regionDividersY]

Comma-separated list of sub-region divider locations in the Y direction, expressed as image fractions. The number of dividers must equal numRegionsY-1. This sets the dwBlindnessDetectorParams.regionDividersY parameter.

Default value: 0.2,0.8
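The relationship between the region count and the divider list can be checked before launching the sample. The sketch below is a hypothetical helper, not part of DriveWorks: `region_bounds` is an illustrative name, and the requirement that dividers be strictly increasing and lie strictly between 0 and 1 is an assumption not stated in this document.

```python
def region_bounds(dividers_text, num_regions, max_regions=8):
    """Return (start, end) image fractions for each sub-region along one axis."""
    if not 1 <= num_regions <= max_regions:
        raise ValueError(f"num_regions must be between 1 and {max_regions}")

    # Parse the comma-separated list, ignoring empty tokens such as "".
    dividers = [float(tok) for tok in dividers_text.split(",") if tok.strip()]
    if len(dividers) != num_regions - 1:
        raise ValueError(
            f"expected {num_regions - 1} dividers, got {len(dividers)}"
        )

    # Assumption: dividers are strictly increasing and inside (0, 1).
    edges = [0.0] + dividers + [1.0]
    if any(a >= b for a, b in zip(edges, edges[1:])):
        raise ValueError("dividers must be strictly increasing within (0, 1)")

    return list(zip(edges, edges[1:]))


if __name__ == "__main__":
    # Defaults from this page: 3 regions, dividers at 0.2 and 0.8.
    print(region_bounds("0.2,0.8", 3))
```

With the default values, each axis is split into a narrow band covering the first 20% of the image, a wide central band, and a narrow band covering the last 20%.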

--precision=[int8|fp16|fp32]

Defines the precision of the ClearSightNet DNN. The following precision levels are supported.

- int8

- 8-bit signed integer precision.

- Supported GPUs: compute capability >= 6.1.

- Faster than fp16 and fp32 on GPUs with compute capability = 6.1 or compute capability > 6.2.

- fp16 (default)

- 16-bit floating point precision.

- Supported GPUs: compute capability >= 6.2

- Faster than fp32.

- fp32

- 32-bit floating point precision.

- Supported GPUs: compute capability >= 5.0

When using DLA engines, only fp16 is allowed.

Default value: fp16
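The precision constraints listed above can be summarized as a lookup table. The following is a minimal sketch with a hypothetical helper name (`precision_allowed`); it encodes only the minimum compute capabilities and the DLA restriction stated on this page, and it assumes the GPU compute capability is irrelevant when inference runs on a DLA engine.

```python
# Minimum GPU compute capability (major, minor) per precision, as listed above.
MIN_COMPUTE_CAPABILITY = {
    "int8": (6, 1),
    "fp16": (6, 2),
    "fp32": (5, 0),
}


def precision_allowed(precision, compute_capability, use_dla=False):
    """Return True if `precision` is usable under the constraints on this page."""
    if precision not in MIN_COMPUTE_CAPABILITY:
        raise ValueError(f"unknown precision: {precision}")
    if use_dla:
        # Only fp16 is allowed on DLA engines.
        # Assumption: the GPU compute capability does not matter in this case.
        return precision == "fp16"
    # Tuples compare element by element, so (6, 1) < (6, 2) < (7, 0).
    return tuple(compute_capability) >= MIN_COMPUTE_CAPABILITY[precision]


if __name__ == "__main__":
    print(precision_allowed("int8", (6, 1)))                # GPU, int8
    print(precision_allowed("fp16", (6, 1)))                # GPU too old for fp16
    print(precision_allowed("int8", (7, 2), use_dla=True))  # DLA requires fp16
```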

--dla=[0|1]

Setting this parameter to 1 runs the ClearSightNet DNN inference on one of the DLA engines.

Default value: 0

--dlaEngineNo=[0|1]

Chooses the DLA engine to be used.

Only applicable if --dla=1

Default value: 0

Examples

To run the default (H264) video in a loop:

./sample_clearsightnet

To use the --video option to run a custom RAW or H264 video file:

./sample_clearsightnet --video=PATH_TO_VIDEO [--stopFrame=<frame idx>]

This runs sample_clearsightnet until frame <frame idx>. The default value of --stopFrame is 0, for which the sample runs in loop mode.

To run the sample on a live camera feed on an NVIDIA DRIVE platform, set the --input-type option to camera:

./sample_clearsightnet --input-type=camera [--camera-type=<rccb camera type> --camera-group=<camera group> --camera-index=<camera idx on camera group>]

where default values are:

- ar0231-rccb-bae-sf3324 for --camera-type.

- a for --camera-group.

- 0 for --camera-index.

To run the sample on a DLA engine on an NVIDIA DRIVE platform, set the --dla option to 1. Optionally, set --dlaEngineNo to 0 or 1 to choose the engine; it defaults to 0.

./sample_clearsightnet --dla=1 --dlaEngineNo=0
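When the sample is launched from a script, the command lines above can be assembled programmatically. The sketch below is a hypothetical wrapper, not a DriveWorks tool: `build_command` is an illustrative name, the option names are taken from the parameter list on this page, and the executable is assumed to be in the current directory.

```python
# Option names documented on this page.
KNOWN_OPTIONS = {
    "input-type", "video", "camera-type", "camera-group", "camera-index",
    "stopFrame", "customModelPath", "filter-window", "numRegionsX",
    "numRegionsY", "dividersX", "dividersY", "precision", "dla", "dlaEngineNo",
}


def build_command(options, executable="./sample_clearsightnet"):
    """Turn a dict of options into an argument list such as ['--dla=1', ...]."""
    unknown = set(options) - KNOWN_OPTIONS
    if unknown:
        raise ValueError(f"unknown options: {sorted(unknown)}")
    # Dicts preserve insertion order, so arguments appear as they were given.
    return [executable] + [f"--{name}={value}" for name, value in options.items()]


if __name__ == "__main__":
    # Reproduces the DLA example above.
    print(" ".join(build_command({"dla": 1, "dlaEngineNo": 0})))
```

The resulting list can be passed to `subprocess.run(..., check=True)` on a system where the sample is installed.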

Output

- Note

- A red overlay in the sample indicates fully blocked or blind regions, a green overlay indicates partially blocked or blurred regions, and a blue overlay indicates sky regions. In addition, the percentage shown at the top-left corner of each sub-region is the corresponding value in the regionBlindnessRatio output.

Additional Information

For more details, see ClearSightNet.