# Copyright (c) 2019-2020 NVIDIA CORPORATION. All rights reserved.

@page dwx_dnn_plugins DNN Plugins

@section plugin_overview DNN Plugins Overview

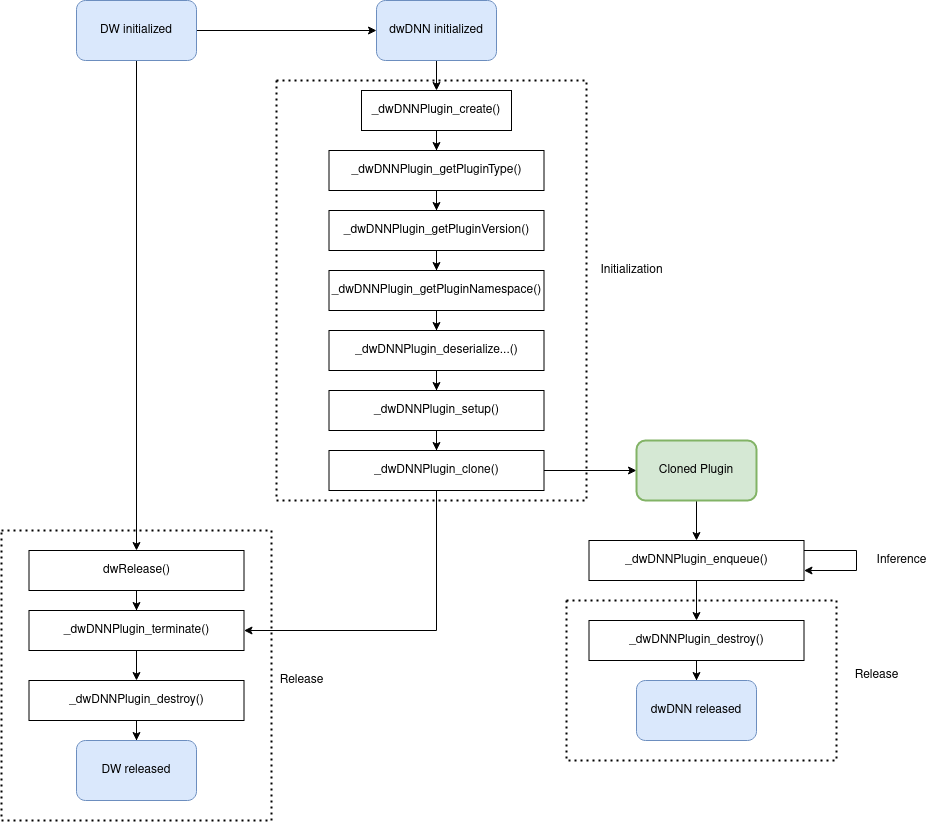

The DNN Plugins module allows DNN models containing layers that TensorRT does not support natively to still benefit from the efficiency of TensorRT.

Integrating such models into DriveWorks can be divided into the following steps:

-# @ref plugin_implementation "Implement the custom layers as dwDNNPlugins and compile them as a dynamic library"
-# @ref model_generation "Generate a TensorRT model with plugins using the tensorRT_optimization tool"
-# @ref model_runtime "Load the model and the corresponding plugins at initialization"

@section plugin_implementation Implementing Custom DNN Layers as Plugins

@note Dynamic libraries implementing DNN layers must be built against the same TensorRT, cuDNN, and CUDA versions as DriveWorks, where applicable.

A DNN plugin in DriveWorks requires implementing a set of predefined functions. The declarations of these functions are located in `dw/dnn/plugins/DNNPlugin.h`.

All of these functions take a `_dwDNNPluginHandle_t`. It is an opaque handle to a custom object that can be constructed at initialization and then used or modified throughout the lifetime of the plugin.

In this tutorial, we implement and integrate a pooling layer (PoolPlugin) for an MNIST UFF model. See \ref dwx_dnn_plugin_sample for the corresponding sample.

In the PoolPlugin example, we implement a class that stores the members that must remain available throughout the lifetime of the network model and provides the methods required by dwDNNPlugin.
One of these members is the cuDNN handle, which is used to perform inference.
A pointer to an instance of this class serves as the custom `_dwDNNPluginHandle_t` that is passed to each function:

```cpp
class PoolPlugin
{
public:
    PoolPlugin() = default;
    PoolPlugin(const PoolPlugin&) = default;
    virtual ~PoolPlugin() = default;

    // Creates the cuDNN handle and descriptors (called from _dwDNNPlugin_initialize).
    int initialize();

    size_t getWorkspaceSize(int) const;

    void deserializeFromFieldCollections(const char8_t* name,
                                         const dwDNNPluginFieldCollection& fieldCollection);
    void deserializeFromBuffer(const char8_t* name, const void* data, size_t length);
    void deserializeFromWeights(const dwDNNPluginWeights* weights, int32_t numWeights);

    int32_t getNbOutputs() const;
    dwBlobSize getOutputDimensions(int32_t index, const dwBlobSize* inputs, int32_t numInputDims);

    bool supportsFormatCombination(int32_t index, const dwDNNPluginTensorDesc* inOut,
                                   int32_t numInputs, int32_t numOutputs) const;
    void configurePlugin(const dwDNNPluginTensorDesc* inputDescs, int32_t numInputs,
                         const dwDNNPluginTensorDesc* outputDescs, int32_t numOutputs);

    int enqueue(int batchSize, const void* const* inputs, void** outputs, void*, cudaStream_t stream);

    size_t getSerializationSize();
    void serialize(void* buffer);

    dwPrecision getOutputPrecision(int32_t index, const dwPrecision* inputPrecisions, int32_t numInputs) const;

    static const char* getPluginType();
    static const char* getPluginVersion();

    void setPluginNamespace(const char* libNamespace);
    const char* getPluginNamespace() const;

    dwDNNPluginFieldCollection getFieldCollection();

private:
    size_t type2size(dwPrecision precision);

    // Serialization helpers: write() advances `buffer`, read() advances `itr`.
    template<typename T> void write(char*& buffer, const T& val);
    template<typename T> T read(const char* data, size_t totalLength, const char*& itr);

    dwBlobSize m_inputBlobSize;
    dwBlobSize m_outputBlobSize;

    // Host and device intermediate tensor buffers
    std::shared_ptr<int8_t[]> m_tensorInputIntermediateHostINT8;
    std::shared_ptr<float32_t[]> m_tensorInputIntermediateHostFP32;
    float32_t* m_tensorInputIntermediateDevice = nullptr;
    std::shared_ptr<float32_t[]> m_tensorOutputIntermediateHostFP32;
    std::shared_ptr<int8_t[]> m_tensorOutputIntermediateHostINT8;
    float32_t* m_tensorOutputIntermediateDevice = nullptr;

    float32_t m_inHostScale{-1.0f};
    float32_t m_outHostScale{-1.0f};

    PoolingParams m_poolingParams;

    // Maps DriveWorks precisions to the corresponding cuDNN data types
    dwPrecision m_precision{DW_PRECISION_FP32};
    std::map<dwPrecision, cudnnDataType_t> m_typeMap = {{DW_PRECISION_FP32, CUDNN_DATA_FLOAT},
                                                        {DW_PRECISION_FP16, CUDNN_DATA_HALF},
                                                        {DW_PRECISION_INT8, CUDNN_DATA_INT8}};

    cudnnHandle_t m_cudnn;
    cudnnTensorDescriptor_t m_srcDescriptor, m_dstDescriptor;
    cudnnPoolingDescriptor_t m_poolingDescriptor;

    std::string m_namespace;
    dwDNNPluginFieldCollection m_fieldCollection{};
};
```

A plugin is created via the following function:

```cpp
dwStatus _dwDNNPlugin_create(_dwDNNPluginHandle_t* handle);
```

In this example, we construct a PoolPlugin and store it in the `_dwDNNPluginHandle_t`:

```cpp
dwStatus _dwDNNPlugin_create(_dwDNNPluginHandle_t* handle)
{
    std::unique_ptr<PoolPlugin> plugin(new PoolPlugin());
    // Ownership is transferred to the handle; it is reclaimed in _dwDNNPlugin_destroy.
    *handle = reinterpret_cast<_dwDNNPluginHandle_t>(plugin.release());
    return DW_SUCCESS;
}
```

The actual initialization of the PoolPlugin member variables is triggered by the following function:

```cpp
dwStatus _dwDNNPlugin_initialize(_dwDNNPluginHandle_t handle);
```

The handle created by `_dwDNNPlugin_create` is passed to this function, so it can be cast back to `PoolPlugin` and its `initialize()` method called:

```cpp
dwStatus _dwDNNPlugin_initialize(_dwDNNPluginHandle_t handle)
{
    auto plugin = reinterpret_cast<PoolPlugin*>(handle);
    plugin->initialize();
    return DW_SUCCESS;
}
```

In the PoolPlugin example, `initialize()` creates the cuDNN handle, the pooling descriptor, and the source and destination tensor descriptors:

```cpp
int initialize()
{
    CHECK_CUDA_ERROR(cudnnCreate(&m_cudnn)); // create the cuDNN handle used for inference
    // create the descriptors for the pooling input (src) and output (dst) tensors
    CHECK_CUDA_ERROR(cudnnCreateTensorDescriptor(&m_srcDescriptor));
    CHECK_CUDA_ERROR(cudnnCreateTensorDescriptor(&m_dstDescriptor));
    // create the 2D pooling descriptor and configure it from m_poolingParams
    CHECK_CUDA_ERROR(cudnnCreatePoolingDescriptor(&m_poolingDescriptor));
    CHECK_CUDA_ERROR(cudnnSetPooling2dDescriptor(m_poolingDescriptor,
                                                 m_poolingParams.poolingMode, CUDNN_NOT_PROPAGATE_NAN,
                                                 m_poolingParams.kernelHeight,
                                                 m_poolingParams.kernelWidth,
                                                 m_poolingParams.paddingY, m_poolingParams.paddingX,
                                                 m_poolingParams.strideY, m_poolingParams.strideX));
    return 0;
}
```
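When validating a custom pooling layer, a CPU reference to compare the plugin's output against can be useful. The following is a minimal sketch, not part of DriveWorks or the sample: it assumes FP32 max pooling on an NCHW tensor, and `PoolingParamsSketch`, `pooledDim` and `maxPool2d` are hypothetical names (the struct reuses the field names of `PoolingParams` above but omits `poolingMode`). Output sizes follow the formula documented for cuDNN's `cudnnGetPooling2dForwardOutputDim`, `out = 1 + (in + 2 * padding - window) / stride`; this sketch simply skips padded positions when taking the maximum.

```cpp
#include <algorithm>
#include <cstddef>
#include <limits>
#include <vector>

// Hypothetical stand-in for the sample's PoolingParams (max pooling only).
struct PoolingParamsSketch
{
    int kernelHeight, kernelWidth;
    int paddingY, paddingX;
    int strideY, strideX;
};

// Output size along one axis: 1 + (in + 2 * padding - window) / stride.
int pooledDim(int in, int window, int padding, int stride)
{
    return 1 + (in + 2 * padding - window) / stride;
}

// CPU reference for 2D max pooling on an NCHW tensor; padded positions are skipped.
std::vector<float> maxPool2d(const std::vector<float>& in, int n, int c, int h, int w,
                             const PoolingParamsSketch& p, int& outH, int& outW)
{
    outH = pooledDim(h, p.kernelHeight, p.paddingY, p.strideY);
    outW = pooledDim(w, p.kernelWidth, p.paddingX, p.strideX);
    std::vector<float> out(static_cast<std::size_t>(n) * c * outH * outW);

    for (int nc = 0; nc < n * c; ++nc) // each (batch, channel) plane is pooled independently
    {
        const float* plane = in.data() + static_cast<std::size_t>(nc) * h * w;
        float* outPlane    = out.data() + static_cast<std::size_t>(nc) * outH * outW;
        for (int oy = 0; oy < outH; ++oy)
        {
            for (int ox = 0; ox < outW; ++ox)
            {
                float best = -std::numeric_limits<float>::infinity();
                for (int ky = 0; ky < p.kernelHeight; ++ky)
                {
                    for (int kx = 0; kx < p.kernelWidth; ++kx)
                    {
                        const int iy = oy * p.strideY - p.paddingY + ky;
                        const int ix = ox * p.strideX - p.paddingX + kx;
                        if (iy >= 0 && iy < h && ix >= 0 && ix < w)
                        {
                            best = std::max(best, plane[iy * w + ix]);
                        }
                    }
                }
                outPlane[oy * outW + ox] = best;
            }
        }
    }
    return out;
}
```

For example, a 28x28 input with a 2x2 window, no padding and stride 2 gives `pooledDim(28, 2, 0, 2) == 14`.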

TensorRT requires plugins to provide serialization and deserialization functions. The following functions must be implemented to meet this requirement:

```cpp
// Get the size, in bytes, of the buffer required to serialize the plugin.
dwStatus _dwDNNPlugin_getSerializationSize(size_t* serializationSize, _dwDNNPluginHandle_t handle);

// Serialize plugin to a buffer.
dwStatus _dwDNNPlugin_serialize(void* buffer, _dwDNNPluginHandle_t handle);

// Deserialize plugin from a buffer.
dwStatus _dwDNNPlugin_deserializeFromBuffer(const char8_t* name, const void* buffer, size_t len,
                                            _dwDNNPluginHandle_t handle);

// Deserialize plugin from field collection.
dwStatus _dwDNNPlugin_deserializeFromFieldCollection(const char8_t* name,
                                                     const dwDNNPluginFieldCollection* fieldCollection,
                                                     _dwDNNPluginHandle_t handle);

// Deserialize plugin from weights.
dwStatus _dwDNNPlugin_deserializeFromWeights(const dwDNNPluginWeights* weights, int32_t numWeights,
                                             _dwDNNPluginHandle_t handle);
```

An example of serializing to and deserializing from a buffer is shown below. The fields must be read back in the same order in which they were written, and `getSerializationSize()` must account for exactly the bytes written by `serialize()`:

```cpp
size_t getSerializationSize()
{
    size_t serializationSize = 0U;
    serializationSize += sizeof(m_poolingParams);
    serializationSize += sizeof(m_inputBlobSize);
    serializationSize += sizeof(m_outputBlobSize);
    serializationSize += sizeof(m_precision);
    if (m_precision == DW_PRECISION_INT8)
    {
        // INT8 additionally stores the input and output scales
        serializationSize += sizeof(float32_t) * 2U;
    }
    return serializationSize;
}

void serialize(void* buffer)
{
    char* d = static_cast<char*>(buffer);
    write(d, m_poolingParams);
    write(d, m_inputBlobSize);
    write(d, m_outputBlobSize);
    write(d, m_precision);
    if (m_precision == DW_PRECISION_INT8)
    {
        write(d, m_inHostScale);
        write(d, m_outHostScale);
    }
}

void deserializeFromBuffer(const char8_t* name, const void* data, size_t length)
{
    const char* dataBegin = reinterpret_cast<const char*>(data);
    const char* dataItr   = dataBegin;
    m_poolingParams  = read<PoolingParams>(dataBegin, length, dataItr);
    m_inputBlobSize  = read<dwBlobSize>(dataBegin, length, dataItr);
    m_outputBlobSize = read<dwBlobSize>(dataBegin, length, dataItr);
    m_precision      = read<dwPrecision>(dataBegin, length, dataItr);
    if (m_precision == DW_PRECISION_INT8)
    {
        m_inHostScale  = read<float32_t>(dataBegin, length, dataItr);
        m_outHostScale = read<float32_t>(dataBegin, length, dataItr);
    }
}
```
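The `write` and `read` helpers declared in `PoolPlugin` are not shown in this tutorial. One possible implementation is sketched below as free function templates, assuming every serialized member is trivially copyable. It copies bytes with `std::memcpy`, which avoids misaligned accesses into the buffer, and checks each read against the total buffer length; the exception type and message are choices made for this sketch only.

```cpp
#include <cstddef>
#include <cstring>
#include <stdexcept>
#include <type_traits>

// Copy a trivially copyable value into the buffer and advance the write cursor.
template <typename T>
void write(char*& buffer, const T& val)
{
    static_assert(std::is_trivially_copyable<T>::value, "T must be trivially copyable");
    std::memcpy(buffer, &val, sizeof(T));
    buffer += sizeof(T);
}

// Read a value at the cursor `itr`, checking that it lies inside
// [data, data + totalLength), then advance the cursor.
template <typename T>
T read(const char* data, std::size_t totalLength, const char*& itr)
{
    static_assert(std::is_trivially_copyable<T>::value, "T must be trivially copyable");
    const std::size_t offset = static_cast<std::size_t>(itr - data);
    if (offset > totalLength || totalLength - offset < sizeof(T))
    {
        throw std::runtime_error("plugin buffer too short");
    }
    T val{};
    std::memcpy(&val, itr, sizeof(T));
    itr += sizeof(T);
    return val;
}
```

A serialize-then-deserialize round trip in a unit test is a cheap way to check that `serialize`, `deserializeFromBuffer` and `getSerializationSize` stay consistent. If exceptions are used as in this sketch, they would typically be caught inside the `_dwDNNPlugin_*` entry points and translated into a `dwStatus` rather than allowed to propagate out of the plugin library.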

The plugin is destroyed by the following function:

```cpp
dwStatus _dwDNNPlugin_destroy(_dwDNNPluginHandle_t handle);
```
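The tutorial does not show the body of `_dwDNNPlugin_destroy`. The sketch below illustrates the whole create, initialize and destroy lifecycle behind an opaque handle using hypothetical stand-ins (`Status`, `PluginHandle`, `Plugin`, `pluginCreate`, `pluginInitialize`, `pluginDestroy`) so that it compiles without DriveWorks; it uses `void*` with `static_cast` where the tutorial uses `reinterpret_cast`. The key point is that destroy reclaims the object released in create. For PoolPlugin, this would presumably also be the place to release the cuDNN resources created in `initialize()` with `cudnnDestroyPoolingDescriptor`, `cudnnDestroyTensorDescriptor` and `cudnnDestroy`.

```cpp
#include <memory>
#include <new>

// Hypothetical stand-ins for dwStatus and _dwDNNPluginHandle_t.
enum class Status { Success, BadParameter, Failure };
using PluginHandle = void*;

// Hypothetical plugin class; liveCount only exists to make the lifecycle observable.
class Plugin
{
public:
    static int liveCount;
    Plugin() { ++liveCount; }
    ~Plugin() { --liveCount; }

    int initialize() { m_initialized = true; return 0; } // acquire resources here
    void terminate() { m_initialized = false; }          // release them here
    bool isInitialized() const { return m_initialized; }

private:
    bool m_initialized = false;
};
int Plugin::liveCount = 0;

Status pluginCreate(PluginHandle* handle)
{
    if (handle == nullptr) { return Status::BadParameter; }
    std::unique_ptr<Plugin> plugin(new (std::nothrow) Plugin());
    if (!plugin) { return Status::Failure; }
    *handle = plugin.release(); // the caller now owns the object through the handle
    return Status::Success;
}

Status pluginInitialize(PluginHandle handle)
{
    if (handle == nullptr) { return Status::BadParameter; }
    Plugin* plugin = static_cast<Plugin*>(handle);
    return plugin->initialize() == 0 ? Status::Success : Status::Failure;
}

Status pluginDestroy(PluginHandle handle)
{
    if (handle == nullptr) { return Status::BadParameter; }
    Plugin* plugin = static_cast<Plugin*>(handle);
    plugin->terminate(); // release what initialize() acquired
    delete plugin;       // reclaim the object released in pluginCreate
    return Status::Success;
}
```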

For more information on implementing a plugin, see \ref dwx_dnn_plugin_sample.


@section model_generation Generating TensorRT Model with Plugins

In the PoolPlugin example, there is only one custom layer that requires a plugin.
The plugin library is `libdnn_pool_plugin.so`, which is assumed to be located in the same folder as @ref dwx_tensorRT_tool.
Since this is a UFF model, the layer names do not need to be specified.
Therefore, the `plugin.json` file can be written as follows:

@anchor plugin_json
```json
{
    "libdnn_pool_plugin.so" : [""]
}
```

Finally, generate the model with:

```bash
./tensorRT_optimization --modelType=uff --uffFile=mnist_custom_pool.uff --inputBlobs=in --outputBlobs=out --inputDims=1x28x28 --pluginConfig=plugin.json --out=mnist_with_plugin.dnn
```

@section model_runtime Loading TensorRT Model with Plugins

Loading a TensorRT model with plugins generated via @ref dwx_tensorRT_tool requires a plugin configuration to be provided when initializing the `::dwDNNHandle_t`.

The plugin configuration structures are defined as follows:

```cpp
typedef struct
{
    const char* pluginLibraryPath; /**< Path to a plugin shared object. Path must be either an absolute path or a path relative to the DW lib folder. */
    const char* layerName;         /**< Name of the custom layer. Required for Caffe models. */
} dwDNNCustomLayer;

typedef struct
{
    const dwDNNCustomLayer* customLayers; /**< Array of custom layers. */
    size_t numCustomLayers;               /**< Number of custom layers */
} dwDNNPluginConfiguration;
```

As with the JSON file used at model generation (see \ref plugin_json "plugin json"), the layer name and the path to the plugin that implements the layer must be provided for each custom layer.

In the PoolPlugin example:

```cpp
// dnn (dwDNNHandle_t) and sdk (dwContextHandle_t) are assumed to be declared elsewhere.
dwDNNPluginConfiguration pluginConf{};
pluginConf.numCustomLayers = 1;

dwDNNCustomLayer customLayer{};
// Assuming libdnn_pool_plugin.so is in the same folder as libdriveworks.so
std::string pluginPath = "libdnn_pool_plugin.so";
customLayer.pluginLibraryPath = pluginPath.c_str();
// layerName is left as nullptr: it is not required for UFF models.
pluginConf.customLayers = &customLayer;

// Initialize DNN from a TensorRT file with plugin configuration
CHECK_DW_ERROR(dwDNN_initializeTensorRTFromFile(&dnn, "mnist_with_plugin.dnn", &pluginConf,
                                                DW_PROCESSOR_TYPE_GPU, sdk));
```
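Because `dwDNNCustomLayer` stores raw `const char*` pointers, the strings they point to must stay alive at least until the DNN has been initialized; in the example above, `pluginPath` must not go out of scope before `dwDNN_initializeTensorRTFromFile` is called. When several custom layers are involved, one way to manage this is a small builder that owns the strings. The sketch below uses hypothetical mirror types `CustomLayerDesc` and `PluginConfigDesc`, with the same fields as the documented structures, so that it compiles on its own; with DriveWorks, the real `dwDNNCustomLayer` and `dwDNNPluginConfiguration` would be used instead.

```cpp
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

// Hypothetical mirrors of dwDNNCustomLayer / dwDNNPluginConfiguration.
struct CustomLayerDesc
{
    const char* pluginLibraryPath;
    const char* layerName;
};

struct PluginConfigDesc
{
    const CustomLayerDesc* customLayers;
    std::size_t numCustomLayers;
};

// Owns the strings and the layer array so that every raw pointer in the returned
// configuration stays valid while the builder is alive. Call build() after all
// addLayer() calls: adding layers afterwards invalidates earlier configurations.
class PluginConfigBuilder
{
public:
    void addLayer(std::string libraryPath, std::string layerName = "")
    {
        m_entries.emplace_back(std::move(libraryPath), std::move(layerName));
    }

    PluginConfigDesc build()
    {
        m_layers.clear();
        for (const auto& entry : m_entries)
        {
            // An empty layer name is passed as nullptr, as in the UFF example above.
            const char* name = entry.second.empty() ? nullptr : entry.second.c_str();
            m_layers.push_back({entry.first.c_str(), name});
        }
        return PluginConfigDesc{m_layers.data(), m_layers.size()};
    }

private:
    std::vector<std::pair<std::string, std::string>> m_entries;
    std::vector<CustomLayerDesc> m_layers;
};
```

With the real types, the configuration returned by `build()` would be passed by address as the plugin configuration argument of `dwDNN_initializeTensorRTFromFile`, while the builder is kept in scope.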

See \ref dwx_dnn_plugin_sample for more details.