# Copyright (c) 2019-2020 NVIDIA CORPORATION. All rights reserved.

@page calibration_usecase_features Feature-based Camera Self-Calibration

The camera's extrinsic pose calibration with respect to the vehicle is represented by both
orientation and position parameters.
Orientation parameters are pitch and roll with respect to a nominal ground
plane, and yaw with respect to the car's forward direction. The camera's pitch
and yaw orientation components are crucial for lane-holding, where the accuracy
requirements are such that relying only on factory calibration is unrealistic.
Pitch and roll are important for, e.g., distance estimation and ground plane
projections. In a similar way, the camera's height is crucial for map generation
of road markings on the ground.

NVIDIA<sup>®</sup> DriveWorks uses visually tracked features to estimate
camera motion and calibrate the camera's full orientation and height component
in a self-calibration setting. Pitch and yaw are calibrated simultaneously by
comparing the translation direction obtained from the visual motion and the
car's egomotion. Likewise, roll is calibrated by matching estimated angular
motion of the camera with the car's egomotion, and requires turn maneuvers to be
estimated. In addition, visually tracked and triangulated features on the ground
plane next to the car are used to estimate the height component of the camera's
position relative to the car.

### Camera Motion Estimation

Camera motion estimation is performed by considering a pair of images and
minimizing the epipolar-based error of its tracked features. The calibration
engine receives feature tracks for each frame and decides which image
pairs are suitable for calibration. This results in camera motion in the form of a
rigid transformation (rotation and translation direction) between the camera
poses at the two image capture times.

### Camera Roll / Pitch / Yaw / Height

The visual motion obtained in the previous step is compared to the motion of the
car (estimated by the egomotion module). Using the well-known hand-eye
constraint, the relative transformation between the camera and the car is
estimated. This relative transform provides the pitch and yaw of the camera.
Turning car motion constrains the roll of the camera. Additionally, the camera
motion is used to triangulate feature tracks on the ground next to the car, from
which the camera's height is inferred.
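As a simplified illustration of how comparing translation directions yields pitch and yaw, the sketch below measures the angular offset between the visually estimated travel direction and the egomotion travel direction. It is not a hand-eye solver and not DriveWorks code. It assumes both directions are already expressed in a common frame with x forward, y left, and z up (the visual direction having been mapped through the nominal extrinsics), and that the offsets are small. All names are hypothetical, and the sign convention that maps these offsets to pitch and yaw corrections depends on the rig definition.

```cpp
#include <cmath>

struct Direction
{
    double x, y, z; // assumed axes: x forward, y left, z up
};

struct AngleOffset
{
    double azimuth;   // offset about the vertical axis, in radians (yaw-like)
    double elevation; // offset out of the ground plane, in radians (pitch-like)
};

// Horizontal angle of a direction, measured from the forward axis.
double azimuthOf(const Direction& d)
{
    return std::atan2(d.y, d.x);
}

// Vertical angle of a direction, measured from the ground plane.
double elevationOf(const Direction& d)
{
    return std::atan2(d.z, std::hypot(d.x, d.y));
}

// Angular offset that would align the visual travel direction with the
// egomotion travel direction.
AngleOffset directionOffset(const Direction& visual, const Direction& ego)
{
    return {azimuthOf(ego) - azimuthOf(visual),
            elevationOf(ego) - elevationOf(visual)};
}
```

A rotation of the camera about the travel direction itself leaves both angles unchanged, which is consistent with roll being constrained only by turning motion.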

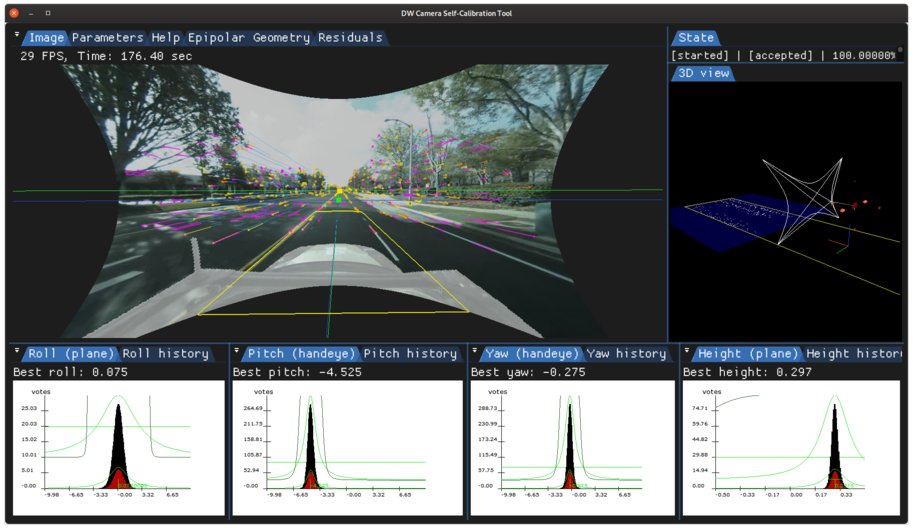

The instantaneous calibration for an image pair is accumulated in histograms for
the calibration's roll, pitch, yaw, and height components to estimate a final
calibration in a robust way.


### Initialization Requirements

- Nominal values on camera calibration
    - Orientation (roll/pitch/yaw): less than 5 degrees of error
    - Position (x/y/z): less than 10 mm
- Intrinsic calibration: accurate to 0.5 pixel

### Runtime Calibration Dependencies

- Any height calibration: absolute scale is inferred from egomotion, which needs to use calibrated
  odometry properties (e.g., wheel radii via radar self-calibration)
- Side-camera roll calibration: side-camera roll is inferred from egomotion's axis of rotation, which
  needs to be based on an accurate IMU calibration

### Input Requirements

- Assumption: feature tracks compatible with the DriveWorks module @ref
  imageprocessing_features_mainsection
- Vehicle egomotion: requirements can be found in the @ref egomotion_mainsection

### Output Requirements

- Corrected calibration for pitch/yaw (mandatory) and roll/height (optionally,

- Correction accuracy:
    + pitch/yaw: less than 0.20 deg error
    + roll: less than 0.25 deg error
    + height: less than 3 cm error
- Time/Events to correction:
    + pitch/yaw: less than 1:44 minutes in 75% of the cases, less than 3:30
      minutes in 100% of the cases
    + roll: correction depends on turning motion, and requires fewer than four
      strong turns in 75% of the cases, and fewer than six strong turns in 100%
      of the cases
    + height: less than 2:41 minutes in 75% of the cases, less than 4:15 minutes
      in 100% of the cases

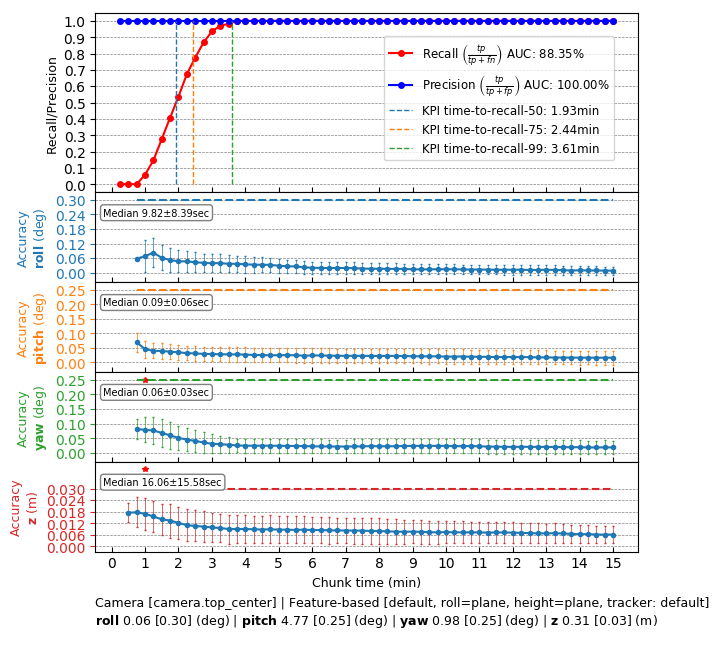

## Cross-validation KPI

Several hours of data are used to produce a reference calibration value for
cross-validation. Then, short periods of data are evaluated for whether they can
recover the same values. For example, the accompanying graph shows precision/recall
curves of motion-based camera roll/pitch/yaw/height self-calibration. Precision indicates
that an accepted calibration is within a fixed precision threshold from the reference
calibration, and recall indicates the ratio of accepted calibrations in the
given amount of time.
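The two KPI quantities can be computed as in the sketch below, which follows the definitions given above. It assumes each short data segment yields an accepted/not-accepted flag and a calibration value for one component. The struct and function names are hypothetical, and the thresholds used for the published curves are not stated here.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct SegmentResult
{
    bool accepted; // whether self-calibration accepted a result in the given time
    double value;  // calibrated value for one component (e.g. pitch)
};

struct Kpi
{
    double precision; // fraction of accepted results within the threshold
    double recall;    // fraction of segments that produced an accepted result
};

// Evaluates short segments against a long-term reference calibration.
Kpi evaluateSegments(const std::vector<SegmentResult>& segments, double reference, double threshold)
{
    std::size_t accepted = 0;
    std::size_t precise = 0;
    for (const SegmentResult& s : segments)
    {
        if (!s.accepted)
            continue;
        ++accepted;
        if (std::abs(s.value - reference) <= threshold)
            ++precise;
    }

    Kpi kpi{0.0, 0.0};
    if (!segments.empty())
        kpi.recall = static_cast<double>(accepted) / static_cast<double>(segments.size());
    if (accepted > 0)
        kpi.precision = static_cast<double>(precise) / static_cast<double>(accepted);
    return kpi;
}
```

Sweeping the allowed time per segment and re-evaluating would trace out one precision/recall curve per component.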


The following code snippet shows the general structure of a program
that performs camera self-calibration:

```cpp
dwFeatureListHandle_t featureListHandle; // used by the features module

dwCalibrationEngine_initialize(...);       // depends on sensor from rig configuration module
dwCalibrationEngine_initializeCamera(...); // depends on nominal calibration from rig configuration. Estimated signals (roll/pitch/yaw/height) can be selected here
dwCalibrationEngine_startCalibration(...); // runtime calibration dependencies need to be met

while (true) // main loop
{
    // track features for current camera frame
    dwFeatureTracker_trackFeatures(featureListHandle, ...);

    // get feature tracks
    dwFeatureListPointers featureDataGPU;
    dwFeatureList_getDataPointers(&featureDataGPU, ..., featureListHandle); // requires features module

    // feed feature tracks into self-calibration
    dwCalibrationEngine_addFeatureDetections(..., featureDataGPU.featureCount, ...);

    // retrieve calibration status
    dwCalibrationEngine_getCalibrationStatus(...);

    // retrieve self-calibration results
    dwCalibrationEngine_getSensorToRigTransformation(...);
}

dwCalibrationEngine_stopCalibration(...);
```

This workflow is demonstrated in the following sample: @ref
dwx_camera_calibration_sample