The HDR compatibility of the connected sink can be determined with a short sequence of calls in Java and native C++. Because accessing system properties directly from native code is discouraged, the recommended approach is to use JNI (Java Native Interface): the native engine calls a Java method that queries the property and returns the value to native code. A sample implementation in Java and C++ is shown below.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;

// Place this method in the Java class that the native code calls back into.
public boolean JavaCallback_isDisplayHDRCompatible() {
    try {
        // Retrieve the desired system property.
        java.lang.Process p = Runtime.getRuntime().exec("getprop sys.hwc.hdr.supported");
        try (BufferedReader in = new BufferedReader(
                new InputStreamReader(p.getInputStream()))) {
            String valueStr = in.readLine();
            // The property may be unset, in which case the output is empty.
            if (valueStr == null || valueStr.trim().isEmpty()) {
                return false;
            }
            // The value is 1 only if the connected sink is HDR-compatible.
            return Integer.parseInt(valueStr.trim()) == 1;
        }
    } catch (IOException | NumberFormatException e) {
        e.printStackTrace();
    }
    return false;
}
```

```cpp
#include <NvNativeAppGlue/NvNativeAppGlue.h>

// `app` is the application state object used by this sample;
// it is expected to be in scope here.
bool isDisplayHDRCompatible()
{
    jmethodID mid = 0;

    // Application thread environment
    JNIEnv* env = app->env;
    // Application thread object
    jobject thisThread = app->thisThread;

    jclass thisClass = env->GetObjectClass(thisThread);
    // Error handling
    if (env->ExceptionCheck()) {
        env->ExceptionDescribe();
        env->ExceptionClear();
        return false;
    }

    if (thisClass != 0) {
        // Get the method ID of the Java callback method
        mid = env->GetMethodID(thisClass, "JavaCallback_isDisplayHDRCompatible", "()Z");
        env->DeleteLocalRef(thisClass);
        // Error handling
        if (env->ExceptionCheck()) {
            env->ExceptionDescribe();
            env->ExceptionClear();
            return false;
        }
    }

    // Never call through an unresolved method ID
    if (mid == 0) {
        return false;
    }

    // Call the Java method
    jboolean result = env->CallBooleanMethod(thisThread, mid);
    if (env->ExceptionCheck()) {
        env->ExceptionDescribe();
        env->ExceptionClear();
        return false;
    }
    return result == JNI_TRUE;
}
```

When creating an EGL surface for HDR rendering, the following extensions have to be taken into account.

EGL_EXT_pixel_format_float

This extension enables applications to choose and render with floating-point EGL framebuffer configs, which allows HDR content to be presented at higher precision. Higher precision generally helps reduce visual artifacts, such as banding, that may become more noticeable on HDR displays.

Khronos extension specification:

https://www.khronos.org/registry/egl/extensions/EXT/EGL_EXT_pixel_format_float.txt

EGL_EXT_gl_colorspace_bt2020_linear

EGL_EXT_gl_colorspace_bt2020_pq

EGL_EXT_gl_colorspace_scRGB_linear

These extensions provide an EGL interface for applications to notify the underlying display pipeline of the framebuffer colorspace that is in use. The colorspaces supported by these extensions are linear BT.2020, PQ-encoded BT.2020, and linear scRGB. This information helps the compositor perform colorspace-correct blending and composition.

Khronos extension specification:

https://www.khronos.org/registry/egl/extensions/EXT/EGL_EXT_gl_colorspace_bt2020_linear.txt

https://www.khronos.org/registry/egl/extensions/EXT/EGL_EXT_gl_colorspace_scrgb_linear.txt

EGL_EXT_surface_SMPTE2086_metadata

This extension provides an EGL interface for applications to specify the color volume information of the original mastering display to the sink display (supplied as SMPTE 2086 metadata). This information can help keep the original creative intent and achieve more accurate and consistent color reproduction on different displays.

Khronos extension specification:

https://www.khronos.org/registry/egl/extensions/EXT/EGL_EXT_surface_SMPTE2086_metadata.txt
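Before relying on any of the extensions listed above, an application would typically confirm that the display advertises them. The following is a minimal sketch of such a check, not part of the original sample. It assumes the space-separated extension string has already been obtained, for example from `eglQueryString(display, EGL_EXTENSIONS)`; the helper name `hasExtension` is hypothetical.

```cpp
#include <cstddef>
#include <cstring>

// Hypothetical helper: returns true only if `name` appears as a complete,
// space-delimited token in `extensions`. A plain substring search is not
// enough, because one extension name can be a prefix of another.
bool hasExtension(const char* extensions, const char* name)
{
    if (extensions == nullptr || name == nullptr || *name == '\0') {
        return false;
    }
    const std::size_t nameLen = std::strlen(name);
    const char* cursor = extensions;
    while ((cursor = std::strstr(cursor, name)) != nullptr) {
        // The match must start at the beginning of the list or after a space...
        const bool startsToken = (cursor == extensions) || (cursor[-1] == ' ');
        // ...and must end at a space or at the end of the list.
        const char after = cursor[nameLen];
        const bool endsToken = (after == ' ') || (after == '\0');
        if (startsToken && endsToken) {
            return true;
        }
        cursor += nameLen;
    }
    return false;
}
```

An application could call, for instance, `hasExtension(extensions, "EGL_EXT_gl_colorspace_scRGB_linear")` and fall back to an SDR surface when the result is false.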

To preserve the precision of all color channels, we use a floating-point buffer with 16 bits of precision per channel. We do this by creating a new framebuffer and attaching an FP16 texture to it as the color target.

In OpenGL, we can do this as follows:

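```cpp
GLuint tex, fbo;

// Create the FP16 color texture.
glGenTextures(1, &tex);
glActiveTexture(GL_TEXTURE0);
glBindTexture(GL_TEXTURE_2D, tex);
glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_LINEAR);
glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_LINEAR);
glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_WRAP_S, GL_CLAMP_TO_EDGE);
glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_WRAP_T, GL_CLAMP_TO_EDGE);
glTexImage2D(GL_TEXTURE_2D, 0, GL_RGBA16F, screen_width,
             screen_height, 0, GL_RGBA, GL_FLOAT, NULL);
glBindTexture(GL_TEXTURE_2D, 0);

// Create the framebuffer and attach the texture as its color target.
glGenFramebuffers(1, &fbo);
glBindFramebuffer(GL_FRAMEBUFFER, fbo);
glFramebufferTexture2D(GL_FRAMEBUFFER, GL_COLOR_ATTACHMENT0, GL_TEXTURE_2D, tex, 0);

// Verify that the framebuffer is usable before rendering into it.
if (glCheckFramebufferStatus(GL_FRAMEBUFFER) != GL_FRAMEBUFFER_COMPLETE) {
    // Handle the error, for example by falling back to an SDR render target.
}
```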

The scene is rendered to this FP16 target in the desired color space (traditionally linear sRGB), and the target is then fed to the ACES tone mapping pipeline as an input texture. The ACES algorithm runs on these colors to produce the final output device color.

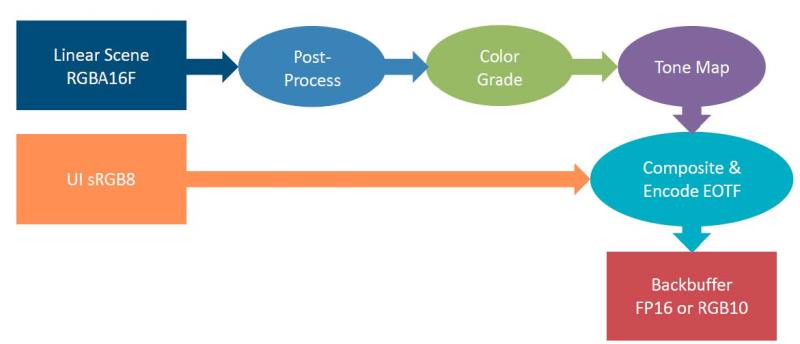

The figure above shows the pipeline for HDR output. One major difference (outlined more in the next section on UI Compositing) is that the tone mapping happens on the scene before the UI compositing takes place. More information on why this is done can be found in reference documents bundled along with this developer guide.

The typical tone mapping pipeline is altered as follows: the linear color output is taken from the high-precision back buffer, which holds the final image before post-processing and before the UI is rendered, and is fed into the ACES tone mapper. This linear color undergoes post-processing, color grading, LMT, RRT, ODT, etc., and the UI, authored in the same color space as the output of the ODT phase, is then composited on top of it. Refer to the ACES Homepage (http://www.oscars.org/science-technology/sci-tech-projects/aces) and GitHub repo (https://github.com/ampas/aces-dev) for more information and sample code for an ACES implementation.

Today, UI is authored almost entirely in the output-referred sRGB color space, which has been the standard 2D workflow for about as long as such workflows have existed. The simplest solution is therefore to keep the workflow as is. Because the scRGB back buffer uses the same primaries as sRGB, and its white level is the prescribed white for sRGB, the developer can simply blend the UI straight over the top as they do today. The colors should come out identical to what the UI artists expect from developing the UI on an SDR monitor.

If the back buffer were instead mapped so that 1.0 was the maximum possible luminance for UHD, the resulting UI would be quite uncomfortable to look at for an extended period of time. Even if the user could tolerate the extreme brightness, they would likely lose some HDR fidelity, because the overly bright UI would act as a reference that the eyes adapt to, making the scene look dimmer.

There are a couple of enhancements that a game developer may wish to add to make things go as smoothly as possible with the UI. First, it is worth adding a scale factor to the UI when in HDR mode. As users may be accustomed to viewing the game on an overly bright SDR monitor, it may be worth raising the luminance level of the UI by some amount. This can also counteract the viewer adapting to the brighter highlights in the HDR scene. Second, if your game relies on a lot of transparent UI, it may be worth compositing the entire UI plane offscreen, then blending it in one compositing operation. This is useful because extremely bright highlights can glow through virtually any level of transparency set in the UI, overwhelming the transparent elements. You can counteract that by applying a very simple tone map operator, like Reinhard, to bring the maximum level of anything beneath transparent pixels down to a reasonable level.

Some game developers might already be shaking their heads about the potential cost of the ACES-derived operators discussed above. That concern would be valid if the implementation evaluated the operators for every pixel of every frame. However, that cost isn't necessary. Instead, the same solution described previously for LMT application applies to tone mapping as well. The whole process can reasonably be baked into a 3D LUT, including the LMT. This turns the entire color grading and tone mapping pass into the execution of a shaper function and a 3D texture fetch. This is the sort of workflow that the movie industry has often used, so we can be fairly confident that it will generally be good enough for our purposes.

It is important to remember that the 3D LUT needs to hold high-precision data, preferably FP16, to ensure that the outputs have the proper precision. The performance improvement from using a LUT instead of implementing the ACES tone mapper in the fragment shader can be very significant, and it reduces the performance cost of HDR in the application to a minimum. However, in more GPU-demanding applications there may be an additional performance impact due to driver overhead. More information about LUT implementation can be found in the documentation section of the aces-dev repository, as indicated above.
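To make the "shaper function plus 3D texture fetch" idea more concrete, the sketch below shows the coordinate math for one channel. It is an illustration under assumptions rather than the guide's implementation: a log2 shaper is assumed (other shapers are possible), and the function names and the exponent range parameters are hypothetical.

```cpp
#include <algorithm>
#include <cmath>

// Assumed log2 shaper: maps scene-linear values in [2^minExp, 2^maxExp]
// onto [0, 1] so that LUT samples are spread evenly across stops of
// exposure rather than across linear intensity.
float shaperLog2(float linear, float minExp, float maxExp)
{
    const float floorValue = std::exp2(minExp);            // avoids log2(0)
    const float exponent = std::log2(std::max(linear, floorValue));
    const float t = (exponent - minExp) / (maxExp - minExp);
    return std::min(std::max(t, 0.0f), 1.0f);
}

// Converts a shaped value in [0, 1] into a normalized texture coordinate
// that lands on texel centers of a LUT axis with `lutSize` entries, so the
// first and last entries are reached exactly with linear filtering.
float lutCoord(float shaped, int lutSize)
{
    const float n = static_cast<float>(lutSize);
    return shaped * (n - 1.0f) / n + 0.5f / n;
}
```

In a fragment shader, the same two steps would be applied to each of the three color channels, and the resulting coordinate triple would be used for a single fetch from the 3D LUT texture.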

NVIDIA® GameWorks™ Documentation Rev. 1.0.220830 ©2014-2022. NVIDIA Corporation and affiliates. All Rights Reserved.