Camera Software Development Solution

Applies to: Jetson AGX Xavier series

This document describes the NVIDIA® Jetson AGX Xavier™ series camera software solution and explains the NVIDIA-supported and recommended camera software architecture for fast and optimal time to market. It also outlines the development options for customizing the camera solution for USB, YUV, and Bayer camera support.

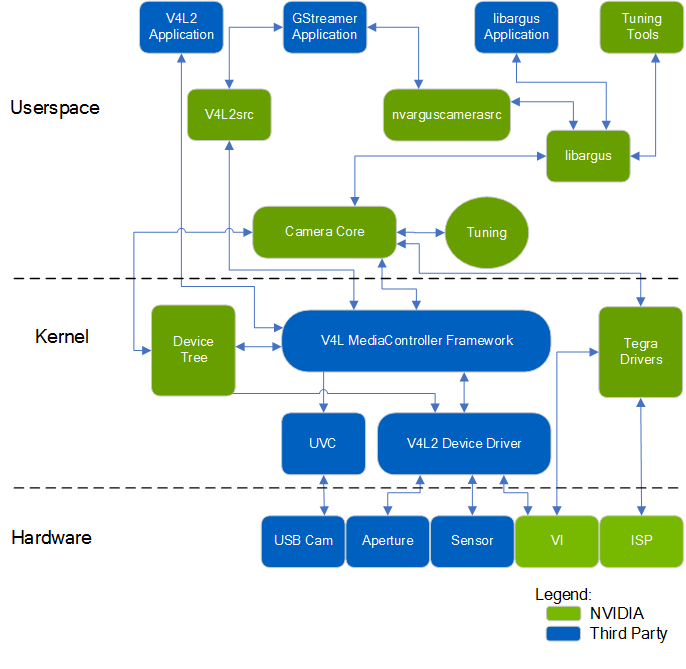

Camera Architecture Stack

The NVIDIA camera software architecture includes the following NVIDIA components, which allow for ease of development and customization:

• libargus—Provides a low-level API based on the camera core stack.

• nvarguscamerasrc—NVIDIA camera GStreamer plugin that provides options to control ISP properties using the ARGUS API.

• v4l2src—A standard Linux V4L2 application that uses direct kernel IOCTL calls to access V4L2 functionality.

NVIDIA provides the OV5693 Bayer sensor as a sample and tunes this sensor for the NVIDIA® Jetson™ platform. The driver code, based on the media controller framework, is available at:

./kernel/nvidia/drivers/media/i2c/ov5693.c

NVIDIA provides additional sensor support for Jetson Board Support Package (BSP) software releases. Developers must work with NVIDIA certified camera partners for any Bayer sensor and tuning support. The work involved includes:

• Sensor driver development

• Custom tools for sensor characterization

• Image quality tuning

These tools and operating mechanisms are NOT part of the public Jetson Embedded Platform (JEP) Board Support Package release.

Camera API Matrix

The camera APIs available for each camera configuration are as follows.

| | Uses Jetson ISP (CSI Interface) | Does not use Jetson ISP † (CSI Interface) | USB (UVC) * (USB Interface) |
|---|---|---|---|
| Camera API | libargus, GStreamer (GST-nvarguscamerasrc) | V4L2 | V4L2 |

* Drivers are not provided by NVIDIA; you must write the drivers. Customers can support peripheral bus devices such as Ethernet and non-UVC USB.

† Libargus is the preferred path to access the camera.

Note: The default OV5693 camera does not contain an integrated ISP. Use of the V4L2 API with the reference camera records “raw” Bayer data.

Approaches for Validating and Testing the V4L2 Driver

Once your driver development is complete, use the tools and applications described in the following sections to validate and test the V4L2 driver interface.

For general GStreamer and multimedia operations, see the Multimedia User Guide, available from the NVIDIA Embedded Download Center.

Applications Using libargus Low-Level APIs

The NVIDIA Multimedia API provides samples that demonstrate how to use the libargus APIs to preview, capture, and record the sensor stream.

The Multimedia API must be installed with NVIDIA® SDK Manager or as a standalone package. SDK Manager is available for download from the Jetson Download Center.

The Multimedia API Reference may be downloaded from the Jetson Download Center.

Applications Using GStreamer with the nvarguscamerasrc Plugin

Use the camera features that the nvarguscamerasrc GStreamer plugin supports through the Argus API to:

• Enable ISP post-processing for Bayer sensors

• Perform format conversion

• Generate output directly for YUV sensors and USB cameras

For example, for a Bayer sensor with the format 1080p/30/BGGR:

• Save the preview into a file as follows:

gst-launch-1.0 nvarguscamerasrc num-buffers=200 ! 'video/x-raw(memory:NVMM),width=1920, height=1080, framerate=30/1, format=NV12' ! omxh264enc ! qtmux ! filesink location=test.mp4 -e

• Render the preview to an HDMI™ screen as follows:

gst-launch-1.0 nvarguscamerasrc ! 'video/x-raw(memory:NVMM), width=1920, height=1080, format=(string)NV12, framerate=(fraction)30/1' ! nvoverlaysink -e

Applications Using GStreamer with V4L2 Source Plugin

Using a YUV sensor or a USB camera to output YUV images without ISP post-processing does not use the NVIDIA camera software stack.

For example, a USB camera with the format 480p/30/YUY2:

• Save the preview into a file as follows (based on software converter):

gst-launch-1.0 v4l2src num-buffers=200 device=/dev/video0 ! 'video/x-raw, format=YUY2, width=640, height=480, framerate=30/1' ! videoconvert ! omxh264enc ! qtmux ! filesink location=test.mp4 -ev

• Render the preview to a screen as follows:

# If you are operating from a remote console, first run: export DISPLAY=:0

gst-launch-1.0 v4l2src device=/dev/video0 ! 'video/x-raw, format=YUY2, width=640, height=480, framerate=30/1' ! xvimagesink -ev

For a YUV sensor with the format 480p/30/UYVY:

• Save the preview into a file as follows (based on hardware accelerated converter):

gst-launch-1.0 -v v4l2src device=/dev/video0 ! 'video/x-raw, format=(string)UYVY, width=(int)640, height=(int)480, framerate=(fraction)30/1' ! nvvidconv ! 'video/x-raw(memory:NVMM), format=(string)NV12' ! omxh264enc ! qtmux ! filesink location=test.mp4 -ev

• Render the preview to a screen as follows:

# If you are operating from a remote console, first run: export DISPLAY=:0

gst-launch-1.0 v4l2src device=/dev/video0 ! 'video/x-raw, format=(string)UYVY, width=(int)640, height=(int)480, framerate=(fraction)30/1' ! xvimagesink -ev
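The V4L2 preview pipelines above differ mainly in device, pixel format, and resolution. As a minimal sketch, a pair of shell helpers can make these easier to vary during testing. The function names v4l2_caps and v4l2_preview are hypothetical, not part of GStreamer or any NVIDIA package; the pipeline elements are the same ones used in the examples above.

```shell
# Build a GStreamer caps string for a raw V4L2 source.
# Arguments: format width height fps
# (Hypothetical helper, shown for illustration only.)
v4l2_caps() {
    echo "video/x-raw, format=$1, width=$2, height=$3, framerate=$4/1"
}

# Preview a V4L2 device on screen, using the same elements as the
# examples above (v4l2src and xvimagesink).
# Arguments: device format width height fps
v4l2_preview() {
    gst-launch-1.0 v4l2src device="$1" ! "$(v4l2_caps "$2" "$3" "$4" "$5")" ! xvimagesink -ev
}

# Example (requires a camera at /dev/video0 and a display):
#   v4l2_preview /dev/video0 YUY2 640 480 30
```

Keeping the caps string in one place makes it harder for the save and preview pipelines to drift apart when you change the resolution or frame rate.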

Applications Using V4L2 IOCTL Directly

Use V4L2 IOCTLs directly to verify basic functionality during sensor bring-up.

For example, capture from a Bayer sensor with the format 1080p/30/RG10:

v4l2-ctl --set-fmt-video=width=1920,height=1080,pixelformat=RG10 --stream-mmap --stream-count=1 -d /dev/video0 --stream-to=ov5693.raw
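A quick sanity check on the captured file is to compare its size with the size expected for one frame. The sketch below assumes that each RG10 sample occupies a 16-bit word (2 bytes per pixel) and that the driver adds no line padding; verify both assumptions against your sensor mode. The function names are hypothetical and are not part of v4l2-ctl.

```shell
# Expected size in bytes of one raw frame.
# Arguments: width height [bytes_per_pixel]
# Assumption: 2 bytes per pixel for RG10 and no row padding.
expected_raw_bytes() {
    width=$1
    height=$2
    bytes_per_pixel=${3:-2}
    echo $(( width * height * bytes_per_pixel ))
}

# Compare a capture file against the expected single-frame size.
# Arguments: file width height [bytes_per_pixel]
# Prints "ok" and returns 0 on a match; otherwise prints a message and returns 1.
check_raw_capture() {
    file=$1
    [ -f "$file" ] || { echo "no such file: $file"; return 1; }
    expected=$(expected_raw_bytes "$2" "$3" "${4:-2}")
    actual=$(wc -c < "$file" | tr -d ' ')
    if [ "$actual" -eq "$expected" ]; then
        echo "ok"
    else
        echo "size mismatch: got $actual bytes, expected $expected"
        return 1
    fi
}

# Example, after running the v4l2-ctl command above:
#   check_raw_capture ov5693.raw 1920 1080
```

Under these assumptions a 1920x1080 frame is 1920 × 1080 × 2 = 4147200 bytes. A different size may point to a different pixel packing or to row padding rather than to a capture failure.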

ISP Support

ISP support is built in to the camera core. The release package includes initial ISP configuration files for reference sensors.

Note: CSI cameras with an integrated ISP, and USB cameras, can work in ISP bypass mode. ISP support is provided for the Jetson Developer Kit (OV5693) RAW camera module. ISP support for other camera modules is available through third-party partners.

Infinite Timeout Support

This use case differs from typical camera use cases, in which the camera sensor streams frames continuously and the camera driver and associated hardware use finite timeouts while waiting for camera frames.

In this use case a camera sensor is triggered to generate a specified number of frames, after which it stops streaming indefinitely. Whenever it resumes streaming, the camera driver must resume capturing without timeout issues. The camera driver and hardware must always be ready to capture frames coming from the CSI sensor. Since the camera driver does not know when streaming will start again, it must wait indefinitely for incoming frames.

To support this use case, the camera sensor hardware module must support suspending and resuming streaming.

To enable this feature, stop the camera server (the nvargus-daemon service), then run the daemon with the enableCamInfiniteTimeout environment variable set:

sudo service nvargus-daemon stop

sudo enableCamInfiniteTimeout=1 nvargus-daemon
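To confirm that the daemon was actually started with the variable set, one option on Linux is to inspect the process environment under /proc. This is a generic Linux technique rather than an NVIDIA-provided tool. The helper name environ_has is hypothetical, and reading the environ file of a root-owned process typically requires root.

```shell
# Check whether a NUL-separated environ file (such as /proc/<pid>/environ)
# defines the named variable. Returns 0 if found, 1 otherwise.
# (Hypothetical helper, shown for illustration only.)
environ_has() {
    environ_file=$1
    var_name=$2
    tr '\0' '\n' < "$environ_file" | grep -q "^${var_name}="
}

# Example, run as root while nvargus-daemon is running:
#   environ_has "/proc/$(pidof nvargus-daemon)/environ" enableCamInfiniteTimeout && echo enabled
```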

Symlinks Changed by Mesa Installation

Installation of Mesa EGL may create a /usr/lib/<ABI_directory>/libEGL.so symlink, overwriting the symlink to the implementation library that must be used instead, /usr/lib/<ABI_directory>/tegra-egl/libEGL.so. This disrupts any client of EGL, including libraries in the release that use it for EGLStreams.

In this release, the symlink is replaced when the system is rebooted, fixing this issue on reboot. Similar workarounds have been applied in previous releases for other libraries such as libGL and libglx.
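To check where the symlink currently points before rebooting, you can inspect its target. The sketch below is a minimal illustration: the helper name is hypothetical, and the path in the example comment assumes that the ABI directory is aarch64-linux-gnu, which may differ on your system.

```shell
# Report whether a libEGL.so symlink points into the tegra-egl directory.
# Returns 0 if the link target contains "tegra-egl/", 1 otherwise
# (including when the path is not a symlink).
egl_symlink_is_tegra() {
    link=$1
    target=$(readlink "$link") || return 1
    case "$target" in
        *tegra-egl/*) echo "tegra: $target" ;;
        *) echo "other: $target"; return 1 ;;
    esac
}

# Example (ABI directory name is an assumption):
#   egl_symlink_is_tegra /usr/lib/aarch64-linux-gnu/libEGL.so
```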

Other References

• For details on integrating with other Jetson Multimedia components using V4L2 or GStreamer, see the Jetson Linux Multimedia API Reference.

• For details on Argus, see the LibArgus JetPack documentation.

• For details on building a custom camera solution, see our Preferred Partners Community.