Jetson Linux Multimedia API Reference 32.4.2 Release


This sample demonstrates the simplest way to decode video, run NVIDIA® TensorRT™ inference on the decoded frames, and save the bounding box information to the result.txt file. TensorRT was previously known as GPU Inference Engine (GIE).

This sample does not require a camera or display.

$ cd $HOME/tegra_multimedia_api/samples/04_video_dec_trt

$ make

$ ./video_dec_trt [Channel-num] <in-file1> <in-file2> ... <in-format> [options]

The following example generates two results: result0.txt and result1.txt. The results contain normalized rectangle coordinates for detected objects.

$ ./video_dec_trt 2 ../../data/Video/sample_outdoor_car_1080p_10fps.h264 \
    ../../data/Video/sample_outdoor_car_1080p_10fps.h264 H264 \
    --trt-deployfile ../../data/Model/resnet10/resnet10.prototxt \
    --trt-modelfile ../../data/Model/resnet10/resnet10.caffemodel \
    --trt-mode 0
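To make the argument layout of the example concrete, here is a minimal Python sketch that assembles the same command line. The helper name `build_video_dec_trt_command` is hypothetical and not part of the sample. The sketch assumes that Channel-num equals the number of input files, as in the example, where 2 is followed by two files.

```python
from typing import List, Optional


def build_video_dec_trt_command(
    in_files: List[str],
    in_format: str,
    deploy_file: Optional[str] = None,
    model_file: Optional[str] = None,
    trt_mode: Optional[int] = None,
    enable_perf: bool = False,
) -> List[str]:
    """Assemble an argument list for ./video_dec_trt (hypothetical helper)."""
    if not in_files:
        raise ValueError("at least one input file is required")
    if trt_mode is not None and trt_mode not in (0, 1, 2):
        raise ValueError("--trt-mode takes a value from 0 to 2")

    # Assumption: Channel-num is the number of input files that follow it.
    cmd = ["./video_dec_trt", str(len(in_files)), *in_files, in_format]
    if deploy_file is not None:
        cmd += ["--trt-deployfile", deploy_file]
    if model_file is not None:
        cmd += ["--trt-modelfile", model_file]
    if trt_mode is not None:
        cmd += ["--trt-mode", str(trt_mode)]
    if enable_perf:
        cmd.append("--trt-enable-perf")
    return cmd


if __name__ == "__main__":
    video = "../../data/Video/sample_outdoor_car_1080p_10fps.h264"
    print(" ".join(build_video_dec_trt_command([video, video], "H264", trt_mode=0)))
```

The returned list can be passed to `subprocess.run` on the target device. Building the list rather than a single string avoids shell quoting issues with file paths.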

$ sudo ~/jetson_clocks.sh

Channel-num option. For information on opening more than 16 video devices, see the following NVIDIA® DevTalk topic:

$ rm trtModel.cache

Inference Performance(ms per batch):xx

Wait from decode takes(ms per batch):xx

$ cp result*.txt $HOME/tegra_multimedia_api/samples/02_video_dec_cuda

$ cd $HOME/tegra_multimedia_api/samples/02_video_dec_cuda

$ ./video_dec_cuda ../../data/Video/sample_outdoor_car_1080p_10fps.h264 H264 --bbox-file result0.txt

$ ./video_dec_cuda ../../data/Video/sample_outdoor_car_1080p_10fps.h264 H264 --bbox-file result1.txt

The data pipeline is as follows:

Input video file -> Decoder -> VIC -> TensorRT Inference -> Plain text file with Bounding Box info
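The flow of data through these stages can be sketched with chained Python generators. This is an illustration of the stage ordering only, not the sample's implementation. Every function here is a hypothetical stand-in, the dummy bounding box is invented, and the output line layout is made up rather than taken from the actual result.txt format.

```python
import io
from typing import Iterable, Iterator, List, TextIO, Tuple

# Hypothetical box layout: normalized (x, y, width, height).
Box = Tuple[float, float, float, float]


def decode(chunks: Iterable[bytes]) -> Iterator[dict]:
    """Stand-in for the decoder: yields one 'frame' per input chunk."""
    for index, chunk in enumerate(chunks):
        yield {"index": index, "format": "decoder-native", "size": len(chunk)}


def convert(frames: Iterable[dict]) -> Iterator[dict]:
    """Stand-in for VIC: retags each frame with the format the network expects."""
    for frame in frames:
        yield {**frame, "format": "network-input"}


def infer(frames: Iterable[dict]) -> Iterator[Tuple[int, List[Box]]]:
    """Stand-in for TensorRT inference: emits one fixed dummy box per frame."""
    for frame in frames:
        assert frame["format"] == "network-input"
        yield frame["index"], [(0.25, 0.25, 0.5, 0.5)]


def write_results(detections: Iterable[Tuple[int, List[Box]]], out: TextIO) -> int:
    """Writes one text line per box (made-up layout) and returns the frame count."""
    count = 0
    for index, boxes in detections:
        for x, y, w, h in boxes:
            out.write(f"{index} {x} {y} {w} {h}\n")
        count += 1
    return count


def run_pipeline(chunks: Iterable[bytes], out: TextIO) -> int:
    # Input -> Decoder -> VIC -> inference -> plain text
    return write_results(infer(convert(decode(chunks))), out)


if __name__ == "__main__":
    buffer = io.StringIO()
    frames = run_pipeline([b"a", b"bb", b"ccc"], buffer)
    print(frames, "frames processed")
    print(buffer.getvalue(), end="")
```

Generators keep each stage independent of the others. In the sample itself the stages run in separate threads that exchange buffers.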

The sample does the following:

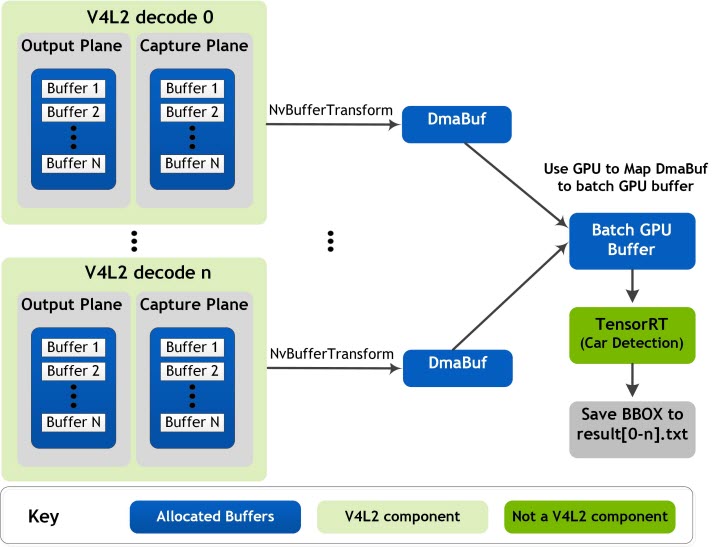

The following block diagram shows the video decoder pipeline and memory sharing between different engines. This memory sharing also applies to other L4T Multimedia samples.

This sample uses the following key structures and classes:

The global structure context_t manages all the resources in the application.

| Element | Description |
|---|---|
| NvVideoDecoder | Contains all video decoding-related elements and functions. |
| NvVideoConverter | Contains elements and functions for video format conversion. |
| EGLDisplay | Specifies the EGLImage used for CUDA processing. |
| conv_output_plane_buf_queue | Specifies the output plane queue for video conversion. |
| TRT_Context | Specifies interfaces for loading Caffemodel and performing inference. |

| Member | Description |
|---|---|
| decCaptureLoop | Gets buffers from the decoder capture plane, pushes them to the converter, and handles resolution changes. |
| Conv outputPlane dqThread | Returns the buffers dequeued from the converter output plane to the decoder capture plane. |
| Conv capturePlane dqThread | Gets buffers from the converter capture plane and pushes them to the TensorRT buffer queue. |
| trtThread | Specifies the CUDA process and inference characteristics. |

To display and verify the results and to scale the rectangle parameters, use the 02_video_dec_cuda sample as follows:

$ ./video_dec_cuda <in-file> <in-format> --bbox-file result.txt
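Scaling a normalized rectangle to a given frame size is simple arithmetic, sketched below in Python. The sketch assumes that a rectangle is stored as (x, y, width, height) with each value in the range 0 to 1 relative to the frame. That layout is an assumption, and `scale_rect` is a hypothetical helper, not a function of either sample.

```python
from typing import Tuple


def scale_rect(
    rect: Tuple[float, float, float, float],
    frame_width: int,
    frame_height: int,
) -> Tuple[int, int, int, int]:
    """Convert an assumed normalized (x, y, w, h) rectangle to pixel units."""
    x, y, w, h = rect

    # Scale both corners, then clamp them to the frame so the box stays visible.
    left = min(max(round(x * frame_width), 0), frame_width)
    top = min(max(round(y * frame_height), 0), frame_height)
    right = min(max(round((x + w) * frame_width), 0), frame_width)
    bottom = min(max(round((y + h) * frame_height), 0), frame_height)

    return left, top, right - left, bottom - top


if __name__ == "__main__":
    # A box covering the lower middle of a 1080p frame.
    print(scale_rect((0.25, 0.5, 0.5, 0.25), 1920, 1080))
```

Scaling the corners rather than the width and height keeps the right and bottom edges inside the frame after clamping.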

The sample does the following:

Uses the default deploy file resnet10.prototxt and the default model file resnet10.caffemodel, both located in this directory:

$SDKDIR/data/Model/resnet10

Performs end-of-stream (EOS) processing as follows:

a. Completely reads the file.

b. Pushes a null v4l2buf to the decoder.

c. Waits for all output plane buffers to return.

d. Sets get_eos:

decCap thread exit

e. Ends the TensorRT thread.

f. Sends EOS to the converter:

conv output plane dqThread callback return false

conv output plane dqThread exit

conv capture plane dqThread callback return false

conv capture plane dqThread exit

g. Deletes the decoder:

deinit output plane and capture plane buffers

h. Deletes the converter:

unmap capture plane buffers
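The general idea behind the steps above is that an end-of-stream marker travels through the pipeline and each thread exits when it sees the marker. The Python sketch below illustrates that idea with queues and a sentinel value. It is a simplified analogy, not the sample's code. The stage names, the `EOS` sentinel, and the exit order are assumptions chosen for clarity, and the order does not reproduce the exact sequence listed above.

```python
import queue
import threading
from typing import Callable, List, Optional, Tuple

EOS = None  # Sentinel, loosely analogous to the null buffer pushed to the decoder.


def stage(
    name: str,
    inbox: queue.Queue,
    outbox: Optional[queue.Queue],
    work: Callable,
    log: List[str],
) -> None:
    """Process items until EOS arrives, then forward EOS downstream and exit."""
    while True:
        item = inbox.get()
        if item is EOS:
            log.append(f"{name} exit")
            if outbox is not None:
                outbox.put(EOS)
            return
        result = work(item)
        if outbox is not None:
            outbox.put(result)


def run(frames: List[int]) -> Tuple[List[int], List[str]]:
    q_dec: queue.Queue = queue.Queue()
    q_conv: queue.Queue = queue.Queue()
    q_trt: queue.Queue = queue.Queue()
    results: List[int] = []
    log: List[str] = []

    threads = [
        threading.Thread(target=stage, args=("decoder", q_dec, q_conv, lambda f: f, log)),
        threading.Thread(target=stage, args=("converter", q_conv, q_trt, lambda f: f, log)),
        threading.Thread(target=stage, args=("trt", q_trt, None, results.append, log)),
    ]
    for t in threads:
        t.start()

    for frame in frames:  # "Completely reads the file."
        q_dec.put(frame)
    q_dec.put(EOS)  # Signal end of stream after the last frame.

    for t in threads:  # Wait for every stage to drain and exit.
        t.join()
    return results, log


if __name__ == "__main__":
    processed, exits = run([1, 2, 3])
    print(processed)
    print(exits)
```

Because each stage logs its exit before forwarding the sentinel, the stages shut down strictly in pipeline order and no frame queued before EOS is lost.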

./video_dec_trt [Channel-num] <in-file1> <in-file2> ... <in-format> [options]

| Option | Description |
|---|---|
| --trt-deployfile | Sets the deploy file name. |
| --trt-modelfile | Sets the model file name. |
| --trt-mode <int> | Specifies whether to use float16, where <int> is a value from 0 to 2. |
| --trt-enable-perf | Enables performance measurement. |