TLT Launcher

Transfer Learning Toolkit (TLT) encapsulates DNN training pipelines that may be developed across different training platforms. To abstract these details from the user, TLT is now packaged with a launcher CLI. The CLI is a python3 wheel package that may be installed using pip. Once installed, the launcher CLI spares the user from having to instantiate and run several TLT containers and map the commands accordingly.

In this release, the TLT package includes 2 underlying dockers, based on the training framework. Each docker contains an entrypoint for each task it supports, and the entrypoint runs the sub-tasks associated with that task. Tasks and sub-tasks follow this cascaded structure:

tlt <task> <sub-task> <args>

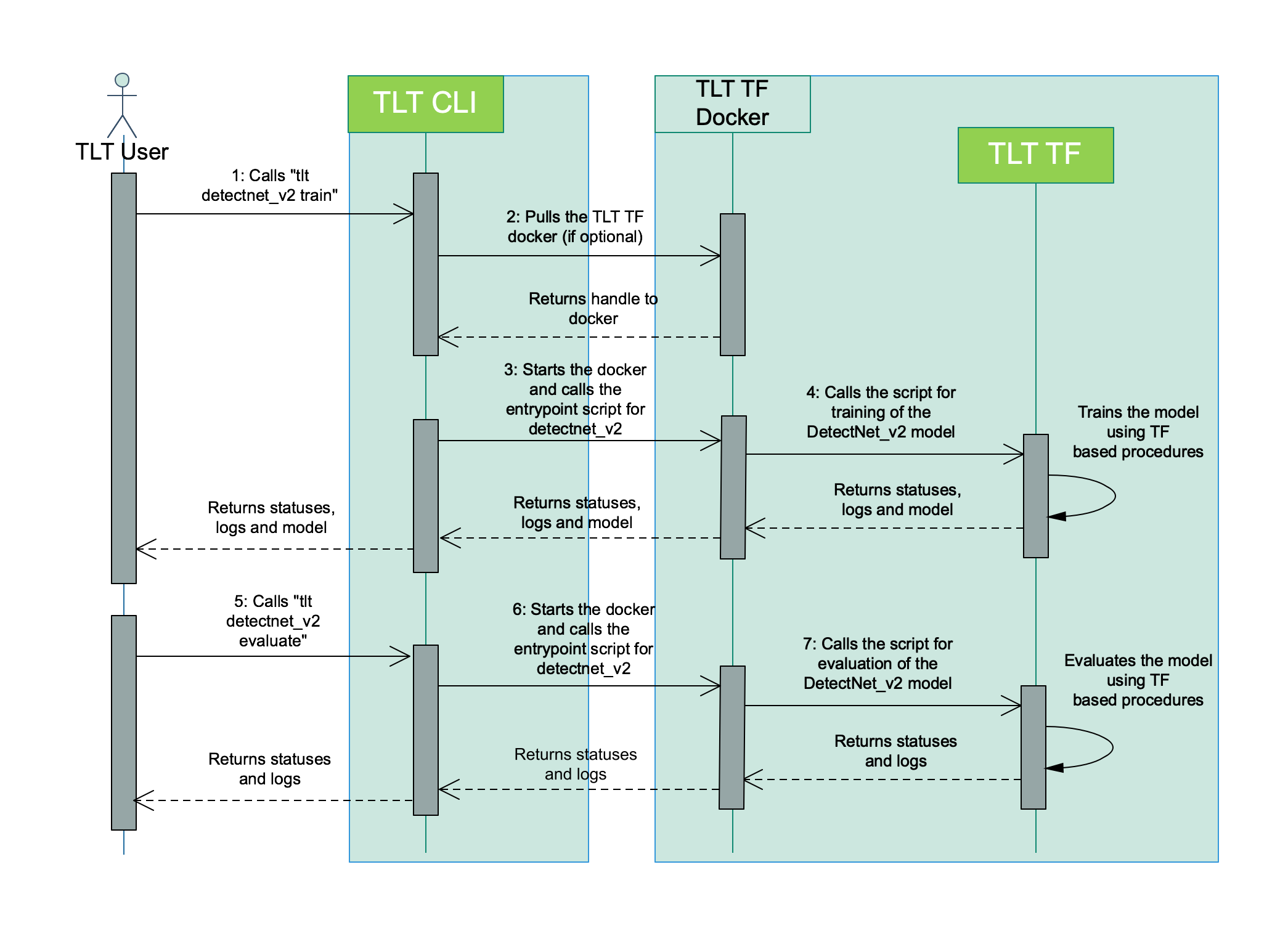
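The cascaded `tlt <task> <sub-task> <args>` structure can be illustrated with a short Python sketch. It uses nested `argparse` sub-parsers. Whether the launcher itself is built this way is an assumption, and the `TASKS` table and `split_command` helper are hypothetical names that cover only `detectnet_v2`.

```python
import argparse

# Hypothetical task table: one task with the sub-tasks that
# `tlt detectnet_v2 --help` reports.
TASKS = {
    "detectnet_v2": [
        "calibration_tensorfile", "dataset_convert", "evaluate",
        "export", "inference", "prune", "train",
    ],
}


def build_parser():
    """Build a two-level parser: task first, then sub-task."""
    parser = argparse.ArgumentParser(prog="tlt", description="Launcher sketch")
    task_parsers = parser.add_subparsers(dest="task", required=True)
    for task, subtasks in TASKS.items():
        task_parser = task_parsers.add_parser(task)
        subtask_parsers = task_parser.add_subparsers(dest="subtask", required=True)
        for subtask in subtasks:
            # The sub-task arguments are not interpreted here; they are
            # passed through untouched as "extra" arguments.
            subtask_parsers.add_parser(subtask, add_help=False)
    return parser


def split_command(argv):
    """Return (task, subtask, remaining_args) for a launcher-style command."""
    namespace, extra = build_parser().parse_known_args(argv)
    return namespace.task, namespace.subtask, extra
```

For example, `split_command(["detectnet_v2", "train", "--gpus", "2"])` separates the task and sub-task from the arguments that would be forwarded to the entrypoint.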

The tasks are broadly divided into computer vision and conversational AI. For example, DetectNet_v2 is a computer vision task for object detection in TLT that supports sub-tasks such as train, prune, evaluate, and export. When the user executes a command, for example `tlt detectnet_v2 train --help`, the TLT launcher does the following:

1. Pulls the required TLT container with the entrypoint for DetectNet_v2
2. Creates an instance of the container
3. Runs the `detectnet_v2` entrypoint with the `train` sub-task

You may visualize the user interaction diagram for the TLT Launcher when running training and evaluation for a DetectNet_v2 model as follows:

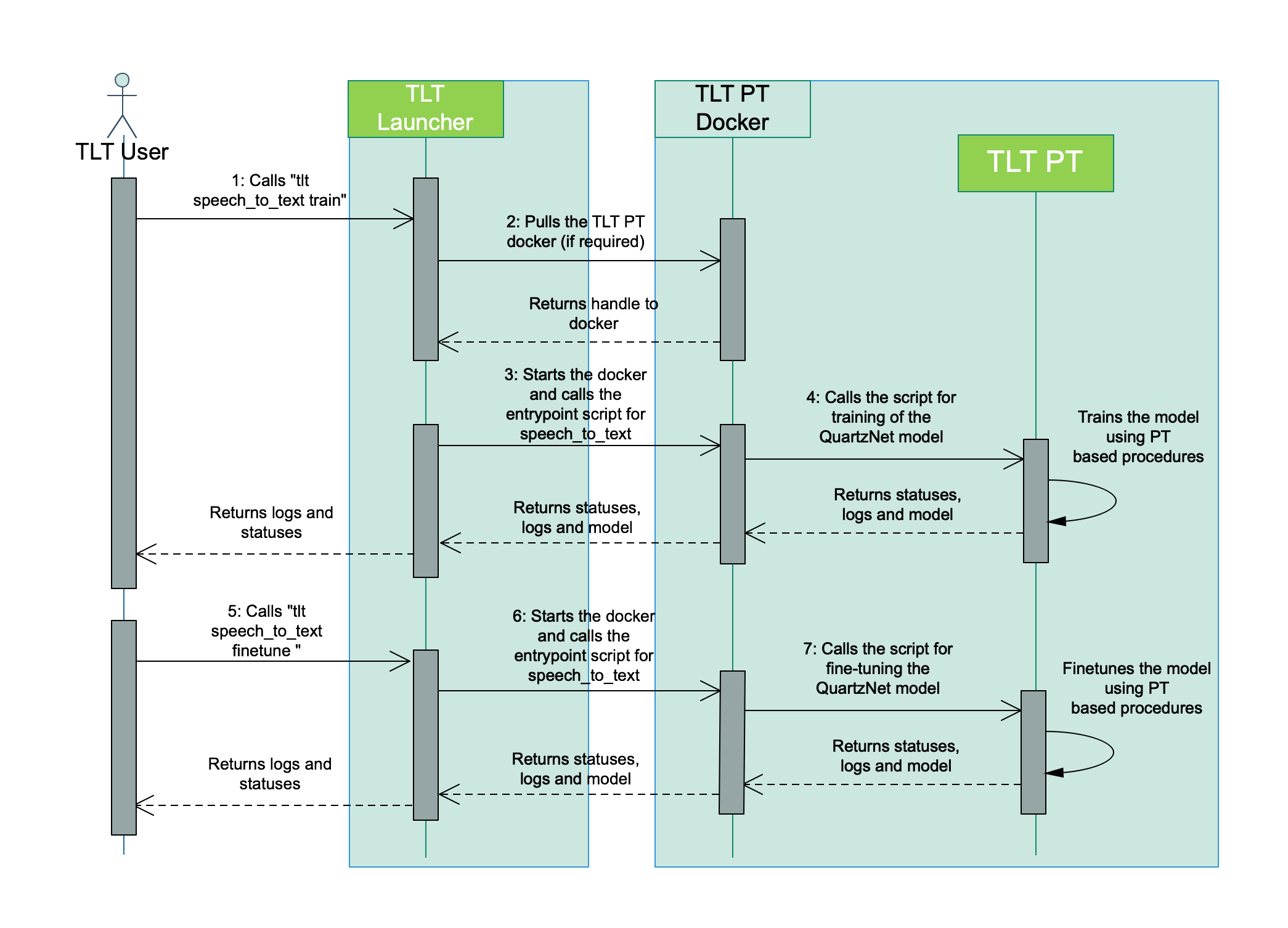

Similarly, the interaction diagram for training a QuartzNet speech_to_text model is as follows:

The following sections cover supported commands and configuring the launcher.

Running the launcher

Once the launcher has been installed, the workflow to run the launcher is as follows.

Listing the tasks supported in the docker.

After installing the launcher, you can list the tasks that it supports. The output of the `tlt --help` command is as follows:

    usage: tlt [-h]
               {list,stop,info,augment,classification,detectnet_v2,dssd,emotionnet,faster_rcnn,fpenet,gazenet,gesturenet,
               heartratenet,intent_slot_classification,lprnet,mask_rcnn,punctuation_and_capitalization,question_answering,
               retinanet,speech_to_text,ssd,text_classification,tlt-converter,token_classification,unet,yolo_v3,yolo_v4}
               ...

    Launcher for TLT

    optional arguments:
      -h, --help            show this help message and exit

    tasks:
      {list,stop,info,augment,classification,detectnet_v2,dssd,emotionnet,faster_rcnn,fpenet,gazenet,gesturenet,heartratenet
      ,intent_slot_classification,lprnet,mask_rcnn,punctuation_and_capitalization,question_answering,retinanet,speech_to_text,
      ssd,text_classification,tlt-converter,token_classification,unet,yolo_v3,yolo_v4}

Configuring the launcher instance.

Running Deep Neural Networks implies working on large datasets. These datasets usually reside on network share drives with significantly higher storage capacity. Since the TLT launcher uses docker containers under the hood, these drives/mount points need to be mapped to the docker. The launcher instance can be configured in the `~/.tlt_mounts.json` file.

The launcher config file consists of 3 sections, namely:

- `Mounts`
- `Envs`
- `DockerOptions`

The `Mounts` parameter defines the paths on the local machine that should be mapped to the docker. This is a list of `json` dictionaries, each containing the `source` path on the local machine and the `destination` path that it is mapped to for the TLT commands.

The `Envs` parameter defines the environment variables to be set in the respective TLT docker. This is also a list of dictionaries. Each dictionary entry has 2 key-value pairs defined.

- `variable`: The name of the environment variable you would like to set
- `value`: The value of the environment variable

The `DockerOptions` parameter defines the options to be set when invoking the docker instance. This parameter is a dictionary containing key-value pairs of the parameter and the option to set. Currently, the TLT launcher only allows users to configure the following parameters.

- `shm_size`: Defines the shared memory size of the docker. If this parameter isn’t set, the TLT instance allocates 64MB by default. TLT recommends setting this to `"16G"`, thereby allocating 16GB of shared memory.
- `ulimits`: Defines the user limits in the docker. This parameter corresponds to the ulimit parameters in `/etc/security/limits.conf`. TLT recommends that users set `memlock` to `-1` and `stack` to `67108864`.
- `user`: Defines the user id and group id of the user that runs the commands in the docker. By default, if this parameter isn’t defined in `~/.tlt_mounts.json`, the launcher uses the uid and gid of the root user. However, this means that directories created by the TLT dockers are owned by root. If you would like the user in the docker to be the same as the host user, set this parameter to “UID:GID”, where UID and GID can be obtained from the command line by running `id -u` and `id -g`.
- `ports`: Defines the ports in the docker to be mapped to the host. You may specify this parameter as a dictionary containing the map between the port in the docker and the port on the host machine. For example, if you wish to expose port 8888 and port 8000, this parameter would look as follows:

"ports":{ "8888":"8888", "8000":"8000" }

Please use the following code block as a sample for the `~/.tlt_mounts.json` file. In this sample, we define 3 mounts and an environment variable called `CUDA_DEVICE_ORDER`. For `DockerOptions`, we set a shared memory size of `16G`, set the user limits, and run the commands as the host user. We also bind port 8888 from the docker to the host.

    {
        "Mounts": [
            {
                "source": "/path/to/your/data",
                "destination": "/workspace/tlt-experiments/data"
            },
            {
                "source": "/path/to/your/local/results",
                "destination": "/workspace/tlt-experiments/results"
            },
            {
                "source": "/path/to/config/files",
                "destination": "/workspace/tlt-experiments/specs"
            }
        ],
        "Envs": [
            {
                "variable": "CUDA_DEVICE_ORDER",
                "value": "PCI_BUS_ID"
            }
        ],
        "DockerOptions": {
            "shm_size": "16G",
            "ulimits": {
                "memlock": -1,
                "stack": 67108864
            },
            "user": "1000:1000",
            "ports": {
                "8888": 8888
            }
        }
    }
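A config file of this shape can also be generated rather than written by hand, which avoids hard-coding the `user` value. The following is a minimal sketch, assuming a POSIX host. The helper names `build_launcher_config` and `write_launcher_config` are hypothetical and are not part of the launcher.

```python
import json
import os
from pathlib import Path


def build_launcher_config(mounts, shm_size="16G"):
    """Return a launcher config dict.

    `mounts` is a list of (source, destination) pairs.
    """
    return {
        "Mounts": [
            {"source": str(source), "destination": str(destination)}
            for source, destination in mounts
        ],
        "DockerOptions": {
            "shm_size": shm_size,
            # Values recommended in the text above.
            "ulimits": {"memlock": -1, "stack": 67108864},
            # Same values that `id -u` and `id -g` print (POSIX only).
            "user": f"{os.getuid()}:{os.getgid()}",
        },
    }


def write_launcher_config(config, path):
    """Write the config as JSON and return the resolved path."""
    path = Path(path).expanduser()
    path.write_text(json.dumps(config, indent=4) + "\n")
    return path
```

Calling `write_launcher_config(build_launcher_config([("/path/to/your/data", "/workspace/tlt-experiments/data")]), "~/.tlt_mounts.json")` would overwrite any existing file at that path, so back it up first if needed.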

Similarly, a sample config file containing 2 mount points and no docker options is as below.

    {
        "Mounts": [
            {
                "source": "/path/to/your/experiments",
                "destination": "/workspace/tlt-experiments"
            },
            {
                "source": "/path/to/config/files",
                "destination": "/workspace/tlt-experiments/specs"
            }
        ]
    }
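To make the meaning of each config section concrete, the sketch below translates a config dictionary into the roughly equivalent `docker run` command-line flags (`-v`, `-e`, `--shm-size`, `--ulimit`, `--user`, `-p`). This is an illustration only: how the launcher actually starts its containers is not described here, GPU-related flags are omitted, and `docker_run_args` is a hypothetical helper.

```python
def docker_run_args(config, image, command):
    """Translate a launcher-style config dict into a `docker run` argument list."""
    args = ["docker", "run", "--rm"]
    for mount in config.get("Mounts", []):
        args += ["-v", f"{mount['source']}:{mount['destination']}"]
    for env in config.get("Envs", []):
        args += ["-e", f"{env['variable']}={env['value']}"]
    options = config.get("DockerOptions", {})
    if "shm_size" in options:
        args += ["--shm-size", str(options["shm_size"])]
    for name, limit in options.get("ulimits", {}).items():
        args += ["--ulimit", f"{name}={limit}"]
    if "user" in options:
        args += ["--user", str(options["user"])]
    for container_port, host_port in options.get("ports", {}).items():
        # The config maps docker port -> host port; docker's -p flag
        # expects host_port:container_port.
        args += ["-p", f"{host_port}:{container_port}"]
    return args + [image] + list(command)
```

With the two-mount sample above, the result contains two `-v` pairs and no other options, followed by the image name and the task command.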

Running a task.

Once you have installed the TLT launcher, you may now run the tasks supported by TLT using the following command format.

tlt <task> <subtask> <cli_args>

To view the sub-tasks supported by a certain task, you may run the command following the template

tlt <task> --help

For example, listing the sub-tasks of `detectnet_v2` produces the following output:

    $ tlt detectnet_v2 --help
    Using TensorFlow backend.
    usage: detectnet_v2 [-h] [--gpus GPUS] [--gpu_index GPU_INDEX [GPU_INDEX ...]] [--use_amp] [--log_file LOG_FILE]
                        {calibration_tensorfile,dataset_convert,evaluate,export,inference,prune,train}
                        ...

    Transfer Learning Toolkit

    optional arguments:
      -h, --help            show this help message and exit
      --gpus GPUS           The number of GPUs to be used for the job.
      --gpu_index GPU_INDEX [GPU_INDEX ...]
                            The indices of the GPU's to be used.
      --use_amp             Flag to enable Auto Mixed Precision.
      --log_file LOG_FILE   Path to the output log file.

    tasks:
      {calibration_tensorfile,dataset_convert,evaluate,export,inference,prune,train}

The TLT launcher also supports a `run` command associated with every task to allow you to run custom scripts in the docker. This gives you the opportunity to bring in your own data pre-processing scripts and leverage the prebuilt dependencies and isolated dev environments in the TLT dockers.

For example, assume you have a shell script to download and preprocess the COCO dataset into TFRecords for MaskRCNN, which requires TensorFlow as a dependency. You can simply map the directory containing that script to the docker using the `Mounts` section of `~/.tlt_mounts.json`, as described in the "Configuring the launcher instance" step, and run it as

tlt mask_rcnn run /path/to/download_and_preprocess_coco.sh <script_args>
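Because commands run inside the docker, the script path passed to `run` has to be a path that exists inside the container, that is, under the `destination` of one of the configured mounts. The sketch below shows one way to work out that path from the `Mounts` list. `to_container_path` is a hypothetical helper, and the paths in the usage note are made up for illustration.

```python
from pathlib import PurePosixPath


def to_container_path(host_path, mounts):
    """Map a host path to its path inside the docker using `Mounts` entries."""
    host_path = PurePosixPath(host_path)
    best = None
    for mount in mounts:
        source = PurePosixPath(mount["source"])
        try:
            relative = host_path.relative_to(source)
        except ValueError:
            continue  # host_path is not under this mount's source
        # When mounts are nested, prefer the most specific (longest) source.
        if best is None or len(source.parts) > len(best[0].parts):
            best = (source, PurePosixPath(mount["destination"]) / relative)
    if best is None:
        raise ValueError(f"{host_path} is not under any configured mount")
    return str(best[1])
```

For instance, with a mount from `/home/user/scripts` to `/workspace/scripts`, the host file `/home/user/scripts/download_and_preprocess_coco.sh` maps to `/workspace/scripts/download_and_preprocess_coco.sh`.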

The `tlt` launcher CLI allows you to interactively run commands inside the docker associated with the tasks you wish to run. This is useful for debugging commands inside the docker and viewing the filesystem from inside the container, as opposed to viewing only the end output on the host system. To invoke an interactive session, run the `tlt` command with the task and no other arguments. For example, to run interactive commands inside the docker containing the detectnet_v2 task, run the following command:

tlt detectnet_v2

This command opens up an interactive session inside the `tlt-streamanalytics` docker.

Note

The interactive command uses the `~/.tlt_mounts.json` file to configure the launcher and mount paths in the host file system to the docker.

Once you are inside the interactive session, you may run a task and its associated sub-task by calling the `<task> <subtask> <cli_args>` commands without the `tlt` prefix.

For example, to train a detectnet_v2 model in the interactive session, run the following command after invoking an interactive session using `tlt detectnet_v2`:

detectnet_v2 train -e /path/to/experiment_spec.txt -k <key> -r /path/to/train/output --gpus <number of GPUs>

Handling launched processes

The TLT launcher allows users to list all the processes that were launched by an instance of the TLT launcher on the host machine, and to kill any jobs the user may deem unnecessary, using the `list` and `stop` commands.

Listing TLT launched processes

The `list` command, as the name suggests, prints out a tabulated list of running processes with the command that was used to invoke each process.

A sample output of the `tlt list` command when you have 2 processes running is as below.

    ==============  ==================  =============================================================================================================================================================================================
    container_id    container_status    command
    ==============  ==================  =============================================================================================================================================================================================
    5316a70139      running             detectnet_v2 train -e /workspace/tlt-experiments/detectnet_v2/experiment_dir_unpruned/experiment_spec.txt -k tlt_encode -r /workspace/tlt-experiments/detectnet_v2/experiment_dir_unpruned
    ==============  ==================  =============================================================================================================================================================================================
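If you want to post-process this table in a script, for example to collect container ids, it can be parsed by using the `=` border line to locate the columns. The sketch below assumes the layout shown in the sample output, with cell text aligned under the border runs. `parse_tlt_list` is a hypothetical helper, not a launcher API.

```python
import re


def _is_border(line):
    """Border lines of the table contain only '=' and spaces."""
    return set(line) <= {"=", " "}


def parse_tlt_list(output):
    """Parse tabulated `tlt list` output into a list of row dictionaries."""
    lines = [line.rstrip() for line in output.splitlines() if line.strip()]
    border = next((line for line in lines if _is_border(line)), None)
    if border is None:
        return []
    # Each run of '=' in the border marks one column; the last column is
    # left open-ended so long commands are not truncated.
    spans = [match.span() for match in re.finditer(r"=+", border)]
    spans[-1] = (spans[-1][0], None)

    def cells(line):
        return [line[start:end].strip() for start, end in spans]

    content = [line for line in lines if not _is_border(line)]
    if not content:
        return []
    header = cells(content[0])
    return [dict(zip(header, cells(row))) for row in content[1:]]
```

Each returned dictionary is keyed by the header names, so `row["container_id"]` gives the id to pass to `tlt stop --container_id`.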

Killing running TLT instances

The `tlt stop` command kills running containers should users wish to abort the respective session. The usage for the `tlt stop` command is as mentioned below.

    usage: tlt stop [-h] [--container_id CONTAINER_ID [CONTAINER_ID ...]] [--all]
                    {info,list,stop,augment,classification,classifynet,detectnet_v2,dssd,emotionnet,faster_rcnn,fpenet,gazenet,heartratenet,intent_slot_classification,lprnet,mask_rcnn,punctuation_and_capitalization,question_answering,retinanet,speech_to_text,ssd,text_classification,tlt-converter,token_classification,yolo_v3,yolo_v4}
                    ...

    optional arguments:
      -h, --help            show this help message and exit
      --container_id CONTAINER_ID [CONTAINER_ID ...]
                            Ids of the containers to be stopped.
      --all                 Kill all TLT running TLT containers.

    tasks:
      {info,list,stop,augment,classification,classifynet,detectnet_v2,dssd,emotionnet,faster_rcnn,fpenet,gazenet,heartratenet,intent_slot_classification,lprnet,mask_rcnn,punctuation_and_capitalization,question_answering,retinanet,speech_to_text,ssd,text_classification,tlt-converter,token_classification,yolo_v3,yolo_v4}

With `tlt stop`, you may choose to either

- Kill a subset of the containers shown by the `tlt list` command by providing multiple container ids to the launcher’s `--container_id` arg.

A sample output of the `tlt list` command after running `tlt stop --container_id 5316a70139` is as below.

    ==============  ==================  =============================================================================================================================================================================================
    container_id    container_status    command
    ==============  ==================  =============================================================================================================================================================================================
    5316a70139      running             detectnet_v2 train -e /workspace/tlt-experiments/detectnet_v2/experiment_dir_unpruned/experiment_spec.txt -k tlt_encode -r /workspace/tlt-experiments/detectnet_v2/experiment_dir_unpruned
    ==============  ==================  =============================================================================================================================================================================================

- Force kill all the containers by using the `tlt stop --all` command.

A sample output of the `tlt list` command after running the `tlt stop --all` command is as below.

    ==============  ==================  =========
    container_id    container_status    command
    ==============  ==================  =========
    ==============  ==================  =========
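When stopping containers from a script, the two modes of `tlt stop` can be wrapped as below. This is a minimal sketch that only uses the `--container_id` and `--all` flags shown in the usage text. The helper names are hypothetical, and `stop_containers` assumes the `tlt` launcher is installed and on `PATH`.

```python
import subprocess


def build_stop_command(container_ids=None, stop_all=False):
    """Return the argument list for `tlt stop`."""
    if stop_all:
        return ["tlt", "stop", "--all"]
    if not container_ids:
        raise ValueError("provide at least one container id or set stop_all=True")
    # --container_id accepts one or more ids.
    return ["tlt", "stop", "--container_id", *container_ids]


def stop_containers(container_ids=None, stop_all=False):
    """Run `tlt stop`; raises CalledProcessError if the command fails."""
    return subprocess.run(build_stop_command(container_ids, stop_all), check=True)
```

Building the argument list separately from running it keeps the command easy to inspect or log before anything is actually stopped.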

Retrieving information for the underlying TLT components

The `tlt info` command allows users to retrieve information about the underlying components in the launcher. To retrieve options for the `tlt info` command, you can use the `tlt info --help` command. The sample usage for the command is as follows:

    usage: tlt info [-h] [--verbose]
                    {info,list,stop,info,augment,classification,detectnet_v2, ... ,tlt-converter,token_classification,unet,yolo_v3,yolo_v4}
                    ...

    optional arguments:
      -h, --help            show this help message and exit
      --verbose             Print information about the TLT instance.

    tasks:
      {info,list,stop,info,augment,classification,detectnet_v2,dssd,emotionnet,faster_rcnn,fpenet,gazenet,gesturenet,heartratenet,intent_slot_classification,lprnet,mask_rcnn,punctuation_and_capitalization,question_answering,retinanet,speech_to_text,ssd,text_classification,tlt-converter,token_classification,unet,yolo_v3,yolo_v4}

When you run `tlt info`, the launcher returns concise information about the launcher, namely the docker container names, format version of the launcher config, TLT version, and publishing date.

    Configuration of the TLT Instance

    dockers: ['nvcr.io/nvidia/tlt-streamanalytics', 'nvcr.io/nvidia/tlt-pytorch']
    format_version: 1.0
    tlt_version: 3.0
    published_date: 02/02/2021

For more information about the dockers and the tasks supported by each docker, you may use the `--verbose` option of the `tlt info` command. A sample output of the `tlt info --verbose` command is shown below.

    Configuration of the TLT Instance

    dockers:
        nvcr.io/nvidia/tlt-streamanalytics:
            docker_tag: v3.0-dp-py3
            tasks:
                1. augment
                2. classification
                3. detectnet_v2
                4. dssd
                5. emotionnet
                6. faster_rcnn
                7. fpenet
                8. gazenet
                9. gesturenet
                10. heartratenet
                11. lprnet
                12. mask_rcnn
                13. retinanet
                14. ssd
                15. unet
                16. yolo_v3
                17. yolo_v4
                18. tlt-converter
        nvcr.io/nvidia/tlt-pytorch:
            docker_tag: v3.0-dp-py3
            tasks:
                1. speech_to_text
                2. text_classification
                3. question_answering
                4. token_classification
                5. intent_slot_classification
                6. punctuation_and_capitalization
    format_version: 1.0
    tlt_version: 3.0
    published_date: mm/dd/yyyy
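The verbose output amounts to a mapping from each docker to the tasks it serves, which is what lets the launcher pick a container for a given task. The lookup can be sketched as follows, with the table copied from the sample output above. `DOCKER_TASKS` and `docker_for_task` are hypothetical names, and the launcher's own data structures may differ.

```python
# Task lists copied from the sample `tlt info --verbose` output.
DOCKER_TASKS = {
    "nvcr.io/nvidia/tlt-streamanalytics": [
        "augment", "classification", "detectnet_v2", "dssd", "emotionnet",
        "faster_rcnn", "fpenet", "gazenet", "gesturenet", "heartratenet",
        "lprnet", "mask_rcnn", "retinanet", "ssd", "unet", "yolo_v3",
        "yolo_v4", "tlt-converter",
    ],
    "nvcr.io/nvidia/tlt-pytorch": [
        "speech_to_text", "text_classification", "question_answering",
        "token_classification", "intent_slot_classification",
        "punctuation_and_capitalization",
    ],
}


def docker_for_task(task):
    """Return the docker that provides the entrypoint for `task`."""
    for docker, tasks in DOCKER_TASKS.items():
        if task in tasks:
            return docker
    raise KeyError(f"unknown task: {task}")
```

For example, `docker_for_task("detectnet_v2")` resolves to the `tlt-streamanalytics` docker, while `docker_for_task("speech_to_text")` resolves to the `tlt-pytorch` docker.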

Useful Environment variables

The TLT launcher watches the following environment variables to override certain configurable parameters.

- `LAUNCHER_MOUNTS`: This environment variable defines the path to the default launcher configuration `.json` file. If not set, the launcher configuration is picked up from `~/.tlt_mounts.json`.
- `OVERRIDE_REGISTRY`: This environment variable defines the registry to pull the TLT dockers from. By default, the TLT dockers are hosted in NGC under the registry `nvcr.io`. For example, if you set the `OVERRIDE_REGISTRY` environment variable as shown below,

export OVERRIDE_REGISTRY="dockerhub.io"

the dockers would be

    dockerhub.io/nvidia/tlt-streamanalytics:<docker_tag>
    dockerhub.io/nvidia/tlt-pytorch:<docker_tag>

Note

- When using the `OVERRIDE_REGISTRY` variable, use the `docker login` command to log in to this registry.

docker login $OVERRIDE_REGISTRY
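The effect of the two variables can be summarized in a short Python sketch. It mirrors the behavior described above, with defaults of `~/.tlt_mounts.json` and `nvcr.io`. The function names are hypothetical, and the launcher's internal logic is assumed rather than known.

```python
import os

DEFAULT_CONFIG = "~/.tlt_mounts.json"
DEFAULT_REGISTRY = "nvcr.io"


def config_path(environ=None):
    """Return the launcher config path, honoring LAUNCHER_MOUNTS if set."""
    environ = os.environ if environ is None else environ
    return os.path.expanduser(environ.get("LAUNCHER_MOUNTS", DEFAULT_CONFIG))


def docker_image(name, tag, environ=None):
    """Return the full image reference, honoring OVERRIDE_REGISTRY if set."""
    environ = os.environ if environ is None else environ
    registry = environ.get("OVERRIDE_REGISTRY", DEFAULT_REGISTRY)
    return f"{registry}/{name}:{tag}"
```

Passing an explicit `environ` dictionary makes the functions easy to try out without changing the real environment, for example `docker_image("nvidia/tlt-pytorch", "v3.0-dp-py3", {"OVERRIDE_REGISTRY": "dockerhub.io"})`.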