Performance

Traning Performace Results

We applied multiple optimizations to speedup the training throughput of controlnet. The following numbers are got from running on a single A100 GPU.

Model |

Batch Size |

Flash Attention |

Channels Last |

Inductor |

Samples per Second |

Memory Usage |

Weak Scaling |

|---|---|---|---|---|---|---|---|

ControlNet |

8 |

NO |

NO |

NO |

11.68 |

76G |

1.0 |

ControlNet |

8 |

YES |

NO |

NO |

16.40 |

33G |

1.4 |

ControlNet |

8 |

YES |

YES |

NO |

20.24 |

29G |

1.73 |

ControlNet |

8 |

YES |

YES |

YES |

21.52 |

29G |

1.84 |

ControlNet |

32 |

YES |

YES |

YES |

27.20 |

66G |

2.33 |

Training Quality Results

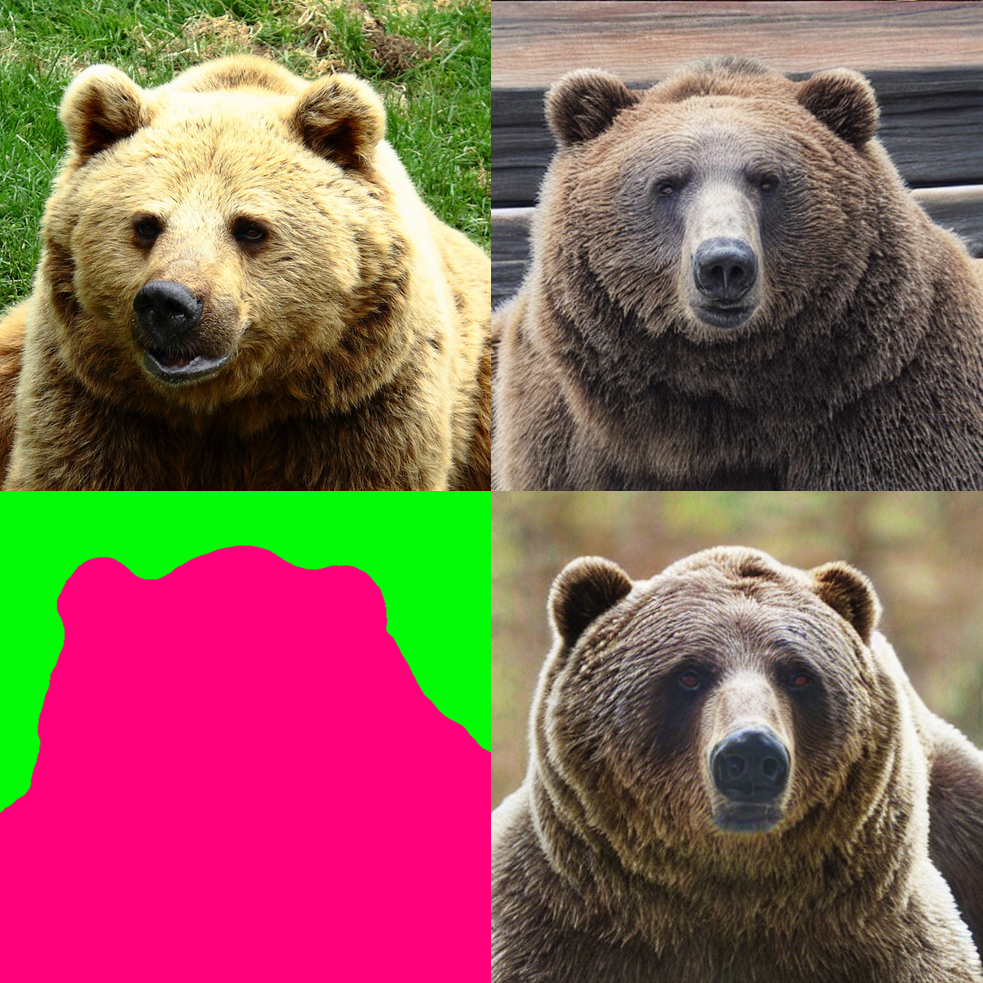

Here we show the examples of controlnet generations. The left column is the original input (upper) and conditioning image (lower).

Prompt: House.

Prompt: House in oil painting style.

Prompt: Bear.

Inference Performance Results

Latency times are started directly before the text encoding (CLIP) and stopped directly after the output image decoding (VAE). For framework we use the Torch Automated Mixed Precision (AMP) for FP16 computation. For TRT, we export the various models with the FP16 acceleration. We use the optimized TRT engine setup present in the deployment directory to get the numbers in the same environment as the framework.

GPU: NVIDIA DGX A100 (1x A100 80 GB)

Batch Size: Synonymous with num_images_per_prompt

Model |

Batch Size |

Sampler |

Inference Steps |

TRT FP 16 Latency (s) |

FW FP 16 (AMP) Latency (s) |

TRT vs FW Speedup (x) |

|---|---|---|---|---|---|---|

ControlNet (Res=512) |

1 |

DDIM |

50 |

1.7 |

6.5 |

3.8 |

ControlNet (Res=512) |

2 |

DDIM |

50 |

2.6 |

7.1 |

2.8 |

ControlNet (Res=512) |

4 |

DDIM |

50 |

4.4 |

11.1 |

2.5 |

ControlNet (Res=512) |

8 |

DDIM |

50 |

8.2 |

21.1 |

2.6 |