Install NetQ NVLink

Follow these steps to set up and configure your VMs in a cluster of servers running NetQ NVLink. First configure the VM on the master node, and then configure the VM on each additional node. NVIDIA recommends installing the virtual machines on different servers to increase redundancy in the event of a hardware failure.

System Requirements

NetQ NVLink 4.15.0 supports 3-node clusters with the following system requirements. Verify that each node in your cluster meets the VM requirements:

| Resource | Minimum Requirements |
|---|---|
| Processor | 48 virtual CPUs |
| Memory | 512 GB RAM |
| Local disk storage | 3.2 TB SSD with minimum disk IOPS of 1000 for a standard 4 KB block size (Note: This must be an SSD; other storage options can lead to system instability and are not supported.) |
| Network interface speed | 10 Gbps NIC |
| Hypervisor | KVM/QCOW (QEMU Copy on Write) image for servers running Ubuntu; VMware ESXi™ 6.5 or later (OVA image) for servers running Cumulus Linux or Ubuntu |
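The CPU and memory minimums in the table can be checked from a shell on each node before you continue. The following is a minimal sketch, not part of the NetQ tooling: `meets_minimum`, `REQ_VCPUS`, and `REQ_MEM_GB` are hypothetical names introduced for illustration, and the commented usage lines assume a Linux guest that provides `nproc` and `/proc/meminfo`.

```shell
# Minimum values taken from the requirements table above.
REQ_VCPUS=48
REQ_MEM_GB=512

# meets_minimum LABEL ACTUAL REQUIRED   (hypothetical helper)
# Prints a PASS or FAIL line and returns 0 only when ACTUAL >= REQUIRED.
meets_minimum() {
    if [ "$2" -ge "$3" ]; then
        printf 'PASS %s: %s (minimum %s)\n' "$1" "$2" "$3"
    else
        printf 'FAIL %s: %s (minimum %s)\n' "$1" "$2" "$3"
        return 1
    fi
}

# Example usage on a Linux node (uncomment to run):
# meets_minimum "vCPUs" "$(nproc)" "$REQ_VCPUS"
# mem_gb=$(awk '/^MemTotal:/ {print int($2 / 1048576)}' /proc/meminfo)
# meets_minimum "memory (GB)" "$mem_gb" "$REQ_MEM_GB"
```

The kernel reports slightly less memory than the amount assigned to the VM, so treat a memory result just under the minimum as a prompt to check the hypervisor settings rather than as a definite failure.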

Port Requirements

Confirm that the required ports are open for communications.

| Port or Protocol Number | Protocol | Component Access |
|---|---|---|
| 4 | IP Protocol | Calico networking (IP-in-IP Protocol) |
| 22 | TCP | SSH |
| 80 | TCP | nginx |
| 179 | TCP | Calico networking (BGP) |
| 443 | TCP | NetQ UI |
| 2379 | TCP | etcd datastore |
| 4789 | UDP | Calico networking (VXLAN) |
| 5000 | TCP | Docker registry |
| 6443 | TCP | kube-apiserver |
| 30001 | TCP | DPU communication |
| 31980 | TCP | NetQ Agent communication |
| 31982 | TCP | NetQ Agent SSL communication |
| 32708 | TCP | API Gateway |

Additionally, for internal cluster communication, you must open these ports:

| Port or Protocol Number | Protocol | Component Access |
|---|---|---|
| 8080 | TCP | Admin API |
| 5000 | TCP | Docker registry |
| 6443 | TCP | Kubernetes API server |
| 10250 | TCP | kubelet health probe |
| 2379 | TCP | etcd |
| 2380 | TCP | etcd |
| 7072 | TCP | Kafka JMX monitoring |
| 9092 | TCP | Kafka client |
| 7071 | TCP | Cassandra JMX monitoring |
| 7000 | TCP | Cassandra cluster communication |
| 9042 | TCP | Cassandra client |
| 7073 | TCP | Zookeeper JMX monitoring |
| 2888 | TCP | Zookeeper cluster communication |
| 3888 | TCP | Zookeeper cluster communication |
| 2181 | TCP | Zookeeper client |
| 36443 | TCP | Kubernetes control plane |
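One way to spot-check the TCP ports listed in the two tables is a small connect test from another machine. This is a sketch under stated assumptions: it relies on a netcat (`nc`) build that supports the `-z` and `-w` options, `report_tcp_ports` and the two list variables are hypothetical names, and the node address in the usage comment is a placeholder.

```shell
# TCP ports from the two tables above. IP protocol 4 and UDP port 4789 are
# left out because a TCP connect test cannot probe them.
EXTERNAL_TCP_PORTS="22 80 179 443 2379 5000 6443 30001 31980 31982 32708"
INTERNAL_TCP_PORTS="8080 5000 6443 10250 2379 2380 7072 9092 7071 7000 9042 7073 2888 3888 2181 36443"

# report_tcp_ports HOST "PORT LIST"   (hypothetical helper)
# Prints open/closed for each port and returns 1 if any port is unreachable.
report_tcp_ports() {
    rp_status=0
    for rp_port in $2; do
        if nc -z -w 2 "$1" "$rp_port" >/dev/null 2>&1; then
            printf 'open    %s:%s\n' "$1" "$rp_port"
        else
            printf 'closed  %s:%s\n' "$1" "$rp_port"
            rp_status=1
        fi
    done
    return "$rp_status"
}

# Example (10.1.0.101 is a placeholder node address):
# report_tcp_ports 10.1.0.101 "$EXTERNAL_TCP_PORTS"
```

A port is reported as closed both when a firewall blocks it and when no service is listening on it yet, so the result is most informative once the corresponding services are running.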

Installation and Configuration

- Download the NetQ image.

a. Log in to your NVIDIA Application Hub account.

b. Select NVIDIA Licensing Portal.

c. Select Software Downloads from the menu.

d. In the search field above the table, enter NetQ.

e. For deployments using KVM, download the NetQ SW 4.15.0 KVM image. For deployments using VMware, download the NetQ SW 4.15.0 VMware image.

f. If prompted, read the license agreement and proceed with the download.

NVIDIA employees can download NetQ directly from the NVIDIA Licensing Portal.

- Open your hypervisor and configure your VM. You can use the following examples for reference or use your own hypervisor instructions.

KVM Example Configuration

This example shows the VM setup process for a system with Libvirt and KVM/QEMU installed.

Confirm that the SHA256 checksum matches the one posted on the NVIDIA Application Hub to ensure the image download has not been corrupted.

$ sha256sum ./Downloads/netq-4.15.0-ubuntu-24.04-ts-qemu-scale.qcow2

a0d9a4f9ce8925b7dfb90a5a44616cadbf3fc667013abae07cd774555c08ff6f ./Downloads/netq-4.15.0-ubuntu-24.04-ts-qemu-scale.qcow2

- Copy the QCOW2 image to a directory where you want to run it.

Tip: Copy the original QCOW2 image rather than moving it, so that you do not have to download it again if you need to repeat this process.

$ sudo mkdir /vms

$ sudo cp ./Downloads/netq-4.15.0-ubuntu-24.04-ts-qemu-scale.qcow2 /vms/ts.qcow2

- Create the VM.

For a Direct VM, where the VM uses a MACVLAN interface to sit on the host interface for its connectivity:

$ virt-install --name=netq_ts --vcpus=16 --memory=65536 --os-type=linux --os-variant=generic --disk path=/vms/ts.qcow2,format=qcow2,bus=virtio,cache=none --network=type=direct,source=eth0,model=virtio --import --noautoconsole

Replace the disk path value with the location where the QCOW2 image resides. Replace the network source value (eth0 in the above example) with the name of the host interface that connects the VM to the external network.

Or, for a Bridged VM, where the VM attaches to a bridge that has already been set up to allow external access:

$ virt-install --name=netq_ts --vcpus=16 --memory=65536 --os-type=linux --os-variant=generic --disk path=/vms/ts.qcow2,format=qcow2,bus=virtio,cache=none --network=bridge=br0,model=virtio --import --noautoconsole

Replace the network bridge value (br0 in the above example) with the name of the pre-existing bridge interface that connects the VM to the external network.

Make note of the name used during install as this is needed in a later step.

- Watch the boot process in another terminal window.

$ virsh console netq_ts
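The checksum comparison in the first step of this example can also be done by a small helper instead of by eye. The sketch below assumes GNU coreutils `sha256sum` is available; `verify_sha256` is a hypothetical function name, and the expected value is the one shown in the example output above, which you should replace with the checksum posted on the NVIDIA Application Hub for your download.

```shell
# verify_sha256 FILE EXPECTED_HASH   (hypothetical helper)
# Returns 0 when the SHA256 digest of FILE equals EXPECTED_HASH.
verify_sha256() {
    vs_actual=$(sha256sum "$1" 2>/dev/null | awk '{print $1}')
    if [ -n "$vs_actual" ] && [ "$vs_actual" = "$2" ]; then
        echo "OK: checksum matches"
    else
        echo "MISMATCH: expected $2, got ${vs_actual:-none}"
        return 1
    fi
}

# Example usage with the file name and hash from the steps above:
# verify_sha256 ./Downloads/netq-4.15.0-ubuntu-24.04-ts-qemu-scale.qcow2 \
#     a0d9a4f9ce8925b7dfb90a5a44616cadbf3fc667013abae07cd774555c08ff6f
```

Because the function returns a non-zero status on a mismatch or an unreadable file, it can gate the copy step in a script, for example with `verify_sha256 ... && sudo cp ...`.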

VMware Example Configuration

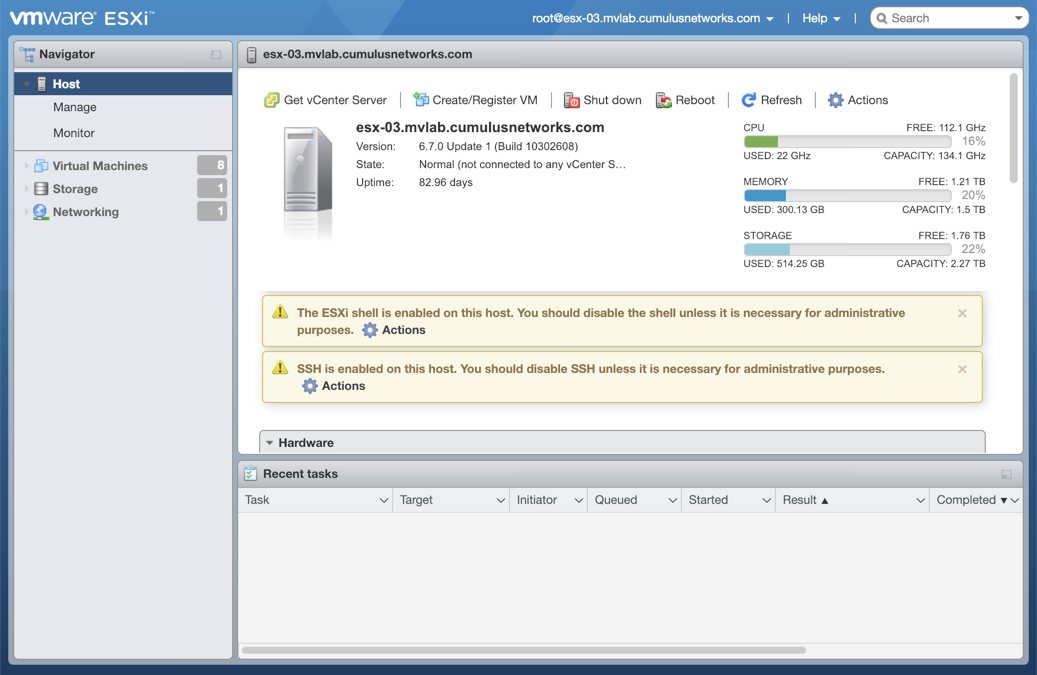

This example shows the VM setup process using an OVA file with VMware ESXi.

Enter the address of the hardware in your browser.

Log in to VMware using credentials with root access.

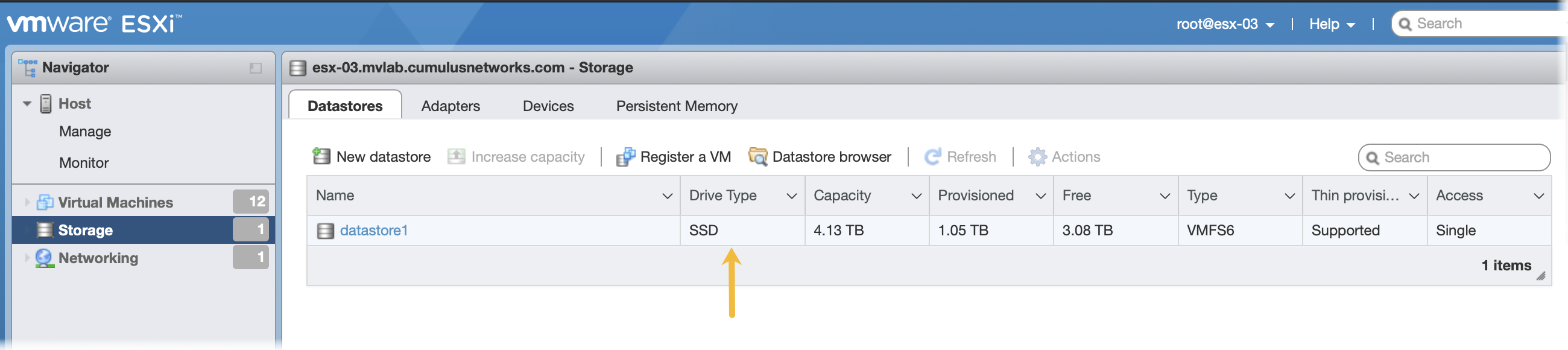

Click Storage in the Navigator to verify you have an SSD installed.

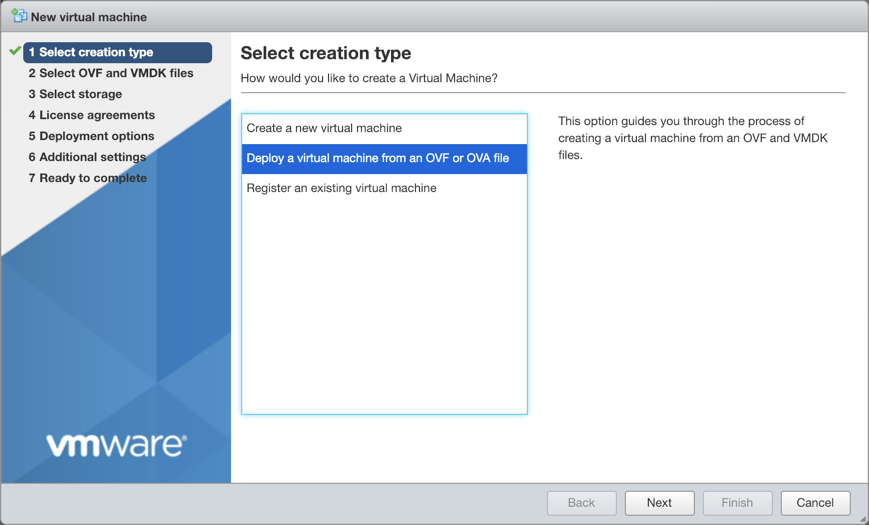

Click Create/Register VM at the top of the right pane.

Select Deploy a virtual machine from an OVF or OVA file, and click Next.

Provide a name for the VM, for example NetQ.

Tip: Make note of the name used during install as this is needed in a later step.

Drag the NetQ image file you downloaded from the NVIDIA Application Hub to the installation wizard, then click Next.

Select the storage type and data store for the image, then click Next.

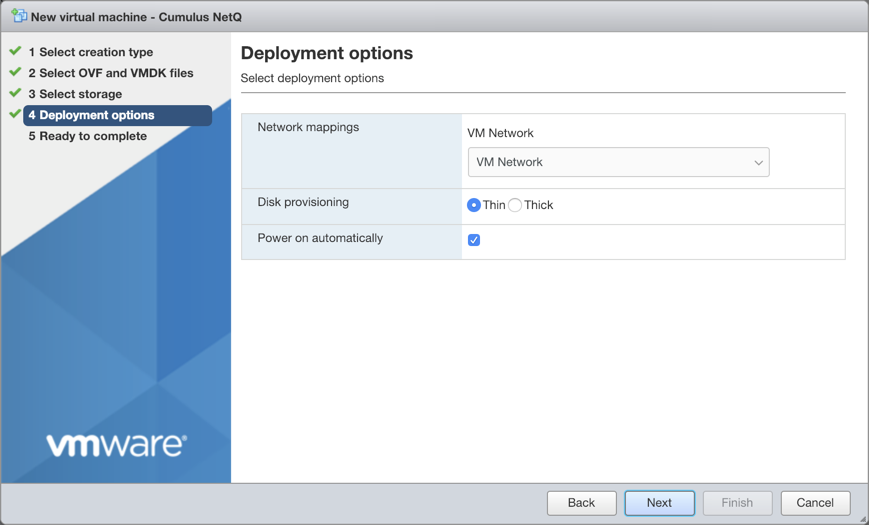

Accept the default deployment options or modify them according to your network needs. Click Next when you are finished.

Review the configuration summary. Click Back to change any of the settings, or click Finish to continue with the creation of the VM.

The progress of the request is shown in the Recent Tasks window at the bottom of the application. This may take some time. After the VM is deployed, the wizard displays the full hardware and configuration details.

- Log in to the VM and change the password.

Use the default credentials to log in the first time:

- Username: nvidia

- Password: nvidia

$ ssh nvidia@<ipaddr>

Warning: Permanently added '<ipaddr>' (ECDSA) to the list of known hosts.

Ubuntu 24.04 LTS

nvidia@<ipaddr>'s password:

You are required to change your password immediately (root enforced)

System information as of Thu Dec 3 21:35:42 UTC 2024

System load: 0.09 Processes: 120

Usage of /: 8.1% of 61.86GB Users logged in: 0

Memory usage: 5% IP address for eth0: <ipaddr>

Swap usage: 0%

WARNING: Your password has expired.

You must change your password now and login again!

Changing password for nvidia.

(current) UNIX password: nvidia

Enter new UNIX password:

Retype new UNIX password:

passwd: password updated successfully

Connection to <ipaddr> closed.

Log in again with your new password.

$ ssh nvidia@<ipaddr>

Warning: Permanently added '<ipaddr>' (ECDSA) to the list of known hosts.

Ubuntu 24.04 LTS

nvidia@<ipaddr>'s password:

System information as of Thu Dec 3 21:35:59 UTC 2024

System load: 0.07 Processes: 121

Usage of /: 8.1% of 61.86GB Users logged in: 0

Memory usage: 5% IP address for eth0: <ipaddr>

Swap usage: 0%

Last login: Thu Dec 3 21:35:43 2024 from <local-ipaddr>

nvidia@ubuntu:~$

- Change the hostname for the VM from the default value.

The default hostname for the NetQ virtual machines is ubuntu. Change the hostname to fit your naming conventions while meeting Internet and Kubernetes naming standards.

Kubernetes requires hostnames to be composed of a sequence of labels concatenated with dots. For example, “en.wikipedia.org” is a hostname. Each label must be from 1 to 63 characters long. The entire hostname, including the delimiting dots, has a maximum of 253 ASCII characters.

The Internet standards (RFCs) for protocols specify that labels may contain only the ASCII letters a through z (in lower case), the digits 0 through 9, and the hyphen-minus character ('-').
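The naming rules above can be checked before you apply a hostname. This is a minimal sketch: `is_valid_hostname` is a hypothetical helper, not a NetQ command. In addition to the rules stated above, it rejects labels that begin or end with a hyphen, which Kubernetes DNS-style names also disallow.

```shell
# is_valid_hostname NAME   (hypothetical helper)
# Returns 0 when NAME is at most 253 characters long and consists of
# dot-separated labels of 1 to 63 characters that use only a-z, 0-9, and '-',
# with no label starting or ending in '-'.
is_valid_hostname() {
    [ "${#1}" -ge 1 ] && [ "${#1}" -le 253 ] || return 1
    printf '%s\n' "$1" | LC_ALL=C grep -Eq \
        '^[a-z0-9]([a-z0-9-]{0,61}[a-z0-9])?(\.[a-z0-9]([a-z0-9-]{0,61}[a-z0-9])?)*$'
}

# Example usage (netq-master-01 is a placeholder name):
# is_valid_hostname netq-master-01 && echo "hostname is acceptable"
```

`LC_ALL=C` keeps the `a-z` range limited to lowercase ASCII letters regardless of the system locale.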

Set the new hostname using the following command. Replace NEW_HOSTNAME with the name you chose.

nvidia@hostname:~$ sudo hostnamectl set-hostname NEW_HOSTNAME

Add the same NEW_HOSTNAME value to /etc/hosts on your VM for the localhost entry. For example:

127.0.0.1 localhost NEW_HOSTNAME
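After editing the file, you can confirm that the localhost entry really carries the new name. The following sketch uses a hypothetical helper, `localhost_entry_has`; it only reads the file and does not modify it.

```shell
# localhost_entry_has NAME [HOSTS_FILE]   (hypothetical helper)
# Returns 0 when the 127.0.0.1 line of HOSTS_FILE (default /etc/hosts)
# lists NAME as one of its hostnames.
localhost_entry_has() {
    awk -v name="$1" '
        $1 == "127.0.0.1" {
            for (i = 2; i <= NF; i++) if ($i == name) found = 1
        }
        END { exit found ? 0 : 1 }
    ' "${2:-/etc/hosts}"
}

# Example usage, checking the name the system currently reports:
# localhost_entry_has "$(hostname)" && echo "/etc/hosts is consistent"
```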

- Open your hypervisor and set up the VM for the additional nodes in the same manner as for the master node.

- Install and activate the NetQ software using the CLI.

Run the following command on your master node to initialize the cluster. Copy the output of the command, which includes the SSH key. You will use it in the next step.

nvidia@<hostname>:~$ netq install cluster master-init

Please run the following command on all worker nodes:

netq install cluster worker-init c3NoLXJzYSBBQUFBQjNOemFDMXljMkVBQUFBREFRQUJBQUFCQVFDM2NjTTZPdVM3dQN9MWTU1a

Run the netq install cluster worker-init <ssh-key> command on each non-master node.

Run the installation command on your master node with the mandatory configuration options:

nvidia@<hostname>:~$ netq install nvl servers 10.1.0.100 10.1.0.101 10.1.0.102 bundle /mnt/installables/NetQ-4.15.0.tgz kong-rw-password <rw-password> kong-ro-password <ro-password>

Optionally, include an alertmanager webhook URL with the alertmanager-webhook-url option:

nvidia@<hostname>:~$ netq install nvl servers 10.1.0.100 10.1.0.101 10.1.0.102 bundle /mnt/installables/NetQ-4.15.0.tgz kong-rw-password <rw-password> kong-ro-password <ro-password> alertmanager-webhook-url http://alertmanager.example.com/webhook

If this step fails for any reason, run netq bootstrap reset and then try again.
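The worker-init step earlier in this procedure has to be repeated on every non-master node, and the long key is easy to mistype. One way to reduce errors is to generate the per-node commands and review them before running anything. This is a sketch under stated assumptions: running the command over SSH as the nvidia user is an assumption rather than a documented requirement, `print_worker_init_commands` is a hypothetical helper, and the addresses in the usage comment assume that the second and third servers in the install command are the non-master nodes.

```shell
# print_worker_init_commands SSH_KEY WORKER_IP...   (hypothetical helper)
# Prints one ssh command per worker node; nothing is executed.
print_worker_init_commands() {
    pw_key=$1
    shift
    for pw_ip in "$@"; do
        printf "ssh nvidia@%s 'netq install cluster worker-init %s'\n" "$pw_ip" "$pw_key"
    done
}

# Example usage. Replace <ssh-key> with the value printed by master-init:
# print_worker_init_commands '<ssh-key>' 10.1.0.101 10.1.0.102
```

Printing the commands rather than executing them leaves the decision to run each one with you, which is safer while the cluster is only partly initialized.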