Document Summarization Support for NVIDIA RAG Blueprint#

This guide explains how to use the NVIDIA RAG Blueprint system’s summarization features, including how to enable summary generation during document ingestion and how to retrieve document summaries via the API.

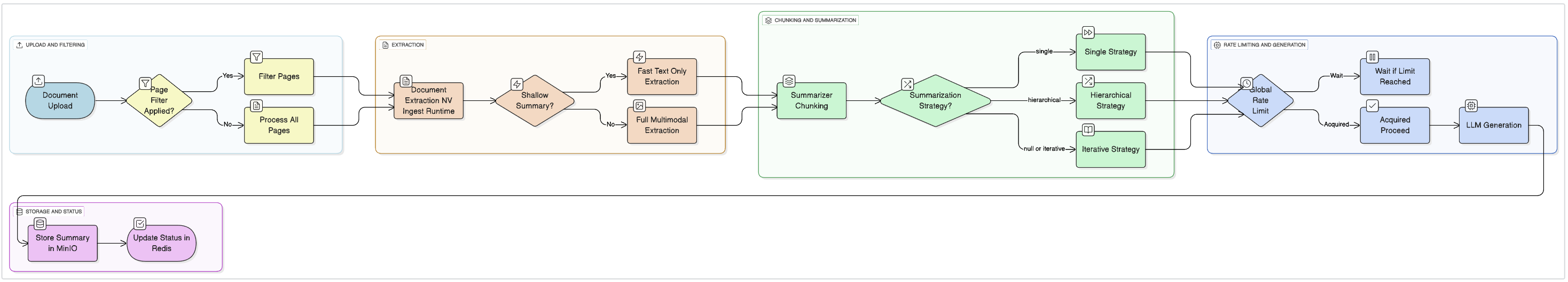

Architecture Overview#

The diagram below illustrates the complete RAG pipeline with the summarization workflow:

Overview#

The NVIDIA RAG Blueprint features intelligent document summarization with real-time progress tracking, enabling you to:

Generate summaries with multiple strategies – Choose between single-pass (fastest), hierarchical (balanced), or iterative (best quality)

Fast shallow extraction – 10x faster text-only summaries skipping OCR/tables/images

Flexible page filtering – Summarize specific pages using ranges, negative indexing, or even/odd patterns

Real-time status tracking – Monitor summary generation progress with chunk-level updates

Global rate limiting – Prevent GPU/API overload with Redis-based semaphores

Token-based chunking – Aligned with nv-ingest using the same tokenizer for consistency

Tip

For complete working code examples and easy deployment, refer to the Document Summarization Notebook. The notebook provides end-to-end workflows for all summarization features in both library and API modes.

1. Enabling Summarization During Document Ingestion#

When uploading documents to the vector store using the ingestion API (POST /documents), you can request that a summary be generated for each document. This is controlled by the generate_summary flag and optional summary_options in the data field of the multipart form request.

Prerequisites#

Before uploading documents, you need to create a collection. If you haven’t already created one, use the following command:

# Set server endpoints and collection name (modify if needed)

export INGESTOR_SERVER_URL="http://localhost:8082"

export RAG_SERVER_URL="http://localhost:8081"

export COLLECTION_NAME="test_summary_api"

# Create a collection

curl -X POST "${INGESTOR_SERVER_URL}/v1/collection" \

-H "Content-Type: application/json" \

-d "{

\"collection_name\": \"${COLLECTION_NAME}\"

}"

Example: Basic Summarization#

# Upload documents with summary generation

curl -X POST "${INGESTOR_SERVER_URL}/v1/documents" \

-H "accept: application/json" \

-F "documents=@data/multimodal/woods_frost.pdf" \

-F "documents=@data/multimodal/multimodal_test.pdf" \

-F "data={

\"collection_name\": \"${COLLECTION_NAME}\",

\"blocking\": false,

\"split_options\": {\"chunk_size\": 512, \"chunk_overlap\": 150},

\"generate_summary\": true

}"

generate_summary: Set to

trueto enable summary generation for each uploaded document. The summary generation always happens asynchronously in the backend after the ingestion is complete. The ingestion status is reported to be completed irrespective of whether summarization has been successfully completed or not. You can track the summary generation status independently using theGET /summaryendpoint.

Example: Advanced Summarization Options#

You can customize summarization behavior using the summary_options parameter:

# Upload with advanced summarization options

curl -X POST "${INGESTOR_SERVER_URL}/v1/documents" \

-H "accept: application/json" \

-F "documents=@data/multimodal/product_catalog.pdf" \

-F "data={

\"collection_name\": \"${COLLECTION_NAME}\",

\"blocking\": false,

\"generate_summary\": true,

\"summary_options\": {

\"page_filter\": [[1, 10], [-5, -1]],

\"shallow_summary\": true,

\"summarization_strategy\": \"single\"

}

}"

Summary Options Explained#

page_filter (optional): Select specific pages to summarize

Supported formats: Page filtering only applies to PDF and PPTX files. Other formats do not have a page structure and will be processed in full with a warning logged.

Ranges:

[[1, 10], [20, 30]]- Pages 1-10 and 20-30Negative indexing:

[[-5, -1]]- Last 5 pages (Pythonic indexing where -1 is last page)String filters:

"even"or"odd"- All even or odd pagesExamples:

[[1, 10]]- First 10 pages[[-10, -1]]- Last 10 pages[[1, 10], [-5, -1]]- First 10 and last 5 pages"even"- All even-numbered pages

shallow_summary (optional, default:

false): Enable fast text-only extractiontrue: Text-only extraction, skips OCR/table detection/image processing (~10x faster)false: Full multimodal extraction with OCR, tables, charts, and imagesUse

truefor quick summaries of text-heavy documentsUse

falsefor comprehensive summaries of documents with complex layouts

summarization_strategy (optional, default:

null): Choose summarization approach"single": ⚡ Fastest - Merge content, chunk by configured size, and summarize only the first chunkBest for: Quick overviews, short documents

Speed: Fastest (one LLM call)

"hierarchical": 🔀 Balanced - Tree-based summarization: summarize all chunks, merge summaries until they fit chunk size, repeat recursively until reaching one final summaryBest for: Balance between speed and quality

Speed: Fast (parallel processing with tree reduction)

null(or omit): 📚 Best Quality - Sequential refinement chunk by chunk (iterative)Best for: Long documents requiring best quality

Speed: Slower but highest quality

2. Retrieving Document Summaries#

Once a document has been ingested with summarization enabled, you can retrieve its summary using the GET /summary endpoint. The endpoint provides real-time status tracking with chunk-level progress information.

Endpoint#

GET /v1/summary?collection_name=<collection>&file_name=<filename>&blocking=<bool>&timeout=<seconds>

collection_name (required): Name of the collection containing the document.

file_name (required): Name of the file for which to retrieve the summary.

blocking (optional, default: false):

If

true, the request will wait (up totimeoutseconds) for the summary to be generated if it is not yet available. During this time, the endpoint polls Redis for status updates.If

false, the request will return immediately with the current status. If the summary is not ready, you’ll receive the current generation status (PENDING, IN_PROGRESS, or NOT_FOUND).

timeout (optional, default: 300): Maximum time to wait (in seconds) if

blockingis true.

Status Values#

The endpoint returns one of the following status values:

SUCCESS: Summary generation completed successfully. The

summaryfield contains the generated text.PENDING: Summary generation is queued but not yet started.

IN_PROGRESS: Summary is currently being generated. The response includes

progressinformation showing current chunk processing (e.g., “Processing chunk 3/5”).FAILED: Summary generation failed. The

errorfield contains the failure reason.NOT_FOUND: No summary was requested for this document (it was not uploaded with

generate_summary=true).

Example Request#

# Retrieve summary (non-blocking)

curl -X GET --globoff "${RAG_SERVER_URL}/v1/summary?collection_name=${COLLECTION_NAME}&file_name=woods_frost.pdf&blocking=false" \

-H "accept: application/json"

# Retrieve summary (blocking with timeout)

curl -X GET --globoff "${RAG_SERVER_URL}/v1/summary?collection_name=${COLLECTION_NAME}&file_name=woods_frost.pdf&blocking=true&timeout=60" \

-H "accept: application/json"

Example Response (Success)#

{

"message": "Summary retrieved successfully.",

"summary": "This document provides an overview of ...",

"file_name": "woods_frost.pdf",

"collection_name": "test_summary_api",

"status": "SUCCESS"

}

Example Response (In Progress)#

When summary generation is in progress, you’ll receive real-time progress information:

{

"message": "Summary generation is in progress. Set blocking=true to wait for completion.",

"status": "IN_PROGRESS",

"file_name": "woods_frost.pdf",

"collection_name": "test_summary_api",

"started_at": "2025-01-24T10:30:00.000Z",

"updated_at": "2025-01-24T10:30:15.000Z",

"progress": {

"current": 3,

"total": 5,

"message": "Processing chunk 3/5"

}

}

HTTP Status Code: 202 (Accepted)

Example Response (Pending)#

{

"message": "Summary generation is pending. Set blocking=true to wait for completion.",

"status": "PENDING",

"file_name": "woods_frost.pdf",

"collection_name": "test_summary_api",

"queued_at": "2025-01-24T10:30:00.000Z"

}

HTTP Status Code: 202 (Accepted)

Note: For PENDING status, only queued_at is set. Fields like started_at, updated_at, and progress will be null since processing hasn’t started yet.

Example Response (Not Found)#

{

"message": "Summary for woods_frost.pdf not found. To generate a summary, upload the document with generate_summary=true.",

"status": "NOT_FOUND",

"file_name": "woods_frost.pdf",

"collection_name": "test_summary_api"

}

HTTP Status Code: 404 (Not Found)

Example Response (Failed)#

{

"message": "Summary generation failed for woods_frost.pdf",

"status": "FAILED",

"error": "Error details here",

"file_name": "woods_frost.pdf",

"collection_name": "test_summary_api",

"started_at": "2025-01-24T10:30:00.000Z",

"completed_at": "2025-01-24T10:30:30.000Z"

}

HTTP Status Code: 500 (Internal Server Error)

Example Response (Timeout)#

When using blocking mode, if the summary is not generated within the specified timeout:

{

"message": "Timeout waiting for summary generation for woods_frost.pdf after 300 seconds",

"status": "FAILED",

"error": "Timeout after 300 seconds",

"file_name": "woods_frost.pdf",

"collection_name": "test_summary_api"

}

HTTP Status Code: 408 (Request Timeout)

3. Python Notebook and Code Examples#

Important

For complete, ready-to-use Python examples, download and run the Document Summarization Notebook.

The notebook demonstrates the following:

Collection creation and document ingestion with summarization

All three summarization strategies (single, hierarchical, iterative)

Page filtering and shallow summary options

Summary retrieval with blocking and non-blocking modes

Complete end-to-end workflows in both library mode and API mode

Quick Access to the Notebook#

You can fetch the notebook directly by using the following code.

# Download the summarization notebook

curl -O https://raw.githubusercontent.com/NVIDIA-AI-Blueprints/rag/main/notebooks/summarization.ipynb

# Or clone the repository for all notebooks

git clone https://github.com/NVIDIA-AI-Blueprints/rag.git

cd rag/notebooks

For API schema details, refer to the OpenAPI schema.

Note

Lite Mode Limitation: Document summarization is not supported in lite mode (containerless deployment). For lite mode examples, see rag_library_lite_usage.ipynb.

4. Configuration and Environment Variables#

The summarization feature can be configured using the following environment variables:

Redis Configuration (Status Tracking)#

Summary generation status is tracked using Redis to enable cross-service visibility (between ingestor and RAG servers). Configure the following environment variables for both services:

REDIS_HOST: Redis server hostname (default:

localhost)REDIS_PORT: Redis server port (default:

6379)REDIS_DB: Redis database number (default:

0)

Status Tracking Behavior:

Status information is stored in Redis with a 24-hour TTL (automatically cleaned up)

If Redis is unavailable, the system gracefully degrades: summaries will still be generated and stored in MinIO, but real-time status tracking will not be available

Status values include:

PENDING,IN_PROGRESS(with chunk progress),SUCCESS,FAILED, andNOT_FOUNDRedis semaphore counter is automatically reset when ingestor server starts, preventing stale values from crashed processes

Core Configuration#

The summarization feature uses specialized prompts defined in the prompt.yaml file. The system automatically selects the appropriate prompts based on extraction mode and document size:

shallow_summary_prompt: Used for text-only (shallow) extraction workflows whenshallow_summary: truedocument_summary_prompt: Used for full multimodal extraction (default) for single-chunk processingiterative_summary_prompt: Used for multi-chunk documents in iterative strategy

For more details on customizing these prompts, see Prompt Customization Guide.

Environment Variables:

SUMMARY_LLM: The model name to use for summarization (default:

nvidia/llama-3.3-nemotron-super-49b-v1.5)SUMMARY_LLM_SERVERURL: The server URL hosting the summarization model (default: empty, uses NVIDIA hosted API)

SUMMARY_LLM_MAX_CHUNK_LENGTH: Maximum chunk size in tokens for document processing (default:

9000)SUMMARY_CHUNK_OVERLAP: Overlap between chunks for summarization in tokens (default:

400)SUMMARY_LLM_TEMPERATURE: Temperature parameter for the summarization model, controls randomness (default:

0.0)SUMMARY_LLM_TOP_P: Top-p (nucleus sampling) parameter for the summarization model (default:

1.0)SUMMARY_MAX_PARALLELIZATION: Global rate limit for concurrent summary tasks across all workers (default:

20)

Example Configuration#

export SUMMARY_LLM="nvidia/llama-3.3-nemotron-super-49b-v1.5"

export SUMMARY_LLM_SERVERURL=""

export SUMMARY_LLM_MAX_CHUNK_LENGTH=9000

export SUMMARY_CHUNK_OVERLAP=400

export SUMMARY_LLM_TEMPERATURE=0.0

export SUMMARY_LLM_TOP_P=1.0

export SUMMARY_MAX_PARALLELIZATION=20

Token-Based Chunking#

The summarization system uses token-based chunking aligned with nv-ingest for consistency:

Tokenizer: Uses

e5-large-unsupervised(same as nv-ingest)Max Chunk Length: 9000 tokens

Chunk Overlap: 400 tokens

Benefits: More accurate chunking, better context preservation, aligned with ingestion pipeline

Global Rate Limiting#

To prevent GPU/API overload, the system uses Redis-based global rate limiting:

Default Limit: Maximum 20 concurrent summary tasks across all workers

Redis Semaphore: Coordinates access across multiple ingestor server instances

Connection Pooling: Up to 50 Redis connections for efficient coordination

Behavior: Tasks wait in queue until a slot becomes available

Reset on Startup: Counter automatically reset when ingestor server starts

Chunking Strategy#

The summarization system uses an intelligent chunking approach with different prompts for different scenarios:

Prompt Selection Based on Extraction Mode:

Shallow extraction (

shallow_summary: true): Usesshallow_summary_prompt- optimized for fast text-only processingFull extraction (

shallow_summary: false, default): Usesdocument_summary_promptanditerative_summary_prompt- comprehensive multimodal processing

Processing by Document Size:

Single Chunk Processing: If a document fits within

SUMMARY_LLM_MAX_CHUNK_LENGTHtokens, it’s processed as a single chunk.Prompt used:

shallow_summary_prompt(for shallow) ordocument_summary_prompt(for full extraction)Strategy: All strategies use the appropriate prompt for single-chunk documents

Multi-Chunk Processing: For larger documents:

The document is split into chunks using

SUMMARY_LLM_MAX_CHUNK_LENGTHas the maximum size (in tokens)SUMMARY_CHUNK_OVERLAPtokens are preserved between chunks for contextStrategy behavior:

single: Concatenates all chunks into one, truncates if exceeds max length (fastest)hierarchical: Processes chunks in parallel using extraction-appropriate prompt, then combines summaries (balanced)null/iterative: Initial chunk uses extraction-appropriate prompt, subsequent chunks useiterative_summary_promptto update existing summary (best quality)

This approach ensures that even very large documents can be summarized effectively while maintaining context across chunk boundaries. The prompt selection automatically adapts based on extraction mode, document size, processing stage, and selected strategy.

5. Notes and Best Practices#

Summarization is only available if

generate_summarywas set totrueduring document upload.If you request a summary for a document that was not ingested with summarization enabled, you’ll receive a

NOT_FOUNDstatus.Use the

blockingparameter to control whether your request waits for summary generation or returns immediately with the current status.The summary is pre-generated and stored in MinIO; repeated requests for the same document will return the same summary unless the document is re-uploaded or updated.

Status Tracking: Monitor summary generation progress in real-time using the

GET /summaryendpoint. TheIN_PROGRESSstatus includes chunk-level progress (e.g., “Processing chunk 3/5”).Redis Requirement: For status tracking and global rate limiting to work across services, ensure Redis is configured and accessible to both ingestor and RAG servers. Without Redis, summaries will still be generated but status tracking and rate limiting will be unavailable.

Timeout Handling: When using

blocking=true, set an appropriate timeout based on your document size. Large documents may take several minutes to summarize.Page Filtering Limitation: The

page_filterparameter only applies to PDF and PPTX files, which have a natural page structure. For other file types, the page filter will be ignored and the entire document will be processed, with a warning logged in the server logs.

Performance Recommendations#

For fastest summaries: Use

shallow_summary=true+summarization_strategy="single"+page_filterto select key pagesFor best quality: Use

shallow_summary=false+summarization_strategy=null(iterative) + process all pagesFor balanced approach: Use

shallow_summary=true+summarization_strategy="hierarchical"+ selective pages

Token-Based Chunking Best Practices#

Adjust

SUMMARY_LLM_MAX_CHUNK_LENGTHbased on your model’s context window and available resourcesLarger chunk sizes generally produce better summaries but require more memory and processing time

The default 9000 tokens works well for most models and document types

Keep

SUMMARY_CHUNK_OVERLAPat 400-500 tokens to maintain context continuity between chunks

Rate Limiting Considerations#

Increase

SUMMARY_MAX_PARALLELIZATIONif you have more GPU resources availableDecrease it if experiencing GPU memory issues or API rate limits

Monitor Redis semaphore usage to optimize for your workload