Workflow 1: Docker Containers

In this setup, you run the microservices locally as Docker containers to create a single avatar animation stream instance.

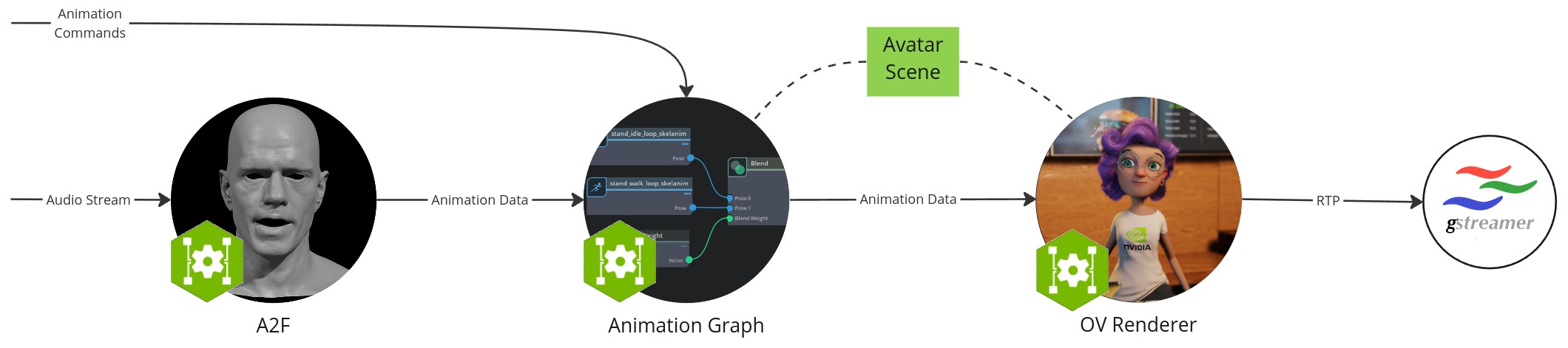

The workflow configuration consists of the following components:

Audio2Face (A2F) microservice: converts speech audio into facial animation including lip syncing

Animation Graph microservice: manages and blends animation states

Omniverse Renderer microservice: renderer that visualizes the animation data based on the loaded avatar scene

GStreamer client: receives and plays back the video and audio streams, acting as the user front end

Avatar scene: Collection of 3D scene and avatar model data that is saved in a local folder

Prerequisites

Before you start, make sure you have installed and verified all prerequisites in the Development Setup.

Additionally, this section assumes that the following prerequisites are met:

You have installed Docker

You have access to NVIDIA AI Enterprise (NVAIE), which is required to download the Animation Graph and Omniverse Renderer microservices

You have access to the public NGC catalog, which is required to download the Avatar Configurator

Hardware Requirements

Each component has its own hardware requirements. The requirements of the workflow are the sum of the requirements of its components:

Audio2Face (A2F) microservice

Animation Graph microservice

Omniverse Renderer microservice

Pull Docker Containers

docker pull nvcr.io/eevaigoeixww/animation/ia-animation-graph-microservice:1.0.1

docker pull nvcr.io/eevaigoeixww/animation/ia-omniverse-renderer-microservice:1.0.1

docker pull nvcr.io/eevaigoeixww/animation/audio2face:1.0.11
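The three pulls can also be scripted so that a failed download stops the sequence early. The following is a minimal sketch, not part of the official setup: the `DOCKER` override variable and the `pull_images` function name are illustrative assumptions, while the image names are copied from the commands above.

```shell
# Command used to talk to Docker; overridable for dry runs (assumption).
DOCKER=${DOCKER:-docker}

# Image names copied from the pull commands in this section.
IMAGES="
nvcr.io/eevaigoeixww/animation/ia-animation-graph-microservice:1.0.1
nvcr.io/eevaigoeixww/animation/ia-omniverse-renderer-microservice:1.0.1
nvcr.io/eevaigoeixww/animation/audio2face:1.0.11
"

# Pull every image in order and stop at the first failure.
pull_images() {
  for image in $IMAGES; do
    $DOCKER pull "$image" || {
      echo "failed to pull $image" >&2
      return 1
    }
  done
}

# Example:
#   pull_images
```

Setting `DOCKER=echo` before calling `pull_images` prints the commands instead of running them, which is a quick way to check the list.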

Download Avatar Scene from NGC

Download the scene from NGC and save it in a local folder, referred to below as avatar-scene-folder.

Alternatively, you can download the scene using the ngc command.

ngc registry resource download-version "nvidia/ucs-ms/default-avatar-scene:1.0.0"

Run Animation Graph Microservice

docker run -it --rm --gpus all --network=host --name anim-graph-ms -v <path-to-avatar-scene-folder>:/home/ace/asset nvcr.io/eevaigoeixww/animation/ia-animation-graph-microservice:1.0.1

Replace <path-to-avatar-scene-folder> with the path to the local avatar scene folder from the previous step.
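When a host path passed to `-v` does not exist, Docker creates it as an empty directory, so a typo in the scene path tends to go unnoticed until the service fails to find its assets. The sketch below checks the folder before it is mounted. The function name `check_scene_dir` is a hypothetical helper, not part of the microservice tooling.

```shell
# Verify that the avatar scene folder exists and is not empty, then print
# its absolute path (bind mounts with -v expect an absolute host path).
check_scene_dir() {
  scene_dir=$1
  if [ ! -d "$scene_dir" ]; then
    echo "not a directory: $scene_dir" >&2
    return 1
  fi
  if [ -z "$(ls -A "$scene_dir")" ]; then
    echo "directory is empty: $scene_dir" >&2
    return 1
  fi
  (cd "$scene_dir" && pwd)
}

# Example:
#   scene=$(check_scene_dir ./avatar-scene-folder) || exit 1
#   docker run -it --rm --gpus all --network=host --name anim-graph-ms \
#     -v "$scene":/home/ace/asset \
#     nvcr.io/eevaigoeixww/animation/ia-animation-graph-microservice:1.0.1
```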

Note

If you encounter warnings about glfw failing to initialize, see the troubleshooting section.

Run Omniverse Renderer Microservice

docker run --env IAORMS_RTP_NEGOTIATION_HOST_MOCKING_ENABLED=true --rm --gpus all --network=host --name renderer-ms -v <path-to-avatar-scene-folder>:/home/ace/asset nvcr.io/eevaigoeixww/animation/ia-omniverse-renderer-microservice:1.0.1

Again, replace <path-to-avatar-scene-folder> with the path to the local avatar scene folder.

Note

Starting this service can take up to 30 minutes. It is ready when the following line appears in the terminal:

[SceneLoader] Assets loaded
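Because startup can take a long time, it can be convenient to block a script until the readiness line shows up instead of watching the terminal. The following is a small sketch: `wait_for_line` is a hypothetical helper, and the example assumes the container name `renderer-ms` from the run command above.

```shell
# Read stdin until the first line containing the given text arrives.
# -F treats the text literally, so "[" and "]" are not read as a bracket
# expression; -m 1 makes grep exit after the first match.
wait_for_line() {
  grep -m 1 -F -- "$1"
}

# Example, run from a second terminal:
#   docker logs -f renderer-ms 2>&1 | wait_for_line "[SceneLoader] Assets loaded"
```

The function returns a non-zero status if the input ends without a match, for example when the container exits during startup.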

Run Audio2Face Microservice

Start the Audio2Face container with the following command:

docker run --rm --network=host -it --gpus all nvcr.io/eevaigoeixww/animation/audio2face:1.0.11

Generate the TRT models:

./service/generate_trt_model.py built-in claire_v1.3

./service/generate_a2e_trt_model.py

In service/a2f_config.yaml, set the IP under grpc_output (default 0.0.0.0) to your system’s IP address.

nano service/a2f_config.yaml
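If you are unsure which address to enter, the sketch below picks a candidate from a list of addresses such as the one printed by `hostname -I` on Linux. The helper name `first_usable_ipv4` is hypothetical, and on machines with several interfaces (for example a Docker bridge) the first address is not necessarily the right one, so check the result against your network setup.

```shell
# Print the first IPv4 address in a whitespace-separated list that is
# neither a loopback address nor the 0.0.0.0 wildcard.
first_usable_ipv4() {
  for addr in $1; do
    case "$addr" in
      127.*|0.0.0.0) continue ;;          # skip loopback and wildcard
      *:*) continue ;;                    # skip IPv6 addresses
      *.*.*.*) echo "$addr"; return 0 ;;  # dotted-quad candidate
    esac
  done
  return 1
}

# Example on Linux:
#   first_usable_ipv4 "$(hostname -I)"
```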

Launch service:

./service/launch_service.py service/a2f_config.yaml

The following log line appears when the service is ready:

[2024-04-23 12:44:33.066] [ global ] [info] Running...

Note

More details on Audio2Face deployment can be found in Container Deployment.

Set Up & Start GStreamer

GStreamer receives the video and audio output streams so that you can see and hear the avatar scene.

Install the GStreamer plugins:

sudo apt-get install gstreamer1.0-plugins-bad gstreamer1.0-libav
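After installing, you can confirm that the elements used by the receiver pipelines in this section are available. This is a sketch based on `gst-inspect-1.0 --exists`; the `GST_INSPECT` override variable and the `check_gst_elements` function name are assumptions made for illustration, and the element list is taken from the Linux pipelines in this section.

```shell
# Inspection tool; overridable for testing (assumption).
GST_INSPECT=${GST_INSPECT:-gst-inspect-1.0}

# Elements that appear in the Linux video and audio receiver pipelines.
REQUIRED_ELEMENTS="udpsrc rtpjitterbuffer rtph264depay h264parse avdec_h264 videoconvert autovideosink rtpL16depay audioconvert autoaudiosink"

# Report every required element that GStreamer cannot find.
check_gst_elements() {
  missing=""
  for element in $REQUIRED_ELEMENTS; do
    $GST_INSPECT --exists "$element" || missing="$missing $element"
  done
  [ -z "$missing" ] && return 0
  echo "missing GStreamer elements:$missing"
  return 1
}

# Example:
#   check_gst_elements && echo "all elements found"
```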

Run the video receiver in its own terminal:

gst-launch-1.0 -v udpsrc port=9020 caps="application/x-rtp" ! rtpjitterbuffer drop-on-latency=true latency=20 ! rtph264depay ! h264parse ! avdec_h264 ! videoconvert ! queue ! autovideosink sync=false

Run the audio receiver in its own terminal (Linux):

gst-launch-1.0 -v udpsrc port=9021 caps="application/x-rtp,clock-rate=16000" ! rtpjitterbuffer ! rtpL16depay ! audioconvert ! autoaudiosink sync=false

Or, if you are running the audio receiver on Windows:

gst-launch-1.0 -v udpsrc port=9021 caps="application/x-rtp,clock-rate=16000" ! rtpjitterbuffer ! rtpL16depay ! audioconvert ! directsoundsink sync=false

Note

No image or sound appears until the streams are added in the next step.

Connect Microservices through Common Stream ID

Back in the main terminal, run the following commands:

stream_id=$(uuidgen)

curl -X POST -s http://localhost:8020/streams/$stream_id

curl -X POST -s http://localhost:8021/streams/$stream_id
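Both services must receive the same stream ID, so the two requests can be wrapped in one function that stops when either request fails. This is a sketch only: the `CURL` override variable and the `add_stream` function name are illustrative, and the ports are taken from the commands above. The added `-f` flag makes curl return a non-zero status on HTTP errors.

```shell
# HTTP client; overridable for dry runs (assumption).
CURL=${CURL:-curl}

# Ports copied from the two POST commands in this section.
STREAM_PORTS="8020 8021"

# Register one stream ID with every service, stopping at the first failure.
add_stream() {
  for port in $STREAM_PORTS; do
    $CURL -X POST -s -f "http://localhost:$port/streams/$1" || {
      echo "registering stream $1 on port $port failed" >&2
      return 1
    }
  done
}

# Example:
#   stream_id=$(uuidgen)
#   add_stream "$stream_id"
```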

Note

You should now see a rendering of the avatar moving slightly.

Test Animation Graph Interface

Let’s set a new posture:

curl -X PUT -s http://localhost:8020/streams/$stream_id/animation_graphs/avatar/variables/posture_state/Talking

or change the avatar’s position:

curl -X PUT -s http://localhost:8020/streams/$stream_id/animation_graphs/avatar/variables/position_state/Left

or start a gesture:

curl -X PUT -s http://localhost:8020/streams/$stream_id/animation_graphs/avatar/variables/gesture_state/Pulling_Mime

or trigger a facial gesture:

curl -X PUT -s http://localhost:8020/streams/$stream_id/animation_graphs/avatar/variables/facial_gesture_state/Smile

Alternatively, you can use the OpenAPI interface to see and try out more: http://localhost:8020/docs.

You can find all valid variable values here: Default Animation Graph.
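All four requests above follow the same URL pattern, so a small wrapper keeps experiments short. The following is a sketch: `set_anim_variable`, `CURL`, and `ANIM_GRAPH_URL` are hypothetical names, and the URL layout is copied from the commands in this section.

```shell
# HTTP client and base URL; both overridable (assumptions).
CURL=${CURL:-curl}
ANIM_GRAPH_URL=${ANIM_GRAPH_URL:-http://localhost:8020}

# Set one animation graph variable.
#   $1 = stream ID, $2 = variable name, $3 = new value
set_anim_variable() {
  $CURL -X PUT -s "$ANIM_GRAPH_URL/streams/$1/animation_graphs/avatar/variables/$2/$3"
}

# Examples, using values from this section:
#   set_anim_variable "$stream_id" posture_state Talking
#   set_anim_variable "$stream_id" facial_gesture_state Smile
```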

Test Audio2Face

Now feed a sample audio file into Audio2Face to drive the facial speech animation.

Normally, you would send audio to Audio2Face through its gRPC API. For convenience, a Python script lets you do this from the command line. Follow the steps to set up the script.

The script comes with a sample audio file that is compatible with Audio2Face. Run the following command to send the sample audio file to Audio2Face:

python3 validate.py -u 127.0.0.1:50000 -i $stream_id english_voice_male_p1_neutral_16k.wav

Note

Audio2Face requires audio in 16 kHz, mono-channel format.
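Before sending your own recordings, you can check the channel count and sample rate directly in the WAV header. The sketch below assumes a canonical PCM WAV file in which the fmt chunk immediately follows the RIFF header, and a little-endian host, because `od` reads multi-byte values in host byte order. The function names are hypothetical.

```shell
# In a canonical WAV header the channel count is a 2-byte value at byte
# offset 22 and the sample rate is a 4-byte value at byte offset 24.
wav_channels() {
  od -An -tu2 -j22 -N2 "$1" | tr -d ' \n'
}

wav_sample_rate() {
  od -An -tu4 -j24 -N4 "$1" | tr -d ' \n'
}

# Succeed only for mono audio sampled at 16000 Hz.
is_a2f_compatible() {
  [ "$(wav_channels "$1")" = "1" ] && [ "$(wav_sample_rate "$1")" = "16000" ]
}

# Example:
#   is_a2f_compatible english_voice_male_p1_neutral_16k.wav && echo "format ok"
```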

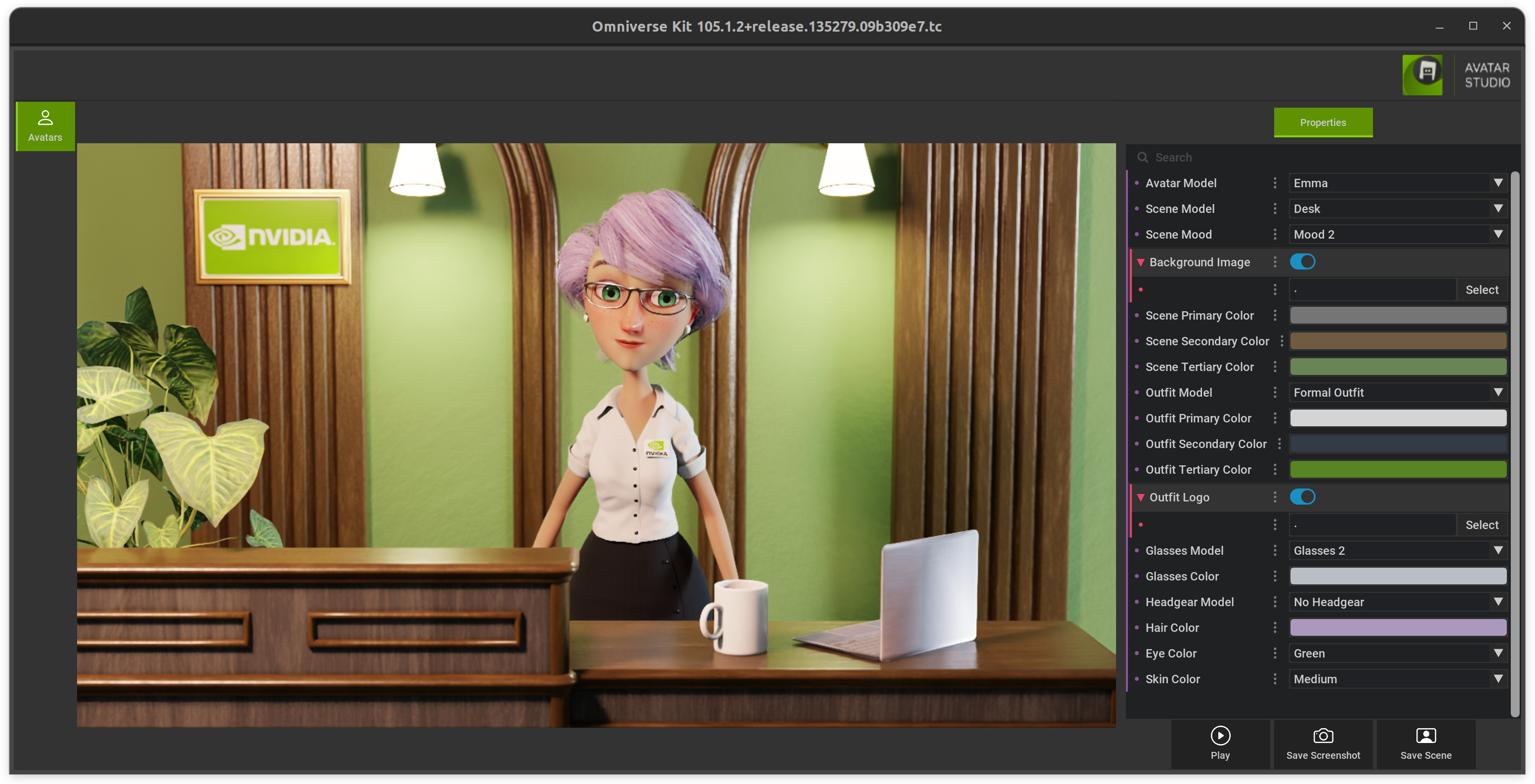

Change Avatar Scene

To modify or replace the default avatar scene, you can use the Avatar Configurator.

Download and unpack it, then start it by running ./run_avatar_configurator.sh.

Once it has started successfully (the first launch takes longer because shaders are compiled), you can create your own custom scene and save it.

Then copy all files from the folder avatar_configurator/exported into the local avatar-scene-folder from the step “Download Avatar Scene from NGC”, replacing the existing files.

After restarting the Animation Graph and Omniverse Renderer services, you will see the new scene.

Clean up: Remove Streams

You can clean up the streams using the following commands:

curl -X DELETE -s http://localhost:8020/streams/$stream_id

curl -X DELETE -s http://localhost:8021/streams/$stream_id
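If you also want to shut down the services afterwards, the stream removal can be combined with stopping the named containers. This is a sketch under assumptions: `CURL`, `DOCKER`, `remove_stream`, and `stop_services` are illustrative names, and the container names `anim-graph-ms` and `renderer-ms` come from the `--name` options used earlier in this workflow.

```shell
# Tools; overridable for dry runs (assumptions).
CURL=${CURL:-curl}
DOCKER=${DOCKER:-docker}

# Remove the stream from both services, in the same order as above.
remove_stream() {
  for port in 8020 8021; do
    $CURL -X DELETE -s "http://localhost:$port/streams/$1"
  done
}

# Stop the two named containers. Both were started with --rm, so
# stopping them also removes them.
stop_services() {
  $DOCKER stop anim-graph-ms renderer-ms
}

# Example:
#   remove_stream "$stream_id"
#   stop_services
```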