Stable Diffusion XL Turbo NIM

Important

NVIDIA NIM is currently in limited availability. Sign up here to be notified when the latest NIMs are available to download.

SDXL Turbo generates images from text in real time, using a version of SDXL that is optimized for speed. The underlying model, stabilityai/sdxl-turbo, is a fast text-to-image model that can synthesize photorealistic images from a text prompt in a single network evaluation.

Use SDXL Turbo NIM to:

Generate images from text in seconds.

Generate images that are unique and original, unlike any other images on the internet.

Explore different ideas and concepts.

Elevate your expression and your personality.

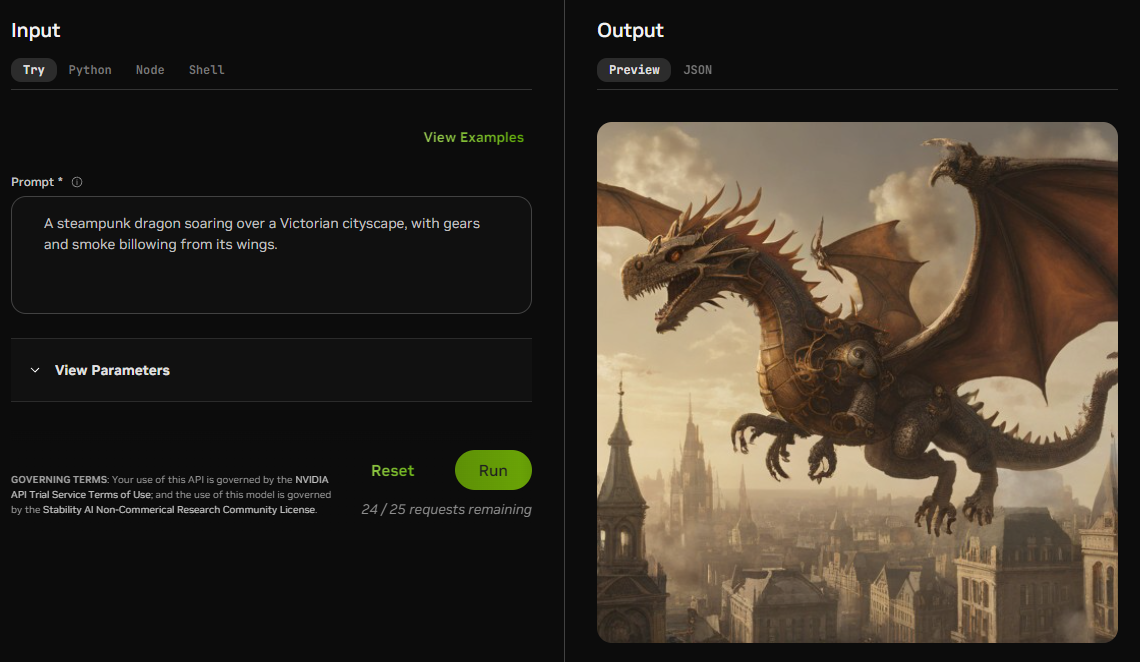

An example text prompt for an AI-generated image

Note

A more detailed description of the model can be found in the Model Card.

Model Specific Requirements

The following are specific requirements for SDXL Turbo NIM.

Important

Please refer to NVIDIA NIM documentation for necessary hardware, operating system, and software prerequisites if you have not done so already.

Hardware

Target GPUs: L40(S), A100, or H100

Minimum available GPU Memory (VRAM): 16GB

Minimum available RAM: 24GB

Software

Minimum NVIDIA Driver Version: 535
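The GPU-related minimums above can be checked from a script. The following is a minimal sketch, assuming `nvidia-smi` is on the PATH and that 16GB is read as 16 x 1024 MiB; the helper names `parse_gpu_rows`, `meets_requirements`, and `query_gpus` are illustrative and not part of the NIM. It does not check system RAM.

```python
import subprocess

MIN_FREE_VRAM_MIB = 16 * 1024   # 16GB minimum, read as GiB (assumption)
MIN_DRIVER_MAJOR = 535          # minimum NVIDIA driver version


def parse_gpu_rows(csv_text):
    """Parse `name, memory.free, driver_version` CSV rows from nvidia-smi."""
    gpus = []
    for line in csv_text.strip().splitlines():
        # Split from the right so a comma in the GPU name cannot shift fields.
        name, free_mib, driver = [f.strip() for f in line.rsplit(",", 2)]
        gpus.append({
            "name": name,
            "free_mib": int(free_mib),
            "driver_major": int(driver.split(".")[0]),
        })
    return gpus


def meets_requirements(gpu):
    """Return True if a parsed GPU row satisfies both minimums."""
    return (gpu["free_mib"] >= MIN_FREE_VRAM_MIB
            and gpu["driver_major"] >= MIN_DRIVER_MAJOR)


def query_gpus():
    """Run nvidia-smi and return the parsed rows (needs an NVIDIA driver)."""
    out = subprocess.run(
        ["nvidia-smi",
         "--query-gpu=name,memory.free,driver_version",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_gpu_rows(out)
```

Calling `query_gpus()` on the target machine and passing each row to `meets_requirements` gives a quick pass or fail per GPU.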

Once the above requirements have been met, you will download the model and then use the Quickstart Guide to pull the NIM container, build the TensorRT (TRT) engines and run the NIM.

Download the Model

For commercial use of the model, you need to obtain a license from stability.ai.

Use the following commands to download (the size of the downloaded files is ~6.6GB):

Note

You can copy all the commands to your terminal at once

mkdir -p sd-model-store sd-model-store/framework_model_dir sd-model-store/framework_model_dir/xl-turbo

mkdir -p sd-model-store/framework_model_dir/xl-turbo/XL_BASE

mkdir -p sd-model-store/framework_model_dir/xl-turbo/XL_BASE/scheduler
curl -L https://huggingface.co/stabilityai/sdxl-turbo/resolve/main/scheduler/scheduler_config.json --output sd-model-store/framework_model_dir/xl-turbo/XL_BASE/scheduler/scheduler_config.json

mkdir -p sd-model-store/framework_model_dir/xl-turbo/XL_BASE/tokenizer
curl -L https://huggingface.co/stabilityai/sdxl-turbo/resolve/main/tokenizer/merges.txt --output sd-model-store/framework_model_dir/xl-turbo/XL_BASE/tokenizer/merges.txt
curl -L https://huggingface.co/stabilityai/sdxl-turbo/resolve/main/tokenizer/special_tokens_map.json --output sd-model-store/framework_model_dir/xl-turbo/XL_BASE/tokenizer/special_tokens_map.json
curl -L https://huggingface.co/stabilityai/sdxl-turbo/resolve/main/tokenizer/tokenizer_config.json --output sd-model-store/framework_model_dir/xl-turbo/XL_BASE/tokenizer/tokenizer_config.json
curl -L https://huggingface.co/stabilityai/sdxl-turbo/resolve/main/tokenizer/vocab.json --output sd-model-store/framework_model_dir/xl-turbo/XL_BASE/tokenizer/vocab.json

mkdir -p sd-model-store/framework_model_dir/xl-turbo/XL_BASE/tokenizer_2
curl -L https://huggingface.co/stabilityai/sdxl-turbo/resolve/main/tokenizer_2/merges.txt --output sd-model-store/framework_model_dir/xl-turbo/XL_BASE/tokenizer_2/merges.txt
curl -L https://huggingface.co/stabilityai/sdxl-turbo/resolve/main/tokenizer_2/special_tokens_map.json --output sd-model-store/framework_model_dir/xl-turbo/XL_BASE/tokenizer_2/special_tokens_map.json
curl -L https://huggingface.co/stabilityai/sdxl-turbo/resolve/main/tokenizer_2/tokenizer_config.json --output sd-model-store/framework_model_dir/xl-turbo/XL_BASE/tokenizer_2/tokenizer_config.json
curl -L https://huggingface.co/stabilityai/sdxl-turbo/resolve/main/tokenizer_2/vocab.json --output sd-model-store/framework_model_dir/xl-turbo/XL_BASE/tokenizer_2/vocab.json

### Download Optimized ONNX files
mkdir -p sd-model-store/onnx sd-model-store/onnx/xl-turbo

mkdir -p sd-model-store/onnx/xl-turbo/XL_BASE

mkdir -p sd-model-store/onnx/xl-turbo/XL_BASE/clip.opt
curl -L https://huggingface.co/stabilityai/sdxl-turbo-tensorrt/resolve/main/clip.opt/model.onnx --output sd-model-store/onnx/xl-turbo/XL_BASE/clip.opt/model.onnx

mkdir -p sd-model-store/onnx/xl-turbo/XL_BASE/clip2.opt
curl -L https://huggingface.co/stabilityai/sdxl-turbo-tensorrt/resolve/main/clip2.opt/model.onnx --output sd-model-store/onnx/xl-turbo/XL_BASE/clip2.opt/model.onnx

mkdir -p sd-model-store/onnx/xl-turbo/XL_BASE/unetxl.opt
curl -L https://huggingface.co/stabilityai/sdxl-turbo-tensorrt/resolve/main/unetxl.opt/model.onnx --output sd-model-store/onnx/xl-turbo/XL_BASE/unetxl.opt/model.onnx
curl -L https://huggingface.co/stabilityai/sdxl-turbo-tensorrt/resolve/main/unetxl.opt/86fa0306-8ebf-11ee-ad41-0242ac110003 --output sd-model-store/onnx/xl-turbo/XL_BASE/unetxl.opt/86fa0306-8ebf-11ee-ad41-0242ac110003

mkdir -p sd-model-store/onnx/xl-turbo/XL_BASE/vae.opt
curl -L https://huggingface.co/stabilityai/sdxl-turbo-tensorrt/resolve/main/vae.opt/model.onnx --output sd-model-store/onnx/xl-turbo/XL_BASE/vae.opt/model.onnx

To filter potentially inappropriate or harmful images, download the Safety Checker (the size of the downloaded files is ~1.2GB):

Note

You can copy all the commands to your terminal at once

mkdir -p sd-model-store sd-model-store/framework_model_dir sd-model-store/framework_model_dir/safety_checker
curl -L https://huggingface.co/CompVis/stable-diffusion-safety-checker/resolve/main/config.json --output sd-model-store/framework_model_dir/safety_checker/config.json
curl -L https://huggingface.co/CompVis/stable-diffusion-safety-checker/resolve/main/pytorch_model.bin --output sd-model-store/framework_model_dir/safety_checker/pytorch_model.bin
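Because the download commands do not stop on a failed transfer, it can be worth confirming that every file landed where the build step expects it. The following is a minimal sketch that checks the paths given in the `--output` arguments of both download steps; the function name `missing_files` is illustrative, and a non-empty file is only a rough signal, not proof of a complete download.

```python
from pathlib import Path

# Relative paths taken from the --output arguments of the download commands.
BASE = "framework_model_dir/xl-turbo/XL_BASE"
ONNX = "onnx/xl-turbo/XL_BASE"
TOKENIZER_FILES = ["merges.txt", "special_tokens_map.json",
                   "tokenizer_config.json", "vocab.json"]

EXPECTED_FILES = (
    [f"{BASE}/scheduler/scheduler_config.json"]
    + [f"{BASE}/{tok}/{name}"
       for tok in ("tokenizer", "tokenizer_2") for name in TOKENIZER_FILES]
    + [f"{ONNX}/{part}/model.onnx"
       for part in ("clip.opt", "clip2.opt", "unetxl.opt", "vae.opt")]
    + [f"{ONNX}/unetxl.opt/86fa0306-8ebf-11ee-ad41-0242ac110003"]
    + [f"framework_model_dir/safety_checker/{name}"
       for name in ("config.json", "pytorch_model.bin")]
)


def missing_files(store_root="sd-model-store"):
    """Return the expected files that are absent or empty under store_root."""
    root = Path(store_root)
    return [rel for rel in EXPECTED_FILES
            if not (root / rel).is_file() or (root / rel).stat().st_size == 0]
```

Run from the directory that contains `sd-model-store`, `missing_files()` returns an empty list when all sixteen files are present.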

Quickstart Guide

The following Quickstart Guide gets SDXL Turbo NIM up and running quickly. Refer to the Detailed Instructions section for additional information if needed.

Note

This page assumes Prerequisite Software (Docker, NGC CLI, NGC registry access) is installed and set up.

Pull the NIM container.

docker pull nvcr.io/nvidia/nim/genai_sd_nim:24.03

Build the TRT engines. They will be stored in $(pwd)/sd-model-store/trt_engines/xl-turbo.

Important

It may take up to ten minutes to build the engines.

docker run --rm -it --gpus=1 \
  -v $(pwd)/sd-model-store:/sd-model-store \
  nvcr.io/nvidia/nim/genai_sd_nim:24.03 \
  bash -c "python3 build.py --model=sdxl-turbo"

Run the NIM.

docker run --rm -it --name sdxl-turbo-server \
  --runtime=nvidia --gpus=1 \
  -p 8000:8000 -p 8001:8001 -p 8002:8002 -p 8003:8003 \
  -v $(pwd)/sd-model-store/trt_engines:/model-store/ \
  -v $(pwd)/sd-model-store/framework_model_dir:/model-store/framework_model_dir \
  -e MODEL_NAME=sdxl-turbo \
  nvcr.io/nvidia/nim/genai_sd_nim:24.03

Wait until the health check returns 200 before proceeding.

curl -i -m 1 -L -s -o /dev/null -w %{http_code} localhost:8000/v2/health/ready

Request inference from the local NIM instance.

python3 -c "
import json
import base64
import requests

payload = json.dumps({
    \"text_prompts\": [
        {
            \"text\": \"a photo of an astronaut riding a horse on mars\"
        }
    ]
})

response = requests.post(\"http://localhost:8003/infer\", data=payload)

response.raise_for_status()

data = response.json()

img_base64 = data[\"artifacts\"][0][\"base64\"]

img_bytes = base64.b64decode(img_base64)

with open(\"output.jpg\", \"wb\") as f:
    f.write(img_bytes)
"

Detailed Instructions

Pull Container Image

Container image tags follow YY.MM versioning, similar to other container images on NGC. You may see different values under “Tags:”. These docs are based on the latest tag available at the time of writing.

ngc registry image info nvcr.io/nvidia/nim/genai_sd_nim

Image Repository Information
  Name: genai_sd_nim
  Display Name: genai_sd_nim
  Short Description: GenAI SD NIM
  Built By: NVIDIA
  Publisher:
  Multinode Support: False
  Multi-Arch Support: False
  Logo:
  Labels: NVIDIA AI Enterprise Supported, NVIDIA NIM
  Public: No
  Last Updated: Mar 16, 2024
  Latest Image Size: 11.15 GB
  Latest Tag: 24.03
  Tags:
    24.03
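When more than one tag is listed, the YY.MM scheme makes it easy to pick the newest one programmatically. The following is a minimal sketch that handles plain YY.MM tags only; the function names are illustrative, and any tag other than 24.03 used with it is hypothetical.

```python
def parse_tag(tag):
    """Split a YY.MM tag such as '24.03' into (year, month) integers."""
    year, month = tag.split(".")
    return 2000 + int(year), int(month)


def latest_tag(tags):
    """Return the most recent tag from an iterable of YY.MM strings."""
    return max(tags, key=parse_tag)
```

For example, `parse_tag("24.03")` yields `(2024, 3)`.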

Pull the container image using either of the following commands:

docker pull nvcr.io/nvidia/nim/genai_sd_nim:24.03

ngc registry image pull nvcr.io/nvidia/nim/genai_sd_nim:24.03

Build TRT Engines

Build the TRT engines from the PyTorch checkpoints and the optimized ONNX files.

docker run --rm -it --gpus=1 \
  -v $(pwd)/sd-model-store:/sd-model-store \
  nvcr.io/nvidia/nim/genai_sd_nim:24.03 \
  bash -c "python3 build.py --model=sdxl-turbo"

Note

The build process may take up to ten minutes.

The TRT engines are stored in $(pwd)/sd-model-store/trt_engines/xl-turbo.
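Before launching the microservice, it can help to confirm that the build step wrote something to the engine directory. The following is a minimal sketch; the engine file names are not documented here, so it only lists whatever files exist, and the function name `list_engine_files` is illustrative.

```python
from pathlib import Path


def list_engine_files(store_root="sd-model-store"):
    """Return the files found under trt_engines/xl-turbo, sorted by path."""
    engine_dir = Path(store_root) / "trt_engines" / "xl-turbo"
    if not engine_dir.is_dir():
        return []
    return sorted(p for p in engine_dir.rglob("*") if p.is_file())
```

An empty result suggests the build did not finish or wrote its output somewhere else.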

Launch Microservice

Launch the container. Start-up may take a couple of minutes; the logs show "Application startup complete." once the service is available.

docker run --rm -it --name sdxl-turbo-server \
  --runtime=nvidia --gpus=1 \
  -p 8000:8000 -p 8001:8001 -p 8002:8002 -p 8003:8003 \
  -v $(pwd)/sd-model-store/trt_engines:/model-store/ \
  -v $(pwd)/sd-model-store/framework_model_dir:/model-store/framework_model_dir \
  -e MODEL_NAME=sdxl-turbo \
  nvcr.io/nvidia/nim/genai_sd_nim:24.03

Health and Liveness checks

The container exposes health and liveness endpoints for integration into existing systems such as Kubernetes at /v2/health/ready and /v2/health/live. These endpoints only return an HTTP 200 OK status code if the service is ready or live, respectively. Run these in a new terminal.

curl -i -m 1 -L -s -o /dev/null -w %{http_code} localhost:8000/v2/health/ready

curl -i -m 1 -L -s -o /dev/null -w %{http_code} localhost:8000/v2/health/live
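In a script, the readiness endpoint can be polled until it reports 200 instead of re-running curl by hand. The following is a minimal sketch using only the Python standard library; the function names, the 600-second timeout, and the 5-second interval are illustrative choices, not values from the NIM.

```python
import time
import urllib.error
import urllib.request


def http_status(url, timeout_s=1.0):
    """Return the HTTP status code for a GET request, or None if unreachable."""
    try:
        with urllib.request.urlopen(url, timeout=timeout_s) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code          # server answered with an error status
    except (urllib.error.URLError, OSError):
        return None              # connection refused, timeout, and similar


def wait_until_ready(url="http://localhost:8000/v2/health/ready",
                     timeout_s=600.0, interval_s=5.0, probe=http_status):
    """Poll the readiness endpoint until it returns 200 or timeout_s elapses."""
    deadline = time.monotonic() + timeout_s
    while True:
        if probe(url) == 200:
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval_s)
```

`wait_until_ready()` returns `True` as soon as the service is ready and `False` if the timeout passes first. The `probe` argument exists so the loop can be exercised without a running container.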

Run Inference

Here is an example API call (see the API reference for details):

import base64
import json

import requests

# Request body: a single text prompt
payload = json.dumps({
    "text_prompts": [
        {
            "text": "a photo of an astronaut riding a horse on mars"
        }
    ]
})

# Send the request to the inference endpoint of the local NIM
response = requests.post("http://localhost:8003/infer", data=payload)
response.raise_for_status()

# The generated image is returned as a base64-encoded artifact
data = response.json()
img_base64 = data["artifacts"][0]["base64"]
img_bytes = base64.b64decode(img_base64)

# Write the decoded image to disk
with open("output.jpg", "wb") as f:
    f.write(img_bytes)

Here is the sample output for the above snippet:

Note

Your output may be different since the seed parameter is not set.
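To make runs repeatable, the seed can be fixed in the request. The following is a minimal sketch: it assumes the seed is passed as a top-level `seed` field in the JSON body, which is not confirmed on this page and should be checked against the API reference. The helper names `build_payload` and `decode_first_image` are illustrative.

```python
import base64
import json


def build_payload(prompt, seed=None):
    """Build the JSON request body for a single text prompt."""
    body = {"text_prompts": [{"text": prompt}]}
    if seed is not None:
        # Assumed field name and position; verify in the API reference.
        body["seed"] = seed
    return json.dumps(body)


def decode_first_image(response_json):
    """Return the raw bytes of the first artifact in a response dict."""
    return base64.b64decode(response_json["artifacts"][0]["base64"])
```

The string returned by `build_payload` can be passed as `data=` to the same `requests.post` call, and `decode_first_image(response.json())` gives the bytes to write to disk.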

Stopping the Container

When you’re done testing the endpoint, you can bring down the container by running docker stop sdxl-turbo-server in a new terminal.