cuSOLVER

The API reference guide for cuSOLVER, a GPU accelerated library for decompositions and linear system solutions for both dense and sparse matrices.

1. Introduction

- The cuSolver API on a single GPU

- The cuSolverMG API on a single-node multi-GPU system

The intent of cuSolver is to provide useful LAPACK-like features, such as common matrix factorization and triangular solve routines for dense matrices, a sparse least-squares solver and an eigenvalue solver. In addition cuSolver provides a new refactorization library useful for solving sequences of matrices with a shared sparsity pattern.

cuSolver combines three separate components under a single umbrella. The first part of cuSolver is called cuSolverDN, and deals with dense matrix factorization and solve routines such as LU, QR, SVD and LDLT, as well as useful utilities such as matrix and vector permutations.

Next, cuSolverSP provides a new set of sparse routines based on a sparse QR factorization. Not all matrices have a good sparsity pattern for parallelism in factorization, so the cuSolverSP library also provides a CPU path to handle those sequential-like matrices. For those matrices with abundant parallelism, the GPU path will deliver higher performance. The library is designed to be called from C and C++.

The final part is cuSolverRF, a sparse re-factorization package that can provide very good performance when solving a sequence of matrices where only the coefficients are changed but the sparsity pattern remains the same.

The GPU path of the cuSolver library assumes data is already in the device memory. It is the responsibility of the developer to allocate memory and to copy data between GPU memory and CPU memory using standard CUDA runtime API routines, such as cudaMalloc(), cudaFree(), cudaMemcpy(), and cudaMemcpyAsync().

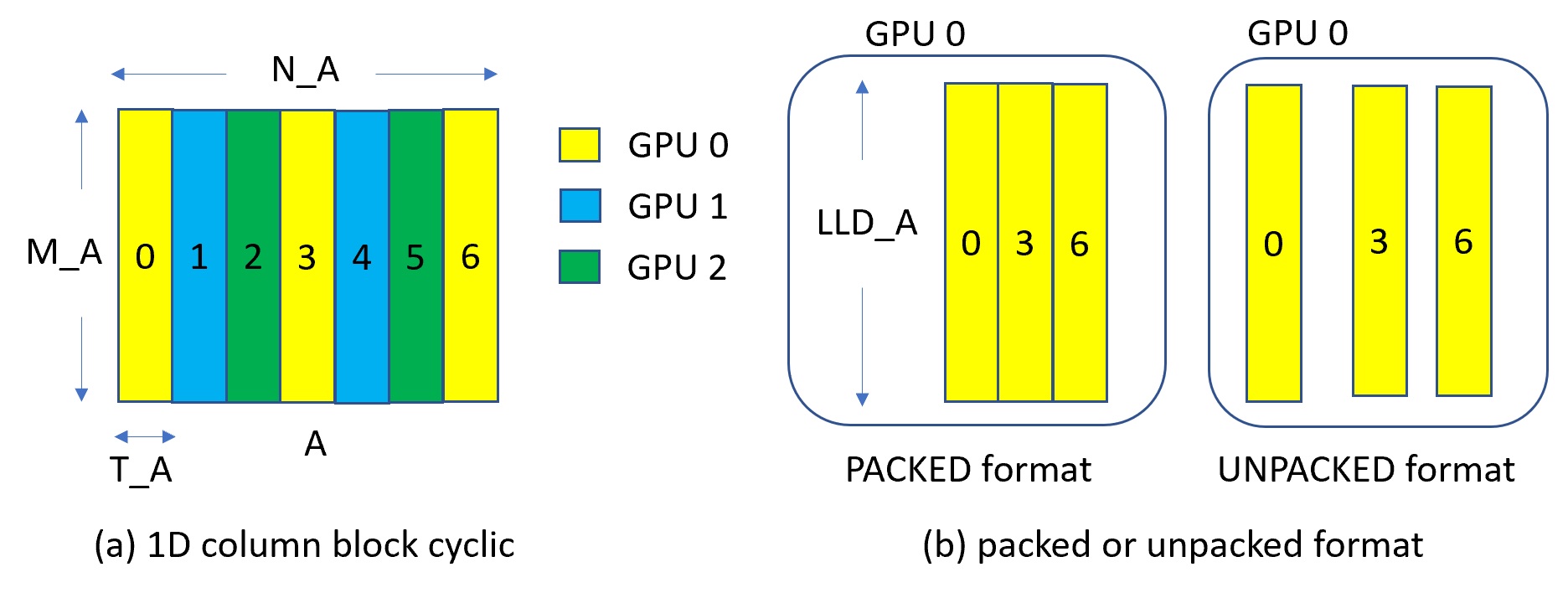

cuSolverMg is GPU-accelerated ScaLAPACK. Currently, cuSolverMg supports a 1-D column block-cyclic layout and provides a symmetric eigenvalue solver.

1.1. cuSolverDN: Dense LAPACK

The cuSolverDN library was designed to solve dense linear systems of the form

$$A x = b$$

where the coefficient matrix $A \in \mathbb{R}^{n \times n}$, right-hand-side vector $b \in \mathbb{R}^{n}$ and solution vector $x \in \mathbb{R}^{n}$.

The cuSolverDN library provides QR factorization and LU with partial pivoting to handle a general matrix A, which may be non-symmetric. Cholesky factorization is also provided for symmetric/Hermitian matrices. For symmetric indefinite matrices, we provide Bunch-Kaufman (LDL) factorization.

The cuSolverDN library also provides a helpful bidiagonalization routine and singular value decomposition (SVD).

The cuSolverDN library targets computationally-intensive and popular routines in LAPACK, and provides an API compatible with LAPACK. The user can accelerate these time-consuming routines with cuSolverDN and keep others in LAPACK without a major change to existing code.

1.2. cuSolverSP: Sparse LAPACK

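The cuSolverSP library was mainly designed to solve a sparse linear system

$$A x = b$$

and the least-squares problem

$$x = \operatorname{argmin}_{z} \| A z - b \|$$

where sparse matrix $A \in \mathbb{R}^{m \times n}$, right-hand-side vector $b \in \mathbb{R}^{m}$ and solution vector $x \in \mathbb{R}^{n}$. For a linear system, we require m=n.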

The core algorithm is based on sparse QR factorization. The matrix A is accepted in CSR format. If matrix A is symmetric/Hermitian, the user has to provide the full matrix, i.e., fill in the missing lower or upper part.

If matrix A is symmetric positive definite and the user only needs to solve $A x = b$, Cholesky factorization can be used and the user only needs to provide the lower triangular part of A.

On top of the linear and least-squares solvers, the cuSolverSP library provides a simple eigenvalue solver based on shift-inverse power method, and a function to count the number of eigenvalues contained in a box in the complex plane.

1.3. cuSolverRF: Refactorization

The cuSolverRF library was designed to accelerate the solution of sets of linear systems by fast re-factorization when given new coefficients in the same sparsity pattern

$$A_i x_i = f_i$$

where a sequence of coefficient matrices $A_i \in \mathbb{R}^{n \times n}$, right-hand-sides $f_i \in \mathbb{R}^{n}$ and solutions $x_i \in \mathbb{R}^{n}$ are given for i=1,...,k.

The cuSolverRF library is applicable when the sparsity pattern of the coefficient matrices, as well as the reordering to minimize fill-in and the pivoting used during the LU factorization, remain the same across these linear systems. In that case, the first linear system (i=1) requires a full LU factorization, while the subsequent linear systems (i=2,...,k) require only the LU re-factorization. The latter can be performed using the cuSolverRF library.

Notice that because the sparsity pattern of the coefficient matrices, the reordering and the pivoting remain the same, the sparsity pattern of the resulting triangular factors $L_i$ and $U_i$ also remains the same. Therefore, the real difference between the full LU factorization and LU re-factorization is that the required memory is known ahead of time.

1.4. Naming Conventions

The cuSolverDN library provides two different APIs: legacy and generic.

The functions in the legacy API are available for data types float, double, cuComplex, and cuDoubleComplex. The naming convention for the legacy API is as follows:

| cusolverDn<t><operation> |

where <t> can be S, D, C, Z, or X, corresponding to the data types float, double, cuComplex, cuDoubleComplex, and the generic type, respectively. <operation> can be Cholesky factorization (potrf), LU with partial pivoting (getrf), QR factorization (geqrf) and Bunch-Kaufman factorization (sytrf).

The functions in the generic API provide a single entry point for each routine and support for 64-bit integers to define matrix and vector dimensions. The naming convention for the generic API is data-agnostic and is as follows:

| cusolverDn<operation> |

where <operation> can be Cholesky factorization (potrf), LU with partial pivoting (getrf) and QR factorization (geqrf).

The cuSolverSP library functions are available for data types float, double, cuComplex, and cuDoubleComplex. The naming convention is as follows:

| cusolverSp[Host]<t>[<matrix data format>]<operation>[<output matrix data format>]<based on> |

where cusolverSp is the GPU path and cusolverSpHost is the corresponding CPU path. <t> can be S, D, C, Z, or X, corresponding to the data types float, double, cuComplex, cuDoubleComplex, and the generic type, respectively.

The <matrix data format> is csr, compressed sparse row format.

The <operation> can be ls, lsq, eig, eigs, corresponding to linear solver, least-square solver, eigenvalue solver and number of eigenvalues in a box, respectively.

The <output matrix data format> can be v or m, corresponding to a vector or a matrix.

<based on> describes which algorithm is used. For example, qr (sparse QR factorization) is used in linear solver and least-square solver.

All of the functions have the return type cusolverStatus_t and are explained in more detail in the chapters that follow.

| routine | data format | operation | output format | based on |

| csrlsvlu | csr | linear solver (ls) | vector (v) | LU (lu) with partial pivoting |

| csrlsvqr | csr | linear solver (ls) | vector (v) | QR factorization (qr) |

| csrlsvchol | csr | linear solver (ls) | vector (v) | Cholesky factorization (chol) |

| csrlsqvqr | csr | least-square solver (lsq) | vector (v) | QR factorization (qr) |

| csreigvsi | csr | eigenvalue solver (eig) | vector (v) | shift-inverse |

| csreigs | csr | number of eigenvalues in a box (eigs) | | |

| csrsymrcm | csr | Symmetric Reverse Cuthill-McKee (symrcm) | | |

The cuSolverRF library routines are available for data type double. Most of the routines follow the naming convention:

| cusolverRf<operation>[Host](...) |

where the trailing optional Host qualifier indicates the data is accessed on the host versus on the device, which is the default. The <operation> can be Setup, Analyze, Refactor, Solve, ResetValues, AccessBundledFactors and ExtractSplitFactors.

Finally, the return type of the cuSolverRF library routines is cusolverStatus_t.

1.5. Asynchronous Execution

The cuSolver library functions execute asynchronously whenever possible. Developers can always use the cudaDeviceSynchronize() function to ensure that the execution of a particular cuSolver library routine has completed.

A developer can also use the cudaMemcpy() routine to copy data from the device to the host and vice versa, using the cudaMemcpyDeviceToHost and cudaMemcpyHostToDevice parameters, respectively. In this case there is no need to add a call to cudaDeviceSynchronize() because the call to cudaMemcpy() with the above parameters is blocking and completes only when the results are ready on the host.

1.6. Library Property

The libraryPropertyType data type is an enumeration of library property types. For example, CUDA version X.Y.Z would yield MAJOR_VERSION=X, MINOR_VERSION=Y, PATCH_LEVEL=Z.

typedef enum libraryPropertyType_t

{

MAJOR_VERSION,

MINOR_VERSION,

PATCH_LEVEL

} libraryPropertyType;

The following code prints the version of the cuSolver library.

int major=-1,minor=-1,patch=-1;

cusolverGetProperty(MAJOR_VERSION, &major);

cusolverGetProperty(MINOR_VERSION, &minor);

cusolverGetProperty(PATCH_LEVEL, &patch);

printf("CUSOLVER Version (Major,Minor,PatchLevel): %d.%d.%d\n", major,minor,patch);

1.7. high precision package

The cusolver library uses high precision for iterative refinement when necessary.

2. Using the CUSOLVER API

2.1. General description

This chapter describes how to use the cuSolver library API. It is not a reference for the cuSolver API data types and functions; that is provided in subsequent chapters.

2.1.1. Thread Safety

The library is thread-safe, and its functions can be called from multiple host threads.

2.1.2. Scalar Parameters

In the cuSolver API, the scalar parameters can be passed by reference on the host.

2.1.3. Parallelism with Streams

If the application performs several small independent computations, or if it makes data transfers in parallel with the computation, then CUDA streams can be used to overlap these tasks. To achieve this overlap, the developer should:

- Create CUDA streams using the function cudaStreamCreate(), and

- Set the stream to be used by each individual cuSolver library routine by calling, for example, cusolverDnSetStream(), just prior to calling the actual cuSolverDN routine.

The computations performed in separate streams would then be overlapped automatically on the GPU, when possible. This approach is especially useful when the computation performed by a single task is relatively small, and is not enough to fill the GPU with work, or when there is a data transfer that can be performed in parallel with the computation.

2.1.4. How to link cusolver library

The cuSolver library provides the dynamic library libcusolver.so and the static library libcusolver_static.a. If the user links the application with libcusolver.so, libcublas.so and libcublasLt.so are also required. If the user links the application with libcusolver_static.a, the following libraries are also needed: libcudart_static.a, libculibos.a, liblapack_static.a, libmetis_static.a, libcublas_static.a and libcusparse_static.a.

2.1.5. Link Third-party LAPACK Library

- If you use libcusolver_static.a, then you must link with liblapack_static.a explicitly; otherwise the linker will report missing symbols. There is no symbol conflict between liblapack_static.a and other third-party LAPACK libraries, so you are free to link the latter to your application.

- The liblapack_static.a library is built into libcusolver.so. Hence, if you use libcusolver.so, you do not need to specify a LAPACK library. libcusolver.so will not pick up any routines from a third-party LAPACK library even if you link the application with it.

2.1.6. convention of info

Each LAPACK routine returns an info value that indicates the position of an invalid parameter. If info = -i, then the i-th parameter is invalid. To be consistent with the base-1 convention in LAPACK, cuSolver does not report an invalid handle through info. Instead, cuSolver returns CUSOLVER_STATUS_NOT_INITIALIZED for an invalid handle.
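As a minimal sketch of this convention, the helper below classifies an info value that has already been copied to host memory (cuSolverDN routines typically write info to device memory). The names invalid_parameter_index and report_info are hypothetical and not part of the cuSolver API. The handling of positive values follows the general LAPACK practice that info > 0 signals a routine-specific numerical condition.

```c
#include <assert.h>
#include <stdio.h>

/* Hypothetical helper, not part of the cuSolver API.
 * Classifies a LAPACK-style info value that is already on the host:
 *   returns 0   if info == 0 (no invalid parameter reported),
 *   returns i   if info == -i (the i-th parameter is invalid, 1-based),
 *   returns -1  if info > 0 (routine-specific numerical condition). */
int invalid_parameter_index(int info)
{
    if (info == 0) return 0;
    if (info < 0)  return -info;
    return -1;
}

/* Prints a one-line diagnostic for the given routine name. */
void report_info(const char *routine, int info)
{
    assert(routine != NULL);
    int idx = invalid_parameter_index(info);
    if (idx > 0)
        printf("%s: parameter %d is invalid\n", routine, idx);
    else if (idx < 0)
        printf("%s: info = %d (see the routine's documentation)\n", routine, info);
    else
        printf("%s: no invalid parameter reported\n", routine);
}
```

A caller would check the returned cusolverStatus_t first, and only then inspect info with a helper of this kind.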

2.1.7. usage of _bufferSize

The cuSolver library does not call cudaMalloc internally; the user must allocate the device workspace explicitly. The routine xyz_bufferSize queries the workspace size required by the routine xyz, for example xyz = potrf. To keep the API simple, xyz_bufferSize has almost the same signature as xyz even though it depends on only some of the parameters; for example, the device pointer is not used to determine the workspace size. In most cases, xyz_bufferSize is called at the beginning, before the actual device data (pointed to by a device pointer) is prepared or before the device pointer is allocated. In such cases, the user can pass a null pointer to xyz_bufferSize without breaking the functionality.

2.2. cuSolver Types Reference

2.2.1. cuSolverDN Types

The float, double, cuComplex, and cuDoubleComplex data types are supported. The first two are standard C data types, while the last two are exported from cuComplex.h. In addition, cuSolverDN uses some familiar types from cuBLAS.

2.2.1.1. cusolverDnHandle_t

This is a pointer type to an opaque cuSolverDN context, which the user must initialize by calling cusolverDnCreate() prior to calling any other library function. An un-initialized Handle object will lead to unexpected behavior, including crashes of cuSolverDN. The handle created and returned by cusolverDnCreate() must be passed to every cuSolverDN function.

2.2.1.2. cublasFillMode_t

The type indicates which part (lower or upper) of the dense matrix was filled and consequently should be used by the function. Its values correspond to Fortran characters ‘L’ or ‘l’ (lower) and ‘U’ or ‘u’ (upper) that are often used as parameters to legacy BLAS implementations.

| Value | Meaning |

| CUBLAS_FILL_MODE_LOWER | the lower part of the matrix is filled |

| CUBLAS_FILL_MODE_UPPER | the upper part of the matrix is filled |

2.2.1.3. cublasOperation_t

The cublasOperation_t type indicates which operation needs to be performed with the dense matrix. Its values correspond to Fortran characters ‘N’ or ‘n’ (non-transpose), ‘T’ or ‘t’ (transpose) and ‘C’ or ‘c’ (conjugate transpose) that are often used as parameters to legacy BLAS implementations.

| Value | Meaning |

| CUBLAS_OP_N | the non-transpose operation is selected |

| CUBLAS_OP_T | the transpose operation is selected |

| CUBLAS_OP_C | the conjugate transpose operation is selected |

2.2.1.4. cusolverEigType_t

The cusolverEigType_t type indicates which type of generalized eigenvalue problem is solved. Its values correspond to Fortran integer 1 (A*x = lambda*B*x), 2 (A*B*x = lambda*x), 3 (B*A*x = lambda*x), used as parameters to legacy LAPACK implementations.

| Value | Meaning |

| CUSOLVER_EIG_TYPE_1 | A*x = lambda*B*x |

| CUSOLVER_EIG_TYPE_2 | A*B*x = lambda*x |

| CUSOLVER_EIG_TYPE_3 | B*A*x = lambda*x |

2.2.1.5. cusolverEigMode_t

The cusolverEigMode_t type indicates whether or not eigenvectors are computed. Its values correspond to Fortran character 'N' (only eigenvalues are computed), 'V' (both eigenvalues and eigenvectors are computed) used as parameters to legacy LAPACK implementations.

| Value | Meaning |

| CUSOLVER_EIG_MODE_NOVECTOR | only eigenvalues are computed |

| CUSOLVER_EIG_MODE_VECTOR | both eigenvalues and eigenvectors are computed |

2.2.1.6. cusolverIRSRefinement_t

The cusolverIRSRefinement_t type indicates which solver type would be used for the specific cusolver function. Most of our experimentation shows that CUSOLVER_IRS_REFINE_GMRES is the best option.

More details about the refinement process can be found in Azzam Haidar, Stanimire Tomov, Jack Dongarra, and Nicholas J. Higham. 2018. Harnessing GPU tensor cores for fast FP16 arithmetic to speed up mixed-precision iterative refinement solvers. In Proceedings of the International Conference for High Performance Computing, Networking, Storage, and Analysis (SC '18). IEEE Press, Piscataway, NJ, USA, Article 47, 11 pages.

| Value | Meaning |

| CUSOLVER_IRS_REFINE_NOT_SET | Solver is not set, this value is what is set when creating the params structure. IRS solver will return error. |

| CUSOLVER_IRS_REFINE_NONE | No refinement solver, the IRS solver performs a factorisation followed by a solve without any refinement. For example if the IRS solver was cusolverDnIRSXgesv(), this is equivalent to a Xgesv routine without refinement and where the factorisation is carried out in the lowest precision. If for example the main precision was CUSOLVER_R_64F and the lowest was CUSOLVER_R_64F as well, then this is equivalent to a call to cusolverDnDgesv(). |

| CUSOLVER_IRS_REFINE_CLASSICAL | Classical iterative refinement solver. Similar to the one used in LAPACK routines. |

| CUSOLVER_IRS_REFINE_GMRES | GMRES (Generalized Minimal Residual) based iterative refinement solver. In recent studies, the GMRES method has drawn the attention of the scientific community for its ability to be used as a refinement solver that outperforms the classical iterative refinement method. Based on our experimentation, we recommend this setting. |

| CUSOLVER_IRS_REFINE_CLASSICAL_GMRES | Classical iterative refinement solver that uses GMRES (Generalized Minimal Residual) internally to solve the correction equation at each iteration. We call the classical refinement iteration the outer iteration, while the GMRES is called the inner iteration. Note that if the tolerance of the inner GMRES is set very low, say to machine precision, then the outer classical refinement iteration will perform only one iteration and thus this option will behave like CUSOLVER_IRS_REFINE_GMRES. |

| CUSOLVER_IRS_REFINE_GMRES_GMRES | Similar to CUSOLVER_IRS_REFINE_CLASSICAL_GMRES, which consists of a classical refinement process that uses GMRES to solve the inner correction system; here it is a GMRES (Generalized Minimal Residual) based iterative refinement solver that uses another GMRES internally to solve the preconditioned system. |

2.2.1.7. cusolverDnIRSParams_t

This is a pointer type to an opaque cusolverDnIRSParams_t structure, which holds parameters for the iterative refinement linear solvers such as cusolverDnXgesv(). Use corresponding helper functions described below to either Create/Destroy this structure or Set/Get solver parameters.

2.2.1.8. cusolverDnIRSInfos_t

This is a pointer type to an opaque cusolverDnIRSInfos_t structure, which holds information about the performed call to an iterative refinement linear solver (e.g., cusolverDnXgesv()). Use corresponding helper functions described below to either Create/Destroy this structure or retrieve solve information.

2.2.1.9. cusolverDnFunction_t

The cusolverDnFunction_t type indicates which routine needs to be configured by cusolverDnSetAdvOptions(). The value CUSOLVERDN_GETRF corresponds to the routine Getrf.

| Value | Meaning |

| CUSOLVERDN_GETRF | corresponds to Getrf |

2.2.1.10. cusolverAlgMode_t

The cusolverAlgMode_t type indicates which algorithm is selected by cusolverDnSetAdvOptions(). The set of algorithms supported for each routine is described in detail along with the routine documentation.

The default algorithm is CUSOLVER_ALG_0. The user can also provide NULL to use the default algorithm.

2.2.2. cuSolverSP Types

The float, double, cuComplex, and cuDoubleComplex data types are supported. The first two are standard C data types, while the last two are exported from cuComplex.h.

2.2.2.1. cusolverSpHandle_t

This is a pointer type to an opaque cuSolverSP context, which the user must initialize by calling cusolverSpCreate() prior to calling any other library function. An un-initialized Handle object will lead to unexpected behavior, including crashes of cuSolverSP. The handle created and returned by cusolverSpCreate() must be passed to every cuSolverSP function.

2.2.2.2. cusparseMatDescr_t

We have chosen to keep the same structure as exists in cuSparse to describe the shape and properties of a matrix. This enables calls to either cuSparse or cuSolver using the same matrix description.

typedef struct {

cusparseMatrixType_t MatrixType;

cusparseFillMode_t FillMode;

cusparseDiagType_t DiagType;

cusparseIndexBase_t IndexBase;

} cusparseMatDescr_t;

Please read the documentation of the cuSPARSE library to understand each field of cusparseMatDescr_t.

2.2.2.3. cusolverStatus_t

This is a status type returned by the library functions and it can have the following values.

| Value | Meaning |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_NOT_INITIALIZED | The cuSolver library was not initialized. This is usually caused by the lack of a prior call, an error in the CUDA Runtime API called by the cuSolver routine, or an error in the hardware setup. To correct: call the create routine of the module in use (for example cusolverDnCreate() or cusolverSpCreate()) prior to the function call; and check that the hardware, an appropriate version of the driver, and the cuSolver library are correctly installed. |

| CUSOLVER_STATUS_ALLOC_FAILED | Resource allocation failed inside the cuSolver library. This is usually caused by a cudaMalloc() failure. To correct: prior to the function call, deallocate previously allocated memory as much as possible. |

| CUSOLVER_STATUS_INVALID_VALUE | An unsupported value or parameter was passed to the function (a negative vector size, for example). To correct: ensure that all the parameters being passed have valid values. |

| CUSOLVER_STATUS_ARCH_MISMATCH | The function requires a feature absent from the device architecture; usually caused by the lack of support for atomic operations or double precision. To correct: compile and run the application on a device with compute capability 2.0 or above. |

| CUSOLVER_STATUS_EXECUTION_FAILED | The GPU program failed to execute. This is often caused by a launch failure of the kernel on the GPU, which can be caused by multiple reasons. To correct: check that the hardware, an appropriate version of the driver, and the cuSolver library are correctly installed. |

| CUSOLVER_STATUS_INTERNAL_ERROR | An internal cuSolver operation failed. This error is usually caused by a cudaMemcpyAsync() failure. To correct: check that the hardware, an appropriate version of the driver, and the cuSolver library are correctly installed. Also, check that the memory passed as a parameter to the routine is not being deallocated prior to the routine’s completion. |

| CUSOLVER_STATUS_MATRIX_TYPE_NOT_SUPPORTED | The matrix type is not supported by this function. This is usually caused by passing an invalid matrix descriptor to the function. To correct: check that the fields in descrA were set correctly. |

2.2.3. cuSolverRF Types

cuSolverRF only supports double.

2.2.3.1. cusolverRfHandle_t

The cusolverRfHandle_t is a pointer to an opaque data structure that contains the cuSolverRF library handle. The user must initialize the handle by calling cusolverRfCreate() prior to any other cuSolverRF library calls. The handle is passed to all other cuSolverRF library calls.

2.2.3.2. cusolverRfMatrixFormat_t

The cusolverRfMatrixFormat_t is an enum that indicates the input/output matrix format assumed by the cusolverRfSetupDevice(), cusolverRfSetupHost(), cusolverRfResetValues(), cusolverRfExtractBundledFactorsHost() and cusolverRfExtractSplitFactorsHost() routines.

| Value | Meaning |

| CUSOLVER_MATRIX_FORMAT_CSR | matrix format CSR is assumed. (default) |

| CUSOLVER_MATRIX_FORMAT_CSC | matrix format CSC is assumed. |

2.2.3.3. cusolverRfNumericBoostReport_t

The cusolverRfNumericBoostReport_t is an enum that indicates whether numeric boosting (of the pivot) was used during the cusolverRfRefactor() and cusolverRfSolve() routines. The numeric boosting is disabled by default.

| Value | Meaning |

| CUSOLVER_NUMERIC_BOOST_NOT_USED | numeric boosting not used. (default) |

| CUSOLVER_NUMERIC_BOOST_USED | numeric boosting used. |

2.2.3.4. cusolverRfResetValuesFastMode_t

The cusolverRfResetValuesFastMode_t is an enum that indicates the mode used for the cusolverRfResetValues() routine. The fast mode requires extra memory and is recommended only if very fast calls to cusolverRfResetValues() are needed.

| Value | Meaning |

| CUSOLVER_RESET_VALUES_FAST_MODE_OFF | fast mode disabled. (default) |

| CUSOLVER_RESET_VALUES_FAST_MODE_ON | fast mode enabled. |

2.2.3.5. cusolverRfFactorization_t

The cusolverRfFactorization_t is an enum that indicates which (internal) algorithm is used for refactorization in the cusolverRfRefactor() routine.

| Value | Meaning |

| CUSOLVER_FACTORIZATION_ALG0 | algorithm 0. (default) |

| CUSOLVER_FACTORIZATION_ALG1 | algorithm 1. |

| CUSOLVER_FACTORIZATION_ALG2 | algorithm 2. Domino-based scheme. |

2.2.3.6. cusolverRfTriangularSolve_t

The cusolverRfTriangularSolve_t is an enum that indicates which (internal) algorithm is used for triangular solve in the cusolverRfSolve() routine.

| Value | Meaning |

| CUSOLVER_TRIANGULAR_SOLVE_ALG1 | algorithm 1. (default) |

| CUSOLVER_TRIANGULAR_SOLVE_ALG2 | algorithm 2. Domino-based scheme. |

| CUSOLVER_TRIANGULAR_SOLVE_ALG3 | algorithm 3. Domino-based scheme. |

2.2.3.7. cusolverRfUnitDiagonal_t

The cusolverRfUnitDiagonal_t is an enum that indicates whether and where the unit diagonal is stored in the input/output triangular factors in the cusolverRfSetupDevice(), cusolverRfSetupHost() and cusolverRfExtractSplitFactorsHost() routines.

| Value | Meaning |

| CUSOLVER_UNIT_DIAGONAL_STORED_L | unit diagonal is stored in lower triangular factor. (default) |

| CUSOLVER_UNIT_DIAGONAL_STORED_U | unit diagonal is stored in upper triangular factor. |

| CUSOLVER_UNIT_DIAGONAL_ASSUMED_L | unit diagonal is assumed in lower triangular factor. |

| CUSOLVER_UNIT_DIAGONAL_ASSUMED_U | unit diagonal is assumed in upper triangular factor. |

2.2.3.8. cusolverStatus_t

The cusolverStatus_t is an enum that indicates success or failure of the cuSolverRF library call. It is returned by all the cuSolver library routines, and it uses the same enumerated values as the sparse and dense LAPACK routines.

2.3. cuSolver Formats Reference

2.3.1. Index Base Format

The CSR or CSC format requires either zero-based or one-based index for a sparse matrix A. The GLU library supports only zero-based indexing. Otherwise, both one-based and zero-based indexing are supported in cuSolver.
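When index arrays produced with one base have to be handed to a routine that expects the other, every entry of both the offset array (n+1 entries) and the index array (nnz entries) must be shifted. The sketch below shows one way to do this on the host; shift_index_base is a hypothetical helper, not a cuSolver function.

```c
#include <assert.h>

/* Hypothetical helper, not part of the cuSolver API.
 * Shifts every entry of an index array from one index base to another,
 * for example from one-based (from_base = 1) to zero-based (to_base = 0).
 * Apply it to both the offset array (n+1 entries) and the
 * column/row index array (nnz entries). */
void shift_index_base(int *idx, int count, int from_base, int to_base)
{
    assert(from_base == 0 || from_base == 1);
    assert(to_base == 0 || to_base == 1);
    for (int k = 0; k < count; ++k)
        idx[k] += to_base - from_base;
}
```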

2.3.2. Vector (Dense) Format

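The vectors are assumed to be stored linearly in memory. For example, the vector

$$x = \begin{pmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{pmatrix}$$

is represented as

$$\begin{pmatrix} x_1 & x_2 & \cdots & x_n \end{pmatrix}$$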

2.3.3. Matrix (Dense) Format

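The dense matrices are assumed to be stored in column-major order in memory. The sub-matrix can be accessed using the leading dimension of the original matrix. For example, the m*n (sub-)matrix

$$\begin{pmatrix} a_{1,1} & \cdots & a_{1,n} \\ a_{2,1} & \cdots & a_{2,n} \\ \vdots & \ddots & \vdots \\ a_{m,1} & \cdots & a_{m,n} \end{pmatrix}$$

is represented as

$$\begin{pmatrix} a_{1,1} & \cdots & a_{1,n} \\ a_{2,1} & \cdots & a_{2,n} \\ \vdots & \ddots & \vdots \\ a_{m,1} & \cdots & a_{m,n} \\ \vdots & \ddots & \vdots \\ a_{lda,1} & \cdots & a_{lda,n} \end{pmatrix}$$

with its elements arranged linearly in memory as

$$\begin{pmatrix} a_{1,1} & a_{2,1} & \cdots & a_{lda,1} & \cdots & a_{1,n} & a_{2,n} & \cdots & a_{lda,n} \end{pmatrix}$$

where lda ≥ m is the leading dimension of A.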
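The addressing rule behind the leading dimension can be sketched in a few lines of host code. The helpers colmajor_index and submatrix_get are hypothetical names used only for illustration, and they use zero-based indices as is customary in C.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: linear offset of element (i, j), zero-based,
 * in a column-major array with leading dimension lda. */
size_t colmajor_index(size_t i, size_t j, size_t lda)
{
    assert(i < lda);
    return i + j * lda;
}

/* Hypothetical helper: reads element (i, j) of the sub-matrix whose
 * top-left corner is element (r0, c0) of the parent matrix. The
 * sub-matrix keeps the parent's leading dimension; only the base
 * pointer changes. */
double submatrix_get(const double *parent, size_t lda,
                     size_t r0, size_t c0, size_t i, size_t j)
{
    const double *sub = parent + colmajor_index(r0, c0, lda);
    return sub[i + j * lda];
}
```

Passing the offset pointer together with the parent's lda is the same pattern used when a routine is asked to operate on a block of a larger matrix.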

2.3.4. Matrix (CSR) Format

In CSR format the matrix is represented by the following parameters

| parameter | type | size | Meaning |

| n | (int) | | the number of rows (and columns) in the matrix. |

| nnz | (int) | | the number of non-zero elements in the matrix. |

| csrRowPtr | (int *) | n+1 | the array of offsets corresponding to the start of each row in the arrays csrColInd and csrVal. This array has also an extra entry at the end that stores the number of non-zero elements in the matrix. |

| csrColInd | (int *) | nnz | the array of column indices corresponding to the non-zero elements in the matrix. It is assumed that this array is sorted by row and by column within each row. |

| csrVal | (S\|D\|C\|Z)* | nnz | the array of values corresponding to the non-zero elements in the matrix. It is assumed that this array is sorted by row and by column within each row. |

Note that in our CSR format sparse matrices are assumed to be stored in row-major order, in other words, the index arrays are first sorted by row indices and then within each row by column indices. Also it is assumed that each pair of row and column indices appears only once.

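For example, the 4x4 matrix

$$A = \begin{pmatrix} 1.0 & 3.0 & 0.0 & 0.0 \\ 0.0 & 4.0 & 6.0 & 0.0 \\ 2.0 & 5.0 & 7.0 & 8.0 \\ 0.0 & 0.0 & 0.0 & 9.0 \end{pmatrix}$$

is represented as

$$\mathrm{csrRowPtr} = \begin{pmatrix} 0 & 2 & 4 & 8 & 9 \end{pmatrix}$$

$$\mathrm{csrColInd} = \begin{pmatrix} 0 & 1 & 1 & 2 & 0 & 1 & 2 & 3 & 3 \end{pmatrix}$$

$$\mathrm{csrVal} = \begin{pmatrix} 1.0 & 3.0 & 4.0 & 6.0 & 2.0 & 5.0 & 7.0 & 8.0 & 9.0 \end{pmatrix}$$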
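To make the meaning of the three CSR arrays concrete, the following host-side sketch builds them from a dense column-major matrix. dense_to_csr is a hypothetical helper, not a cuSolver routine; it assumes zero-based indexing and treats every entry that compares equal to 0.0 as a structural zero.

```c
#include <assert.h>

/* Hypothetical helper, not part of the cuSolver API.
 * Converts a dense n-by-n column-major matrix A (leading dimension
 * lda >= n) into zero-based CSR. The caller provides csrRowPtr with
 * n+1 entries and csrColInd/csrVal with room for every non-zero.
 * Rows are visited in order and columns in increasing order within
 * each row, so the output is sorted as the format requires.
 * Returns the number of non-zero elements. */
int dense_to_csr(int n, const double *A, int lda,
                 int *csrRowPtr, int *csrColInd, double *csrVal)
{
    assert(n >= 0 && lda >= n);
    int nnz = 0;
    for (int row = 0; row < n; ++row) {
        csrRowPtr[row] = nnz;              /* offset where this row starts */
        for (int col = 0; col < n; ++col) {
            double a = A[row + col * lda]; /* column-major access */
            if (a != 0.0) {
                csrColInd[nnz] = col;
                csrVal[nnz] = a;
                ++nnz;
            }
        }
    }
    csrRowPtr[n] = nnz;                    /* extra entry: total nnz */
    return nnz;
}
```

The last entry of csrRowPtr ends up holding nnz, which is the extra entry described in the parameter table.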

2.3.5. Matrix (CSC) Format

In CSC format the matrix is represented by the following parameters

| parameter | type | size | Meaning |

| n | (int) | | the number of rows (and columns) in the matrix. |

| nnz | (int) | | the number of non-zero elements in the matrix. |

| cscColPtr | (int *) | n+1 | the array of offsets corresponding to the start of each column in the arrays cscRowInd and cscVal. This array has also an extra entry at the end that stores the number of non-zero elements in the matrix. |

| cscRowInd | (int *) | nnz | the array of row indices corresponding to the non-zero elements in the matrix. It is assumed that this array is sorted by column and by row within each column. |

| cscVal | (S\|D\|C\|Z)* | nnz | the array of values corresponding to the non-zero elements in the matrix. It is assumed that this array is sorted by column and by row within each column. |

Note that in our CSC format sparse matrices are assumed to be stored in column-major order, in other words, the index arrays are first sorted by column indices and then within each column by row indices. Also it is assumed that each pair of row and column indices appears only once.

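For example, the 4x4 matrix

$$A = \begin{pmatrix} 1.0 & 3.0 & 0.0 & 0.0 \\ 0.0 & 4.0 & 6.0 & 0.0 \\ 2.0 & 5.0 & 7.0 & 8.0 \\ 0.0 & 0.0 & 0.0 & 9.0 \end{pmatrix}$$

is represented as

$$\mathrm{cscColPtr} = \begin{pmatrix} 0 & 2 & 5 & 7 & 9 \end{pmatrix}$$

$$\mathrm{cscRowInd} = \begin{pmatrix} 0 & 2 & 0 & 1 & 2 & 1 & 2 & 2 & 3 \end{pmatrix}$$

$$\mathrm{cscVal} = \begin{pmatrix} 1.0 & 2.0 & 3.0 & 4.0 & 5.0 & 6.0 & 7.0 & 8.0 & 9.0 \end{pmatrix}$$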
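Reading a CSC matrix follows the same idea with the roles of rows and columns exchanged. As an illustration, the sketch below computes y = A*x on the host by walking the matrix column by column. csc_matvec is a hypothetical helper, not a cuSolver routine, and it assumes zero-based indexing.

```c
#include <assert.h>

/* Hypothetical helper, not part of the cuSolver API.
 * Computes y = A*x for an n-by-n matrix stored in zero-based CSC.
 * Column col occupies positions cscColPtr[col] .. cscColPtr[col+1]-1
 * of cscRowInd and cscVal; each stored value is scattered into the
 * output entry given by its row index. */
void csc_matvec(int n, const int *cscColPtr, const int *cscRowInd,
                const double *cscVal, const double *x, double *y)
{
    assert(n >= 0);
    for (int i = 0; i < n; ++i)
        y[i] = 0.0;
    for (int col = 0; col < n; ++col)
        for (int k = cscColPtr[col]; k < cscColPtr[col + 1]; ++k)
            y[cscRowInd[k]] += cscVal[k] * x[col];
}
```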

2.4. cuSolverDN: dense LAPACK Function Reference

This chapter describes the API of cuSolverDN, which provides a subset of dense LAPACK functions.

2.4.1. cuSolverDN Helper Function Reference

The cuSolverDN helper functions are described in this section.

2.4.1.1. cusolverDnCreate()

cusolverStatus_t cusolverDnCreate(cusolverDnHandle_t *handle);

This function initializes the cuSolverDN library and creates a handle on the cuSolverDN context. It must be called before any other cuSolverDN API function is invoked. It allocates hardware resources necessary for accessing the GPU.

| parameter | Memory | In/out | Meaning |

| handle | host | output | the pointer to the handle to the cuSolverDN context. |

| CUSOLVER_STATUS_SUCCESS | the initialization succeeded. |

| CUSOLVER_STATUS_NOT_INITIALIZED | the CUDA Runtime initialization failed. |

| CUSOLVER_STATUS_ALLOC_FAILED | the resources could not be allocated. |

| CUSOLVER_STATUS_ARCH_MISMATCH | the device only supports compute capability 2.0 and above. |

2.4.1.2. cusolverDnDestroy()

cusolverStatus_t cusolverDnDestroy(cusolverDnHandle_t handle);

This function releases CPU-side resources used by the cuSolverDN library.

| parameter | Memory | In/out | Meaning |

| handle | host | input | handle to the cuSolverDN library context. |

| CUSOLVER_STATUS_SUCCESS | the shutdown succeeded. |

| CUSOLVER_STATUS_NOT_INITIALIZED | the library was not initialized. |

2.4.1.3. cusolverDnSetStream()

cusolverStatus_t cusolverDnSetStream(cusolverDnHandle_t handle, cudaStream_t streamId)

This function sets the stream to be used by the cuSolverDN library to execute its routines.

| parameter | Memory | In/out | Meaning |

| handle | host | input | handle to the cuSolverDN library context. |

| streamId | host | input | the stream to be used by the library. |

| CUSOLVER_STATUS_SUCCESS | the stream was set successfully. |

| CUSOLVER_STATUS_NOT_INITIALIZED | the library was not initialized. |

2.4.1.4. cusolverDnGetStream()

cusolverStatus_t cusolverDnGetStream(cusolverDnHandle_t handle, cudaStream_t *streamId)

This function queries the stream used by the cuSolverDN library to execute its routines.

| parameter | Memory | In/out | Meaning |

| handle | host | input | handle to the cuSolverDN library context. |

| streamId | host | output | the stream used by the library. |

| CUSOLVER_STATUS_SUCCESS | the stream was retrieved successfully. |

| CUSOLVER_STATUS_NOT_INITIALIZED | the library was not initialized. |

2.4.1.5. cusolverDnCreateSyevjInfo()

cusolverStatus_t

cusolverDnCreateSyevjInfo(

syevjInfo_t *info);

This function creates and initializes the structure of syevj, syevjBatched and sygvj to default values.

| parameter | Memory | In/out | Meaning |

| info | host | output | the pointer to the structure of syevj. |

| CUSOLVER_STATUS_SUCCESS | the structure was initialized successfully. |

| CUSOLVER_STATUS_ALLOC_FAILED | the resources could not be allocated. |

2.4.1.6. cusolverDnDestroySyevjInfo()

cusolverStatus_t

cusolverDnDestroySyevjInfo(

syevjInfo_t info);

This function destroys and releases any memory required by the structure.

| parameter | Memory | In/out | Meaning |

| info | host | input | the structure of syevj. |

| CUSOLVER_STATUS_SUCCESS | the resources are released successfully. |

2.4.1.7. cusolverDnXsyevjSetTolerance()

cusolverStatus_t

cusolverDnXsyevjSetTolerance(

syevjInfo_t info,

double tolerance)

This function configures tolerance of syevj.

| parameter | Memory | In/out | Meaning |

| info | host | in/out | the pointer to the structure of syevj. |

| tolerance | host | input | accuracy of numerical eigenvalues. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

2.4.1.8. cusolverDnXsyevjSetMaxSweeps()

cusolverStatus_t

cusolverDnXsyevjSetMaxSweeps(

syevjInfo_t info,

int max_sweeps)

This function configures maximum number of sweeps in syevj. The default value is 100.

| parameter | Memory | In/out | Meaning |

| info | host | in/out | the pointer to the structure of syevj. |

| max_sweeps | host | input | maximum number of sweeps. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

2.4.1.9. cusolverDnXsyevjSetSortEig()

cusolverStatus_t

cusolverDnXsyevjSetSortEig(

syevjInfo_t info,

int sort_eig)

If sort_eig is zero, the eigenvalues are not sorted. This setting only affects syevjBatched; syevj and sygvj always sort eigenvalues in ascending order. By default, eigenvalues are sorted in ascending order.

| parameter | Memory | In/out | Meaning |

| info | host | in/out | the pointer to the structure of syevj. |

| sort_eig | host | input | if sort_eig is zero, the eigenvalues are not sorted. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

2.4.1.10. cusolverDnXsyevjGetResidual()

cusolverStatus_t

cusolverDnXsyevjGetResidual(

cusolverDnHandle_t handle,

syevjInfo_t info,

double *residual)

This function reports residual of syevj or sygvj. It does not support syevjBatched. If the user calls this function after syevjBatched, the error CUSOLVER_STATUS_NOT_SUPPORTED is returned.

| parameter | Memory | In/out | Meaning |

| handle | host | input | handle to the cuSolverDN library context. |

| info | host | input | the pointer to the structure of syevj. |

| residual | host | output | residual of syevj. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

| CUSOLVER_STATUS_NOT_SUPPORTED | does not support batched version |

2.4.1.11. cusolverDnXsyevjGetSweeps()

cusolverStatus_t

cusolverDnXsyevjGetSweeps(

cusolverDnHandle_t handle,

syevjInfo_t info,

int *executed_sweeps)

This function reports number of executed sweeps of syevj or sygvj. It does not support syevjBatched. If the user calls this function after syevjBatched, the error CUSOLVER_STATUS_NOT_SUPPORTED is returned.

| parameter | Memory | In/out | Meaning |

| handle | host | input | handle to the cuSolverDN library context. |

| info | host | input | the pointer to the structure of syevj. |

| executed_sweeps | host | output | number of executed sweeps. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

| CUSOLVER_STATUS_NOT_SUPPORTED | does not support batched version |

2.4.1.12. cusolverDnCreateGesvdjInfo()

cusolverStatus_t

cusolverDnCreateGesvdjInfo(

gesvdjInfo_t *info);

This function creates and initializes the structure of gesvdj and gesvdjBatched to default values.

| parameter | Memory | In/out | Meaning |

| info | host | output | the pointer to the structure of gesvdj. |

| CUSOLVER_STATUS_SUCCESS | the structure was initialized successfully. |

| CUSOLVER_STATUS_ALLOC_FAILED | the resources could not be allocated. |

2.4.1.13. cusolverDnDestroyGesvdjInfo()

cusolverStatus_t

cusolverDnDestroyGesvdjInfo(

gesvdjInfo_t info);

This function destroys and releases any memory required by the structure.

| parameter | Memory | In/out | Meaning |

| info | host | input | the structure of gesvdj. |

| CUSOLVER_STATUS_SUCCESS | the resources are released successfully. |

2.4.1.14. cusolverDnXgesvdjSetTolerance()

cusolverStatus_t

cusolverDnXgesvdjSetTolerance(

gesvdjInfo_t info,

double tolerance)

This function configures tolerance of gesvdj.

| parameter | Memory | In/out | Meaning |

| info | host | in/out | the pointer to the structure of gesvdj. |

| tolerance | host | input | accuracy of numerical singular values. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

2.4.1.15. cusolverDnXgesvdjSetMaxSweeps()

cusolverStatus_t

cusolverDnXgesvdjSetMaxSweeps(

gesvdjInfo_t info,

int max_sweeps)

This function configures maximum number of sweeps in gesvdj. The default value is 100.

| parameter | Memory | In/out | Meaning |

| info | host | in/out | the pointer to the structure of gesvdj. |

| max_sweeps | host | input | maximum number of sweeps. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

2.4.1.16. cusolverDnXgesvdjSetSortEig()

cusolverStatus_t

cusolverDnXgesvdjSetSortEig(

gesvdjInfo_t info,

int sort_svd)

If sort_svd is zero, the singular values are not sorted. This setting only affects gesvdjBatched; gesvdj always sorts singular values in descending order. By default, singular values are sorted in descending order.

| parameter | Memory | In/out | Meaning |

| info | host | in/out | the pointer to the structure of gesvdj. |

| sort_svd | host | input | if sort_svd is zero, the singular values are not sorted. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

2.4.1.17. cusolverDnXgesvdjGetResidual()

cusolverStatus_t

cusolverDnXgesvdjGetResidual(

cusolverDnHandle_t handle,

gesvdjInfo_t info,

double *residual)

This function reports residual of gesvdj. It does not support gesvdjBatched. If the user calls this function after gesvdjBatched, the error CUSOLVER_STATUS_NOT_SUPPORTED is returned.

| parameter | Memory | In/out | Meaning |

| handle | host | input | handle to the cuSolverDN library context. |

| info | host | input | the pointer to the structure of gesvdj. |

| residual | host | output | residual of gesvdj. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

| CUSOLVER_STATUS_NOT_SUPPORTED | does not support batched version |

2.4.1.18. cusolverDnXgesvdjGetSweeps()

cusolverStatus_t

cusolverDnXgesvdjGetSweeps(

cusolverDnHandle_t handle,

gesvdjInfo_t info,

int *executed_sweeps)

This function reports number of executed sweeps of gesvdj. It does not support gesvdjBatched. If the user calls this function after gesvdjBatched, the error CUSOLVER_STATUS_NOT_SUPPORTED is returned.

| parameter | Memory | In/out | Meaning |

| handle | host | input | handle to the cuSolverDN library context. |

| info | host | input | the pointer to the structure of gesvdj. |

| executed_sweeps | host | output | number of executed sweeps. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

| CUSOLVER_STATUS_NOT_SUPPORTED | does not support batched version |

2.4.1.19. cusolverDnIRSParamsCreate()

cusolverStatus_t cusolverDnIRSParamsCreate(cusolverDnIRSParams_t *params);

This function creates the parameter structure for an IRS solver, such as cusolverDnIRSXgesv() or cusolverDnIRSXgels(), and initializes it to default values. The params structure created by this function can be used by one or more calls to the same or to different IRS solvers. Note that in CUDA 10.2 the behavior was different: a new params structure had to be created for each call to an IRS solver. The user can also change the configuration of the params structure and then start a new IRS call, but should make sure that the previous call has completed first, because changing the configuration while a previous call is still in progress could affect that call.

| parameter | Memory | In/out | Meaning |

| params | host | output | Pointer to the cusolverDnIRSParams_t Params structure |

| CUSOLVER_STATUS_SUCCESS | The structure was created and initialized successfully. |

| CUSOLVER_STATUS_ALLOC_FAILED | The resources could not be allocated. |

2.4.1.20. cusolverDnIRSParamsDestroy()

cusolverStatus_t cusolverDnIRSParamsDestroy(cusolverDnIRSParams_t params);

This function destroys and releases any memory required by the Params structure.

| parameter | Memory | In/out | Meaning |

| params | host | input | The cusolverDnIRSParams_t Params structure |

| CUSOLVER_STATUS_SUCCESS | The resources are released successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |

| CUSOLVER_STATUS_IRS_INFOS_NOT_DESTROYED | Not all the Infos structure associated with this Params structure have been destroyed yet. |

2.4.1.21. cusolverDnIRSParamsSetSolverPrecisions()

cusolverStatus_t

cusolverDnIRSParamsSetSolverPrecisions(

cusolverDnIRSParams_t params,

cusolverPrecType_t solver_main_precision,

cusolverPrecType_t solver_lowest_precision );

This function sets both the main and the lowest precision for the Iterative Refinement Solver (IRS). The main precision is the precision of the input and output data. The lowest precision is the lowest computational precision that the solver is allowed to use during the LU factorization. The user must set both the main and the lowest precision before the first call to the IRS solver, because they are NOT set by default when the params structure is created: they depend on the input/output data type and on the user's request. This function is a wrapper around both cusolverDnIRSParamsSetSolverMainPrecision() and cusolverDnIRSParamsSetSolverLowestPrecision(). All possible combinations of main/lowest precision are described in the table below.

The lowest precision usually determines the speedup that can be achieved. The ratio of the performance of the lowest precision to that of the main precision (i.e., the input/output data type) gives a rough upper bound on the obtainable speedup. More precisely, the speedup depends on many factors, but for large matrix sizes it is governed by the performance ratio of the matrix-matrix rank-k product (e.g., GEMM where K is 256 and M=N=size of the matrix) in the two precisions. For instance, if the input/output precision is real double precision CUSOLVER_R_64F and the lowest precision is CUSOLVER_R_32F, a speedup of at most 2X can be expected for large problem sizes. If the lowest precision is CUSOLVER_R_16F, a speedup of 3X-4X can be expected. A reasonable strategy should take into account the number of right-hand sides, the size of the matrix, and the convergence rate.

| parameter | Memory | In/out | Meaning |

| params | host | in/out | The cusolverDnIRSParams_t Params structure |

| solver_main_precision | host | input | Allowed Inputs/Outputs datatype (for example CUSOLVER_R_64F for real double precision data). See the table below for the supported precisions. |

| solver_lowest_precision | host | input | Allowed lowest compute type (for example CUSOLVER_R_16F for half precision computation). See the table below for the supported precisions. |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |

| Inputs/Outputs Data Type (e.g., main precision) | Supported values for the lowest precision |

| CUSOLVER_C_64F | CUSOLVER_C_64F, CUSOLVER_C_32F, CUSOLVER_C_16F, CUSOLVER_C_16BF, CUSOLVER_C_TF32 |

| CUSOLVER_C_32F | CUSOLVER_C_32F, CUSOLVER_C_16F, CUSOLVER_C_16BF, CUSOLVER_C_TF32 |

| CUSOLVER_R_64F | CUSOLVER_R_64F, CUSOLVER_R_32F, CUSOLVER_R_16F, CUSOLVER_R_16BF, CUSOLVER_R_TF32 |

| CUSOLVER_R_32F | CUSOLVER_R_32F, CUSOLVER_R_16F, CUSOLVER_R_16BF, CUSOLVER_R_TF32 |
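The table above can be read as a simple rule: the lowest precision must be of the same kind (real or complex) as the main precision and must not be wider than it, and the main precision is either 32F or 64F. The sketch below encodes that reading in plain C. The enum `prec_t` and the function names are hypothetical stand-ins introduced only for this example; they are not the cusolverPrecType_t definitions from the cuSOLVER headers.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical local mirror of the precision names in the table above.
   These are NOT the enumerators from the cuSOLVER headers; they exist
   only so the sketch compiles without the CUDA toolkit. */
typedef enum {
    PREC_R_16F, PREC_R_16BF, PREC_R_TF32, PREC_R_32F, PREC_R_64F,
    PREC_C_16F, PREC_C_16BF, PREC_C_TF32, PREC_C_32F, PREC_C_64F
} prec_t;

static bool is_complex(prec_t p) { return p >= PREC_C_16F; }

/* Position inside the real or complex family:
   0 = 16F, 1 = 16BF, 2 = TF32, 3 = 32F, 4 = 64F. */
static int rank_in_family(prec_t p)
{
    assert(p >= PREC_R_16F && p <= PREC_C_64F);
    return is_complex(p) ? (int)p - (int)PREC_C_16F : (int)p;
}

/* Returns true when (main, lowest) is one of the table's combinations. */
bool irs_precisions_supported(prec_t main_prec, prec_t lowest_prec)
{
    int m = rank_in_family(main_prec);
    int l = rank_in_family(lowest_prec);
    if (is_complex(main_prec) != is_complex(lowest_prec)) return false;
    if (m < 3) return false;   /* main precision must be 32F or 64F */
    return l <= m;             /* lowest is never wider than main */
}
```

For example, `irs_precisions_supported(PREC_R_64F, PREC_R_16F)` is true, while `irs_precisions_supported(PREC_R_32F, PREC_R_64F)` is false because the lowest precision would be wider than the main one.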

2.4.1.22. cusolverDnIRSParamsSetSolverMainPrecision()

cusolverStatus_t

cusolverDnIRSParamsSetSolverMainPrecision(

cusolverDnIRSParams_t params,

cusolverPrecType_t solver_main_precision);

This function sets the main precision for the Iterative Refinement Solver (IRS). The main precision is the data type of the input and output data. The user must set both the main and the lowest precision before the first call to the IRS solver, because they are NOT set by default when the params structure is created: they depend on the input/output data type and on the user's request. The user can set the main precision either by calling this function or by calling cusolverDnIRSParamsSetSolverPrecisions(), which sets both the main and the lowest precision together. All possible combinations of main/lowest precision are described in the table in the cusolverDnIRSParamsSetSolverPrecisions() section above.

| parameter | Memory | In/out | Meaning |

| params | host | in/out | The cusolverDnIRSParams_t Params structure |

| solver_main_precision | host | input | Allowed Inputs/Outputs datatype (for example CUSOLVER_R_64F for real double precision data). See the table in the cusolverDnIRSParamsSetSolverPrecisions() section above for the supported precisions. |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |

2.4.1.23. cusolverDnIRSParamsSetSolverLowestPrecision()

cusolverStatus_t

cusolverDnIRSParamsSetSolverLowestPrecision(

cusolverDnIRSParams_t params,

cusolverPrecType_t lowest_precision_type);

This function sets the lowest precision that will be used by the Iterative Refinement Solver. The lowest precision is the lowest computational precision that the solver is allowed to use during the LU factorization. The user must set both the main and the lowest precision before the first call to the IRS solver, because they are NOT set by default when the params structure is created: they depend on the input/output data type and on the user's request.

The lowest precision usually determines the speedup that can be achieved. The ratio of the performance of the lowest precision to that of the main precision (i.e., the input/output data type) gives a rough upper bound on the obtainable speedup. More precisely, the speedup depends on many factors, but for large matrix sizes it is governed by the performance ratio of the matrix-matrix rank-k product (e.g., GEMM where K is 256 and M=N=size of the matrix) in the two precisions. For instance, if the input/output precision is real double precision CUSOLVER_R_64F and the lowest precision is CUSOLVER_R_32F, a speedup of at most 2X can be expected for large problem sizes. If the lowest precision is CUSOLVER_R_16F, a speedup of 3X-4X can be expected. A reasonable strategy should take into account the number of right-hand sides, the size of the matrix, and the convergence rate.

| parameter | Memory | In/out | Meaning |

| params | host | in/out | The cusolverDnIRSParams_t Params structure |

| lowest_precision_type | host | input | Allowed lowest compute type (for example CUSOLVER_R_16F for half precision computation). See the table in the cusolverDnIRSParamsSetSolverPrecisions() section above for the supported precisions. |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |

2.4.1.24. cusolverDnIRSParamsSetRefinementSolver()

cusolverStatus_t

cusolverDnIRSParamsSetRefinementSolver(

cusolverDnIRSParams_t params,

cusolverIRSRefinement_t solver);

This function sets the refinement solver to be used in the Iterative Refinement Solver functions, such as cusolverDnIRSXgesv() or cusolverDnIRSXgels(). The user must set the refinement algorithm before the first call to the IRS solver, because it is NOT set by default when the params structure is created. The values that can be set and their meanings are described in the table below.

| parameter | Memory | In/out | Meaning |

| params | host | in/out | The cusolverDnIRSParams_t Params structure |

| solver | host | input | Type of the refinement solver to be used by the IRS solver, such as cusolverDnIRSXgesv() or cusolverDnIRSXgels(). |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |

| Value | Meaning |

| CUSOLVER_IRS_REFINE_NOT_SET | Solver is not set. This is the value assigned when the params structure is created. The IRS solver will return an error. |

| CUSOLVER_IRS_REFINE_NONE | No refinement solver. The IRS solver performs a factorization followed by a solve without any refinement. For example, if the IRS solver is cusolverDnIRSXgesv(), this is equivalent to an Xgesv routine without refinement, where the factorization is carried out in the lowest precision. If, for example, the main precision is CUSOLVER_R_64F and the lowest precision is CUSOLVER_R_64F as well, this is equivalent to a call to cusolverDnDgesv(). |

| CUSOLVER_IRS_REFINE_CLASSICAL | Classical iterative refinement solver. Similar to the one used in LAPACK routines. |

| CUSOLVER_IRS_REFINE_GMRES | GMRES (Generalized Minimal Residual) based iterative refinement solver. In recent studies, the GMRES method has drawn the attention of the scientific community for its ability to serve as a refinement solver that outperforms the classical iterative refinement method. Based on our experimentation, we recommend this setting. |

| CUSOLVER_IRS_REFINE_CLASSICAL_GMRES | Classical iterative refinement solver that uses GMRES (Generalized Minimal Residual) internally to solve the correction equation at each iteration. We call the classical refinement iteration the outer iteration, while the GMRES iteration is called the inner iteration. Note that if the tolerance of the inner GMRES is set very low, say to machine precision, then the outer classical refinement will perform only one iteration, and thus this option will behave like CUSOLVER_IRS_REFINE_GMRES. |

| CUSOLVER_IRS_REFINE_GMRES_GMRES | Similar to CUSOLVER_IRS_REFINE_CLASSICAL_GMRES, which consists of a classical refinement process that uses GMRES to solve the inner correction system. Here, it is a GMRES (Generalized Minimal Residual) based iterative refinement solver that uses another GMRES internally to solve the preconditioned system. |
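To make the idea behind classical iterative refinement concrete, here is a minimal, CPU-only sketch in plain C: the matrix is factored once in single precision, and the solution is then corrected using residuals computed in double precision. It illustrates the general technique only and is not how cuSOLVER implements it; the function names, the fixed size `N`, and the unpivoted LU (valid only for a well-conditioned, diagonally dominant demo matrix) are assumptions of this example.

```c
#include <assert.h>
#include <math.h>

#define N 3

/* In-place LU factorization without pivoting, in single precision.
   Adequate only for diagonally dominant demo matrices. */
static void lu_factor_f32(float lu[N][N])
{
    for (int k = 0; k < N; ++k)
        for (int i = k + 1; i < N; ++i) {
            lu[i][k] /= lu[k][k];
            for (int j = k + 1; j < N; ++j)
                lu[i][j] -= lu[i][k] * lu[k][j];
        }
}

/* Solves L*U*x = b in single precision with the factors from above. */
static void lu_solve_f32(float lu[N][N], const float b[N], float x[N])
{
    for (int i = 0; i < N; ++i) {          /* forward substitution, unit L */
        x[i] = b[i];
        for (int j = 0; j < i; ++j) x[i] -= lu[i][j] * x[j];
    }
    for (int i = N - 1; i >= 0; --i) {     /* back substitution with U */
        for (int j = i + 1; j < N; ++j) x[i] -= lu[i][j] * x[j];
        x[i] /= lu[i][i];
    }
}

/* Classical refinement. Returns the number of corrections applied, or -1
   if the infinity norm of the residual is still above tol after
   max_iters corrections. */
int refine_classical(double a[N][N], const double b[N], double x[N],
                     double tol, int max_iters)
{
    float lu[N][N], rf[N], df[N];
    assert(tol >= 0.0 && max_iters >= 0);
    for (int i = 0; i < N; ++i)
        for (int j = 0; j < N; ++j) lu[i][j] = (float)a[i][j];
    lu_factor_f32(lu);                     /* factor once, low precision */

    for (int i = 0; i < N; ++i) x[i] = 0.0;
    for (int it = 0; it <= max_iters; ++it) {
        double rnorm = 0.0;
        for (int i = 0; i < N; ++i) {      /* r = b - A*x in double */
            double r = b[i];
            for (int j = 0; j < N; ++j) r -= a[i][j] * x[j];
            rf[i] = (float)r;
            if (fabs(r) > rnorm) rnorm = fabs(r);
        }
        if (rnorm <= tol) return it;
        if (it == max_iters) break;
        lu_solve_f32(lu, rf, df);          /* correction in low precision */
        for (int i = 0; i < N; ++i) x[i] += (double)df[i];
    }
    return -1;
}
```

The negative return value on non-convergence is chosen here to echo the negative niters convention described for the IRS solvers; the GMRES-based variants replace the correction solve with a Krylov iteration that this sketch does not attempt to show.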

2.4.1.25. cusolverDnIRSParamsSetTol()

cusolverStatus_t

cusolverDnIRSParamsSetTol(

cusolverDnIRSParams_t params,

double val );

This function sets the tolerance for the refinement solver. By default, it is such that all the right-hand sides (RHS) satisfy:

RNRM < SQRT(N)*XNRM*ANRM*EPS*BWDMAX, where

- RNRM is the infinity-norm of the residual

- XNRM is the infinity-norm of the solution

- ANRM is the infinity-operator-norm of the matrix A

- EPS is the machine epsilon for the Inputs/Outputs datatype that matches LAPACK <X>LAMCH('Epsilon')

- BWDMAX, the value BWDMAX is fixed to 1.0

The user can use this function to change the tolerance to a lower or higher value. The goal is to give users enough control to investigate and tune every detail of the IRS solver. Note that the tolerance value is always in real double precision, whatever the Inputs/Outputs datatype is.

| parameter | Memory | In/out | Meaning |

| params | host | in/out | The cusolverDnIRSParams_t Params structure |

| val | host | input | double precision real value to which the refinement tolerance will be set. |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |

2.4.1.26. cusolverDnIRSParamsSetTolInner()

cusolverStatus_t

cusolverDnIRSParamsSetTolInner(

cusolverDnIRSParams_t params,

double val );

This function sets the tolerance for the inner refinement solver (e.g., the inner GMRES) when the refinement solver is a two-level solver (e.g., CUSOLVER_IRS_REFINE_CLASSICAL_GMRES or CUSOLVER_IRS_REFINE_GMRES_GMRES). It is not referenced for a one-level refinement solver such as CUSOLVER_IRS_REFINE_CLASSICAL or CUSOLVER_IRS_REFINE_GMRES. It is set to 1e-4 by default. For example, if the refinement solver is set to CUSOLVER_IRS_REFINE_CLASSICAL_GMRES, setting this tolerance means that the inner GMRES solver will converge to that tolerance at each outer iteration of the classical refinement solver. The goal is to give users enough control to investigate and tune every detail of the IRS solver. Note that the tolerance value is always in real double precision, whatever the Inputs/Outputs datatype is.

| parameter | Memory | In/out | Meaning |

| params | host | in/out | The cusolverDnIRSParams_t Params structure |

| val | host | input | Double precision real value to which the tolerance of the inner refinement solver will be set. |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |

2.4.1.27. cusolverDnIRSParamsSetMaxIters()

cusolverStatus_t

cusolverDnIRSParamsSetMaxIters(

cusolverDnIRSParams_t params,

int max_iters);

This function sets the total number of allowed refinement iterations, after which the solver will stop. Total means the sum of the outer and the inner iterations (inner iterations are meaningful only when a two-level refinement solver is set). The default value is 50. The goal is to give users enough control to investigate and tune every detail of the IRS solver.

| parameter | Memory | In/out | Meaning |

| params | host | in/out | The cusolverDnIRSParams_t Params structure |

| max_iters | host | input | Maximum total number of iterations allowed for the refinement solver |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |

2.4.1.28. cusolverDnIRSParamsSetMaxItersInner()

cusolverStatus_t

cusolverDnIRSParamsSetMaxItersInner(

cusolverDnIRSParams_t params,

cusolver_int_t maxiters_inner );

This function sets the maximal number of iterations allowed for the inner refinement solver. It is not referenced for a one-level refinement solver such as CUSOLVER_IRS_REFINE_CLASSICAL or CUSOLVER_IRS_REFINE_GMRES. The inner refinement solver stops after reaching either the inner tolerance or the MaxItersInner value. By default, it is set to 50. Note that this value cannot be larger than MaxIters, since MaxIters is the total number of allowed iterations. Note also that if the user calls cusolverDnIRSParamsSetMaxIters() after calling this function, SetMaxIters takes priority and overwrites MaxItersInner with the minimum of (MaxIters, MaxItersInner).

| parameter | Memory | In/out | Meaning |

| params | host | in/out | The cusolverDnIRSParams_t Params structure |

| maxiters_inner | host | input | Maximum number of allowed inner iterations for the inner refinement solver. Meaningful when the refinement solver is a two-level solver such as CUSOLVER_IRS_REFINE_CLASSICAL_GMRES or CUSOLVER_IRS_REFINE_GMRES_GMRES. The value should be less than or equal to MaxIters. |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |

| CUSOLVER_STATUS_IRS_PARAMS_INVALID | The value was larger than MaxIters. |
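The interaction between MaxIters and MaxItersInner described above can be modeled with a small plain-C sketch. The struct `irs_limits_t`, the status constants, and the function names are hypothetical and exist only to restate the documented rules; they are not part of the cuSOLVER API.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical model of the two iteration limits held in a params structure. */
typedef struct {
    int max_iters;        /* total allowed iterations (outer + inner) */
    int max_iters_inner;  /* allowed iterations of the inner solver */
} irs_limits_t;

enum { LIMITS_OK = 0, LIMITS_INVALID = 1 };

/* Both limits default to 50, as stated in the text. */
void limits_init(irs_limits_t *p)
{
    assert(p != NULL);
    p->max_iters = 50;
    p->max_iters_inner = 50;
}

/* Rejects an inner limit that is larger than the total limit. */
int limits_set_max_iters_inner(irs_limits_t *p, int inner)
{
    assert(p != NULL);
    if (inner > p->max_iters) return LIMITS_INVALID;
    p->max_iters_inner = inner;
    return LIMITS_OK;
}

/* The total limit has priority: the inner limit is clamped to
   min(MaxIters, MaxItersInner). */
void limits_set_max_iters(irs_limits_t *p, int max_iters)
{
    assert(p != NULL);
    p->max_iters = max_iters;
    if (p->max_iters_inner > max_iters) p->max_iters_inner = max_iters;
}
```

In this model, setting the inner limit to 30 and then the total limit to 20 leaves both limits at 20, and a later attempt to set the inner limit to 25 is rejected.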

2.4.1.29. cusolverDnIRSParamsEnableFallback()

cusolverStatus_t

cusolverDnIRSParamsEnableFallback(

cusolverDnIRSParams_t params );

This function enables the fallback to the main precision in case the Iterative Refinement Solver (IRS) fails to converge. In other words, if the IRS solver fails to converge, it returns a no-convergence code (e.g., niter < 0), and can either return the non-convergent solution as is (fallback disabled) or fall back (fallback enabled) to the main precision (which is the precision of the Inputs/Outputs data) and solve the problem from scratch, returning the good solution. Fallback is enabled by default, which guarantees that the IRS solver always provides the good solution. This function is provided so that users who disabled the fallback with cusolverDnIRSParamsDisableFallback() can re-enable it.

| parameter | Memory | In/out | Meaning |

| params | host | in/out | The cusolverDnIRSParams_t Params structure |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |

2.4.1.30. cusolverDnIRSParamsDisableFallback()

cusolverStatus_t

cusolverDnIRSParamsDisableFallback(

cusolverDnIRSParams_t params );

This function disables the fallback to the main precision in case the Iterative Refinement Solver (IRS) fails to converge. In other words, if the IRS solver fails to converge, it returns a no-convergence code (e.g., niter < 0), and can either return the non-convergent solution as is (fallback disabled) or fall back (fallback enabled) to the main precision (which is the precision of the Inputs/Outputs data) and solve the problem from scratch, returning the good solution. With the fallback disabled, the returned solution is whatever the refinement solver was able to reach before it returned, and there is no guarantee that it is the good one. However, users who want to keep the lower-precision solution when the IRS does not converge within a certain number of iterations need to disable the fallback. The user can re-enable it by calling cusolverDnIRSParamsEnableFallback().

| parameter | Memory | In/out | Meaning |

| params | host | in/out | The cusolverDnIRSParams_t Params structure |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |
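The outcome of an IRS solve, as described in the two fallback sections above, depends on the sign of the returned iteration count and on whether the fallback is enabled. The following plain-C sketch restates that decision; the enum and the function name are hypothetical and only summarize the text.

```c
#include <stdbool.h>

/* Hypothetical labels for the three outcomes described in the text. */
typedef enum {
    SOLUTION_REFINED,            /* refinement converged */
    SOLUTION_FROM_FALLBACK,      /* re-solved from scratch in main precision */
    SOLUTION_NOT_CONVERGED       /* non-convergent solution returned as is */
} irs_outcome_t;

/* niters < 0 signals that the refinement did not converge. */
irs_outcome_t irs_classify_outcome(int niters, bool fallback_enabled)
{
    if (niters >= 0) return SOLUTION_REFINED;
    return fallback_enabled ? SOLUTION_FROM_FALLBACK : SOLUTION_NOT_CONVERGED;
}
```

A caller that disables the fallback would typically check for the last case before using the returned solution.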

2.4.1.31. cusolverDnIRSParamsGetMaxIters()

cusolverStatus_t

cusolverDnIRSParamsGetMaxIters(

cusolverDnIRSParams_t params,

cusolver_int_t *maxiters );

This function returns the current setting in the params structure for the maximal allowed number of iterations (i.e., either the default MaxIters or the value set by the user with cusolverDnIRSParamsSetMaxIters()). Note that this function returns the current setting in the params configuration and should not be confused with cusolverDnIRSInfosGetMaxIters(), which returns the maximal allowed number of iterations for a particular call to an IRS solver. To be clearer: the params structure can be used for many calls to an IRS solver, and the user can change the allowed MaxIters between calls, whereas the Infos structure used by cusolverDnIRSInfosGetMaxIters() contains information about one particular call and cannot be reused for a different call. Thus, cusolverDnIRSInfosGetMaxIters() returns the MaxIters allowed for that call.

| parameter | Memory | In/out | Meaning |

| params | host | input | The cusolverDnIRSParams_t Params structure |

| maxiters | host | output | The maximal number of iterations that is currently set |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_PARAMS_NOT_INITIALIZED | The Params structure was not created. |

2.4.1.32. cusolverDnIRSInfosCreate()

cusolverStatus_t

cusolverDnIRSInfosCreate(

cusolverDnIRSInfos_t* infos )

This function creates and initializes the Infos structure that will hold the refinement information of an Iterative Refinement Solver (IRS) call. Such information includes the total number of iterations that was needed to converge (Niters), the outer number of iterations (meaningful when a two-level preconditioner such as CUSOLVER_IRS_REFINE_CLASSICAL_GMRES is used), the maximal number of iterations that was allowed for that call, and a pointer to the matrix of the convergence history residual norms. The Infos structure needs to be created before a call to an IRS solver. The Infos structure is valid for only one call to an IRS solver, since it holds information about that solve; each solve therefore requires its own Infos structure.

| parameter | Memory | In/out | Meaning |

| infos | host | output | Pointer to the cusolverDnIRSInfos_t Infos structure |

| CUSOLVER_STATUS_SUCCESS | The structure was initialized successfully. |

| CUSOLVER_STATUS_ALLOC_FAILED | The resources could not be allocated. |

2.4.1.33. cusolverDnIRSInfosDestroy()

cusolverStatus_t

cusolverDnIRSInfosDestroy(

cusolverDnIRSInfos_t infos );

This function destroys and releases any memory required by the Infos structure. This function destroys all the information (e.g., Niters performed, OuterNiters performed, residual history, etc.) about a solver call; the user should therefore call it only after retrieving all the information they need.

| parameter | Memory | In/out | Meaning |

| infos | host | in/out | The cusolverDnIRSInfos_t Infos structure |

| CUSOLVER_STATUS_SUCCESS | the resources are released successfully. |

| CUSOLVER_STATUS_IRS_INFOS_NOT_INITIALIZED | The Infos structure was not created. |

2.4.1.34. cusolverDnIRSInfosGetMaxIters()

cusolverStatus_t

cusolverDnIRSInfosGetMaxIters(

cusolverDnIRSInfos_t infos,

cusolver_int_t *maxiters );

This function returns the maximal allowed number of iterations that was set for the corresponding call to the IRS solver. Note that this function returns the setting that was in effect when that call happened and should not be confused with cusolverDnIRSParamsGetMaxIters(), which returns the current setting in the params configuration structure. To be clearer: the params structure can be used for many calls to an IRS solver, and the user can change the allowed MaxIters between calls, whereas the Infos structure used by cusolverDnIRSInfosGetMaxIters() contains information about one particular call and cannot be reused for a different call. Thus, cusolverDnIRSInfosGetMaxIters() returns the MaxIters allowed for that call.

| parameter | Memory | In/out | Meaning |

| infos | host | input | The cusolverDnIRSInfos_t Infos structure |

| maxiters | host | output | The maximal number of iterations that was set for the corresponding call |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_INFOS_NOT_INITIALIZED | The Infos structure was not created. |

2.4.1.35. cusolverDnIRSInfosGetNiters()

cusolverStatus_t cusolverDnIRSInfosGetNiters(

cusolverDnIRSInfos_t infos,

cusolver_int_t *niters );

This function returns the total number of iterations performed by the IRS solver. A negative value means that the IRS solver did not converge; in that case, if the user did not disable the fallback to full precision, the fallback to a full-precision solution happened and the solution is good. Please refer to the description of negative niters values in the corresponding IRS linear solver functions, such as cusolverDnIRSXgesv() or cusolverDnIRSXgels().

| parameter | Memory | In/out | Meaning |

| infos | host | input | The cusolverDnIRSInfos_t Infos structure |

| niters | host | output | The total number of iterations performed by the IRS solver |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_INFOS_NOT_INITIALIZED | The Infos structure was not created. |

2.4.1.36. cusolverDnIRSInfosGetOuterNiters()

cusolverStatus_t

cusolverDnIRSInfosGetOuterNiters(

cusolverDnIRSInfos_t infos,

cusolver_int_t *outer_niters );

This function returns the number of iterations performed by the outer refinement loop of the IRS solver. When the refinement solver is a one-level solver such as CUSOLVER_IRS_REFINE_CLASSICAL or CUSOLVER_IRS_REFINE_GMRES, it is the same as Niters. When the refinement solver is a two-level solver such as CUSOLVER_IRS_REFINE_CLASSICAL_GMRES or CUSOLVER_IRS_REFINE_GMRES_GMRES, it is the number of iterations of the outer loop. See the description of the cusolverIRSRefinement_t values in the cusolverDnIRSParamsSetRefinementSolver() section for more details.

| parameter | Memory | In/out | Meaning |

| infos | host | input | The cusolverDnIRSInfos_t Infos structure |

| outer_niters | host | output | The number of iterations of the outer refinement loop of the IRS solver |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_INFOS_NOT_INITIALIZED | The Infos structure was not created. |

2.4.1.37. cusolverDnIRSInfosRequestResidual()

cusolverStatus_t cusolverDnIRSInfosRequestResidual(

cusolverDnIRSInfos_t infos );

Once called, this function tells the IRS solver to store the convergence history (residual norms) of the refinement phase in a matrix, which can be accessed via the pointer returned by the cusolverDnIRSInfosGetResidualHistory() function.

| parameter | Memory | In/out | Meaning |

| infos | host | in | The cusolverDnIRSInfos_t Infos structure |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_INFOS_NOT_INITIALIZED | The Infos structure was not created. |

2.4.1.38. cusolverDnIRSInfosGetResidualHistory()

cusolverStatus_t

cusolverDnIRSInfosGetResidualHistory(

cusolverDnIRSInfos_t infos,

void **residual_history );

If the user called cusolverDnIRSInfosRequestResidual() before calling the IRS function, the IRS solver stores the convergence history (residual norms) of the refinement phase in a matrix that can be accessed via the pointer returned by this function. The datatype of the residual norms depends on the input and output datatype. If the input/output datatype is double precision, real or complex (CUSOLVER_R_FP64 or CUSOLVER_C_FP64), the residuals are real double precision (FP64, double). If the input/output datatype is single precision, real or complex (CUSOLVER_R_FP32 or CUSOLVER_C_FP32), the residuals are real single precision (FP32, float).

The residual history matrix consists of two columns (even in the multiple right-hand side case, NRHS) and MaxIters+1 rows, so it is a matrix of size (MaxIters+1, 2). Only the first OuterNiters+1 rows contain residual norms; the remaining rows (OuterNiters+2:MaxIters+1) are garbage. In the first column, row "i" gives the total number of iterations performed up to outer iteration "i"; in the second column, row "i" gives the residual norm at outer iteration "i". The first row (outer iteration "0") therefore holds the initial residual, that is, the residual before the refinement loop starts, and the following rows hold the residual obtained at each outer iteration of the refinement loop. Note that only the history of the outer loop is recorded.

If the refinement solver was CUSOLVER_IRS_REFINE_CLASSICAL or CUSOLVER_IRS_REFINE_GMRES, then OuterNiters = Niters (Niters is the total number of iterations performed) and there are Niters+1 rows of norms, corresponding to the initial residual and the Niters outer iterations.

If the refinement solver was CUSOLVER_IRS_REFINE_CLASSICAL_GMRES or CUSOLVER_IRS_REFINE_GMRES_GMRES, then OuterNiters <= Niters is the number of iterations performed by the outer refinement loop. There are thus OuterNiters+1 residual norms, where row "i" corresponds to outer iteration "i": the first column gives the total number of iterations (outer and inner) performed up to this step, and the second column gives the residual norm at this step.

For example, suppose the user specifies CUSOLVER_IRS_REFINE_CLASSICAL_GMRES as the refinement solver, and that it needs 3 outer iterations to converge, with 4, 3, and 3 inner iterations at each outer iteration respectively. This amounts to 10 total iterations. Row 0 corresponds to the initial residual before the refinement starts, so its first column contains 0. Row 1, which corresponds to outer iteration 1, contains 4 (the total number of iterations performed so far); row 2 contains 7, and row 3 contains 10.

In summary, define ldh = MaxIters+1 as the leading dimension of the residual matrix. Then residual_history[i] gives the total number of iterations performed up to outer iteration "i", and residual_history[i+ldh] gives the norm of the residual at this outer iteration.
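The indexing rule can be illustrated with a small host-side sketch. It does not call cuSOLVER: the array is hand-filled mock data standing in for the buffer returned by cusolverDnIRSInfosGetResidualHistory() after a double-precision solve, and the helper names (hist_total_iters, hist_residual_norm, hist_valid_rows, fill_mock_history) are hypothetical. The iteration counts 0, 4, 7, 10 come from the example above; the residual norm values are invented for illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical accessors for the column-major (ldh x 2) residual history,
 * assuming a double-precision solve so that entries are of type double. */
static double hist_total_iters(const double *hist, size_t ldh, size_t i)
{
    assert(i < ldh);
    return hist[i];        /* column 0: cumulative iteration count */
}

static double hist_residual_norm(const double *hist, size_t ldh, size_t i)
{
    assert(i < ldh);
    return hist[i + ldh];  /* column 1: residual norm */
}

/* Number of rows that hold valid data after outer_niters outer iterations. */
static size_t hist_valid_rows(size_t outer_niters)
{
    return outer_niters + 1;
}

/* Fill mock data for the example above: 3 outer iterations with 4, 3 and 3
 * inner iterations. The norm values are invented for illustration. */
static void fill_mock_history(double *hist, size_t ldh)
{
    const double inner[3] = {4.0, 3.0, 3.0};
    const double norms[4] = {1e-1, 1e-5, 1e-9, 1e-13};
    double total = 0.0;

    assert(ldh >= 4);
    hist[0] = total;          /* row 0: before refinement starts */
    hist[ldh] = norms[0];
    for (size_t k = 0; k < 3; ++k) {
        total += inner[k];
        hist[k + 1] = total;
        hist[k + 1 + ldh] = norms[k + 1];
    }
}
```

With ldh = 6 (that is, MaxIters = 5), only rows 0 through 3 are filled; rows 4 and 5 are left uninitialized, mirroring the garbage rows described above, and should not be read.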

| parameter | Memory | In/out | Meaning |

| infos | host | in | The cusolverDnIRSInfos_t Infos structure |

| residual_history | host | output | Returns a void pointer to the matrix of the convergence history residual norms. See the description above for the relation between the residual norm datatype and the input/output datatype. |

| CUSOLVER_STATUS_SUCCESS | The operation completed successfully. |

| CUSOLVER_STATUS_IRS_INFOS_NOT_INITIALIZED | The Infos structure was not created. |

| CUSOLVER_STATUS_INVALID_VALUE | This function was called without calling cusolverDnIRSInfosRequestResidual() in advance. |

2.4.1.39. cusolverDnCreateParams()

cusolverStatus_t

cusolverDnCreateParams(

cusolverDnParams_t *params);

This function creates and initializes the structure of 64-bit API to default values.

| parameter | Memory | In/out | Meaning |

| params | host | output | the pointer to the structure of 64-bit API. |

| CUSOLVER_STATUS_SUCCESS | the structure was initialized successfully. |

| CUSOLVER_STATUS_ALLOC_FAILED | the resources could not be allocated. |

2.4.1.40. cusolverDnDestroyParams()

cusolverStatus_t

cusolverDnDestroyParams(

cusolverDnParams_t params);

This function destroys and releases any memory required by the structure.

| parameter | Memory | In/out | Meaning |

| params | host | input | the structure of 64-bit API. |

| CUSOLVER_STATUS_SUCCESS | the resources are released successfully. |

2.4.1.41. cusolverDnSetAdvOptions()

cusolverStatus_t

cusolverDnSetAdvOptions (

cusolverDnParams_t params,

cusolverDnFunction_t function,

cusolverAlgMode_t algo );

This function configures the algorithm algo to be used by function, a 64-bit API routine.

| parameter | Memory | In/out | Meaning |

| params | host | in/out | the pointer to the structure of 64-bit API. |

| function | host | input | which routine to be configured. |

| algo | host | input | which algorithm to be configured. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

| CUSOLVER_STATUS_INVALID_VALUE | wrong combination of function and algo |

2.4.2. Dense Linear Solver Reference (legacy)

This chapter describes the linear solver API of cuSolverDN, including Cholesky factorization, LU with partial pivoting, QR factorization, and Bunch-Kaufman (LDLT) factorization.

2.4.2.1. cusolverDn<t>potrf()

cusolverStatus_t

cusolverDnSpotrf_bufferSize(cusolverDnHandle_t handle,

cublasFillMode_t uplo,

int n,

float *A,

int lda,

int *Lwork );

cusolverStatus_t

cusolverDnDpotrf_bufferSize(cusolverDnHandle_t handle,

cublasFillMode_t uplo,

int n,

double *A,

int lda,

int *Lwork );

cusolverStatus_t

cusolverDnCpotrf_bufferSize(cusolverDnHandle_t handle,

cublasFillMode_t uplo,

int n,

cuComplex *A,

int lda,

int *Lwork );

cusolverStatus_t

cusolverDnZpotrf_bufferSize(cusolverDnHandle_t handle,

cublasFillMode_t uplo,

int n,

cuDoubleComplex *A,

int lda,

int *Lwork);

cusolverStatus_t

cusolverDnSpotrf(cusolverDnHandle_t handle,

cublasFillMode_t uplo,

int n,

float *A,

int lda,

float *Workspace,

int Lwork,

int *devInfo );

cusolverStatus_t

cusolverDnDpotrf(cusolverDnHandle_t handle,

cublasFillMode_t uplo,

int n,

double *A,

int lda,

double *Workspace,

int Lwork,

int *devInfo );

cusolverStatus_t

cusolverDnCpotrf(cusolverDnHandle_t handle,

cublasFillMode_t uplo,

int n,

cuComplex *A,

int lda,

cuComplex *Workspace,

int Lwork,

int *devInfo );

cusolverStatus_t

cusolverDnZpotrf(cusolverDnHandle_t handle,

cublasFillMode_t uplo,

int n,

cuDoubleComplex *A,

int lda,

cuDoubleComplex *Workspace,

int Lwork,

int *devInfo );

This function computes the Cholesky factorization of a Hermitian positive-definite matrix.

A is an n×n Hermitian matrix; only its lower or upper part is meaningful. The input parameter uplo indicates which part of the matrix is used. The function leaves the other part untouched.

If the input parameter uplo is CUBLAS_FILL_MODE_LOWER, only the lower triangular part of A is processed and replaced by the lower triangular Cholesky factor L.

If the input parameter uplo is CUBLAS_FILL_MODE_UPPER, only the upper triangular part of A is processed and replaced by the upper triangular Cholesky factor U.

The user has to provide the working space pointed to by the input parameter Workspace. The input parameter Lwork is the size of the working space, as returned by potrf_bufferSize().

If the Cholesky factorization fails, i.e. some leading minor of A is not positive definite, or equivalently some diagonal element of L or U is not a real number, the output parameter devInfo indicates the smallest leading minor of A that is not positive definite.

If output parameter devInfo = -i (less than zero), the i-th parameter is wrong (not counting handle).

| parameter | Memory | In/out | Meaning |

| handle | host | input | handle to the cuSolverDN library context. |

| uplo | host | input | indicates whether the lower or upper part of matrix A is stored; the other part is not referenced. |

| n | host | input | number of rows and columns of matrix A. |

| A | device | in/out | <type> array of dimension lda * n, with lda not less than max(1,n). |

| lda | host | input | leading dimension of two-dimensional array used to store matrix A. |

| Workspace | device | in/out | working space, <type> array of size Lwork. |

| Lwork | host | input | size of Workspace, returned by potrf_bufferSize. |

| devInfo | device | output | if devInfo = 0, the Cholesky factorization is successful. if devInfo = -i, the i-th parameter is wrong (not counting handle). if devInfo = i, the leading minor of order i is not positive definite. |

| CUSOLVER_STATUS_SUCCESS | the operation completed successfully. |

| CUSOLVER_STATUS_NOT_INITIALIZED | the library was not initialized. |

| CUSOLVER_STATUS_INVALID_VALUE | invalid parameters were passed (n<0 or lda<max(1,n)). |

| CUSOLVER_STATUS_ARCH_MISMATCH | the device only supports compute capability 2.0 and above. |

| CUSOLVER_STATUS_INTERNAL_ERROR | an internal operation failed. |

2.4.2.2. cusolverDnPotrf() [DEPRECATED]

[[DEPRECATED]] use cusolverDnXpotrf() instead. The routine will be removed in the next major release.

cusolverStatus_t

cusolverDnPotrf_bufferSize(

cusolverDnHandle_t handle,

cusolverDnParams_t params,

cublasFillMode_t uplo,

int64_t n,

cudaDataType dataTypeA,

const void *A,

int64_t lda,

cudaDataType computeType,

size_t *workspaceInBytes );

cusolverStatus_t

cusolverDnPotrf(

cusolverDnHandle_t handle,

cusolverDnParams_t params,

cublasFillMode_t uplo,

int64_t n,

cudaDataType dataTypeA,

void *A,

int64_t lda,

cudaDataType computeType,

void *pBuffer,

size_t workspaceInBytes,

int *info );

This function computes the Cholesky factorization of a Hermitian positive-definite matrix using the generic API interface.

A is an n×n Hermitian matrix; only its lower or upper part is meaningful. The input parameter uplo indicates which part of the matrix is used. The function leaves the other part untouched.

If the input parameter uplo is CUBLAS_FILL_MODE_LOWER, only the lower triangular part of A is processed and replaced by the lower triangular Cholesky factor L.

If the input parameter uplo is CUBLAS_FILL_MODE_UPPER, only the upper triangular part of A is processed and replaced by the upper triangular Cholesky factor U.

The user has to provide the working space pointed to by the input parameter pBuffer. The input parameter workspaceInBytes is the size in bytes of the working space, as returned by cusolverDnPotrf_bufferSize().

If the Cholesky factorization fails, i.e. some leading minor of A is not positive definite, or equivalently some diagonal element of L or U is not a real number, the output parameter info indicates the smallest leading minor of A that is not positive definite.

If output parameter info = -i (less than zero), the i-th parameter is wrong (not counting handle).

Currently, cusolverDnPotrf supports only the default algorithm.

| CUSOLVER_ALG_0 or NULL | Default algorithm. |