Parallel Thread Execution ISA Version 8.5

The programming guide to using PTX (Parallel Thread Execution) and ISA (Instruction Set Architecture).

1. Introduction

This document describes PTX, a low-level parallel thread execution virtual machine and instruction set architecture (ISA). PTX exposes the GPU as a data-parallel computing device.

1.1. Scalable Data-Parallel Computing using GPUs

Driven by the insatiable market demand for real-time, high-definition 3D graphics, the programmable GPU has evolved into a highly parallel, multithreaded, many-core processor with tremendous computational horsepower and very high memory bandwidth. The GPU is especially well-suited to address problems that can be expressed as data-parallel computations - the same program is executed on many data elements in parallel - with high arithmetic intensity - the ratio of arithmetic operations to memory operations. Because the same program is executed for each data element, there is a lower requirement for sophisticated flow control; and because it is executed on many data elements and has high arithmetic intensity, the memory access latency can be hidden with calculations instead of big data caches.

Data-parallel processing maps data elements to parallel processing threads. Many applications that process large data sets can use a data-parallel programming model to speed up the computations. In 3D rendering large sets of pixels and vertices are mapped to parallel threads. Similarly, image and media processing applications such as post-processing of rendered images, video encoding and decoding, image scaling, stereo vision, and pattern recognition can map image blocks and pixels to parallel processing threads. In fact, many algorithms outside the field of image rendering and processing are accelerated by data-parallel processing, from general signal processing or physics simulation to computational finance or computational biology.

PTX defines a virtual machine and ISA for general purpose parallel thread execution. PTX programs are translated at install time to the target hardware instruction set. The PTX-to-GPU translator and driver enable NVIDIA GPUs to be used as programmable parallel computers.

1.2. Goals of PTX

PTX provides a stable programming model and instruction set for general purpose parallel programming. It is designed to be efficient on NVIDIA GPUs supporting the computation features defined by the NVIDIA Tesla architecture. High level language compilers for languages such as CUDA and C/C++ generate PTX instructions, which are optimized for and translated to native target-architecture instructions.

The goals for PTX include the following:

Provide a stable ISA that spans multiple GPU generations.

Achieve performance in compiled applications comparable to native GPU performance.

Provide a machine-independent ISA for C/C++ and other compilers to target.

Provide a code distribution ISA for application and middleware developers.

Provide a common source-level ISA for optimizing code generators and translators, which map PTX to specific target machines.

Facilitate hand-coding of libraries, performance kernels, and architecture tests.

Provide a scalable programming model that spans GPU sizes from a single unit to many parallel units.

1.3. PTX ISA Version 8.5

PTX ISA version 8.5 introduces the following new features:

Adds support for

mma.sp::ordered_metadatainstruction.

1.4. Document Structure

The information in this document is organized into the following Chapters:

Programming Model outlines the programming model.

PTX Machine Model gives an overview of the PTX virtual machine model.

Syntax describes the basic syntax of the PTX language.

State Spaces, Types, and Variables describes state spaces, types, and variable declarations.

Instruction Operands describes instruction operands.

Abstracting the ABI describes the function and call syntax, calling convention, and PTX support for abstracting the Application Binary Interface (ABI).

Instruction Set describes the instruction set.

Special Registers lists special registers.

Directives lists the assembly directives supported in PTX.

Release Notes provides release notes for PTX ISA versions 2.x and beyond.

References

-

754-2008 IEEE Standard for Floating-Point Arithmetic. ISBN 978-0-7381-5752-8, 2008.

-

The OpenCL Specification, Version: 1.1, Document Revision: 44, June 1, 2011.

-

CUDA Programming Guide.

https://docs.nvidia.com/cuda/cuda-c-programming-guide/index.html

-

CUDA Dynamic Parallelism Programming Guide.

https://docs.nvidia.com/cuda/cuda-c-programming-guide/index.html#cuda-dynamic-parallelism

-

CUDA Atomicity Requirements.

https://nvidia.github.io/cccl/libcudacxx/extended_api/memory_model.html#atomicity

-

PTX Writers Guide to Interoperability.

https://docs.nvidia.com/cuda/ptx-writers-guide-to-interoperability/index.html

2. Programming Model

2.1. A Highly Multithreaded Coprocessor

The GPU is a compute device capable of executing a very large number of threads in parallel. It operates as a coprocessor to the main CPU, or host: In other words, data-parallel, compute-intensive portions of applications running on the host are off-loaded onto the device.

More precisely, a portion of an application that is executed many times, but independently on different data, can be isolated into a kernel function that is executed on the GPU as many different threads. To that effect, such a function is compiled to the PTX instruction set and the resulting kernel is translated at install time to the target GPU instruction set.

2.2. Thread Hierarchy

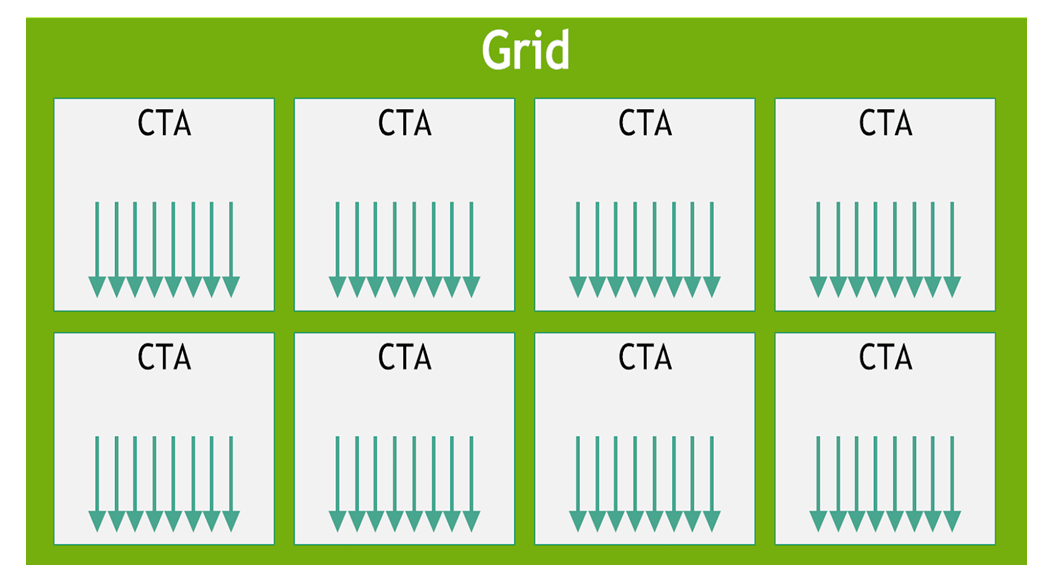

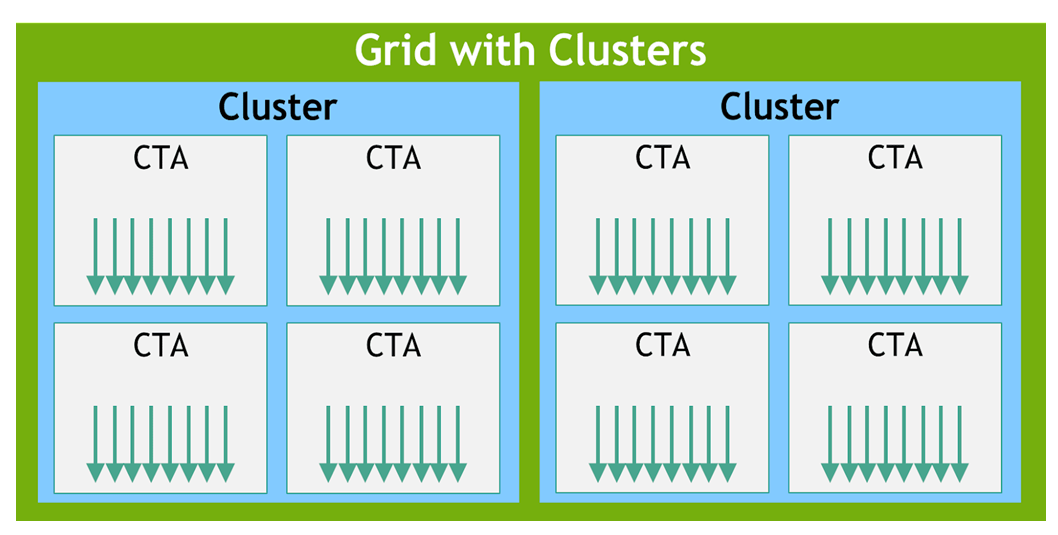

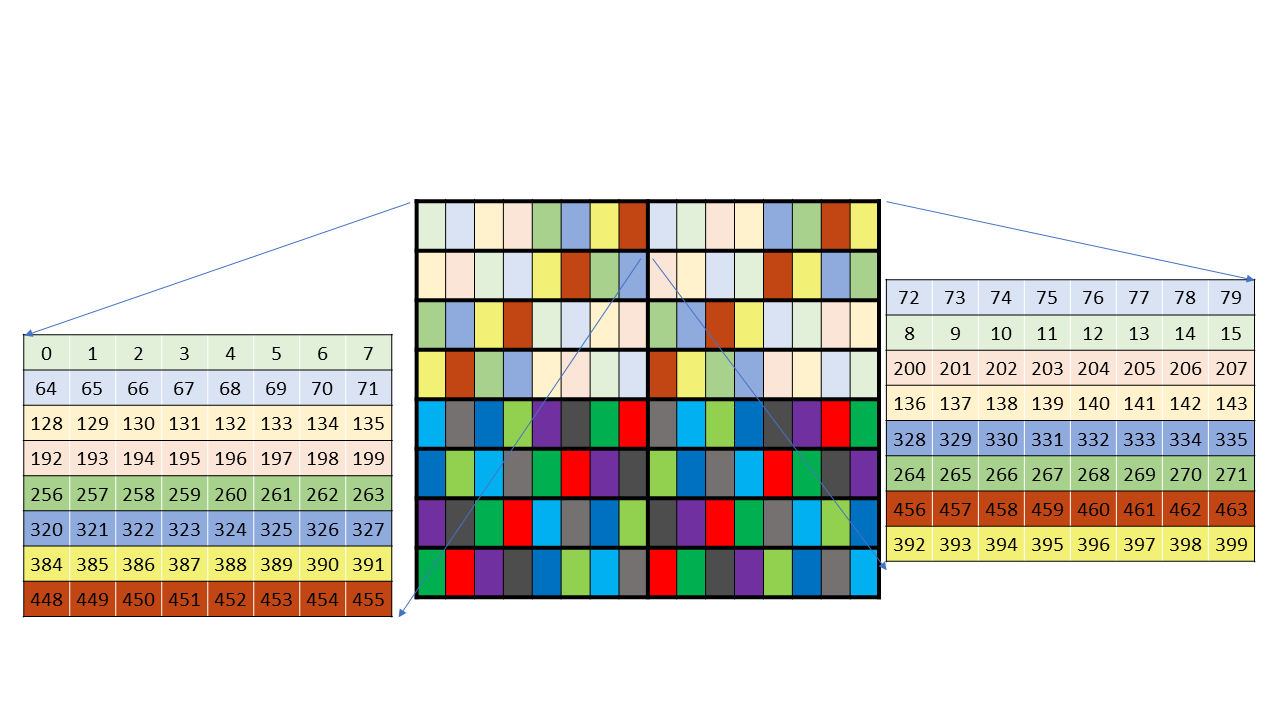

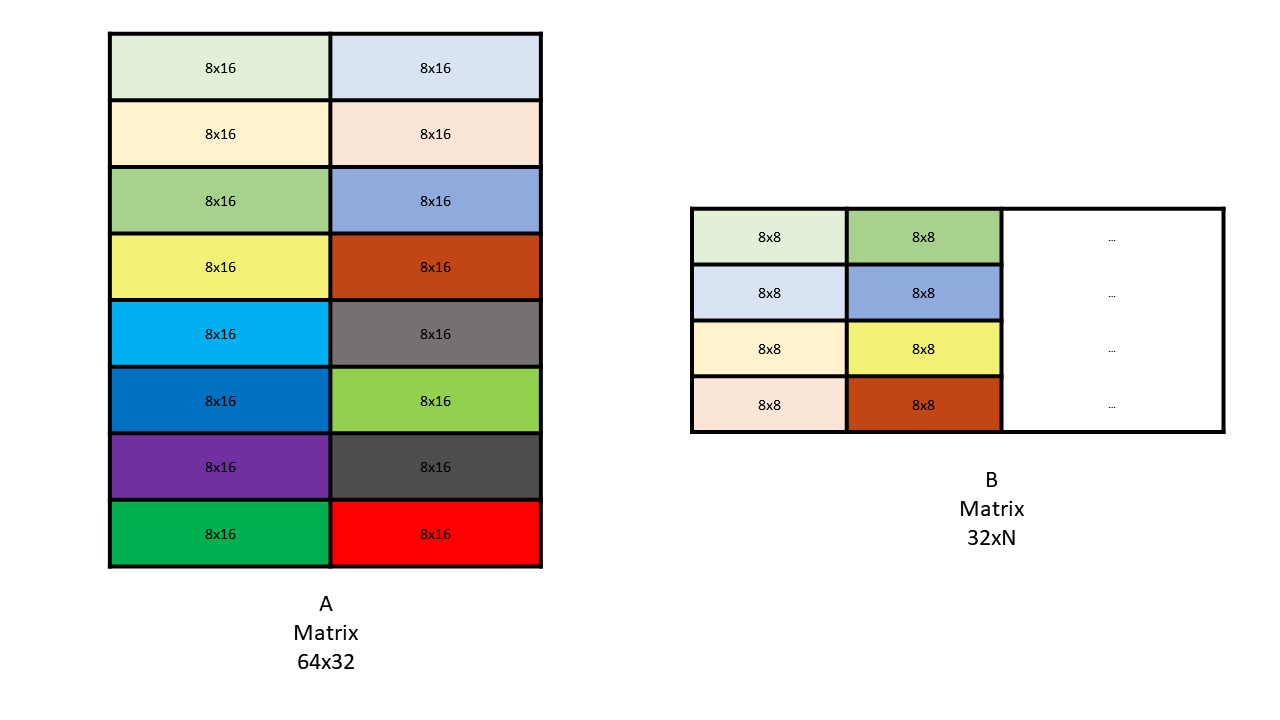

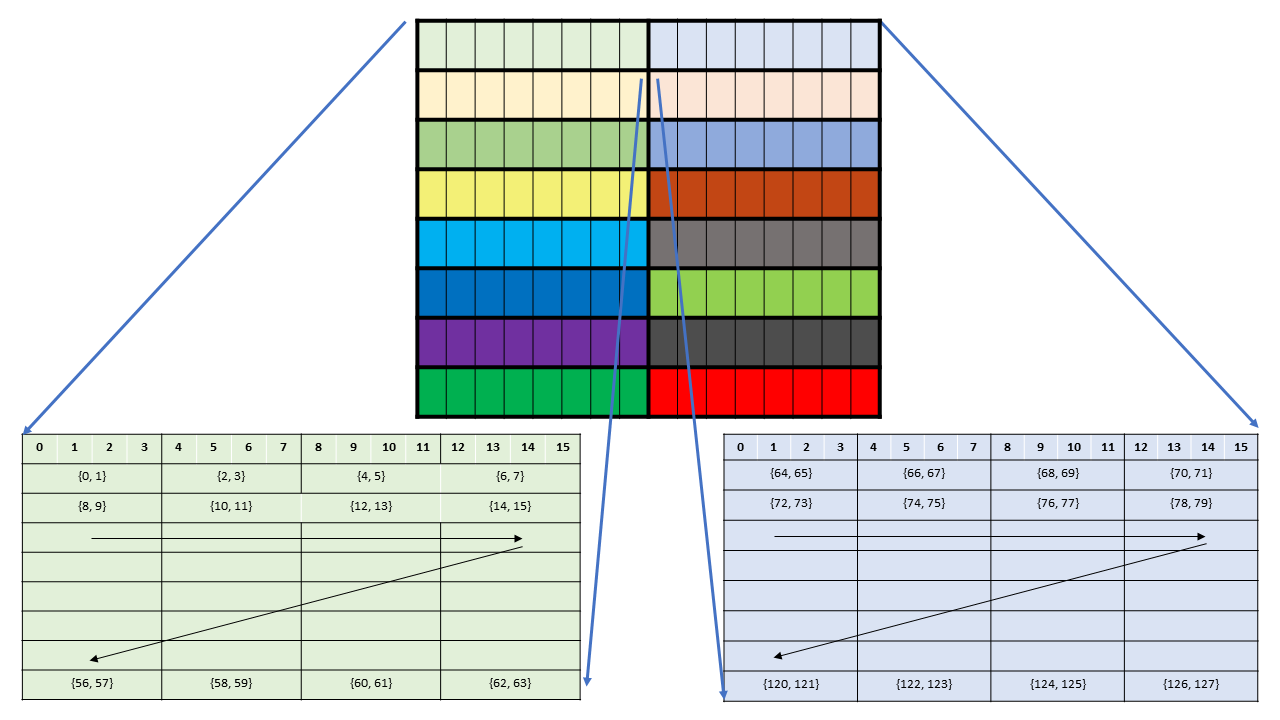

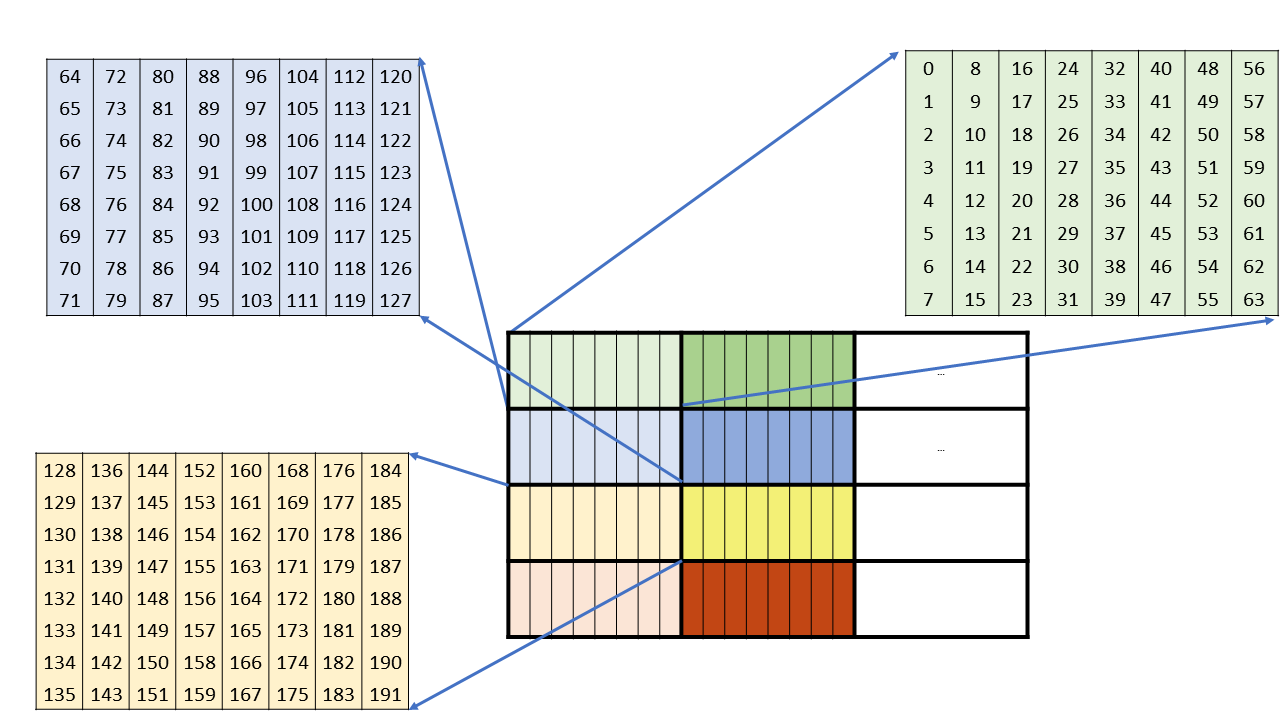

The batch of threads that executes a kernel is organized as a grid. A grid consists of either cooperative thread arrays or clusters of cooperative thread arrays as described in this section and illustrated in Figure 1 and Figure 2. Cooperative thread arrays (CTAs) implement CUDA thread blocks and clusters implement CUDA thread block clusters.

2.2.1. Cooperative Thread Arrays

The Parallel Thread Execution (PTX) programming model is explicitly parallel: a PTX program specifies the execution of a given thread of a parallel thread array. A cooperative thread array, or CTA, is an array of threads that execute a kernel concurrently or in parallel.

Threads within a CTA can communicate with each other. To coordinate the communication of the threads within the CTA, one can specify synchronization points where threads wait until all threads in the CTA have arrived.

Each thread has a unique thread identifier within the CTA. Programs use a data parallel

decomposition to partition inputs, work, and results across the threads of the CTA. Each CTA thread

uses its thread identifier to determine its assigned role, assign specific input and output

positions, compute addresses, and select work to perform. The thread identifier is a three-element

vector tid, (with elements tid.x, tid.y, and tid.z) that specifies the thread’s

position within a 1D, 2D, or 3D CTA. Each thread identifier component ranges from zero up to the

number of thread ids in that CTA dimension.

Each CTA has a 1D, 2D, or 3D shape specified by a three-element vector ntid (with elements

ntid.x, ntid.y, and ntid.z). The vector ntid specifies the number of threads in each

CTA dimension.

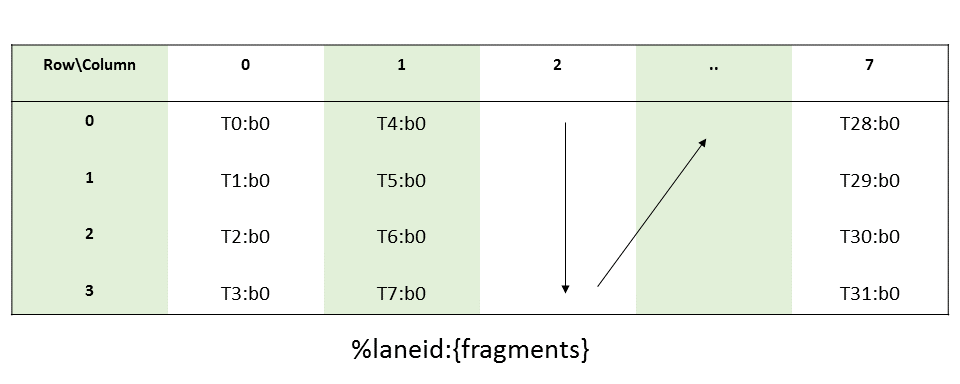

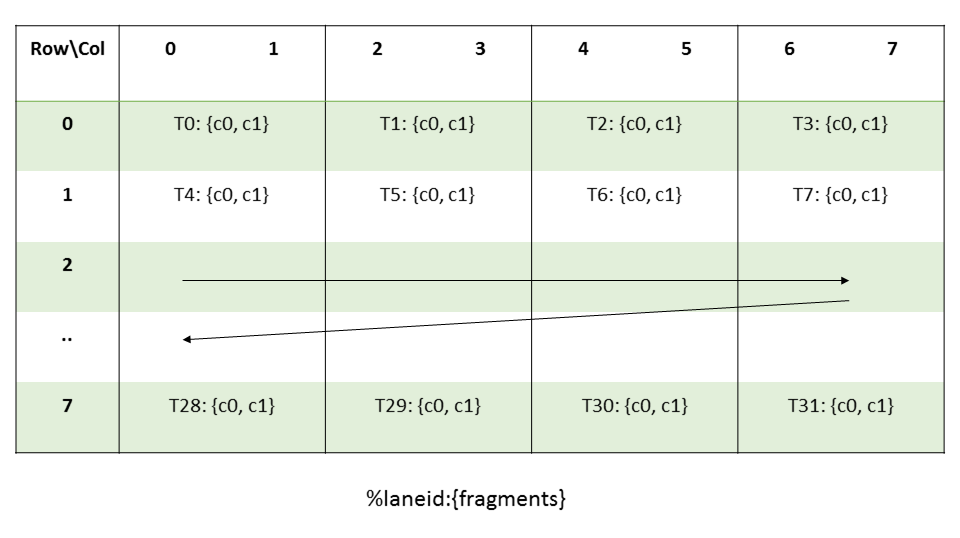

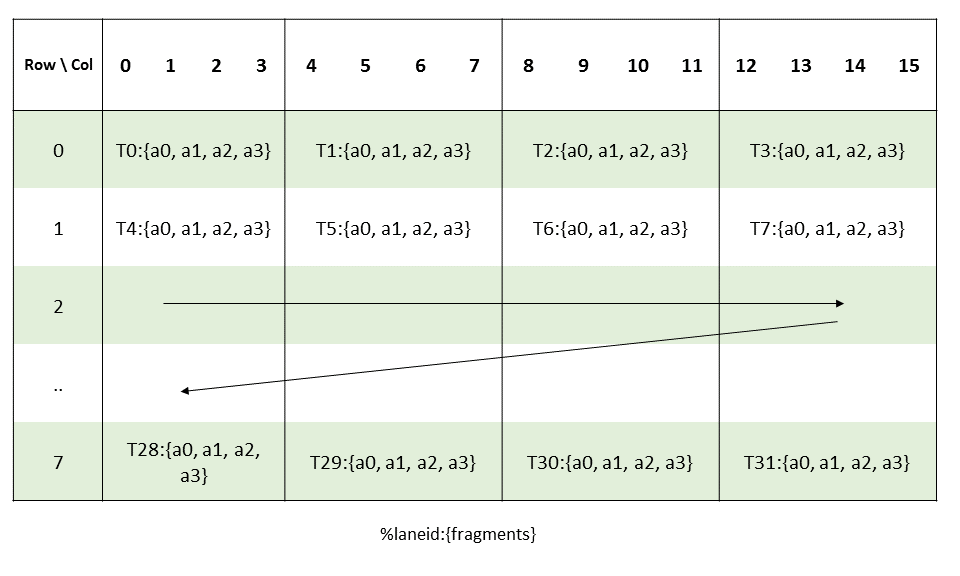

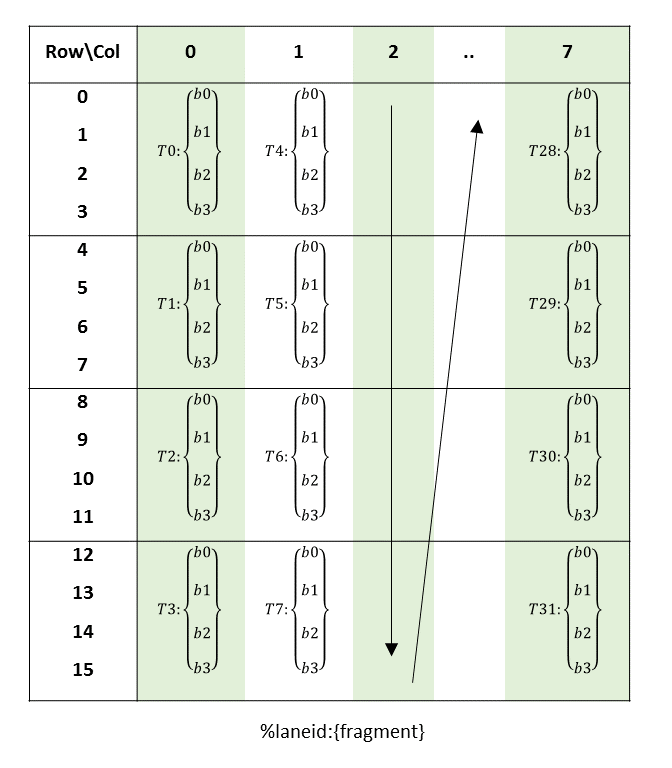

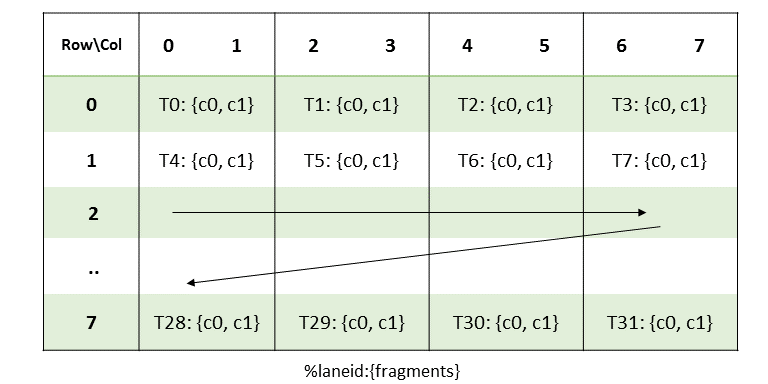

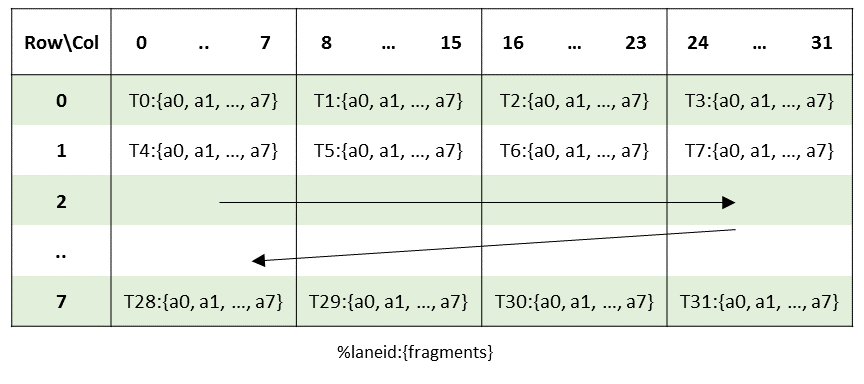

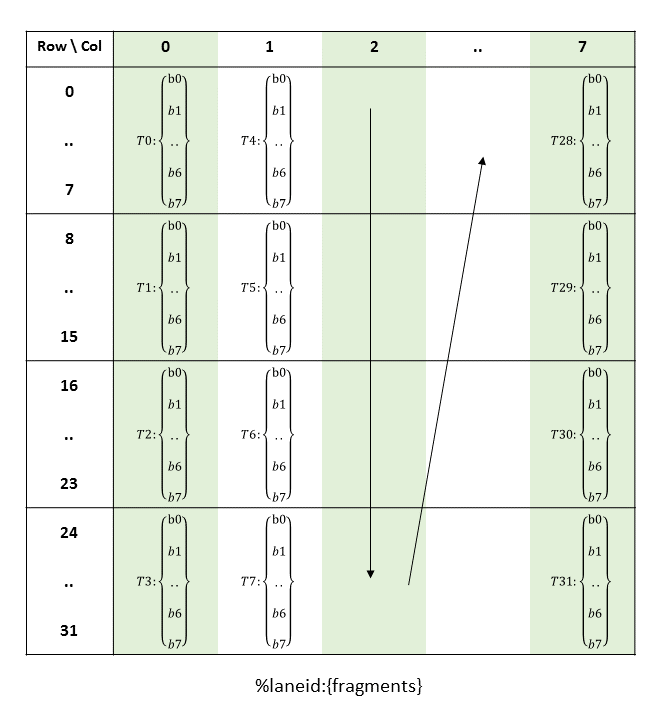

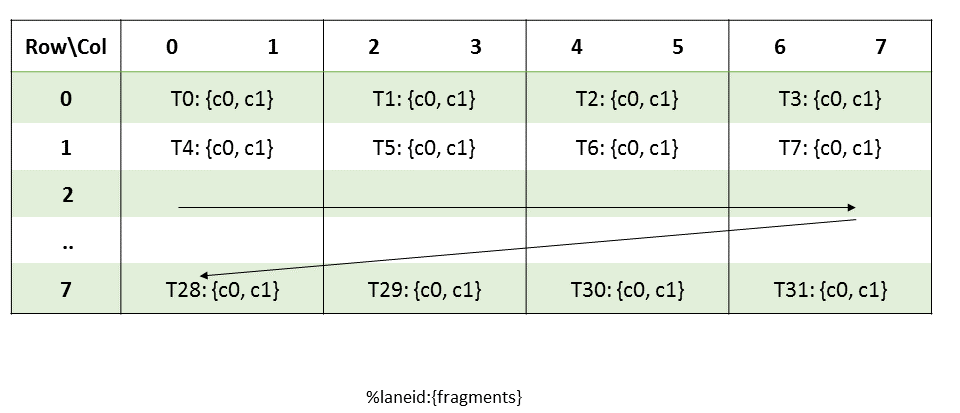

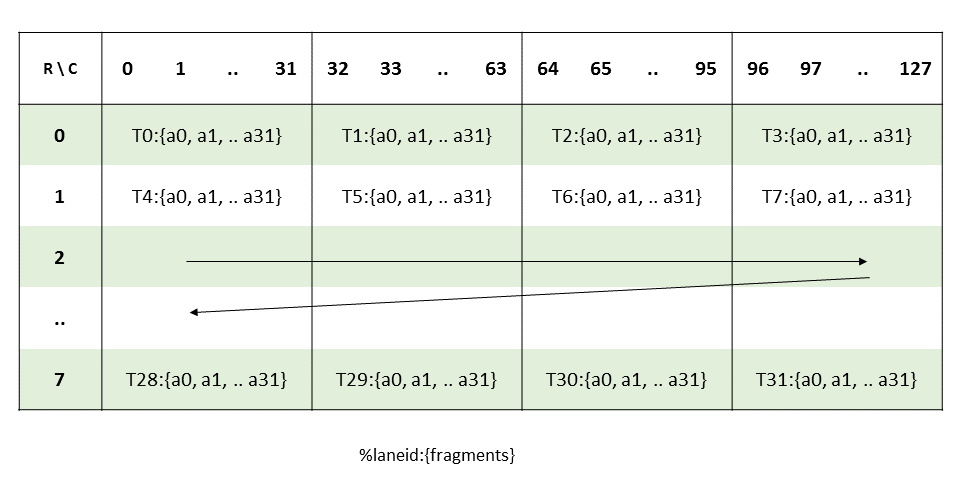

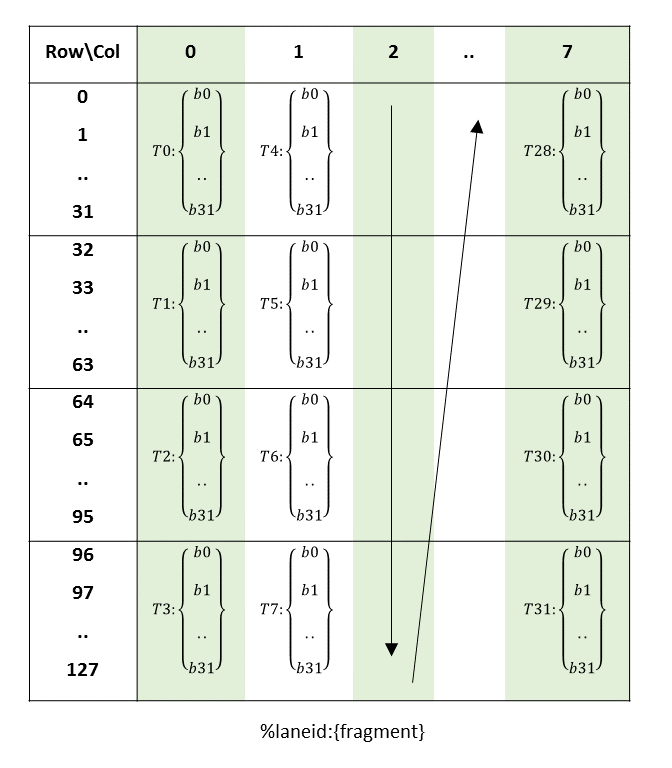

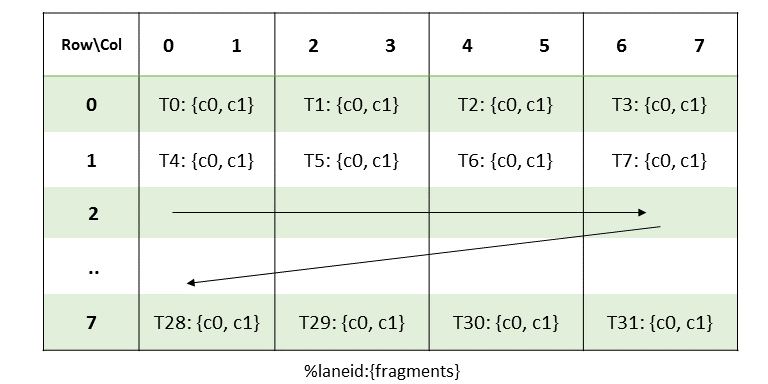

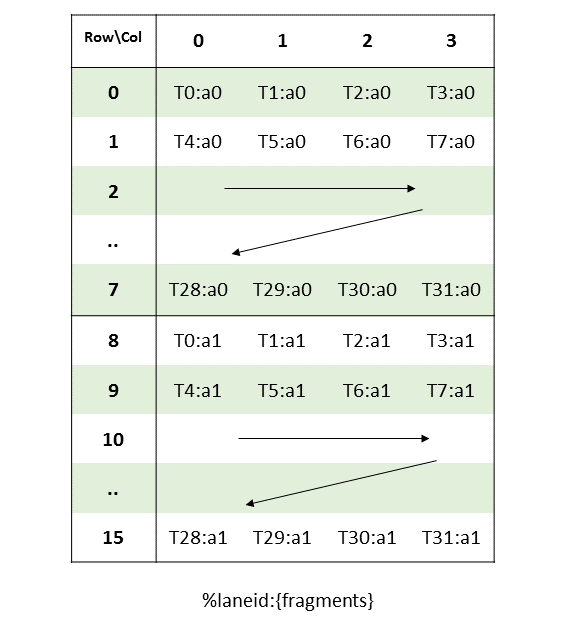

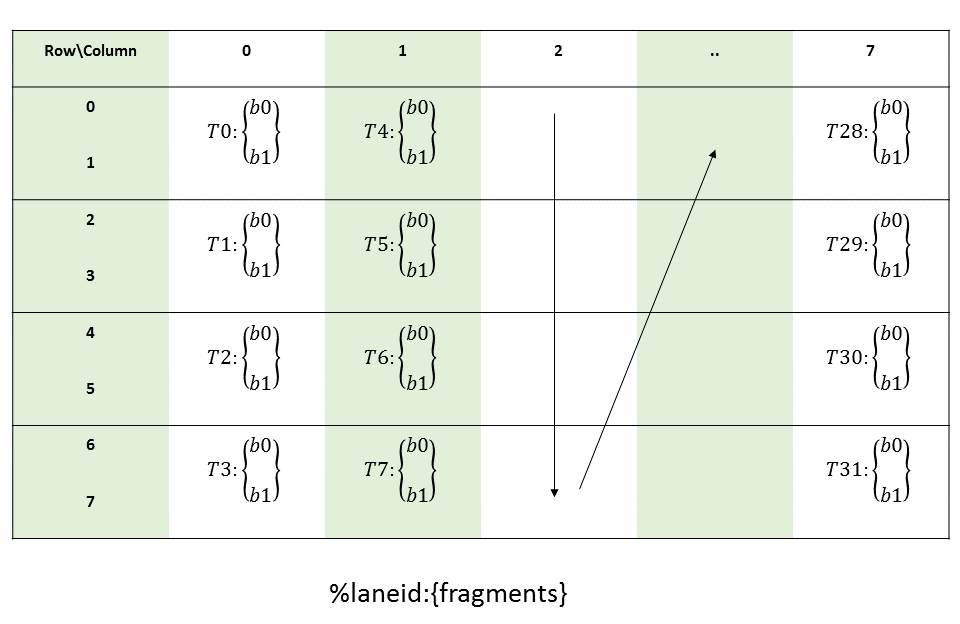

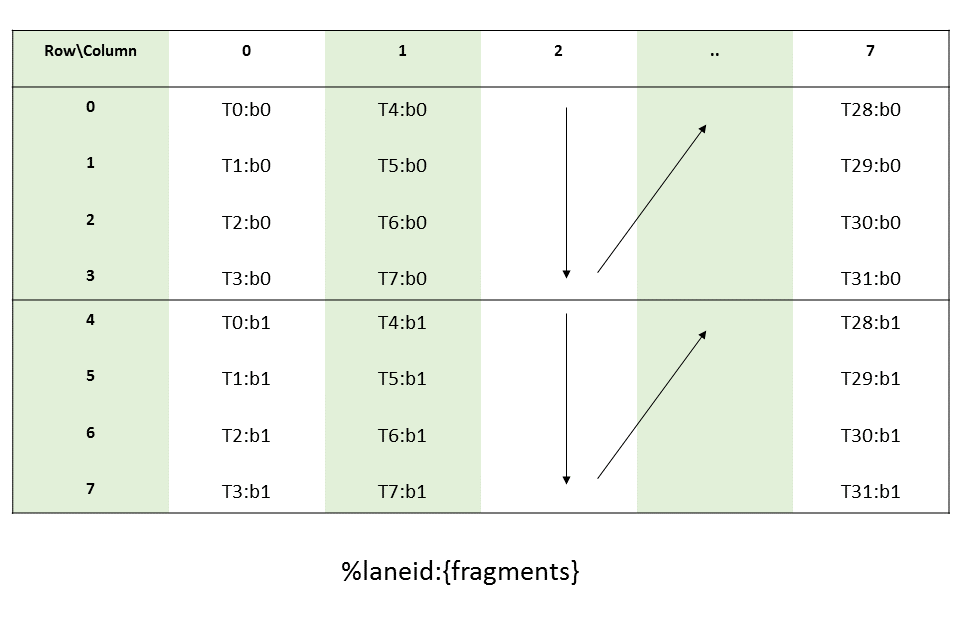

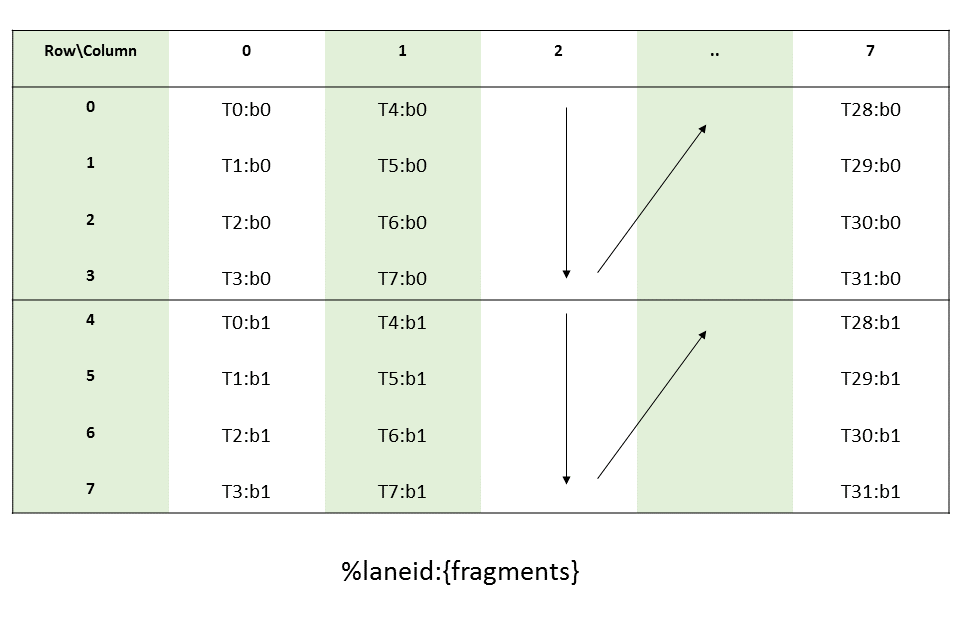

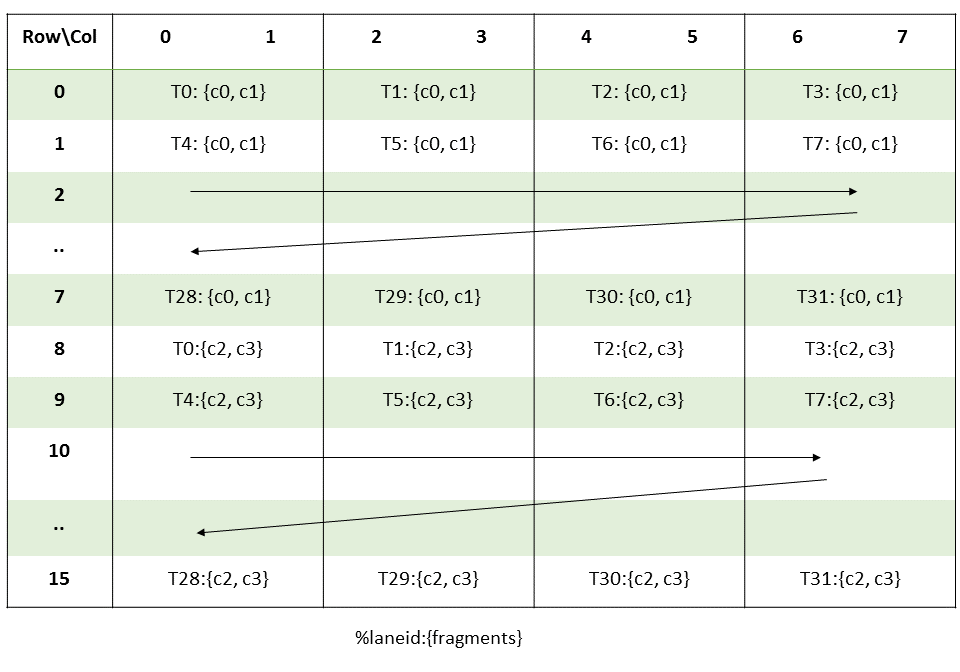

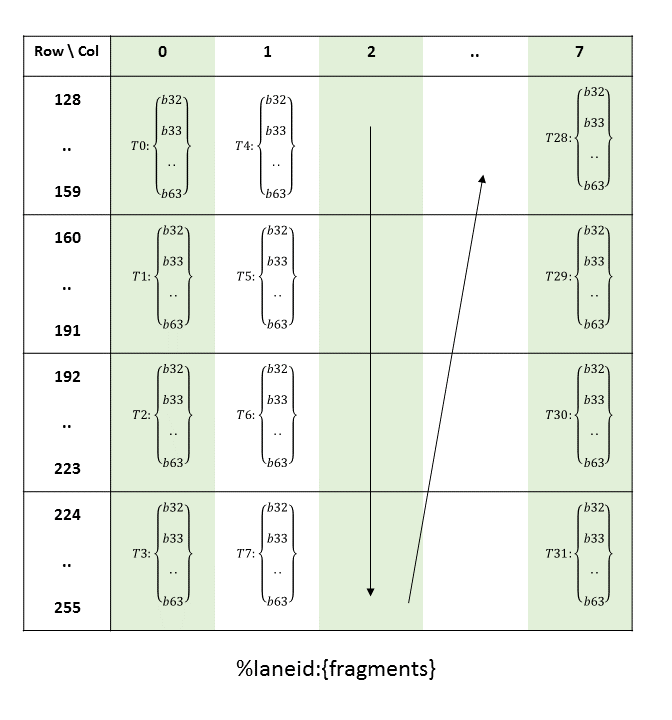

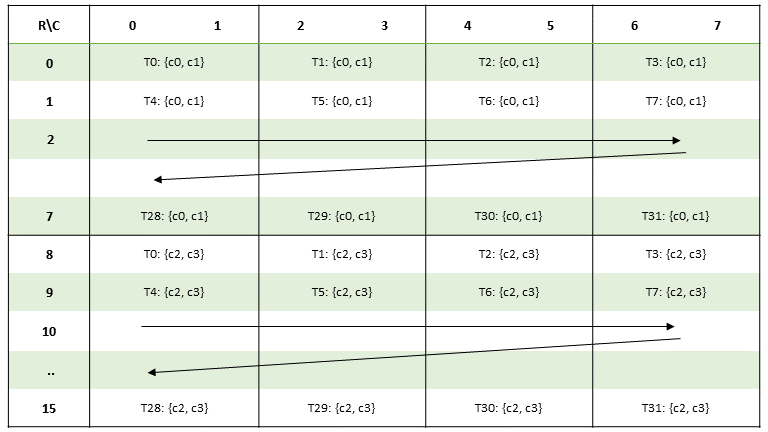

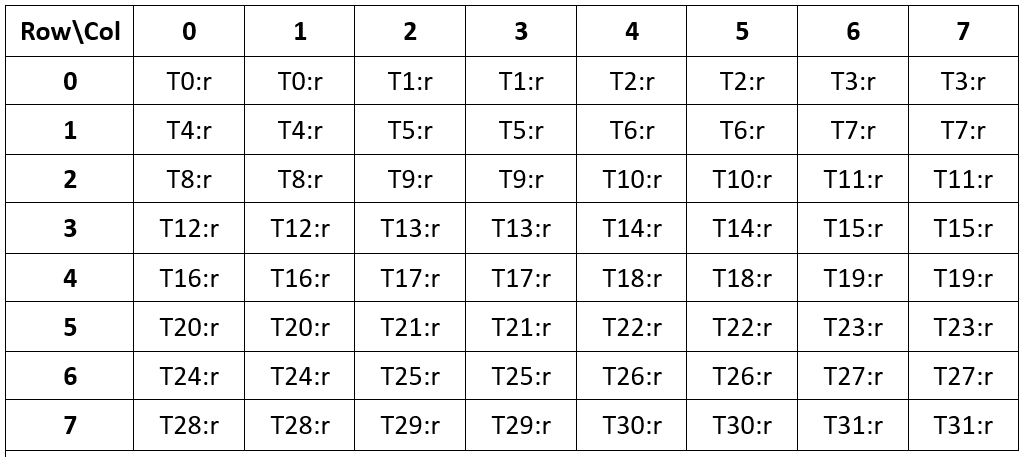

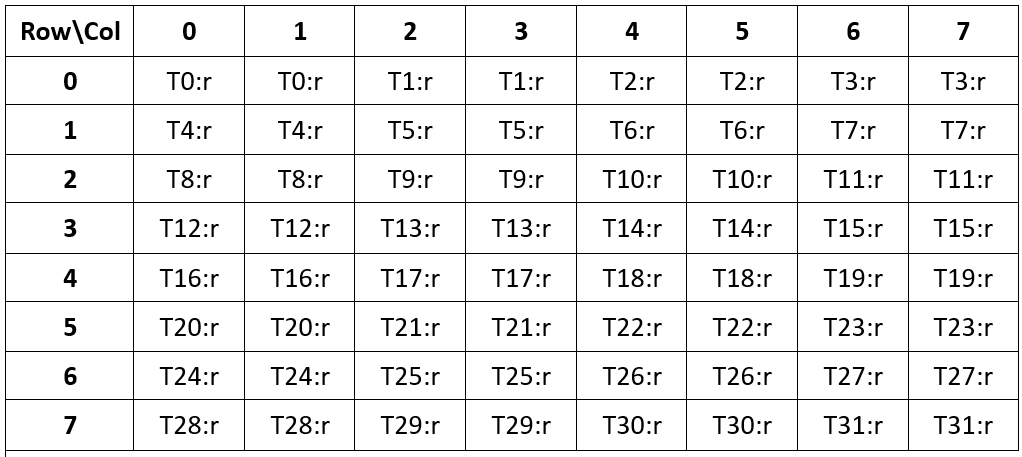

Threads within a CTA execute in SIMT (single-instruction, multiple-thread) fashion in groups called

warps. A warp is a maximal subset of threads from a single CTA, such that the threads execute

the same instructions at the same time. Threads within a warp are sequentially numbered. The warp

size is a machine-dependent constant. Typically, a warp has 32 threads. Some applications may be

able to maximize performance with knowledge of the warp size, so PTX includes a run-time immediate

constant, WARP_SZ, which may be used in any instruction where an immediate operand is allowed.

2.2.2. Cluster of Cooperative Thread Arrays

Cluster is a group of CTAs that run concurrently or in parallel and can synchronize and communicate with each other via shared memory. The executing CTA has to make sure that the shared memory of the peer CTA exists before communicating with it via shared memory and the peer CTA hasn’t exited before completing the shared memory operation.

Threads within the different CTAs in a cluster can synchronize and communicate with each other via

shared memory. Cluster-wide barriers can be used to synchronize all the threads within the

cluster. Each CTA in a cluster has a unique CTA identifier within its cluster

(cluster_ctaid). Each cluster of CTAs has 1D, 2D or 3D shape specified by the parameter

cluster_nctaid. Each CTA in the cluster also has a unique CTA identifier (cluster_ctarank)

across all dimensions. The total number of CTAs across all the dimensions in the cluster is

specified by cluster_nctarank. Threads may read and use these values through predefined, read-only

special registers %cluster_ctaid, %cluster_nctaid, %cluster_ctarank,

%cluster_nctarank.

Cluster level is applicable only on target architecture sm_90 or higher. Specifying cluster

level during launch time is optional. If the user specifies the cluster dimensions at launch time

then it will be treated as explicit cluster launch, otherwise it will be treated as implicit cluster

launch with default dimension 1x1x1. PTX provides read-only special register

%is_explicit_cluster to differentiate between explicit and implicit cluster launch.

2.2.3. Grid of Clusters

There is a maximum number of threads that a CTA can contain and a maximum number of CTAs that a cluster can contain. However, clusters with CTAs that execute the same kernel can be batched together into a grid of clusters, so that the total number of threads that can be launched in a single kernel invocation is very large. This comes at the expense of reduced thread communication and synchronization, because threads in different clusters cannot communicate and synchronize with each other.

Each cluster has a unique cluster identifier (clusterid) within a grid of clusters. Each grid of

clusters has a 1D, 2D , or 3D shape specified by the parameter nclusterid. Each grid also has a

unique temporal grid identifier (gridid). Threads may read and use these values through

predefined, read-only special registers %tid, %ntid, %clusterid, %nclusterid, and

%gridid.

Each CTA has a unique identifier (ctaid) within a grid. Each grid of CTAs has 1D, 2D, or 3D shape

specified by the parameter nctaid. Thread may use and read these values through predefined,

read-only special registers %ctaid and %nctaid.

Each kernel is executed as a batch of threads organized as a grid of clusters consisting of CTAs

where cluster is optional level and is applicable only for target architectures sm_90 and

higher. Figure 1 shows a grid consisting of CTAs and

Figure 2 shows a grid consisting of clusters.

Grids may be launched with dependencies between one another - a grid may be a dependent grid and/or a prerequisite grid. To understand how grid dependencies may be defined, refer to the section on CUDA Graphs in the Cuda Programming Guide.

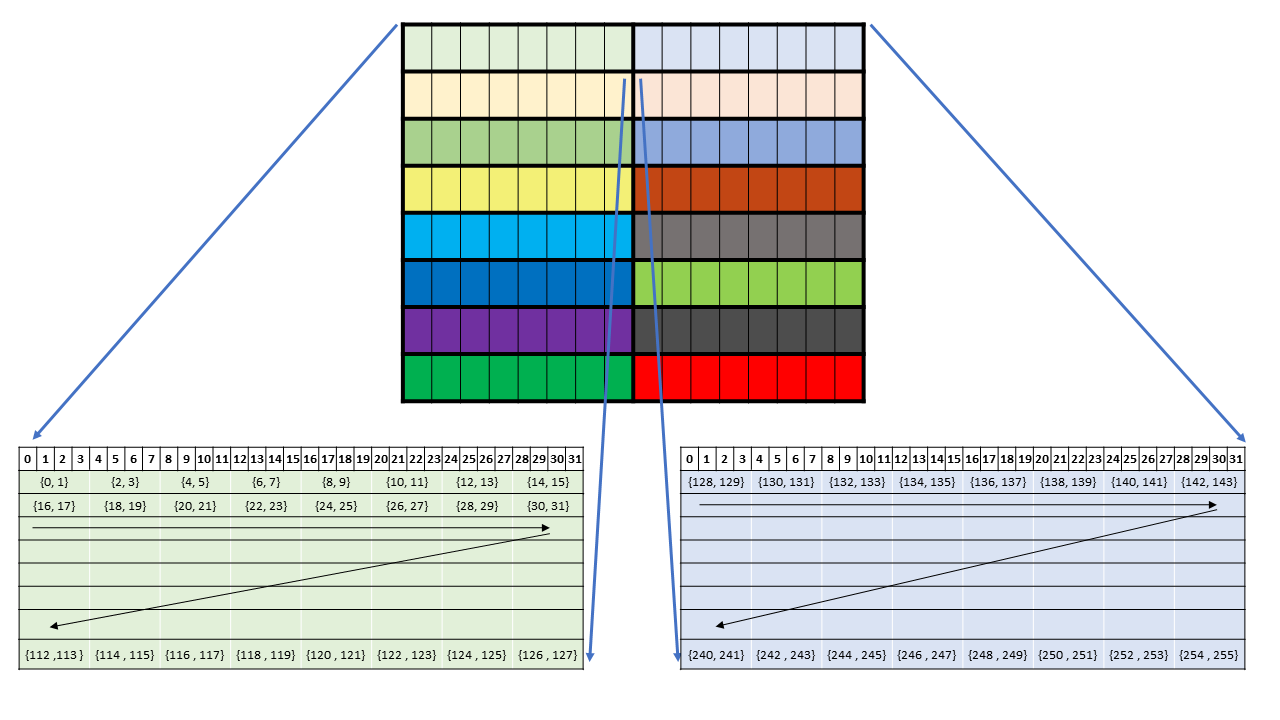

Figure 1 Grid with CTAs

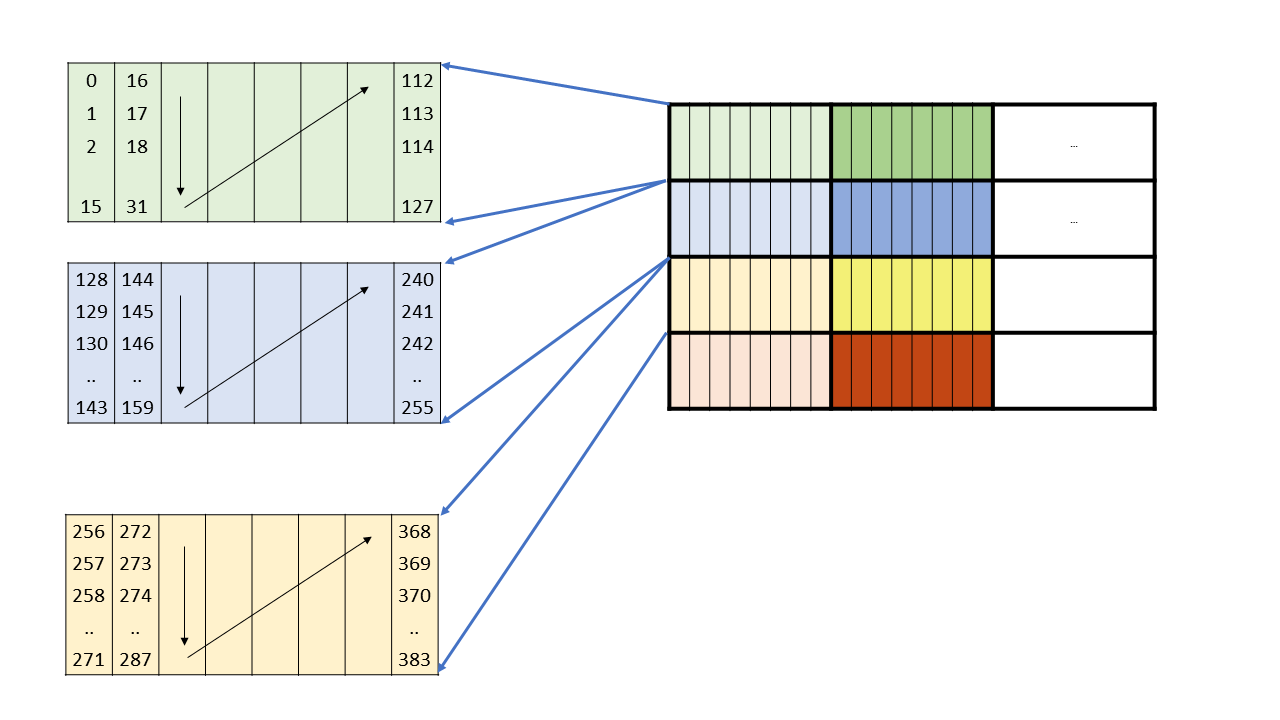

Figure 2 Grid with clusters

A cluster is a set of cooperative thread arrays (CTAs) where a CTA is a set of concurrent threads that execute the same kernel program. A grid is a set of clusters consisting of CTAs that execute independently.

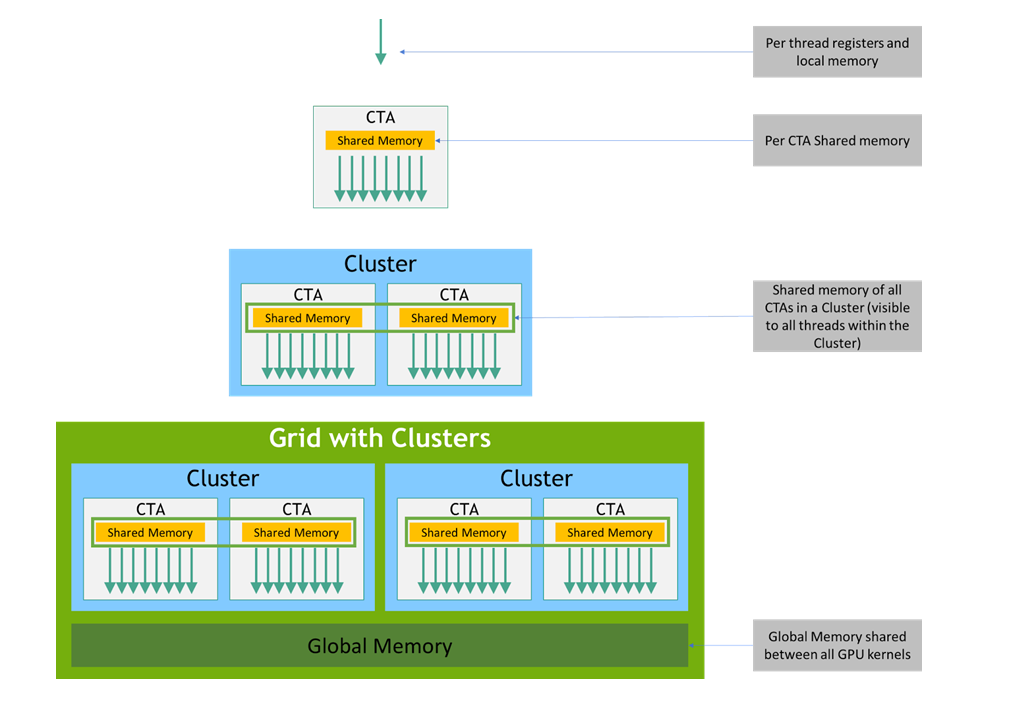

2.3. Memory Hierarchy

PTX threads may access data from multiple state spaces during their execution as illustrated by

Figure 3 where cluster level is introduced from

target architecture sm_90 onwards. Each thread has a private local memory. Each thread block

(CTA) has a shared memory visible to all threads of the block and to all active blocks in the

cluster and with the same lifetime as the block. Finally, all threads have access to the same global

memory.

There are additional state spaces accessible by all threads: the constant, param, texture, and surface state spaces. Constant and texture memory are read-only; surface memory is readable and writable. The global, constant, param, texture, and surface state spaces are optimized for different memory usages. For example, texture memory offers different addressing modes as well as data filtering for specific data formats. Note that texture and surface memory is cached, and within the same kernel call, the cache is not kept coherent with respect to global memory writes and surface memory writes, so any texture fetch or surface read to an address that has been written to via a global or a surface write in the same kernel call returns undefined data. In other words, a thread can safely read some texture or surface memory location only if this memory location has been updated by a previous kernel call or memory copy, but not if it has been previously updated by the same thread or another thread from the same kernel call.

The global, constant, and texture state spaces are persistent across kernel launches by the same application.

Both the host and the device maintain their own local memory, referred to as host memory and device memory, respectively. The device memory may be mapped and read or written by the host, or, for more efficient transfer, copied from the host memory through optimized API calls that utilize the device’s high-performance Direct Memory Access (DMA) engine.

Figure 3 Memory Hierarchy

3. PTX Machine Model

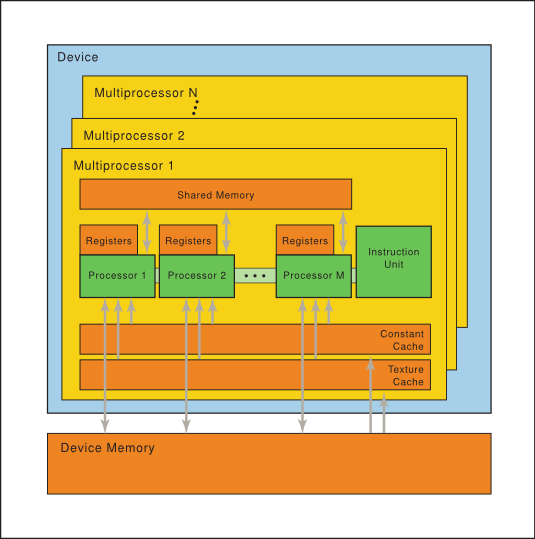

3.1. A Set of SIMT Multiprocessors

The NVIDIA GPU architecture is built around a scalable array of multithreaded Streaming Multiprocessors (SMs). When a host program invokes a kernel grid, the blocks of the grid are enumerated and distributed to multiprocessors with available execution capacity. The threads of a thread block execute concurrently on one multiprocessor. As thread blocks terminate, new blocks are launched on the vacated multiprocessors.

A multiprocessor consists of multiple Scalar Processor (SP) cores, a multithreaded instruction unit, and on-chip shared memory. The multiprocessor creates, manages, and executes concurrent threads in hardware with zero scheduling overhead. It implements a single-instruction barrier synchronization. Fast barrier synchronization together with lightweight thread creation and zero-overhead thread scheduling efficiently support very fine-grained parallelism, allowing, for example, a low granularity decomposition of problems by assigning one thread to each data element (such as a pixel in an image, a voxel in a volume, a cell in a grid-based computation).

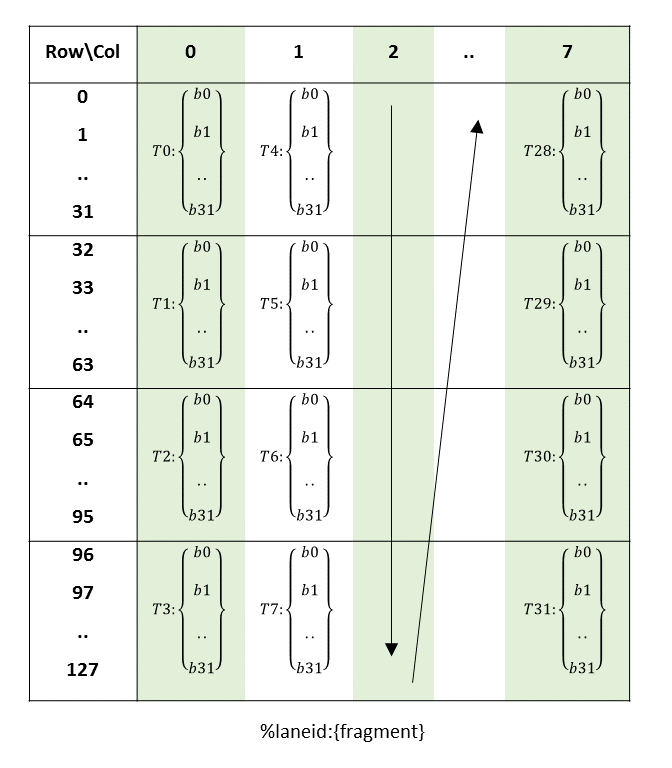

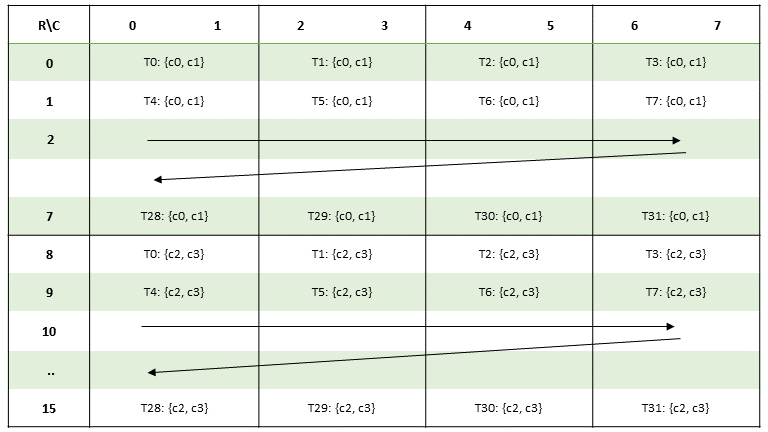

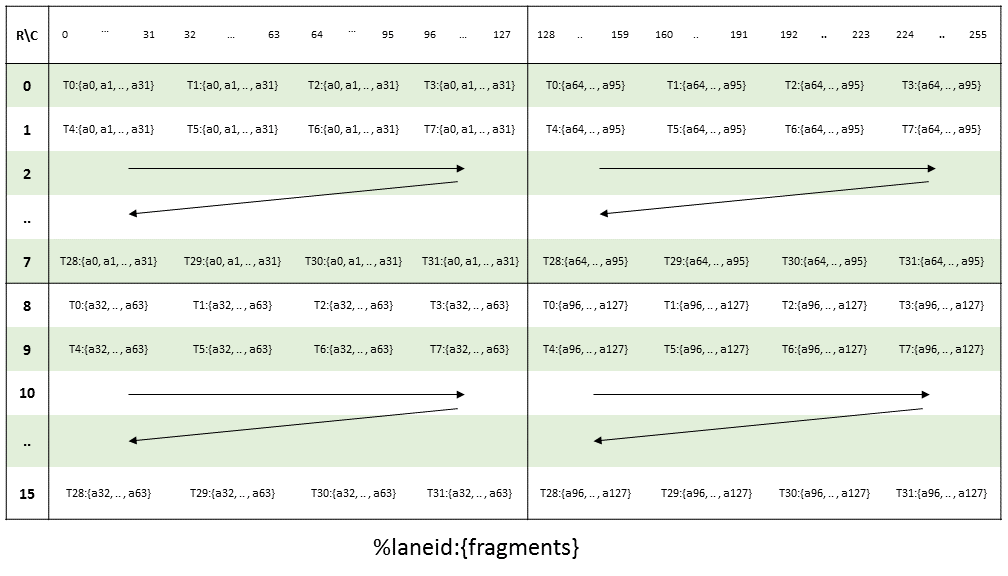

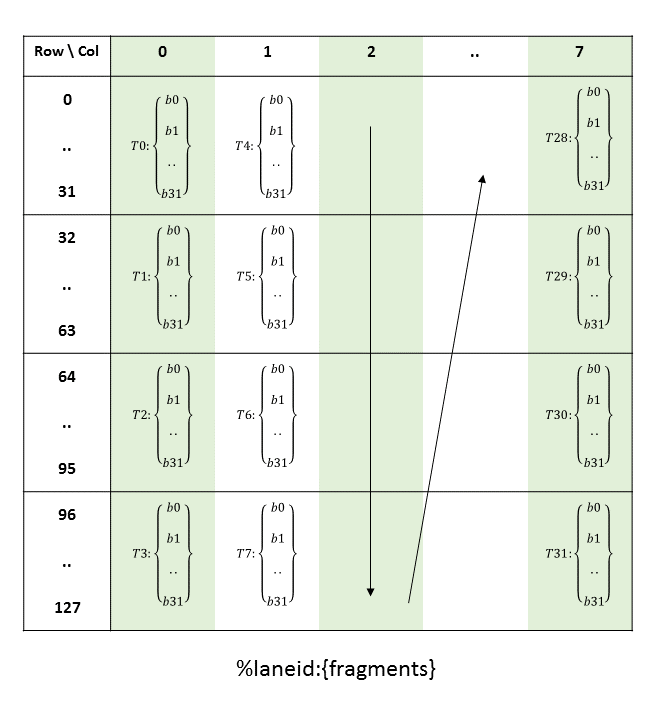

To manage hundreds of threads running several different programs, the multiprocessor employs an architecture we call SIMT (single-instruction, multiple-thread). The multiprocessor maps each thread to one scalar processor core, and each scalar thread executes independently with its own instruction address and register state. The multiprocessor SIMT unit creates, manages, schedules, and executes threads in groups of parallel threads called warps. (This term originates from weaving, the first parallel thread technology.) Individual threads composing a SIMT warp start together at the same program address but are otherwise free to branch and execute independently.

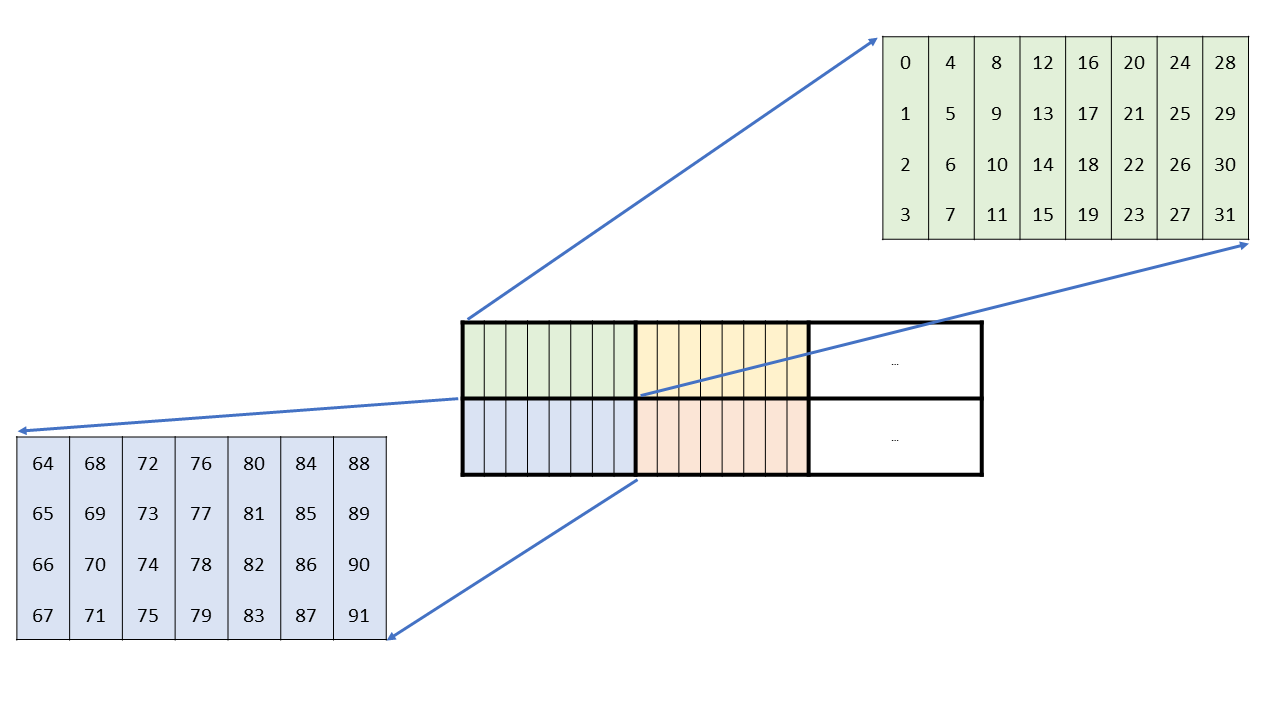

When a multiprocessor is given one or more thread blocks to execute, it splits them into warps that get scheduled by the SIMT unit. The way a block is split into warps is always the same; each warp contains threads of consecutive, increasing thread IDs with the first warp containing thread 0.

At every instruction issue time, the SIMT unit selects a warp that is ready to execute and issues the next instruction to the active threads of the warp. A warp executes one common instruction at a time, so full efficiency is realized when all threads of a warp agree on their execution path. If threads of a warp diverge via a data-dependent conditional branch, the warp serially executes each branch path taken, disabling threads that are not on that path, and when all paths complete, the threads converge back to the same execution path. Branch divergence occurs only within a warp; different warps execute independently regardless of whether they are executing common or disjointed code paths.

SIMT architecture is akin to SIMD (Single Instruction, Multiple Data) vector organizations in that a single instruction controls multiple processing elements. A key difference is that SIMD vector organizations expose the SIMD width to the software, whereas SIMT instructions specify the execution and branching behavior of a single thread. In contrast with SIMD vector machines, SIMT enables programmers to write thread-level parallel code for independent, scalar threads, as well as data-parallel code for coordinated threads. For the purposes of correctness, the programmer can essentially ignore the SIMT behavior; however, substantial performance improvements can be realized by taking care that the code seldom requires threads in a warp to diverge. In practice, this is analogous to the role of cache lines in traditional code: Cache line size can be safely ignored when designing for correctness but must be considered in the code structure when designing for peak performance. Vector architectures, on the other hand, require the software to coalesce loads into vectors and manage divergence manually.

How many blocks a multiprocessor can process at once depends on how many registers per thread and how much shared memory per block are required for a given kernel since the multiprocessor’s registers and shared memory are split among all the threads of the batch of blocks. If there are not enough registers or shared memory available per multiprocessor to process at least one block, the kernel will fail to launch.

Figure 4 Hardware Model

A set of SIMT multiprocessors with on-chip shared memory.

3.2. Independent Thread Scheduling

On architectures prior to Volta, warps used a single program counter shared amongst all 32 threads in the warp together with an active mask specifying the active threads of the warp. As a result, threads from the same warp in divergent regions or different states of execution cannot signal each other or exchange data, and algorithms requiring fine-grained sharing of data guarded by locks or mutexes can easily lead to deadlock, depending on which warp the contending threads come from.

Starting with the Volta architecture, Independent Thread Scheduling allows full concurrency between threads, regardless of warp. With Independent Thread Scheduling, the GPU maintains execution state per thread, including a program counter and call stack, and can yield execution at a per-thread granularity, either to make better use of execution resources or to allow one thread to wait for data to be produced by another. A schedule optimizer determines how to group active threads from the same warp together into SIMT units. This retains the high throughput of SIMT execution as in prior NVIDIA GPUs, but with much more flexibility: threads can now diverge and reconverge at sub-warp granularity.

Independent Thread Scheduling can lead to a rather different set of threads participating in the executed code than intended if the developer made assumptions about warp-synchronicity of previous hardware architectures. In particular, any warp-synchronous code (such as synchronization-free, intra-warp reductions) should be revisited to ensure compatibility with Volta and beyond. See the section on Compute Capability 7.x in the Cuda Programming Guide for further details.

4. Syntax

PTX programs are a collection of text source modules (files). PTX source modules have an assembly-language style syntax with instruction operation codes and operands. Pseudo-operations specify symbol and addressing management. The ptxas optimizing backend compiler optimizes and assembles PTX source modules to produce corresponding binary object files.

4.1. Source Format

Source modules are ASCII text. Lines are separated by the newline character (\n).

All whitespace characters are equivalent; whitespace is ignored except for its use in separating tokens in the language.

The C preprocessor cpp may be used to process PTX source modules. Lines beginning with # are

preprocessor directives. The following are common preprocessor directives:

#include, #define, #if, #ifdef, #else, #endif, #line, #file

C: A Reference Manual by Harbison and Steele provides a good description of the C preprocessor.

PTX is case sensitive and uses lowercase for keywords.

Each PTX module must begin with a .version directive specifying the PTX language version,

followed by a .target directive specifying the target architecture assumed. See PTX Module

Directives for a more information on these directives.

4.3. Statements

A PTX statement is either a directive or an instruction. Statements begin with an optional label and end with a semicolon.

Examples

.reg .b32 r1, r2;

.global .f32 array[N];

start: mov.b32 r1, %tid.x;

shl.b32 r1, r1, 2; // shift thread id by 2 bits

ld.global.b32 r2, array[r1]; // thread[tid] gets array[tid]

add.f32 r2, r2, 0.5; // add 1/2

4.3.1. Directive Statements

Directive keywords begin with a dot, so no conflict is possible with user-defined identifiers. The directives in PTX are listed in Table 1 and described in State Spaces, Types, and Variables and Directives.

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

4.3.2. Instruction Statements

Instructions are formed from an instruction opcode followed by a comma-separated list of zero or

more operands, and terminated with a semicolon. Operands may be register variables, constant

expressions, address expressions, or label names. Instructions have an optional guard predicate

which controls conditional execution. The guard predicate follows the optional label and precedes

the opcode, and is written as @p, where p is a predicate register. The guard predicate may

be optionally negated, written as @!p.

The destination operand is first, followed by source operands.

Instruction keywords are listed in Table 2. All instruction keywords are reserved tokens in PTX.

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

4.4. Identifiers

User-defined identifiers follow extended C++ rules: they either start with a letter followed by zero or more letters, digits, underscore, or dollar characters; or they start with an underscore, dollar, or percentage character followed by one or more letters, digits, underscore, or dollar characters:

followsym: [a-zA-Z0-9_$]

identifier: [a-zA-Z]{followsym}* | {[_$%]{followsym}+

PTX does not specify a maximum length for identifiers and suggests that all implementations support a minimum length of at least 1024 characters.

Many high-level languages such as C and C++ follow similar rules for identifier names, except that the percentage sign is not allowed. PTX allows the percentage sign as the first character of an identifier. The percentage sign can be used to avoid name conflicts, e.g., between user-defined variable names and compiler-generated names.

PTX predefines one constant and a small number of special registers that begin with the percentage sign, listed in Table 4.

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

4.5. Constants

PTX supports integer and floating-point constants and constant expressions. These constants may be

used in data initialization and as operands to instructions. Type checking rules remain the same for

integer, floating-point, and bit-size types. For predicate-type data and instructions, integer

constants are allowed and are interpreted as in C, i.e., zero values are False and non-zero

values are True.

4.5.1. Integer Constants

Integer constants are 64-bits in size and are either signed or unsigned, i.e., every integer

constant has type .s64 or .u64. The signed/unsigned nature of an integer constant is needed

to correctly evaluate constant expressions containing operations such as division and ordered

comparisons, where the behavior of the operation depends on the operand types. When used in an

instruction or data initialization, each integer constant is converted to the appropriate size based

on the data or instruction type at its use.

Integer literals may be written in decimal, hexadecimal, octal, or binary notation. The syntax

follows that of C. Integer literals may be followed immediately by the letter U to indicate that

the literal is unsigned.

hexadecimal literal: 0[xX]{hexdigit}+U?

octal literal: 0{octal digit}+U?

binary literal: 0[bB]{bit}+U?

decimal literal {nonzero-digit}{digit}*U?

Integer literals are non-negative and have a type determined by their magnitude and optional type

suffix as follows: literals are signed (.s64) unless the value cannot be fully represented in

.s64 or the unsigned suffix is specified, in which case the literal is unsigned (.u64).

The predefined integer constant WARP_SZ specifies the number of threads per warp for the target

platform; to date, all target architectures have a WARP_SZ value of 32.

4.5.2. Floating-Point Constants

Floating-point constants are represented as 64-bit double-precision values, and all floating-point constant expressions are evaluated using 64-bit double precision arithmetic. The only exception is the 32-bit hex notation for expressing an exact single-precision floating-point value; such values retain their exact 32-bit single-precision value and may not be used in constant expressions. Each 64-bit floating-point constant is converted to the appropriate floating-point size based on the data or instruction type at its use.

Floating-point literals may be written with an optional decimal point and an optional signed exponent. Unlike C and C++, there is no suffix letter to specify size; literals are always represented in 64-bit double-precision format.

PTX includes a second representation of floating-point constants for specifying the exact machine

representation using a hexadecimal constant. To specify IEEE 754 double-precision floating point

values, the constant begins with 0d or 0D followed by 16 hex digits. To specify IEEE 754

single-precision floating point values, the constant begins with 0f or 0F followed by 8 hex

digits.

0[fF]{hexdigit}{8} // single-precision floating point

0[dD]{hexdigit}{16} // double-precision floating point

Example

mov.f32 $f3, 0F3f800000; // 1.0

4.5.3. Predicate Constants

In PTX, integer constants may be used as predicates. For predicate-type data initializers and

instruction operands, integer constants are interpreted as in C, i.e., zero values are False and

non-zero values are True.

4.5.4. Constant Expressions

In PTX, constant expressions are formed using operators as in C and are evaluated using rules similar to those in C, but simplified by restricting types and sizes, removing most casts, and defining full semantics to eliminate cases where expression evaluation in C is implementation dependent.

Constant expressions are formed from constant literals, unary plus and minus, basic arithmetic

operators (addition, subtraction, multiplication, division), comparison operators, the conditional

ternary operator ( ?: ), and parentheses. Integer constant expressions also allow unary logical

negation (!), bitwise complement (~), remainder (%), shift operators (<< and

>>), bit-type operators (&, |, and ^), and logical operators (&&, ||).

Constant expressions in PTX do not support casts between integer and floating-point.

Constant expressions are evaluated using the same operator precedence as in C. Table 5 gives operator precedence and associativity. Operator precedence is highest for unary operators and decreases with each line in the chart. Operators on the same line have the same precedence and are evaluated right-to-left for unary operators and left-to-right for binary operators.

Kind |

Operator Symbols |

Operator Names |

Associates |

|---|---|---|---|

Primary |

|

parenthesis |

n/a |

Unary |

|

plus, minus, negation, complement |

right |

|

casts |

right |

|

Binary |

|

multiplication, division, remainder |

left |

|

addition, subtraction |

||

|

shifts |

||

|

ordered comparisons |

||

|

equal, not equal |

||

|

bitwise AND |

||

|

bitwise XOR |

||

|

bitwise OR |

||

|

logical AND |

||

|

logical OR |

||

Ternary |

|

conditional |

right |

4.5.5. Integer Constant Expression Evaluation

Integer constant expressions are evaluated at compile time according to a set of rules that

determine the type (signed .s64 versus unsigned .u64) of each sub-expression. These rules

are based on the rules in C, but they’ve been simplified to apply only to 64-bit integers, and

behavior is fully defined in all cases (specifically, for remainder and shift operators).

-

Literals are signed unless unsigned is needed to prevent overflow, or unless the literal uses a

Usuffix. For example:42,0x1234,0123are signed.0xfabc123400000000,42U,0x1234Uare unsigned.

-

Unary plus and minus preserve the type of the input operand. For example:

+123,-1,-(-42)are signed.-1U,-0xfabc123400000000are unsigned.

Unary logical negation (

!) produces a signed result with value0or1.Unary bitwise complement (

~) interprets the source operand as unsigned and produces an unsigned result.Some binary operators require normalization of source operands. This normalization is known as the usual arithmetic conversions and simply converts both operands to unsigned type if either operand is unsigned.

Addition, subtraction, multiplication, and division perform the usual arithmetic conversions and produce a result with the same type as the converted operands. That is, the operands and result are unsigned if either source operand is unsigned, and is otherwise signed.

Remainder (

%) interprets the operands as unsigned. Note that this differs from C, which allows a negative divisor but defines the behavior to be implementation dependent.Left and right shift interpret the second operand as unsigned and produce a result with the same type as the first operand. Note that the behavior of right-shift is determined by the type of the first operand: right shift of a signed value is arithmetic and preserves the sign, and right shift of an unsigned value is logical and shifts in a zero bit.

AND (

&), OR (|), and XOR (^) perform the usual arithmetic conversions and produce a result with the same type as the converted operands.AND_OP (

&&), OR_OP (||), Equal (==), and Not_Equal (!=) produce a signed result. The result value is 0 or 1.Ordered comparisons (

<,<=,>,>=) perform the usual arithmetic conversions on source operands and produce a signed result. The result value is0or1.Casting of expressions to signed or unsigned is supported using (

.s64) and (.u64) casts.For the conditional operator (

? :) , the first operand must be an integer, and the second and third operands are either both integers or both floating-point. The usual arithmetic conversions are performed on the second and third operands, and the result type is the same as the converted type.

4.5.6. Summary of Constant Expression Evaluation Rules

Table 6 contains a summary of the constant expression evaluation rules.

Kind |

Operator |

Operand Types |

Operand Interpretation |

Result Type |

|---|---|---|---|---|

Primary |

|

any type |

same as source |

same as source |

constant literal |

n/a |

n/a |

|

|

Unary |

|

any type |

same as source |

same as source |

|

integer |

zero or non-zero |

|

|

|

integer |

|

|

|

Cast |

|

integer |

|

|

|

integer |

|

|

|

Binary |

|

|

|

|

integer |

use usual conversions |

converted type |

||

|

|

|

|

|

integer |

use usual conversions |

|

||

|

|

|

|

|

integer |

use usual conversions |

|

||

|

integer |

|

|

|

|

integer |

1st unchanged, 2nd is |

same as 1st operand |

|

|

integer |

|

|

|

|

integer |

zero or non-zero |

|

|

Ternary |

|

|

same as sources |

|

|

use usual conversions |

converted type |

5. State Spaces, Types, and Variables

While the specific resources available in a given target GPU will vary, the kinds of resources will be common across platforms, and these resources are abstracted in PTX through state spaces and data types.

5.1. State Spaces

A state space is a storage area with particular characteristics. All variables reside in some state space. The characteristics of a state space include its size, addressability, access speed, access rights, and level of sharing between threads.

The state spaces defined in PTX are a byproduct of parallel programming and graphics programming. The list of state spaces is shown in Table 7,and properties of state spaces are shown in Table 8.

Name |

Description |

|---|---|

|

Registers, fast. |

|

Special registers. Read-only; pre-defined; platform-specific. |

|

Shared, read-only memory. |

|

Global memory, shared by all threads. |

|

Local memory, private to each thread. |

|

Kernel parameters, defined per-grid; or Function or local parameters, defined per-thread. |

|

Addressable memory, defined per CTA, accessible to all threads in the cluster throughout the lifetime of the CTA that defines it. |

|

Global texture memory (deprecated). |

Name |

Addressable |

Initializable |

Access |

Sharing |

|---|---|---|---|---|

|

No |

No |

R/W |

per-thread |

|

No |

No |

RO |

per-CTA |

|

Yes |

Yes1 |

RO |

per-grid |

|

Yes |

Yes1 |

R/W |

Context |

|

Yes |

No |

R/W |

per-thread |

|

Yes2 |

No |

RO |

per-grid |

|

Restricted3 |

No |

R/W |

per-thread |

|

Yes |

No |

R/W |

per-cluster5 |

|

No4 |

Yes, via driver |

RO |

Context |

|

Notes: 1 Variables in 2 Accessible only via the 3 Accessible via 4 Accessible only via the 5 Visible to the owning CTA and other active CTAs in the cluster. |

||||

5.1.1. Register State Space

Registers (.reg state space) are fast storage locations. The number of registers is limited, and

will vary from platform to platform. When the limit is exceeded, register variables will be spilled

to memory, causing changes in performance. For each architecture, there is a recommended maximum

number of registers to use (see the CUDA Programming Guide for details).

Registers may be typed (signed integer, unsigned integer, floating point, predicate) or

untyped. Register size is restricted; aside from predicate registers which are 1-bit, scalar

registers have a width of 8-, 16-, 32-, 64-, or 128-bits, and vector registers have a width of

16-, 32-, 64-, or 128-bits. The most common use of 8-bit registers is with ld, st, and cvt

instructions, or as elements of vector tuples.

Registers differ from the other state spaces in that they are not fully addressable, i.e., it is not

possible to refer to the address of a register. When compiling to use the Application Binary

Interface (ABI), register variables are restricted to function scope and may not be declared at

module scope. When compiling legacy PTX code (ISA versions prior to 3.0) containing module-scoped

.reg variables, the compiler silently disables use of the ABI. Registers may have alignment

boundaries required by multi-word loads and stores.

5.1.2. Special Register State Space

The special register (.sreg) state space holds predefined, platform-specific registers, such as

grid, cluster, CTA, and thread parameters, clock counters, and performance monitoring registers. All

special registers are predefined.

5.1.3. Constant State Space

The constant (.const) state space is a read-only memory initialized by the host. Constant memory

is accessed with a ld.const instruction. Constant memory is restricted in size, currently

limited to 64 KB which can be used to hold statically-sized constant variables. There is an

additional 640 KB of constant memory, organized as ten independent 64 KB regions. The driver may

allocate and initialize constant buffers in these regions and pass pointers to the buffers as kernel

function parameters. Since the ten regions are not contiguous, the driver must ensure that constant

buffers are allocated so that each buffer fits entirely within a 64 KB region and does not span a

region boundary.

Statically-sized constant variables have an optional variable initializer; constant variables with no explicit initializer are initialized to zero by default. Constant buffers allocated by the driver are initialized by the host, and pointers to such buffers are passed to the kernel as parameters. See the description of kernel parameter attributes in Kernel Function Parameter Attributes for more details on passing pointers to constant buffers as kernel parameters.

5.1.3.1. Banked Constant State Space (deprecated)

Previous versions of PTX exposed constant memory as a set of eleven 64 KB banks, with explicit bank numbers required for variable declaration and during access.

Prior to PTX ISA version 2.2, the constant memory was organized into fixed size banks. There were

eleven 64 KB banks, and banks were specified using the .const[bank] modifier, where bank

ranged from 0 to 10. If no bank number was given, bank zero was assumed.

By convention, bank zero was used for all statically-sized constant variables. The remaining banks were used to declare incomplete constant arrays (as in C, for example), where the size is not known at compile time. For example, the declaration

.extern .const[2] .b32 const_buffer[];

resulted in const_buffer pointing to the start of constant bank two. This pointer could then be

used to access the entire 64 KB constant bank. Multiple incomplete array variables declared in the

same bank were aliased, with each pointing to the start address of the specified constant bank.

To access data in contant banks 1 through 10, the bank number was required in the state space of the load instruction. For example, an incomplete array in bank 2 was accessed as follows:

.extern .const[2] .b32 const_buffer[];

ld.const[2].b32 %r1, [const_buffer+4]; // load second word

In PTX ISA version 2.2, we eliminated explicit banks and replaced the incomplete array representation of driver-allocated constant buffers with kernel parameter attributes that allow pointers to constant buffers to be passed as kernel parameters.

5.1.4. Global State Space

The global (.global) state space is memory that is accessible by all threads in a context. It is

the mechanism by which threads in different CTAs, clusters, and grids can communicate. Use

ld.global, st.global, and atom.global to access global variables.

Global variables have an optional variable initializer; global variables with no explicit initializer are initialized to zero by default.

5.1.5. Local State Space

The local state space (.local) is private memory for each thread to keep its own data. It is

typically standard memory with cache. The size is limited, as it must be allocated on a per-thread

basis. Use ld.local and st.local to access local variables.

When compiling to use the Application Binary Interface (ABI), .local state-space variables

must be declared within function scope and are allocated on the stack. In implementations that do

not support a stack, all local memory variables are stored at fixed addresses, recursive function

calls are not supported, and .local variables may be declared at module scope. When compiling

legacy PTX code (ISA versions prior to 3.0) containing module-scoped .local variables, the

compiler silently disables use of the ABI.

5.1.6. Parameter State Space

The parameter (.param) state space is used (1) to pass input arguments from the host to the

kernel, (2a) to declare formal input and return parameters for device functions called from within

kernel execution, and (2b) to declare locally-scoped byte array variables that serve as function

call arguments, typically for passing large structures by value to a function. Kernel function

parameters differ from device function parameters in terms of access and sharing (read-only versus

read-write, per-kernel versus per-thread). Note that PTX ISA versions 1.x supports only kernel

function parameters in .param space; device function parameters were previously restricted to the

register state space. The use of parameter state space for device function parameters was introduced

in PTX ISA version 2.0 and requires target architecture sm_20 or higher. Additional sub-qualifiers

::entry or ::func can be specified on instructions with .param state space to indicate

whether the address refers to kernel function parameter or device function parameter. If no

sub-qualifier is specified with the .param state space, then the default sub-qualifier is specific

to and dependent on the exact instruction. For example, st.param is equivalent to st.param::func

whereas isspacep.param is equivalent to isspacep.param::entry. Refer to the instruction

description for more details on default sub-qualifier assumption.

Note

The location of parameter space is implementation specific. For example, in some implementations

kernel parameters reside in global memory. No access protection is provided between parameter and

global space in this case. Though the exact location of the kernel parameter space is

implementation specific, the kernel parameter space window is always contained within the global

space window. Similarly, function parameters are mapped to parameter passing registers and/or

stack locations based on the function calling conventions of the Application Binary Interface

(ABI). Therefore, PTX code should make no assumptions about the relative locations or ordering

of .param space variables.

5.1.6.1. Kernel Function Parameters

Each kernel function definition includes an optional list of parameters. These parameters are

addressable, read-only variables declared in the .param state space. Values passed from the host

to the kernel are accessed through these parameter variables using ld.param instructions. The

kernel parameter variables are shared across all CTAs from all clusters within a grid.

The address of a kernel parameter may be moved into a register using the mov instruction. The

resulting address is in the .param state space and is accessed using ld.param instructions.

Example

.entry foo ( .param .b32 N, .param .align 8 .b8 buffer[64] )

{

.reg .u32 %n;

.reg .f64 %d;

ld.param.u32 %n, [N];

ld.param.f64 %d, [buffer];

...

Example

.entry bar ( .param .b32 len )

{

.reg .u32 %ptr, %n;

mov.u32 %ptr, len;

ld.param.u32 %n, [%ptr];

...

Kernel function parameters may represent normal data values, or they may hold addresses to objects in constant, global, local, or shared state spaces. In the case of pointers, the compiler and runtime system need information about which parameters are pointers, and to which state space they point. Kernel parameter attribute directives are used to provide this information at the PTX level. See Kernel Function Parameter Attributes for a description of kernel parameter attribute directives.

Note

The current implementation does not allow creation of generic pointers to constant variables

(cvta.const) in programs that have pointers to constant buffers passed as kernel parameters.

5.1.6.2. Kernel Function Parameter Attributes

Kernel function parameters may be declared with an optional .ptr attribute to indicate that a

parameter is a pointer to memory, and also indicate the state space and alignment of the memory

being pointed to. Kernel Parameter Attribute: .ptr

describes the .ptr kernel parameter attribute.

5.1.6.3. Kernel Parameter Attribute: .ptr

.ptr

Kernel parameter alignment attribute.

Syntax

.param .type .ptr .space .align N varname

.param .type .ptr .align N varname

.space = { .const, .global, .local, .shared };

Description

Used to specify the state space and, optionally, the alignment of memory pointed to by a pointer type kernel parameter. The alignment value N, if present, must be a power of two. If no state space is specified, the pointer is assumed to be a generic address pointing to one of const, global, local, or shared memory. If no alignment is specified, the memory pointed to is assumed to be aligned to a 4 byte boundary.

Spaces between .ptr, .space, and .align may be eliminated to improve readability.

PTX ISA Notes

Introduced in PTX ISA version 2.2.

Support for generic addressing of .const space added in PTX ISA version 3.1.

Target ISA Notes

Supported on all target architectures.

Examples

.entry foo ( .param .u32 param1,

.param .u32 .ptr.global.align 16 param2,

.param .u32 .ptr.const.align 8 param3,

.param .u32 .ptr.align 16 param4 // generic address

// pointer

) { .. }

5.1.6.4. Device Function Parameters

PTX ISA version 2.0 extended the use of parameter space to device function parameters. The most

common use is for passing objects by value that do not fit within a PTX register, such as C

structures larger than 8 bytes. In this case, a byte array in parameter space is used. Typically,

the caller will declare a locally-scoped .param byte array variable that represents a flattened

C structure or union. This will be passed by value to a callee, which declares a .param formal

parameter having the same size and alignment as the passed argument.

Example

// pass object of type struct { double d; int y; };

.func foo ( .reg .b32 N, .param .align 8 .b8 buffer[12] )

{

.reg .f64 %d;

.reg .s32 %y;

ld.param.f64 %d, [buffer];

ld.param.s32 %y, [buffer+8];

...

}

// code snippet from the caller

// struct { double d; int y; } mystruct; is flattened, passed to foo

...

.reg .f64 dbl;

.reg .s32 x;

.param .align 8 .b8 mystruct;

...

st.param.f64 [mystruct+0], dbl;

st.param.s32 [mystruct+8], x;

call foo, (4, mystruct);

...

See the section on function call syntax for more details.

Function input parameters may be read via ld.param and function return parameters may be written

using st.param; it is illegal to write to an input parameter or read from a return parameter.

Aside from passing structures by value, .param space is also required whenever a formal

parameter has its address taken within the called function. In PTX, the address of a function input

parameter may be moved into a register using the mov instruction. Note that the parameter will

be copied to the stack if necessary, and so the address will be in the .local state space and is

accessed via ld.local and st.local instructions. It is not possible to use mov to get

the address of or a locally-scoped .param space variable. Starting PTX ISA version 6.0, it is

possible to use mov instruction to get address of return parameter of device function.

Example

// pass array of up to eight floating-point values in buffer

.func foo ( .param .b32 N, .param .b32 buffer[32] )

{

.reg .u32 %n, %r;

.reg .f32 %f;

.reg .pred %p;

ld.param.u32 %n, [N];

mov.u32 %r, buffer; // forces buffer to .local state space

Loop:

setp.eq.u32 %p, %n, 0;

@%p: bra Done;

ld.local.f32 %f, [%r];

...

add.u32 %r, %r, 4;

sub.u32 %n, %n, 1;

bra Loop;

Done:

...

}

5.1.8. Texture State Space (deprecated)

The texture (.tex) state space is global memory accessed via the texture instruction. It is

shared by all threads in a context. Texture memory is read-only and cached, so accesses to texture

memory are not coherent with global memory stores to the texture image.

The GPU hardware has a fixed number of texture bindings that can be accessed within a single kernel

(typically 128). The .tex directive will bind the named texture memory variable to a hardware

texture identifier, where texture identifiers are allocated sequentially beginning with

zero. Multiple names may be bound to the same physical texture identifier. An error is generated if

the maximum number of physical resources is exceeded. The texture name must be of type .u32 or

.u64.

Physical texture resources are allocated on a per-kernel granularity, and .tex variables are

required to be defined in the global scope.

Texture memory is read-only. A texture’s base address is assumed to be aligned to a 16 byte boundary.

Example

.tex .u32 tex_a; // bound to physical texture 0

.tex .u32 tex_c, tex_d; // both bound to physical texture 1

.tex .u32 tex_d; // bound to physical texture 2

.tex .u32 tex_f; // bound to physical texture 3

Note

Explicit declarations of variables in the texture state space is deprecated, and programs should

instead reference texture memory through variables of type .texref. The .tex directive is

retained for backward compatibility, and variables declared in the .tex state space are

equivalent to module-scoped .texref variables in the .global state space.

For example, a legacy PTX definitions such as

.tex .u32 tex_a;

is equivalent to:

.global .texref tex_a;

See Texture Sampler and Surface Types for the

description of the .texref type and Texture Instructions

for its use in texture instructions.

5.2. Types

5.2.1. Fundamental Types

In PTX, the fundamental types reflect the native data types supported by the target architectures. A fundamental type specifies both a basic type and a size. Register variables are always of a fundamental type, and instructions operate on these types. The same type-size specifiers are used for both variable definitions and for typing instructions, so their names are intentionally short.

Table 9 lists the fundamental type specifiers for each basic type:

Basic Type |

Fundamental Type Specifiers |

|---|---|

Signed integer |

|

Unsigned integer |

|

Floating-point |

|

Bits (untyped) |

|

Predicate |

|

Most instructions have one or more type specifiers, needed to fully specify instruction behavior. Operand types and sizes are checked against instruction types for compatibility.

Two fundamental types are compatible if they have the same basic type and are the same size. Signed and unsigned integer types are compatible if they have the same size. The bit-size type is compatible with any fundamental type having the same size.

In principle, all variables (aside from predicates) could be declared using only bit-size types, but typed variables enhance program readability and allow for better operand type checking.

5.2.2. Restricted Use of Sub-Word Sizes

The .u8, .s8, and .b8 instruction types are restricted to ld, st, and cvt

instructions. The .f16 floating-point type is allowed only in conversions to and from .f32,

.f64 types, in half precision floating point instructions and texture fetch instructions. The

.f16x2 floating point type is allowed only in half precision floating point arithmetic

instructions and texture fetch instructions.

For convenience, ld, st, and cvt instructions permit source and destination data

operands to be wider than the instruction-type size, so that narrow values may be loaded, stored,

and converted using regular-width registers. For example, 8-bit or 16-bit values may be held

directly in 32-bit or 64-bit registers when being loaded, stored, or converted to other types and

sizes.

5.2.3. Alternate Floating-Point Data Formats

The fundamental floating-point types supported in PTX have implicit bit representations that

indicate the number of bits used to store exponent and mantissa. For example, the .f16 type

indicates 5 bits reserved for exponent and 10 bits reserved for mantissa. In addition to the

floating-point representations assumed by the fundamental types, PTX allows the following alternate

floating-point data formats:

-

bf16data format: -

This data format is a 16-bit floating point format with 8 bits for exponent and 7 bits for mantissa. A register variable containing

bf16data must be declared with.b16type. -

e4m3data format: -

This data format is an 8-bit floating point format with 4 bits for exponent and 3 bits for mantissa. The

e4m3encoding does not support infinity andNaNvalues are limited to0x7fand0xff. A register variable containinge4m3value must be declared using bit-size type. -

e5m2data format: -

This data format is an 8-bit floating point format with 5 bits for exponent and 2 bits for mantissa. A register variable containing

e5m2value must be declared using bit-size type. -

tf32data format: -

This data format is a special 32-bit floating point format supported by the matrix multiply-and-accumulate instructions, with the same range as

.f32and reduced precision (>=10 bits). The internal layout oftf32format is implementation defined. PTX facilitates conversion from single precision.f32type totf32format. A register variable containingtf32data must be declared with.b32type.

Alternate data formats cannot be used as fundamental types. They are supported as source or destination formats by certain instructions.

5.2.4. Packed Data Types

Certain PTX instructions operate on two sets of inputs in parallel, and produce two outputs. Such instructions can use the data stored in a packed format. PTX supports packing two values of the same scalar data type into a single, larger value. The packed value is considered as a value of a packed data type. In this section we describe the packed data types supported in PTX.

5.2.4.1. Packed Floating Point Data Types

PTX supports the following four variants of packed floating point data types:

.f16x2packed type containing two.f16floating point values..bf16x2packed type containing two.bf16alternate floating point values..e4m3x2packed type containing two.e4m3alternate floating point values..e5m2x2packed type containing two.e5m2alternate floating point values.

.f16x2 is supported as a fundamental type. .bf16x2, .e4m3x2 and .e5m2x2 cannot be

used as fundamental types - they are supported as instruction types on certain instructions. A

register variable containing .bf16x2 data must be declared with .b32 type. A register

variable containing .e4m3x2 or .e5m2x2 data must be declared with .b16 type.

5.2.4.2. Packed Integer Data Types

PTX supports two variants of packed integer data types: .u16x2 and .s16x2. The packed data

type consists of two .u16 or .s16 values. A register variable containing .u16x2 or

.s16x2 data must be declared with .b32 type. Packed integer data types cannot be used as

fundamental types. They are supported as instruction types on certain instructions.

5.3. Texture Sampler and Surface Types

PTX includes built-in opaque types for defining texture, sampler, and surface descriptor variables. These types have named fields similar to structures, but all information about layout, field ordering, base address, and overall size is hidden to a PTX program, hence the term opaque. The use of these opaque types is limited to:

Variable definition within global (module) scope and in kernel entry parameter lists.

Static initialization of module-scope variables using comma-delimited static assignment expressions for the named members of the type.

Referencing textures, samplers, or surfaces via texture and surface load/store instructions (

tex,suld,sust,sured).Retrieving the value of a named member via query instructions (

txq,suq).Creating pointers to opaque variables using

mov, e.g.,mov.u64 reg, opaque_var;. The resulting pointer may be stored to and loaded from memory, passed as a parameter to functions, and de-referenced by texture and surface load, store, and query instructions, but the pointer cannot otherwise be treated as an address, i.e., accessing the pointer withldandstinstructions, or performing pointer arithmetic will result in undefined results.Opaque variables may not appear in initializers, e.g., to initialize a pointer to an opaque variable.

Note

Indirect access to textures and surfaces using pointers to opaque variables is supported

beginning with PTX ISA version 3.1 and requires target sm_20 or later.

Indirect access to textures is supported only in unified texture mode (see below).

The three built-in types are .texref, .samplerref, and .surfref. For working with

textures and samplers, PTX has two modes of operation. In the unified mode, texture and sampler

information is accessed through a single .texref handle. In the independent mode, texture and

sampler information each have their own handle, allowing them to be defined separately and combined

at the site of usage in the program. In independent mode, the fields of the .texref type that

describe sampler properties are ignored, since these properties are defined by .samplerref

variables.

Table 11 and

Table 12 list the named members

of each type for unified and independent texture modes. These members and their values have

precise mappings to methods and values defined in the texture HW class as well as

exposed values via the API.

Member |

.texref values |

.surfref values |

|---|---|---|

|

in elements |

|

|

in elements |

|

|

in elements |

|

|

|

|

|

|

|

|

|

N/A |

|

|

N/A |

|

|

N/A |

|

as number of textures in a texture array |

as number of surfaces in a surface array |

|

as number of levels in a mipmapped texture |

N/A |

|

as number of samples in a multi-sample texture |

N/A |

|

N/A |

|

5.3.1. Texture and Surface Properties

Fields width, height, and depth specify the size of the texture or surface in number of

elements in each dimension.

The channel_data_type and channel_order fields specify these properties of the texture or

surface using enumeration types corresponding to the source language API. For example, see Channel

Data Type and Channel Order Fields for

the OpenCL enumeration types currently supported in PTX.

5.3.2. Sampler Properties

The normalized_coords field indicates whether the texture or surface uses normalized coordinates

in the range [0.0, 1.0) instead of unnormalized coordinates in the range [0, N). If no value is

specified, the default is set by the runtime system based on the source language.

The filter_mode field specifies how the values returned by texture reads are computed based on

the input texture coordinates.

The addr_mode_{0,1,2} fields define the addressing mode in each dimension, which determine how

out-of-range coordinates are handled.

See the CUDA C++ Programming Guide for more details of these properties.

Member |

.samplerref values |

.texref values |

.surfref values |

|---|---|---|---|

|

N/A |

in elements |

|

|

N/A |

in elements |

|

|

N/A |

in elements |

|

|

N/A |

|

|

|

N/A |

|

|

|

N/A |

|

N/A |

|

|

N/A |

N/A |

|

|

ignored |

N/A |

|

|

N/A |

N/A |

|

N/A |

as number of textures in a texture array |

as number of surfaces in a surface array |

|

N/A |

as number of levels in a mipmapped texture |

N/A |

|

N/A |

as number of samples in a multi-sample texture |

N/A |

|

N/A |

N/A |

|

In independent texture mode, the sampler properties are carried in an independent .samplerref

variable, and these fields are disabled in the .texref variables. One additional sampler

property, force_unnormalized_coords, is available in independent texture mode.

The force_unnormalized_coords field is a property of .samplerref variables that allows the

sampler to override the texture header normalized_coords property. This field is defined only in

independent texture mode. When True, the texture header setting is overridden and unnormalized

coordinates are used; when False, the texture header setting is used.

The force_unnormalized_coords property is used in compiling OpenCL; in OpenCL, the property of

normalized coordinates is carried in sampler headers. To compile OpenCL to PTX, texture headers are

always initialized with normalized_coords set to True, and the OpenCL sampler-based

normalized_coords flag maps (negated) to the PTX-level force_unnormalized_coords flag.

Variables using these types may be declared at module scope or within kernel entry parameter

lists. At module scope, these variables must be in the .global state space. As kernel

parameters, these variables are declared in the .param state space.

Example

.global .texref my_texture_name;

.global .samplerref my_sampler_name;

.global .surfref my_surface_name;

When declared at module scope, the types may be initialized using a list of static expressions assigning values to the named members.

Example

.global .texref tex1;

.global .samplerref tsamp1 = { addr_mode_0 = clamp_to_border,

filter_mode = nearest

};

5.3.3. Channel Data Type and Channel Order Fields

The channel_data_type and channel_order fields have enumeration types corresponding to the

source language API. Currently, OpenCL is the only source language that defines these

fields. Table 14 and

Table 13 show the

enumeration values defined in OpenCL version 1.0 for channel data type and channel order.

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

5.4. Variables

In PTX, a variable declaration describes both the variable’s type and its state space. In addition to fundamental types, PTX supports types for simple aggregate objects such as vectors and arrays.

5.4.1. Variable Declarations

All storage for data is specified with variable declarations. Every variable must reside in one of the state spaces enumerated in the previous section.

A variable declaration names the space in which the variable resides, its type and size, its name, an optional array size, an optional initializer, and an optional fixed address for the variable.

Predicate variables may only be declared in the register state space.

Examples

.global .u32 loc;

.reg .s32 i;

.const .f32 bias[] = {-1.0, 1.0};

.global .u8 bg[4] = {0, 0, 0, 0};

.reg .v4 .f32 accel;

.reg .pred p, q, r;

5.4.2. Vectors

Limited-length vector types are supported. Vectors of length 2 and 4 of any non-predicate

fundamental type can be declared by prefixing the type with .v2 or .v4. Vectors must be

based on a fundamental type, and they may reside in the register space. Vectors cannot exceed

128-bits in length; for example, .v4 .f64 is not allowed. Three-element vectors may be

handled by using a .v4 vector, where the fourth element provides padding. This is a common case

for three-dimensional grids, textures, etc.

Examples

.global .v4 .f32 V; // a length-4 vector of floats

.shared .v2 .u16 uv; // a length-2 vector of unsigned ints

.global .v4 .b8 v; // a length-4 vector of bytes

By default, vector variables are aligned to a multiple of their overall size (vector length times base-type size), to enable vector load and store instructions which require addresses aligned to a multiple of the access size.

5.4.3. Array Declarations

Array declarations are provided to allow the programmer to reserve space. To declare an array, the variable name is followed with dimensional declarations similar to fixed-size array declarations in C. The size of each dimension is a constant expression.

Examples

.local .u16 kernel[19][19];

.shared .u8 mailbox[128];

The size of the array specifies how many elements should be reserved. For the declaration of array kernel above, 19*19 = 361 halfwords are reserved, for a total of 722 bytes.

When declared with an initializer, the first dimension of the array may be omitted. The size of the first array dimension is determined by the number of elements in the array initializer.

Examples

.global .u32 index[] = { 0, 1, 2, 3, 4, 5, 6, 7 };

.global .s32 offset[][2] = { {-1, 0}, {0, -1}, {1, 0}, {0, 1} };

Array index has eight elements, and array offset is a 4x2 array.

5.4.4. Initializers

Declared variables may specify an initial value using a syntax similar to C/C++, where the variable name is followed by an equals sign and the initial value or values for the variable. A scalar takes a single value, while vectors and arrays take nested lists of values inside of curly braces (the nesting matches the dimensionality of the declaration).

As in C, array initializers may be incomplete, i.e., the number of initializer elements may be less than the extent of the corresponding array dimension, with remaining array locations initialized to the default value for the specified array type.

Examples

.const .f32 vals[8] = { 0.33, 0.25, 0.125 };

.global .s32 x[3][2] = { {1,2}, {3} };

is equivalent to

.const .f32 vals[8] = { 0.33, 0.25, 0.125, 0.0, 0.0, 0.0, 0.0, 0.0 };

.global .s32 x[3][2] = { {1,2}, {3,0}, {0,0} };

Currently, variable initialization is supported only for constant and global state spaces. Variables in constant and global state spaces with no explicit initializer are initialized to zero by default. Initializers are not allowed in external variable declarations.

Variable names appearing in initializers represent the address of the variable; this can be used to

statically initialize a pointer to a variable. Initializers may also contain var+offset

expressions, where offset is a byte offset added to the address of var. Only variables in

.global or .const state spaces may be used in initializers. By default, the resulting

address is the offset in the variable’s state space (as is the case when taking the address of a

variable with a mov instruction). An operator, generic(), is provided to create a generic

address for variables used in initializers.

Starting PTX ISA version 7.1, an operator mask() is provided, where mask is an integer

immediate. The only allowed expressions in the mask() operator are integer constant expression

and symbol expression representing address of variable. The mask() operator extracts n

consecutive bits from the expression used in initializers and inserts these bits at the lowest

position of the initialized variable. The number n and the starting position of the bits to be

extracted is specified by the integer immediate mask. PTX ISA version 7.1 only supports

extracting a single byte starting at byte boundary from the address of the variable. PTX ISA version

7.3 supports Integer constant expression as an operand in the mask() operator.

Supported values for mask are: 0xFF, 0xFF00, 0XFF0000, 0xFF000000, 0xFF00000000, 0xFF0000000000,

0xFF000000000000, 0xFF00000000000000.

Examples

.const .u32 foo = 42;

.global .u32 bar[] = { 2, 3, 5 };

.global .u32 p1 = foo; // offset of foo in .const space

.global .u32 p2 = generic(foo); // generic address of foo

// array of generic-address pointers to elements of bar

.global .u32 parr[] = { generic(bar), generic(bar)+4,

generic(bar)+8 };

// examples using mask() operator are pruned for brevity

.global .u8 addr[] = {0xff(foo), 0xff00(foo), 0xff0000(foo), ...};

.global .u8 addr2[] = {0xff(foo+4), 0xff00(foo+4), 0xff0000(foo+4),...}

.global .u8 addr3[] = {0xff(generic(foo)), 0xff00(generic(foo)),...}

.global .u8 addr4[] = {0xff(generic(foo)+4), 0xff00(generic(foo)+4),...}

// mask() operator with integer const expression

.global .u8 addr5[] = { 0xFF(1000 + 546), 0xFF00(131187), ...};

Note

PTX 3.1 redefines the default addressing for global variables in initializers, from generic

addresses to offsets in the global state space. Legacy PTX code is treated as having an implicit

generic() operator for each global variable used in an initializer. PTX 3.1 code should

either include explicit generic() operators in initializers, use cvta.global to form

generic addresses at runtime, or load from the non-generic address using ld.global.

Device function names appearing in initializers represent the address of the first instruction in the function; this can be used to initialize a table of function pointers to be used with indirect calls. Beginning in PTX ISA version 3.1, kernel function names can be used as initializers e.g. to initialize a table of kernel function pointers, to be used with CUDA Dynamic Parallelism to launch kernels from GPU. See the CUDA Dynamic Parallelism Programming Guide for details.

Labels cannot be used in initializers.

Variables that hold addresses of variables or functions should be of type .u8 or .u32 or

.u64.

Type .u8 is allowed only if the mask() operator is used.

Initializers are allowed for all types except .f16, .f16x2 and .pred.

Examples

.global .s32 n = 10;

.global .f32 blur_kernel[][3]

= {{.05,.1,.05},{.1,.4,.1},{.05,.1,.05}};

.global .u32 foo[] = { 2, 3, 5, 7, 9, 11 };

.global .u64 ptr = generic(foo); // generic address of foo[0]

.global .u64 ptr = generic(foo)+8; // generic address of foo[2]

5.4.5. Alignment

Byte alignment of storage for all addressable variables can be specified in the variable

declaration. Alignment is specified using an optional .alignbyte-count specifier immediately

following the state-space specifier. The variable will be aligned to an address which is an integer

multiple of byte-count. The alignment value byte-count must be a power of two. For arrays, alignment

specifies the address alignment for the starting address of the entire array, not for individual

elements.

The default alignment for scalar and array variables is to a multiple of the base-type size. The default alignment for vector variables is to a multiple of the overall vector size.

Examples

// allocate array at 4-byte aligned address. Elements are bytes.

.const .align 4 .b8 bar[8] = {0,0,0,0,2,0,0,0};

Note that all PTX instructions that access memory require that the address be aligned to a multiple

of the access size. The access size of a memory instruction is the total number of bytes accessed in

memory. For example, the access size of ld.v4.b32 is 16 bytes, while the access size of

atom.f16x2 is 4 bytes.

5.4.6. Parameterized Variable Names

Since PTX supports virtual registers, it is quite common for a compiler frontend to generate a large number of register names. Rather than require explicit declaration of every name, PTX supports a syntax for creating a set of variables having a common prefix string appended with integer suffixes.

For example, suppose a program uses a large number, say one hundred, of .b32 variables, named

%r0, %r1, …, %r99. These 100 register variables can be declared as follows:

.reg .b32 %r<100>; // declare %r0, %r1, ..., %r99

This shorthand syntax may be used with any of the fundamental types and with any state space, and may be preceded by an alignment specifier. Array variables cannot be declared this way, nor are initializers permitted.

5.4.7. Variable Attributes

Variables may be declared with an optional .attribute directive which allows specifying special

attributes of variables. Keyword .attribute is followed by attribute specification inside

parenthesis. Multiple attributes are separated by comma.

Variable and Function Attribute Directive: .attribute describes the .attribute

directive.

5.4.8. Variable and Function Attribute Directive: .attribute

.attribute

Variable and function attributes

Description

Used to specify special attributes of a variable or a function.

The following attributes are supported.

.managed-

.managedattribute specifies that variable will be allocated at a location in unified virtual memory environment where host and other devices in the system can reference the variable directly. This attribute can only be used with variables in .global state space. See the CUDA UVM-Lite Programming Guide for details. .unified-

.unifiedattribute specifies that function has the same memory address on the host and on other devices in the system. Integer constantsuuid1anduuid2respectively specify upper and lower 64 bits of the unique identifier associated with the function or the variable. This attribute can only be used on device functions or on variables in the.globalstate space. Variables with.unifiedattribute are read-only and must be loaded by specifying.unifiedqualifier on the address operand ofldinstruction, otherwise the behavior is undefined.

PTX ISA Notes

Introduced in PTX ISA version 4.0.

Support for function attributes introduced in PTX ISA version 8.0.

Target ISA Notes

.managedattribute requiressm_30or higher..unifiedattribute requiressm_90or higher.

Examples

.global .attribute(.managed) .s32 g;

.global .attribute(.managed) .u64 x;

.global .attribute(.unified(19,95)) .f32 f;

.func .attribute(.unified(0xAB, 0xCD)) bar() { ... }

5.5. Tensors

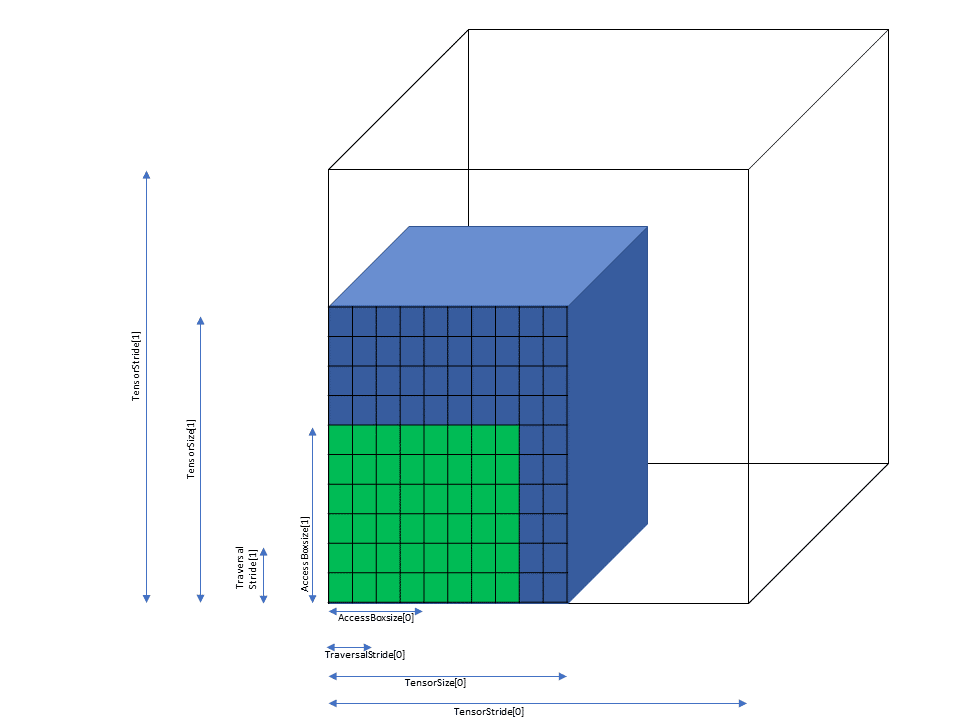

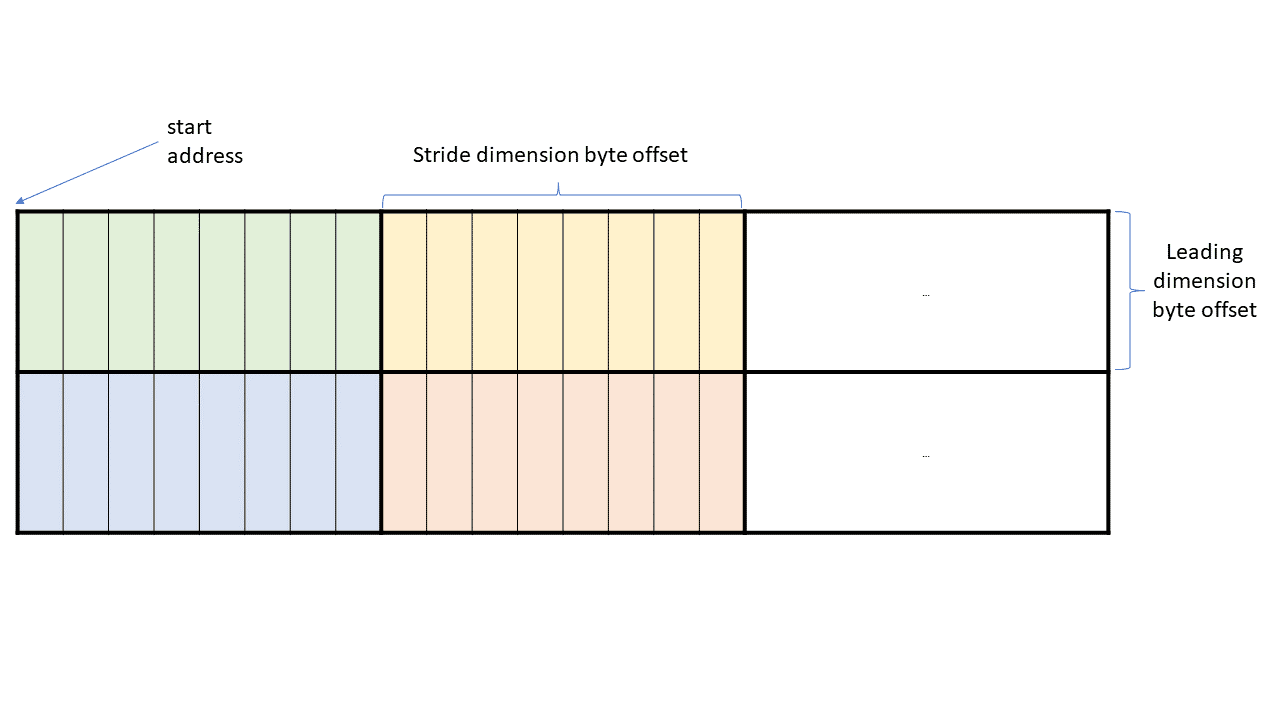

A tensor is a multi-dimensional matrix structure in the memory. Tensor is defined by the following properties:

Dimensionality

Dimension sizes across each dimension

Individual element types

Tensor stride across each dimension

PTX supports instructions which can operate on the tensor data. PTX Tensor instructions include:

Copying data between global and shared memories

Reducing the destination tensor data with the source.

The Tensor data can be operated on by various wmma.mma, mma and wgmma.mma_async

instructions.

PTX Tensor instructions treat the tensor data in the global memory as a multi-dimensional structure and treat the data in the shared memory as a linear data.