2. Usage#

2.1. CUPTI Compatibility and Requirements#

CUPTI, the CUDA Profiling Tools Interface, ensures seamless profiling compatibility for CUDA applications across various GPU architectures and CUDA driver versions. As part of the CUDA Toolkit, CUPTI adheres to CUDA Toolkit compatibility requirements with CUDA drivers, which includes support for Backward, Forward and Enhanced compatibilities. For instance, a profiling tool based on an older version of CUPTI can still operate with a more recent CUDA driver.

It’s essential to refer to the CUDA Toolkit and Corresponding Driver Versions table in the CUDA Toolkit Release Notes to determine the minimum CUDA driver version required for each release of CUPTI corresponding to a CUDA Toolkit release. Attempting to use CUPTI calls with an incompatible CUDA driver version will result in a CUPTI_ERROR_NOT_INITIALIZED error code.

2.2. CUPTI Initialization#

CUPTI initialization occurs lazily the first time you invoke any CUPTI function. For the Activity and Callback APIs, there are no requirements on when this initialization must occur (i.e., you can invoke the first CUPTI function at any point). See the CUPTI Activity API section for more information on CUPTI initialization requirements for the Activity API.

It is recommended that CUPTI clients call the subscription API, cuptiSubscribe(), before starting the profiling session. This means the API should be invoked prior to any other CUPTI calls. If another CUPTI client is already subscribed, cuptiSubscribe() will return the error code CUPTI_ERROR_MULTIPLE_SUBSCRIBERS_NOT_SUPPORTED. In this case, the CUPTI client should make no further CUPTI calls and handle the error appropriately. This ensures that only one CUPTI client is active at a time, preventing clients from interfering with each other’s profiling state.

The cuptiSubscribe_v2() API version offers improved handling, allowing for easier identification of the previous subscriber if the current subscription fails.

2.3. CUPTI Activity API#

The CUPTI Activity API allows you to asynchronously collect a trace of an application’s CPU and GPU CUDA activity. This API is provided in the header file cupti_activity.h. The following terminology is used by the activity API.

- Activity Record

CPU and GPU activity is reported in C data structures called activity records. There is a different C structure type for each activity kind (e.g. CUpti_ActivityAPI). Records are generically referred to using the CUpti_Activity type. This type contains only a field that indicates the kind of the activity record. Using this kind, the object can be cast from the generic CUpti_Activity type to the specific type representing the activity. See the printActivity function in the activity_trace_async sample for an example.

- Activity Buffer

An activity buffer is used to transfer one or more activity records from CUPTI to the client. CUPTI fills activity buffers with activity records as the corresponding activities occur on the CPU and GPU. However, CUPTI does not guarantee any ordering of the activities in the activity buffer, because activity records for a few activity kinds are added lazily. The CUPTI client is responsible for providing empty activity buffers as necessary to ensure that no records are dropped.

An asynchronous buffering API is implemented by cuptiActivityRegisterCallbacks and cuptiActivityFlushAll.

It is not required that the activity API be initialized before CUDA initialization. All related activities occurring after initializing the activity API are collected. You can force initialization of the activity API by enabling one or more activity kinds using cuptiActivityEnable or cuptiActivityEnableContext, as shown in the initTrace function of the activity_trace_async sample. Some activity kinds cannot be directly enabled; see the API documentation for CUpti_ActivityKind for details. The functions cuptiActivityEnable and cuptiActivityEnableContext will return CUPTI_ERROR_NOT_COMPATIBLE if the requested activity kind cannot be enabled.

The activity buffer API uses callbacks to request and return buffers of activity records. To use the asynchronous buffering API, you must first register two callbacks using cuptiActivityRegisterCallbacks. One of these callbacks will be invoked whenever CUPTI needs an empty activity buffer. The other callback is used to deliver a buffer containing one or more activity records to the client. To minimize profiling overhead, the client should return as quickly as possible from these callbacks. The client can pre-allocate a pool of activity buffers and return an empty buffer from the pool when requested by CUPTI. The activity buffer size should be chosen carefully: smaller buffers can result in frequent requests by CUPTI, and bigger buffers can delay the automatic delivery of completed activity buffers. For typical workloads, it’s suggested to choose a size between 1 and 10 MB.
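The pre-allocated pool described above could look roughly like the following. This is a minimal sketch that deliberately does not call CUPTI: the names BufferPool, pool_init, pool_acquire, pool_release, and pool_destroy are hypothetical, the pool depth of 4 is arbitrary, and 2 MB is just one value inside the suggested 1 to 10 MB range. In a real client, the buffer-requested callback would hand out a buffer via something like pool_acquire, and the buffer-completed callback would return it via pool_release after consuming the records. The sketch is not thread-safe; a real pool shared across threads would need locking.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

#define POOL_BUFFER_COUNT 4                        /* hypothetical pool depth */
#define POOL_BUFFER_SIZE  ((size_t)2 * 1024 * 1024) /* 2 MB per buffer */

typedef struct {
    uint8_t *buffers[POOL_BUFFER_COUNT];
    int      in_use[POOL_BUFFER_COUNT];
} BufferPool;

/* Allocate every buffer up front. Returns 0 on success, -1 on failure. */
static int pool_init(BufferPool *pool)
{
    for (int i = 0; i < POOL_BUFFER_COUNT; ++i) {
        pool->buffers[i] = malloc(POOL_BUFFER_SIZE);
        pool->in_use[i] = 0;
        if (pool->buffers[i] == NULL) {
            /* Roll back the buffers allocated so far. */
            while (--i >= 0) {
                free(pool->buffers[i]);
                pool->buffers[i] = NULL;
            }
            return -1;
        }
    }
    return 0;
}

/* Hand out the first free buffer; returns NULL when the pool is exhausted. */
static uint8_t *pool_acquire(BufferPool *pool, size_t *size)
{
    for (int i = 0; i < POOL_BUFFER_COUNT; ++i) {
        if (!pool->in_use[i]) {
            pool->in_use[i] = 1;
            *size = POOL_BUFFER_SIZE;
            return pool->buffers[i];
        }
    }
    *size = 0;
    return NULL;
}

/* Mark a previously acquired buffer as free again. */
static void pool_release(BufferPool *pool, const uint8_t *buffer)
{
    for (int i = 0; i < POOL_BUFFER_COUNT; ++i) {
        if (pool->buffers[i] == buffer) {
            assert(pool->in_use[i]);
            pool->in_use[i] = 0;
            return;
        }
    }
}

/* Free all buffers owned by the pool. */
static void pool_destroy(BufferPool *pool)
{
    for (int i = 0; i < POOL_BUFFER_COUNT; ++i) {
        free(pool->buffers[i]);
        pool->buffers[i] = NULL;
        pool->in_use[i] = 0;
    }
}
```

Because both operations only scan a small fixed array, they return quickly, which matches the advice to keep the callbacks short.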

By default, CUPTI requests activity buffers separately for each thread that generates activity records. The per-thread activity buffer request strategy is especially beneficial for multi-threaded applications, as it avoids contention when multiple threads are generating activities concurrently and improves the runtime performance of the application. This can slightly increase the memory footprint. Alternatively, the client can configure CUPTI to request activity buffers at the application level. In this mode, a single shared set of buffers is used across all threads in the application. This can help reduce memory usage, though it may introduce contention when multiple threads are generating activities concurrently.

The functions cuptiActivityGetAttribute and cuptiActivitySetAttribute can be used to read and write attributes that control how the buffering API behaves. See the API documentation for more information.

Flushing of the activity buffers

CUPTI is expected to deliver an activity buffer automatically as soon as it is full and all the activity records in it are completed. For performance reasons, CUPTI calls the underlying methods based on certain heuristics, which can delay the delivery of the buffer. However, the client can request delivery of the activity buffers at any time using the APIs cuptiActivityFlushAll and cuptiActivityFlushPeriod. The behavior of these APIs is as follows:

- For an on-demand flush using cuptiActivityFlushAll with the flag set to 0, CUPTI returns all activity buffers in which every activity record is completed; the buffers need not be full. It does not return buffers that have one or more incomplete records. This flush can be done at a regular interval in a separate thread.

- For an on-demand forced flush using cuptiActivityFlushAll with the flag set to CUPTI_ACTIVITY_FLAG_FLUSH_FORCED, CUPTI returns all activity buffers, including those that have one or more incomplete activity records. It’s suggested to do the forced flush before the termination of the profiling session so that the remaining buffers are delivered.

- For a periodic flush using cuptiActivityFlushPeriod, CUPTI returns only those activity buffers that are full and have all activity records completed. cuptiActivityFlushAll can still be used to flush the buffers on demand, even when the client sets a periodic flush.

Note that an activity record is considered completed once all of its information, including the timestamps (if any), has been filled in.
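The three flush behaviors can be summarized as a small decision function. The sketch below is only a model of the rules stated above, not CUPTI code: FlushMode, BufferState, and buffer_is_delivered are hypothetical names, and the model ignores details such as empty buffers.

```c
#include <assert.h>
#include <stdbool.h>

typedef enum {
    FLUSH_ON_DEMAND, /* models cuptiActivityFlushAll with flag 0 */
    FLUSH_FORCED,    /* models cuptiActivityFlushAll with CUPTI_ACTIVITY_FLAG_FLUSH_FORCED */
    FLUSH_PERIODIC   /* models cuptiActivityFlushPeriod */
} FlushMode;

typedef struct {
    bool is_full;              /* no room left for further records */
    bool all_records_complete; /* every record has all of its information filled in */
} BufferState;

/* Returns true if a buffer in the given state would be handed to the client. */
static bool buffer_is_delivered(FlushMode mode, BufferState state)
{
    switch (mode) {
    case FLUSH_ON_DEMAND:
        return state.all_records_complete;                  /* need not be full */
    case FLUSH_FORCED:
        return true;                                        /* incomplete records included */
    case FLUSH_PERIODIC:
        return state.is_full && state.all_records_complete; /* full and complete only */
    }
    assert(!"unknown flush mode");
    return false;
}
```

For example, a half-filled buffer whose records are all completed is delivered by an on-demand flush but not by a periodic flush.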

The activity_trace_async sample shows how to use the activity buffer API to collect a trace of CPU and GPU activity for a simple application.

CUPTI Threads

CUPTI creates a worker thread to minimize the perturbation of the application-created threads. CUPTI offloads certain operations from the application threads to the worker thread. This includes, among other things, synchronization of profiling resources between host and device and delivery of the activity buffers to the client using the buffer-completed callback registered through cuptiActivityRegisterCallbacks. To minimize overhead, CUPTI wakes up the worker thread based on certain heuristics. The API cuptiActivityFlushPeriod, introduced in CUDA 11.1, can be used to control the flush period of the worker thread. This setting overrides the CUPTI heuristics. cuptiActivityFlushAll can still be used to flush the data on demand, even when the client sets a periodic flush.

Further, CUPTI creates separate threads when certain activity kinds are enabled. For example, CUPTI creates one thread each for activity kinds CUPTI_ACTIVITY_KIND_UNIFIED_MEMORY_COUNTER and CUPTI_ACTIVITY_KIND_ENVIRONMENT to collect the information from the backend.

Kernel Name Demangling

CUPTI reports kernel names in mangled form in both activity records and callbacks. To obtain human-readable names, use cu++filt (included with the NVIDIA CUDA Toolkit) to demangle CUDA C++ symbols, or link your application with the cu++filt static library (libcufilt) to demangle in process.

Correlation ID

In CUPTI, a correlation ID is an integer value that links a CUDA API call (e.g., cuLaunchKernel, cudaMemcpy, cudaMemset) to the GPU activity records generated by that call.

Core idea

Every CUDA driver/runtime API invocation that CUPTI records is assigned a unique correlation ID.

The activity records produced as a result of that API call (kernel executions, memory copies, memsets etc.) carry the same correlation ID, so they can all be associated back to the originating CUDA API call.

The correlationId field is present in multiple CUPTI data structures:

- CUDA API records: CUpti_ActivityAPI has correlationId for the CUDA driver/runtime function call.

- GPU activity records: records such as CUpti_ActivityKernel* (kernels), CUpti_ActivityMemcpy* (memory copies), and CUpti_ActivityMemset* (memsets) include a correlationId that matches the originating CUDA API call.

- Callback data structures: CUpti_CallbackData exposes the same ID, allowing callback-based tools to correlate callbacks with activity records.

Typical uses of the correlation ID:

- API-to-Activity Mapping: Build mappings from CUDA API calls (CPU side) to GPU activities (kernels, memcpys, memsets) by matching the shared correlationId.

- External Correlation: When external correlation is enabled (OpenACC, OpenMP, MPI, application phases), external IDs are further mapped to CUPTI correlation IDs so you can relate high-level phases to lower-level CUDA work.

Note

For CUPTI to generate correlationId values, the CUDA API activity kinds i.e. CUPTI_ACTIVITY_KIND_RUNTIME and/or CUPTI_ACTIVITY_KIND_DRIVER must be enabled.
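The API-to-activity mapping can be illustrated with a small lookup. The sketch below assumes the client has already copied the relevant fields out of the CUPTI records into its own simplified structures; ApiRecord, GpuRecord, and find_api_record are hypothetical names, and a linear search stands in for whatever index a real tool would build.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Simplified stand-in for the fields a tool might keep from a CUDA API record. */
typedef struct {
    uint32_t    correlation_id;
    const char *api_name;
} ApiRecord;

/* Simplified stand-in for a kernel, memcpy, or memset record. */
typedef struct {
    uint32_t    correlation_id;
    const char *activity_name;
} GpuRecord;

/* Find the API call that produced a GPU activity by matching correlation IDs.
 * Returns NULL when no API record with that ID has been collected. */
static const ApiRecord *find_api_record(const ApiRecord *apis, size_t count,
                                        uint32_t correlation_id)
{
    assert(apis != NULL || count == 0);
    for (size_t i = 0; i < count; ++i) {
        if (apis[i].correlation_id == correlation_id) {
            return &apis[i];
        }
    }
    return NULL;
}
```

A tool that processes many records would more likely use a hash map keyed by correlation ID; the linear scan is kept only to make the matching rule obvious.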

2.3.1. NVLink#

NVIDIA NVLink is a high-bandwidth, energy-efficient interconnect that enables fast communication between the CPU and GPU, and between GPUs. CUPTI provides NVLink topology information and NVLink transmit/receive throughput metrics.

The activity record CUpti_ActivityNVLink5, enabled using the activity kind CUPTI_ACTIVITY_KIND_NVLINK, outputs NVLink topology information in terms of logical NVLinks. A logical NVLink connects two devices, which can be either CPUs or GPUs, referred to as NVLink Processing Units (NPUs). The physicalNvLinkCount field indicates the number of physical links in a logical link. The fields portDev0 and portDev1 provide information about the slots where physical NVLinks are connected for a logical link. This port corresponds to the instance of NVLink metrics profiled from a device. Therefore, port and instance information should be used to correlate per-instance metric values with the physical NVLinks and, in turn, with the topology. The flag field describes the properties of a logical link, such as access to system memory or peer device memory, and the capability to perform system memory or peer memory atomics. The bandwidth field indicates the bandwidth of the logical link in kilobytes per second.

The number of links and total bandwidth depend on the specific GPU model and NVLink generation, with newer architectures offering more connections and higher bandwidth for improved multi-GPU communication.

CUPTI provides various metrics for each physical link, including data transmitted/received, transmit/receive throughput, and header versus user data overhead for each physical NVLink. These metrics are also provided per packet type (read/write/atomics/response) to offer more detailed insights into NVLink traffic.

The nvlink_bandwidth sample demonstrates how to use these APIs to collect NVLink metrics and topology information, as well as how to correlate metrics with the topology.

2.3.2. OpenACC#

CUPTI supports collecting information for OpenACC applications using the OpenACC tools interface implementation of the PGI runtime. OpenACC profiling is available only on Linux x86_64, IBM POWER, and Arm server (arm64 SBSA) platforms. This feature also requires PGI runtime version 19.1 or higher.

The activity records CUpti_ActivityOpenAccData, CUpti_ActivityOpenAccLaunch, and CUpti_ActivityOpenAccOther are created, representing the three groups of callback events specified in the OpenACC tools interface. CUPTI_ACTIVITY_KIND_OPENACC_DATA, CUPTI_ACTIVITY_KIND_OPENACC_LAUNCH, and CUPTI_ACTIVITY_KIND_OPENACC_OTHER can be enabled to collect the respective activity records.

Due to the restrictions of the OpenACC tools interface, CUPTI cannot record OpenACC records from within the client application. Instead, a shared library that exports the acc_register_library function defined in the OpenACC tools interface specification must be implemented. Parameters passed into this function from the OpenACC runtime can be used to initialize the CUPTI OpenACC measurement using cuptiOpenACCInitialize. Before starting the client application, the environment variable ACC_PROFLIB must be set to point to this shared library.

cuptiOpenACCInitialize is defined in cupti_openacc.h, which is included by cupti_activity.h. Since the CUPTI OpenACC header is only available on supported platforms, CUPTI clients must define CUPTI_OPENACC_SUPPORT when compiling.

The openacc_trace sample shows how to use CUPTI APIs for OpenACC data collection.

2.3.3. CUDA Graphs#

CUPTI can collect a trace of CUDA Graphs applications without breaking driver performance optimizations. The kernel, memcpy, and memset activity records contain the fields graphId and graphNodeId, which denote the unique ID of the graph and of the graph node, respectively, for the GPU activity. CUPTI issues callbacks for graph operations such as graph and graph node creation, destruction, and cloning, and also for executable graph creation and destruction. The cuda_graphs_trace sample shows how to collect a GPU trace and an API trace for CUDA Graphs and how to correlate a graph node launch to the node creation API by using CUPTI callbacks for graph operations.

2.3.4. External Correlation#

CUPTI supports correlation of CUDA API activity records with external APIs. Such APIs include OpenACC, OpenMP, and MPI. This associates CUPTI correlation IDs with IDs provided by the external API. Both IDs are stored in a new activity record of type CUpti_ActivityExternalCorrelation.

CUPTI maintains a stack of external correlation IDs per CPU thread and per CUpti_ExternalCorrelationKind. Clients must use cuptiActivityPushExternalCorrelationId to push an external ID of a specific kind to this stack and cuptiActivityPopExternalCorrelationId to remove the latest ID. If a CUDA API activity record is generated while any CUpti_ExternalCorrelationKind-stack on the same CPU thread is non-empty, one CUpti_ActivityExternalCorrelation record per CUpti_ExternalCorrelationKind-stack is inserted into the activity buffer before the respective CUDA API activity record. The CUPTI client is responsible for tracking passed external API correlation IDs, in order to eventually associate external API calls with CUDA API calls. Along with the activity kind CUPTI_ACTIVITY_KIND_EXTERNAL_CORRELATION, it is necessary to enable the CUDA API activity kinds i.e. CUPTI_ACTIVITY_KIND_RUNTIME and CUPTI_ACTIVITY_KIND_DRIVER to generate external correlation activity records.
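The per-thread, per-kind stack behavior can be modeled in a few lines. The sketch below is a conceptual model rather than CUPTI code: ExternalStacks, ExternalCorrelation, and the stacks_* functions are hypothetical, the kind count and maximum depth are arbitrary, and it assumes that the ID reported for each kind is the one currently on top of that kind's stack.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define KIND_COUNT 3  /* hypothetical number of external correlation kinds */
#define MAX_DEPTH  16 /* hypothetical maximum nesting depth per kind */

/* One instance of this structure models the stacks of a single CPU thread. */
typedef struct {
    uint64_t ids[KIND_COUNT][MAX_DEPTH];
    size_t   depth[KIND_COUNT];
} ExternalStacks;

typedef struct {
    int      external_kind;
    uint64_t external_id;
    uint32_t correlation_id; /* ID of the CUDA API record that follows */
} ExternalCorrelation;

/* Push an external ID onto the stack of the given kind. Returns -1 if full. */
static int stacks_push(ExternalStacks *s, int kind, uint64_t id)
{
    assert(kind >= 0 && kind < KIND_COUNT);
    if (s->depth[kind] == MAX_DEPTH) {
        return -1;
    }
    s->ids[kind][s->depth[kind]++] = id;
    return 0;
}

/* Pop the latest external ID of the given kind. Returns -1 if the stack is empty. */
static int stacks_pop(ExternalStacks *s, int kind, uint64_t *last_id)
{
    assert(kind >= 0 && kind < KIND_COUNT);
    if (s->depth[kind] == 0) {
        return -1;
    }
    *last_id = s->ids[kind][--s->depth[kind]];
    return 0;
}

/* For one CUDA API record, emit one entry per non-empty stack.
 * Returns the number of entries written to out. */
static size_t stacks_correlate(const ExternalStacks *s, uint32_t correlation_id,
                               ExternalCorrelation out[KIND_COUNT])
{
    size_t written = 0;
    for (int kind = 0; kind < KIND_COUNT; ++kind) {
        if (s->depth[kind] == 0) {
            continue;
        }
        out[written].external_kind = kind;
        out[written].external_id = s->ids[kind][s->depth[kind] - 1];
        out[written].correlation_id = correlation_id;
        ++written;
    }
    return written;
}
```

The model makes it clear why the client has to remember which external IDs it pushed: the emitted entries only pair an external ID with a CUPTI correlation ID, and resolving the external ID back to an external API call is left to the tool.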

If both CUPTI_ACTIVITY_KIND_EXTERNAL_CORRELATION and any of the CUPTI_ACTIVITY_KIND_OPENACC_* activity kinds are enabled, CUPTI will generate external correlation activity records for OpenACC with externalKind CUPTI_EXTERNAL_CORRELATION_KIND_OPENACC.

The cupti_external_correlation sample shows how to use CUPTI APIs for external correlation.

2.3.5. Dynamic Attach and Detach#

CUPTI provides mechanisms for attaching to or detaching from a running process to support on-demand profiling. Because CUPTI supports lazy initialization, it can be attached by calling any CUPTI API. To detach CUPTI, call the API cuptiFinalize(), which destroys and cleans up all the resources associated with CUPTI in the current process. After CUPTI detaches from the process, the process keeps running with no CUPTI attached to it. Any subsequent CUPTI API call reinitializes CUPTI. You can attach and detach CUPTI any number of times.

For safe operation, it is recommended that cuptiFinalize() be invoked from the exit call site of a CUDA Driver or Runtime API. Otherwise, the CUPTI client needs to make sure that CUDA synchronization and a CUPTI activity buffer flush are done before calling cuptiFinalize().

To understand the need for calling cuptiFinalize() from specific points in the code flow, consider multiple application threads performing various CUDA activities. While one thread is in the middle of cuptiFinalize(), other threads may continue to call into CUPTI and try to access the state of various objects (device, context, thread state, etc.) maintained by CUPTI, which might be rendered invalid as part of cuptiFinalize(), resulting in a crash. The other threads therefore have to be blocked until CUPTI teardown is completed via cuptiFinalize(). The API exit call site is one location where it can be ensured that the work submitted by all the threads has completed and CUPTI can be torn down safely.

cuptiFinalize() is a heavy operation, as it performs context synchronization for all active CUDA contexts and blocks all the application threads until CUPTI teardown is completed. Sample code showing the usage of cuptiFinalize() in the CUPTI callback handler:

    void CUPTIAPI
    cuptiCallbackHandler(void *userdata, CUpti_CallbackDomain domain,
                         CUpti_CallbackId cbid, void *cbdata)
    {
        const CUpti_CallbackData *cbInfo = (CUpti_CallbackData *)cbdata;

        // Take this code path when CUPTI detach is requested
        if (detachCupti) {
            switch (domain)
            {
            case CUPTI_CB_DOMAIN_RUNTIME_API:
            case CUPTI_CB_DOMAIN_DRIVER_API:
                if (cbInfo->callbackSite == CUPTI_API_EXIT) {
                    // call the CUPTI detach API
                    cuptiFinalize();
                }
                break;
            default:
                break;
            }
        }
    }

Full code can be found in the sample cupti_finalize.

2.3.6. Device Memory Allocation Source Tracking#

CUDA applications use various shared libraries such as cuBLAS, cuFFT, and cuDNN, each serving a distinct purpose. These libraries can be integrated either statically at compile time or loaded dynamically at runtime. In the case of dynamic loading, CUPTI enables precise attribution of memory allocations to their respective shared libraries. This can be achieved by calling the cuptiActivityEnableAllocationSource() API. The file path of the responsible shared object is stored in the source field of the activity record CUpti_ActivityMemory4, which is enabled using the activity kind CUPTI_ACTIVITY_KIND_MEMORY2. This functionality is currently exclusive to the Linux x86_64 platform. However, if the library is statically linked, the source is identified as the main application executable rather than the library.

2.3.7. Hardware Event System (HES)#

The NVIDIA Blackwell architecture introduces a new hardware method known as the Hardware Event System (HES) for collecting CUDA kernel timestamps. This method provides more accurate timestamps and is expected to add minimal launch overhead compared to traditional software instrumentation and semaphore-based approaches used in CUPTI. The overhead is significantly reduced for CUDA graphs in per-node trace.

This is an opt-in feature in CUPTI. The CUPTI API cuptiActivityEnableHWTrace enables the collection of kernel timestamps through hardware events.

This API must be called after CUDA driver initialization but before the CUDA context is created.

As a generic solution, this API can be called from the CUPTI_CBID_RESOURCE_CU_INIT_FINISHED callback.

CUPTI depends on the Perfworks library to support this feature. This library is shipped as libnvperf_host.so on Linux and nvperf_host.dll on Windows, in the same directory as CUPTI, when the user installs the CUDA Toolkit.

This feature currently has certain limitations:

- It is not supported on MPS, WSL, MCDM, MIG, vGPU, or confidential compute setups.

- It is supported on Linux x86_64, Linux aarch64 SBSA desktop and Windows x86_64 platforms only.

- It does not support late attach functionality. CUPTI should be attached to the application from the start.

- Once enabled, HES-based tracing cannot be disabled and remains active throughout the profiling session.

- This API is not compatible with cuptiActivityEnableLatencyTimestamps.

The activity_trace_async sample has an option --enableHwTrace to use HES.

2.3.8. Compute Engine Context Switch#

CUPTI can trace GPU compute engine context switches, providing insight into how these events affect application performance. Users can retrieve information such as timestamps, operation types (start/end), and associated CUDA context IDs, which helps them understand context switch frequency and identify processes contributing to frequent context switching.

The information is reported in the CUpti_ActivityComputeEngineCtxSwitch activity record, enabled with CUPTI_ACTIVITY_KIND_COMPUTE_ENGINE_CTX_SWITCH. To identify the process associated with a switch, look up the contextId from CUpti_ActivityComputeEngineCtxSwitch in the corresponding CUpti_ActivityContext record and check its processId.

Note that context switch events are not supported on MIG, vGPU or QNX setups.

2.4. Multiple Subscribers#

Note

Beta Release: The CUPTI multiple subscribers feature (introduced in CUDA 13.2) is currently in beta. APIs and behavior may change in future releases.

2.4.1. Overview#

CUPTI supports multiple subscribers to trace CUDA activities simultaneously. This feature enables scenarios where multiple CUPTI-based tracing tools need to trace the same application at the same time, with each tool maintaining its own independent activity buffers and callbacks.

When multiple subscribers are enabled, each subscriber:

- Has its own set of activity buffers (managed via per-subscriber buffer callbacks)

- Can have different buffer sizes and callback configurations

- Can independently enable/disable activity kinds

2.4.2. Enabling Multiple Subscribers#

To enable multiple subscribers, all subscribers must use newly added V2 APIs instead of the unversioned (V1) APIs. The required V2 APIs are:

cuptiSubscribe_v2, cuptiActivityEnable_v2, cuptiActivityDisable_v2, cuptiActivityEnableAndDump_v2, cuptiActivityGetAttribute_v2, cuptiActivitySetAttribute_v2, and cuptiActivityRegisterCallbacks_v2.

Important: The first subscriber that calls cuptiSubscribe_v2 must set allowMultipleSubscribers in the CUpti_SubscriberParams structure to 1. This setting is global and applies to all subsequent subscribers. Once it is set, subsequent cuptiSubscribe_v2 calls that pass the same allowMultipleSubscribers value will succeed without returning CUPTI_ERROR_MULTIPLE_SUBSCRIBERS_NOT_SUPPORTED.

To check whether multiple subscribers mode has already been enabled by a previous subscriber, use cuptiActivityGetAttribute_v2 with the CUPTI_ACTIVITY_ATTR_MULTIPLE_SUBSCRIBER_STATE attribute.

2.4.3. Per-Subscriber Activity Management#

Use the following V2 APIs to manage activity tracing for each subscriber independently:

- cuptiActivityEnable_v2 and cuptiActivityDisable_v2: Enable and disable specific activity kinds for a subscriber. Each subscriber can enable different activity kinds.

- cuptiActivityGetAttribute_v2 and cuptiActivitySetAttribute_v2: Get and set attributes for a specific subscriber. Note that some attributes are enforced at the subscriber level while others are global; refer to the documentation of each attribute for details.

- cuptiActivityRegisterCallbacks_v2: Register buffer request and completion callbacks for a specific subscriber. Each subscriber receives its own activity buffers through its registered callbacks.

Important: All tools must call cuptiSubscribe_v2 before calling any other CUPTI APIs.

2.4.4. Limitations and Restrictions#

- V1/V2 API Mixing: Do not mix V1 APIs that have corresponding V2 versions (e.g., cuptiActivityEnable, cuptiActivityRegisterCallbacks) with V2 APIs (e.g., cuptiActivityEnable_v2, cuptiActivityRegisterCallbacks_v2). Mixing these APIs can lead to undefined behavior.

- First Subscriber Setting: The allowMultipleSubscribers parameter is determined by the first subscriber and cannot be changed by subsequent subscribers. All subscribers must use the same setting.

- Static Linking: Currently, this feature is only supported for the dynamically linked CUPTI library. Static linking will be supported in future CUDA releases.

- Selected Activity Kinds: The current beta version provides all subscribers with the union of activity kinds enabled across all subscribers. In future releases, each subscriber will be able to receive records for its own set of activity kinds.

- Supports only CUPTI subscribers: This feature is only supported for multiple CUPTI subscribers from one or more CUPTI-based tools. CUPTI still does not support tracing or profiling along with other NVIDIA Developer Tools like cuda-gdb or Compute Sanitizer.

For a complete example, see the multiple_subscribers_trace sample.

2.5. CUPTI User-Defined Activity Records#

2.5.1. Overview#

Note

Beta Release: The CUPTI user-defined activity records feature is currently in beta. APIs and behavior may change in future releases.

The CUPTI user-defined activity records feature allows users to customize activity records by selecting specific fields instead of collecting complete predefined activity records. This provides memory efficiency through reduced data collection and flexibility for application-specific profiling needs.

Minimum CUPTI Version: CUPTI_API_VERSION 130200 (CUDA 13.2). The CUPTI version can be queried using cuptiGetVersion() API.

Challenges with Predefined Records:

Traditional fixed-field records like CUpti_ActivityKernel9 and CUpti_ActivityAPI present several challenges:

- Inflexible layouts: Fixed-field records cannot be tailored to specific user needs

- Memory waste: Unused fields consume memory and include padding for record alignment

- Performance impact: Larger records with unused fields affect data access speeds

- Backward compatibility issues: Adding new fields or removing obsolete fields can break existing code

- Difficult customization: Users cannot define their own activity record format

Key Benefits of User-defined Records:

- Custom field selection: Users can choose only the fields necessary for their data collection; each user can define their own activity record format

- Optimized memory usage: No extra storage is required for unused fields, leading to efficient memory utilization

- Fast data access: Compact, structured buffers enhance performance by improving data access speeds

- Future-proof: New fields can be added without disrupting existing formats. For example, if fields are bumped from 32-bit to 64-bit, users can switch to the updated fields without facing compatibility issues or redundant space usage

- Lower overhead: Reduced data collection and processing costs

For a step-by-step tutorial on using this feature, see CUDA tracing with User-Defined Activity Records.

2.5.2. Pre-defined vs User-Defined Activity Records#

Pre-defined Activity Records:

Using cuptiActivityEnable(), users collect fixed records with all predefined fields. For example, CUpti_ActivityAPI record structure contains all fields regardless of specific needs.

User-Defined Activity Records:

Using cuptiActivityEnable_v2(), users select specific fields to collect.

For CUDA API tracing, each field in CUpti_ActivityAPI is mapped to an enum value in CUpti_ActivityApiFieldIds, allowing users to specify only the fields they need.

Field Selection and Mapping:

To enable field selection, CUPTI provides enum mappings for each supported activity kind.

These enums are defined in cupti_activity.h, where the data types of the enum values are documented in comments above each field.

Users can specify which fields they need through these enum values, and CUPTI will provide only those fields in the activity records.

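For example, for CUDA API tracing with CUpti_ActivityAPI, each field is mapped to an enum value:

| CUpti_ActivityAPI field | CUpti_ActivityApiFieldIds value |
|---|---|
| kind | API_FIELD_KIND |
| cbid | API_FIELD_CBID |
| start | API_FIELD_START |
| end | API_FIELD_END |
| threadId | API_FIELD_THREAD_ID |
| correlationId | API_FIELD_CORRELATION_ID |

Only the enum values referenced in this section are listed here; cupti_activity.h defines the values for the remaining fields.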

Important Data Type Changes:

While the majority of field names and data types remain the same as in predefined records, some fields have been updated for improved functionality:

- threadId: Changed from 32-bit (uint32_t) to 64-bit (uint64_t)

- correlationId: Changed from 32-bit (uint32_t) to 64-bit (uint64_t)

These changes ensure better compatibility with modern systems and provide sufficient range for these identifiers.

Memory Impact:

For CUDA API activity records, if you only need 6 fields instead of all 8:

- Standard approach: Collect all fields in the predefined record

- User-defined: Collect only selected fields (e.g., API_FIELD_KIND, API_FIELD_CBID, API_FIELD_START, API_FIELD_END, API_FIELD_THREAD_ID, API_FIELD_CORRELATION_ID)

2.5.3. Data Structures#

The following data structures are used for configuring and parsing user-defined activity records:

- CUpti_ActivityConfig: Configuration structure for enabling activity kinds with field selection

- CUpti_ActivityFieldSelection: Specifies which fields to include in activity records

- CUpti_BufferCallbackCompleteInfo: Provides record layouts in the buffer completed callback

- CUpti_ActivityRecordLayout: Describes the complete layout of a user-defined activity record

- CUpti_ActivityFieldLayoutEntry: Describes a single field’s layout inside a user-defined record

For detailed structure definitions and field descriptions, refer to the documentation in cupti_activity.h.

2.5.4. Usage#

User-defined activity records require using v2 APIs that support field selection and provide record layout information. See CUDA tracing with User-Defined Activity Records for complete step-by-step instructions with compilable examples.

Mandatory Requirements:

The following are mandatory to use user-defined activity records:

Subscribe to CUPTI - Call

cuptiSubscribe()orcuptiSubscribe_v2()before calling any other CUPTI APIs. UsingcuptiSubscribe_v2()is recommended:CUpti_SubscriberHandle subscriber; cuptiSubscribe_v2(&subscriber, NULL, NULL, NULL);

Enable the feature - Set

CUPTI_ACTIVITY_ATTR_USER_DEFINED_RECORDSattribute before enabling any activity kinds:size_t valueSize = sizeof(uint8_t); uint8_t value = 1; cuptiActivitySetAttribute(CUPTI_ACTIVITY_ATTR_USER_DEFINED_RECORDS, &valueSize, &value);

Use v2 callback registration:

CUptiResult cuptiActivityRegisterCallbacks_v2(CUpti_SubscriberHandle subscriber, CUpti_BufferCallbackRequestFunc_v2 funcBufferRequested, CUpti_BufferCallbackCompleteFunc_v2 funcBufferCompleted);

The

BufferCompletedcallback receivesCUpti_BufferCallbackCompleteInfocontaining record layouts for all enabled activity kinds through theppRecordLayoutsfield.Use v2 enable API with field selection:

CUptiResult cuptiActivityEnable_v2(CUpti_SubscriberHandle subscriber, CUpti_ActivityKind kind, CUpti_ActivityConfig *config);

Use v2 disable API when changing field selection:

CUptiResult cuptiActivityDisable_v2(CUpti_SubscriberHandle subscriber, CUpti_ActivityKind kind, CUpti_ActivityConfig *config);

Include KIND field first - The *_FIELD_KIND field must be the first field in any selection (e.g., API_FIELD_KIND, KERNEL_FIELD_KIND). Select only fields needed for your analysis to minimize memory usage.

Flush when changing field selection - To modify field selection for an already-enabled activity kind:

- Disable the activity kind using cuptiActivityDisable_v2()

- Call cuptiActivityFlushAll(1) (mandatory before re-enabling)

- Re-enable with new field selection using cuptiActivityEnable_v2()

Explicit enabling of dependent activity kinds - Some activity kinds that are implicitly enabled with predefined records must be explicitly enabled with user-defined records:

CUPTI_ACTIVITY_KIND_MEMCPY2 is implicitly enabled when enabling CUPTI_ACTIVITY_KIND_MEMCPY with cuptiActivityEnable(), but must be explicitly enabled with cuptiActivityEnable_v2() when using user-defined records

CUPTI_ACTIVITY_KIND_DEVICE_GRAPH_TRACE is implicitly enabled when enabling CUPTI_ACTIVITY_KIND_GRAPH_TRACE with cuptiActivityEnable(), but must be explicitly enabled with cuptiActivityEnable_v2() when using user-defined records

Without explicit enabling and field selection, CUPTI will not provide records for these kinds.

Parsing Record Layouts:

Record layouts are provided through the ppRecordLayouts field in CUpti_BufferCallbackCompleteInfo received in the CUpti_BufferCallbackCompleteFunc_v2 callback. Two parsing approaches are available:

Field-by-field parsing (recommended): Iterate through CUpti_ActivityRecordLayout.pEntries and extract fields using offset/size information. Handles alignment automatically.

Typecasting to custom struct: Define a struct matching your field selection and typecast the record pointer. Requires exact alignment matching.

See CUDA tracing with User-Defined Activity Records for complete parsing examples.
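To make the field-by-field approach concrete, the following is a minimal sketch that does not use the CUPTI headers. `FieldLayoutEntry`, its members, and `ReadField` are hypothetical stand-ins introduced for illustration; the real entry type is CUpti_ActivityFieldLayoutEntry in cupti_activity.h, and its member names may differ. The sketch also assumes a little-endian host and integer fields of at most 8 bytes.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical stand-in for one field's layout entry; the real
// CUpti_ActivityFieldLayoutEntry members are declared in cupti_activity.h.
struct FieldLayoutEntry {
    uint32_t fieldId;  // which field this entry describes
    size_t offset;     // byte offset of the field inside the record
    size_t size;       // size of the field in bytes
};

// Reads one integer field out of a raw record by looking up its layout entry.
// memcpy is used so that no alignment or packing assumptions are needed.
// Assumes a little-endian host: narrower fields land in the low bytes.
// Returns false if the field is absent, wider than 8 bytes, or out of bounds.
bool ReadField(const uint8_t *record, size_t recordSize,
               const std::vector<FieldLayoutEntry> &layout,
               uint32_t fieldId, uint64_t &valueOut)
{
    for (const FieldLayoutEntry &entry : layout) {
        if (entry.fieldId != fieldId) {
            continue;
        }
        if (entry.size > sizeof(uint64_t) || entry.offset > recordSize ||
            entry.size > recordSize - entry.offset) {
            return false;
        }
        valueOut = 0;
        std::memcpy(&valueOut, record + entry.offset, entry.size);
        return true;
    }
    return false;
}
```

Because every access goes through the offset and size reported in the layout, the same routine keeps working when the field selection changes, which is the main reason to prefer it over casting the record to a hand-written struct.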

Version Compatibility:

The structSize field in all structures (CUpti_ActivityConfig, CUpti_ActivityFieldSelection, CUpti_BufferCallbackRequestInfo, CUpti_BufferCallbackCompleteInfo, CUpti_ActivityRecordLayout, CUpti_ActivityFieldLayoutEntry) is used for CUPTI version compatibility. CUPTI fills this field to indicate structure version. When newer fields are introduced in future CUPTI versions, check structSize to determine which fields are valid and can be safely accessed. Users do not need to set structSize - CUPTI always fills this field.
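One common way to apply such a check is to compare structSize against the end offset of the member you want to read. The sketch below is an assumption about how a client might do this, not CUPTI code: `ExampleInfo` and `FIELD_IS_VALID` are hypothetical names, and the structure is not a real CUPTI type.

```cpp
#include <cstddef>
#include <cstdint>

// Hypothetical structure that grew across versions; not a real CUPTI type.
struct ExampleInfo {
    size_t structSize;   // filled by the producer with the size it knows about
    uint32_t kind;       // present since the first version
    uint64_t newerField; // added in a later version
};

// True when the producer's structSize covers the whole member, i.e. the
// member existed in the producer's version of the structure.
#define FIELD_IS_VALID(ptr, type, member) \
    ((ptr)->structSize >= offsetof(type, member) + sizeof(((type *)nullptr)->member))
```

A client built against newer headers would guard each access to a recently added member with a check of this kind, and fall back to a default when the check fails.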

2.5.5. Limitations#

Not all activity kinds support user-defined records. Unsupported kinds include:

CUPTI_ACTIVITY_KIND_ENVIRONMENT

CUPTI_ACTIVITY_KIND_OPENACC_DATA

CUPTI_ACTIVITY_KIND_OPENACC_LAUNCH

CUPTI_ACTIVITY_KIND_OPENACC_OTHER

CUPTI_ACTIVITY_KIND_NVLINK

CUPTI_ACTIVITY_KIND_PCIE

CUPTI_ACTIVITY_KIND_OPENMP

CUPTI_ACTIVITY_KIND_MEMORY

The following activity kinds are also not supported; CUPTI currently does not provide records for them:

CUPTI_ACTIVITY_KIND_CDP_KERNEL

CUPTI_ACTIVITY_KIND_PREEMPTION

CUPTI_ACTIVITY_KIND_MODULE

CUPTI_ACTIVITY_KIND_DEVICE_ATTRIBUTE

CUPTI_ACTIVITY_KIND_FUNCTION

Field selection cannot be modified after enabling an activity kind. To change field selection, the activity kind must be disabled, cuptiActivityFlushAll(1) must be called, and then the activity kind can be re-enabled with the new field selection. See CUDA tracing with User-Defined Activity Records for a complete example of changing field selection.

Calling cuptiActivityFlushAll(1) is only required when changing field selection. If the field selection remains the same when re-enabling an activity kind after disabling it, flushing is not required.

Pre-defined records cannot be used alongside user-defined records. Once user-defined records are enabled, all activity kinds must use cuptiActivityEnable_v2(). You cannot enable the same activity kind with both cuptiActivityEnable() and cuptiActivityEnable_v2().

A mixture of pre-defined and user-defined records for different activity kinds is not supported. You cannot enable some activity kinds with cuptiActivityEnable() and others with cuptiActivityEnable_v2().

2.5.6. Tutorial and Sample Code#

Tutorial:

For a comprehensive step-by-step guide on using user-defined records, see CUDA tracing with User-Defined Activity Records.

Sample Code:

Refer to sample cupti_user_defined_records for practical examples of using user-defined records in CUPTI.

Refer to the helper file helper_cupti_activity_user_defined_records.h (Location: $CUPTI_DIR/samples/common/) for reference code that parses user-defined activity records.

2.6. CUPTI Callback API#

The CUPTI Callback API allows you to register a callback into your own code. Your callback will be invoked when the application being profiled calls a CUDA runtime or driver function, or when certain events occur in the CUDA driver. This API is provided in the header file cupti_callbacks.h. The following terminology is used by the callback API.

- Callback Domain

Callbacks are grouped into domains to make it easier to associate your callback functions with groups of related CUDA functions or events. There are currently four callback domains, as defined by CUpti_CallbackDomain: a domain for CUDA runtime functions, a domain for CUDA driver functions, a domain for CUDA resource tracking, and a domain for CUDA synchronization notification.

- Callback ID

Each callback is given a unique ID within the corresponding callback domain so that you can identify it within your callback function. The CUDA driver API IDs are defined in cupti_driver_cbid.h and the CUDA runtime API IDs are defined in cupti_runtime_cbid.h. Both of these headers are included for you when you include cupti.h. The CUDA resource callback IDs are defined by CUpti_CallbackIdResource, and the CUDA synchronization callback IDs are defined by CUpti_CallbackIdSync.

- Callback Function

Your callback function must be of type CUpti_CallbackFunc. This function type has two arguments that specify the callback domain and ID so that you know why the callback is occurring. The type also has a cbdata argument that is used to pass data specific to the callback.

- Subscriber

A subscriber is used to associate each of your callback functions with one or more CUDA API functions. There can be at most one subscriber initialized with cuptiSubscribe() at any time. Before initializing a new subscriber, the existing subscriber must be finalized with cuptiUnsubscribe().

Each callback domain is described in detail below. Unless explicitly stated, it is not supported to call any CUDA runtime or driver API from within a callback function. Doing so may cause the application to hang.

2.6.1. Driver and Runtime API Callbacks#

Using the callback API with the CUPTI_CB_DOMAIN_DRIVER_API or CUPTI_CB_DOMAIN_RUNTIME_API domains, you can associate a callback function with one or more CUDA API functions. When those CUDA functions are invoked in the application, your callback function is invoked as well. For these domains, the cbdata argument to your callback function will be of the type CUpti_CallbackData.

It is legal to call cudaThreadSynchronize(), cudaDeviceSynchronize(), cudaStreamSynchronize(), cuCtxSynchronize(), and cuStreamSynchronize() from within a driver or runtime API callback function.

The following code shows a typical sequence used to associate a callback function with one or more CUDA API functions. To simplify the presentation, error checking code has been removed.

CUpti_SubscriberHandle subscriber;

MyDataStruct *my_data = ...;

...

cuptiSubscribe(&subscriber, (CUpti_CallbackFunc)my_callback, my_data);

cuptiEnableDomain(1, subscriber, CUPTI_CB_DOMAIN_RUNTIME_API);

First, cuptiSubscribe is used to initialize a subscriber with the my_callback callback function. Next, cuptiEnableDomain is used to associate that callback with all the CUDA runtime API functions. Using this code sequence will cause my_callback to be called twice each time any of the CUDA runtime API functions are invoked, once on entry to the CUDA function and once just before exit from the CUDA function. CUPTI callback API functions cuptiEnableCallback and cuptiEnableAllDomains can also be used to associate CUDA API functions with a callback (see reference below for more information).

The following code shows a typical callback function.

void CUPTIAPI
my_callback(void *userdata, CUpti_CallbackDomain domain,
            CUpti_CallbackId cbid, const void *cbdata)
{
    const CUpti_CallbackData *cbInfo = (const CUpti_CallbackData *)cbdata;
    MyDataStruct *my_data = (MyDataStruct *)userdata;

    if ((domain == CUPTI_CB_DOMAIN_RUNTIME_API) &&
        (cbid == CUPTI_RUNTIME_TRACE_CBID_cudaMemcpy_v3020)) {
        if (cbInfo->callbackSite == CUPTI_API_ENTER) {
            // Cast the generic parameter pointer to the cudaMemcpy parameter structure
            cudaMemcpy_v3020_params *funcParams =
                (cudaMemcpy_v3020_params *)(cbInfo->functionParams);
            size_t count = funcParams->count;
            enum cudaMemcpyKind kind = funcParams->kind;
            ...
        }
        ...
    }
}

In your callback function, you use the CUpti_CallbackDomain and CUpti_CallbackId parameters to determine which CUDA API function invocation is causing this callback. In the example above, we are checking for the CUDA runtime cudaMemcpy function. The cbdata parameter holds a structure of useful information that can be used within the callback. In this case, we use the callbackSite member of the structure to detect that the callback is occurring on entry to cudaMemcpy, and we use the functionParams member to access the parameters that were passed to cudaMemcpy. To access the parameters, we first cast functionParams to a structure type corresponding to the cudaMemcpy function. These parameter structures are contained in generated_cuda_runtime_api_meta.h, generated_cuda_meta.h, and a number of other files. When possible, these files are included for you by cupti.h.

The callback_event and callback_timestamp samples described on the samples page both show how to use the callback API for the driver and runtime API domains.
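Because each API call produces one entry callback and one exit callback, a tool usually needs to pair the two. The sketch below shows one possible way to do that by keying on a per-call correlation ID. It uses local stand-in types so that it compiles without the CUPTI headers: `CallbackSite`, `CallbackInfo`, `CallTracker`, and `OnApiCallback` are hypothetical names that only mirror the callbackSite, correlationId, and functionName members of CUpti_CallbackData. The sketch is not thread-safe.

```cpp
#include <cstdint>
#include <map>
#include <string>

// Local stand-ins so the sketch compiles without CUPTI headers.
enum CallbackSite { API_ENTER, API_EXIT };

struct CallbackInfo {
    CallbackSite callbackSite;  // entry or exit of the API call
    uint32_t correlationId;     // same value at entry and exit of one call
    const char *functionName;   // name of the API function
};

struct CallTracker {
    std::map<uint32_t, std::string> inFlight;  // correlation ID -> function name
    std::map<std::string, int> completed;      // function name -> finished calls
};

// Records the call on entry and counts it as completed on the matching exit.
void OnApiCallback(CallTracker &tracker, const CallbackInfo &info)
{
    if (info.callbackSite == API_ENTER) {
        tracker.inFlight[info.correlationId] = info.functionName;
        return;
    }
    auto it = tracker.inFlight.find(info.correlationId);
    if (it != tracker.inFlight.end()) {
        ++tracker.completed[it->second];
        tracker.inFlight.erase(it);
    }
}
```

In a real tool the tracker would be reached through the userdata pointer passed at subscription time, and access to it would need a lock if callbacks can arrive on several threads.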

2.6.2. Resource Callbacks#

Using the callback API with the CUPTI_CB_DOMAIN_RESOURCE domain, you can associate a callback function with some CUDA resource creation and destruction events. For example, when a CUDA context is created, your callback function will be invoked with a callback ID equal to CUPTI_CBID_RESOURCE_CONTEXT_CREATED. For this domain, the cbdata argument to your callback function will be of the type CUpti_ResourceData.

Note that calling cuptiActivityFlush or cuptiActivityFlushAll from the stream destroy starting callback, identified by callback ID CUPTI_CBID_RESOURCE_STREAM_DESTROY_STARTING, will result in a deadlock.

2.6.3. Synchronization Callbacks#

Using the callback API with the CUPTI_CB_DOMAIN_SYNCHRONIZE domain, you can associate a callback function with CUDA context and stream synchronizations. For example, when a CUDA context is synchronized, your callback function will be invoked with a callback ID equal to CUPTI_CBID_SYNCHRONIZE_CONTEXT_SYNCHRONIZED. For this domain, the cbdata argument to your callback function will be of the type CUpti_SynchronizeData.

2.6.4. State Callbacks#

Any fatal error encountered by an explicit CUPTI API call is returned by the API itself, whereas errors encountered by CUPTI in the background are returned to the user only during the next explicit CUPTI API call. Using the callback API with the CUPTI_CB_DOMAIN_STATE domain, you can associate a callback function with errors in CUPTI, and receive the reported error instantaneously. For example, when CUPTI runs into a fatal error, your callback function will be invoked with a callback ID equal to CUPTI_CBID_STATE_FATAL_ERROR. For this domain, the cbdata argument to your callback function will be of the type CUpti_StateData.

As part of CUpti_StateData, you can receive the error code of the failure, along with an appropriate error message with possible causes or appropriate links to documentation. The example usage of these callbacks can be found in the CUPTI trace samples.

2.7. NVIDIA Tools Extension (NVTX) Support#

CUPTI provides both callback and activity record support for NVTX (NVIDIA Tools Extension) APIs, including extended payload decoding introduced in NVTX v3.2.

2.7.1. NVTX Activity Records#

To collect CUPTI activity records for NVTX events, you must enable the appropriate activity kinds using the CUPTI Activity API cuptiActivityEnable:

CUptiResult res = cuptiActivityEnable(CUPTI_ACTIVITY_KIND_MARKER);

res = cuptiActivityEnable(CUPTI_ACTIVITY_KIND_MARKER_DATA);

Note:

CUPTI_ACTIVITY_KIND_MARKER must be enabled before enabling CUPTI_ACTIVITY_KIND_MARKER_DATA.

Without MARKER, no MARKER_DATA records will be emitted.

2.7.2. NVTX Callbacks#

You can associate a callback function with NVTX API calls using the CUPTI_CB_DOMAIN_NVTX domain. When an NVTX function is invoked, CUPTI triggers your registered callback.

In the registration sequence shown under Typical callback registration, cuptiSubscribe is first used to initialize a subscriber with the NvtxCallbacks callback function. Next, cuptiEnableDomain is used to associate that callback with all the NVTX functions. Using this code sequence will cause NvtxCallbacks to be called once each time any of the NVTX functions is invoked.

2.7.2.1. Typical callback registration#

CUpti_SubscriberHandle subscriber;

MyDataStruct *my_data = ...;

cuptiSubscribe(&subscriber, (CUpti_CallbackFunc)NvtxCallbacks, my_data);

cuptiEnableDomain(1, subscriber, CUPTI_CB_DOMAIN_NVTX);

Alternatively, use the CUPTI Callback API cuptiEnableCallback for fine-grained control.

2.7.2.2. Callback function example#

void CUPTIAPI
NvtxCallbacks(void *userdata, CUpti_CallbackDomain domain,
              CUpti_CallbackId cbid, const void *cbdata)
{
    const CUpti_NvtxData *nvtxInfo = (const CUpti_NvtxData *)cbdata;

    if ((domain == CUPTI_CB_DOMAIN_NVTX) &&
        (cbid == CUPTI_CBID_NVTX_nvtxRangeStartEx))
    {
        nvtxRangeStartEx_params *params =
            (nvtxRangeStartEx_params *)nvtxInfo->functionParams;
        nvtxRangeId_t *id =
            (nvtxRangeId_t *)nvtxInfo->functionReturnValue;
        ...
    }
}

Use functionParams to access input arguments and functionReturnValue for output.

2.7.3. Initialization to Receive NVTX Callbacks or Activity Records#

2.7.3.1. For your CUPTI-based profiling library#

The environment must be configured to inject the library at runtime:

setenv("NVTX_INJECTION32_PATH", "/path/to/32-bit/version/of/cupti/based/profiling/library", 1);

setenv("NVTX_INJECTION64_PATH", "/path/to/64-bit/version/of/cupti/based/profiling/library", 1);

Export the following injection functions:

/* Extern the CUPTI NVTX initialization APIs. The APIs are thread-safe */

extern "C" CUptiResult CUPTIAPI cuptiNvtxInitialize(void *pfnGetExportTable);

extern "C" CUptiResult CUPTIAPI cuptiNvtxInitialize2(void *pfnGetExportTable);

Ensure these functions are defined in your profiling library to initialize NVTX support when the library is loaded:

extern "C" int InitializeInjectionNvtx(void* p)

{

return (cuptiNvtxInitialize(p) == CUPTI_SUCCESS) ? 1 : 0;

}

extern "C" int InitializeInjectionNvtx2(void* p)

{

return (cuptiNvtxInitialize2(p) == CUPTI_SUCCESS) ? 1 : 0;

}

2.7.3.2. For applications that have embedded CUPTI APIs#

The environment variables must point to the CUPTI library itself:

setenv("NVTX_INJECTION32_PATH", "/path/to/cupti/library/libcupti.so", 1);

setenv("NVTX_INJECTION64_PATH", "/path/to/cupti/library/libcupti.so", 1);

NOTE: User does not need to export cuptiNvtxInitialize or cuptiNvtxInitialize2 functions in this case.

2.7.3.3. NVTX Versions#

NVTX v1/v2: Initialization is done once per process.

NVTX v3: Each dynamic library with NVTX v3 initializes separately on its first NVTX call.

2.7.4. CUPTI NVTX Extended Payload Support#

CUPTI provides utilities to parse and decode extended payloads emitted by NVTX v3.2 APIs.

Refer to nvToolsExtPayload.h for the NVTX extended payload API documentation.

2.7.4.1. New CUPTI APIs Introduced#

cuptiNvtxExtensionInitialize

cuptiActivityGetNvtxExtPayloadAttr

cuptiActivityGetNvtxExtPayloadEntryTypeInfo

2.7.4.2. CUPTI initialization for NVTX extended payload parsing#

The workflow is similar to above section Initialization to Receive NVTX Callbacks or Activity Records. Export the following injection function:

/* Extern the CUPTI NVTX Extension initialization API. The API is thread-safe */

extern "C" CUptiResult CUPTIAPI cuptiNvtxExtensionInitialize(nvtxExtModuleInfo_t *moduleInfo);

Ensure this function is defined in your profiling library to initialize NVTX extension support when the library is loaded:

extern "C" int InitializeInjectionNvtxExtension(void* p)

{

return (cuptiNvtxExtensionInitialize((nvtxExtModuleInfo_t *)p) == CUPTI_SUCCESS) ? 1 : 0;

}

NOTE: User does not need to export cuptiNvtxExtensionInitialize function in the case where CUPTI APIs are embedded in the application.

Export these CUPTI APIs to parse NVTX extended payloads:

extern "C" CUptiResult CUPTIAPI cuptiActivityGetNvtxExtPayloadAttr(uint32_t cuptiDomainId, uint64_t schemaId, CUpti_NvtxExtPayloadAttr *pPayloadAttributes);

extern "C" const nvtxPayloadEntryTypeInfo_t * CUPTIAPI cuptiActivityGetNvtxExtPayloadEntryTypeInfo();

2.7.4.3. Supported Features#

Payload schema registration via nvtxPayloadSchemaRegister

Enum type registration via nvtxPayloadEnumRegister

Payload schema type NVTX_PAYLOAD_SCHEMA_TYPE_STATIC

Payload schema type NVTX_PAYLOAD_SCHEMA_TYPE_DYNAMIC

NOTE: If the dynamic schema is a nested schema, it is not supported.

Payload schema entry type is an array:

- Fixed-size arrays, i.e. entry types with flag NVTX_PAYLOAD_ENTRY_FLAG_ARRAY_FIXED_SIZE

- Length-indexed arrays, i.e. entry types with flag NVTX_PAYLOAD_ENTRY_FLAG_ARRAY_LENGTH_INDEX

- Zero-terminated arrays, i.e. entry types with flag NVTX_PAYLOAD_ENTRY_FLAG_ARRAY_ZERO_TERMINATED

NCCL library support:

NVTX payloads used in the NCCL library are supported.

2.7.4.4. Limitations#

NVTX callbacks for NVTX APIs nvtxPayloadSchemaRegister and nvtxPayloadEnumRegister are not supported.

Payload entries that are registered strings are not supported:

- Entry type is NVTX_PAYLOAD_ENTRY_TYPE_NVTX_REGISTERED_STRING_HANDLE

Payload semantics are not supported:

- nvtxSemanticsHeader_t is used to define semantics for payloads.

Payload schema types not supported:

- NVTX_PAYLOAD_SCHEMA_TYPE_UNION

- NVTX_PAYLOAD_SCHEMA_TYPE_UNION_WITH_INTERNAL_SELECTOR

Payload entries that are deep copy are not supported:

- Entry types with flag NVTX_PAYLOAD_SCHEMA_FLAG_DEEP_COPY

New payload-based NVTX APIs are not supported:

- nvtxRangePushPayload

- nvtxRangePopPayload

- nvtxRangeStartPayload

- nvtxRangeEndPayload

- nvtxMarkPayload

2.7.4.5. Helper files#

Location:

$CUPTI_DIR/samples/common/nvtx/nvtx_payload_parser.[cpp|h]: Decodes CUPTI-collected NVTX extended payloads.

nvtx_payload_attributes.[cpp|h]: Schema and attribute descriptor logic.

Look for the comment below to locate where the decoded data appears:

// NOTE: PLACEHOLDER FOR GETTING PAYLOAD DATA FOR AN ENTRY IN THE SCHEMA

Refer to the samples cupti_nvtx and cupti_nvtx_ext_payload for practical examples of using NVTX with CUPTI.

CuptiParseNvtxPayload() is used to decode NVTX extended payloads from CUPTI activity records in the cupti_nvtx_ext_payload sample.

2.8. CUPTI Profiler Host API#

CUPTI has introduced a new set of profiler host APIs cuptiProfilerHost* in the CUDA 12.6 release. These APIs can be used to perform various host-side tasks necessary for collecting profiling data through either PM Sampling APIs or the new Range Profiler APIs.

These APIs are provided in the header file cupti_profiler_host.h.

These new APIs replace the old Perfworks APIs and are more high-level compared to the previous NVPW Raw Metric and Metric Evaluator APIs. They maintain key functionality while abstracting away low-level details, offering a more streamlined and efficient way to gather performance metrics.

CUPTI profiling involves three types of host operations: enumeration, configuration, and evaluation.

2.8.1. Enumeration#

A metric is a quantifiable measurement used to assess the performance and efficiency of a CUDA kernel. Metrics can provide insights into various computational characteristics such as execution time, instruction throughput, or cache efficiency.

When profiling a kernel, metrics are predefined to capture particular performance data. After the profiling is complete, the values associated with these metrics allow developers to analyze the kernel’s behavior, identify performance bottlenecks, and make informed optimizations.

For example, common metrics during kernel profiling might include:

Execution Time: The duration the kernel takes to complete.

Memory Bandwidth: The amount of data transferred between units.

Instruction Throughput: The number of instructions executed per second.

Cache Hit Rate: The percentage of memory accesses that are served by the cache.

Metrics provide a detailed, quantitative view of the kernel’s performance, making them essential for performance tuning and optimization.

CUPTI has introduced several APIs for querying the supported metrics for various chips and retrieving properties of those metrics, such as the metric type and a description of what each metric represents.

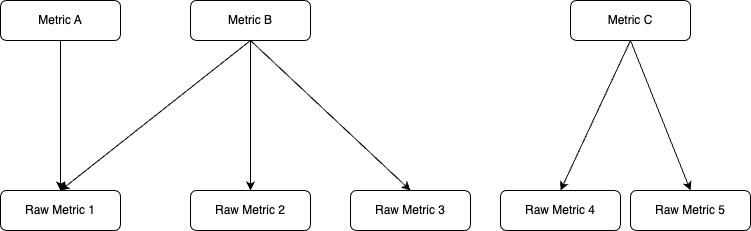

Each metric can be treated as a function of one or more raw metrics (also referred to as raw counters). CUPTI schedules (see Configuration) and profiles the associated raw metrics and stores them in a counter data image (see Evaluation).

Metric Types:

All metrics supported by CUPTI fall into three groups: counter, ratio, and throughput metrics. To profile a metric, the user appends a rollup, a submetric, or both to the base metric name, depending on the metric type. A counter metric requires a rollup, and a submetric is optional. Ratio metrics do not support rollups, so only a submetric is appended as a suffix to form a valid profiling metric. Throughput metrics require both a rollup and a submetric.

CUPTI supports four types of rollups: sum, avg, min, and max.

Rollup Table#

| Rollup Type | Metric details |
| --- | --- |
| .sum | The sum of counter values across all unit instances. |
| .avg | The average of counter values across all unit instances. |
| .min | The minimum of counter values across all unit instances. |
| .max | The maximum of counter values across all unit instances. |

For counter metric types, refer to the below table for a list of optional sub metrics that can be added to the base metric for profiling.

Submetrics supported for counter metrics#

| Submetrics | Description |
| --- | --- |
| .peak_sustained | The peak sustained rate. |
| .peak_sustained_active | The peak sustained rate during unit active cycles. |
| .peak_sustained_active.per_second | The peak sustained rate during unit active cycles, per second. |
| .peak_sustained_elapsed | The peak sustained rate during unit elapsed cycles. |
| .peak_sustained_elapsed.per_second | The peak sustained rate during unit elapsed cycles, per second. |
| .per_second | The number of operations per second. |
| .per_cycle_active | The number of operations per unit active cycle. |
| .per_cycle_elapsed | The number of operations per unit elapsed cycle. |
| .pct_of_peak_sustained_active | % of peak sustained rate achieved during unit active cycles. |
| .pct_of_peak_sustained_elapsed | % of peak sustained rate achieved during unit elapsed cycles. |

For example,

smsp__warps_launched.sum.per_second

Base metric: smsp__warps_launched

Rollup: .sum

Submetric: .per_second

Ratio metrics support only the following three submetrics.

Submetrics supported for ratio metrics#

| Submetrics | Description |
| --- | --- |
| .pct | The value expressed as a percentage. |
| .ratio | The value expressed as a ratio. |
| .max_rate | The ratio’s maximum value. |

For example,

smsp__average_inst_executed_per_warp.max_rate

Base metric: smsp__average_inst_executed_per_warp

Rollup: NOT ALLOWED

Submetric: .max_rate

For throughput metrics, users need to add one of the rollups together with one of the following submetrics.

Submetrics supported for throughput metrics#

| Submetrics | Description |
| --- | --- |
| .pct_of_peak_sustained_active | % of peak sustained rate achieved during unit active cycles. |
| .pct_of_peak_sustained_elapsed | % of peak sustained rate achieved during unit elapsed cycles. |

For example,

sm__throughput.sum.pct_of_peak_sustained_active

Base metric: sm__throughput

Rollup: .sum

Submetric: .pct_of_peak_sustained_active

Note

For decoding the metric name, the user can refer to the Metrics Decoder section of the Kernel Profiling Guide in the Nsight Compute documentation.
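The naming rules for the three metric types can be summarized in a small helper. This is only an illustrative sketch: `MetricKind` and `BuildMetricName` are hypothetical names that are not part of CUPTI, and the helper checks only whether a rollup or submetric is present, not whether a particular suffix is valid for a given base metric.

```cpp
#include <stdexcept>
#include <string>

// Local stand-in for the three metric groups; not the CUPTI enum.
enum class MetricKind { Counter, Ratio, Throughput };

// Builds "<base><rollup><submetric>" following the rules for each group.
// rollup and submetric include their leading dot (".sum", ".per_second");
// pass an empty string to omit one. Throws std::invalid_argument when the
// combination is not allowed for the given metric kind.
std::string BuildMetricName(MetricKind kind, const std::string &base,
                            const std::string &rollup,
                            const std::string &submetric)
{
    switch (kind) {
    case MetricKind::Counter:  // rollup required, submetric optional
        if (rollup.empty())
            throw std::invalid_argument("counter metric needs a rollup");
        break;
    case MetricKind::Ratio:  // rollup not allowed, submetric required
        if (!rollup.empty())
            throw std::invalid_argument("ratio metric takes no rollup");
        if (submetric.empty())
            throw std::invalid_argument("ratio metric needs a submetric");
        break;
    case MetricKind::Throughput:  // both rollup and submetric required
        if (rollup.empty() || submetric.empty())
            throw std::invalid_argument("throughput metric needs rollup and submetric");
        break;
    }
    return base + rollup + submetric;
}
```

Called with the counter example from this section (base smsp__warps_launched, rollup .sum, submetric .per_second), the helper returns smsp__warps_launched.sum.per_second; passing a rollup for a ratio metric raises an exception instead.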

APIs:

The cuptiProfilerHostGetBaseMetrics() API for listing base metrics for a metric type (counter, throughput and ratio).

The cuptiProfilerHostGetSubMetrics() API for listing the submetrics for a metric.

The cuptiProfilerHostGetMetricProperties() API for querying the details about a metric like associated hardware unit, metric type and a short description about the metric.

Code Samples:

// Initialize profiler host

CUptiResult GetSupportedBaseMetrics(std::vector<std::string>& metricsList)
{
    for (size_t metricTypeIndex = 0; metricTypeIndex < CUPTI_METRIC_TYPE__COUNT; ++metricTypeIndex)
    {
        CUpti_Profiler_Host_GetBaseMetrics_Params getBaseMetricsParams {CUpti_Profiler_Host_GetBaseMetrics_Params_STRUCT_SIZE};
        getBaseMetricsParams.pHostObject = m_pHostObject;
        getBaseMetricsParams.metricType = (CUpti_MetricType)metricTypeIndex;
        cuptiProfilerHostGetBaseMetrics(&getBaseMetricsParams);

        for (size_t metricIndex = 0; metricIndex < getBaseMetricsParams.numMetrics; ++metricIndex)
        {
            metricsList.push_back(getBaseMetricsParams.ppMetricNames[metricIndex]);
        }
    }
    return CUPTI_SUCCESS;
}

CUptiResult GetMetricProperties(const std::string& metricName, CUpti_MetricType& metricType, std::string& metricDescription)
{
    CUpti_Profiler_Host_GetMetricProperties_Params getMetricPropertiesParams {CUpti_Profiler_Host_GetMetricProperties_Params_STRUCT_SIZE};
    getMetricPropertiesParams.pHostObject = m_pHostObject;
    getMetricPropertiesParams.pMetricName = metricName.c_str();
    cuptiProfilerHostGetMetricProperties(&getMetricPropertiesParams);

    metricType = getMetricPropertiesParams.metricType;
    metricDescription = getMetricPropertiesParams.pDescription;
    return CUPTI_SUCCESS;
}

CUptiResult GetSubMetrics(const std::string& metricName, std::vector<std::string>& subMetricsList)
{
    CUpti_MetricType metricType;
    std::string metricDescription;
    GetMetricProperties(metricName, metricType, metricDescription);

    CUpti_Profiler_Host_GetSubMetrics_Params getSubMetricsParams {CUpti_Profiler_Host_GetSubMetrics_Params_STRUCT_SIZE};
    getSubMetricsParams.pHostObject = m_pHostObject;
    getSubMetricsParams.pMetricName = metricName.c_str();
    getSubMetricsParams.metricType = metricType;
    cuptiProfilerHostGetSubMetrics(&getSubMetricsParams);

    for (size_t subMetricIndex = 0; subMetricIndex < getSubMetricsParams.numOfSubmetrics; ++subMetricIndex)
    {
        subMetricsList.push_back(getSubMetricsParams.ppSubMetrics[subMetricIndex]);
    }
    return CUPTI_SUCCESS;
}

// Deinitialize profiler host

Sample cupti_metric_properties shows how to query the number of passes required to collect a set of counters.

2.8.1.1. Single Pass Metric Sets#

For PM Sampling, CUPTI requires that the metrics configuration specified by the user be collected in a single pass. Selecting compatible metrics for single pass collection can be challenging, so CUPTI provides predefined single pass metric sets. Each set contains metrics that can be gathered together in one pass, and these sets vary depending on the GPU architecture. Metrics from different single pass metric sets cannot be combined for PM sampling.

From CUPTI 13.2 onwards, CUPTI provides two new APIs to help users query available single pass metric sets and the metrics within each set:

cuptiProfilerHostGetSinglePassSets(): Lists the available single pass metric sets for a given chip.

cuptiProfilerHostGetMetricsInSinglePassSet(): Lists the metrics included in a specific single pass metric set.

These APIs enable users to programmatically select compatible metrics for PM sampling instead of parsing metric names with the “Triage” namespace. For a usage example, refer to the pm_sampling sample in CUPTI samples.

Code Samples:

CUptiResult GetSupportedSinglePassMetricsSets(std::vector<const char*>& metricsSetsList, const char* pChipName)
{
    CUpti_Profiler_Host_GetSinglePassSets_Params getSinglePassSetsParams {CUpti_Profiler_Host_GetSinglePassSets_Params_STRUCT_SIZE};
    getSinglePassSetsParams.pChipName = pChipName;
    // First call: query how many single pass sets are available
    CUPTI_API_CALL(cuptiProfilerHostGetSinglePassSets(&getSinglePassSetsParams));

    metricsSetsList.resize(getSinglePassSetsParams.numOfSinglePassSets);
    getSinglePassSetsParams.ppSinglePassSets = metricsSetsList.data();
    // Second call: fill the caller-allocated array with the set names
    CUPTI_API_CALL(cuptiProfilerHostGetSinglePassSets(&getSinglePassSetsParams));
    return CUPTI_SUCCESS;
}

CUptiResult GetMetricsInSinglePassSet(const char* pSinglePassSet, std::vector<std::string>& metricsList, const char* pChipName)
{
    std::vector<uint8_t> metricsBuffer;
    std::vector<size_t> metricsIndices;

    // First call: query the required buffer size and the number of metrics
    {
        CUpti_Profiler_Host_GetMetricsInSinglePassSet_Params params {CUpti_Profiler_Host_GetMetricsInSinglePassSet_Params_STRUCT_SIZE};
        params.pChipName = pChipName;
        params.pSinglePassSetName = pSinglePassSet;
        CUPTI_API_CALL(cuptiProfilerHostGetMetricsInSinglePassSet(&params));

        metricsBuffer.resize(params.metricsBufferSize);
        metricsIndices.resize(params.numOfMetricsInSinglePassSet);
    }

    // Second call: fill the caller-allocated buffers
    {
        CUpti_Profiler_Host_GetMetricsInSinglePassSet_Params params {CUpti_Profiler_Host_GetMetricsInSinglePassSet_Params_STRUCT_SIZE};
        params.pChipName = pChipName;
        params.pSinglePassSetName = pSinglePassSet;
        params.numOfMetricsInSinglePassSet = metricsIndices.size();
        params.pMetricsIndicesBuffer = metricsIndices.data();
        params.metricsBufferSize = metricsBuffer.size();
        params.pMetricsBuffer = metricsBuffer.data();
        CUPTI_API_CALL(cuptiProfilerHostGetMetricsInSinglePassSet(&params));
    }

    // Each index is the offset of a metric name inside metricsBuffer
    metricsList.resize(metricsIndices.size());
    for (uint32_t i = 0; i < metricsIndices.size(); i++)
    {
        const auto metricIndex = metricsIndices[i];
        metricsList[i] = std::string((const char*)&metricsBuffer[metricIndex]);
    }
    return CUPTI_SUCCESS;
}
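Since metrics from different single pass sets cannot be combined, a tool may want to verify a requested metric list before configuring PM sampling. The sketch below assumes the set names and their metrics have already been gathered into a map; `SinglePassSets` and `FindCoveringSet` are hypothetical helper names, not CUPTI APIs.

```cpp
#include <map>
#include <set>
#include <string>
#include <vector>

// Maps a single pass set name to the metrics it contains, for example as
// gathered by querying each set once up front.
using SinglePassSets = std::map<std::string, std::set<std::string>>;

// Returns the name of the first set that contains every requested metric,
// or an empty string if the request spans more than one set.
std::string FindCoveringSet(const SinglePassSets &sets,
                            const std::vector<std::string> &requested)
{
    for (const auto &entry : sets) {
        bool coversAll = true;
        for (const std::string &metric : requested) {
            if (entry.second.count(metric) == 0) {
                coversAll = false;
                break;
            }
        }
        if (coversAll) {
            return entry.first;
        }
    }
    return std::string();
}
```

An empty result signals that the requested metrics need to be trimmed or split across separate sampling sessions.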

2.8.2. Configuration#

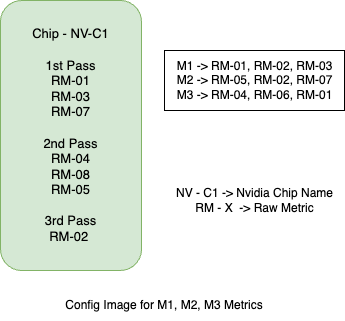

After users have selected the metrics to be profiled, they must create a configuration image before starting the profiling session. This image contains the scheduling details for the specified metrics, including how many passes are required to collect the profiling data and which metrics will be collected during each pass. Users can store the config image offline and can reuse it for profiling the same list of metrics and for the same chip.

The image in this section shows a very high-level overview of the config image content. The config image holds scheduling information for three metrics (M1, M2, M3); when collected together, they take 3 passes. Each metric has defined raw metrics (RM-X), which are scheduled for collection in their respective passes.

Note

For PM sampling, the config image must be able to schedule the metrics for collection in a single pass; otherwise CUPTI will report a CUPTI_ERROR_NOT_SUPPORTED error.

APIs:

cuptiProfilerHostConfigAddMetrics(): Adds a list of metrics which will be scheduled for profiling in the config image.

cuptiProfilerHostGetConfigImageSize(): Once the metrics list is added, the user can call this API to get the amount of memory needed to store the config image.

cuptiProfilerHostGetConfigImage(): Stores the config image in the user-allocated buffer.

Code Samples:

// Initialize profiler host

CUpti_Profiler_Host_ConfigAddMetrics_Params configAddMetricsParams {CUpti_Profiler_Host_ConfigAddMetrics_Params_STRUCT_SIZE};
configAddMetricsParams.pHostObject = profilerHostObjectPtr;
configAddMetricsParams.ppMetricNames = metricNames.data();
configAddMetricsParams.numMetrics = metricNames.size();
cuptiProfilerHostConfigAddMetrics(&configAddMetricsParams);

CUpti_Profiler_Host_GetConfigImageSize_Params getConfigImageSizeParams {CUpti_Profiler_Host_GetConfigImageSize_Params_STRUCT_SIZE};
getConfigImageSizeParams.pHostObject = profilerHostObjectPtr;
cuptiProfilerHostGetConfigImageSize(&getConfigImageSizeParams);
configImage.resize(getConfigImageSizeParams.configImageSize, 0);

CUpti_Profiler_Host_GetConfigImage_Params initializeConfigImageParams {CUpti_Profiler_Host_GetConfigImage_Params_STRUCT_SIZE};
initializeConfigImageParams.pHostObject = profilerHostObjectPtr;
initializeConfigImageParams.pConfigImage = configImage.data();
initializeConfigImageParams.configImageSize = configImage.size();
cuptiProfilerHostGetConfigImage(&initializeConfigImageParams);

// Deinitialize profiler host
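Because a config image can be stored offline and reused for the same metric list and chip, one simple approach is to write the byte buffer to a file and read it back later. The sketch below uses only the C++ standard library; `SaveImage` and `LoadImage` are hypothetical helper names, and the image is treated as an opaque std::vector<uint8_t>.

```cpp
#include <cstdint>
#include <fstream>
#include <iterator>
#include <string>
#include <vector>

// Writes the opaque image bytes to a binary file. Returns false on I/O error.
bool SaveImage(const std::string &path, const std::vector<uint8_t> &image)
{
    std::ofstream out(path, std::ios::binary | std::ios::trunc);
    if (!out) {
        return false;
    }
    out.write(reinterpret_cast<const char *>(image.data()),
              static_cast<std::streamsize>(image.size()));
    return static_cast<bool>(out);
}

// Reads the whole file back into the image buffer. Returns false if the
// file cannot be opened.
bool LoadImage(const std::string &path, std::vector<uint8_t> &image)
{
    std::ifstream in(path, std::ios::binary);
    if (!in) {
        return false;
    }
    image.assign(std::istreambuf_iterator<char>(in),
                 std::istreambuf_iterator<char>());
    return true;
}
```

The file itself carries no record of which chip or metric list it was created for, so a tool that caches images this way would need to track that association separately, for instance in the file name.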

NVLINK (nvl*), C2C, and PCIe metrics are collected at the device level, while all other metrics are collected at the context level.

Device-level metrics cannot be collected alongside context-level metrics. For device-level metrics, you need to pass an empty counter availability image when setting up the CUPTI profiler host APIs, which is required before creating the config image.

This limitation is addressed in the CUDA 13.1 release. To include device-level metrics in the image, set the parameter bAllowDeviceLevelCounters in the CUpti_Profiler_GetCounterAvailability_Params structure.
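Because device-level and context-level metrics cannot share a config image before CUDA 13.1, a tool may want to split the requested metric list up front. The following is a minimal sketch of that idea, not a CUPTI API: MetricGroups, IsDeviceLevelMetric and SplitMetrics are hypothetical helpers, and only the nvl prefix comes from the text above; the c2c and pcie prefixes are assumptions made for illustration.

```cpp
#include <string>
#include <vector>

// Hypothetical helper types; none of these are part of CUPTI.
struct MetricGroups {
    std::vector<std::string> deviceLevel;   // NVLINK, C2C, PCIe metrics
    std::vector<std::string> contextLevel;  // everything else
};

// Returns true if `name` begins with `prefix`.
inline bool StartsWith(const std::string& name, const std::string& prefix) {
    return name.compare(0, prefix.size(), prefix) == 0;
}

// ASSUMPTION: device-level metrics can be recognized by a name prefix.
// Only "nvl" is stated in the documentation; "c2c" and "pcie" are guesses.
inline bool IsDeviceLevelMetric(const std::string& name) {
    static const char* const kPrefixes[] = {"nvl", "c2c", "pcie"};
    for (const char* prefix : kPrefixes) {
        if (StartsWith(name, prefix)) {
            return true;
        }
    }
    return false;
}

// Splits a metric list so that each group can get its own config image.
inline MetricGroups SplitMetrics(const std::vector<std::string>& names) {
    MetricGroups groups;
    for (const std::string& name : names) {
        if (IsDeviceLevelMetric(name)) {
            groups.deviceLevel.push_back(name);
        } else {
            groups.contextLevel.push_back(name);
        }
    }
    return groups;
}
```

Each resulting group could then be passed to its own cuptiProfilerHostConfigAddMetrics() call; whether a prefix check is reliable for a given chip should be confirmed against the metrics enumerated for that chip.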

2.8.3. Evaluation#

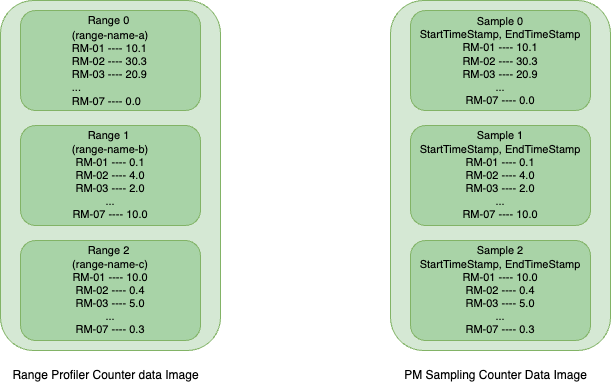

After collecting the profiling data, users must call the decode API for each profiling type (Range Profiling or PM Sampling) to decode the data stored in a hardware buffer into a user-allocated host buffer, known as the CounterData Image. This CounterData Image contains the profiling data for the queried metrics in an internal format, and users need to call the evaluation APIs to extract the data in a human-readable format.

The image above gives a very high-level overview of how data is stored in a counter data image. For Range Profiling, each range is identified by a unique range name, whereas in PM Sampling, each sample is distinguished by its start and end timestamps.

APIs:

cuptiRangeProfilerGetCounterDataInfo(): In Range profiling, this API reports how many ranges have been profiled and stored in the counter data.

cuptiRangeProfilerCounterDataGetRangeInfo(): Use this API to query the range name for a particular range index in the counter data.

cuptiProfilerHostEvaluateToGpuValues(): Reports the profiling data for a list of metrics for a range index or sample index.

Code Samples:

// Initialize profiler host

// Query how many ranges are stored in the counter data image
CUpti_RangeProfiler_GetCounterDataInfo_Params getCounterDataInfoParams {CUpti_RangeProfiler_GetCounterDataInfo_Params_STRUCT_SIZE};
getCounterDataInfoParams.pCounterDataImage = counterData;
getCounterDataInfoParams.counterDataImageSize = counterDataSize;
cuptiRangeProfilerGetCounterDataInfo(&getCounterDataInfoParams);

size_t numOfRanges = getCounterDataInfoParams.numTotalRanges;
metricNameValueMap.resize(metricNames.size());
for (size_t rangeIndex = 0; rangeIndex < numOfRanges; ++rangeIndex)
{
    // Look up the range name for this range index
    CUpti_RangeProfiler_CounterData_GetRangeInfo_Params getRangeInfoParams {CUpti_RangeProfiler_CounterData_GetRangeInfo_Params_STRUCT_SIZE};
    getRangeInfoParams.pCounterDataImage = counterData;
    getRangeInfoParams.counterDataImageSize = counterDataSize;
    getRangeInfoParams.rangeIndex = rangeIndex;
    getRangeInfoParams.rangeDelimiter = "/";
    cuptiRangeProfilerCounterDataGetRangeInfo(&getRangeInfoParams);

    // Evaluate the requested metrics for this range
    std::vector<double> metricValues(metricNames.size());
    CUpti_Profiler_Host_EvaluateToGpuValues_Params evaluateToGpuValuesParams {CUpti_Profiler_Host_EvaluateToGpuValues_Params_STRUCT_SIZE};
    evaluateToGpuValuesParams.pHostObject = profilerHostObjectPtr;
    evaluateToGpuValuesParams.pCounterDataImage = counterData;
    evaluateToGpuValuesParams.counterDataImageSize = counterDataSize;
    evaluateToGpuValuesParams.ppMetricNames = metricNames.data();
    evaluateToGpuValuesParams.numMetrics = metricNames.size();
    evaluateToGpuValuesParams.rangeIndex = rangeIndex;
    evaluateToGpuValuesParams.pMetricValues = metricValues.data();
    cuptiProfilerHostEvaluateToGpuValues(&evaluateToGpuValuesParams);
}

// Deinitialize profiler host

2.9. CUPTI Range Profiling API#

Beginning with CUDA 12.6 Update 2, CUPTI provides a new suite of high-level profiling APIs known as cuptiRangeProfiler*. These APIs are similar to the previous cuptiProfiler* APIs, enabling users to collect profiling data for metrics at the CUDA context level. This uniformity in API calls ensures alignment with other profiling components within CUPTI. Users can designate specific ranges within their applications to gather profiling data on the GPU. These APIs are provided in the header file cupti_range_profiler.h.

The new APIs are compatible with Turing and newer GPU architectures (compute capability 7.5 and above) and require handling only two image types: the config image, which contains scheduling information for metrics, and the counter data image, where profiling data is stored after being decoded from the GPU. This method resembles the earlier profiler APIs and PM sampling APIs, involving both Host operations (enumeration, configuration, and evaluation) and Target operations (data collection).

2.9.1. Usage#

For Host tasks such as enumeration, configuration, and evaluation, please see the CUPTI Host API Usage section.

Collecting Profiling Data (collection):

Once you have the configuration image set up with all essential scheduling details, you can employ the range profiler APIs to gather profiling data. It is important to become familiar with the specific concepts related to range profiling before using these APIs.

Range Modes:

CUPTI offers two range modes that determine how a profiler range is defined:

Auto Range:

In this mode, each kernel launch is treated as an individual range. CUPTI assigns numeric values starting from 0 to these ranges while evaluating the collected data. Because the range is defined around the boundary of a kernel, this mode adds a context synchronization at the end of each kernel launch, making operations synchronous. This can cause problems such as hangs if there are dependencies between two kernel launches.

User Range:

In this mode, users can explicitly define ranges using Push/Pop APIs, allowing ranges to span multiple kernel launches. As a result, kernels can be launched asynchronously in this mode.
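The difference between the two range modes can be illustrated with a small host-only model. This is a sketch for intuition, not CUPTI code: Event, Range, AutoRanges and UserRanges are hypothetical names, and the user-range model assumes non-nested Push/Pop pairs for simplicity.

```cpp
#include <string>
#include <vector>

// Hypothetical event model; these are not CUPTI types.
enum class EventKind { Push, Pop, KernelLaunch };

struct Event {
    EventKind kind;
    std::string name;  // range name for Push, kernel name for KernelLaunch
};

struct Range {
    std::string name;
    std::vector<std::string> kernels;
};

// Auto range: every kernel launch becomes its own range, numbered from 0.
// Push/Pop events are ignored in this model.
std::vector<Range> AutoRanges(const std::vector<Event>& events) {
    std::vector<Range> ranges;
    for (const Event& e : events) {
        if (e.kind == EventKind::KernelLaunch) {
            ranges.push_back({std::to_string(ranges.size()), {e.name}});
        }
    }
    return ranges;
}

// User range (non-nested): a range collects every kernel launched between
// a Push and the following Pop. Kernels outside any range are not recorded.
std::vector<Range> UserRanges(const std::vector<Event>& events) {
    std::vector<Range> ranges;
    bool open = false;
    for (const Event& e : events) {
        switch (e.kind) {
        case EventKind::Push:
            ranges.push_back({e.name, {}});
            open = true;
            break;
        case EventKind::Pop:
            open = false;
            break;
        case EventKind::KernelLaunch:
            if (open) {
                ranges.back().kernels.push_back(e.name);
            }
            break;
        }
    }
    return ranges;
}
```

For a sequence of Push, two kernel launches, Pop and a third kernel launch, the auto-range model yields three single-kernel ranges, while the user-range model yields one range holding the first two kernels.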

Replay Modes:

A metric includes various raw counters, which CUPTI gathers to generate the final metric value. Due to hardware limitations, some metrics need multiple replays. Replay mode is crucial for profiling multi-pass metric collections. For single-pass metrics, no replays are required; hence, replay modes can be disregarded.

Kernel Replay:

In this mode, a kernel is executed multiple times to gather complete profiling data, with CUPTI managing save/restore operations and launching the kernel several times. This replay mode is only supported in Auto Range Mode.

User Replay:

Users must save and restore the context state prior to replaying the range. This mode supports both Auto and User range modes. CUPTI has checkpoint APIs for managing save and restore of context data at a given time.

Application Replay:

This mode is suitable for devices with limited memory. Users relaunch the application using the same configuration image and intermediate counter data file to gather profiling data. This mode supports both Auto and User range modes.

Note

The application needs to have a deterministic runtime workload; otherwise the profiling data will not be correct.
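The replay idea can be sketched as a loop that repeats the same workload until every pass has been collected. The model below is an illustration under stated assumptions, not CUPTI code: MockSession and ReplayUntilComplete are hypothetical, and the model assumes exactly one pass is collected per Start/Stop boundary.

```cpp
#include <cstddef>
#include <functional>

// Hypothetical stand-in for a profiling session; not a CUPTI type.
// ASSUMPTION: each Start/Stop pair collects exactly one pass.
class MockSession {
public:
    explicit MockSession(std::size_t requiredPasses)
        : requiredPasses_(requiredPasses) {}

    void Start() {}

    // Ends the current pass and counts it as submitted.
    void Stop() {
        if (submittedPasses_ < requiredPasses_) {
            ++submittedPasses_;
        }
    }

    bool AllPassesSubmitted() const {
        return submittedPasses_ >= requiredPasses_;
    }

private:
    std::size_t requiredPasses_;
    std::size_t submittedPasses_ = 0;
};

// Runs `workload` inside a Start/Stop boundary until all passes are
// submitted, and returns how many times the workload was executed.
// The workload must do the same work on every run (deterministic).
std::size_t ReplayUntilComplete(MockSession& session,
                                const std::function<void()>& workload) {
    std::size_t runs = 0;
    do {
        session.Start();
        workload();
        session.Stop();
        ++runs;
    } while (!session.AllPassesSubmitted());
    return runs;
}
```

With a metric set that needs three passes the workload runs three times; a single-pass set runs once, which is why replay modes can be disregarded in that case.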

Nested Ranges:

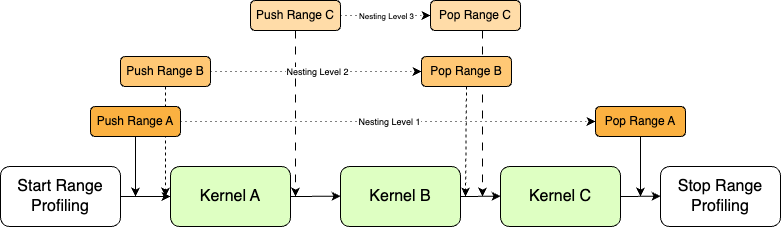

CUPTI allows nested Push/Pop range API calls, which is useful for detailed profiling. For example, Range A could encompass kernels A, B, and C, while Range B includes B and C, and Range C includes only C. Each range has an associated nesting level: Range A, at the base level, is assigned level 1, while Range B and Range C are at nesting levels 2 and 3 respectively.

Nested ranges are only allowed in User range mode, and as the nesting level increases, the number of replays required to collect profiling data for the nested ranges also increases. In the CUpti_RangeProfiler_SetConfig_Params struct, numNestingLevels and minNestingLevel are specific to nested ranges: minNestingLevel sets the base level for the profiling session, and numNestingLevels sets how many levels are profiled starting from the base level. For example, with the base level at 1 and numNestingLevels set to 2, only ranges in nesting levels 1 and 2 are profiled.
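The nesting rules can be made concrete with a small host-only sketch. It is not CUPTI code: RangeEvent, RangeLevel, ComputeNestingLevels and IsLevelProfiled are hypothetical names, and the half-open interval used for the level filter is an interpretation of the example above (base level 1 with two levels profiles levels 1 and 2).

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical types for illustration; not part of CUPTI.
struct RangeEvent {
    bool isPush;       // true for Push, false for Pop
    std::string name;  // range name, used only for Push
};

struct RangeLevel {
    std::string name;
    std::size_t level;  // 1 is the outermost (base) level
};

// Assigns a nesting level to every pushed range.
std::vector<RangeLevel> ComputeNestingLevels(const std::vector<RangeEvent>& events) {
    std::vector<RangeLevel> result;
    std::size_t depth = 0;
    for (const RangeEvent& e : events) {
        if (e.isPush) {
            ++depth;
            result.push_back({e.name, depth});
        } else {
            assert(depth > 0 && "Pop without a matching Push");
            --depth;
        }
    }
    assert(depth == 0 && "Push without a matching Pop");
    return result;
}

// ASSUMPTION: a level is profiled when it lies in
// [minNestingLevel, minNestingLevel + numNestingLevels).
bool IsLevelProfiled(std::size_t level,
                     std::size_t minNestingLevel,
                     std::size_t numNestingLevels) {
    return level >= minNestingLevel &&
           level < minNestingLevel + numNestingLevels;
}
```

For the Range A, B, C example, ComputeNestingLevels yields levels 1, 2 and 3, and with minNestingLevel 1 and numNestingLevels 2 only Range A and Range B pass the filter.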

2.9.2. API usage#

Enable Range Profiling:

Before starting range profiling, enable it for a CUDA context using cuptiRangeProfilerEnable(). This creates a CUpti_RangeProfiler_Object, which stores intermediate data and is used by the other profiler APIs to identify the context.

Create Counter Data Image:

Users need to allocate a CPU buffer for storing the decoded profiling data collected on the GPU. The buffer must be in a specific format, so CUPTI exposes APIs for getting the required buffer size and, once the buffer is allocated, for initializing it to default values.

cuptiRangeProfilerGetCounterDataSize(): Returns the buffer size required, based on the metrics and the number of ranges to be profiled in the application.

cuptiRangeProfilerCounterDataImageInitialize(): Initializes the buffer allocated by the user. This can also be used to reset the counter data image.

Set configuration:

Use the cuptiRangeProfilerSetConfig() API to customize the profiling configuration. Users must provide both the configuration and counter data images, along with parameters like range mode, replay mode, and the number of ranges per pass.

Start Range Profiling:

After setting the configuration, call the cuptiRangeProfilerStart() API to define the profiling session’s boundary. Kernels outside this boundary will not be profiled.

Push Range:

For User range mode, call the cuptiRangeProfilerPushRange() API to define the start of a range. Kernels launched between Push/Pop API calls will be profiled.

Pop Range:

Use the cuptiRangeProfilerPopRange() API to mark the end of a range. Ensure the number of Pop range API calls matches the number of Push range calls to avoid errors.

Note

CUPTI allows nested Push/Pop API calls for nested range profiling. Refer to Nested Range Profiling.

Stop Range Profiling:

The cuptiRangeProfilerStop() API sets the end of the range profiling boundary. It also reports whether all the passes needed to collect profiling data for the requested metrics have been completed. If not, users need to replay the application or the Start/Stop boundary until all passes are completed. For Kernel Replay mode, CUPTI replays the kernel internally, so the allPassSubmitted parameter will always be 1. For Application Replay mode, it reports the current passIndex and targetNestingLevel, which users need to set in the cuptiRangeProfilerSetConfig() API when the next replay is done.

Decode Range Profiling data:

Profiling data stored in the GPU buffer must be decoded using the cuptiRangeProfilerDecodeData() API. This API adds a CUDA context synchronization before decoding.

Note

If the number of ranges profiled in a pass exceeds the maximum number of ranges that can be stored in the counter data image, the extra ranges are dropped, and the drop is reported by the cuptiRangeProfilerDecodeData() API.

Disable Range Profiling:

Users need to call the cuptiRangeProfilerDisable() API to destroy all the resources allocated by CUPTI for range profiling.
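Since every Push must be matched by a Pop, one way to keep the calls balanced in C++ is a scope guard that pushes in its constructor and pops in its destructor. This is a sketch of that pattern, not something CUPTI provides: ScopedRange and MockRangeProfiler are hypothetical, and the guard only assumes a wrapper object that exposes PushRange(name) and PopRange() methods.

```cpp
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical stand-in for a wrapper around the Push/Pop range calls.
// It only records the calls so the guard can be exercised without a GPU.
struct MockRangeProfiler {
    std::vector<std::string> openRanges;
    std::size_t pushCount = 0;
    std::size_t popCount = 0;

    void PushRange(const std::string& name) {
        openRanges.push_back(name);
        ++pushCount;
    }

    void PopRange() {
        openRanges.pop_back();
        ++popCount;
    }
};

// Pushes a range on construction and pops it on destruction, so the
// number of Pop calls always equals the number of Push calls, even on
// early return or exception.
template <typename Profiler>
class ScopedRange {
public:
    ScopedRange(Profiler& profiler, const std::string& name)
        : profiler_(profiler) {
        profiler_.PushRange(name);
    }

    ~ScopedRange() { profiler_.PopRange(); }

    // Non-copyable: a copy would pop the same range twice.
    ScopedRange(const ScopedRange&) = delete;
    ScopedRange& operator=(const ScopedRange&) = delete;

private:
    Profiler& profiler_;
};
```

Nested ranges then follow C++ scoping: an inner ScopedRange declared inside the scope of an outer one is popped first, which matches the nesting order the Push/Pop APIs expect.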

2.9.3. Sample code#

CUPTI ships a sample, range_profiling, which showcases the API usage. The sample has two files, range_profiling.h and range_profiling.cu.

For early prototypes, users can simply take the range_profiling.h header file, which has all the wrapper functions for host and target operations, and use it in their application for collecting profiling data.

// Enable Range profiler
pRangeProfilerTarget->EnableRangeProfiler();

// Create CounterData Image
std::vector<uint8_t> counterDataImage;
pRangeProfilerTarget->CreateCounterDataImage(args.metrics, counterDataImage);

// Set range profiler configuration
// Note: the Push/Pop ranges below apply in User range mode (see Range Modes above)
pRangeProfilerTarget->SetConfig(CUPTI_AutoRange, CUPTI_KernelReplay, configImage, counterDataImage);

do
{
    // Start Range Profiling
    pRangeProfilerTarget->StartRangeProfiler();

    {
        // Push Range (Level 1)
        pRangeProfilerTarget->PushRange("VectorAdd");

        // Launch CUDA workload
        vectorLaunchWorkLoad.LaunchKernel();

        {
            // Push Range (Level 2)
            pRangeProfilerTarget->PushRange("Nested VectorAdd");

            vectorLaunchWorkLoad.LaunchKernel();

            // Pop Range (Level 2)
            pRangeProfilerTarget->PopRange();
        }

        // Pop Range (Level 1)
        pRangeProfilerTarget->PopRange();
    }

    vectorLaunchWorkLoad.LaunchKernel();

    // Stop Range Profiling
    pRangeProfilerTarget->StopRangeProfiler();
}
while (!pRangeProfilerTarget->IsAllPassSubmitted());

// Get Profiler Data
pRangeProfilerTarget->DecodeCounterData();

// Evaluate the results
size_t numRanges = 0;
pCuptiProfilerHost->GetNumOfRanges(counterDataImage, numRanges);
for (size_t rangeIndex = 0; rangeIndex < numRanges; ++rangeIndex)
{
    pCuptiProfilerHost->EvaluateCounterData(rangeIndex, args.metrics, counterDataImage);
}

pCuptiProfilerHost->PrintProfilerRanges();

// Clean up