Install NVIDIA GPU Operator

Install Helm

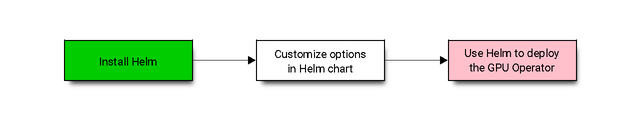

The preferred way to deploy the GPU Operator is with Helm.

$ curl -fsSL -o get_helm.sh https://raw.githubusercontent.com/helm/helm/master/scripts/get-helm-3 \
    && chmod 700 get_helm.sh \
    && ./get_helm.sh

Now, add the NVIDIA Helm repository:

$ helm repo add nvidia https://helm.ngc.nvidia.com/nvidia \
    && helm repo update

Install the GPU Operator

The GPU Operator Helm chart offers a number of customizable options that can be configured depending on your environment.

Chart Customization Options

The following options are available when using the Helm chart. These options can be used with --set when installing via Helm.

| Parameter | Description | Default |
|---|---|---|
|  | When set to |  |
|  | When set to Pods can specify |  |
|  | When set to |  |
|  | Map of custom annotations to add to all GPU Operator managed pods. |  |
|  | Map of custom labels to add to all GPU Operator managed pods. |  |
|  | By default, the Operator deploys NVIDIA drivers as a container on the system. Set this value to |  |
|  | The images are downloaded from NGC. Specify another image repository when using custom driver images. |  |
|  | Controls whether the driver daemonset should build and load the |  |
|  | Indicate if MOFED is directly pre-installed on the host. This is used to build and load |  |
|  | By default, the driver container has an initial delay of |  |
|  | When set to |  |
|  | Version of the NVIDIA datacenter driver supported by the Operator. If you set | Depends on the version of the Operator. See the Component Matrix for more information on supported drivers. |
|  | The GPU Operator deploys NVIDIA Kata Manager when this field is |  |
|  | Controls the strategy to be used with MIG on supported NVIDIA GPUs. Options are either |  |
|  | The MIG manager watches for changes to the MIG geometry and applies reconfiguration as needed. By default, the MIG manager only runs on nodes with GPUs that support MIG (for example, A100). |  |
|  | Deploys Node Feature Discovery plugin as a daemonset. Set this variable to |  |
|  | Installs node feature rules that are related to confidential computing. NFD uses the rules to detect security features in CPUs and NVIDIA GPUs. Set this variable to |  |
|  | DEPRECATED as of v1.9 |  |
|  | Map of custom labels that will be added to all GPU Operator managed pods. |  |
|  | The GPU operator deploys |  |
|  | By default, the Operator deploys the NVIDIA Container Toolkit ( |  |

Namespace

Prior to GPU Operator v1.9, the operator was installed in the default namespace while all operands were installed in the gpu-operator-resources namespace.

Starting with GPU Operator v1.9, both the operator and operands are installed in the same namespace.

The namespace is configurable and is determined during installation. For example, to install the GPU Operator in the gpu-operator namespace:

$ helm install --wait --generate-name \
    -n gpu-operator --create-namespace \
    nvidia/gpu-operator

If a namespace is not specified during installation, all GPU Operator components will be installed in the default namespace.

Operands

By default, the GPU Operator operands are deployed on all GPU worker nodes in the cluster. GPU worker nodes are identified by the presence of the label feature.node.kubernetes.io/pci-10de.present=true, where 0x10de is the PCI vendor ID assigned to NVIDIA.

To disable operands from getting deployed on a GPU worker node, label the node with nvidia.com/gpu.deploy.operands=false.

$ kubectl label nodes $NODE nvidia.com/gpu.deploy.operands=false
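The two labels described above can be wrapped in small helper functions. This is only a sketch: the function names and the overridable KUBECTL variable are hypothetical conveniences, not part of the GPU Operator. The label keys and values are the ones given in this section.

```shell
# KUBECTL is a hypothetical override hook; set KUBECTL=echo to preview commands.
KUBECTL="${KUBECTL:-kubectl}"

# Label that identifies GPU worker nodes (from the text above).
GPU_SELECTOR="feature.node.kubernetes.io/pci-10de.present=true"

# List the nodes that are treated as GPU worker nodes.
list_gpu_nodes() {
  $KUBECTL get nodes -l "$GPU_SELECTOR"
}

# Stop operands from being deployed on one node.
disable_operands() {
  $KUBECTL label nodes "$1" nvidia.com/gpu.deploy.operands=false --overwrite
}

# Example (node names are placeholders):
#   for node in gpu-node-1 gpu-node-2; do disable_operands "$node"; done
```

Running with KUBECTL=echo prints each command instead of executing it, which can be useful for reviewing the node list before labeling.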

Common Deployment Scenarios

In this section, we present some common deployment recipes when using the Helm chart to install the GPU Operator.

Bare-metal/Passthrough with default configurations on Ubuntu

In this scenario, the default configuration options are used:

$ helm install --wait --generate-name \
    -n gpu-operator --create-namespace \
    nvidia/gpu-operator

To install on Secure Boot systems or to use precompiled modules, refer to Precompiled Driver Containers.

Bare-metal/Passthrough with default configurations on Red Hat Enterprise Linux

In this scenario, use the NVIDIA Container Toolkit image that is built on UBI 8:

$ helm install --wait --generate-name \
    -n gpu-operator --create-namespace \
    nvidia/gpu-operator \
    --set toolkit.version=1.13.4-ubi8

Replace the 1.13.4 value in the preceding command with the version that is supported with the NVIDIA GPU Operator. Refer to the GPU Operator Component Matrix on the platform support page.

When using RHEL 8 with Kubernetes, SELinux must be enabled, in either permissive or enforcing mode, for use with the GPU Operator. Additionally, network-restricted environments are not supported.

Bare-metal/Passthrough with default configurations on CentOS

In this scenario, use the NVIDIA Container Toolkit image that is built on CentOS:

$ helm install --wait --generate-name \
    -n gpu-operator --create-namespace \
    nvidia/gpu-operator \
    --set toolkit.version=1.13.4-centos7

For CentOS 8 systems, use the UBI 8 image: toolkit.version=1.13.4-ubi8.

Replace the 1.13.4 value in the preceding command with the version that is supported with the NVIDIA GPU Operator. Refer to the GPU Operator Component Matrix on the platform support page.

You can also refer to the tags for the NVIDIA Container Toolkit image from the NVIDIA NGC Catalog.
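The suffix choices in the RHEL and CentOS scenarios above can be summarized as a small lookup. This is a sketch only: the function name toolkit_suffix is hypothetical, and 1.13.4 is the placeholder version used in this document, which should be replaced with a supported version.

```shell
# Map an OS ID and major version to the toolkit image suffix named in this section.
toolkit_suffix() {
  case "$1:$2" in
    rhel:8|centos:8) echo "ubi8" ;;     # RHEL 8 and CentOS 8 use the UBI 8 image
    centos:7)        echo "centos7" ;;  # CentOS 7 uses the CentOS image
    *) echo "no suffix listed for $1 $2" >&2; return 1 ;;
  esac
}

# Build the value to pass to --set (1.13.4 is the placeholder used above).
TOOLKIT_BASE_VERSION="1.13.4"
echo "toolkit.version=${TOOLKIT_BASE_VERSION}-$(toolkit_suffix centos 7)"
```

On a node, sourcing /etc/os-release exposes ID and VERSION_ID, so the arguments could be supplied as "$ID" and "${VERSION_ID%%.*}".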

NVIDIA vGPU

Note

The GPU Operator with NVIDIA vGPUs requires additional steps to build a private driver image prior to install. Refer to the document NVIDIA vGPU for detailed instructions on the workflow and required values of the variables used in this command.

The command below will install the GPU Operator with its default configuration for vGPU:

$ helm install --wait --generate-name \
    -n gpu-operator --create-namespace \
    nvidia/gpu-operator \
    --set driver.repository=$PRIVATE_REGISTRY \
    --set driver.version=$VERSION \
    --set driver.imagePullSecrets={$REGISTRY_SECRET_NAME} \
    --set driver.licensingConfig.configMapName=licensing-config
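Because the command above depends on three shell variables, an unset variable would silently pass an empty value to Helm. A guard such as the following can catch that before installing. The function name require_vars is hypothetical; the variable names are the ones used in the command.

```shell
# Return non-zero and report each named variable that is unset or empty.
require_vars() {
  missing=0
  for name in "$@"; do
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing required variable: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example: run the check first and install only if it succeeds.
#   require_vars PRIVATE_REGISTRY VERSION REGISTRY_SECRET_NAME && helm install ...
```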

NVIDIA AI Enterprise

Refer to GPU Operator with NVIDIA AI Enterprise.

Bare-metal/Passthrough with pre-installed NVIDIA drivers

In this example, the user has already pre-installed NVIDIA drivers as part of the system image:

$ helm install --wait --generate-name \
    -n gpu-operator --create-namespace \
    nvidia/gpu-operator \
    --set driver.enabled=false

Bare-metal/Passthrough with pre-installed drivers and NVIDIA Container Toolkit

In this example, the user has already pre-installed the NVIDIA drivers and NVIDIA Container Toolkit (nvidia-docker2) as part of the system image.

Note

These steps should be followed when using the GPU Operator v1.9+ on DGX A100 systems with DGX OS 5.1+.

Before installing the operator, ensure that the following configurations are modified depending on the container runtime configured in your cluster.

Docker:

Update the Docker configuration to add nvidia as the default runtime. The nvidia runtime should be set up as the default container runtime for Docker on GPU nodes. This can be done by adding the default-runtime line to the Docker daemon config file, which is usually located at /etc/docker/daemon.json:

{
    "default-runtime": "nvidia",
    "runtimes": {
        "nvidia": {
            "path": "/usr/bin/nvidia-container-runtime",
            "runtimeArgs": []
        }
    }
}

Restart the Docker daemon to complete the installation after setting the default runtime:

$ sudo systemctl restart docker

Containerd:

Update containerd to use nvidia as the default runtime and add the nvidia runtime configuration. This can be done by adding the following config to /etc/containerd/config.toml and restarting the containerd service.

version = 2
[plugins]
  [plugins."io.containerd.grpc.v1.cri"]
    [plugins."io.containerd.grpc.v1.cri".containerd]
      default_runtime_name = "nvidia"

      [plugins."io.containerd.grpc.v1.cri".containerd.runtimes]
        [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.nvidia]
          privileged_without_host_devices = false
          runtime_engine = ""
          runtime_root = ""
          runtime_type = "io.containerd.runc.v2"
          [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.nvidia.options]
            BinaryName = "/usr/bin/nvidia-container-runtime"

Restart the containerd daemon to complete the installation after setting the default runtime:

$ sudo systemctl restart containerd
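Before installing the operator, it can help to confirm that the runtime configuration files really name nvidia as the default. The checks below are a minimal sketch: the function names are hypothetical, and they only inspect the files at the paths mentioned above, not the running daemons.

```shell
# Succeeds if the Docker daemon config sets "default-runtime": "nvidia".
docker_default_is_nvidia() {
  grep -Eq '"default-runtime"[[:space:]]*:[[:space:]]*"nvidia"' \
    "${1:-/etc/docker/daemon.json}"
}

# Succeeds if the containerd config sets default_runtime_name = "nvidia".
containerd_default_is_nvidia() {
  grep -Eq '^[[:space:]]*default_runtime_name[[:space:]]*=[[:space:]]*"nvidia"' \
    "${1:-/etc/containerd/config.toml}"
}

# Example:
#   docker_default_is_nvidia && echo "Docker default runtime is nvidia"
#   containerd_default_is_nvidia && echo "containerd default runtime is nvidia"
```

Each function accepts an alternative file path as its first argument for installations that keep the configuration elsewhere.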

Install the GPU operator with the following options:

$ helm install --wait --generate-name \
    -n gpu-operator --create-namespace \
    nvidia/gpu-operator \
    --set driver.enabled=false \
    --set toolkit.enabled=false

Bare-metal/Passthrough with pre-installed NVIDIA Container Toolkit (but no drivers)

In this example, the user has already pre-installed the NVIDIA Container Toolkit (nvidia-docker2) as part of the system image.

Before installing the operator, ensure that the following configurations are modified depending on the container runtime configured in your cluster.

Docker:

Update the Docker configuration to add nvidia as the default runtime. The nvidia runtime should be set up as the default container runtime for Docker on GPU nodes. This can be done by adding the default-runtime line to the Docker daemon config file, which is usually located at /etc/docker/daemon.json:

{
    "default-runtime": "nvidia",
    "runtimes": {
        "nvidia": {
            "path": "/usr/bin/nvidia-container-runtime",
            "runtimeArgs": []
        }
    }
}

Restart the Docker daemon to complete the installation after setting the default runtime:

$ sudo systemctl restart docker

Containerd:

Update containerd to use nvidia as the default runtime and add the nvidia runtime configuration. This can be done by adding the following config to /etc/containerd/config.toml and restarting the containerd service.

version = 2
[plugins]
  [plugins."io.containerd.grpc.v1.cri"]
    [plugins."io.containerd.grpc.v1.cri".containerd]
      default_runtime_name = "nvidia"

      [plugins."io.containerd.grpc.v1.cri".containerd.runtimes]
        [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.nvidia]
          privileged_without_host_devices = false
          runtime_engine = ""
          runtime_root = ""
          runtime_type = "io.containerd.runc.v2"
          [plugins."io.containerd.grpc.v1.cri".containerd.runtimes.nvidia.options]
            BinaryName = "/usr/bin/nvidia-container-runtime"

Restart the containerd daemon to complete the installation after setting the default runtime:

$ sudo systemctl restart containerd

Configure the toolkit to use /run/nvidia/driver, the path mounted by the driver container, as the root directory of the driver installation:

$ sudo sed -i 's/^#root/root/' /etc/nvidia-container-runtime/config.toml
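To see what the substitution does without touching the real file, the same expression can be applied to a scratch copy. The sample file content below is an assumption about what the commented-out line looks like; check the actual /etc/nvidia-container-runtime/config.toml on your node.

```shell
# Create a scratch file with an assumed commented-out root entry.
sample="$(mktemp)"
printf '%s\n' '[nvidia-container-cli]' '#root = "/run/nvidia/driver"' > "$sample"

# Apply the same substitution (without -i, so the result is only printed).
result="$(sed 's/^#root/root/' "$sample")"
echo "$result"

rm -f "$sample"
```

After running the real command, `grep '^root' /etc/nvidia-container-runtime/config.toml` should show the uncommented line if the substitution matched.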

Once these steps are complete, install the GPU Operator with the following options (which will provision a driver):

$ helm install --wait --generate-name \
    -n gpu-operator --create-namespace \
    nvidia/gpu-operator \
    --set toolkit.enabled=false

Custom driver image (based off a specific driver version)

If you want to use a custom driver container image (for example, for driver version 465.27), you need to build a new driver container image. Follow these steps:

Rebuild the driver container by specifying the DRIVER_VERSION build argument when building the Docker image. For reference, the driver container Dockerfiles are available in the Git repository. Build the container using the appropriate Dockerfile. For example:

$ docker build --pull \
    --build-arg DRIVER_VERSION=465.27 \
    -t nvidia/driver:465.27-ubuntu20.04 \
    --file Dockerfile .

Ensure that the driver container is tagged as shown in the example by using the driver:<version>-<os> schema.

Specify the new driver image and repository by overriding the defaults in the Helm install command. For example:

$ helm install --wait --generate-name \
    -n gpu-operator --create-namespace \
    nvidia/gpu-operator \
    --set driver.repository=docker.io/nvidia \
    --set driver.version="465.27"

Note that these instructions are provided for reference and evaluation purposes. Not using the standard releases of the GPU Operator from NVIDIA would mean limited support for such custom configurations.
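The driver:<version>-<os> tagging schema can be made explicit with a small helper that assembles the full image reference from its parts. This is a sketch under the assumption that the repository, version, and OS combine as shown in the example above; the function name driver_image_ref is hypothetical.

```shell
# Compose <repository>/driver:<version>-<os> from its three parts.
driver_image_ref() {
  repository="$1"
  version="$2"
  os="$3"
  echo "${repository}/driver:${version}-${os}"
}

# Example using the values from this section.
driver_image_ref docker.io/nvidia 465.27 ubuntu20.04
```

Comparing this string with the tag passed to `docker build -t` is a quick way to catch a mismatch between the image that was built and the version given to Helm.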

Custom configuration for runtime containerd

When you use containerd as the container runtime, the following configuration options are used with the container-toolkit deployed with GPU Operator:

toolkit:
  env:
  - name: CONTAINERD_CONFIG
    value: /etc/containerd/config.toml
  - name: CONTAINERD_SOCKET
    value: /run/containerd/containerd.sock
  - name: CONTAINERD_RUNTIME_CLASS
    value: nvidia
  - name: CONTAINERD_SET_AS_DEFAULT
    value: "true"

These options are defined as follows:

- CONTAINERD_CONFIG: The path on the host to the containerd config you would like to have updated with support for the nvidia-container-runtime. By default this will point to /etc/containerd/config.toml (the default location for containerd). It should be customized if your containerd installation is not in the default location.

- CONTAINERD_SOCKET: The path on the host to the socket file used to communicate with containerd. The operator will use this to send a SIGHUP signal to the containerd daemon to reload its config. By default this will point to /run/containerd/containerd.sock (the default location for containerd). It should be customized if your containerd installation is not in the default location.

- CONTAINERD_RUNTIME_CLASS: The name of the Runtime Class you would like to associate with the nvidia-container-runtime. Pods launched with a runtimeClassName equal to CONTAINERD_RUNTIME_CLASS will always run with the nvidia-container-runtime. The default CONTAINERD_RUNTIME_CLASS is nvidia.

- CONTAINERD_SET_AS_DEFAULT: A flag indicating whether you want to set nvidia-container-runtime as the default runtime used to launch all containers. When set to false, only containers in pods with a runtimeClassName equal to CONTAINERD_RUNTIME_CLASS will be run with the nvidia-container-runtime. The default value is true.
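Since CONTAINERD_CONFIG and CONTAINERD_SOCKET must match the host's actual containerd layout, a quick check on a node can show whether the default paths apply. The function below is a hypothetical helper, not part of the operator; it only tests that a regular file and a socket exist at the given paths.

```shell
# Check that the containerd config file and socket exist at the given paths.
# Defaults are the default locations named in the option descriptions above.
check_containerd_paths() {
  config="${1:-/etc/containerd/config.toml}"
  socket="${2:-/run/containerd/containerd.sock}"
  status=0
  [ -f "$config" ] || { echo "config not found: $config" >&2; status=1; }
  [ -S "$socket" ] || { echo "socket not found: $socket" >&2; status=1; }
  return "$status"
}

# Example: run on a GPU node with the default paths.
#   check_containerd_paths || echo "customize CONTAINERD_CONFIG / CONTAINERD_SOCKET"
```

A failure here does not necessarily mean the installation is broken. It suggests that the two environment variables may need to point at non-default locations.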

Rancher Kubernetes Engine 2

For Rancher Kubernetes Engine 2 (RKE2), set the following in the ClusterPolicy.

toolkit:
  env:
  - name: CONTAINERD_CONFIG
    value: /var/lib/rancher/rke2/agent/etc/containerd/config.toml.tmpl
  - name: CONTAINERD_SOCKET
    value: /run/k3s/containerd/containerd.sock
  - name: CONTAINERD_RUNTIME_CLASS
    value: nvidia
  - name: CONTAINERD_SET_AS_DEFAULT
    value: "true"

These options can be passed to GPU Operator during install time as below.

helm install gpu-operator -n gpu-operator --create-namespace \
    nvidia/gpu-operator $HELM_OPTIONS \
    --set toolkit.env[0].name=CONTAINERD_CONFIG \
    --set toolkit.env[0].value=/var/lib/rancher/rke2/agent/etc/containerd/config.toml.tmpl \
    --set toolkit.env[1].name=CONTAINERD_SOCKET \
    --set toolkit.env[1].value=/run/k3s/containerd/containerd.sock \
    --set toolkit.env[2].name=CONTAINERD_RUNTIME_CLASS \
    --set toolkit.env[2].value=nvidia \
    --set toolkit.env[3].name=CONTAINERD_SET_AS_DEFAULT \
    --set-string toolkit.env[3].value=true
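As an alternative to the indexed --set flags, the same RKE2 settings can be kept in a values file and passed to Helm with -f. The sketch below writes such a file; the file name rke2-toolkit-values.yaml is a hypothetical choice, and the content mirrors the ClusterPolicy snippet above.

```shell
# Write the toolkit environment settings to a values file (hypothetical name).
cat > rke2-toolkit-values.yaml <<'EOF'
toolkit:
  env:
    - name: CONTAINERD_CONFIG
      value: /var/lib/rancher/rke2/agent/etc/containerd/config.toml.tmpl
    - name: CONTAINERD_SOCKET
      value: /run/k3s/containerd/containerd.sock
    - name: CONTAINERD_RUNTIME_CLASS
      value: nvidia
    - name: CONTAINERD_SET_AS_DEFAULT
      value: "true"
EOF

# Then install with the file instead of the indexed --set flags:
#   helm install gpu-operator -n gpu-operator --create-namespace \
#       nvidia/gpu-operator -f rke2-toolkit-values.yaml
```

Quoting "true" in the file keeps the value a string, which is the role --set-string plays in the command-line form.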

MicroK8s

For MicroK8s, set the following in the ClusterPolicy.

toolkit:
  env:
  - name: CONTAINERD_CONFIG
    value: /var/snap/microk8s/current/args/containerd-template.toml
  - name: CONTAINERD_SOCKET
    value: /var/snap/microk8s/common/run/containerd.sock
  - name: CONTAINERD_RUNTIME_CLASS
    value: nvidia
  - name: CONTAINERD_SET_AS_DEFAULT
    value: "true"

These options can be passed to GPU Operator during install time as below.

helm install gpu-operator -n gpu-operator --create-namespace \
    nvidia/gpu-operator $HELM_OPTIONS \
    --set toolkit.env[0].name=CONTAINERD_CONFIG \
    --set toolkit.env[0].value=/var/snap/microk8s/current/args/containerd-template.toml \
    --set toolkit.env[1].name=CONTAINERD_SOCKET \
    --set toolkit.env[1].value=/var/snap/microk8s/common/run/containerd.sock \
    --set toolkit.env[2].name=CONTAINERD_RUNTIME_CLASS \
    --set toolkit.env[2].value=nvidia \
    --set toolkit.env[3].name=CONTAINERD_SET_AS_DEFAULT \
    --set-string toolkit.env[3].value=true

Proxy Environments

Refer to the section Install GPU Operator in Proxy Environments for more information on how to install the Operator on clusters behind an HTTP proxy.

Air-gapped Environments

Refer to the section Install NVIDIA GPU Operator in Air-Gapped Environments for more information on how to install the Operator in air-gapped environments.

Multi-Instance GPU (MIG)

Refer to the document GPU Operator with MIG for more information on how to use the Operator with Multi-Instance GPU (MIG) on NVIDIA Ampere products. For information about configuring MIG support for the NVIDIA GPU Operator in an OpenShift Container Platform cluster, refer to MIG Support in OpenShift Container Platform.

KubeVirt / OpenShift Virtualization

Refer to the document GPU Operator with KubeVirt for more information on how to use the GPU Operator to provision GPU nodes for running KubeVirt virtual machines with access to GPU. For guidance on using the GPU Operator with OpenShift Virtualization, refer to the document NVIDIA GPU Operator with OpenShift Virtualization.

Outdated Kernels

Refer to the section Considerations when Installing with Outdated Kernels in Cluster for more information on how to install the Operator successfully when nodes in the cluster are not running the latest kernel.

Verify GPU Operator Install

Once the Helm chart is installed, check the status of the pods to ensure all the containers are running and the validation is complete:

$ kubectl get pods -n gpu-operator

NAME                                                          READY   STATUS      RESTARTS   AGE
gpu-feature-discovery-crrsq                                   1/1     Running     0          60s
gpu-operator-7fb75556c7-x8spj                                 1/1     Running     0          5m13s
gpu-operator-node-feature-discovery-master-58d884d5cc-w7q7b   1/1     Running     0          5m13s
gpu-operator-node-feature-discovery-worker-6rht2              1/1     Running     0          5m13s
gpu-operator-node-feature-discovery-worker-9r8js              1/1     Running     0          5m13s
nvidia-container-toolkit-daemonset-lhgqf                      1/1     Running     0          4m53s
nvidia-cuda-validator-rhvbb                                   0/1     Completed   0          54s
nvidia-dcgm-5jqzg                                             1/1     Running     0          60s
nvidia-dcgm-exporter-h964h                                    1/1     Running     0          60s
nvidia-device-plugin-daemonset-d9ntc                          1/1     Running     0          60s
nvidia-device-plugin-validator-cm2fd                          0/1     Completed   0          48s
nvidia-driver-daemonset-5xj6g                                 1/1     Running     0          4m53s
nvidia-mig-manager-89z9b                                      1/1     Running     0          4m53s
nvidia-operator-validator-bwx99                               1/1     Running     0          58s
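In a healthy install, every pod in the listing above is either Running or Completed. A small filter can pick out any pod in another state. This is a sketch: the function name not_ready_pods is hypothetical, and it assumes the default `kubectl get pods` column layout shown above, where STATUS is the third column.

```shell
# Read `kubectl get pods` output on stdin and print the names of pods
# whose STATUS is neither Running nor Completed (header line is skipped).
not_ready_pods() {
  awk 'NR > 1 && $3 != "Running" && $3 != "Completed" { print $1 }'
}

# Example:
#   kubectl get pods -n gpu-operator | not_ready_pods
```

Empty output suggests all pods have reached a steady state. Any names printed are candidates for `kubectl describe pod` or `kubectl logs` in the same namespace.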

You can now run some sample GPU workloads to verify that the Operator and its components are working correctly.