Allows a serialized functionally unsafe engine to be deserialized.

#include <NvInferRuntime.h>

Public Member Functions

| Return type | Member | Description |
|---|---|---|
| virtual | ~IRuntime () noexcept=default | |
| void | setDLACore (int32_t dlaCore) noexcept | Sets the DLA core used by the network. Defaults to -1. |
| int32_t | getDLACore () const noexcept | Get the DLA core that the engine executes on. |
| int32_t | getNbDLACores () const noexcept | Returns the number of DLA hardware cores accessible, or 0 if DLA is unavailable. |
| void | setGpuAllocator (IGpuAllocator *allocator) noexcept | Set the GPU allocator. |
| void | setErrorRecorder (IErrorRecorder *recorder) noexcept | Set the ErrorRecorder for this interface. |
| IErrorRecorder * | getErrorRecorder () const noexcept | Get the ErrorRecorder assigned to this interface. |
| ICudaEngine * | deserializeCudaEngine (void const *blob, std::size_t size) noexcept | Deserialize an engine from host memory. |
| ICudaEngine * | deserializeCudaEngine (IStreamReader &streamReader) | Deserialize an engine from a stream. |
| ILogger * | getLogger () const noexcept | Get the logger with which the runtime was created. |
| bool | setMaxThreads (int32_t maxThreads) noexcept | Set the maximum number of threads. |
| int32_t | getMaxThreads () const noexcept | Get the maximum number of threads that can be used by the runtime. |
| void | setTemporaryDirectory (char const *path) noexcept | Set the directory that will be used by this runtime for temporary files. |
| char const * | getTemporaryDirectory () const noexcept | Get the directory that will be used by this runtime for temporary files. |
| void | setTempfileControlFlags (TempfileControlFlags flags) noexcept | Set the tempfile control flags for this runtime. |
| TempfileControlFlags | getTempfileControlFlags () const noexcept | Get the tempfile control flags for this runtime. |
| IPluginRegistry & | getPluginRegistry () noexcept | Get the local plugin registry that can be used by the runtime. |
| IRuntime * | loadRuntime (char const *path) noexcept | Load IRuntime from the file. |
| void | setEngineHostCodeAllowed (bool allowed) noexcept | Set whether the runtime is allowed to deserialize engines with host executable code. |
| bool | getEngineHostCodeAllowed () const noexcept | Get whether the runtime is allowed to deserialize engines with host executable code. |

Protected Attributes

| Type | Member |
|---|---|
| apiv::VRuntime * | mImpl |

Additional Inherited Members

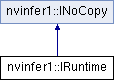

Protected Member Functions inherited from nvinfer1::INoCopy

| Return type | Member |
|---|---|
| | INoCopy ()=default |
| virtual | ~INoCopy ()=default |
| | INoCopy (INoCopy const &other)=delete |
| INoCopy & | operator= (INoCopy const &other)=delete |
| | INoCopy (INoCopy &&other)=delete |
| INoCopy & | operator= (INoCopy &&other)=delete |

Detailed Description

Allows a serialized functionally unsafe engine to be deserialized.

- Warning

- Do not inherit from this class, as doing so will break forward-compatibility of the API and ABI.

Constructor & Destructor Documentation

◆ ~IRuntime()

virtual, default, noexcept

Member Function Documentation

◆ deserializeCudaEngine() [1/2]

inline

Deserialize an engine from a stream.

If an error recorder has been set for the runtime, it will also be passed to the engine.

This deserialization path will reduce host memory usage when weight streaming is enabled.

- Parameters

- streamReader: A read-only stream from which TensorRT will deserialize a previously serialized engine.

- Returns

- The engine, or nullptr if it could not be deserialized.
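This overload pulls the plan through a reader instead of requiring the whole plan in one host buffer. The sketch below assumes the TensorRT 10 form of IStreamReader, in which an implementation provides int64_t read(void* destination, int64_t nbBytes) and returns the number of bytes it copied. FileStreamReader is a hypothetical name, and the class intentionally does not derive from nvinfer1::IStreamReader so that it compiles without TensorRT headers.

```cpp
#include <cstdint>
#include <fstream>
#include <string>

// Hypothetical file-backed reader. In a real build it would derive from
// nvinfer1::IStreamReader and mark read() as override; it is standalone
// here so the sketch compiles without TensorRT headers.
class FileStreamReader
{
public:
    explicit FileStreamReader(std::string const& path)
        : mFile(path, std::ios::binary)
    {
    }

    bool isOpen() const { return mFile.is_open(); }

    // Copy up to nbBytes into destination and return how many bytes were
    // actually copied. Returns 0 once end of file has been reached.
    int64_t read(void* destination, int64_t nbBytes)
    {
        if (!mFile || nbBytes <= 0)
        {
            return 0;
        }
        mFile.read(static_cast<char*>(destination), static_cast<std::streamsize>(nbBytes));
        // gcount() reports the partial count on a short read at end of file.
        return static_cast<int64_t>(mFile.gcount());
    }

private:
    std::ifstream mFile;
};
```

Once it derives from nvinfer1::IStreamReader, an instance would be passed as runtime->deserializeCudaEngine(reader), and a nullptr result still signals failure.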

◆ deserializeCudaEngine() [2/2]

inline, noexcept

Deserialize an engine from host memory.

If an error recorder has been set for the runtime, it will also be passed to the engine.

- Parameters

- blob: The memory that holds the serialized engine.

- size: The size of that memory, in bytes.

- Returns

- The engine, or nullptr if it could not be deserialized.
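A common way to obtain blob and size is to read a previously saved plan file into host memory. This is a minimal sketch; readPlanFile is a hypothetical helper name, and the file path is whatever the application chose when it saved the serialized engine.

```cpp
#include <fstream>
#include <iterator>
#include <stdexcept>
#include <string>
#include <vector>

// Read an entire serialized engine ("plan") file into host memory.
// The buffer's data() and size() map onto the blob and size parameters.
std::vector<char> readPlanFile(std::string const& path)
{
    std::ifstream file(path, std::ios::binary);
    if (!file)
    {
        throw std::runtime_error("cannot open plan file: " + path);
    }
    // istreambuf_iterator copies raw bytes and does not skip whitespace.
    return std::vector<char>(std::istreambuf_iterator<char>(file),
                             std::istreambuf_iterator<char>());
}
```

With a runtime created by nvinfer1::createInferRuntime(logger), the call would be runtime->deserializeCudaEngine(plan.data(), plan.size()). The whole plan stays resident in host memory during this call, which is the cost the stream overload is meant to reduce.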

◆ getDLACore()

inline, noexcept

Get the DLA core that the engine executes on.

- Returns

- The assigned DLA core, or -1 if DLA is not present or no core has been set.

◆ getEngineHostCodeAllowed()

inline, noexcept

Get whether the runtime is allowed to deserialize engines with host executable code.

- Returns

- Whether the runtime is allowed to deserialize engines with host executable code.

◆ getErrorRecorder()

inline, noexcept

Get the ErrorRecorder assigned to this interface.

Retrieves the error recorder assigned to this object. Returns nullptr if no error recorder has been set.

- Returns

- A pointer to the IErrorRecorder object that has been registered.

- See also

- setErrorRecorder()

◆ getLogger()

inline, noexcept

Get the logger with which the runtime was created.

- Returns

- The logger.

◆ getMaxThreads()

inline, noexcept

Get the maximum number of threads that can be used by the runtime.


- Returns

- The maximum number of threads that can be used by the runtime.

- See also

- setMaxThreads()

◆ getNbDLACores()

inline, noexcept

Returns the number of DLA hardware cores accessible, or 0 if DLA is unavailable.

◆ getPluginRegistry()

inline, noexcept

Get the local plugin registry that can be used by the runtime.

- Returns

- The local plugin registry that can be used by the runtime.

◆ getTempfileControlFlags()

inline, noexcept

Get the tempfile control flags for this runtime.

- Returns

- The flags currently set.

◆ getTemporaryDirectory()

inline, noexcept

Get the directory that will be used by this runtime for temporary files.

- Returns

- A path to the temporary directory in use, or nullptr if no path is specified.

- See also

- setTemporaryDirectory()

◆ loadRuntime()

inline, noexcept

Load IRuntime from the file.

This method loads a runtime library from a shared library file. The loaded runtime can then execute a plan file that was built with both BuilderFlag::kVERSION_COMPATIBLE and BuilderFlag::kEXCLUDE_LEAN_RUNTIME set, using the same version of TensorRT as the loaded runtime library.

- Parameters

- path: Path to the lean runtime library.

- Returns

- The runtime library, or nullptr if it could not be loaded.

- Warning

- The path string must be null-terminated, and be at most 4096 bytes including the terminator.
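Because the limit counts the terminator, a path is acceptable only when its length in bytes is at most 4095. The sketch below checks that limit (and rejects embedded null bytes, which would silently truncate the C string) before a path is handed to loadRuntime(). fitsTrtPathLimit and kMaxTrtPathBytes are hypothetical names; the operating system may still impose a stricter limit.

```cpp
#include <cstddef>
#include <string>

// Documented limit for the path argument: 4096 bytes including the
// null terminator.
constexpr std::size_t kMaxTrtPathBytes = 4096;

// True if the path fits the limit and has no embedded null byte, which
// would cut the string short once passed as char const*.
bool fitsTrtPathLimit(std::string const& path)
{
    bool const hasEmbeddedNull = path.find('\0') != std::string::npos;
    return !hasEmbeddedNull && path.size() + 1 <= kMaxTrtPathBytes;
}
```

A caller would check fitsTrtPathLimit(path) and only then call runtime->loadRuntime(path.c_str()), still treating a nullptr return as a load failure.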

◆ setDLACore()

inline, noexcept

Sets the DLA core used by the network. Defaults to -1.

- Parameters

- dlaCore: The DLA core to execute the engine on, in the range [0, getNbDLACores()).

This function is used to specify which DLA core to use via indexing, if multiple DLA cores are available.

- Warning

- If getNbDLACores() returns 0, this function does nothing.

- See also

- getDLACore()
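Since a valid index must lie in [0, getNbDLACores()), a caller can validate a requested core before calling setDLACore(). This sketch uses a hypothetical helper, selectDlaCore, that returns -1 (the documented default) for any out-of-range request rather than clamping, so a misconfigured index never silently lands on a different core.

```cpp
#include <cstdint>

// Returns requested if it is a valid DLA core index for nbDlaCores cores,
// otherwise -1. nbDlaCores would come from IRuntime::getNbDLACores().
int32_t selectDlaCore(int32_t requested, int32_t nbDlaCores)
{
    if (nbDlaCores <= 0)
    {
        return -1; // DLA unavailable: setDLACore() would do nothing anyway.
    }
    return (requested >= 0 && requested < nbDlaCores) ? requested : -1;
}
```

Typical use: compute core = selectDlaCore(0, runtime->getNbDLACores()) and call runtime->setDLACore(core) only when core is not -1.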

◆ setEngineHostCodeAllowed()

inline, noexcept

Set whether the runtime is allowed to deserialize engines with host executable code.

- Parameters

- allowed: Whether the runtime is allowed to deserialize engines with host executable code.

The default value is false.

◆ setErrorRecorder()

inline, noexcept

Set the ErrorRecorder for this interface.

Assigns the ErrorRecorder to this interface. The ErrorRecorder will track all errors during execution. This function will call incRefCount of the registered ErrorRecorder at least once. Setting recorder to nullptr unregisters the recorder with the interface, resulting in a call to decRefCount if a recorder has been registered.

If an error recorder is not set, messages will be sent to the global log stream.

- Parameters

- recorder: The error recorder to register with this interface.

- See also

- getErrorRecorder()
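Registration relies on the recorder's reference counting: the runtime calls incRefCount() at least once, and unregistering with nullptr triggers decRefCount(). The sketch shows only that counting part, assuming the incRefCount()/decRefCount() noexcept methods and the int32_t RefCount type of nvinfer1::IErrorRecorder. CountingRecorder is hypothetical, and a real recorder would derive from IErrorRecorder and implement its error-reporting methods as well.

```cpp
#include <atomic>
#include <cstdint>

// Reference-counting portion of a hypothetical error recorder.
class CountingRecorder
{
public:
    using RefCount = int32_t;

    // Called when the recorder is registered; may happen more than once.
    RefCount incRefCount() noexcept { return ++mRefCount; }

    // Called when the recorder is unregistered, e.g. setErrorRecorder(nullptr).
    RefCount decRefCount() noexcept { return --mRefCount; }

    RefCount refCount() const noexcept { return mRefCount.load(); }

private:
    // Atomic because the recorder may be shared with engines and threads.
    std::atomic<RefCount> mRefCount{0};
};
```

Because only "at least once" is guaranteed, the sketch makes no assumption about how many references the runtime takes; owning code can check refCount() before destroying the recorder.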

◆ setGpuAllocator()

inline, noexcept

Set the GPU allocator.

- Parameters

- allocator: The GPU allocator to be used by the runtime. All GPU memory acquired will use this allocator. If nullptr is passed, the default allocator will be used.

Default: uses cudaMalloc/cudaFree.

◆ setMaxThreads()

inline, noexcept

Set the maximum number of threads.

- Parameters

- maxThreads: The maximum number of threads that can be used by the runtime.

- Returns

- True if successful, false otherwise.

The default value is 1 and includes the current thread. A value greater than 1 permits TensorRT to use multi-threaded algorithms. A value less than 1 triggers a kINVALID_ARGUMENT error.
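Because any value below 1 is rejected, a thread budget derived from the hardware should never fall under 1. The sketch below uses std::thread::hardware_concurrency(), which may return 0 when the count is unknown, and an application-chosen cap; chooseMaxThreads and the cap parameter are hypothetical.

```cpp
#include <cstdint>
#include <thread>

// Pick a value for setMaxThreads(): never below 1 (values below 1 trigger
// kINVALID_ARGUMENT), and never above cap when cap is at least 1.
int32_t chooseMaxThreads(unsigned int hardwareThreads, int32_t cap)
{
    // hardware_concurrency() reports 0 when it cannot determine the count.
    int32_t n = hardwareThreads == 0 ? 1 : static_cast<int32_t>(hardwareThreads);
    if (cap >= 1 && n > cap)
    {
        n = cap;
    }
    return n;
}
```

Usage: bool ok = runtime->setMaxThreads(chooseMaxThreads(std::thread::hardware_concurrency(), 4)); the boolean result should still be checked.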

◆ setTempfileControlFlags()

inline, noexcept

Set the tempfile control flags for this runtime.

- Parameters

- flags: The flags to set.

The default value is all flags set, i.e.

(1U << static_cast<uint32_t>(kALLOW_IN_MEMORY_FILES)) | (1U << static_cast<uint32_t>(kALLOW_TEMPORARY_FILES))
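The default expression above is an ordinary bitmask with one bit per flag. To build it without TensorRT headers, this sketch mirrors the enum locally and assumes the enumerator values 0 and 1; real code should use nvinfer1::TempfileControlFlag directly. It also derives a hypothetical stricter policy that clears kALLOW_TEMPORARY_FILES while keeping kALLOW_IN_MEMORY_FILES.

```cpp
#include <cstdint>

// Local mirror of nvinfer1::TempfileControlFlag (values are an assumption).
enum class TempfileControlFlag : int32_t
{
    kALLOW_IN_MEMORY_FILES = 0,
    kALLOW_TEMPORARY_FILES = 1,
};
using TempfileControlFlags = uint32_t;

// One bit per flag, matching the (1U << static_cast<uint32_t>(flag)) pattern.
constexpr TempfileControlFlags flagBit(TempfileControlFlag f)
{
    return 1U << static_cast<uint32_t>(f);
}

// The documented default: all flags set.
constexpr TempfileControlFlags kDefaultFlags =
    flagBit(TempfileControlFlag::kALLOW_IN_MEMORY_FILES)
    | flagBit(TempfileControlFlag::kALLOW_TEMPORARY_FILES);

// Hypothetical policy: clear the temporary-files bit, keep in-memory files.
constexpr TempfileControlFlags kInMemoryOnly =
    kDefaultFlags & ~flagBit(TempfileControlFlag::kALLOW_TEMPORARY_FILES);
```

The resulting mask would be applied with runtime->setTempfileControlFlags(kInMemoryOnly) and read back with getTempfileControlFlags().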

◆ setTemporaryDirectory()

inline, noexcept

Set the directory that will be used by this runtime for temporary files.

On some platforms the TensorRT runtime may need to create and use temporary files with read/write/execute permissions to implement runtime functionality.

- Parameters

- path: Path to the temporary directory for use, or nullptr.

If path is nullptr, then TensorRT will use platform-specific heuristics to pick a default temporary directory if required:

- On UNIX/Linux platforms, TensorRT will first try the TMPDIR environment variable, then fall back to /tmp.

- On Windows, TensorRT will try the TEMP environment variable.

See the TensorRT Developer Guide for more information.

The default value is nullptr.
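The UNIX/Linux fallback order described above can be mirrored in application code, for example to log which directory is likely in use when no path was set. This is an illustration of the documented order, not TensorRT's implementation; resolveUnixTempDir is hypothetical, and treating an empty TMPDIR as unset is an assumption made here. The environment value is passed in so the logic can be tested.

```cpp
#include <string>

// Documented order for a nullptr temporary directory on UNIX/Linux:
// TMPDIR if set, otherwise /tmp. An empty TMPDIR is treated as unset
// (an assumption of this sketch).
std::string resolveUnixTempDir(char const* tmpdirEnv)
{
    if (tmpdirEnv != nullptr && tmpdirEnv[0] != '\0')
    {
        return tmpdirEnv;
    }
    return "/tmp";
}
```

A caller would pass std::getenv("TMPDIR") from <cstdlib>. Calling setTemporaryDirectory() with an explicit path avoids relying on the heuristic at all.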

- Warning

- If path is not nullptr, it must be a non-empty string representing a relative or absolute path in the format expected by the host operating system.

- The string path must be null-terminated, and be at most 4096 bytes including the terminator. Note that the operating system may have stricter path length requirements.

- The process using TensorRT must have rwx permissions for the temporary directory, and the directory shall be configured to disallow other users from modifying created files (e.g. on Linux, if the directory is shared with other users, the sticky bit must be set).

- See also

- getTemporaryDirectory()

Member Data Documentation

◆ mImpl

protected
