Key Components of DGX SuperPOD

The DGX SuperPOD architecture is designed to maximize performance for state-of-the-art model training and inference, scale to exaflops of performance, deliver the highest storage performance, and serve customers in the enterprise, higher education, research, and public sectors. It is a digital twin of NVIDIA’s main research and development system, meaning the company’s software, applications, and support structure are first tested and vetted on the same architecture. Because the system is built from scalable units (SUs), deployment times are reduced from months to weeks. Leveraging the DGX SuperPOD design reduces the time-to-solution and time-to-market of next-generation models and applications.

DGX SuperPOD integrates key NVIDIA components with storage solutions from partners certified to work in the DGX SuperPOD environment.

NVIDIA DGX RUBIN NVL8 System

The NVIDIA DGX RUBIN NVL8 system (Figure 2) is an AI powerhouse that enables enterprises to expand the frontiers of business innovation and optimization. It delivers breakthrough AI performance with the most powerful chips ever built, in an eight-GPU configuration. The NVIDIA Rubin GPU architecture provides the latest technologies that can bring months of computational effort down to days or hours on some of the largest AI/ML workloads.

Figure 2 DGX RUBIN NVL8 System

Key highlights of the DGX RUBIN NVL8 system compared to the DGX B300 system include:

DC busbar-powered, liquid-cooled, MGX rack-compatible design for high-density deployment in modern data centers

800 Gbps NVIDIA Spectrum-X™ or Quantum-X800 InfiniBand XDR-based compute fabric

140 petaFLOPS FP8 training and 400 petaFLOPS FP4 inference

Sixth-generation NVIDIA NVLink

Eight Rubin GPUs

2304 GB of aggregated HBM3 memory
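The headline figures above can be cross-checked with simple arithmetic. The sketch below derives per-GPU HBM capacity from the 2304 GB aggregate and scales the per-system FP8 and FP4 figures to one scalable unit of 72 systems (the SU size given under System Design). It assumes peak performance scales linearly with system count, which real workloads will not achieve exactly; the constant and function names are illustrative.

```python
# Illustrative arithmetic only: figures come from the system highlights above,
# and linear scaling across systems is an idealized assumption.
GPUS_PER_SYSTEM = 8
HBM_PER_SYSTEM_GB = 2304
FP8_PFLOPS_PER_SYSTEM = 140
FP4_PFLOPS_PER_SYSTEM = 400
SYSTEMS_PER_SU = 72


def hbm_per_gpu_gb() -> float:
    """Split the aggregate HBM capacity evenly across the eight GPUs."""
    return HBM_PER_SYSTEM_GB / GPUS_PER_SYSTEM


def peak_exaflops(pflops_per_system: float, systems: int) -> float:
    """Idealized peak for `systems` nodes, converted from petaFLOPS to exaFLOPS."""
    return pflops_per_system * systems / 1000


if __name__ == "__main__":
    print(f"HBM per GPU: {hbm_per_gpu_gb():.0f} GB")
    print(f"FP8 peak per SU: {peak_exaflops(FP8_PFLOPS_PER_SYSTEM, SYSTEMS_PER_SU):.2f} EF")
    print(f"FP4 peak per SU: {peak_exaflops(FP4_PFLOPS_PER_SYSTEM, SYSTEMS_PER_SU):.2f} EF")
```

These are theoretical peaks; achieved throughput depends on the model, precision, and communication overhead.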

MGX Racks and DGX Power Shelves

The DGX RUBIN NVL8 system features a DC busbar-powered MGX design that leverages the groundbreaking modularity of the DGX GB200/GB300 systems. This design enables higher rack density and better power efficiency than designs that rely on individual power supply units (PSUs). It also provides data center-level compatibility with DGX GB200/GB300 systems.

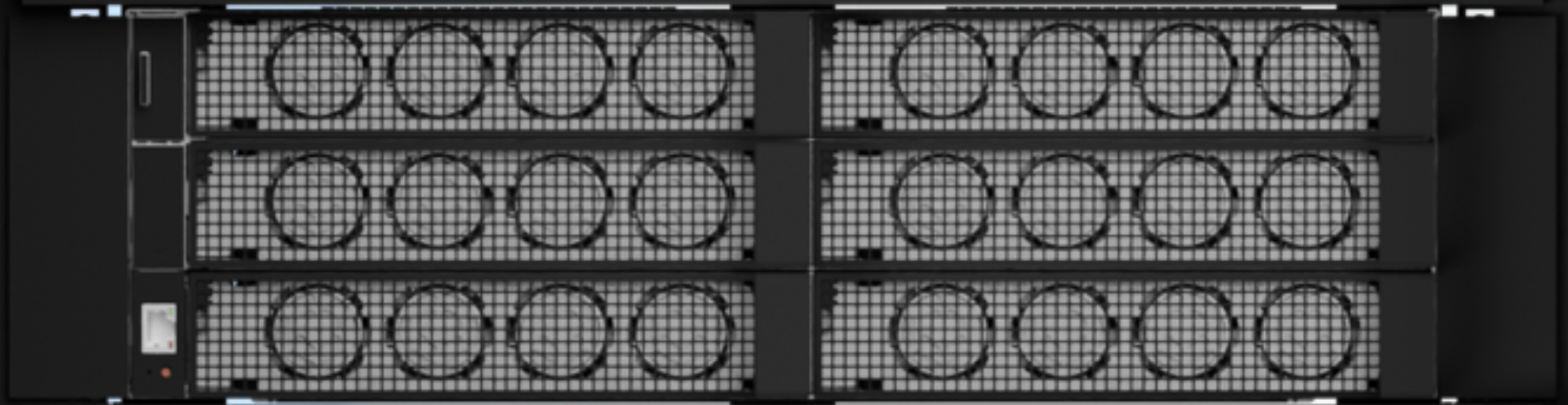

The DGX RUBIN NVL8 SuperPOD power design uses four 110 kW power shelves configured for N+N redundancy, which together can deliver up to 220 kW of power. At the rear of each power shelf is a set of RJ45 ports used for the power brake and current sharing features; the power shelves are daisy-chained to each other through these ports. At the front of the power shelf is the Baseboard Management Controller (BMC) port. Figure 3 shows the front of the power shelf.
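The N+N arithmetic in the paragraph above can be made explicit. Under N+N redundancy the shelves form two equal groups, and either group alone must carry the full load, so only half the installed rating counts toward usable capacity: four 110 kW shelves yield 220 kW. The sketch below encodes that rule with the 110 kW rating from the text; the load value in the usage line is a hypothetical input, not a published DGX RUBIN NVL8 power figure.

```python
# Sketch of N+N power-shelf capacity: shelves are split into two equal groups,
# and either group alone must be able to carry the full load.
SHELF_RATING_KW = 110


def usable_capacity_kw(num_shelves: int, shelf_kw: float = SHELF_RATING_KW) -> float:
    """Capacity available under N+N redundancy: only N of the 2N shelves count."""
    if num_shelves <= 0 or num_shelves % 2 != 0:
        raise ValueError("N+N redundancy needs a positive, even number of shelves")
    return (num_shelves // 2) * shelf_kw


def fits_with_redundancy(load_kw: float, num_shelves: int = 4) -> bool:
    """True if the load can still be carried after losing one group of shelves."""
    return load_kw <= usable_capacity_kw(num_shelves)


if __name__ == "__main__":
    print(usable_capacity_kw(4))
    print(fits_with_redundancy(180))  # 180 kW is a hypothetical load
```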

Figure 3 110 kW Power Shelf

NVIDIA InfiniBand Technology

InfiniBand is a high-performance, low-latency, Remote Direct Memory Access (RDMA)-capable networking technology, proven over 20 years in the harshest computing environments to provide the best inter-node network performance. InfiniBand continues to evolve and lead data center network performance.

NVIDIA InfiniBand XDR has a peak speed of 800 Gbps per direction with extremely low port-to-port latency, and it is backward compatible with previous generations of the InfiniBand specification. InfiniBand is more than peak bandwidth and low latency: it provides additional features to optimize performance, including Adaptive Routing (AR), collective communication with NVIDIA Scalable Hierarchical Aggregation and Reduction Protocol (SHARP)™, dynamic network healing with Self-Healing Interconnect Enhancement for Intelligent Datacenters (SHIELD), and support for several network topologies.
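The value of in-network collectives such as SHARP can be seen by comparing how many bytes each node must send during an allreduce. In the textbook ring algorithm each of N participants sends 2(N-1)/N times the buffer size, while idealized switch-based aggregation has each node send its buffer once up the reduction tree. The sketch below is a simplified bandwidth-only model that ignores latency, congestion, and protocol overhead; it uses the 800 Gbps XDR port speed from the text, and the buffer size and participant count are hypothetical.

```python
# Simplified, bandwidth-only model of allreduce traffic per node.
# Ignores latency, congestion, and protocol overhead; for intuition only.
LINK_GBPS = 800  # InfiniBand XDR peak per direction, from the text above


def ring_bytes_sent(buffer_bytes: int, n: int) -> float:
    """Ring allreduce: reduce-scatter plus allgather, 2(n-1)/n * buffer per node."""
    return 2 * (n - 1) / n * buffer_bytes


def in_network_bytes_sent(buffer_bytes: int) -> float:
    """Idealized switch aggregation: each node sends its buffer once."""
    return float(buffer_bytes)


def transfer_seconds(num_bytes: float, link_gbps: float = LINK_GBPS) -> float:
    """Time to push num_bytes through one link running at link_gbps gigabits/s."""
    return num_bytes * 8 / (link_gbps * 1e9)


if __name__ == "__main__":
    size = 1 << 30  # 1 GiB gradient buffer (hypothetical)
    n = 64          # number of participants (hypothetical)
    ring = transfer_seconds(ring_bytes_sent(size, n))
    sharp = transfer_seconds(in_network_bytes_sent(size))
    print(f"ring: {ring * 1e3:.2f} ms, in-network: {sharp * 1e3:.2f} ms")
```

For large N the ring figure approaches twice the buffer size, which is why in-network aggregation can roughly halve the data each endpoint sends in this model.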

NVIDIA BlueField-4

NVIDIA BlueField‑4 DPUs provide the secure, accelerated networking foundation for DGX Rubin NVL8 systems. They offload infrastructure services (networking, storage, and security) from CPU and GPU nodes, preserving GPU cycles for AI workloads and improving overall system efficiency.

NVIDIA DOCA

NVIDIA DOCA™ is the infrastructure software framework that programs NVIDIA BlueField‑4 DPUs and NVIDIA ConnectX‑9 SuperNICs as a unified infrastructure services platform for AI factories and DGX SuperPOD deployments. DOCA‑based services on BlueField‑4 handle telemetry, traffic shaping, in‑line threat detection, encryption, and storage data paths directly on the DPU, offloading these tasks from host CPUs to preserve GPU cycles for AI compute.

DOCA is part of the DGX SuperPOD software stack and is included in DGX OS.

NVIDIA ConnectX-9

The NVIDIA ConnectX-9 SuperNIC supercharges gigascale AI computing workloads with up to 800 gigabits per second (Gb/s) over InfiniBand or Ethernet. It delivers extremely fast, low-latency, and highly efficient network connectivity that significantly enhances system performance for AI factories and cloud platforms.

NVIDIA Mission Control

The DGX SuperPOD Reference Architecture represents best practices for building high-performance AI factories, with flexibility in how these systems are presented to customers and users. NVIDIA Mission Control software is used to manage all DGX SuperPOD deployments built on DGX RUBIN NVL8 systems.

NVIDIA Mission Control is a sophisticated full-stack software solution. As an essential part of the DGX SuperPOD experience, it optimizes developer workload performance and resiliency, ensures unmatched uptime with automated failure handling, and provides unified cluster-scale telemetry and manageability. Key features include full-stack resiliency, predictive maintenance, unified error reporting, data center optimizations, cluster health checks, and automated node management.

NVIDIA Mission Control software incorporates the same technology that NVIDIA uses to manage thousands of systems for our award-winning data scientists and provides an immediate path for organizations that need the best of the best.

DGX SuperPOD is deployed on-premises, meaning the customer owns and manages the hardware. The hardware can be located within a customer’s data center or co-located at a commercial data center. In either case, the customer owns the hardware and the services it provides, is responsible for the cluster infrastructure, and provides the building management system for integration.

Components

The hardware components of DGX SuperPOD are described in Table 1. The software components are shown in Table 2.

Table 1 Hardware Components

| Component | NVIDIA Technology | Description |
|---|---|---|
| Compute nodes | NVIDIA DGX RUBIN NVL8 system with eight Rubin GPUs | The world’s premier purpose-built AI systems featuring NVIDIA Rubin GPUs, sixth-generation NVIDIA NVLink, and sixth-generation NVIDIA NVSwitch™ technologies. |
| Compute node transceiver and cable | NVIDIA Octal Small Form Factor Pluggable (OSFP) twin-port flat-top transceiver; multimode fiber (MMF) passive fiber cable | Transceivers and cables for DGX nodes. |
| Networking connectivity | NVIDIA ConnectX-9 SuperNICs (800 Gb/s networking); NVIDIA BlueField-4 data processing units (DPUs) (800 Gb/s throughput) | High-performance scale-out connectivity to AI factories using standard Ethernet protocols. BlueField-4 DPUs enable accelerated multi-tenant north-south networking, rapid data access, AI runtime security, and high-performance inference. |
| Compute (east-west) fabric | NVIDIA Quantum-X800 Q3400-RD 800 Gbps InfiniBand switches | Rail-optimized, non-blocking, twin-plane fat-tree topology for next-generation extreme-scale AI factories. |
| Ethernet (north-south) fabric | NVIDIA Spectrum-4 SN5600D 800 Gbps Ethernet switches | A single Ethernet fabric physical layer for both the in-band and storage network segments. |
| Ethernet storage fabric switch transceiver and cable | NVIDIA Quad Small Form-factor Pluggable (QSFP) single-port flat-top 800G transceiver (NIC side); NVIDIA OSFP twin-port finned 1600G transceiver (switch side); single-mode fiber (SMF) passive fiber cable | Transceivers and cables for Spectrum-4 switches. |
| In-band management network | NVIDIA SN5600D switch | 64-port 800 Gbps Ethernet switch (up to 256 ports of 200 Gbps) providing high port density with high performance. |
| Ethernet in-band fabric switch transceiver and cable | NVIDIA QSFP single-port flat-top 400G transceiver or NVIDIA OSFP twin-port finned 800G transceiver; MMF passive fiber cable | Transceivers for DGX and control plane nodes. |
| Out-of-band (OOB) management network | NVIDIA SN2201M switch | 48-port 1 Gbps Ethernet switch with four 100 Gbps ports, using copper ports to minimize complexity. |

Table 2 Software Components

| Component | Description |
|---|---|
| NVIDIA Mission Control | NVIDIA Mission Control software delivers a full-stack data center solution engineered for NVIDIA DGX SuperPOD deployments based on NVIDIA Rubin-class architectures. It integrates essential management and operational capabilities into a unified platform, providing enterprise customers with simplified control over their NVIDIA DGX SuperPOD infrastructure at scale. As part of its integrated software delivery, NVIDIA Mission Control includes NVIDIA Base Command Manager (BCM), NVIDIA Run:ai, and NVIDIA Unified Fabric Manager (UFM). |
| NVIDIA DOCA microservices | NVIDIA DOCA microservices unlock the full capabilities of NVIDIA BlueField-4, turning it into a programmable engine for secure, accelerated data movement in DGX Rubin NVL8-based DGX SuperPOD deployments. DOCA microservices deliver cloud networking, cybersecurity, data acceleration, and orchestration as prebuilt, composable, containerized APIs that run natively on BlueField-4 DPUs. |
| NVIDIA AI Enterprise | NVIDIA AI Enterprise is an end-to-end, cloud-native software platform that accelerates data science pipelines and streamlines development and deployment of production-grade co-pilots and other generative AI applications. |
|  | Enables increased performance for AI and HPC. |
| NVIDIA NGC | The NGC catalog provides a collection of GPU-optimized containers for AI and HPC. |
| Slurm | A classic workload manager used to manage complex workloads in a multi-node, batch-style compute environment. |
| NVIDIA Run:ai | Cloud-native AI workload and GPU orchestration platform enabling fractional, full, and multi-node support for the entire enterprise AI lifecycle, including interactive development environments, training, and inference. |

Note

NVIDIA Mission Control now includes Base Command Manager and Run:ai functionality, so no separate purchase is needed. DGX SuperPOD supports multi-team environments only through Base Command Manager; multitenancy is not currently supported.

Design Requirements

DGX SuperPOD is designed to minimize system bottlenecks throughout its tightly coupled configuration to provide the best performance and application scalability. Each subsystem has been designed to meet this goal. In addition, the overall design remains flexible so that it can be tailored to integrate into existing data centers.

System Design

DGX SuperPOD is optimized for multi-node AI and HPC applications with the following design:

A modular architecture based on SUs of 72 DGX RUBIN NVL8 systems each.

A fully tested system that scales to 16 SUs; larger deployments can be built based on customer requirements.

Racks that support up to eight DGX RUBIN NVL8 systems each, which can be adapted to different data center requirements where additional power and cooling capacity is available.

Storage partner equipment that has been certified to work in DGX SuperPOD environments.

Full system support, including compute, storage, network, and Mission Control software, provided by NVEX.
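The sizing rules in the System Design list above lend themselves to a quick back-of-the-envelope calculation: 72 systems per SU at up to eight systems per rack means at least nine compute racks per SU, and the fully tested 16-SU configuration works out to 1152 systems and 9216 GPUs. The sketch below is illustrative; the function name is hypothetical, and it counts compute racks only, not network, storage, or management racks.

```python
# Back-of-the-envelope sizing from the System Design list above.
import math

SYSTEMS_PER_SU = 72
MAX_SYSTEMS_PER_RACK = 8
GPUS_PER_SYSTEM = 8


def size_deployment(num_sus: int, systems_per_rack: int = MAX_SYSTEMS_PER_RACK) -> dict:
    """Return system, GPU, and minimum compute-rack counts for num_sus SUs."""
    if not 1 <= systems_per_rack <= MAX_SYSTEMS_PER_RACK:
        raise ValueError("a rack holds between 1 and 8 DGX RUBIN NVL8 systems")
    systems = num_sus * SYSTEMS_PER_SU
    return {
        "systems": systems,
        "gpus": systems * GPUS_PER_SYSTEM,
        # Compute racks only; network, storage, and management racks are extra.
        "compute_racks": math.ceil(systems / systems_per_rack),
    }


if __name__ == "__main__":
    print(size_deployment(1))
    print(size_deployment(16))
    print(size_deployment(1, systems_per_rack=4))  # e.g. a power-limited facility
```

Lowering `systems_per_rack` shows how a facility without the extra power and cooling capacity trades rack count for density.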

Compute Fabric

The compute fabric provides the high-bandwidth, low-latency InfiniBand backbone that interconnects DGX Rubin NVL8 systems within the DGX SuperPOD and is implemented as follows:

The compute fabric is a rail-optimized, full fat-tree topology.

Managed Quantum-X800 switches are used throughout the design to provide better management of the fabric.

NVIDIA ConnectX‑9 adapters on DGX Rubin NVL8 systems deliver up to 800 Gb/s InfiniBand connectivity into this fabric.

NVIDIA UFM appliances manage the InfiniBand fabric.
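In a rail-optimized design, GPU k of every node (through its own NIC) connects to the same group of leaf switches, called rail k, so traffic between same-index GPUs on nodes under the same leaf crosses only one switch. The sketch below models that mapping, a hop count for a two-tier leaf/spine fat tree, and a basic non-blocking check (uplink capacity at least equal to downlink capacity). It assumes one NIC per GPU; `nodes_per_leaf` and the port counts are hypothetical parameters, not the actual DGX SuperPOD cabling plan.

```python
# Toy model of rail-optimized leaf assignment in a two-tier fat tree.
# Assumes one NIC per GPU; nodes_per_leaf is a hypothetical parameter.
from typing import NamedTuple

GPUS_PER_NODE = 8


class LeafPort(NamedTuple):
    rail: int        # rail index (equals the GPU index within the node)
    leaf_group: int  # which leaf switch within that rail
    port: int        # downlink port on that leaf


def leaf_for(node: int, gpu: int, nodes_per_leaf: int) -> LeafPort:
    """Map (node, gpu) to its rail leaf: GPU k of every node lands on rail k."""
    if not 0 <= gpu < GPUS_PER_NODE:
        raise ValueError("gpu index out of range")
    return LeafPort(rail=gpu, leaf_group=node // nodes_per_leaf, port=node % nodes_per_leaf)


def switch_hops(a: LeafPort, b: LeafPort) -> int:
    """Switches traversed: 1 if both endpoints share a leaf, else 3 (leaf-spine-leaf)."""
    same_leaf = a.rail == b.rail and a.leaf_group == b.leaf_group
    return 1 if same_leaf else 3


def is_non_blocking(downlinks: int, uplinks: int, down_gbps: float, up_gbps: float) -> bool:
    """A leaf is non-blocking when uplink capacity is at least downlink capacity."""
    return uplinks * up_gbps >= downlinks * down_gbps


if __name__ == "__main__":
    a = leaf_for(0, 3, nodes_per_leaf=32)
    b = leaf_for(17, 3, nodes_per_leaf=32)
    print(a, b, switch_hops(a, b))
    print(is_non_blocking(downlinks=32, uplinks=32, down_gbps=800, up_gbps=800))
```

Collective libraries exploit this layout by keeping most inter-node traffic on a single rail.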

Ethernet Fabric

The Ethernet fabric is logically segmented to separate storage traffic from in-band management traffic, as follows:

The Ethernet fabric connects to NVIDIA BlueField-4 DPUs on each compute tray, providing access to the control plane and high-performance storage over a programmable DPU for maximum performance and flexibility.

It is independent of the compute fabric, which minimizes its impact on the AI workload.

The Ethernet fabric is segmented into the following networks:

Storage network (high-speed storage)

In-band management network

The storage network provides high-bandwidth, low-latency access to the shared high-performance storage. It also has the following characteristics:

Storage is provided using RDMA over Converged Ethernet (RoCE) to maximize performance and minimize CPU overhead.

It is flexible and can scale to meet specific capacity and bandwidth requirements.

The in-band management network has the following additional requirements:

The in-band management network fabric is Ethernet-based and is used for node provisioning, data movement, internet access, and other services that must be accessible by users.

In-band management network connections for compute and management nodes operate at 400 Gb/s. For resiliency, they share the Ethernet fabric with the storage network and use Layer-3 weighted Equal-Cost Multi-Path (wECMP) routing, which supports automatic failover between ports.
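Weighted ECMP spreads flows across several equal-cost next hops in proportion to per-hop weights while keeping each flow on a single path, and failover amounts to withdrawing a failed hop. The sketch below illustrates that behavior by hashing a flow's 5-tuple with SHA-256; the hash function, weights, and port names are assumptions for illustration, since production switches hash in hardware with vendor-specific algorithms.

```python
# Illustrative weighted ECMP next-hop selection with failover.
# Real switches hash in hardware; this only shows the idea.
import hashlib
from typing import Dict, Tuple

FlowKey = Tuple[str, str, int, int, int]  # src IP, dst IP, protocol, src port, dst port


def pick_next_hop(flow: FlowKey, weights: Dict[str, int]) -> str:
    """Hash the flow onto one healthy next hop, proportionally to its weight."""
    healthy = sorted((hop, w) for hop, w in weights.items() if w > 0)
    if not healthy:
        raise RuntimeError("no healthy next hop")
    total = sum(w for _, w in healthy)
    digest = hashlib.sha256(repr(flow).encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % total
    # Walk the weight ranges until the bucket falls inside one of them.
    for hop, w in healthy:
        if bucket < w:
            return hop
        bucket -= w
    raise AssertionError("unreachable: bucket is always below total weight")


if __name__ == "__main__":
    ports = {"swp1": 3, "swp2": 1}  # hypothetical ports and weights
    flow = ("10.0.0.1", "10.0.1.7", 17, 49152, 4791)  # UDP to the RoCEv2 port
    print(pick_next_hop(flow, ports))
    ports["swp1"] = 0  # simulate a link failure on swp1
    print(pick_next_hop(flow, ports))  # only swp2 remains healthy
```

Because this simple modulo scheme changes the bucket ranges when a hop is withdrawn, many surviving flows may be remapped on failure; resilient hashing schemes are designed to limit that disruption.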

Out-of-Band Management Network

The OOB management network connects all BMC ports, as well as other devices that should be physically isolated from users. The Switch Management Network is a subset of the OOB network that provides additional security and resiliency, as well as direct Zero Touch Provisioning (ZTP) capability from a single switch bootstrapping point. Customers are encouraged to provide a dedicated uplink handover point for the OOB network to allow access to the cluster independent of the main uplink.

Storage Requirements

The DGX SuperPOD compute architecture must be paired with a high-performance, balanced storage system to maximize overall system performance. DGX SuperPOD is designed to use two separate storage systems: high-performance storage (HPS), optimized for throughput and parallel I/O, and user storage, optimized for higher input/output operations per second (IOPS) and metadata workloads.

High-Performance Storage

High-performance storage is provided through InfiniBand- or high-speed Ethernet-connected storage from a DGX SuperPOD certified storage partner, and is engineered and tested with the following attributes in mind:

High-performance, resilient, POSIX-style file system optimized for multi-threaded read and write operations across multiple nodes.

RDMA support on InfiniBand or Ethernet.

Local system RAM for transparent caching of data.

Transparent use of local flash devices for read and write caching.

The specific storage fabric topology, capacity, and components are determined by the DGX SuperPOD certified storage partner as part of the DGX SuperPOD design process.
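The RAM and local-flash caching attributes listed above describe a read-through hierarchy: a read is served from the fastest tier that holds the block, and misses are filled from the shared file system. The sketch below models that idea with two LRU tiers. It is a conceptual illustration, not how any certified partner's client implements caching; the class and method names are hypothetical, and write caching and cross-node cache coherence are deliberately omitted.

```python
# Conceptual read-through cache: RAM tier -> local flash tier -> shared file system.
from collections import OrderedDict
from typing import Callable, Dict, Optional


class LRUTier:
    """Fixed-capacity block cache with least-recently-used eviction."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.blocks: "OrderedDict[int, bytes]" = OrderedDict()

    def get(self, block_id: int) -> Optional[bytes]:
        if block_id not in self.blocks:
            return None
        self.blocks.move_to_end(block_id)  # mark as most recently used
        return self.blocks[block_id]

    def put(self, block_id: int, data: bytes) -> None:
        self.blocks[block_id] = data
        self.blocks.move_to_end(block_id)
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)  # evict the least recently used block


class ReadThroughCache:
    """RAM tier in front of a flash tier in front of the backing store."""

    def __init__(self, ram_blocks: int, flash_blocks: int,
                 backend: Callable[[int], bytes]) -> None:
        self.ram = LRUTier(ram_blocks)
        self.flash = LRUTier(flash_blocks)
        self.backend = backend
        self.stats: Dict[str, int] = {"ram": 0, "flash": 0, "backend": 0}

    def read(self, block_id: int) -> bytes:
        data = self.ram.get(block_id)
        if data is not None:
            self.stats["ram"] += 1
            return data
        data = self.flash.get(block_id)
        if data is not None:
            self.stats["flash"] += 1
        else:
            self.stats["backend"] += 1
            data = self.backend(block_id)  # remote read over the storage fabric
            self.flash.put(block_id, data)
        self.ram.put(block_id, data)  # promote into RAM for the next read
        return data
```

A block evicted from the small RAM tier can still be served from flash, which avoids another trip across the storage fabric.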

User Storage

User storage differs from HPS in that it exposes an NFS share on the in-band management fabric for multiple uses. It is typically used for home directories (especially on clusters deployed with Slurm), administrative scratch space, shared storage required by DGX SuperPOD components in a high-availability configuration (for example, BCM), and log files.

With that in mind, user storage has the following minimum requirements:

100–400 Gb/s Ethernet connectivity with 100G PHY per lane.

High metadata performance, IOPS, and key enterprise features such as checkpointing. This differs from HPS, which is optimized for parallel I/O and large capacity.

Communication over Ethernet using NFS.

User storage in a DGX SuperPOD can often be satisfied by existing NFS servers: a new export is created and made accessible to the DGX SuperPOD in-band management network. For best performance, user storage should provide a minimum bandwidth of 100 Gb/s.
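The user storage minimums above can be expressed as a simple checklist. The sketch below validates a candidate NFS server's network attachment against the 100 Gb/s minimum bandwidth, the 100G-per-lane PHY requirement (interpreted here as link speed divided by lane count), and NFS access. The `UserStorageLink` type and function name are hypothetical, and the check covers only what the text states, not metadata or IOPS performance.

```python
# Hypothetical checklist for the user storage minimums stated above.
from dataclasses import dataclass
from typing import List


@dataclass
class UserStorageLink:
    """Hypothetical description of a candidate NFS server's network attachment."""
    link_gbps: int  # total Ethernet link speed in Gb/s
    lanes: int      # number of electrical lanes (PHYs) in the link
    protocol: str   # file protocol exposed to the in-band network


def check_user_storage(link: UserStorageLink) -> List[str]:
    """Return a list of problems; an empty list means the stated minimums are met."""
    problems: List[str] = []
    if link.link_gbps < 100:
        problems.append("below the 100 Gb/s minimum bandwidth")
    if link.lanes <= 0 or link.link_gbps / link.lanes != 100:
        problems.append("expected 100G PHY per lane")
    if link.protocol.lower() != "nfs":
        problems.append("user storage is accessed over NFS")
    return problems


if __name__ == "__main__":
    print(check_user_storage(UserStorageLink(link_gbps=400, lanes=4, protocol="NFS")))
    print(check_user_storage(UserStorageLink(link_gbps=100, lanes=4, protocol="nfs")))
```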
