Linux Software Stack

This topic gives a high-level description of the NVIDIA DRIVE™ PX 2 hardware and software architecture, including Hypervisor, guest operating systems, Ubuntu Linux, the NVIDIA drivers, and NVIDIA tools.

SDK Architecture

This topic describes the NVIDIA DRIVE™ Linux SDK for:

• NVIDIA DRIVE™ PX 2 AutoCruise (P3407) Platform

• NVIDIA DRIVE™ PX 2 AutoChauffeur (P2379) Platform

NVIDIA DRIVE™ Foundation PDK

The NVIDIA DRIVE™ Foundation PDK is provided in a series of RUN files wrapped in a 7‑Zip archive containing:

• HTML-based documentation for setup and development

• Boot loader and flashing tools

• Cross toolchain for arm64 development on the host

• OSS source code for all OSS binaries

NVIDIA DRIVE™ Linux PDK

The NVIDIA DRIVE™ Linux PDK is also provided in a series of RUN files wrapped in a 7‑Zip archive containing:

• HTML-based documentation for setup and development

• Pre-built Linux kernel image and device tree binaries for supported NVIDIA® DRIVE™ platforms. Check the Release Notes for which platforms are supported in your release.

• Pre-built minimal Ubuntu 16.04 (64-bit) root filesystem

• NVIDIA BSP drivers, libraries, firmware, and setup scripts for the target

• Yocto Poky build environment for building GENIVI 7 reference root filesystem from the host

• OSS source code for all OSS components (including kernel, DTS, imaging tools, Yocto, and samples)

Why does NVIDIA provide separate PDKs?

NVIDIA DRIVE™ Foundation is the base-level product of the NVIDIA DRIVE™ family. It is closely tied to the boot loader, which is common to every guest OS, including the native Linux use case.

NVIDIA DRIVE™ Linux is simply a guest OS and is largely agnostic to the Foundation stack. Foundation still provides the boot loader services and is required for both native and virtualized OS operation.

Architecture

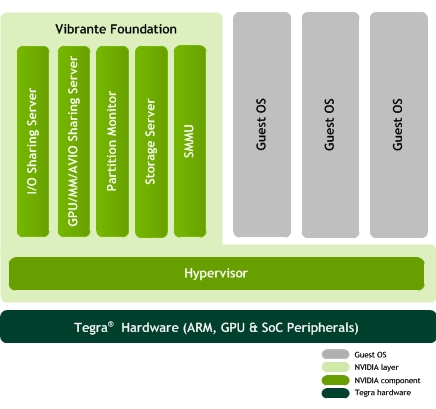

The following diagram shows NVIDIA DRIVE™ Foundation run-time software stack. With this infrastructure, multiple guest operating systems can run on the Tegra hardware, with NVIDIA DRIVE™ Hypervisor managing use of hardware resources.

The NVIDIA DRIVE™ Foundation stack includes NVIDIA DRIVE™ Hypervisor and a set of Foundation partitions that together implement NVIDIA DRIVE™ virtualization technology to run multiple guest operating systems on the Tegra processor, including NVIDIA DRIVE™ Linux.

For information on using Hypervisor, see the Flashing and Using the Board > Flashing the Board > Using Hypervisor topic.

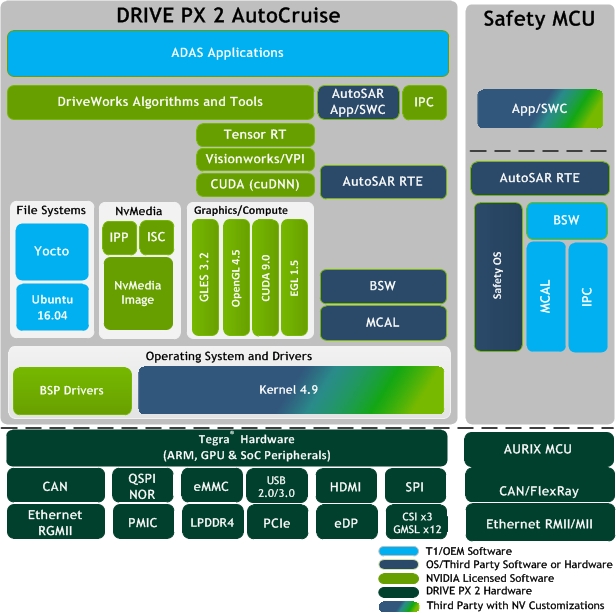

The following diagram shows the architecture of the NVIDIA DRIVE™ Linux DRIVE PX 2 AutoCruise platform.

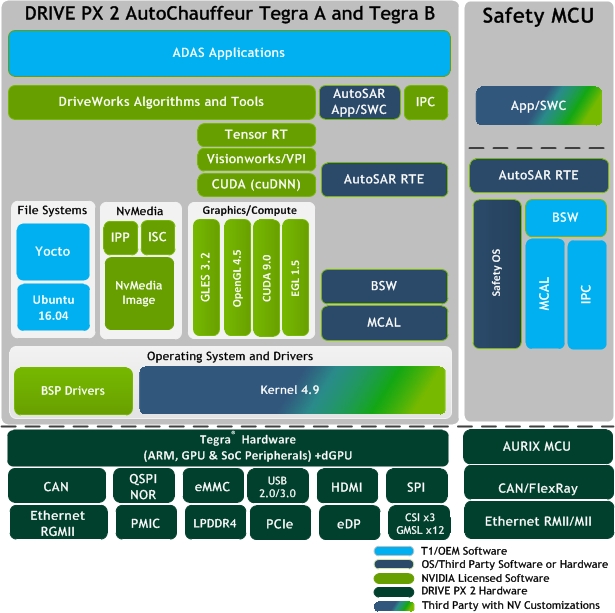

The following diagram shows the architecture of the NVIDIA DRIVE™ Linux DRIVE PX 2 AutoChauffeur platform.

Software Components

This topic describes the root filesystem choices and lists the major software components in NVIDIA DRIVE™ Foundation, followed by the components that are specific to the NVIDIA DRIVE™ Linux SDK.

NVIDIA DRIVE™ Linux RootFS Choice

• Ubuntu Core (delivered pre-built in a tarball and installed via a .run file)

  ◦ Binaries are pre-built and downloaded directly from Canonical builders/servers

  ◦ Supports apt-get from online personal package archives (PPAs), with thousands of .deb packages available

  ◦ Quick and easy to set up with familiar desktop Linux knowledge

  ◦ Ideal for rapid development, even on the target itself (not ideal for embedded production and deployment)

  ◦ Requires ‘sudo’ on the host to extract the pre-built rootfs image from the .tgz file, and again to copy the NVIDIA BSP files (drivers, libraries, firmware, and scripts) into the rootfs

• Yocto Poky (provided in source form only; must be built from scratch on the host)

  ◦ No pre-built binaries; 100% generated from OSS sources packaged in the PDK (NV kernel, rootfs packages)

  ◦ Complete, controlled embedded Linux distro managed by BitBake recipes and manageable via ‘rpm’

  ◦ Full Yocto BSP support from NVIDIA layer recipes (which parse the NV PDK pre-built driver binaries and install them into the rootfs build image)

  ◦ Intended as the starting point and a reference for an embedded production rootfs

  ◦ Does not require ‘sudo’-level privileges to build the rootfs image
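The PDK documentation does not say why the Ubuntu rootfs needs ‘sudo’ on the host. One plausible reason, stated here as an assumption, is that a root filesystem archive typically contains root-owned files, setuid binaries, and device nodes that an unprivileged extraction cannot reproduce faithfully. The sketch below uses Python's standard tarfile module to list such entries; the archive contents and the helper names are hypothetical and are built in memory purely for illustration.

```python
import io
import stat
import tarfile


def entries_needing_root(archive: tarfile.TarFile) -> list[str]:
    """Return member names that an unprivileged extraction cannot restore exactly."""
    flagged = []
    for member in archive:
        is_device = member.ischr() or member.isblk()
        is_setuid = bool(member.mode & stat.S_ISUID)
        if member.uid == 0 or is_device or is_setuid:
            flagged.append(member.name)
    return flagged


def build_demo_rootfs() -> bytes:
    """Build a tiny in-memory .tgz standing in for a rootfs image (hypothetical contents)."""
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w:gz") as archive:
        # A root-owned setuid binary.
        sudo_bin = tarfile.TarInfo("usr/bin/sudo")
        sudo_bin.uid, sudo_bin.gid, sudo_bin.mode = 0, 0, 0o4755
        archive.addfile(sudo_bin, io.BytesIO(b""))

        # A character device node; creating one on extraction needs privileges.
        console = tarfile.TarInfo("dev/console")
        console.type = tarfile.CHRTYPE
        console.uid, console.gid = 0, 0
        console.devmajor, console.devminor = 5, 1
        archive.addfile(console)

        # A file owned by an ordinary user; no special privileges needed.
        note = tarfile.TarInfo("home/user/notes.txt")
        note.uid, note.gid, note.mode = 1000, 1000, 0o644
        archive.addfile(note, io.BytesIO(b""))
    return buffer.getvalue()


with tarfile.open(fileobj=io.BytesIO(build_demo_rootfs()), mode="r:gz") as demo:
    flagged = entries_needing_root(demo)

print(flagged)
```

Running the same helper against a real rootfs tarball would only read the archive index; it does not extract anything.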

NVIDIA DRIVE™ Foundation Components

The following table lists the major software components that are specific to the NVIDIA DRIVE™ Foundation PDK.

Component | Description |

NVIDIA DRIVE™ Hypervisor | Privileged software component optimized to run on the ARM®-based Cortex-A57 MPCore. Hypervisor separates system resources between multiple VM partitions. Each partition can run an operating system or a bare-metal application. The Hypervisor manages guest OS partitions and the run-time isolation between them; partitions’ virtual views of the CPU and memory resources; and I/O resource allocation and sharing between partitions. |

Boot Loader | Responsible for loading Foundation images. Based on NVIDIA Quickboot technology. |

Flashing tools | Allows flashing and updating Foundation components and Guest OS partitions. Based on NVIDIA Bootburn technology. |

I2C Server | A bare-metal partition that manages I/O device sharing between partitions. |

OS Loader | Responsible for loading OS images. Implements OS specific boot protocol for NVIDIA DRIVE™ platforms. Based on NVIDIA Quickboot technology. |

Partition Configuration Tool | Allows configuring partition parameters and resource allocation. |

Partition Loader | Responsible for loading the Guest OS boot image into the partition container. It also plays a key role in restarting individual Guest partitions, and it dynamically loads and unloads partitions and virtual memory allocations. Based on NVIDIA Quickboot technology. |

Monitor Partition | Monitors partitions: starts and stops Guest OSs, coordinates Guest OS restarts, performs watchdog services for Guest OSs, and logs partition use. |

RM Server | Manages isolated hardware services for graphics and computation (GPU), codecs (MM), and display and video capture I/O. |

System Memory Management Unit (SMMU) Server | Bare-metal partition responsible for Tegra SMMU management, which protects I/O device memory access between partitions. |

Virtualization Storage Client Server (VSC) | Controls access to a single eMMC device and virtualizes it so that VMs see the device as a set of individual disks. VSC also controls and secures access to partitions on the eMMC device from multiple guests. |
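The storage virtualization described in the last row can be pictured with a small model. This is a minimal sketch of the general idea only, not the VSC implementation: the class names, the block-range layout, and the single-owner access rule are all assumptions made for illustration. One physical device is carved into non-overlapping block ranges, each exposed to exactly one guest as its own disk.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VirtualDisk:
    name: str
    owner: str        # the only guest allowed to access this disk (assumed policy)
    first_block: int  # first physical block backing the disk
    block_count: int


class StorageServer:
    """Toy stand-in for a storage virtualization server in front of one device."""

    def __init__(self, device_blocks: int, disks: list[VirtualDisk]):
        self.disks: dict[str, VirtualDisk] = {}
        end_of_previous = 0
        for disk in sorted(disks, key=lambda d: d.first_block):
            if disk.first_block < end_of_previous:
                raise ValueError(f"{disk.name} overlaps another virtual disk")
            end_of_previous = disk.first_block + disk.block_count
            if end_of_previous > device_blocks:
                raise ValueError(f"{disk.name} extends past the end of the device")
            self.disks[disk.name] = disk

    def translate(self, guest: str, disk_name: str, block: int) -> int:
        """Map a guest-visible block number to a physical block, enforcing ownership."""
        disk = self.disks[disk_name]
        if guest != disk.owner:
            raise PermissionError(f"{guest} may not access {disk_name}")
        if not 0 <= block < disk.block_count:
            raise IndexError(f"block {block} is outside {disk_name}")
        return disk.first_block + block


server = StorageServer(
    device_blocks=1000,
    disks=[
        VirtualDisk("linux-root", owner="linux", first_block=0, block_count=600),
        VirtualDisk("rtos-data", owner="rtos", first_block=600, block_count=400),
    ],
)
print(server.translate("rtos", "rtos-data", 10))
```

Each guest addresses its disk from block 0 and never learns where the disk sits on the physical device; a request from a guest that does not own the disk is rejected before any translation happens.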

Components Specific to NVIDIA DRIVE™ Linux

The following table lists the major software components that are specific to the NVIDIA DRIVE™ Linux SDK.

Component | Description |

OS Drivers | OS driver support for enabling OEM applications to establish standard I/O interfaces. These drivers support Linux file systems (such as ext3, ext4, and NFS), networking (such as TCP, IP, and UDP), USB 3.0 mass storage file systems (ext3, ext4, fat32 with vfat), and USB 2.0 host (such as HID, Com, and Mass Storage). |

Linux Kernel 4.9 | Widely used open-source POSIX compatible operating system. |

Board Support Package (BSP) Drivers | NVIDIA drivers and applications that support communication, configuration, and flashing from a host computer. |

NVIDIA® CUDA® | An NVIDIA parallel computing platform and programming model. It enables dramatic increases in computing performance by harnessing the power of the graphics processing unit (GPU). |

OpenGL 4.5 | NVIDIA implementation of the OpenGL ES standard accelerated 3D graphics rendering library. This NVIDIA library includes implementations of NVIDIA and multi-vendor OpenGL ES extensions. In many cases, these extensions use platform-specific features to provide efficient implementations. OpenGL 4.5 is backward compatible with GLES 3.2. For more information, see OpenGL ES 2/3 Specs and Extensions in API Modules, Graphics APIs. |

EGL 1.5 | NVIDIA implementation of the EGL interface library for use between rendering libraries (like OpenGL ES) and the underlying native platform window system. This library includes NVIDIA enhancements specific to the Tegra platform. For more information, see EGL Specifications Extensions in API Modules, Graphics APIs. |

Vulkan 1.0 (applies to NVIDIA DRIVE™ Linux) | NVIDIA supports the Vulkan 1.0.35 specification for instance and device extensions. For more information, see Vulkan Specs and Extensions in API Modules, Graphics APIs. |

NvMedia | NVIDIA API that provides a complete solution for decoding, post-processing, compositing, and displaying compressed or uncompressed video streams. |

RM Driver (Note: available with Hypervisor features) | Resource Manager that provides inter-process communication (IPC) and data transfer between the guest operating system and the hypervisor. IPC includes processing requests and hardware settings. |

AURIX Microcontroller

The NVIDIA DRIVE™ PX 2 open AI car computing platform integrates with Elektrobit AUTOSAR 4.x-compliant EB tresos software, which runs on the NVIDIA® Tegra® processor and the Infineon AURIX 32-bit TriCore microcontroller.

The NVIDIA DRIVE™ PX 2 platform supports building systems that capture and process multiple high-definition (HD) camera and sensor inputs for advanced graphics, computer vision, and machine learning applications, all of which are required for auto-cruise and autonomous-driving applications.

Integration with the AURIX microcontroller enables high, real-time processing performance with enhanced embedded safety and security features for use in ADAS applications. The EB tresos software integrates the capability of Linux and AUTOSAR applications with NVIDIA DRIVE™ PX 2 functionality for monitoring and redundancy processing. It enables a safe and reliable, cross-CPU-communication execution environment in compliance with the ISO 26262 standard at the highest automotive safety integrity level (ASIL D).

For more information about AURIX, see their Web pages at:

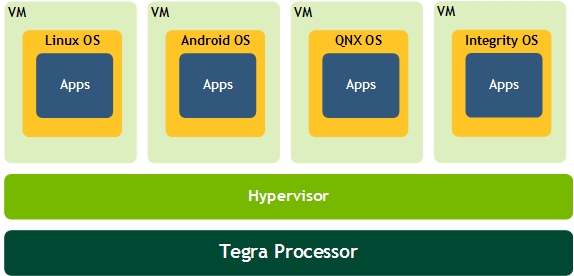

Hypervisor and the Guest Operating System

NVIDIA DRIVE™ PX 2 provides hypervisor functionality through the virtualization capabilities of the Cortex-A57 processor. A hypervisor (also known as a Virtual Machine Monitor, or VMM) is a small layer of full-privilege software. Hypervisors can be hosted on a host operating system, or they can run directly on the hardware. They enable running multiple guest operating systems (or bare-metal software) on the same chipset, where each guest OS stack has its own security and safety requirements. The partitions run as if they own the hardware exclusively, but they actually run in a multiplexed manner, sharing memory, CPU resources, and devices.
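To make "multiplexed" concrete, the following sketch time-slices one CPU between partitions in round-robin order. It is a generic textbook illustration, not the scheduling policy of NVIDIA DRIVE™ Hypervisor, which this topic does not describe; the function name, partition names, and time values are hypothetical.

```python
from collections import deque


def run_time_slices(work_ms: dict[str, int], slice_ms: int) -> list[tuple[str, int]]:
    """Round-robin one CPU between partitions.

    work_ms maps a partition name to the CPU time it still needs.
    Returns the timeline as (partition, milliseconds run) pairs.
    """
    queue = deque(work_ms.items())
    timeline = []
    while queue:
        name, remaining = queue.popleft()
        ran = min(slice_ms, remaining)
        timeline.append((name, ran))
        if remaining > ran:
            # Not finished: go to the back of the queue and wait for the next turn.
            queue.append((name, remaining - ran))
    return timeline


timeline = run_time_slices({"linux": 25, "rtos": 10}, slice_ms=10)
print(timeline)
```

From inside either partition, execution simply appears to continue; only the timeline shows that the two guests alternated on the same core.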

Hypervisors are small, start quickly, can be verified for correctness and safety, and have real-time features. A hypervisor can boot very quickly (50 ms or less), so code running in a bare-metal partition, for example, can start running just as quickly.

Fast booting features in the hypervisor enable safety-critical operations and multiple OSs to run reliably. For example, CAN bus-related software can run next to Android without being affected by software bugs in the Android partition. With real-time capabilities, drivers can be ported to a partition running a real-time OS or to a bare-metal partition, where they provide real-time response. Any partition using such a driver then receives that benefit.

The hypervisor can shut down and restart guest operating systems without a hard reset, enabling software to be restarted and issues logged when needed.
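A minimal sketch of that restart-and-log behavior is shown below, assuming a heartbeat-style watchdog. The heartbeat mechanism, the class, and its method names are hypothetical; the source only states that guests can be restarted individually and that issues can be logged.

```python
class GuestMonitor:
    """Toy watchdog: restarts a guest whose heartbeat is older than the timeout."""

    def __init__(self, timeout_s: float):
        self.timeout_s = timeout_s
        self.last_heartbeat: dict[str, float] = {}
        self.restart_log: list[tuple[float, str]] = []

    def heartbeat(self, guest: str, now: float) -> None:
        """Record that a guest reported in at time `now` (seconds)."""
        self.last_heartbeat[guest] = now

    def check(self, now: float) -> list[str]:
        """Restart and log every guest that missed its deadline; leave the rest alone."""
        restarted = []
        for guest, seen in self.last_heartbeat.items():
            if now - seen > self.timeout_s:
                self.restart_log.append((now, guest))
                # The restarted guest gets a fresh deadline; other guests are untouched.
                self.last_heartbeat[guest] = now
                restarted.append(guest)
        return restarted


monitor = GuestMonitor(timeout_s=2.0)
monitor.heartbeat("linux", now=0.0)
monitor.heartbeat("rtos", now=0.0)
monitor.heartbeat("rtos", now=2.5)   # only the RTOS guest keeps reporting
restarted = monitor.check(now=3.0)
print(restarted, monitor.restart_log)
```

Timestamps are passed in explicitly so the example is deterministic; a real monitor would read a clock instead.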

Run Mode vs. Recovery Mode

When you use the NVIDIA DRIVE™ PX 2 device normally, for example to run sample applications or to develop software, it operates in Run mode.

In Recovery mode, DRIVE PX 2 runs a mechanism that recovers the device from a failure state.