Intersection Detection Sample (WaitNet)

Note: SW Release Applicability: This sample is available in NVIDIA DRIVE Software releases.

Description

The Intersection Detection sample demonstrates how to use the NVIDIA® proprietary WaitNet deep neural network (DNN) to detect intersections. It reads video streams sequentially. For each frame, it detects the intersection location.

Running the Sample

The command line for the sample is:

./sample_intersection_detector --rig=[path/to/rig/file] --liveCam=[0|1]

where

--rig=[path/to/rig/file]

Rig file containing all information about vehicle sensors and calibration.

Default value with video: path/to/data/samples/waitcondition/rig.json

Default value with live camera: path/to/data/samples/waitcondition/live_cam_rig.json

--liveCam=[0|1]

Selects live camera (1) or video file (0) input. Has no effect on x86.

Must be set to 1 when passing in a rig with a live camera setup.

To switch the mode, pass `--liveCam=0` or `--liveCam=1` as the argument.

Default value: 0

Note: The live camera type in the rig file must be a supported RCCB sensor. See List of cameras supported out of the box for the list of supported cameras for each platform. The video type in the rig file can be raw or h264.
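The interaction between the two options can be sketched as a small launcher helper. This is only an illustration of the documented defaults, not part of the sample or the SDK: the function name `build_command` and the constants are hypothetical, and the `path/to/` prefixes are the same placeholders used in the option descriptions above.

```python
import shlex

# Default rig paths exactly as listed in the option descriptions
# ("path/to" is a placeholder, not a real directory).
DEFAULT_VIDEO_RIG = "path/to/data/samples/waitcondition/rig.json"
DEFAULT_LIVE_RIG = "path/to/data/samples/waitcondition/live_cam_rig.json"


def build_command(rig=None, live_cam=0, binary="./sample_intersection_detector"):
    """Return the argument list for the sample (hypothetical helper)."""
    if live_cam not in (0, 1):
        raise ValueError("--liveCam accepts only 0 or 1")
    if rig is None:
        # Mirror the documented defaults: one rig for video input,
        # another for the live camera setup.
        rig = DEFAULT_LIVE_RIG if live_cam == 1 else DEFAULT_VIDEO_RIG
    return [binary, f"--rig={rig}", f"--liveCam={live_cam}"]


if __name__ == "__main__":
    # Print the two documented invocations with their defaults spelled out.
    print(shlex.join(build_command()))
    print(shlex.join(build_command(live_cam=1)))
```

Passing the returned list to `subprocess.run` would launch the sample. Spelling out both options makes it harder to pair a live camera rig with `--liveCam=0` by accident.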

Examples

Running the sample with the default rig file and video input

./sample_intersection_detector

Running the sample with the default live camera rig file

./sample_intersection_detector --liveCam=1

Output

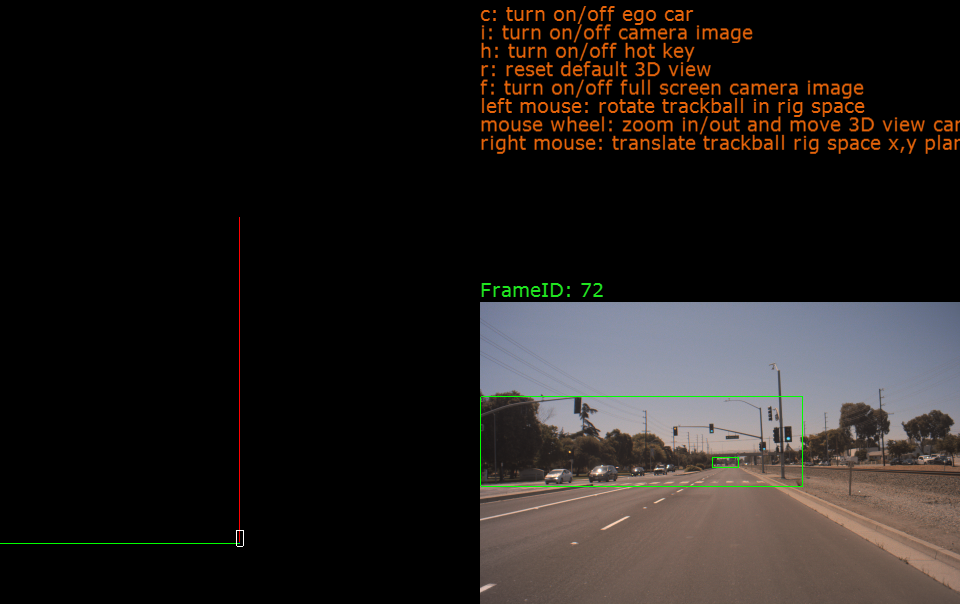

The sample creates a window, displays the video stream, and overlays the bounding box of the detected intersection. When the ego car approaches an intersection, the bottom of the visualized bounding box aligns with the stop line. When the ego car is within an intersection, the visualized bounding box covers the entire image area.

Intersection Detector Sample
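The two visual cases described above can be told apart with a simple geometric check. The sketch below is an assumption-laden illustration only: the sample draws the box itself and this page does not describe any API for reading it back, so the `Box` type, the pixel coordinate convention (origin at the top-left corner), and both function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Box:
    # Pixel coordinates; origin assumed at the top-left corner of the image.
    x: float
    y: float
    width: float
    height: float


def covers_entire_image(box, image_width, image_height, tolerance=1.0):
    """Return True if the box spans the full frame, within `tolerance` pixels."""
    return (
        box.x <= tolerance
        and box.y <= tolerance
        and box.x + box.width >= image_width - tolerance
        and box.y + box.height >= image_height - tolerance
    )


def describe(box, image_width, image_height):
    """Map a box to one of the two cases described in the Output section."""
    if covers_entire_image(box, image_width, image_height):
        return "inside intersection"
    # Otherwise the bottom edge of the box marks the image row of the stop line.
    return f"approaching, stop line near row {box.y + box.height:.0f}"
```

The tolerance parameter is there because a box rendered to the image border may be off by a pixel after rounding; the value of 1.0 is arbitrary.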

Additional Information

For more details, see Wait Conditions Detection and Classification.