NVIDIA DSX Documentation

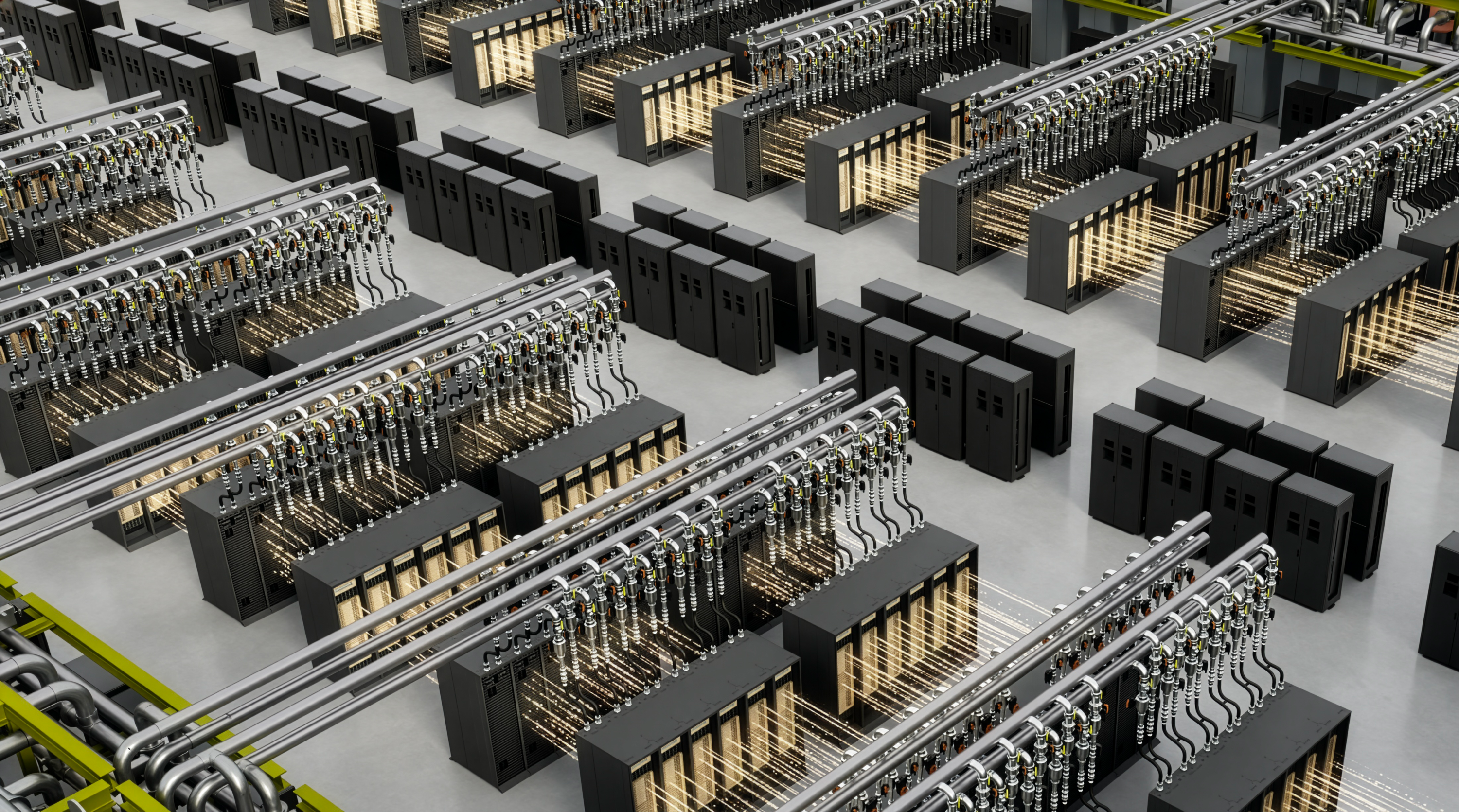

NVIDIA DSX unifies design, simulation, operations, and ecosystem technologies to help build AI factories optimized for tokens per watt.

DSX Sim

A suite of simulation technologies that together enable partners to design, validate, and operate AI factories before and after physical deployment.

High-fidelity logical simulation of NVIDIA hardware and software infrastructure — GPUs, SuperNICs, DPUs, switches — plus validated integrations with storage, security, and orchestration partners.

An end-to-end framework to design, simulate, build, and operate gigawatt-scale AI factory digital twins.

Browse the DSX Blueprint GitHub repo or get started on build.nvidia.com

Sample scripts and presets for creating DSX SimReady USD assets, covering CAD ingestion, optimization, validation, and metadata workflows for digital twin and AI factory applications.

Browse the AIF Pipeline Samples GitHub repo

DSX OS

The operating layer within the broader DSX portfolio — open source, modular software for lifecycle management, runtime consistency, fleet health, and multi-tenant AI factory operations.

Unified API layer for scaling inference and simulation workloads across one or more Kubernetes clusters.

Scalable Kubernetes scheduler optimized for GPU resource allocation.

Modular component of NVIDIA Dynamo — provides a Kubernetes API for defining and scaling multi-component AI inference workloads.

Distributed inference-serving framework built to deploy models in multi-node environments.

Agent-based managed service offering continuous GPU health monitoring and predictive failure signals.

Bare-metal provisioning and secure lifecycle management for multi-tenant GPU infrastructure.

Canonical, continuously validated definition of the NVIDIA-accelerated Kubernetes runtime.

Open-source, Kubernetes-native GPU monitoring and fault remediation.

Orchestration system to build, deploy, and operate BlueField-accelerated infrastructure services.

DSX Reference Design

Generation-specific, validated AI factory architectures covering compute, networking, storage, facilities infrastructure, and hardware cluster design.

(Requires NVIDIA Partner Network membership.)

Resources

Infrastructure-native reference for building AI cloud services — software components, architecture patterns, and deployment guidance.

Software architecture to help operators build performant, cost-effective inference solutions on NVIDIA platforms.

Primary requirements document covering the full stack of AI cloud infrastructure services — SLAs, security, networking, storage, and operations.

Defines the partner-run qualification process for a Battery Energy Storage System (BESS) intended to support AI load buffering, demand response (DR), and low-voltage ride-through (LVRT) in both grid-connected and islanded on-site generation modes.

Products that have been validated to meet NVIDIA functional requirements for AI factory applications.

Hardware and software recipes, tools, capabilities, and references that help cloud providers improve performance per TCO, security, and reliability.

Browse the NVIDIA Performance Benchmarking recipes

Qualifies and validates AI infrastructure and platform software for deployment on NVIDIA-accelerated cloud infrastructure.

Browse the ISV NCP Validation Suite