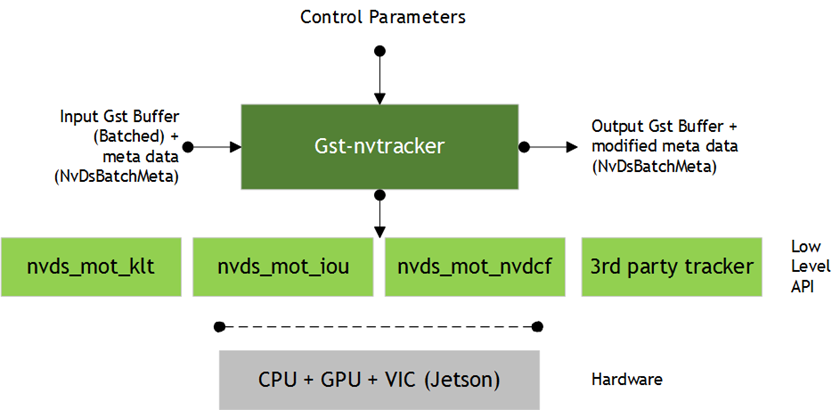

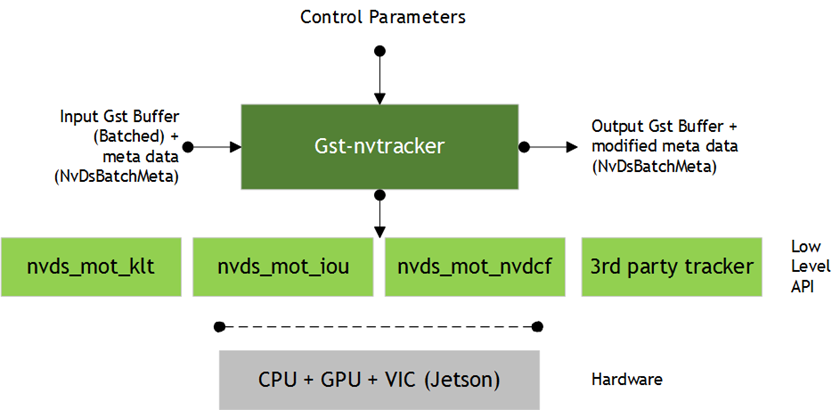

Gst-nvtracker

This plugin tracks detected objects and gives each new object a unique ID.

The plugin adapts a low-level tracker library to the pipeline. It supports any low-level library that implements the low-level API, including the three reference implementations: the NvDCF, KLT, and IOU trackers.

As part of this API, the plugin queries the low-level library for capabilities and requirements concerning input format and memory type. It then converts input buffers into the format requested by the low-level library. For example, the KLT tracker uses Luma-only format; NvDCF uses NV12 or RGBA; and IOU requires no buffer at all.

The low-level capabilities also include support for batch processing across multiple input streams. Batch processing is typically more efficient than processing each stream independently. If a low-level library supports batch processing, that is the preferred mode of operation. However, this preference can be overridden with the enable-batch-process configuration option if the low-level library supports both batch and per-stream modes.
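The mode-selection rule in the preceding paragraph can be sketched as a small decision function. This is a minimal illustration, not the plugin's code: the names `TrackerMode` and `choose_mode`, and the split of the option into "was it set" and "its value", are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical names for illustration; not part of nvdstracker.h. */
typedef enum { MODE_PER_STREAM, MODE_BATCH } TrackerMode;

/* Pick the processing mode from the library's advertised capabilities and the
 * optional enable-batch-process setting (userSetOption: was it set at all). */
TrackerMode choose_mode(bool libSupportsBatch, bool libSupportsPerStream,
                        bool userSetOption, bool enableBatchProcess)
{
    /* A usable library must support at least one of the two modes. */
    assert(libSupportsBatch || libSupportsPerStream);

    if (libSupportsBatch && libSupportsPerStream) {
        /* Both modes available: batch is preferred unless explicitly disabled. */
        return (userSetOption && !enableBatchProcess) ? MODE_PER_STREAM : MODE_BATCH;
    }
    /* Only one mode available: enable-batch-process has no effect. */
    return libSupportsBatch ? MODE_BATCH : MODE_PER_STREAM;
}
```

The override only matters when both modes are available; otherwise the library's single supported mode wins.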

The low-level capabilities also include support for reporting past-frame data: object tracking data that was generated in past frames but not yet reported as output. This happens when the low-level tracker stores tracking data from past frames internally, for example because of low confidence, and later decides to report it, for example because its confidence has increased. Past-frame data are reported as user metadata (user-meta). This mode is enabled with the enable-past-frame configuration option.

The plugin accepts NV12/RGBA data from the upstream component and scales (converts) the input buffer to a buffer in the format required by the low-level library, with tracker width and height. (Tracker width and height must be specified in the configuration file’s [tracker] section.)

The low-level tracker library is selected via the ll-lib-file configuration option in the tracker configuration section. The selected low-level library may also require its own configuration file, which can be specified via the ll-config-file option.

The three reference low-level libraries support different algorithms:

• The KLT tracker uses a CPU-based implementation of the Kanade Lucas Tomasi (KLT) tracker algorithm. This library requires no configuration file.

• The Intersection over Union (IOU) tracker uses the intersection of the detector’s bounding boxes across frames to determine the object’s unique ID. This library takes an optional configuration file.

• The Nv-adapted Discriminative Correlation Filter (NvDCF) tracker uses a correlation filter-based online discriminative learning algorithm, coupled with a Hungarian algorithm for data association in multi-object tracking. This library accepts an optional configuration file.
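The IOU tracker's matching criterion is the overlap between bounding boxes in consecutive frames. The sketch below computes intersection over union for two axis-aligned boxes; the `BBox` type and `bbox_iou` function are hypothetical, and the reference library's actual association logic and thresholds are not shown.

```c
#include <assert.h>

/* Axis-aligned bounding box: top-left corner plus width and height. */
typedef struct { float left, top, width, height; } BBox;

static float max_f(float a, float b) { return a > b ? a : b; }
static float min_f(float a, float b) { return a < b ? a : b; }

/* Intersection over union: 0 for disjoint boxes, 1 for identical boxes. */
float bbox_iou(BBox a, BBox b)
{
    assert(a.width >= 0 && a.height >= 0 && b.width >= 0 && b.height >= 0);
    /* Overlap extent along each axis, clamped at zero when the boxes miss. */
    float ix = max_f(0.0f, min_f(a.left + a.width, b.left + b.width) - max_f(a.left, b.left));
    float iy = max_f(0.0f, min_f(a.top + a.height, b.top + b.height) - max_f(a.top, b.top));
    float inter = ix * iy;
    float uni = a.width * a.height + b.width * b.height - inter;
    return uni > 0.0f ? inter / uni : 0.0f;
}
```

For example, two 10×10 boxes offset by 5 pixels horizontally overlap in a 5×10 region, giving 50 / 150 ≈ 0.33.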


Inputs and Outputs

This section summarizes the inputs, outputs, and communication facilities of the Gst-nvtracker plugin.

• Inputs

• Gst Buffer (batched)

• NvDsBatchMeta

Formats supported are NV12 and RGBA.

• Control parameters

• tracker-width

• tracker-height

• gpu-id (for dGPU only)

• ll-lib-file

• ll-config-file

• enable-batch-process

• enable-past-frame

• Output

• Gst Buffer (provided as an input)

• NvDsBatchMeta (Updated by Gst-nvtracker with tracked object coordinates and object IDs)

Features

The following table summarizes the features of the plugin.

Features of the Gst-nvtracker plugin

| Feature | Description | Release |
|---|---|---|
| Configurable tracker width/height | Frames are internally scaled to specified resolution for tracking | DS 2.0 |
| Multi-stream CPU/GPU tracker | Supports tracking on batched buffers consisting of frames from different sources | DS 2.0 |
| NV12 Input | — | DS 2.0 |
| RGBA Input | — | DS 3.0 |
| Allows low FPS tracking | IOU tracker | DS 3.0 |
| Configurable GPU device | User can select GPU for internal scaling/color format conversions and tracking | DS 2.0 |
| Dynamic addition/deletion of sources at runtime | Supports tracking on new sources added at runtime and cleanup of resources when sources are removed | DS 3.0 |
| Support for user’s choice of low-level library | Dynamically loads user selected low-level library | DS 4.0 |
| Support for batch processing | Supports sending frames from multiple input streams to the low-level library as a batch if the low-level library advertises capability to handle that | DS 4.0 |
| Support for multiple buffer formats as input to low-level library | Converts input buffer to formats requested by the low-level library, for up to 4 formats per frame | DS 4.0 |
| Support for reporting past-frame data | Supports reporting past-frame data if the low-level library supports the capability | DS 5.0 |

Gst Properties

The following table describes the Gst properties of the Gst-nvtracker plugin.

Gst-nvtracker plugin, Gst Properties

| Property | Meaning | Type and Range | Example / Notes |
|---|---|---|---|
| tracker-width | Frame width at which the tracker is to operate, in pixels. | Integer, 0 to 4,294,967,295 | tracker-width=640 |
| tracker-height | Frame height at which the tracker is to operate, in pixels. | Integer, 0 to 4,294,967,295 | tracker-height=368 |
| ll-lib-file | Pathname of the low-level tracker library to be loaded by Gst-nvtracker. | String | ll-lib-file=/opt/nvidia/deepstream/libnvds_nvdcf.so |
| ll-config-file | Configuration file for the low-level library if needed. | Path to configuration file | ll-config-file=/opt/nvidia/deepstream/tracker_config.yml |
| gpu-id | ID of the GPU on which device/unified memory is to be allocated, and with which buffer copy/scaling is to be done. (dGPU only.) | Integer, 0 to 4,294,967,295 | gpu-id=1 |
| enable-batch-process | Enables/disables batch processing mode. Only effective if the low-level library supports both batch and per-stream processing. (Optional.) | Boolean | enable-batch-process=1 |
| enable-past-frame | Enables/disables reporting past-frame data mode. Only effective if the low-level library supports it. | Boolean | enable-past-frame=1 |

Custom Low-Level Library

To write a custom low-level tracker library, implement the API defined in sources/includes/nvdstracker.h. Parts of the API refer to sources/includes/nvbufsurface.h.

The names of API functions and data structures are prefixed with NvMOT, which stands for NVIDIA Multi-Object Tracker.

This is the general flow of the API from the low-level library’s perspective:

1. The first required function is:

```c
NvMOTStatus NvMOT_Query(
    uint16_t customConfigFilePathSize,
    char* pCustomConfigFilePath,
    NvMOTQuery *pQuery
);
```

The plugin uses this function to query the low-level library’s capabilities and requirements before it starts any processing sessions (contexts) with the library. Queried properties include the input frame memory format (e.g., RGBA or NV12), memory type (e.g., NVIDIA® CUDA® device or CPU mapped NVMM), and support for batch processing.

The plugin performs this query once, and its results apply to all contexts established with the low-level library. If a low-level library configuration file is specified, it is provided in the query for the library to consult.

The query reply structure NvMOTQuery contains the following fields:

• NvMOTCompute computeConfig: Reports compute targets supported by the library. The plugin currently only echoes the reported value when initiating a context.

• uint8_t numTransforms: The number of color formats required by the low-level library. The valid range for this field is 0 to NVMOT_MAX_TRANSFORMS. Set this to 0 if the library does not require any visual data. Note that 0 does not mean that untransformed data will be passed to the library.

• NvBufSurfaceColorFormat colorFormats[NVMOT_MAX_TRANSFORMS]: The list of color formats required by the low-level library. Only the first numTransforms entries are valid.

• NvBufSurfaceMemType memType: Memory type for the transform buffers. The plugin allocates buffers of this type to store color and scale-converted frames, and the buffers are passed to the low-level library for each frame. Note that support is currently limited to the following types:

  dGPU: NVBUF_MEM_CUDA_PINNED, NVBUF_MEM_CUDA_UNIFIED

  Jetson: NVBUF_MEM_SURFACE_ARRAY

• bool supportBatchProcessing: True if the low-level library supports batch processing across multiple streams; otherwise false.
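A minimal sketch of how a low-level library might fill in its query reply, assuming a library that wants a single luma-only buffer per frame and no batch processing. `SketchQuery`, its enums, `SKETCH_MAX_TRANSFORMS = 4` (taken from the "up to 4 formats per frame" feature above), and `sketch_query` are simplified, hypothetical stand-ins for `NvMOTQuery`, the NvBufSurface enums, `NVMOT_MAX_TRANSFORMS`, and `NvMOT_Query`; the real definitions in nvdstracker.h and nvbufsurface.h differ and include more fields.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical stand-ins for the NvMOTQuery fields described above. */
#define SKETCH_MAX_TRANSFORMS 4 /* assumed; the real limit is NVMOT_MAX_TRANSFORMS */
typedef enum { COLOR_GRAY8, COLOR_NV12, COLOR_RGBA } SketchColorFormat;
typedef enum { MEM_CUDA_PINNED, MEM_CUDA_UNIFIED, MEM_SURFACE_ARRAY } SketchMemType;

typedef struct {
    uint8_t numTransforms;                            /* 0..SKETCH_MAX_TRANSFORMS */
    SketchColorFormat colorFormats[SKETCH_MAX_TRANSFORMS];
    SketchMemType memType;
    bool supportBatchProcessing;
} SketchQuery;

/* Capability reply of a hypothetical library that wants one luma-only buffer
 * per frame and handles one stream per context.
 * Returns 0 on success (standing in for NvMOTStatus_OK). */
int sketch_query(uint16_t configPathSize, const char *configPath, SketchQuery *q)
{
    (void)configPathSize; /* a real library could consult its config file here */
    (void)configPath;
    if (q == NULL)
        return -1;
    q->numTransforms = 1;              /* only colorFormats[0] is valid */
    q->colorFormats[0] = COLOR_GRAY8;  /* luma-only, the format KLT uses */
    q->memType = MEM_CUDA_UNIFIED;     /* dGPU-supported; Jetson would use MEM_SURFACE_ARRAY */
    q->supportBatchProcessing = false; /* plugin will create one context per stream */
    assert(q->numTransforms <= SKETCH_MAX_TRANSFORMS);
    return 0;
}
```

A library that needs no visual data, such as one modeled on the IOU tracker, would instead report `numTransforms = 0`.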

2. After the query, and before any frames arrive, the plugin must initialize a context with the low-level library by calling:

```c
NvMOTStatus NvMOT_Init(
    NvMOTConfig *pConfigIn,
    NvMOTContextHandle *pContextHandle,
    NvMOTConfigResponse *pConfigResponse
);
```

The context handle is opaque outside the low-level library. In batch processing mode, the plugin requests a single context for all input streams. In per-stream processing mode, the plugin makes this call for each input stream so that each stream has its own context.

This call includes a configuration request for the context. The low-level library has an opportunity to:

• Review the configuration and create a context only if the request is accepted. If any part of the configuration request is rejected, no context is created, and the return status must be set to NvMOTStatus_Error. The pConfigResponse field can optionally contain status for specific configuration items.

• Pre-allocate resources based on the configuration.

Note:

• In the NvMOTMiscConfig structure, the logMsg field is currently unsupported and uninitialized.

• The customConfigFilePath pointer is only valid during the call.
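The context rules above can be sketched as follows: one shared context in batch mode or one per stream otherwise, and a configuration request that is either accepted (with resources pre-allocated) or rejected with no context created. `SketchContext`, `SketchStatus`, `contexts_needed`, `sketch_init`, `sketch_deinit`, and the `supportedStreams` limit are hypothetical; the real call takes an `NvMOTConfig` and returns an opaque `NvMOTContextHandle`.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdlib.h>

/* Hypothetical context state; the real NvMOTContextHandle is opaque. */
typedef struct {
    unsigned maxStreams;
    float *workspace; /* resources pre-allocated from the configuration */
} SketchContext;

typedef enum { SKETCH_OK = 0, SKETCH_ERROR = 1 } SketchStatus;

/* Contexts the plugin requests: one shared context in batch mode,
 * one per input stream in per-stream mode. */
unsigned contexts_needed(bool batchMode, unsigned numStreams)
{
    return batchMode ? 1u : numStreams;
}

/* Accept or reject a configuration request. On rejection, no context exists. */
SketchStatus sketch_init(unsigned requestedStreams, unsigned supportedStreams,
                         SketchContext **outCtx)
{
    assert(outCtx != NULL);
    *outCtx = NULL;
    if (requestedStreams == 0 || requestedStreams > supportedStreams)
        return SKETCH_ERROR;
    SketchContext *ctx = malloc(sizeof *ctx);
    if (ctx == NULL)
        return SKETCH_ERROR;
    ctx->maxStreams = requestedStreams;
    /* Pre-allocate per-stream scratch space; the size is arbitrary here. */
    ctx->workspace = calloc(requestedStreams, 1024 * sizeof(float));
    if (ctx->workspace == NULL) {
        free(ctx);
        return SKETCH_ERROR;
    }
    *outCtx = ctx;
    return SKETCH_OK;
}

/* Counterpart of the cleanup call made when processing is complete. */
void sketch_deinit(SketchContext *ctx)
{
    if (ctx != NULL) {
        free(ctx->workspace);
        free(ctx);
    }
}
```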

3. Once a context is initialized, the plugin sends frame data along with detected object bounding boxes to the low-level library each time it receives such data from upstream. It always presents the data as a batch of frames, although the batch contains only a single frame in per-stream processing contexts. Each batch is guaranteed to contain at most one frame from each stream.

The function call for this processing is:

```c
NvMOTStatus NvMOT_Process(
    NvMOTContextHandle contextHandle,
    NvMOTProcessParams *pParams,
    NvMOTTrackedObjBatch *pTrackedObjectsBatch
);
```

Where:

• pParams is a pointer to the input batch of frames to process. The structure contains a list of one or more frames, with at most one frame from each stream. No two frame entries have the same streamID. Each entry of frame data contains a list of one or more buffers in the color formats required by the low-level library, as well as a list of object descriptors for the frame. Most libraries require at most one color format.

• pTrackedObjectsBatch is a pointer to the output batch of object descriptors. It is pre-populated with a value for numFilled, the number of frames included in the input parameters.

If a frame has no output object descriptors, it is still counted in numFilled and is represented with an empty list entry (NvMOTTrackedObjList). An empty list entry has the correct streamID set and numFilled set to 0.

Note: The output object descriptor NvMOTTrackedObj contains a pointer to the associated input object, associatedObjectIn. You must set this to the associated input object only for the frame where the input object is passed in. For example:

• Frame 0: NvMOTObjToTrack X is passed in. The tracker assigns it ID 1, and the output object associatedObjectIn points to X.

• Frame 1: Inference is skipped, so there is no input object. The tracker finds object 1, and the output object associatedObjectIn points to NULL.

• Frame 2: NvMOTObjToTrack Y is passed in. The tracker identifies it as object 1. The output object 1 has associatedObjectIn pointing to Y.
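The `associatedObjectIn` rule from the note can be sketched as follows. A tracker may remember its last matched input internally, but the reported pointer must refer only to an input passed in with the current frame. `SketchObjToTrack`, `SketchTrackedObj`, `Track`, and `report` are hypothetical stand-ins for `NvMOTObjToTrack` and `NvMOTTrackedObj`.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical stand-ins for NvMOTObjToTrack and NvMOTTrackedObj. */
typedef struct { int classId; } SketchObjToTrack;

typedef struct {
    uint64_t trackingId;
    const SketchObjToTrack *associatedObjectIn; /* NULL unless matched this frame */
} SketchTrackedObj;

/* Internal track state. lastMatched is for the tracker's own bookkeeping only. */
typedef struct {
    uint64_t id;
    const SketchObjToTrack *lastMatched;
} Track;

/* Build the output descriptor for one track in the current frame.
 * matchedThisFrame is the input object associated in this frame, or NULL. */
SketchTrackedObj report(Track *t, const SketchObjToTrack *matchedThisFrame)
{
    assert(t != NULL);
    if (matchedThisFrame != NULL)
        t->lastMatched = matchedThisFrame;
    /* Use only this frame's match: reporting t->lastMatched would hand the
     * plugin a pointer to an input object from an earlier frame. */
    SketchTrackedObj out = { t->id, matchedThisFrame };
    return out;
}
```

Calling `report` with X, then NULL, then Y reproduces frames 0, 1, and 2 of the example: the reported pointer is X, then NULL, then Y, while the ID stays 1.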

4. Depending on its capabilities, the low-level tracker may hold tracked-object data from past frames internally without reporting it, for example because of low confidence in those frames. If its confidence increases in later frames and it is ready to report that data, the plugin retrieves the past-frame data from the low-level library with the following function call:

```c
NvMOTStatus NvMOT_ProcessPast(
    NvMOTContextHandle contextHandle,
    NvMOTProcessParams *pParams,
    NvDsPastFrameObjBatch *pPastFrameObjBatch
);
```

Where:

• pParams is a pointer to the input batch of frames to process. This structure is needed to check the list of stream IDs in the batch.

• pPastFrameObjBatch is a pointer to the output batch of object descriptors generated in past frames. The data structure NvDsPastFrameObjBatch is defined in include/nvds_tracker_meta.h. It may include a set of tracking data for each stream in the input. For each object, there may be multiple past-frame entries if tracking data was stored for multiple frames.

5. If a video stream source is removed on the fly, the plugin calls the following function so that the low-level tracker library can remove the stream as well. This API is optional and valid only when batch processing mode is enabled; the plugin calls it only if the low-level library actually implements it. When called, the low-level library can release any per-stream resources it has allocated:

```c
void NvMOT_RemoveStreams(NvMOTContextHandle contextHandle, NvMOTStreamId streamIdMask);
```
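A minimal sketch of per-stream cleanup in a batch-mode context. `StreamSlot`, `SketchBatchContext`, and `sketch_remove_streams` are hypothetical, and this sketch assumes for simplicity that `streamIdMask` equals the ID of the removed stream; consult nvdstracker.h for how the mask is actually defined.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>

#define SKETCH_MAX_STREAMS 8

/* Hypothetical per-stream slot inside a batch-mode context. */
typedef struct {
    uint64_t streamId;
    void *resources;                     /* NULL when the slot is free */
} StreamSlot;

typedef struct { StreamSlot slots[SKETCH_MAX_STREAMS]; } SketchBatchContext;

/* Free per-stream resources for the removed stream.
 * ASSUMPTION: streamIdMask is treated as the exact ID of that stream. */
void sketch_remove_streams(SketchBatchContext *ctx, uint64_t streamIdMask)
{
    assert(ctx != NULL);
    for (int i = 0; i < SKETCH_MAX_STREAMS; ++i) {
        StreamSlot *s = &ctx->slots[i];
        if (s->resources != NULL && s->streamId == streamIdMask) {
            free(s->resources);
            s->resources = NULL;
        }
    }
}
```

Other streams in the same context keep their resources, which is what allows the shared batch context to continue running.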

6. When all processing is complete, the plugin calls this function to clean up the context:

```c
void NvMOT_DeInit(NvMOTContextHandle contextHandle);
```

Low-Level Tracker Library Comparisons and Tradeoffs

DeepStream 5.0 provides three low-level tracker libraries with different resource requirements and performance characteristics in terms of accuracy, robustness, and efficiency, so you can choose the best tracker for your use case. The following table compares them.

Tracker library comparison

| Tracker | Computational Load (GPU) | Computational Load (CPU) | Pros | Cons | Best Use Cases |
|---|---|---|---|---|---|
| IOU | X | Very Low | Lightweight | No visual features for matching, so prone to frequent tracker ID switches and failures. Not suitable for fast-moving scenes. | Objects are sparsely located, with distinct sizes. Detector is expected to run every frame or very frequently (e.g., every alternate frame). |
| KLT | X | High | Works reasonably well for simple scenes | High CPU utilization. Susceptible to changes in visual appearance due to noise and perturbations, such as shadow, non-rigid deformation, out-of-plane rotation, and partial occlusion. Cannot work on objects with low texture. | Objects with strong textures and simpler background. Ideal for high CPU resource availability. |
| NvDCF | Medium | Low | Highly robust against partial occlusions, shadow, and other transient visual changes. Less frequent ID switches. | Slower than KLT and IOU due to increased computational complexity. Reduces the total number of streams processed. | Multi-object, complex scenes with partial occlusion. |

NvDCF Low-Level Tracker

NvDCF is a reference implementation of the custom low-level tracker library that supports multi-stream, multi-object tracking in a batch mode using a discriminative correlation filter (DCF) based approach for visual object tracking and a Hungarian algorithm for data association.

NvDCF allocates memory during initialization based on:

• The number of streams to be processed

• The maximum number of objects to be tracked per stream (denoted as maxTargetsPerStream in a configuration file for the NvDCF low-level library, tracker_config.yml)

Once the number of objects being tracked reaches the configured maximum value, any new objects will be discarded until resources for some existing tracked objects are released. Note that the number of objects being tracked includes objects that are tracked in Shadow Mode (described below). Therefore, NVIDIA recommends that you make maxTargetsPerStream large enough to accommodate the maximum number of objects of interest that may appear in a frame, as well as the past objects that may be tracked in shadow mode. Also, note that GPU memory usage by NvDCF is linearly proportional to the total number of objects being tracked, which is (number of video streams) × (maxTargetsPerStream).
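The capacity rule and the memory scaling described above reduce to simple arithmetic. The function names are hypothetical; the 65535 bound comes from the maxTargetsPerStream range in the configuration table at the end of this section.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Upper bound on objects tracked at once across all streams. Per the text,
 * NvDCF's GPU memory use grows linearly with this number. */
uint64_t total_object_capacity(uint32_t numStreams, uint32_t maxTargetsPerStream)
{
    assert(maxTargetsPerStream <= 65535);   /* documented parameter range */
    return (uint64_t)numStreams * maxTargetsPerStream;
}

/* Can a new object be admitted on a stream? activeCount must include objects
 * in shadow mode, so a stream can be "full" with few visible objects. */
bool can_admit_new_object(uint32_t activeCount, uint32_t maxTargetsPerStream)
{
    return activeCount < maxTargetsPerStream;
}
```

For example, 4 streams with maxTargetsPerStream: 30 give room for 4 × 30 = 120 tracked objects, shadow-mode objects included.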

DCF-based trackers typically apply an exponential moving average for temporal consistency when the optimal correlation filter is created and updated. The learning rate for this moving average can be configured as filterLr. The standard deviation of the Gaussian used as the desired response when creating an optimal DCF filter can be configured as gaussianSigma.
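The exponential moving average mentioned above has the standard form h ← (1 − filterLr)·h + filterLr·h_new. The sketch applies it element-wise to a filter stored as a flat float array; the array layout and the `ema_update` name are assumptions, and NvDCF's actual filter update is more involved.

```c
#include <assert.h>
#include <stddef.h>

/* Element-wise exponential moving average:
 * h[i] <- (1 - filterLr) * h[i] + filterLr * hNew[i]
 * filterLr = 0 keeps the old filter; filterLr = 1 replaces it outright. */
void ema_update(float *h, const float *hNew, size_t n, float filterLr)
{
    assert(filterLr >= 0.0f && filterLr <= 1.0f);
    for (size_t i = 0; i < n; ++i)
        h[i] = (1.0f - filterLr) * h[i] + filterLr * hNew[i];
}
```

A larger filterLr adapts faster to appearance changes; a smaller one keeps the filter more stable over time.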

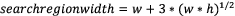

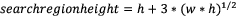

DCF-based trackers also define a search region around the detected target location large enough for the same target to be detected in the search region in the next frame. The SearchRegionPaddingScale property determines the size of the search region as a multiple of the target’s bounding box size. For example, with SearchRegionPaddingScale:3, the size of the search region would be:

Where w and h are the width and height of the target’s bounding box.

Once the search region is defined for each target, the image patches for the search regions are cropped and scaled to a predefined feature image size, then the visual features are extracted. The featureImgSizeLevel property defines the size of the feature image. A lower value of featureImgSizeLevel causes NvDCF to use a smaller feature size, increasing GPU performance at the cost of accuracy and robustness.

Consider the relationship between featureImgSizeLevel and SearchRegionPaddingScale when configuring the parameters. If SearchRegionPaddingScale is increased while featureImgSizeLevel is fixed, the number of pixels corresponding to the target in the feature images is effectively decreased.

The minDetectorConfidence property sets the confidence level below which object detection results are filtered out.

To achieve robust tracking, NvDCF employs two approaches to handling false alarms from PGIE detectors: late activation for handling false positives and shadow tracking for false negatives. Whenever a new object is detected, a new tracker is instantiated in temporary mode. It must be activated before it is considered a valid target. Before activation, it undergoes a probationary period in temporary mode, whose length is defined by probationAge. If the object is not detected for more than earlyTerminationAge consecutive frames during this period, the tracker is terminated early.

Once the tracker for an object is activated, it is put into inactive mode only when (1) no matching detector input is found during data association, or (2) the tracker confidence falls below the threshold defined by minTrackerConfidence. The per-object tracker is put into active mode again if a matching detector input is found. The length of the period during which a per-object tracker is in inactive mode is called the shadow tracking age; if it reaches the threshold defined by maxShadowTrackingAge, the tracker is terminated. If the bounding box of a tracked object goes partially out of the image frame so that its visibility falls below the threshold defined by minVisibiilty4Tracking, the tracker is also terminated.

Note that probationAge is counted against a clock that is incremented at every frame, while maxShadowTrackingAge and earlyTerminationAge are counted against a clock that is incremented only when the shadow tracking age is incremented. For example, when the PGIE detector runs on every frame (i.e., interval=0 in the [primary-gie] section of the deepstream-app configuration file), all the ages are incremented based on the same clock. If the PGIE detector runs on every other frame (i.e., interval is set to 1 in [primary-gie]) and probationAge:12, it yields almost the same results as interval=0 with probationAge:24, because shadowTrackingAge is incremented at half the speed compared to the case with PGIE interval=0.

NvDCF can, to some extent, generate unique target IDs. If enabled by setting `useUniqueID: 1`, NvDCF generates a 32-bit random number at initialization and uses it as the upper 32 bits of every subsequently generated target ID, which is of type uint64_t. The randomly generated upper 32 bits let target IDs increment from a random position in the ID space, while the lower 32 bits start from 0. If disabled (the default), target IDs are incremented from 0.

NvDCF employs two types of state estimators: MovingAvgEstimator (MAE) and Kalman Filter (KF). Both KF and MAE define 7 states, {x, y, a, h, dx, dy, dh}, where x and y are the coordinates of the top-left corner of a target bbox, a and h are the aspect ratio and height of the bbox, respectively, and dx, dy, and dh are the velocities of x, y, and h. KF employs a constant velocity model for generic use. The measurement vector is defined as {x, y, a, h}. The process noise variance for {x, y}, {a, h}, and {dx, dy, dh} can be configured by `kfProcessNoiseVar4Loc`, `kfProcessNoiseVar4Scale`, and `kfProcessNoiseVar4Vel`, respectively. Note that from the state estimator’s point of view, there can be two different measurements: the bbox from the detector (i.e., PGIE) and the bbox from the tracker. This is because NvDCF can localize targets using its learned filter. The measurement noise variance for these two types of measurements can be configured by `kfMeasurementNoiseVar4Det` and `kfMeasurementNoiseVar4Trk`. MAE is much simpler than KF and therefore more efficient to process. The learning rates for the moving average of the defined states can be configured by `trackExponentialSmoothingLr_loc`, `trackExponentialSmoothingLr_scale`, and `trackExponentialSmoothingLr_velocity`.

To enhance robustness, NvDCF can add the cross-correlation scores with nearby objects as an additional regularization term, which is referred to as instance awareness. If enabled by `useInstanceAwareness`, the number of nearby instances and the regularization weight for each instance are determined by the config params `maxInstanceNum_ia` and `lambda_ia`, respectively.

The following table summarizes the configuration parameters for an NvDCF low-level tracker.

NvDCF low-level tracker, configuration properties

| Property | Meaning | Type and Range | Example / Notes |
|---|---|---|---|
| useUniqueID | Enable unique ID generation scheme | Boolean | useUniqueID: 1 |
| maxTargetsPerStream | Max number of targets to track per stream | Integer, 0 to 65535 | maxTargetsPerStream: 30 |
| filterLr | Learning rate for DCF filter in exponential moving average | Float, 0.0 to 1.0 | filterLr: 0.11 |
| filterChannelWeightsLr | Learning rate for weights for different feature channels in DCF | Float, 0.0 to 1.0 | filterChannelWeightsLr: 0.22 |
| gaussianSigma | Standard deviation of the Gaussian for the desired response | Float, >0.0 | gaussianSigma: 0.75 |
| featureImgSizeLevel | Size of a feature image | Integer, 1 to 5 | featureImgSizeLevel: 1 |
| SearchRegionPaddingScale | Search region size | Integer, 1 to 3 | SearchRegionPaddingScale: 3 |
| minDetectorConfidence | Minimum detector confidence for a valid object | Float, -inf to inf | minDetectorConfidence: 0.0 |
| minTrackerConfidence | Minimum tracker confidence for a valid target | Float, 0.0 to 1.0 | minTrackerConfidence: 0.6 |
| minTargetBboxSize | Minimum bbox size for a valid target (pixels) | Integer, ≥0 | minTargetBboxSize: 10 |
| minDetectorBboxVisibilityTobeTracked | Minimum detector bbox visibility for a valid candidate | Float, 0.0 to 1.0 | minDetectorBboxVisibilityTobeTracked: 0 |
| minVisibiilty4Tracking | Minimum visibility of target bounding box to be considered valid | Float, 0.0 to 1.0 | minVisibiilty4Tracking: 0.1 |
| targetDuplicateRunInterval | Interval in which duplicate target removal is carried out (frames) | Integer, -inf to inf | targetDuplicateRunInterval: 5 |
| minIou4TargetDuplicate | Min IOU for two bboxes to be considered as duplicates | Float, 0.0 to 1.0 | minIou4TargetDuplicate: 0.9 |
| useGlobalMatching | Enable Hungarian method for data association | Boolean | useGlobalMatching: 1 |
| maxShadowTrackingAge | Maximum length of shadow tracking | Integer, ≥0 | maxShadowTrackingAge: 9 |
| probationAge | Length of probationary period | Integer, ≥0 | probationAge: 12 |
| earlyTerminationAge | Early termination age | Integer, ≥0 | earlyTerminationAge: 2 |
| minMatchingScore4Overall | Min total score for valid matching | Float, 0.0 to 1.0 | minMatchingScore4Overall: 0 |
| minMatchingScore4SizeSimilarity | Min bbox size similarity score for valid matching | Float, 0.0 to 1.0 | minMatchingScore4SizeSimilarity: 0.5 |
| minMatchingScore4Iou | Min IOU score for valid matching | Float, 0.0 to 1.0 | minMatchingScore4Iou: 0.1 |
| minMatchingScore4VisualSimilarity | Min visual similarity score for valid matching | Float, 0.0 to 1.0 | minMatchingScore4VisualSimilarity: 0.2 |
| matchingScoreWeight4VisualSimilarity | Weight for visual similarity term in matching cost function | Float, 0.0 to 1.0 | matchingScoreWeight4VisualSimilarity: 0.8 |
| matchingScoreWeight4SizeSimilarity | Weight for size similarity term in matching cost function | Float, 0.0 to 1.0 | matchingScoreWeight4SizeSimilarity: 0 |
| matchingScoreWeight4Iou | Weight for IOU term in matching cost function | Float, 0.0 to 1.0 | matchingScoreWeight4Iou: 0.1 |
| matchingScoreWeight4Age | Weight for tracking age term in matching cost function | Float, 0.0 to 1.0 | matchingScoreWeight4Age: 0.1 |
| useTrackSmoothing | Enable state estimator | Boolean | useTrackSmoothing: 1 |
| stateEstimatorType | Type of state estimator among {MovingAvg: 1, Kalman: 2} | Integer | stateEstimatorType: 2 |
| trackExponentialSmoothingLr_loc | Learning rate for location | Float, 0.0 to 1.0 | trackExponentialSmoothingLr_loc: 0.5 |
| trackExponentialSmoothingLr_scale | Learning rate for scale | Float, 0.0 to 1.0 | trackExponentialSmoothingLr_scale: 0.3 |
| trackExponentialSmoothingLr_velocity | Learning rate for velocity | Float, 0.0 to 1.0 | trackExponentialSmoothingLr_velocity: 0.05 |
| kfProcessNoiseVar4Loc | Process noise variance for location | Float, ≥0 | kfProcessNoiseVar4Loc: 0.1 |
| kfProcessNoiseVar4Scale | Process noise variance for scale | Float, ≥0 | kfProcessNoiseVar4Scale: 0.04 |
| kfProcessNoiseVar4Vel | Process noise variance for velocity | Float, ≥0 | kfProcessNoiseVar4Vel: 0.04 |
| kfMeasurementNoiseVar4Trk | Measurement noise variance for tracker bbox | Float, ≥0 | kfMeasurementNoiseVar4Trk: 9 |
| kfMeasurementNoiseVar4Det | Measurement noise variance for detector bbox | Float, ≥0 | kfMeasurementNoiseVar4Det: 9 |