Detailed Description

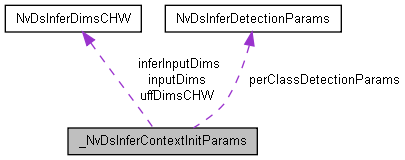

Holds the initialization parameters required for the NvDsInferContext interface.

Definition at line 241 of file sources/includes/nvdsinfer_context.h.

Data Fields

| Type | Name | Description |
| --- | --- | --- |
| unsigned int | uniqueID | Holds a unique identifier for the instance. |
| NvDsInferNetworkMode | networkMode | Holds an internal data format specifier used by the inference engine. |
| char | protoFilePath [_PATH_MAX] | Holds the pathname of the prototxt file. |
| char | modelFilePath [_PATH_MAX] | Holds the pathname of the caffemodel file. |
| char | uffFilePath [_PATH_MAX] | Holds the pathname of the UFF model file. |
| char | onnxFilePath [_PATH_MAX] | Holds the pathname of the ONNX model file. |
| char | tltEncodedModelFilePath [_PATH_MAX] | Holds the pathname of the TLT encoded model file. |
| char | int8CalibrationFilePath [_PATH_MAX] | Holds the pathname of the INT8 calibration file. |
| union { NvDsInferDimsCHW inputDims; NvDsInferDimsCHW uffDimsCHW; } | instead | Deprecated; use inferInputDims. inputDims holds the input dimensions for the model; uffDimsCHW holds the input dimensions for the UFF model. |
| NvDsInferTensorOrder | uffInputOrder | Holds the original input order for the UFF model. |
| char | uffInputBlobName [_MAX_STR_LENGTH] | Holds the name of the input layer for the UFF model. |
| NvDsInferTensorOrder | netInputOrder | Holds the original input order for the network. |
| char | tltModelKey [_MAX_STR_LENGTH] | Holds the string key for decoding the TLT encoded model. |
| char | modelEngineFilePath [_PATH_MAX] | Holds the pathname of the serialized model engine file. |
| unsigned int | maxBatchSize | Holds the maximum number of frames to be inferred together in a batch. |
| char | labelsFilePath [_PATH_MAX] | Holds the pathname of the labels file containing strings for the class labels. |
| char | meanImageFilePath [_PATH_MAX] | Holds the pathname of the mean image file (PPM format). |
| float | networkScaleFactor | Holds the normalization factor with which to scale the input pixels. |
| NvDsInferFormat | networkInputFormat | Holds the network input format. |
| float | offsets [_MAX_CHANNELS] | Holds the per-channel offsets for mean subtraction. |
| unsigned int | numOffsets | |
| NvDsInferNetworkType | networkType | Holds the network type. |
| int | useDBScan | Deprecated; use NvDsInferClusterMode instead. Holds a Boolean; true if DBScan is to be used for object clustering, or false if OpenCV groupRectangles is to be used. |
| unsigned int | numDetectedClasses | Holds the number of classes detected by a detector network. |
| NvDsInferDetectionParams * | perClassDetectionParams | Holds per-class detection parameters. |
| float | classifierThreshold | Holds the minimum confidence threshold for the classifier to consider a label valid. |
| float | segmentationThreshold | |
| char ** | outputLayerNames | Holds a pointer to an array of pointers to output layer names. |
| unsigned int | numOutputLayers | Holds the number of output layer names. |
| char | customLibPath [_PATH_MAX] | Holds the pathname of the library containing custom methods required to support the network. |
| char | customBBoxParseFuncName [_MAX_STR_LENGTH] | Holds the name of the custom bounding box function in the custom library. |
| char | customClassifierParseFuncName [_MAX_STR_LENGTH] | Name of the custom classifier attribute parsing function in the custom library. |
| int | copyInputToHostBuffers | Holds a Boolean; true if the input layer contents are to be copied to host memory for access by the application. |
| unsigned int | gpuID | Holds the ID of the GPU which is to run the inference. |
| int | useDLA | Holds a Boolean; true if DLA is to be used. |
| int | dlaCore | Holds the ID of the DLA core to use. |
| unsigned int | outputBufferPoolSize | Holds the number of sets of output buffers (host and device) to be allocated. |
| char | customNetworkConfigFilePath [_PATH_MAX] | Holds the pathname of the configuration file for custom network creation. |
| char | customEngineCreateFuncName [_MAX_STR_LENGTH] | Name of the custom engine creation function in the custom library. |
| int | forceImplicitBatchDimension | For model parsers supporting both implicit batch dim and full dims, prefer to use implicit batch dim. |
| unsigned int | workspaceSize | Maximum workspace size, in MB, used as the TensorRT build setting for the CUDA engine. |
| NvDsInferDimsCHW | inferInputDims | Inference input dimensions for runtime engine. |
| NvDsInferClusterMode | clusterMode | Holds the type of clustering mode. |
| char | customBBoxInstanceMaskParseFuncName [_MAX_STR_LENGTH] | Holds the name of the bounding box and instance mask parse function in the custom library. |
| char ** | outputIOFormats | Can be used to specify the format and datatype for bound output layers. |
| unsigned int | numOutputIOFormats | Holds the number of output IO formats specified. |
| char | customSegmentationParseFuncName [_MAX_STR_LENGTH] | Holds the name of the segmentation parse function in the custom library. |
| char ** | layerDevicePrecisions | Can be used to specify the device type and inference precision of layers. |
| unsigned int | numLayerDevicePrecisions | Holds the number of layer device precisions specified. |
| NvDsInferTensorOrder | segmentationOutputOrder | Holds the output order for the segmentation network. |
| int | inputFromPreprocessedTensor | Boolean flag indicating that the caller will supply preprocessed tensors for inferencing. |
| int | disableOutputHostCopy | Boolean flag indicating whether post-processing is performed on the GPU. If enabled, nvinfer returns GPU buffers to post-processing, and the user must write CUDA post-processing code. |
| int | autoIncMem | Boolean flag indicating whether to automatically increase the buffer pool size when facing a bottleneck. |
| double | maxGPUMemPer | Maximum GPU memory that can be occupied while expanding the buffer pool. |
| int | dumpIpTensor | Boolean flag indicating whether or not to dump raw input tensor data. |
| int | dumpOpTensor | Boolean flag indicating whether or not to dump raw output tensor data. |
| int | overwriteIpTensor | Boolean flag indicating whether or not to overwrite the inference input buffer with raw input tensor data provided by the user. |
| char | ipTensorFilePath [_PATH_MAX] | Path to the raw input tensor data that is going to be used to overwrite the buffer. |
| int | overwriteOpTensor | Boolean flag indicating whether or not to overwrite the output buffers with raw output tensor data provided by the user. |
| char ** | opTensorFilePath | List of paths to the raw output tensor data that are going to be used to overwrite the different output buffers. |
| int | useStronglyTyped | Boolean flag indicating whether to enable strongly typed network mode. |
| char ** | dynamicPropertyKeys | Dynamic properties for TensorRT/trtexec flags. |
| char ** | dynamicPropertyValues | |
| unsigned int | numDynamicProperties | |
| int | warmupEngine | Boolean flag indicating whether to run TensorRT engine warmup during initialization. |

Field Documentation

◆ autoIncMem

| int _NvDsInferContextInitParams::autoIncMem |

Boolean flag indicating whether to automatically increase the buffer pool size when facing a bottleneck.

Definition at line 430 of file sources/includes/nvdsinfer_context.h.

◆ classifierThreshold

| float _NvDsInferContextInitParams::classifierThreshold |

Holds the minimum confidence threshold for the classifier to consider a label valid.

Definition at line 331 of file sources/includes/nvdsinfer_context.h.

◆ clusterMode

| NvDsInferClusterMode _NvDsInferContextInitParams::clusterMode |

Holds the type of clustering mode.

Definition at line 389 of file sources/includes/nvdsinfer_context.h.

◆ copyInputToHostBuffers

| int _NvDsInferContextInitParams::copyInputToHostBuffers |

Holds a Boolean; true if the input layer contents are to be copied to host memory for access by the application.

Definition at line 353 of file sources/includes/nvdsinfer_context.h.

◆ customBBoxInstanceMaskParseFuncName

| char _NvDsInferContextInitParams::customBBoxInstanceMaskParseFuncName |

Holds the name of the bounding box and instance mask parse function in the custom library.

Definition at line 393 of file sources/includes/nvdsinfer_context.h.

◆ customBBoxParseFuncName

| char _NvDsInferContextInitParams::customBBoxParseFuncName |

Holds the name of the custom bounding box function in the custom library.

Definition at line 346 of file sources/includes/nvdsinfer_context.h.

◆ customClassifierParseFuncName

| char _NvDsInferContextInitParams::customClassifierParseFuncName |

Name of the custom classifier attribute parsing function in the custom library.

Definition at line 349 of file sources/includes/nvdsinfer_context.h.

◆ customEngineCreateFuncName

| char _NvDsInferContextInitParams::customEngineCreateFuncName |

Name of the custom engine creation function in the custom library.

Definition at line 373 of file sources/includes/nvdsinfer_context.h.

◆ customLibPath

| char _NvDsInferContextInitParams::customLibPath |

Holds the pathname of the library containing custom methods required to support the network.

Definition at line 343 of file sources/includes/nvdsinfer_context.h.
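
Path members such as customLibPath are fixed-size char arrays, so a caller has to copy a string into them and decide what happens when the string does not fit. The following is a minimal sketch of one way to do that. `DemoInitParams`, `kDemoPathMax`, and `setPathField` are hypothetical names used only for illustration; the real struct and the real `_PATH_MAX` value come from nvdsinfer_context.h, and the size of 4096 is an assumption.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>
#include <string>

// Stand-in for illustration only. The real struct and _PATH_MAX are defined
// in nvdsinfer_context.h; the size used here is an assumption.
constexpr std::size_t kDemoPathMax = 4096;

struct DemoInitParams {
    char customLibPath[kDemoPathMax];
};

// Copies src into a fixed-size char field. Returns false, and leaves dst as
// an empty string, if src plus its terminating NUL would not fit.
template <std::size_t N>
bool setPathField(char (&dst)[N], const std::string& src) {
    static_assert(N > 0, "destination must have room for the terminator");
    if (src.size() >= N) {
        dst[0] = '\0';
        return false;
    }
    std::memcpy(dst, src.c_str(), src.size() + 1);  // copy includes the NUL
    return true;
}
```

Rejecting an oversized path outright, rather than silently truncating it, avoids handing the library a pathname that points at the wrong file.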

◆ customNetworkConfigFilePath

| char _NvDsInferContextInitParams::customNetworkConfigFilePath |

Holds the pathname of the configuration file for custom network creation.

This can be used to store custom properties required by the custom network creation function.

Definition at line 370 of file sources/includes/nvdsinfer_context.h.

◆ customSegmentationParseFuncName

| char _NvDsInferContextInitParams::customSegmentationParseFuncName |

Holds the name of segmentation parse function in the custom library.

Definition at line 403 of file sources/includes/nvdsinfer_context.h.

◆ disableOutputHostCopy

| int _NvDsInferContextInitParams::disableOutputHostCopy |

Boolean flag indicating whether post-processing is performed on the GPU. If this flag is enabled, nvinfer returns GPU buffers to post-processing, and the user must write CUDA post-processing code.

Definition at line 425 of file sources/includes/nvdsinfer_context.h.

◆ dlaCore

| int _NvDsInferContextInitParams::dlaCore |

Holds the ID of the DLA core to use.

Definition at line 361 of file sources/includes/nvdsinfer_context.h.

◆ dumpIpTensor

| int _NvDsInferContextInitParams::dumpIpTensor |

Boolean flag indicating whether or not to dump raw input tensor data.

Definition at line 438 of file sources/includes/nvdsinfer_context.h.

◆ dumpOpTensor

| int _NvDsInferContextInitParams::dumpOpTensor |

Boolean flag indicating whether or not to dump raw output tensor data.

Definition at line 441 of file sources/includes/nvdsinfer_context.h.

◆ dynamicPropertyKeys

| char ** _NvDsInferContextInitParams::dynamicPropertyKeys |

Dynamic properties for TensorRT/trtexec flags.

This allows adding new properties without modifying the structure. Each property is stored as a key-value pair where:

- key: property name (e.g., "data_max", "init_max", "calibration_data")

- value: property value as string (can be converted to appropriate type)

Definition at line 472 of file sources/includes/nvdsinfer_context.h.
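
The key-value layout described above can be sketched as a simple lookup. This example assumes that `dynamicPropertyKeys[i]` pairs with `dynamicPropertyValues[i]` and that numDynamicProperties gives the length of both arrays; the page does not state this explicitly. `DemoDynamicProps` and `findDynamicProperty` are hypothetical names, not part of the library.

```cpp
#include <cassert>
#include <cstring>

// Stand-in holding only the three members discussed here. The real members
// live in _NvDsInferContextInitParams.
struct DemoDynamicProps {
    char** dynamicPropertyKeys;
    char** dynamicPropertyValues;
    unsigned int numDynamicProperties;
};

// Returns the value stored for `key`, or nullptr if the key is absent.
// Assumes keys[i] pairs with values[i] for every i < numDynamicProperties.
const char* findDynamicProperty(const DemoDynamicProps& p, const char* key) {
    for (unsigned int i = 0; i < p.numDynamicProperties; ++i) {
        if (std::strcmp(p.dynamicPropertyKeys[i], key) == 0) {
            return p.dynamicPropertyValues[i];
        }
    }
    return nullptr;
}
```

Because values are stored as strings, the caller still has to convert the returned text to the type the property expects.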

◆ dynamicPropertyValues

| char ** _NvDsInferContextInitParams::dynamicPropertyValues |

Definition at line 473 of file sources/includes/nvdsinfer_context.h.

◆ forceImplicitBatchDimension

| int _NvDsInferContextInitParams::forceImplicitBatchDimension |

For model parsers supporting both implicit batch dim and full dims, prefer to use implicit batch dim.

By default, full dims network mode is used.

Definition at line 378 of file sources/includes/nvdsinfer_context.h.

◆ gpuID

| unsigned int _NvDsInferContextInitParams::gpuID |

Holds the ID of the GPU which is to run the inference.

Definition at line 356 of file sources/includes/nvdsinfer_context.h.

◆ inferInputDims

| NvDsInferDimsCHW _NvDsInferContextInitParams::inferInputDims |

Inference input dimensions for runtime engine.

Definition at line 386 of file sources/includes/nvdsinfer_context.h.

◆ inputDims

| NvDsInferDimsCHW _NvDsInferContextInitParams::inputDims |

Holds the input dimensions for the model.

Definition at line 267 of file sources/includes/nvdsinfer_context.h.

◆ inputFromPreprocessedTensor

| int _NvDsInferContextInitParams::inputFromPreprocessedTensor |

Boolean flag indicating that caller will supply preprocessed tensors for inferencing.

NvDsInferContext will skip preprocessing initialization steps and will not interpret network input layer dimensions.

Definition at line 419 of file sources/includes/nvdsinfer_context.h.

◆ instead

| union { ... } _NvDsInferContextInitParams::instead |

Deprecated union containing inputDims and uffDimsCHW. Use inferInputDims instead.

Definition at line 265 of file sources/includes/nvdsinfer_context.h.

◆ int8CalibrationFilePath

| char _NvDsInferContextInitParams::int8CalibrationFilePath |

Holds the pathname of the INT8 calibration file.

Required only when using INT8 mode.

Definition at line 263 of file sources/includes/nvdsinfer_context.h.

◆ ipTensorFilePath

| char _NvDsInferContextInitParams::ipTensorFilePath |

Path to the raw input tensor data that is going to be used to overwrite the buffer.

Definition at line 449 of file sources/includes/nvdsinfer_context.h.

◆ labelsFilePath

| char _NvDsInferContextInitParams::labelsFilePath |

Holds the pathname of the labels file containing strings for the class labels.

The labels file is optional. The file format is described in the custom models section of the DeepStream SDK documentation.

Definition at line 296 of file sources/includes/nvdsinfer_context.h.

◆ layerDevicePrecisions

| char ** _NvDsInferContextInitParams::layerDevicePrecisions |

Can be used to specify the device type and inference precision of layers.

For each layer specified the format is "<layer-name>:<device-type>:<precision>"

Definition at line 408 of file sources/includes/nvdsinfer_context.h.
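
To make the entry format concrete, here is a minimal sketch that splits one entry into its three parts. The field order follows the format string given on this page, and the sample values are illustrative only. `LayerSpec` and `parseLayerSpec` are hypothetical helpers, and splitting from the right (so that a layer name may itself contain ':') is an assumption about how such names should be handled, not documented library behavior.

```cpp
#include <cassert>
#include <string>

// Hypothetical helper type for one "<layer-name>:<device-type>:<precision>" entry.
struct LayerSpec {
    std::string layer;
    std::string deviceType;
    std::string precision;
};

// Splits on the last two ':' characters. Returns false if the entry does not
// contain three non-empty parts; `out` is only written on success.
bool parseLayerSpec(const std::string& text, LayerSpec& out) {
    const std::string::size_type last = text.rfind(':');
    if (last == std::string::npos || last == 0) return false;
    const std::string::size_type mid = text.rfind(':', last - 1);
    if (mid == std::string::npos) return false;

    LayerSpec spec;
    spec.layer = text.substr(0, mid);
    spec.deviceType = text.substr(mid + 1, last - mid - 1);
    spec.precision = text.substr(last + 1);
    if (spec.layer.empty() || spec.deviceType.empty() || spec.precision.empty()) {
        return false;
    }
    out = spec;
    return true;
}
```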

◆ maxBatchSize

| unsigned int _NvDsInferContextInitParams::maxBatchSize |

Holds the maximum number of frames to be inferred together in a batch.

The number of input frames in a batch must be less than or equal to this.

Definition at line 291 of file sources/includes/nvdsinfer_context.h.
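
Since a batch may not exceed maxBatchSize, an application holding more frames than that has to split them across several batches. The sketch below computes the batch sizes for such a split; `planBatches` is a hypothetical helper and not part of the NvDsInferContext interface.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Returns the sizes of consecutive batches needed to cover numFrames frames
// without any batch exceeding maxBatchSize.
std::vector<unsigned int> planBatches(unsigned int numFrames,
                                      unsigned int maxBatchSize) {
    assert(maxBatchSize > 0);  // a zero limit could never make progress
    std::vector<unsigned int> sizes;
    while (numFrames > 0) {
        const unsigned int n = std::min(numFrames, maxBatchSize);
        sizes.push_back(n);
        numFrames -= n;
    }
    return sizes;
}
```

For example, 10 frames with a limit of 4 yield two full batches followed by a partial batch of 2.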

◆ maxGPUMemPer

| double _NvDsInferContextInitParams::maxGPUMemPer |

Maximum GPU memory that can be occupied while expanding the buffer pool.

Definition at line 434 of file sources/includes/nvdsinfer_context.h.

◆ meanImageFilePath

| char _NvDsInferContextInitParams::meanImageFilePath |

Holds the pathname of the mean image file (PPM format).

File resolution must be equal to the network input resolution.

Definition at line 300 of file sources/includes/nvdsinfer_context.h.

◆ modelEngineFilePath

| char _NvDsInferContextInitParams::modelEngineFilePath |

Holds the pathname of the serialized model engine file.

When using the model engine file, other parameters required for creating the model engine are ignored.

Definition at line 286 of file sources/includes/nvdsinfer_context.h.

◆ modelFilePath

| char _NvDsInferContextInitParams::modelFilePath |

Holds the pathname of the caffemodel file.

Definition at line 253 of file sources/includes/nvdsinfer_context.h.

◆ netInputOrder

| NvDsInferTensorOrder _NvDsInferContextInitParams::netInputOrder |

Holds the original input order for the network.

Definition at line 278 of file sources/includes/nvdsinfer_context.h.

◆ networkInputFormat

| NvDsInferFormat _NvDsInferContextInitParams::networkInputFormat |

Holds the network input format.

Definition at line 306 of file sources/includes/nvdsinfer_context.h.

◆ networkMode

| NvDsInferNetworkMode _NvDsInferContextInitParams::networkMode |

Holds an internal data format specifier used by the inference engine.

Definition at line 248 of file sources/includes/nvdsinfer_context.h.

◆ networkScaleFactor

| float _NvDsInferContextInitParams::networkScaleFactor |

Holds the normalization factor with which to scale the input pixels.

Definition at line 303 of file sources/includes/nvdsinfer_context.h.

◆ networkType

| NvDsInferNetworkType _NvDsInferContextInitParams::networkType |

Holds the network type.

Definition at line 315 of file sources/includes/nvdsinfer_context.h.

◆ numDetectedClasses

| unsigned int _NvDsInferContextInitParams::numDetectedClasses |

Holds the number of classes detected by a detector network.

Definition at line 323 of file sources/includes/nvdsinfer_context.h.

◆ numDynamicProperties

| unsigned int _NvDsInferContextInitParams::numDynamicProperties |

Definition at line 474 of file sources/includes/nvdsinfer_context.h.

◆ numLayerDevicePrecisions

| unsigned int _NvDsInferContextInitParams::numLayerDevicePrecisions |

Holds number of layer device precisions specified.

Definition at line 410 of file sources/includes/nvdsinfer_context.h.

◆ numOffsets

| unsigned int _NvDsInferContextInitParams::numOffsets |

Definition at line 312 of file sources/includes/nvdsinfer_context.h.

◆ numOutputIOFormats

| unsigned int _NvDsInferContextInitParams::numOutputIOFormats |

Holds number of output IO formats specified.

Definition at line 400 of file sources/includes/nvdsinfer_context.h.

◆ numOutputLayers

| unsigned int _NvDsInferContextInitParams::numOutputLayers |

Holds the number of output layer names.

Definition at line 338 of file sources/includes/nvdsinfer_context.h.

◆ offsets

| float _NvDsInferContextInitParams::offsets |

Holds the per-channel offsets for mean subtraction.

This is an alternative to the mean image file. The number of offsets in the array must be equal to the number of input channels.

Definition at line 311 of file sources/includes/nvdsinfer_context.h.
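
As an illustration of how the offsets combine with networkScaleFactor, the sketch below applies y = networkScaleFactor * (x - offsets[c]) to a single pixel. This formula is the one described for the Gst-nvinfer plugin's net-scale-factor and offsets properties, and applying it here is an assumption about how the context uses these members. `normalizePixel` is a hypothetical helper.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Applies y = scale * (x - offsets[c]) to one pixel, where `pixel` holds one
// value per input channel.
std::vector<float> normalizePixel(const std::vector<float>& pixel,
                                  const float* offsets,
                                  unsigned int numOffsets,
                                  float networkScaleFactor) {
    assert(numOffsets == pixel.size());  // one offset per input channel
    std::vector<float> out(pixel.size());
    for (std::size_t c = 0; c < pixel.size(); ++c) {
        out[c] = networkScaleFactor * (pixel[c] - offsets[c]);
    }
    return out;
}
```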

◆ onnxFilePath

| char _NvDsInferContextInitParams::onnxFilePath |

Holds the pathname of the ONNX model file.

Definition at line 257 of file sources/includes/nvdsinfer_context.h.

◆ opTensorFilePath

| char ** _NvDsInferContextInitParams::opTensorFilePath |

List of paths to the raw output tensor data that are going to be used to overwrite the different output buffers.

Definition at line 457 of file sources/includes/nvdsinfer_context.h.

◆ outputBufferPoolSize

| unsigned int _NvDsInferContextInitParams::outputBufferPoolSize |

Holds the number of sets of output buffers (host and device) to be allocated.

Definition at line 365 of file sources/includes/nvdsinfer_context.h.

◆ outputIOFormats

| char ** _NvDsInferContextInitParams::outputIOFormats |

Can be used to specify the format and datatype for bound output layers.

For each layer specified the format is "<layer-name>:<data-type>:<format>"

Definition at line 398 of file sources/includes/nvdsinfer_context.h.
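
Members of type `char **`, such as outputIOFormats with its count in numOutputIOFormats, are usually easier to fill from owned strings. The sketch below shows one way to keep the strings alive while exposing them as a pointer array. `CStringArray` is a hypothetical helper, and the sample entries used with it are illustrative values in the "<layer-name>:<data-type>:<format>" shape, not names from a real model.

```cpp
#include <cassert>
#include <string>
#include <utility>
#include <vector>

// Owns a list of strings and exposes them as a char** / count pair.
// Copying is disabled so the stored pointers cannot be left dangling.
class CStringArray {
public:
    explicit CStringArray(std::vector<std::string> items)
        : storage_(std::move(items)) {
        ptrs_.reserve(storage_.size());
        for (std::string& s : storage_) {
            ptrs_.push_back(&s[0]);  // NUL-terminated since C++11
        }
    }
    CStringArray(const CStringArray&) = delete;
    CStringArray& operator=(const CStringArray&) = delete;

    char** data() { return ptrs_.empty() ? nullptr : ptrs_.data(); }
    unsigned int size() const { return static_cast<unsigned int>(ptrs_.size()); }

private:
    std::vector<std::string> storage_;  // declared first: initialized first
    std::vector<char*> ptrs_;
};
```

The helper object must outlive any structure that stores the pointer returned by `data()`.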

◆ outputLayerNames

| char ** _NvDsInferContextInitParams::outputLayerNames |

Holds a pointer to an array of pointers to output layer names.

Definition at line 336 of file sources/includes/nvdsinfer_context.h.

◆ overwriteIpTensor

| int _NvDsInferContextInitParams::overwriteIpTensor |

Boolean flag indicating whether or not to overwrite the inference input buffer with raw input tensor data provided by the user.

Definition at line 445 of file sources/includes/nvdsinfer_context.h.

◆ overwriteOpTensor

| int _NvDsInferContextInitParams::overwriteOpTensor |

Boolean flag indicating whether or not to overwrite the output buffers with raw output tensor data provided by the user.

Definition at line 453 of file sources/includes/nvdsinfer_context.h.

◆ perClassDetectionParams

| NvDsInferDetectionParams * _NvDsInferContextInitParams::perClassDetectionParams |

Holds per-class detection parameters.

The array's size must be equal to numDetectedClasses.

Definition at line 327 of file sources/includes/nvdsinfer_context.h.

◆ protoFilePath

| char _NvDsInferContextInitParams::protoFilePath |

Holds the pathname of the prototxt file.

Definition at line 251 of file sources/includes/nvdsinfer_context.h.

◆ segmentationOutputOrder

| NvDsInferTensorOrder _NvDsInferContextInitParams::segmentationOutputOrder |

Holds output order for segmentation network.

Definition at line 413 of file sources/includes/nvdsinfer_context.h.

◆ segmentationThreshold

| float _NvDsInferContextInitParams::segmentationThreshold |

Definition at line 333 of file sources/includes/nvdsinfer_context.h.

◆ tltEncodedModelFilePath

| char _NvDsInferContextInitParams::tltEncodedModelFilePath |

Holds the pathname of the TLT encoded model file.

Definition at line 259 of file sources/includes/nvdsinfer_context.h.

◆ tltModelKey

| char _NvDsInferContextInitParams::tltModelKey |

Holds the string key for decoding the TLT encoded model.

Definition at line 281 of file sources/includes/nvdsinfer_context.h.

◆ uffDimsCHW

| NvDsInferDimsCHW _NvDsInferContextInitParams::uffDimsCHW |

Holds the input dimensions for the UFF model.

Definition at line 269 of file sources/includes/nvdsinfer_context.h.

◆ uffFilePath

| char _NvDsInferContextInitParams::uffFilePath |

Holds the pathname of the UFF model file.

Definition at line 255 of file sources/includes/nvdsinfer_context.h.

◆ uffInputBlobName

| char _NvDsInferContextInitParams::uffInputBlobName |

Holds the name of the input layer for the UFF model.

Definition at line 275 of file sources/includes/nvdsinfer_context.h.

◆ uffInputOrder

| NvDsInferTensorOrder _NvDsInferContextInitParams::uffInputOrder |

Holds the original input order for the UFF model.

Definition at line 273 of file sources/includes/nvdsinfer_context.h.

◆ uniqueID

| unsigned int _NvDsInferContextInitParams::uniqueID |

Holds a unique identifier for the instance.

This can be used to identify the instance that is generating log and error messages.

Definition at line 245 of file sources/includes/nvdsinfer_context.h.

◆ useDBScan

| int _NvDsInferContextInitParams::useDBScan |

Deprecated; use NvDsInferClusterMode instead. Holds a Boolean; true if DBScan is to be used for object clustering, or false if OpenCV groupRectangles is to be used.

Definition at line 320 of file sources/includes/nvdsinfer_context.h.

◆ useDLA

| int _NvDsInferContextInitParams::useDLA |

Holds a Boolean; true if DLA is to be used.

Definition at line 359 of file sources/includes/nvdsinfer_context.h.

◆ useStronglyTyped

| int _NvDsInferContextInitParams::useStronglyTyped |

Boolean flag indicating whether to enable strongly typed network mode.

When enabled, tensor data types are inferred from network input types and operator type specification following TensorRT type inference rules. This corresponds to the --stronglyTyped flag in trtexec.

Definition at line 464 of file sources/includes/nvdsinfer_context.h.

◆ warmupEngine

| int _NvDsInferContextInitParams::warmupEngine |

Boolean flag indicating whether to run TensorRT engine warmup during initialization.

When enabled, dummy inference is run for batch sizes 1 through maxBatchSize to pre-warm TRT's internal CUDA kernel caches and execution graphs. This eliminates the latency penalty that occurs when the first real inference arrives with a previously unseen batch size (partial batch), which can cause persistent latency accumulation in streaming pipelines.

Definition at line 484 of file sources/includes/nvdsinfer_context.h.
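
The warmup pass described above amounts to one dummy run per batch size. The following is a rough sketch of that loop only; it is not the library's implementation. `warmUp` is a hypothetical function, and the `runDummyInference` callback stands in for whatever actually enqueues a dummy batch.

```cpp
#include <cassert>
#include <functional>

// Calls runDummyInference(batchSize) once for each batch size from 1 through
// maxBatchSize and returns how many of those calls reported success.
unsigned int warmUp(unsigned int maxBatchSize,
                    const std::function<bool(unsigned int)>& runDummyInference) {
    unsigned int succeeded = 0;
    for (unsigned int batch = 1; batch <= maxBatchSize; ++batch) {
        if (runDummyInference(batch)) {
            ++succeeded;
        }
    }
    return succeeded;
}
```

Covering every size up to maxBatchSize is what lets a later partial batch hit an already warmed path, at the cost of a longer initialization.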

◆ workspaceSize

| unsigned int _NvDsInferContextInitParams::workspaceSize |

Maximum workspace size, in MB, used as the TensorRT build setting for the CUDA engine.

Definition at line 383 of file sources/includes/nvdsinfer_context.h.

The documentation for this struct was generated from the following file: