Detailed Description

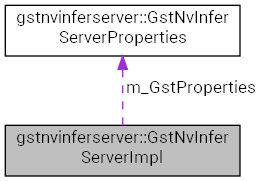

Implementation class of the nvinferserver element.

Definition at line 142 of file sources/gst-plugins/gst-nvinferserver/gstnvinferserver_impl.h.

Public Member Functions

| Return type | Member | Description |
|---|---|---|
| | GstNvInferServerImpl (GstNvInferServer *infer) | Constructor; registers the handle of the parent GStreamer element. |
| | ~GstNvInferServerImpl () | Destructor; does nothing. |
| void | setRawoutputCb (RawOutputCallback cb) | Saves the callback function pointer for the raw tensor output. |
| NvDsInferStatus | start () | Reads the configuration file and sets up the processing context. |
| NvDsInferStatus | stop () | Deletes the inference context. |
| NvDsInferStatus | processBatchMeta (NvDsBatchMeta *batchMeta, NvBufSurface *inSurf, uint64_t seqId, void *gstBuf) | Submits the input batch for inference. |
| NvDsInferStatus | sync () | Waits for the output thread to finish processing queued operations. |
| NvDsInferStatus | queueOperation (FuncItem func) | Queues the inference-done operation for the request to the output thread. |
| bool | addTrackingSource (uint32_t sourceId) | Adds a new source to the object history structure. |
| void | eraseTrackingSource (uint32_t sourceId) | Removes a source from the object history structure. |
| void | resetIntervalCounter () | Resets the inference interval counter used in frame process mode to 0. |
| uint32_t | uniqueId () const | |
| const std::string & | classifierType () const | |
| uint32_t | maxBatchSize () const | |
| nvtxDomainHandle_t | nvtxDomain () | |
| bool | isAsyncMode () const | |
| bool | canSupportGpu (int gpuId) const | |
| NvDsInferStatus | lastError () const | |
| const ic::PluginControl & | config () const | |
| void | updateInterval (guint interval) | |

Data Fields

| Type | Field |
|---|---|
| GstNvInferServerProperties | m_GstProperties |

Constructor & Destructor Documentation

◆ GstNvInferServerImpl()

| gstnvinferserver::GstNvInferServerImpl::GstNvInferServerImpl | ( | GstNvInferServer * | infer | ) |

Constructor; registers the handle of the parent GStreamer element.

- Parameters
  - [in] infer: Pointer to the nvinferserver GStreamer element.

◆ ~GstNvInferServerImpl()

| gstnvinferserver::GstNvInferServerImpl::~GstNvInferServerImpl | ( | ) |

Destructor; does nothing.

Member Function Documentation

◆ addTrackingSource()

| bool gstnvinferserver::GstNvInferServerImpl::addTrackingSource | ( | uint32_t | sourceId | ) |

Adds a new source to the object history structure.

Whenever a new source is added to the pipeline, the corresponding source ID is captured from the GStreamer event on the sink pad, and the object history for this source is initialized.

- Parameters
  - [in] sourceId: Source ID.

◆ canSupportGpu()

| bool gstnvinferserver::GstNvInferServerImpl::canSupportGpu | ( | int | gpuId | ) | const |

◆ classifierType()

| const std::string & gstnvinferserver::GstNvInferServerImpl::classifierType | ( | ) | const | inline |

Definition at line 349 of file sources/gst-plugins/gst-nvinferserver/gstnvinferserver_impl.h.

References gstnvinferserver::GstNvInferServerProperties::classifierType, and m_GstProperties.

◆ config()

| const ic::PluginControl & gstnvinferserver::GstNvInferServerImpl::config | ( | ) | const | inline |

Definition at line 357 of file sources/gst-plugins/gst-nvinferserver/gstnvinferserver_impl.h.

◆ eraseTrackingSource()

| void gstnvinferserver::GstNvInferServerImpl::eraseTrackingSource | ( | uint32_t | sourceId | ) |

Removes a source from the object history structure.

Whenever a source is removed from the pipeline, the corresponding source ID is captured from the GStreamer event on the sink pad, and the object history for this source is deleted.

- Parameters
  - [in] sourceId: Source ID.
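A per-source object history like the one addTrackingSource() and eraseTrackingSource() maintain can be sketched as a map keyed by source ID. This is not the plugin's code: `TrackingHistory` and `ObjectRecord` are hypothetical types, and the meaning given to the `bool` return value (here, `false` when the source is already tracked) is an assumption, since this page does not document it.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <unordered_map>
#include <vector>

// Hypothetical per-object record; the plugin's real history type is not shown here.
struct ObjectRecord {
    uint64_t objectId;
    uint64_t lastInferFrame;
};

// Hypothetical per-source history keyed by source ID.
class TrackingHistory {
public:
    // Like addTrackingSource(): create an empty history for a new source.
    // Assumption: returns false if the source is already being tracked.
    bool addTrackingSource(uint32_t sourceId) {
        return sources_.emplace(sourceId, std::vector<ObjectRecord>{}).second;
    }

    // Like eraseTrackingSource(): drop all history kept for a removed source.
    void eraseTrackingSource(uint32_t sourceId) { sources_.erase(sourceId); }

    bool isTracked(uint32_t sourceId) const { return sources_.count(sourceId) != 0; }
    std::size_t sourceCount() const { return sources_.size(); }

private:
    std::unordered_map<uint32_t, std::vector<ObjectRecord>> sources_;
};
```

A hash map keeps both operations O(1) on average, which suits sources being added and removed while the pipeline is running.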

◆ isAsyncMode()

| bool gstnvinferserver::GstNvInferServerImpl::isAsyncMode | ( | ) | const |

◆ lastError()

| NvDsInferStatus gstnvinferserver::GstNvInferServerImpl::lastError | ( | ) | const |

◆ maxBatchSize()

| uint32_t gstnvinferserver::GstNvInferServerImpl::maxBatchSize | ( | ) | const |

◆ nvtxDomain()

| nvtxDomainHandle_t gstnvinferserver::GstNvInferServerImpl::nvtxDomain | ( | ) | inline |

Definition at line 351 of file sources/gst-plugins/gst-nvinferserver/gstnvinferserver_impl.h.

◆ processBatchMeta()

| NvDsInferStatus gstnvinferserver::GstNvInferServerImpl::processBatchMeta | ( | NvDsBatchMeta * batchMeta, NvBufSurface * inSurf, uint64_t seqId, void * gstBuf | ) |

Submits the input batch for inference.

This function submits the input batch buffer for inference according to the configured processing mode: full-frame inference, inference on detected objects, or inference on attached input tensors.

- Parameters
  - [in,out] batchMeta: NvDsBatchMeta associated with the input buffer.
  - [in] inSurf: Input batch buffer.
  - [in] seqId: Sequence number of the input batch.
  - [in] gstBuf: Pointer to the input GStreamer buffer.
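The three processing modes described for processBatchMeta() differ mainly in what counts as one inference input. The sketch below is a deliberately simplified model using hypothetical types (`ProcessMode`, `FrameInfo`, `countInferenceInputs`); it ignores NvDsBatchMeta and NvBufSurface entirely and assumes at most one attached tensor per frame, which may not match how tensors are actually attached.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical enum naming the three modes described for processBatchMeta().
enum class ProcessMode { FullFrame, Objects, InputTensors };

// Hypothetical, heavily simplified view of one frame in a batch.
struct FrameInfo {
    std::size_t numObjects;  // detections produced upstream for this frame
    bool hasInputTensor;     // assumption: at most one attached tensor per frame
};

// Counts how many inference inputs one batch yields in each mode.
std::size_t countInferenceInputs(const std::vector<FrameInfo>& batch, ProcessMode mode) {
    std::size_t inputs = 0;
    for (const FrameInfo& f : batch) {
        switch (mode) {
            case ProcessMode::FullFrame:
                inputs += 1;  // the whole frame is one input
                break;
            case ProcessMode::Objects:
                inputs += f.numObjects;  // one input per detected object
                break;
            case ProcessMode::InputTensors:
                inputs += f.hasInputTensor ? 1 : 0;  // only frames carrying a tensor
                break;
        }
    }
    return inputs;
}
```

The point of the model is that the same batch can produce very different amounts of inference work depending on the configured mode.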

◆ queueOperation()

| NvDsInferStatus gstnvinferserver::GstNvInferServerImpl::queueOperation | ( | FuncItem | func | ) |

Queues the inference-done operation for the request to the output thread.

◆ resetIntervalCounter()

| void gstnvinferserver::GstNvInferServerImpl::resetIntervalCounter | ( | ) |

Resets the inference interval counter used in frame process mode to 0.
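The frame-mode interval behind resetIntervalCounter() and updateInterval() can be sketched as a counter gate. `IntervalGate` is hypothetical, and so are its semantics: it assumes an interval of N means "infer one batch, then skip the next N", which this page does not spell out.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical interval gate for frame process mode.
// Assumption: interval N = infer one batch, then skip the next N batches.
class IntervalGate {
public:
    explicit IntervalGate(uint32_t interval) : interval_(interval) {}

    // Like updateInterval(): change how many batches are skipped.
    void updateInterval(uint32_t interval) { interval_ = interval; }

    // Like resetIntervalCounter(): the next batch will be inferred.
    void resetIntervalCounter() { counter_ = 0; }

    // Returns true if this batch should be inferred, then advances the counter.
    bool shouldInfer() {
        const bool infer = (counter_ == 0);
        // Wrap back to 0 after skipping `interval_` batches.
        counter_ = (counter_ >= interval_) ? 0 : counter_ + 1;
        return infer;
    }

private:
    uint32_t interval_;
    uint32_t counter_ = 0;
};
```

With an interval of 2 the gate yields infer, skip, skip, infer, and so on; resetting the counter forces the very next batch to be inferred regardless of where the cycle was.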

◆ setRawoutputCb()

| void gstnvinferserver::GstNvInferServerImpl::setRawoutputCb | ( | RawOutputCallback | cb | ) | inline |

Saves the callback function pointer for the raw tensor output.

- Parameters
  - [in] cb: Pointer to the callback function.

Definition at line 264 of file sources/gst-plugins/gst-nvinferserver/gstnvinferserver_impl.h.
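Saving a callback for raw tensor output, as setRawoutputCb() does, can be sketched as below. The real `RawOutputCallback` signature is not shown on this page, so `RawOutputFn`, `RawTensor`, and `RawOutputSink` are hypothetical stand-ins, and `std::function` is used instead of a plain function pointer purely for illustration. The sketch only shows the pattern: store the callback once, invoke it later, and skip it when it was never set.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <string>
#include <utility>
#include <vector>

// Hypothetical tensor view; not the DeepStream tensor type.
struct RawTensor {
    std::string name;
    std::vector<float> data;
};

// Hypothetical callback signature: receives all output tensors of one batch.
using RawOutputFn = std::function<void(const std::vector<RawTensor>&)>;

class RawOutputSink {
public:
    // Like setRawoutputCb(): save the callback for later use.
    void setRawoutputCb(RawOutputFn cb) { cb_ = std::move(cb); }

    // Invoked when a batch completes; does nothing if no callback was saved.
    void onBatchDone(const std::vector<RawTensor>& outputs) const {
        if (cb_) cb_(outputs);
    }

private:
    RawOutputFn cb_;
};
```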

◆ start()

| NvDsInferStatus gstnvinferserver::GstNvInferServerImpl::start | ( | ) |

Reads the configuration file and sets up the processing context.

This function reads the configuration file and validates the user-provided configuration. Settings from the configuration file are overridden by any values set through element properties. It then creates the inference context, initializes it, and starts the output thread. Depending on the configuration, the inference context is either InferGrpcContext (Triton Inference Server in gRPC mode) or InferTrtISContext (Triton Inference Server in C-API mode). The object history is initialized for source 0.
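The rule in start() that element properties override configuration-file settings can be sketched with `std::optional`, where an empty optional means the property was never set by the user. The `FileConfig`, `ElementProps`, and `applyOverrides` names and the chosen fields are hypothetical; the sketch requires C++17.

```cpp
#include <cassert>
#include <cstdint>
#include <optional>

// Hypothetical subset of settings read from the configuration file.
struct FileConfig {
    uint32_t uniqueId = 1;
    uint32_t maxBatchSize = 1;
    uint32_t interval = 0;
};

// Hypothetical element properties; std::nullopt means "not set by the user".
struct ElementProps {
    std::optional<uint32_t> uniqueId;
    std::optional<uint32_t> maxBatchSize;
    std::optional<uint32_t> interval;
};

// Any property that was explicitly set wins over the file value.
FileConfig applyOverrides(FileConfig cfg, const ElementProps& props) {
    if (props.uniqueId) cfg.uniqueId = *props.uniqueId;
    if (props.maxBatchSize) cfg.maxBatchSize = *props.maxBatchSize;
    if (props.interval) cfg.interval = *props.interval;
    return cfg;
}
```

Tracking "was this set?" separately from the value matters: a property explicitly set to the same value as a default must still be able to override the file.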

◆ stop()

| NvDsInferStatus gstnvinferserver::GstNvInferServerImpl::stop | ( | ) |

Deletes the inference context.

This function waits for the output thread to finish, then de-initializes the inference context and clears the object history.

◆ sync()

| NvDsInferStatus gstnvinferserver::GstNvInferServerImpl::sync | ( | ) |

Waits for the output thread to finish processing queued operations.
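The queue-then-sync contract described for queueOperation() and sync() can be pictured with a small producer/consumer sketch. It is not the plugin's implementation: `OutputQueue` is a hypothetical class, and `FuncItem` is assumed here to be a `std::function<void()>`, which this page does not confirm. A single worker thread runs queued items in FIFO order, and `sync()` blocks until the queue is empty and no item is still executing.

```cpp
#include <cassert>
#include <condition_variable>
#include <deque>
#include <functional>
#include <mutex>
#include <thread>
#include <utility>

// Hypothetical stand-in for an output thread; not the DeepStream implementation.
class OutputQueue {
public:
    using FuncItem = std::function<void()>;  // assumption: a no-argument callable

    OutputQueue() : worker_([this] { run(); }) {}

    ~OutputQueue() {
        {
            std::lock_guard<std::mutex> lock(mu_);
            quit_ = true;
        }
        cv_.notify_all();
        worker_.join();  // the worker drains any remaining items before exiting
    }

    // Like queueOperation(): hand an operation to the output thread.
    void queueOperation(FuncItem func) {
        {
            std::lock_guard<std::mutex> lock(mu_);
            items_.push_back(std::move(func));
        }
        cv_.notify_all();
    }

    // Like sync(): block until every queued operation has finished running.
    void sync() {
        std::unique_lock<std::mutex> lock(mu_);
        idleCv_.wait(lock, [this] { return items_.empty() && !busy_; });
    }

private:
    void run() {
        std::unique_lock<std::mutex> lock(mu_);
        for (;;) {
            cv_.wait(lock, [this] { return quit_ || !items_.empty(); });
            if (items_.empty()) return;  // quit_ is set and nothing is left
            FuncItem func = std::move(items_.front());
            items_.pop_front();
            busy_ = true;
            lock.unlock();
            func();  // run the operation without holding the lock
            lock.lock();
            busy_ = false;
            if (items_.empty()) idleCv_.notify_all();
        }
    }

    std::mutex mu_;
    std::condition_variable cv_;      // signals new work or shutdown
    std::condition_variable idleCv_;  // signals that the queue has drained
    std::deque<FuncItem> items_;
    bool busy_ = false;
    bool quit_ = false;
    std::thread worker_;  // declared last so it starts after the members above exist
};
```

Running each item outside the mutex keeps `queueOperation()` from blocking while a long operation executes. The destructor draining the queue before joining loosely mirrors stop() waiting for the output thread before anything else is torn down.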

◆ uniqueId()

| uint32_t gstnvinferserver::GstNvInferServerImpl::uniqueId | ( | ) | const | inline |

Definition at line 348 of file sources/gst-plugins/gst-nvinferserver/gstnvinferserver_impl.h.

References m_GstProperties, and gstnvinferserver::GstNvInferServerProperties::uniqueId.

◆ updateInterval()

| void gstnvinferserver::GstNvInferServerImpl::updateInterval | ( | guint | interval | ) | inline |

Definition at line 358 of file sources/gst-plugins/gst-nvinferserver/gstnvinferserver_impl.h.

Field Documentation

◆ m_GstProperties

| GstNvInferServerProperties gstnvinferserver::GstNvInferServerImpl::m_GstProperties |

Definition at line 549 of file sources/gst-plugins/gst-nvinferserver/gstnvinferserver_impl.h.

Referenced by classifierType(), and uniqueId().
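The inline accessors that reference m_GstProperties follow a plain forwarding pattern. In the sketch below, `PropertiesSketch` and `ImplSketch` are hypothetical stand-ins that mirror only the two documented references (uniqueId and classifierType); the real GstNvInferServerProperties and GstNvInferServerImpl definitions are not reproduced here.

```cpp
#include <cassert>
#include <cstdint>
#include <string>

// Hypothetical subset of a properties struct with the two referenced fields.
struct PropertiesSketch {
    uint32_t uniqueId = 0;
    std::string classifierType;
};

class ImplSketch {
public:
    // Inline const accessors that simply forward to the stored properties.
    uint32_t uniqueId() const { return m_GstProperties.uniqueId; }
    const std::string& classifierType() const { return m_GstProperties.classifierType; }

    PropertiesSketch m_GstProperties;  // public data field, as in the documented class
};
```

Because classifierType() returns a const reference, a caller holding that reference sees later changes to the stored property rather than a snapshot.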

The documentation for this class was generated from the following file: