Models#

This section provides a brief overview of TTS models that NeMo’s TTS collection currently supports.

Model Recipes can be accessed through examples/tts/*.py.

Configuration Files can be found in examples/tts/conf/. For details on how TTS configuration files are structured, refer to the section NeMo TTS Configuration Files.

Pretrained Model Checkpoints are available for synthesizing speech out of the box or for fine-tuning on your own datasets. Refer to the section Checkpoints for instructions on how to use these pretrained models.
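As a rough illustration of using pretrained checkpoints, the sketch below chains a mel-spectrogram generator and a vocoder (FastPitch and HiFi-GAN, both described later in this section). The checkpoint names `tts_en_fastpitch` and `tts_en_hifigan` are assumptions here and should be checked against the Checkpoints section. The function name `synthesize` is hypothetical, and the code needs NeMo installed plus network access to download the checkpoints.

```python
def synthesize(text: str):
    """Return a 1-D NumPy waveform for `text` using pretrained NeMo checkpoints.

    Assumption: the checkpoint names below are available for download;
    verify them against the Checkpoints section before relying on them.
    """
    # Imported lazily so that defining this function does not require NeMo.
    from nemo.collections.tts.models import FastPitchModel, HifiGanModel

    # Stage 1: text -> mel-spectrogram.
    spec_generator = FastPitchModel.from_pretrained("tts_en_fastpitch").eval()
    # Stage 2: mel-spectrogram -> waveform.
    vocoder = HifiGanModel.from_pretrained("tts_en_hifigan").eval()

    tokens = spec_generator.parse(text)
    spectrogram = spec_generator.generate_spectrogram(tokens=tokens)
    audio = vocoder.convert_spectrogram_to_audio(spec=spectrogram)

    # Drop the batch dimension and move the result to host memory.
    return audio.detach().cpu().numpy()[0]
```

Calling `synthesize("Hello world.")` would return the waveform as an array, which can then be written to disk with any audio library at the sample rate the checkpoint was trained on.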

Mel-Spectrogram Generators#

FastPitch#

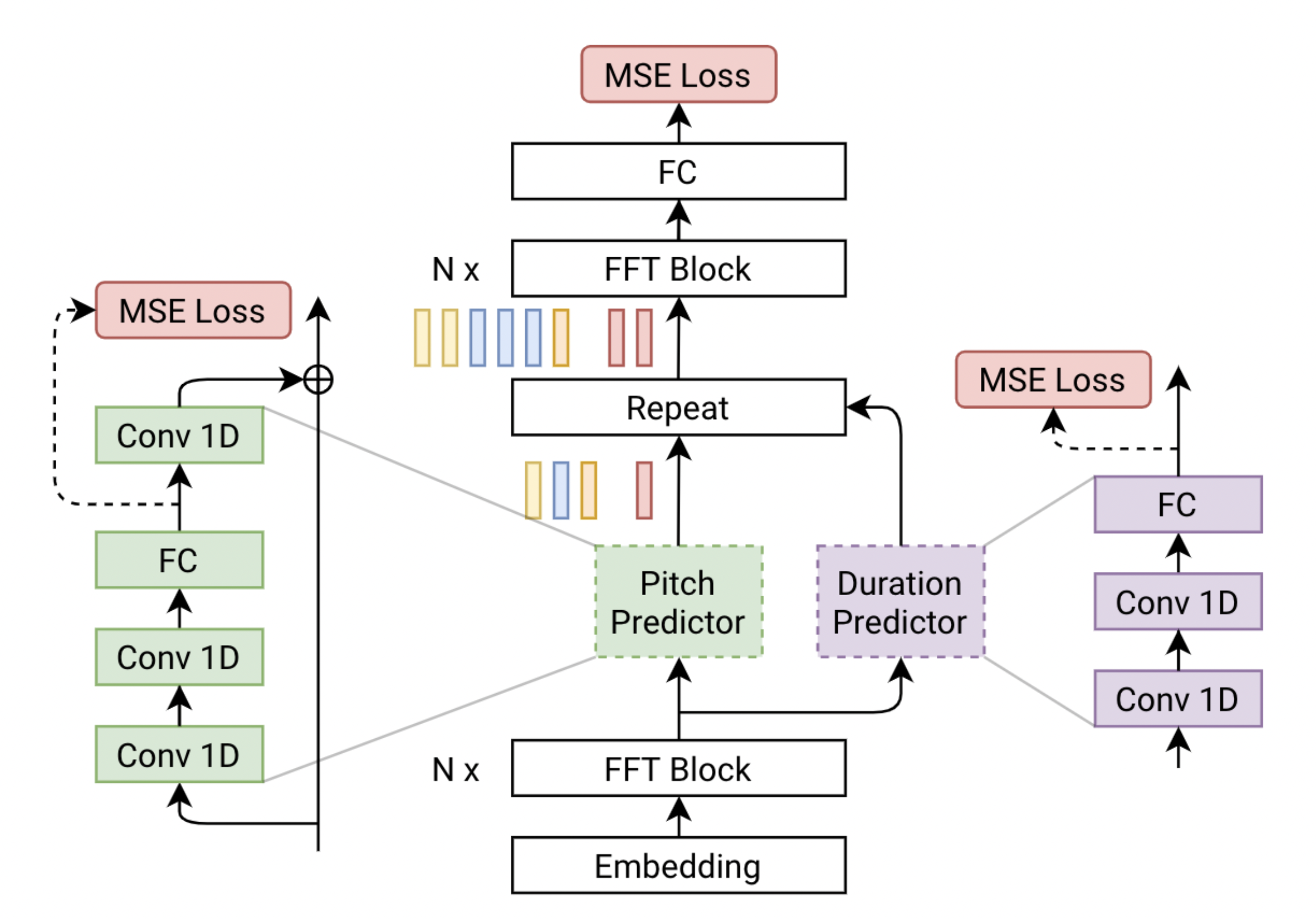

FastPitch is a fully-parallel text-to-speech synthesis model based on FastSpeech, conditioned on fundamental frequency contours. The model predicts pitch contours during inference. Altering these predictions can make the generated speech more expressive, better matched to the semantics of the utterance, and ultimately more engaging to the listener. Uniformly increasing or decreasing pitch with FastPitch generates speech that resembles the voluntary modulation of voice.

Conditioning on frequency contours improves the overall quality of synthesized speech, making it comparable to the state of the art. It does not introduce overhead, and FastPitch retains the favorable, fully-parallel Transformer architecture, with over 900x real-time factor for mel-spectrogram synthesis of a typical utterance.

The architecture of FastPitch is shown above. It is based on FastSpeech and consists of two feed-forward Transformer (FFTr) stacks. The first FFTr operates at the resolution of the input tokens, and the second at the resolution of the output frames. Please refer to [TTS-MODELS5] for details.
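The step from token resolution to frame resolution, and the effect of uniformly shifting pitch, can be sketched in plain Python. This is a minimal illustration, not NeMo's implementation: the function names `regulate_length` and `shift_pitch` are hypothetical, the input values are made up, and pitch is expressed in Hz for readability, whereas a real model works on tensors of normalized values.

```python
from typing import List, Sequence


def regulate_length(token_features: Sequence[float], durations: Sequence[int]) -> List[float]:
    """Repeat each token-level value for its predicted number of output frames."""
    if len(token_features) != len(durations):
        raise ValueError("one duration per input token is required")
    frames: List[float] = []
    for value, n_frames in zip(token_features, durations):
        frames.extend([value] * n_frames)
    return frames


def shift_pitch(pitch_hz: Sequence[float], semitones: float) -> List[float]:
    """Uniformly shift a pitch contour; unvoiced tokens (0.0 Hz) stay at 0.0."""
    factor = 2.0 ** (semitones / 12.0)
    return [p * factor if p > 0.0 else 0.0 for p in pitch_hz]


# Hypothetical per-token predictions for a four-token utterance.
predicted_pitch_hz = [110.0, 0.0, 130.0, 120.0]
predicted_durations = [3, 1, 4, 2]  # output frames per token

raised = shift_pitch(predicted_pitch_hz, semitones=12.0)  # one octave up
frame_level_pitch = regulate_length(raised, predicted_durations)

print(len(frame_level_pitch))  # 10 frames = 3 + 1 + 4 + 2
print(frame_level_pitch[:4])   # [220.0, 220.0, 220.0, 0.0]
```

Because the shift is applied to the token-level predictions before expansion, every frame belonging to a token inherits the modified value, which is what makes a uniform change to the contour cheap to apply.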

SSL FastPitch#

This experimental version of FastPitch takes in content and speaker embeddings generated by an SSL Disentangler and generates mel-spectrograms, with the goal that voice characteristics are taken from the speaker embedding while the content of speech is determined by the content embedding. Voice conversion can be done using this model by swapping the speaker embedding input to that of a target speaker, while keeping the content embedding the same. More details to come.

End-to-End LLM-based TTS#

MagpieTTS#

MagpieTTS is an encoder-decoder transformer TTS model that operates on discrete audio tokens from a neural audio codec. It uses monotonic alignment (CTC loss and attention priors) to reduce hallucinations and supports voice cloning via audio or text context conditioning. For architecture, training, inference, and preference optimization (DPO/GRPO), see the Magpie-TTS documentation. To adapt a pretrained checkpoint to new speakers or new languages, see Magpie-TTS Finetuning.

Vocoders#

HiFiGAN#

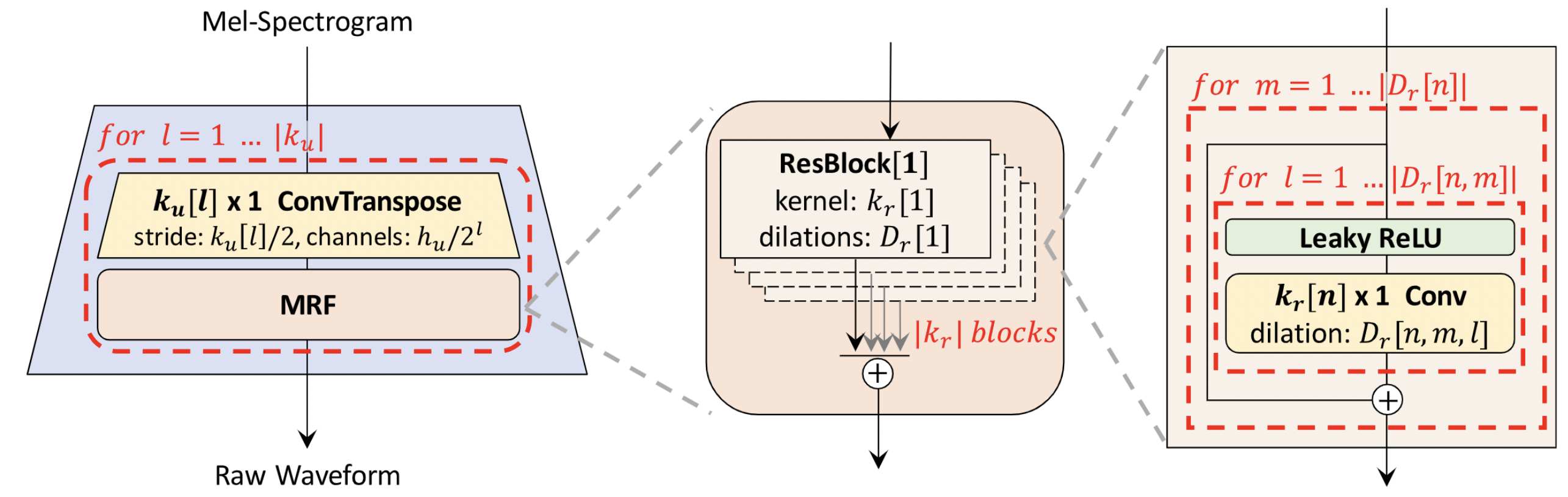

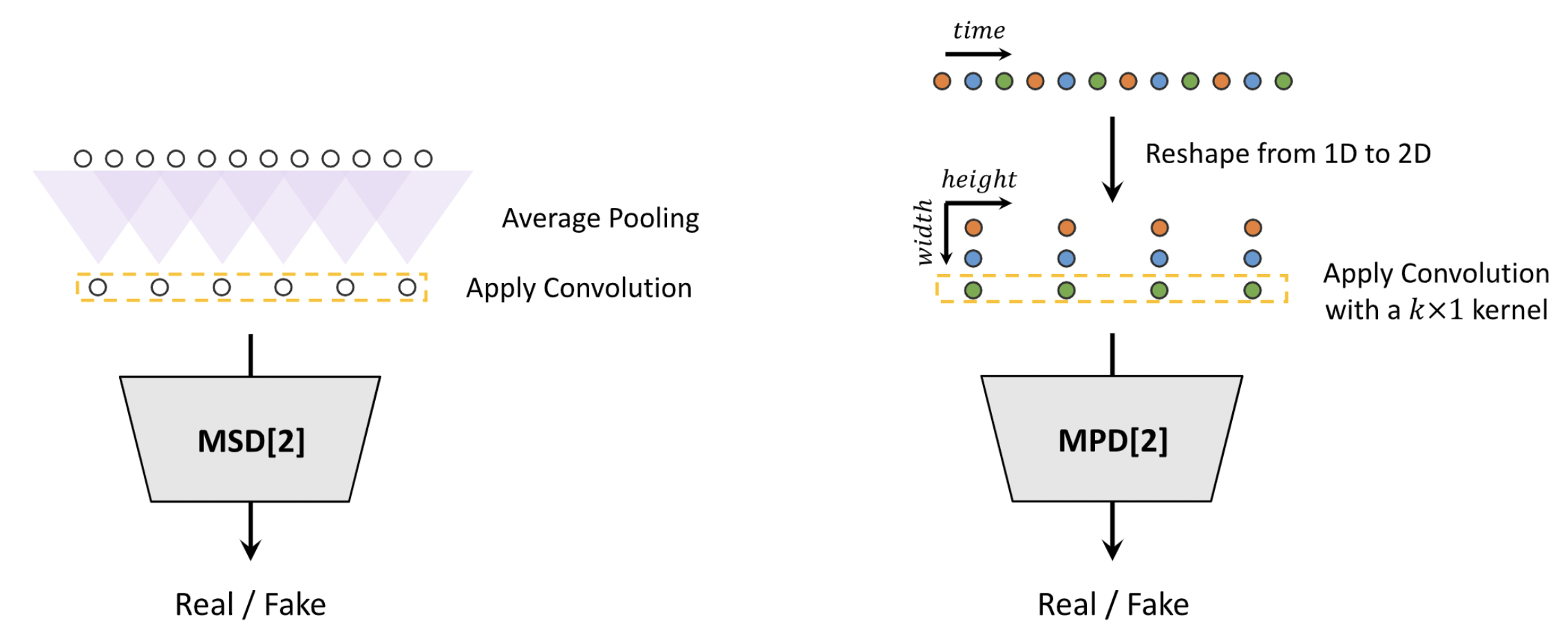

HiFi-GAN is a vocoder designed to efficiently synthesize raw audio waveforms from intermediate mel-spectrograms. It consists of one generator and two discriminators (multi-scale and multi-period). The generator and discriminators are trained adversarially, with two additional losses that improve training stability and model performance.

The generator is a fully convolutional neural network that takes a mel-spectrogram as input and upsamples it through transposed convolutions until the length of the output sequence matches the temporal resolution of the raw waveform. Every transposed convolution is followed by a multi-receptive field fusion (MRF) module. The architecture of the generator is shown above.

The multi-period discriminator (MPD) is a mixture of sub-discriminators, each of which accepts only equally spaced samples of the input audio. By looking at different parts of the input, the sub-discriminators are designed to capture different implicit structures from one another. Because MPD accepts only disjoint samples, a multi-scale discriminator (MSD) is added to evaluate the audio sequence consecutively. MSD is a mixture of 3 sub-discriminators operating on different input scales (raw audio, x2 average-pooled audio, and x4 average-pooled audio).

HiFi-GAN achieves both higher computational efficiency and higher sample quality than the best publicly available autoregressive or flow-based models, such as WaveNet and WaveGlow. Please refer to [TTS-MODELS2] for details.
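The input views seen by the two discriminators, and the length arithmetic of the generator, can be sketched as follows. This is a simplified illustration under stated assumptions: the function names are hypothetical, the upsampling rates `[8, 8, 2, 2]` are example values whose product gives 256 samples per frame, the ragged tail is dropped where a real implementation would pad, and non-overlapping averaging stands in for the pooling layers used in practice.

```python
from typing import List, Sequence


def generator_output_length(n_frames: int, upsample_rates: Sequence[int]) -> int:
    """Each transposed convolution multiplies the sequence length by its rate."""
    length = n_frames
    for rate in upsample_rates:
        length *= rate
    return length


def split_by_period(audio: Sequence[float], period: int) -> List[List[float]]:
    """MPD view: row k holds samples k, k + period, k + 2 * period, ..."""
    usable = len(audio) - len(audio) % period  # drop the ragged tail
    return [list(audio[offset:usable:period]) for offset in range(period)]


def average_pool(audio: Sequence[float], factor: int) -> List[float]:
    """MSD view: a coarser copy of the same consecutive waveform."""
    usable = len(audio) - len(audio) % factor
    return [sum(audio[i:i + factor]) / factor for i in range(0, usable, factor)]


audio = [float(i) for i in range(12)]

print(generator_output_length(80, [8, 8, 2, 2]))  # 20480 samples from 80 frames
print(split_by_period(audio, 3))
# [[0.0, 3.0, 6.0, 9.0], [1.0, 4.0, 7.0, 10.0], [2.0, 5.0, 8.0, 11.0]]
print(average_pool(audio, 2))  # [0.5, 2.5, 4.5, 6.5, 8.5, 10.5]
print(average_pool(audio, 4))  # [1.5, 5.5, 9.5]
```

The period split yields disjoint subsequences, so each sub-discriminator sees a different slice of the signal, while the pooled copies keep neighboring samples together at progressively coarser scales.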

Codecs#

Audio Codec#

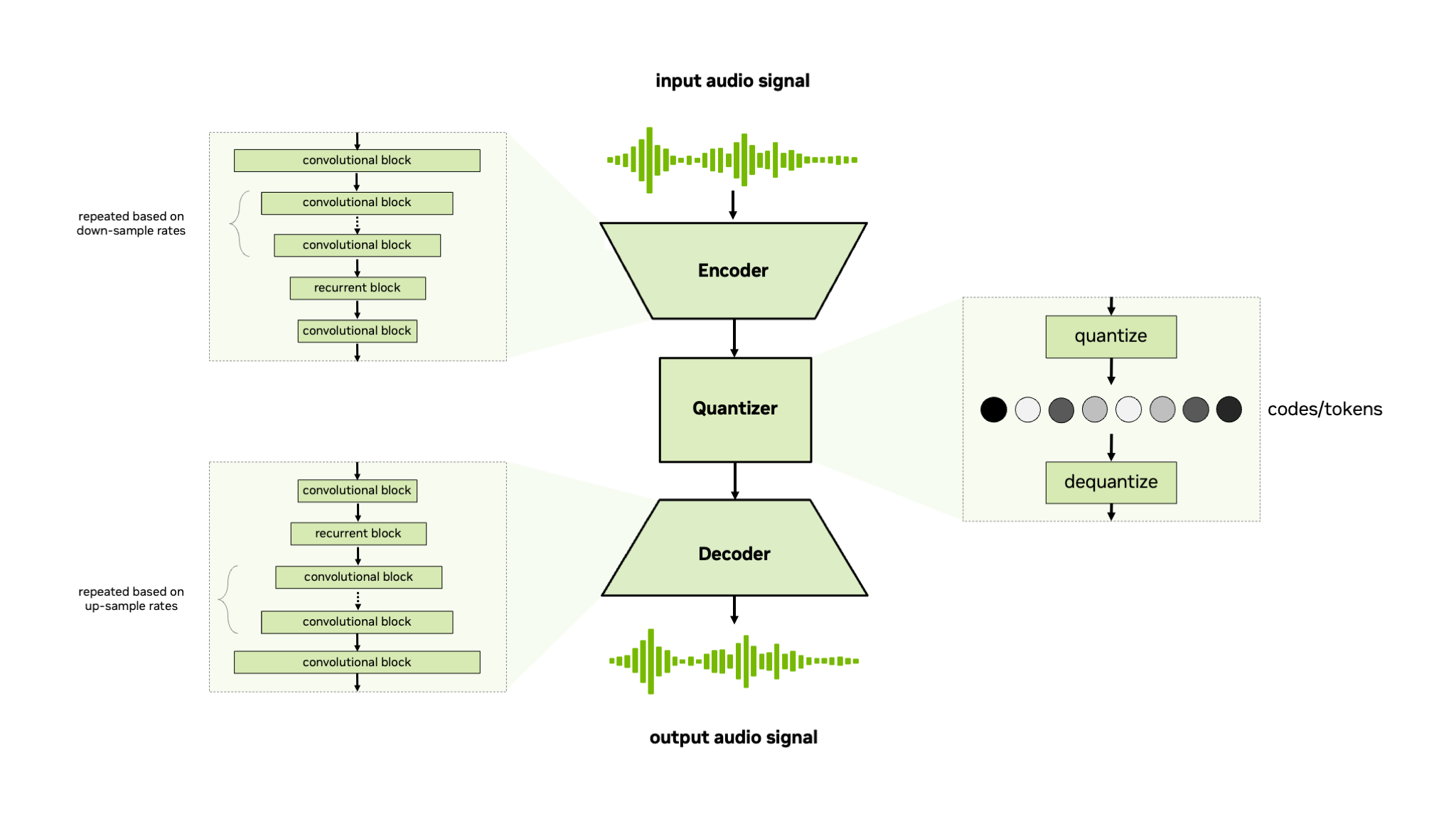

The NeMo Audio Codec model is a non-autoregressive convolutional encoder-quantizer-decoder model for coding or tokenization of raw audio signals or mel-spectrogram features.

The NeMo Audio Codec model supports residual vector quantizer (RVQ) [TTS-MODELS4] and finite scalar quantizer (FSQ) [TTS-MODELS3] for quantization of the encoder output.
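The difference between the two quantizers can be sketched on a single encoder output vector. This is a minimal illustration rather than the NeMo implementation: the function names, the tiny hand-written codebooks, and the input values are all hypothetical, and the FSQ variant shown handles only odd numbers of levels per dimension.

```python
import math
from typing import List, Sequence, Tuple

Vector = List[float]


def nearest(codebook: Sequence[Vector], x: Vector) -> int:
    """Index of the codebook entry closest to x in squared Euclidean distance."""
    return min(
        range(len(codebook)),
        key=lambda i: sum((c - v) ** 2 for c, v in zip(codebook[i], x)),
    )


def rvq_encode(x: Vector, codebooks: Sequence[Sequence[Vector]]) -> Tuple[List[int], Vector]:
    """RVQ: each stage quantizes the residual left over by the previous stages."""
    residual = list(x)
    reconstruction = [0.0] * len(x)
    indices: List[int] = []
    for codebook in codebooks:
        idx = nearest(codebook, residual)
        indices.append(idx)
        reconstruction = [r + c for r, c in zip(reconstruction, codebook[idx])]
        residual = [r - c for r, c in zip(residual, codebook[idx])]
    return indices, reconstruction


def fsq_encode(x: Vector, levels: Sequence[int]) -> List[int]:
    """FSQ: bound each dimension with tanh, then round to a fixed integer grid."""
    codes: List[int] = []
    for value, n_levels in zip(x, levels):
        half = (n_levels - 1) / 2  # assumes n_levels is odd
        codes.append(round(math.tanh(value) * half))
    return codes


# Two hypothetical codebooks: a coarse one, then a finer one for the residual.
codebooks = [
    [[0.0, 0.0], [1.0, 1.0], [-1.0, -1.0]],
    [[0.0, 0.0], [0.25, -0.25], [-0.25, 0.25]],
]
indices, reconstruction = rvq_encode([1.2, 0.8], codebooks)
print(indices)          # [1, 1]
print(reconstruction)   # [1.25, 0.75]

print(fsq_encode([0.1, -2.0, 0.9], levels=[5, 5, 5]))  # [0, -2, 1]
```

RVQ needs learned codebooks and emits one index per stage, whereas FSQ has no codebook to learn: the set of codes is fixed by the number of levels chosen for each dimension.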

This model is trained end-to-end using generative loss, discriminative loss, and reconstruction loss, similar to other neural audio codecs such as SoundStream [TTS-MODELS4] and EnCodec [TTS-MODELS1].

For further information, refer to the Audio Codec Training tutorial in the TTS tutorial section.

References#

[TTS-MODELS1] Alexandre Défossez, Jade Copet, Gabriel Synnaeve, and Yossi Adi. High fidelity neural audio compression. arXiv preprint arXiv:2210.13438, 2022.

[TTS-MODELS2] Jungil Kong, Jaehyeon Kim, and Jaekyoung Bae. HiFi-GAN: generative adversarial networks for efficient and high fidelity speech synthesis. Advances in Neural Information Processing Systems, 33:17022–17033, 2020.

[TTS-MODELS3] Fabian Mentzer, David Minnen, Eirikur Agustsson, and Michael Tschannen. Finite scalar quantization: VQ-VAE made simple. arXiv preprint arXiv:2309.15505, 2023.

[TTS-MODELS4] Neil Zeghidour, Alejandro Luebs, Ahmed Omran, Jan Skoglund, and Marco Tagliasacchi. SoundStream: an end-to-end neural audio codec. IEEE/ACM Transactions on Audio, Speech, and Language Processing, 30:495–507, 2022. doi:10.1109/TASLP.2021.3129994.

[TTS-MODELS5] Adrian Łańcucki. FastPitch: parallel text-to-speech with pitch prediction. In ICASSP 2021 - 2021 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 6588–6592. IEEE, 2021.