Flow Steering

Flow steering is a new model that steers network flows to specific QPs based on flow specifications. These flows can be either unicast or multicast. To maintain flexibility, domains and priorities are used. Flow steering is built around the flow attribute, which is a combination of L2-L4 flow specifications, a destination QP and a priority. Flow steering rules may be inserted either by using ethtool or by using InfiniBand verbs. The verbs abstraction uses its own terminology: a flow attribute (struct ibv_exp_flow_attr) is defined by a combination of specifications (struct ibv_exp_flow_spec_*).

Applicable to ConnectX®-3 and ConnectX®-3 Pro adapter cards only.

In ConnectX®-4 and ConnectX®-4 Lx adapter cards, Flow Steering is automatically enabled as of MLNX_OFED v3.1-x.0.0.

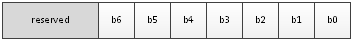

Flow steering is generally enabled when the log_num_mgm_entry_size module parameter is non-positive (e.g., -log_num_mgm_entry_size). In that case, the absolute value of the parameter is a bit field. Each bit indicates a condition or an option regarding the flow steering mechanism:

| Bit | Operation | Description |
|---|---|---|
| b0 | Force device managed Flow Steering | When set to 1, it forces HCA to be enabled regardless of whether NC-SI Flow Steering is supported or not. |
| b1 | Disable IPoIB Flow Steering | When set to 1, it disables the support of IPoIB Flow Steering. This bit should be set to 1 when "b2- Enable A0 static DMFS steering" is used (see "A0 Static Device Managed Flow Steering"). |
| b2 | Enable A0 static DMFS steering (see "A0 Static Device Managed Flow Steering") | When set to 1, A0 static DMFS steering is enabled. This bit should be set to 0 when "b1- Disable IPoIB Flow Steering" is 0. |
| b3 | Enable DMFS only if the HCA supports more than 64 QPs per MCG entry | When set to 1, DMFS is enabled only if the HCA supports more than 64 QPs attached to the same rule. For example, attaching 64 VFs to the same multicast address causes 64 QPs to be attached to the same MCG. If the HCA supports less than 64 QPs per MCG, B0 is used. |
| b4 | Optimize IPoIB steering table for non-source IP rules when possible | When set to 1, IPoIB steering table will be optimized to support rules ignoring source IP check. This optimization is available only when IPoIB Flow Steering is set. |
| b5 | Optimize the steering table for non-source IP rules when possible | When set to 1, steering table will be optimized to support rules ignoring source IP check. This optimization is possible only when DMFS mode is set. |
| b6 | Enable/disable VXLAN offloads | When set to 1, VXLAN offloads will be disabled. When VXLAN offloads are disabled, ethtool -k will display: tx-udp_tnl-segmentation: off [fixed]; tx-udp_tnl-csum-segmentation: off [fixed]; tx-gso-partial: off [fixed] |

For example, a value of (-7) means:

- Forcing Flow Steering regardless of NC-SI Flow Steering support
- Disabling IPoIB Flow Steering support
- Enabling A0 static DMFS steering
- Steering table is not optimized for rules ignoring source IP check

The default value of log_num_mgm_entry_size is (-10). This means that Ethernet Flow Steering is enabled by default (IPoIB DMFS is disabled by default) if NC-SI DMFS is supported and the HCA supports at least 64 QPs per MCG entry. Otherwise, L2 steering (B0) is used.
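The bit field arithmetic can be checked with a short script. The following is a minimal sketch, not part of the driver or of MLNX_OFED: the dictionary and the function name decode_log_num_mgm_entry_size are hypothetical, and the bit descriptions are copied from the table above. It treats a positive value as an error, which is an assumption made only to keep the sketch simple.

```python
# Bit meanings copied from the table in this section. The names below are
# illustrative only; they do not exist in the mlx4 driver or in any tool.
FLOW_STEERING_BITS = {
    0: "Force device managed Flow Steering",
    1: "Disable IPoIB Flow Steering",
    2: "Enable A0 static DMFS steering",
    3: "Enable DMFS only if the HCA supports more than 64 QPs per MCG entry",
    4: "Optimize IPoIB steering table for non-source IP rules when possible",
    5: "Optimize the steering table for non-source IP rules when possible",
    6: "Enable/disable VXLAN offloads",
}


def decode_log_num_mgm_entry_size(value):
    """Return the bit numbers set in the absolute value of the parameter."""
    if value > 0:
        # Assumption of this sketch: only non-positive values are bit fields.
        raise ValueError("a positive value is not a flow steering bit field")
    bits = -value
    return [bit for bit in sorted(FLOW_STEERING_BITS) if bits & (1 << bit)]


# Decode the two values discussed in the text.
for value in (-7, -10):
    names = [FLOW_STEERING_BITS[b] for b in decode_log_num_mgm_entry_size(value)]
    print(value, names)
```

For -7 the sketch reports bits b0, b1 and b2, and for the default -10 it reports b1 and b3, which agrees with the descriptions given in the text.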

When using SR-IOV, flow steering is enabled if there is an adequate amount of space to store the flow steering table for the guest/master.

To enable Flow Steering:

1. Open the /etc/modprobe.d/mlnx.conf file.
2. Set the parameter log_num_mgm_entry_size to a non-positive value by writing the option mlx4_core log_num_mgm_entry_size=<value>.
3. Restart the driver.
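As an illustration of step 2, the value can be composed from the individual bits rather than by hand. This is a rough sketch under stated assumptions: the helper flow_steering_option_line is hypothetical, it only builds the text of the configuration line and does not touch any file, and the check that b2 requires b1 follows the description of those bits in the table.

```python
def flow_steering_option_line(*bits):
    """Build the mlnx.conf option line for the given bit numbers (0 to 6).

    Hypothetical helper for illustration; it returns a string only.
    """
    value = 0
    for bit in bits:
        if not 0 <= bit <= 6:
            raise ValueError("unknown flow steering bit: %d" % bit)
        value |= 1 << bit
    # Per the bit table, b1 should be set whenever b2 (A0 static DMFS) is used.
    if value & (1 << 2) and not value & (1 << 1):
        raise ValueError("b2 requires b1 to be set as well")
    # The module parameter is the negated bit field.
    return "options mlx4_core log_num_mgm_entry_size=%d" % -value


print(flow_steering_option_line(0, 1, 2))
```

The returned line is what would be written to /etc/modprobe.d/mlnx.conf before restarting the driver.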

To disable Flow Steering:

1. Open the /etc/modprobe.d/mlnx.conf file.
2. Remove the line options mlx4_core log_num_mgm_entry_size=<value>.
3. Restart the driver.

Flow Steering is supported in ConnectX®-3, ConnectX®-3 Pro, ConnectX®-4 and ConnectX®-4 Lx adapter cards.

[For ConnectX®-3 and ConnectX®-3 Pro only] To determine which Flow Steering features are supported:

ethtool --show-priv-flags eth4

The following output will be received:

mlx4_flow_steering_ethernet_l2: on Creating Ethernet L2 (MAC) rules is supported

mlx4_flow_steering_ipv4: on Creating IPv4 rules is supported

mlx4_flow_steering_tcp: on Creating TCP/UDP rules is supported

For ConnectX-4 and ConnectX-4 Lx adapter cards, all supported features are enabled.

Flow Steering support in InfiniBand is determined according to the EXP_MANAGED_FLOW_STEERING flag.

A0 Static Device Managed Flow Steering

This mode enables fast steering; however, it might impact flexibility. Using it increases the packet rate performance by ~30%, with the following limitations for Ethernet link-layer unicast QPs:

- Limits the number of opened RSS Kernel QPs to 96. MACs should be unique (1 MAC per 1 QP). The number of VFs is limited.
- When creating Flow Steering rules for user QPs, only MAC --> QP rules are allowed. Both MACs and QPs should be unique between rules. Only 62 such rules can be created.
- When creating rules with ethtool, MAC --> QP rules can be used, where the QP must be the indirection (RSS) QP. Creating rules that indirect traffic to other rings is not allowed. Ethtool MAC rules to drop packets (action -1) are supported.
- RFS is not supported in this mode.
- VLAN is not supported in this mode.

ConnectX®-4 and ConnectX®-4 Lx adapter cards support only User Verbs domain with struct ibv_exp_flow_spec_eth flow specification using 4 priorities.

Flow steering defines the concept of domain and priority. Each domain represents a user agent that can attach a flow. The domains are prioritized. A higher priority domain will always supersede a lower priority domain when their flow specifications overlap. Setting a lower priority value will result in a higher priority.

In addition to the domain, there is a priority within each of the domains. Each domain can have at most 2^12 priorities in accordance with its needs.
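The precedence between overlapping rules can be pictured as a two-level comparison: the domain first, then the priority value inside the domain. The sketch below is only a model of that ordering and not the driver's implementation. The numeric domain ranks, the rule tuples and the function winning_rule are assumptions made for illustration; the domain names are the ones described in this section.

```python
# Domains in descending order of priority, as described in this section.
# The numeric ranks are an assumption of this sketch (lower rank wins).
DOMAIN_RANK = {
    "User Verbs": 0,
    "Ethtool": 1,
    "aRFS": 2,
    "All of the rest": 3,
}


def winning_rule(rules):
    """Pick the rule that takes precedence among overlapping rules.

    Each rule is a (domain, priority, name) tuple. The domain is compared
    first; within a domain, a lower priority value means a higher priority.
    """
    return min(rules, key=lambda rule: (DOMAIN_RANK[rule[0]], rule[1]))


overlapping = [
    ("Ethtool", 0, "ethtool rule at loc 0"),
    ("User Verbs", 5, "verbs rule, priority 5"),
    ("User Verbs", 2, "verbs rule, priority 2"),
]
print(winning_rule(overlapping))
```

In this model the User Verbs rule with priority value 2 is selected, since its domain outranks Ethtool and 2 is lower than 5.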

The following are the domains in descending order of priority:

User Verbs

The User Verbs domain allows a user application QP to be attached to a specified flow by using the ibv_exp_create_flow and ibv_exp_destroy_flow verbs.

ibv_exp_create_flow

struct ibv_exp_flow *ibv_exp_create_flow(struct ibv_qp *qp, struct ibv_exp_flow_attr *flow)

Input parameters:

struct ibv_qp - the attached QP.

struct ibv_exp_flow_attr - attaches the QP to the flow specified. The flow contains mandatory control parameters and optional L2, L3 and L4 headers. The optional headers are detected by setting the size and num_of_specs fields:

struct ibv_exp_flow_attr can be followed by the optional flow header structs:

- struct ibv_exp_flow_spec_ib
- struct ibv_exp_flow_spec_eth
- struct ibv_exp_flow_spec_ipv4
- struct ibv_exp_flow_spec_tcp_udp
- struct ibv_exp_flow_spec_ipv6

Note: ipv6 is applicable for ConnectX®-4 and ConnectX®-4 Lx only.

For further information, please refer to the ibv_exp_create_flow man page.

Warning: Be advised that as of MLNX_OFED v2.0-3.0.0, the parameters (both the value and the mask) should be set in big-endian format.

Each header struct holds the relevant network layer parameters for matching. To enforce the match, the user sets a mask for each parameter.

The mlx5 driver supports partial masks. The mlx4 driver supports the following masks:

- All one mask - include the parameter value in the attached rule. Note: Since the VLAN ID in the Ethernet header is 12 bits long, the following parameter should be used: flow_spec_eth.mask.vlan_tag = htons(0x0fff)
- All zero mask - ignore the parameter value in the attached rule
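The two mask types and the big-endian requirement can be illustrated outside of the verbs API. The following sketch models only the mask semantics: the functions to_big_endian_u16 and vlan_matches are hypothetical and do not reflect how the hardware or the driver performs the match. struct.pack with the "!H" format plays the role that htons() plays in C.

```python
import struct

# The VLAN ID occupies the low 12 bits of the 16-bit VLAN tag.
VLAN_ID_MASK = 0x0FFF


def to_big_endian_u16(value):
    """Pack a 16-bit value in network (big-endian) byte order."""
    return struct.pack("!H", value)


def vlan_matches(rule_tag, rule_mask, packet_tag):
    """Compare only the bits selected by the mask.

    An all-zero mask ignores the field, so every packet tag matches.
    """
    return (packet_tag & rule_mask) == (rule_tag & rule_mask)


# Bytes of the VLAN ID mask as they would be laid out in big-endian order.
print(to_big_endian_u16(VLAN_ID_MASK))

# A tag with VLAN ID 45 and non-zero upper (priority) bits.
tagged = (3 << 13) | 45
print(vlan_matches(45, VLAN_ID_MASK, tagged))
print(vlan_matches(45, 0x0000, 100))
```

With the 0x0fff mask, the upper bits of the tag are ignored and only the 12-bit VLAN ID takes part in the comparison.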

When setting the flow type to NORMAL, the incoming traffic will be steered according to the rule specifications. The ALL_DEFAULT and MC_DEFAULT rule options are valid only for the Ethernet link type, since InfiniBand link type packets always include a QP number.

For further information, please refer to the relevant man pages.

ibv_exp_destroy_flow

int ibv_exp_destroy_flow(struct ibv_exp_flow *flow_id)

Input parameters:

ibv_exp_destroy_flow requires struct ibv_exp_flow, which is the return value of ibv_exp_create_flow in case of success.

Output parameters:

Returns 0 on success, or the value of errno on failure.

For further information, please refer to the ibv_exp_destroy_flow man page.

Ethtool

The Ethtool domain is used to attach an RX ring, specifically its QP, to a specified flow. Please refer to the most recent ethtool man page for all the ways to specify a flow.

Examples:

ethtool -U eth5 flow-type ether dst 00:11:22:33:44:55 loc 5 action 2

All packets that contain the above destination MAC address are steered into rx-ring 2 (its underlying QP), with priority 5 (within the ethtool domain).

ethtool -U eth5 flow-type tcp4 src-ip 1.2.3.4 dst-port 8888 loc 5 action 2

All packets that contain the above source IP address and destination port are steered into rx-ring 2. When the destination MAC is not given, the user's destination MAC is filled in automatically.

ethtool -U eth5 flow-type ether dst 00:11:22:33:44:55 vlan 45 m 0xf000 loc 5 action 2

All packets that contain the above destination MAC address and the specified VLAN are steered into ring 2. Note the VLAN mask 0xf000; it is required in order to add such a rule.

ethtool -u eth5

Shows all of ethtool's steering rules.

When configuring two rules with the same priority, the second rule will overwrite the first one, so this ethtool interface is effectively a table. Inserting Flow Steering rules in the kernel requires support from both ethtool in user space and the kernel (v2.6.28).
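The table behavior can be pictured as a mapping from the loc value to a rule. The sketch below is only a model of that overwrite behavior and makes no claim about how ethtool or the driver store rules; the function insert_rule and the rule strings are hypothetical.

```python
def insert_rule(table, loc, rule):
    """Store a rule at location loc, replacing any rule already there.

    Returns the rule that was replaced, or None if the slot was empty.
    """
    replaced = table.get(loc)
    table[loc] = rule
    return replaced


rules = {}
insert_rule(rules, 5, "flow-type ether dst 00:11:22:33:44:55 action 2")
# A second rule with the same loc replaces the first one.
old = insert_rule(rules, 5, "flow-type tcp4 src-ip 1.2.3.4 dst-port 8888 action 2")
print(old)
print(rules)
```

After the second call, only the tcp4 rule remains at location 5.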

MLX4 Driver Support

The mlx4 driver supports only a subset of the flow specification the ethtool API defines. Asking for an unsupported flow specification will result in an "invalid value" failure.

The following are the flow specific parameters:

| | ether | tcp4/udp4 | ip4 |
|---|---|---|---|
| Mandatory | dst | | src-ip/dst-ip |
| Optional | vlan | src-ip, dst-ip, src-port, dst-port, vlan | src-ip, dst-ip, vlan |
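A flow specification can be checked against these parameters before it is passed to ethtool. The sketch below is a hypothetical pre-check and not the driver's validation logic. It assumes one reading of the table: ether requires dst, ip4 requires at least one of src-ip and dst-ip, and tcp4/udp4 have no mandatory parameter. The names SUPPORTED_PARAMS and check_flow_spec are illustrative.

```python
# Parameters accepted per flow type, under the reading of the table
# described in the lead-in (an assumption of this sketch).
SUPPORTED_PARAMS = {
    "ether": {"dst", "vlan"},
    "tcp4": {"src-ip", "dst-ip", "src-port", "dst-port", "vlan"},
    "udp4": {"src-ip", "dst-ip", "src-port", "dst-port", "vlan"},
    "ip4": {"src-ip", "dst-ip", "vlan"},
}


def check_flow_spec(flow_type, params):
    """Return a list of problems; an empty list means the spec fits the table."""
    if flow_type not in SUPPORTED_PARAMS:
        return ["unsupported flow type: " + flow_type]
    given = set(params)
    problems = []
    for name in sorted(given - SUPPORTED_PARAMS[flow_type]):
        problems.append("unsupported parameter: " + name)
    if flow_type == "ether" and "dst" not in given:
        problems.append("missing mandatory parameter: dst")
    if flow_type == "ip4" and not given & {"src-ip", "dst-ip"}:
        problems.append("missing mandatory parameter: src-ip or dst-ip")
    return problems


print(check_flow_spec("ether", ["dst", "vlan"]))
print(check_flow_spec("ip4", ["vlan"]))
print(check_flow_spec("ether", ["dst", "src-port"]))
```

A specification that the sketch flags would be expected to end in the "invalid value" failure mentioned above, although the driver remains the authority on what it accepts.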

Accelerated Receive Flow Steering (aRFS)

Warning: aRFS is supported in both ConnectX®-3 and ConnectX®-4 adapter cards.

Receive Flow Steering (RFS) and Accelerated Receive Flow Steering (aRFS) are kernel features currently available in most distributions. For RFS, packets are forwarded based on the location of the application consuming the packet. aRFS boosts the speed of RFS by adding hardware support. By using aRFS (unlike RFS), the packets are directed to a CPU that is local to the thread running the application.

aRFS is an in-kernel logic responsible for load balancing between CPUs by attaching flows to the CPUs that are used by the flows' owner applications. This domain allows the aRFS mechanism to use the flow steering infrastructure to support the aRFS logic by implementing ndo_rx_flow_steer, which, in turn, calls the underlying flow steering mechanism with the aRFS domain.

To configure RFS:

Configure the RFS flow table entries (globally and per core).

Note: The functionality remains disabled until explicitly configured (by default it is 0).

The number of entries in the global flow table is set as follows:

/proc/sys/net/core/rps_sock_flow_entries

The number of entries in the per-queue flow table is set as follows:

/sys/class/net/<dev>/queues/rx-<n>/rps_flow_cnt

Example:

# echo 32768 > /proc/sys/net/core/rps_sock_flow_entries

# for f in /sys/class/net/ens6/queues/rx-*/rps_flow_cnt; do echo 32768 > $f; done

To configure aRFS:

The aRFS feature requires explicit configuration in order to enable it. Enabling aRFS requires enabling the 'ntuple' flag via ethtool.

For example, to enable ntuple for eth0, run:

ethtool -K eth0 ntuple on

aRFS requires the kernel to be compiled with the CONFIG_RFS_ACCEL option. This option is available in kernels 2.6.39 and above. Furthermore, aRFS requires Device Managed Flow Steering support.

RFS cannot function if LRO is enabled. LRO can be disabled via ethtool.

All of the rest

The lowest priority domain serves the following users:

- The mlx4 Ethernet driver, which attaches its unicast and multicast MAC addresses to its QP using L2 flow specifications
- The mlx4 IPoIB driver, when it attaches its QP to its configured GIDs

Warning: Fragmented UDP traffic cannot be steered. It is treated as 'other' protocol by hardware (from the first packet) and not considered as UDP traffic.

Warning: We recommend using libibverbs v2.0-3.0.0 and libmlx4 v2.0-3.0.0 and higher as of MLNX_OFED v2.0-3.0.0 due to API changes.

The mlx_fs_dump tool is only supported for ConnectX-4 and above adapter cards.

mlx_fs_dump is a Python tool that prints the steering rules in a readable manner. Python v2.7 or above, as well as the pip, anytree and termcolor libraries, must be installed on the host.

Running example:

./ofed_scripts/utils/mlx_fs_dump -d /dev/mst/mt4115_pciconf0

FT: 9 (level: 0x18, type: NIC_RX)

+-- FG: 0x15 (MISC)

|-- FTE: 0x0 (FWD) to (TIR:0x7e) out.ethtype:IPv4 out.ip_prot:UDP out.udp_dport:0x140

+-- FTE: 0x1 (FWD) to (TIR:0x7e) out.ethtype:IPv4 out.ip_prot:UDP out.udp_dport:0x13f

...

For further information on the mlx_fs_dump tool, please refer to the mlx_fs_dump Community post.