Configure the VLM#

VSS is designed to be configurable with many VLMs, such as:

GPT-4o

Fine-tuned LoRA Checkpoint for VILA 1.5

NVILA research model

Custom VLMs

VSS supports integrating custom VLM models. Depending on the model to be integrated, some configurations must be updated or the interface code is implemented. The model can ONLY be selected at initialization time.

Following segments explain those approaches in details.

Configuring for GPT-4o#

Obtain OpenAI API Key#

VSS does not use OpenAI GPT-4o by default. This is required only when using the GPT-4o model as the VLM or as the LLM for tool calling.

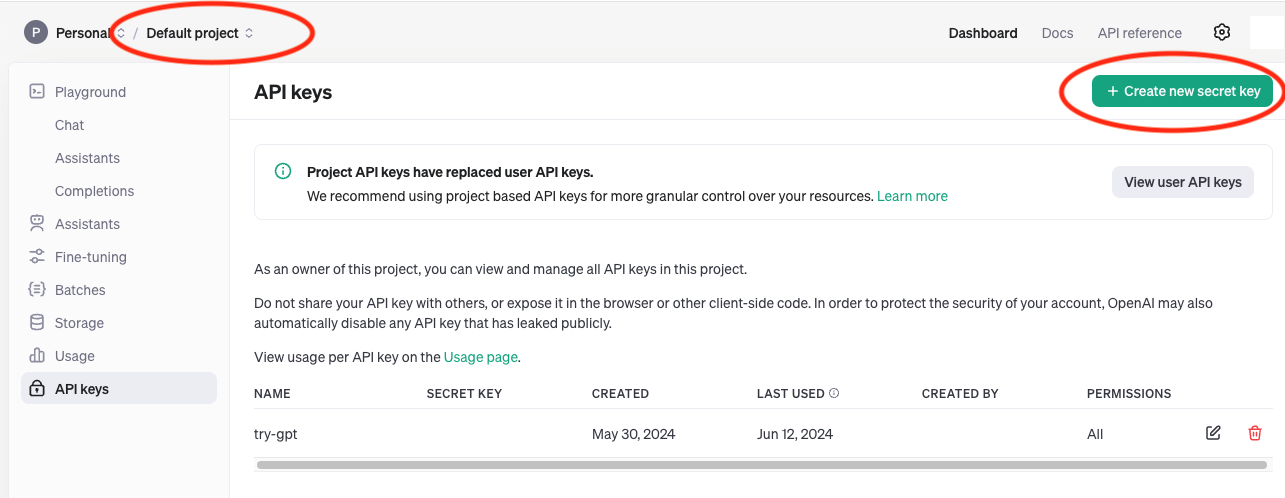

Login at: https://platform.openai.com/apps.

Select API.

Create a new API key for your project at: https://platform.openai.com/api-keys.

Make sure you have access to GPT-4o model at https://platform.openai.com/apps.

Make sure you have enough credits available at Settings > Usage and be educated on rate limits at Settings > Limits. https://platform.openai.com/settings/organization/usage

Store the generated API Key securely for future use.

Override the configuration#

To use GPT-4o as the VLM model in VSS, see Configuration Options and modify the config VLM_MODEL_TO_USE.

Overview of the steps to do this:

Fetch the Helm Chart following Deploy the Blueprint.

Create a new

overrides.yamlfile.Copy the example overrides file from Configuration Options.

Edit the

overrides.yamlfile and changeVLM_MODEL_TO_USEtovalue: openai-compatand add the environment variable for theOPENAI_API_KEYas shown below.vss: applicationSpecs: vss-deployment: containers: vss: env: - name: VLM_MODEL_TO_USE value: openai-compat - name: OPENAI_API_KEY valueFrom: secretKeyRef: name: openai-api-key-secret key: OPENAI_API_KEY

Obtain the OpenAI API Key as described in Obtain OpenAI API Key.

Create the OpenAI API Key secret:

sudo microk8s kubectl create secret generic openai-api-key-secret --from-literal=OPENAI_API_KEY=$OPENAI_API_KEYInstall the Helm Chart:

sudo microk8s helm install vss-blueprint nvidia-blueprint-vss-2.2.0.tgz --set global.ngcImagePullSecretName=ngc-docker-reg-secret -f overrides.yamlFollow steps to Launch VSS UI at Launch VSS UI.

Configuring for Fine-tuned VILA 1.5 (LoRA)#

Custom finetuned Low-Rank Adaptation (LoRA) checkpoints for VILA 1.5 can be used with VSS and have demonstrated improved accuracy as compared to the base VILA 1.5 model. Once you have a fine-tuned checkpoint, follow the steps to configure VSS to use it as the VLM:

Copy the LoRA checkpoint to a directory

<LORA_CHECKPOINT_DIR>on the node where the VSS container will be deployed. The contents of the directory should be similar to:$ ls <LORA_CHECKPOINT_DIR> adapter_config.json adapter_model.safetensors config.json non_lora_trainables.bin trainer_state.json

Make the

<LORA_CHECKPOINT_DIR>directory writable since VSS will generate the TensorRT-LLM weights for the LoRA in the same container.

chmod -R a+w <LORA_CHECKPOINT_DIR>

Add the

VILA_LORA_PATHenvironment variable,extraPodVolumesandextraPodVolumeMountsto the overrides file described in Configuration Options as shown below. Make sure VILA 1.5 is being used as the base model.

vss: applicationSpecs: vss-deployment: containers: vss: env: - name: VLM_MODEL_TO_USE value: vila-1.5 - name: MODEL_PATH value: "ngc:nim/nvidia/vila-1.5-40b:vila-yi-34b-siglip-stage3_1003_video_v8" - name: VILA_LORA_PATH value: /models/lora extraPodVolumes: - name: lora-checkpoint hostPath: path: <LORA_CHECKPOINT_DIR> # Path on host - name: secret-ngc-api-key-volume secret: secretName: ngc-api-key-secret items: - key: NGC_API_KEY path: ngc-api-key - name: secret-graph-db-username-volume secret: secretName: graph-db-creds-secret items: - key: username path: graph-db-username - name: secret-graph-db-password-volume secret: secretName: graph-db-creds-secret items: - key: password path: graph-db-password extraPodVolumeMounts: - name: lora-checkpoint mountPath: /models/lora - name: secret-ngc-api-key-volume mountPath: /secrets/ngc-api-key subPath: ngc-api-key readOnly: true - name: secret-graph-db-username-volume mountPath: /secrets/graph-db-username subPath: graph-db-username readOnly: true - name: secret-graph-db-password-volume mountPath: /secrets/graph-db-password subPath: graph-db-password readOnly: true

Install the Helm Chart:

sudo microk8s helm install vss-blueprint nvidia-blueprint-vss-2.2.0.tgz --set global.ngcImagePullSecretName=ngc-docker-reg-secret -f overrides.yamlFollow steps to Launch VSS UI at Launch VSS UI.

Configuring for NVILA research model#

To deploy VSS with the NVILA research model, specify the following in your overrides file (see Configuration Options):

vss:

applicationSpecs:

vss-deployment:

containers:

vss:

env:

- name: VLM_MODEL_TO_USE

value: nvila

- name: MODEL_PATH

value: "git:https://huggingface.co/Efficient-Large-Model/NVILA-15B"

OpenAI Compatible REST API#

If the VLM model provides an OpenAI compatible REST API, refer to Configuration Options.

Other Custom Models#

VSS allows you to drop in your own models to the model directory by providing the pre-trained weight of the model and implementing an interface to bridge to the VSS pipeline.

The interface includes an inference.py file and a manifest.yaml.

In the inference.py, you must define a class named Inference with the following two methods:

def get_embeddings(self, tensor:torch.tensor) -> tensor:torch.tensor:

# Generate video embeddings for the chunk / file.

# Do not implement if explicit video embeddings are not supported by model

return tensor

def generate(self, prompt:str, input:torch.tensor, configs:Dict):

# Generate summary string from the input prompt and frame/embedding input.

# configs contains VLM generation parameters like

# max_new_tokens, seed, top_p, top_k, temperature

return summary

The optional get_embeddings method is used to generate embeddings for

a given video clip wrapped in a TCHW tensor and must be removed if

the model doesn’t support the feature.

The generate method is used to generate the text summary based on the given prompt and the video clip wrapped in the TCHW tensor.

The generate method supports models that need to be executed locally on the system or models with REST APIs.

Some examples are included inside the VSS container at

/opt/nvidia/via/via-engine/models/custom/samples.

Examples include models fuyu8b, neva, and phi3v.

The VSS container image or the Blueprint Helm Chart may need to be modified to use custom VLMs. Configuration Options mentions how to use a custom VSS container image and how to specify the model path for custom models. If mounting of custom paths is required, the VSS subchart in the Blueprint Helm Chart can be modified to mount the custom paths.

Example:

For fuyu8b, you can add the following to the Helm overrides file using the instructions

in Configuration Options. In the following, VIA_SRC_HOST_DIR is a directory on the host machine and for this example, could have code copied

from the VSS container at /opt/nvidia/via/via-engine/models/custom/samples with

any necessary changes you need to the fuyu8b/ code including: inference.py, manifest.yaml.

vss:

applicationSpecs:

vss-deployment:

containers:

vss:

env:

- name: VLM_MODEL_TO_USE

value: custom

- name: MODEL_PATH

value: "/tmp/custom-model"

extraPodVolumes:

- name: custom-model

hostPath:

path: <VIA_SRC_HOST_DIR>/.../fuyu8b

extraPodVolumeMounts:

- name: custom-model

mountPath: /tmp/custom-model

Note

Custom VLM models may not work well with GPU-sharing topology.