Product Description#

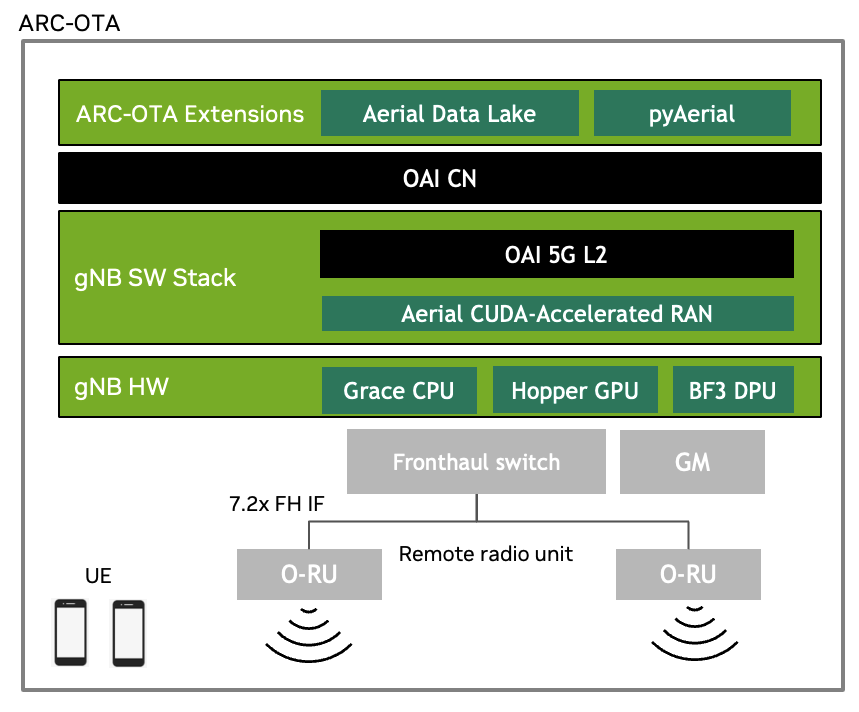

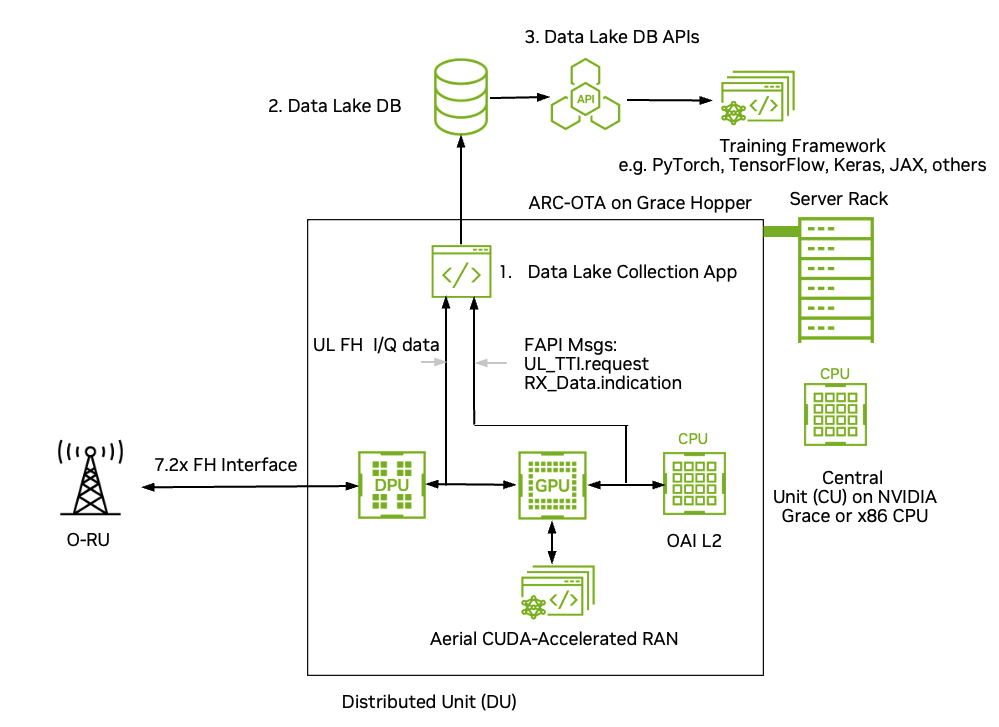

ARC-OTA is an AI/ML-enabled, end-to-end, real-time Over-The-Air (OTA) testbed for AI-RAN research. As shown in the figure below, ARC-OTA is an on-prem edge-cloud datacenter built on the NVIDIA Aerial CUDA-Accelerated RAN in-line accelerated L1, integrated with the OpenAirInterface (OAI) Software Alliance L2 and Core Network (CN). The Aerial CUDA-Accelerated RAN L1 runs on the Hopper GPU and the OAI L2 runs on the Grace CPU. The default L2 configuration assumes the CN runs on the same host as the L1 and L2, but the L2 also supports running the CN on a separate x86 or ARM server. An NVIDIA NIC connects via a fronthaul switch to one or more O-RUs using O-RAN 7.2x for single-cell or multi-cell operation.

The NVIDIA Aerial CUDA-Accelerated RAN L1 and OAI L2 stacks are open source. Researchers can bring their innovations to reality by customizing the modulation, coding, and signal processing algorithms in the data and control channels of the air interface. With access to the L2 source, machine learning (ML) algorithms such as deep reinforcement learning (DRL) can be implemented in the MAC and scheduler.

With an ARC-OTA testbed you can test ML algorithms at all layers of the stack. You can bring ML to the physical layer and to layer 2, and benchmark the resulting algorithms in a live network. Because real O-RUs are used in the testbed, your algorithms can be verified and benchmarked over real-world wireless channels, together with all the non-idealities present in a physical gNB, such as power amplifier non-linearities, RF gain and phase mismatch, and other imperfections in the analog electronics. ARC-OTA can also be used with real-time channel emulators and UE emulators to test algorithms against traditional 3GPP stochastic channel models, as well as site-specific models generated by RF ray tracing in a digital twin, such as NVIDIA Aerial Omniverse Digital Twin.

ARC-OTA is built with an eye to enabling AI/ML research. It supports the capture of OTA data for use in training pipelines. Data collection is facilitated using NVIDIA Aerial Data Lake, which collects uplink I/Q samples from O-RU(s) over the 7.2x fronthaul interface and writes them to a database. FAPI meta information exchanged between L1 and L2 is also collected and populated in the database and can be used for indexing into and extracting data from the Data Lake database.
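The idea of using L2 meta information as an index into the captured I/Q samples can be sketched in plain Python. This is only an illustration of the concept: the record layouts, the field names (`timestamp_ns`, `sfn`, `slot`, `rnti`, `mcs`), and the `select_iq` helper are hypothetical and are not the Data Lake schema or API.

```python
from dataclasses import dataclass

# Hypothetical record layouts for illustration only; these are not the
# actual Data Lake tables.
@dataclass(frozen=True)
class FapiRecord:
    timestamp_ns: int   # time stamp shared with the I/Q capture
    sfn: int            # system frame number
    slot: int
    rnti: int           # UE identifier
    mcs: int

@dataclass(frozen=True)
class IqRecord:
    timestamp_ns: int
    samples: tuple      # complex uplink I/Q samples for the slot

def select_iq(fapi_rows, iq_rows, rnti):
    """Return (metadata, iq) pairs for one UE, joined on the time stamp."""
    iq_by_time = {row.timestamp_ns: row for row in iq_rows}
    return [
        (meta, iq_by_time[meta.timestamp_ns])
        for meta in fapi_rows
        if meta.rnti == rnti and meta.timestamp_ns in iq_by_time
    ]

fapi_rows = [
    FapiRecord(1_000, sfn=10, slot=7, rnti=0x4601, mcs=9),
    FapiRecord(1_500, sfn=10, slot=8, rnti=0x4602, mcs=16),
    FapiRecord(2_000, sfn=10, slot=9, rnti=0x4601, mcs=11),
]
iq_rows = [
    IqRecord(1_000, (1 + 1j, -1 + 1j)),
    IqRecord(1_500, (1 - 1j, -1 - 1j)),
    IqRecord(2_000, (0.5 + 0.5j, -0.5 - 0.5j)),
]

# Extract every capture that belongs to one UE.
matches = select_iq(fapi_rows, iq_rows, rnti=0x4601)
for meta, iq in matches:
    print(meta.sfn, meta.slot, meta.mcs, len(iq.samples))
```

In a real deployment the same selection would be expressed through the database access APIs rather than in-memory lists.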

While the uplink I/Q samples and L2 meta information are useful for some types of algorithm development, each type of algorithm design, ML or non-ML, requires a data set tailored to the use case at hand. This is where NVIDIA pyAerial helps. pyAerial is a library of cuPHY L1 CUDA kernels that have been provisioned with Python APIs. While there are multiple uses for pyAerial, one application is generating data sets corresponding to any node in the cuPHY PUSCH pipeline. It is straightforward for a researcher to, for example, assemble a complete PUSCH pipeline using pyAerial blocks. Because the pyAerial blocks invoke the same CUDA code that is used in the real-time cuPHY L1, the pyAerial pipeline is bit-equivalent to the pure CUDA cuPHY pipeline. If you want access to, for example, the input and output samples of the cuPHY minimum mean square error (MMSE) channel estimator, you can instrument your pyAerial Python code with file I/O operations for each node of interest in the pyAerial graph.
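The instrumentation pattern described above can be sketched in plain Python. This is a minimal sketch and not pyAerial code: the stage functions `channel_estimate` and `equalize` are placeholder arithmetic standing in for pipeline blocks, and the helper names and JSON file layout are assumptions made for the example.

```python
import json
import tempfile
from pathlib import Path

def dump_complex(path, samples):
    """Write complex samples to a JSON file as [real, imag] pairs."""
    path.write_text(json.dumps([[s.real, s.imag] for s in samples]))

def load_complex(path):
    """Read back samples written by dump_complex."""
    return [complex(re, im) for re, im in json.loads(path.read_text())]

def run_instrumented(stages, samples, out_dir, taps):
    """Run stages in order, saving the input and output of each tapped stage."""
    for name, stage in stages:
        if name in taps:
            dump_complex(out_dir / f"{name}_in.json", samples)
        samples = stage(samples)
        if name in taps:
            dump_complex(out_dir / f"{name}_out.json", samples)
    return samples

# Placeholder stages (hypothetical); a real pipeline would call pyAerial blocks.
def channel_estimate(samples):
    return [s * 0.5 for s in samples]

def equalize(samples):
    return [s.conjugate() for s in samples]

stages = [("channel_estimate", channel_estimate), ("equalize", equalize)]
rx = [1 + 2j, -3 + 0.5j]

with tempfile.TemporaryDirectory() as tmp:
    out_dir = Path(tmp)
    # Only the channel estimator is tapped; other stages run untouched.
    result = run_instrumented(stages, rx, out_dir, taps={"channel_estimate"})
    saved_in = load_complex(out_dir / "channel_estimate_in.json")
    saved_out = load_complex(out_dir / "channel_estimate_out.json")
    saved_files = sorted(p.name for p in out_dir.iterdir())
```

Keeping the taps in a set makes it cheap to switch data capture on for a single node without editing the pipeline itself.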

The figure below shows the E2E architecture and software stack for ARC-OTA.

Key Features and Specifications#

The configuration and capabilities of ARC-OTA 1.7 are outlined in the following sections.

| Feature | Value |
|---|---|
| Number of Antennas | 4T4R |
| Number of Component Carriers | 1x 100 MHz carrier |
| Subcarrier Spacing (PDxCH, PUxCH, SSB) | 30 kHz |
| FFT Size | 4096 |
| MIMO layers | DL: 4 layers; UL: 1 layer |
| Duplex Mode | Release 15 SA TDD |
| Number of RRC connected UEs | Up to 100 |
| Number of UEs/TTI | 16 |
| Frame structure and slot format | DDDDDDSUUU, DSUUU |
| User plane latency (RRC connected) | < 10 ms one way for DL and UL |
| Synchronization and Timing | IEEE 1588v2 PTP; SyncE; LLS-C3 |
| Frequency Band | n78, n48 (CBRS) |
| Max Transmit Power | 22 dBm at RF connector |
| Peak throughput | Refer to the Release Notes for the latest peak throughput values. |
| Bi-directional UDP Traffic | > 10 hours exercised (SMC-GH) |

Tip

To learn how KPIs have changed since the last release, refer to the Release Notes.
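A few timing figures follow directly from the values listed above. The short sketch below works them out; it assumes the standard 5G NR relations (a slot lasts 1 ms / 2^μ where the subcarrier spacing is 15 kHz · 2^μ, and the baseband sample rate equals FFT size times subcarrier spacing), which are not stated in this document. The split into D, S, and U shares simply counts slots and ignores how symbols are divided inside the special slot.

```python
# Values taken from the feature list above.
SCS_HZ = 30_000
FFT_SIZE = 4096
PATTERNS = ["DDDDDDSUUU", "DSUUU"]

# Assumed standard NR numerology relations (not stated in this document).
mu = (SCS_HZ // 15_000).bit_length() - 1   # 30 kHz -> mu = 1
slot_ms = 1.0 / (2 ** mu)                  # slot duration in milliseconds
sample_rate_hz = FFT_SIZE * SCS_HZ         # baseband sample rate

def describe(pattern):
    """Return the pattern period in ms and the share of D, S, and U slots."""
    n = len(pattern)
    return {
        "period_ms": n * slot_ms,
        "dl": pattern.count("D") / n,
        "special": pattern.count("S") / n,
        "ul": pattern.count("U") / n,
    }

summary = {p: describe(p) for p in PATTERNS}
print(f"slot = {slot_ms} ms, sample rate = {sample_rate_hz / 1e6} MHz")
for pattern, info in summary.items():
    print(pattern, info)
```

Under these assumptions the ten-slot pattern repeats every 5 ms and the five-slot pattern every 2.5 ms, with the shorter pattern devoting a larger share of slots to the uplink.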

5G NR gNB Features#

| Component | Capabilities |
|---|---|
| gNB PHY | Refer to the Aerial CUDA-Accelerated RAN cuBB documentation |
| gNB MAC | Refer to the Aerial CUDA-Accelerated RAN cuBB documentation |

5G Core Features#

| Network Function | Category | Description |
|---|---|---|
| AMF | Features | NGAP AMF status indication (3GPP TS 38.413) |
| AMF | Features | Add UE Retention Information support (3GPP TS 38.413) |
| AMF | Features | Support of Location services with LMF and AMF (3GPP TS 29.518, 3GPP TS 38.413, 3GPP TS 23.502) |
| AMF | Features | Update NAS with Rel 16.14.0 IEs: Refactor code for Encode/Decode functions; cleanup NAS library (3GPP TS 24.501) |
| AMF | Fixes | Fix typo for N1N2MessageSubscribe (3GPP TS 29.518) |
| AMF | Fixes | Fix issue when receiving PDU session reject from SMF (3GPP TS 29.518, 3GPP TS 23.502) |
| AMF | Technical Debt | Reformatting of the SCTP code |
| AMF | Technical Debt | Refactor promise handling |
| AMF | Technical Debt | Removing dependencies to libconfig++ (Only YAML file can be read as configuration) |
| SMF | Features | Add N1/N2 info in the message response to AMF if available (3GPP TS 29.502) |
| SMF | Fixes | Add connection handling mechanism between NRF and SMF |
| SMF | Technical Debt | Refactor SMF PFCP associations to use UPF profile |
| UDM | Fixes | Add connection handling mechanism between NRF and UDM |
| UDR | Technical Debt | Fixed builds |
| UDR | Technical Debt | Add connection handling mechanism between NRF and UDR |
| UDR | Technical Debt | Improve MongoDB support |
| Common | | New HTTP Client library (CPR) for all the NFs |
| Common | | Support mobility registration update procedure (3GPP TS 23.502) |

5G Fronthaul Features#

RU Category |

Category A |

|---|---|

FH Split Compliance |

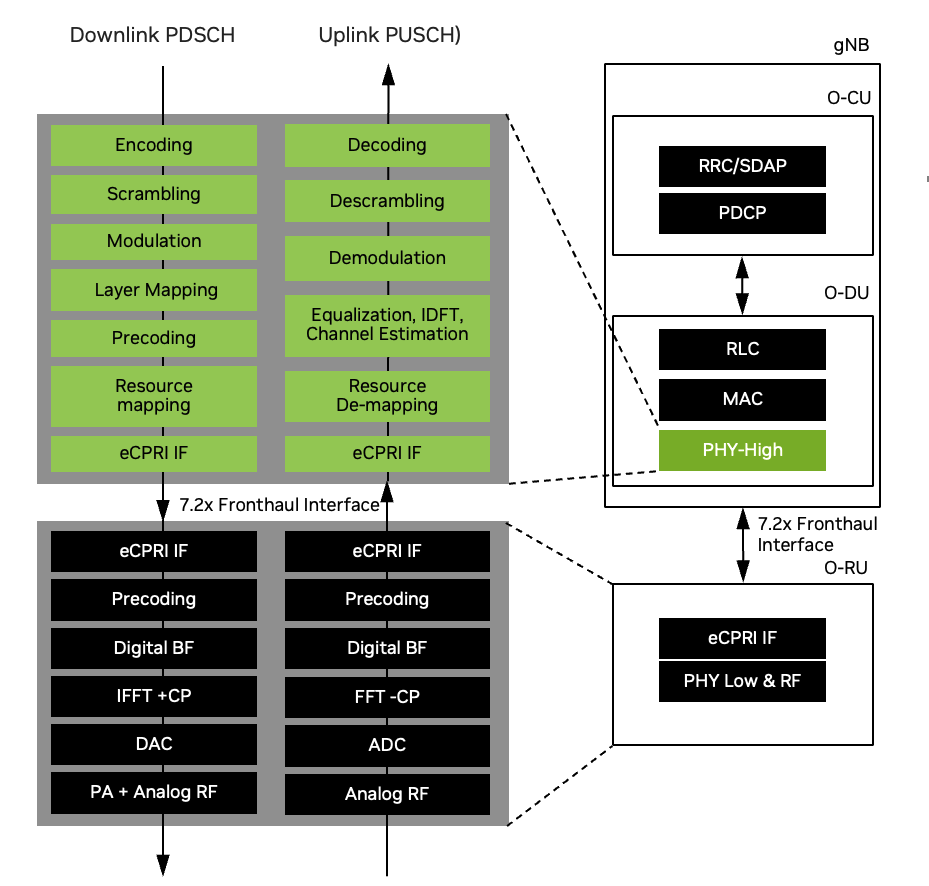

7.2x with DL low-PHY to include Precoding, Digital BF, iFFT+CP and UL low-PHY to include FFT-CP, Digital BF |

FH Ethernet Link |

25Gbps x 1 lane |

Transport encapsulation |

Ethernet |

Transport header |

eCPRI |

C Plane |

Conformant to O-RAN-WG4.CUS.0-v02.00 7.2x split |

U Plane |

Conformant to O-RAN-WG4.CUS.0-v02.00 7.2x split |

S Plane |

Conformant to O-RAN-WG4.CUS.0-v02.00 7.2x split |

M Plane |

Conformant to O-RAN-WG4.CUS.0-v02.00 7.2x split |

RU Beamforming Type |

Code book based |
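To make the eCPRI transport header listed above more concrete, the following sketch packs and parses the 4-byte eCPRI common header that precedes every fronthaul message. It is a simplified illustration rather than a fronthaul implementation: the function names are made up for this example, and the O-RAN specific fields that follow the common header are left out.

```python
import struct

ECPRI_ETHERTYPE = 0xAEFE   # EtherType assigned to eCPRI over Ethernet
MSG_IQ_DATA = 0x00         # eCPRI message type used for U-plane traffic
MSG_RT_CONTROL = 0x02      # eCPRI message type used for C-plane traffic

def pack_common_header(msg_type, payload_size, revision=1, concatenated=False):
    """Build the 4-byte eCPRI common header in network byte order.

    Byte 0: protocol revision (upper 4 bits), 3 reserved bits, and the
    concatenation bit. Byte 1: message type. Bytes 2-3: payload size.
    """
    first = ((revision & 0x0F) << 4) | (1 if concatenated else 0)
    return struct.pack("!BBH", first, msg_type, payload_size)

def unpack_common_header(data):
    """Parse the first 4 bytes of an eCPRI message into a dict."""
    first, msg_type, payload_size = struct.unpack("!BBH", data[:4])
    return {
        "revision": first >> 4,
        "concatenated": bool(first & 0x01),
        "msg_type": msg_type,
        "payload_size": payload_size,
    }

header = pack_common_header(MSG_IQ_DATA, payload_size=1400)
fields = unpack_common_header(header)
print(header.hex(), fields)
```

A packet capture on the fronthaul link can be filtered on the EtherType constant above to isolate this traffic before parsing.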

Product Blueprints#

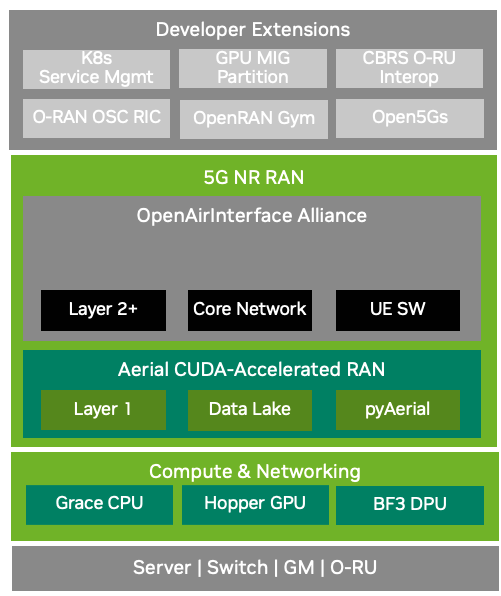

To ease developer onboarding, this section provides reference blueprints with key ingredients for creating a full-stack tested product prototype.

ARC-OTA with AI-RAN integration offers several advantages:

- Real-world validation: Many assumptions made for analytical and simulation studies can be rigorously tested in a real OTA network environment, now including AI-enhanced RAN functionality.
- Comprehensive experience sharing: Our extensive experience in innovation labs, including design, setup, deployment, and integration of tools and frameworks, is made available to all developers, incorporating insights from AI-RAN implementations.
- Qualified components: Hardware components and software configurations have undergone rigorous qualification processes, addressing potential difficulties and pitfalls, now including the AI-RAN accelerated computing platform.
- Controlled experimentation: Developer variations in lab experimental networks are limited to environment variability, transmission power, attenuation, and a select set of variables.
- Full-stack programmability: ARC-OTA provides complete access to source code, allowing developers to onboard any experiments with quick-turnaround validation and benchmarking results, now extended to AI-RAN applications.
- Cutting-edge technology: Built on principles of disaggregation, virtualization, software-defined systems, adaptability, and O-RAN specifications, ARC-OTA serves as a true advanced wireless developer launchpad.
- AI-RAN integration: The platform now supports concurrent AI and RAN processing, enabling developers to explore multi-tenancy and orchestration capabilities, maximizing capacity utilization.
- Energy efficiency: AI-RAN integration allows for optimized energy consumption without compromising performance, enabling developers to test and validate energy-efficient network designs.
- Enhanced performance: Developers can leverage AI-enhanced functionalities such as improved throughput, handover speed, and network anomaly detection.

As we look to leverage, extend, and innovate, the key guiding attributes for these blueprints focus on creating a reliable, stable, performant, and scalable experimental radio network that empowers developers to push the boundaries of wireless technology.

Full-Stack Innovation#

| Components | Feature |
|---|---|
| COTS hardware | COTS infrastructure composed of accelerated compute, virtualization, radios, fronthaul networking, and precision timing. |
| Virtualization | vRAN workloads from NVIDIA and OAI |
| AI/ML Frameworks | Data Lake + pyAerial for AI/ML frameworks: RF / IQ data + FAPI |
| Standards | 3GPP Release 15 + O-RAN 7.2 split P5G on-prem lab network |
| Developer Extensions | For more information on developer contributions, refer to the Developer Zone section |

Note

We welcome developer contributions through extensions and plugins, both for the benefit of the community and to accelerate the pace of innovation!

ARC-OTA and O-RAN#

The O-RAN framework is designed to foster openness, programmability, automation, intelligence, and the decoupling of hardware and software through standardized, interoperable interfaces. It champions a multi-vendor and multi-stakeholder ecosystem within a cloud-native and virtualized wireless infrastructure, enhancing the efficiency of RAN deployment, operation, and maintenance.

The O-RAN split-RAN concept disaggregates the RAN into multiple functional components: O-RAN Central Unit (O-CU), O-RAN Distributed Unit (O-DU) and O-RAN Radio Unit (O-RU), as shown in the figure below. These components can be deployed on different hardware and software platforms and can be interconnected using open interfaces.

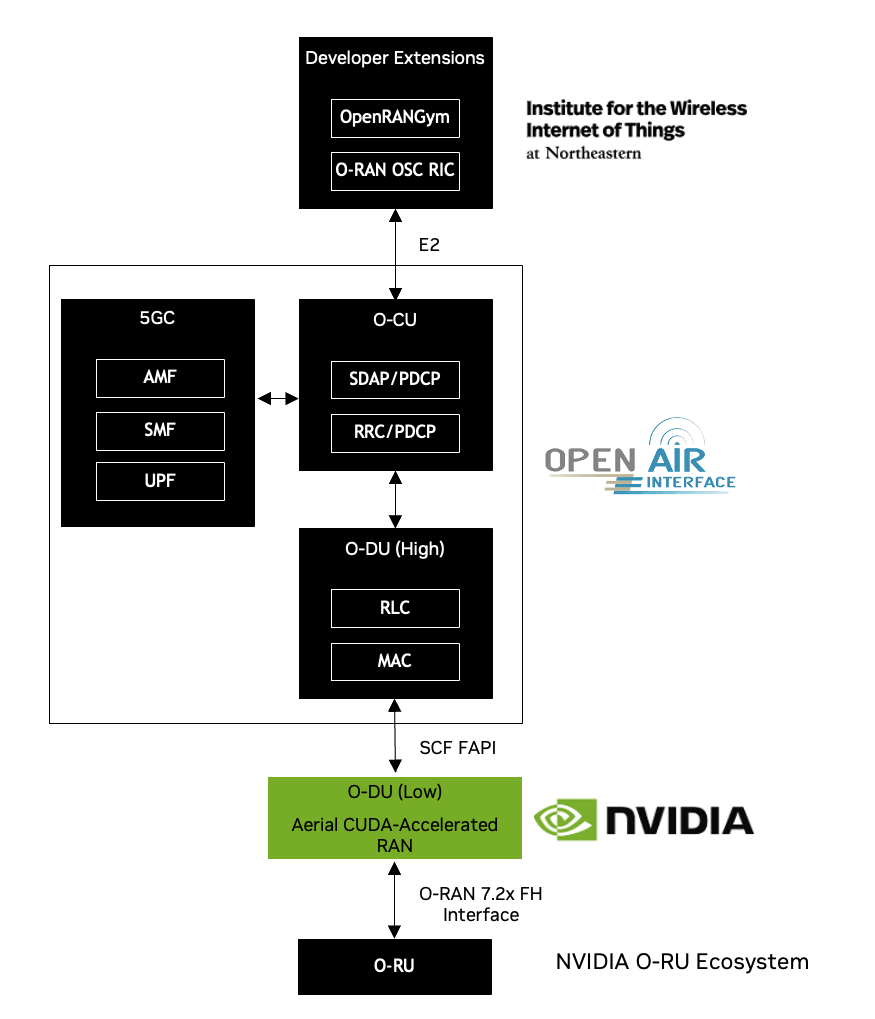

ARC-OTA conforms to an O-RAN blueprint as shown in the figure and table below. The figure highlights the multi-vendor aspect of O-RAN, with O-RUs supplied by NVIDIA O-RU ecosystem partners, Layer 1 from NVIDIA Aerial CUDA-Accelerated RAN, and O-DU (high), O-CU, and 5G Core from OAI.

The developer extension from Northeastern University (NEU) has the following highlights:

- OpenRAN Gym, an open toolbox for data collection and experimentation with AI in O-RAN architectures
- Integration of the OSC (O-RAN Software Community) near real-time RIC (RAN Intelligent Controller)

| Organization | Components |
|---|---|
| Northeastern | E2 interface plugin leveraging O-RAN OSC RIC and template xApps |
| OAI | O-DU-High (Layer 2), O-CU and 5GC |
| NVIDIA | O-DU Low / High PHY |
| Others | Handsets (Apple iPhone 14, Samsung S23), Viavi Qualsar Grandmaster, Dell FH switch, Supermicro GH200 server. |

Data Collection on ARC-OTA with Data Lake#

While synthetic data generation, powered by tools like NVIDIA Aerial Omniverse Digital Twin and NVIDIA Sionna/Sionna RT, plays a crucial role in AI-driven research, real-world OTA waveform data from live systems is equally essential. This is where Data Lake comes in: a data capture platform designed to collect real-time OTA RF data from the NVIDIA ARC-OTA testbed, enabling deeper insights and advancements in AI-native 6G wireless.

Data Lake consists of three parts:

- The Data Collection App (DCA) running on the Grace CPU
- The Data Lake Database (DLDB)
- A set of DLDB APIs used for retrieving data from the DLDB

The DCA captures UL I/Q samples from the 7.2x fronthaul interface, in addition to a subset of the FAPI messages exchanged between L2 and L1.

Data Lake has the following key features:

Real-time capture of RF data from OTA testbed

Data Lake is designed to operate with the NVIDIA ARC-OTA network testbed. I/Q samples from O-RUs connected to the GPU platform via a 7.2x split fronthaul interface are delivered to the host CPU and exported to the DLDB.

Powerful database access APIs

The layer-2 messages Rx_Data.indication and UL_TTI.request are filtered from the layer-2/layer-1 FAPI message stream and exported to the database. The fields in these data structures form the basis of the database access APIs, which extract data from the database for training in ML frameworks such as PyTorch, Sionna, and JAX.

Scalable and time-coherent across multiple base stations

The data collection app runs on the same CPU that supports the DU and consumes only a small number of CPU cores. Because each base station is responsible for collecting its own uplink data, the collection process scales as more base stations are added to the ARC-OTA network. Databases are time-stamped, so data collected on multiple base stations can be used in a training flow in a time-coherent manner.
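One way to use time stamps for time-coherent training data is to pair each record from one base station with the nearest-in-time record from another. The sketch below illustrates that idea under stated assumptions: records are modeled as hypothetical `(timestamp_ns, payload)` tuples, both base stations are assumed to share a common time reference, and the `align` helper and its tolerance are made up for the example rather than taken from the Data Lake APIs.

```python
from bisect import bisect_left

def align(records_a, records_b, tolerance_ns):
    """Pair each record in A with the nearest-in-time record in B.

    Both inputs are lists of (timestamp_ns, payload) sorted by time stamp.
    Pairs that are further apart than tolerance_ns are dropped.
    """
    times_b = [t for t, _ in records_b]
    pairs = []
    for t_a, payload_a in records_a:
        i = bisect_left(times_b, t_a)
        # The nearest record is either just before or just after t_a.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(times_b)]
        if not candidates:
            continue
        j = min(candidates, key=lambda k: abs(times_b[k] - t_a))
        if abs(times_b[j] - t_a) <= tolerance_ns:
            pairs.append((payload_a, records_b[j][1]))
    return pairs

# Hypothetical captures from two base stations.
gnb_a = [(1_000_000, "a0"), (1_500_000, "a1"), (2_000_000, "a2")]
gnb_b = [(1_000_040, "b0"), (1_499_990, "b1"), (2_300_000, "b2")]

aligned = align(gnb_a, gnb_b, tolerance_ns=100)
print(aligned)
```

The binary search keeps the pairing efficient for long captures, and the tolerance controls how strictly the two streams must agree in time.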

Data Lake is designed to be used in conjunction with pyAerial. Using the DLDB APIs, pyAerial can access RF samples in a DLDB and transform them into training data for signal processing functions.

Information about installing and using Data Lake and pyAerial can be found in the Aerial CUDA-Accelerated RAN documentation.