NVIDIA AI Enterprise and NVIDIA vGPU (C-Series)#

NVIDIA AI Enterprise is a comprehensive, flexibly licensed software suite designed for enterprise-grade AI. The suite combines infrastructure tools, application frameworks, libraries, and NVIDIA Inference Microservice (NIM), streamlining the deployment of AI workloads across various environments. This approach helps organizations tailor AI solutions to specific requirements and maximize performance and scalability without dedicating a separate GPU to each workload.

NVIDIA vGPU (C-Series) drivers are one of the deployment approaches that NVIDIA AI Enterprise supports in virtualized environments. NVIDIA vGPU (C-Series) extends the capabilities of NVIDIA AI Enterprise by enabling AI and machine learning tasks to run in virtual machines that share GPU resources. Sharing a GPU among VMs increases its utilization while serving multiple users, which makes it easier to handle dynamic workloads on on-premises servers or in the cloud. By using vGPU (C-Series) within NVIDIA AI Enterprise, organizations can scale their AI infrastructure and integrate GPU-accelerated computing into their existing virtualization strategies.

Key Concepts#

vGPU (C-Series)#

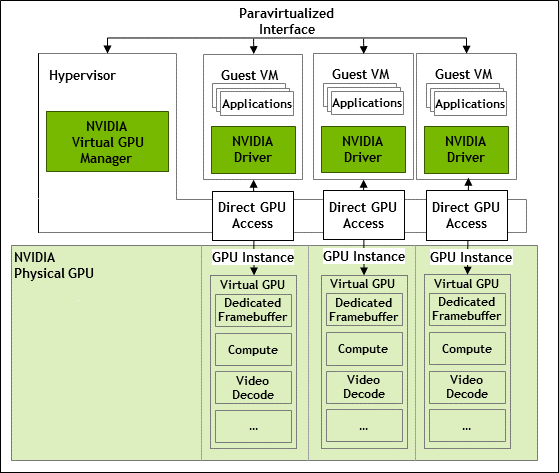

NVIDIA Virtual GPU (C-Series) accelerates AI and ML workloads by enabling multiple virtual machines to have simultaneous, direct access to a single physical GPU while maintaining the high-performance compute capabilities required for complex model training, inference, and data processing. By distributing GPU resources dynamically and efficiently across multiple VMs, vGPU (C-Series) optimizes utilization and lowers overall hardware costs, making it especially beneficial for large-scale and rapidly evolving AI environments.

NVIDIA vGPU (C-Series) Virtual GPU Manager#

The NVIDIA vGPU (C-Series) Virtual GPU Manager enables GPU virtualization by allowing multiple virtual machines (VMs) to share a physical GPU and by allocating the GPU’s resources among different workloads. Also known as the NVIDIA AI Enterprise host driver, the Virtual GPU Manager is installed on the hypervisor and is specific to each hypervisor. It is optimized for running AI and machine learning workloads, such as deep learning, model training, and inference, in virtualized environments. It is part of the NVIDIA AI Enterprise software, which is designed for deep learning, data science, and other high-performance computing in virtual environments.

The NVIDIA vGPU (C-Series) Virtual GPU Manager packages can be downloaded from the NGC Catalog.

NVIDIA vGPU (C-Series) Driver#

The NVIDIA vGPU (C-Series) Driver is installed in each VM’s guest operating system and allows the VM to fully leverage the virtualized GPU resources. It works with the NVIDIA vGPU (C-Series) Virtual GPU Manager to ensure resource sharing and isolation. The driver maintains a consistent, high-performance computing experience for AI and ML workloads and streamlines the deployment and management of GPU-accelerated applications on vGPU-powered VMs. It is part of the NVIDIA AI Enterprise software, which is designed for deep learning, data science, and other high-performance computing in virtual environments.

The NVIDIA vGPU (C-Series) Driver packages can be downloaded from the NGC Catalog.

Features#

The following key features highlight the capabilities and benefits of NVIDIA vGPU (C-Series), which is designed to work alongside NVIDIA AI Enterprise and to optimize performance and efficiency across various use cases.

- GPU Virtualization for Multiple VMs and Containers

NVIDIA vGPU (C-Series) lets multiple virtual machines (VMs) or containers share one physical GPU. This means you can distribute GPU power to different tasks (like AI, machine learning, or data processing) without needing a separate GPU for each task, making better use of the available hardware.

vGPU (C-Series) also supports a multi-GPU scenario by enabling multiple GPUs to be assigned to a single VM for larger models and increasingly demanding workflows.

- Scalability and High User Density

NVIDIA vGPU (C-Series) allows many users or tasks to use GPU resources at the same time, which suits cloud deployments, virtual desktops, and shared AI environments. Businesses can therefore support many users or applications without additional hardware.

- High-Performance GPU Acceleration

With NVIDIA vGPU (C-Series), you can use GPU power for demanding tasks like deep learning, training models, and data analysis. This helps AI and machine learning tasks run much faster compared to using just the CPU.

- Flexible Resource Allocation

NVIDIA vGPU (C-Series) lets businesses control how GPU resources are distributed. Admins can assign specific amounts of GPU memory and processing power to different VMs or containers, making sure each task gets the right amount of resources. This flexibility helps improve efficiency and reduce costs.

- Multi-Workload Support

NVIDIA vGPU (C-Series) can handle many types of tasks, from graphics rendering for remote workstations to AI/ML and high-performance computing. This flexibility is important for NVIDIA AI Enterprise customers who need to run different demanding applications on the same system.

- Support for AI Frameworks and Libraries

NVIDIA vGPU (C-Series) works smoothly with popular AI tools like TensorFlow, PyTorch, and MXNet, boosting performance for deep learning tasks. This helps NVIDIA AI Enterprise customers fully use GPU power for AI and ML, making their work faster and more efficient.

- Cloud and Data Center Optimization

NVIDIA vGPU (C-Series) lets both on-premises data centers and cloud environments make full use of GPU resources. Customers can set up AI systems in hybrid or multi-cloud setups, ensuring GPU resources are well-distributed, which helps manage and scale AI workloads more easily in the cloud.

- MIG-Backed NVIDIA vGPUs

With MIG-backed NVIDIA vGPU (C-Series), a physical GPU can be split into smaller, separate parts. This lets businesses assign different amounts of GPU power to various tasks, so less demanding AI tasks can run smoothly while still giving more power to bigger tasks. This feature is especially useful in shared, cloud, or mixed environments.

FAQs#

Q: What is the difference between time-sliced vGPUs and MIG-backed vGPUs?

A: Time-sliced vGPUs and MIG-backed vGPUs are two different approaches to sharing GPU resources in virtualized environments. Here are the key differences:

| Time-sliced vGPUs | MIG-backed vGPUs |
|---|---|
| Share the entire GPU among multiple virtual machines (VMs). | Partition the GPU into smaller, dedicated instances. |
| Each vGPU gets full access to all streaming multiprocessors (SMs) and engines, but only for a specific time slice. | Each vGPU gets exclusive access to a portion of the GPU’s memory and compute resources. |
| Processes run in series, with each vGPU waiting while others use the GPU. | Processes run in parallel on dedicated hardware slices. |
| The number of VMs per GPU is limited only by framebuffer size. | Depending on the number of MIG instances supported on a GPU, this can range from 4 to 7 VMs per GPU. |
| Better for workloads that require occasional bursts of full GPU power. | Provides better performance isolation and more consistent latency. |

Release Notes#

Prerequisites#

Using NVIDIA vGPU (C-Series)#

Because the NVIDIA vGPU (C-Series) has large BAR memory settings, using these vGPUs has some restrictions on VMware ESXi.

The guest OS must be a 64-bit OS.

64-bit MMIO and EFI boot must be enabled for the VM.

The guest OS must be able to be installed in EFI boot mode.

The VM’s MMIO space must be increased to 64GB or more, depending on the GPU’s MMIO requirements, as explained in VMware Knowledge Base Article: VMware vSphere VMDirectPath I/O: Requirements for Platforms and Devices (2142307).

Using NVIDIA vGPU (C-Series) on GPUs Requiring 64GB or More of MMIO Space with Large-Memory VMs#

Some GPUs require 64GB or more of MMIO (Memory-Mapped I/O) space. When a vGPU on a GPU that requires 64GB or more of MMIO space is assigned to a VM with 32GB or more of memory on ESXi, the VM’s MMIO space must be increased to the amount that the GPU requires.

For more information, refer to the VMware Knowledge Base Article: VMware vSphere VMDirectPath I/O: Requirements for Platforms and Devices (2142307).

No extra configuration is needed.

The following table lists the GPUs that require 64GB or more of MMIO space and the amount of MMIO space that each GPU requires.

| GPU | MMIO Space Required |
|---|---|
| NVIDIA H200 (all variants) | 512GB |
| NVIDIA H100 (all variants) | 256GB |
| NVIDIA H800 (all variants) | 256GB |
| NVIDIA H20 141GB | 512GB |
| NVIDIA H20 96GB | 256GB |
| NVIDIA L40 | 128GB |
| NVIDIA L20 | 128GB |
| NVIDIA L4 | 64GB |
| NVIDIA L2 | 64GB |
| NVIDIA RTX 6000 Ada | 128GB |
| NVIDIA RTX 5000 Ada | 64GB |
| NVIDIA A40 | 128GB |
| NVIDIA A30 | 64GB |
| NVIDIA A10 | 64GB |
| NVIDIA A100 80GB (all variants) | 256GB |
| NVIDIA A100 40GB (all variants) | 128GB |
| NVIDIA RTX A6000 | 128GB |
| NVIDIA RTX A5500 | 64GB |
| NVIDIA RTX A5000 | 64GB |
| Quadro RTX 8000 Passive | 64GB |
| Quadro RTX 6000 Passive | 64GB |
| Tesla V100 (all variants) | 64GB |
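The rule above can be encoded as a small lookup. The following is a minimal Python sketch, not an NVIDIA or VMware tool: the dictionary holds only a few rows copied from the table, the short GPU keys are hypothetical labels, and the function returns the MMIO size that the VM needs only when both conditions from this section hold (the GPU needs 64GB or more of MMIO space and the VM has 32GB or more of memory).

```python
from typing import Optional

# A few rows copied from the MMIO table above (values in GB).
# The keys are illustrative labels, not official product identifiers.
MMIO_REQUIRED_GB = {
    "NVIDIA H200": 512,
    "NVIDIA H100": 256,
    "NVIDIA H20 96GB": 256,
    "NVIDIA L40": 128,
    "NVIDIA L4": 64,
    "NVIDIA A100 80GB": 256,
    "NVIDIA A100 40GB": 128,
    "Tesla V100": 64,
}


def required_vm_mmio_gb(gpu: str, vm_memory_gb: int) -> Optional[int]:
    """Return the MMIO space (GB) to configure for the VM on ESXi.

    Returns None when this rule does not call for an increase: the GPU is
    not in the table, the GPU needs less than 64 GB of MMIO space, or the
    VM has less than 32 GB of memory.
    """
    required = MMIO_REQUIRED_GB.get(gpu)
    if required is None or required < 64:
        return None
    if vm_memory_gb < 32:
        return None
    return required


print(required_vm_mmio_gb("NVIDIA H100", 64))  # 256
print(required_vm_mmio_gb("NVIDIA L4", 16))    # None
```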

Platform Support#

Microsoft Windows Guest Operating Systems#

NVIDIA AI Enterprise supports only the Tesla Compute Cluster (TCC) driver model for Windows guest drivers.

Windows guest OS support is limited to running applications natively in Windows VMs without containers. NVIDIA AI Enterprise features that depend on the containerization of applications are not supported on Windows guest operating systems.

If you are using a generic Linux with KVM hypervisor, consult the documentation from your hypervisor vendor for information about Windows releases supported as a guest OS.

For more information, refer to the Non-containerized Applications on Hypervisors and Guest Operating Systems Supported with vGPU table.

NVIDIA vGPU (C-Series) Migration#

NVIDIA vGPU (C-Series) Migration, which includes vMotion and suspend-resume, is supported for both time-sliced and MIG-backed vGPUs on all supported GPUs and guest operating systems but only on a subset of supported hypervisor software releases.

Limitations with NVIDIA vGPU (C-Series) Migration Support

Red Hat Enterprise Linux with KVM: Migration between hosts running different versions of the NVIDIA Virtual GPU Manager driver is not supported, even within the same NVIDIA Virtual GPU Manager driver branch.

NVIDIA vGPU (C-Series) migration is disabled for a VM for which any of the following NVIDIA CUDA Toolkit features is enabled:

Unified memory

Debuggers

Profilers

Supported Hypervisor Software Releases

Red Hat Enterprise Linux with KVM 9.4 and later

Not supported on Ubuntu

All supported releases of VMware vSphere

Known Issues with NVIDIA vGPU (C-Series) Migration Support

| Use Case | Affected GPUs | Issue |
|---|---|---|
| Migration between hosts with different ECC memory configuration | All GPUs that support vGPU migration | Migration of VMs configured with vGPU stops before the migration is complete |
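The conditions in this section can be collected into a pre-migration check. This is a minimal Python sketch under stated assumptions: the hypervisor labels, version tuples, Virtual GPU Manager version strings, and feature names are hypothetical, and the check covers only the restrictions listed above (hypervisor support, matching Virtual GPU Manager versions on Red Hat Enterprise Linux with KVM, the CUDA features that disable migration, and the ECC known issue).

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

# CUDA Toolkit features that disable vGPU migration (from the list above).
MIGRATION_BLOCKING_FEATURES = {"unified_memory", "debuggers", "profilers"}


@dataclass
class Host:
    hypervisor: str                      # illustrative labels: "rhel-kvm", "ubuntu", "vsphere"
    hypervisor_version: Tuple[int, ...]  # e.g. (9, 4) for RHEL 9.4
    vgpu_manager_version: str
    ecc_enabled: bool


def migration_blockers(src: Host, dst: Host, cuda_features: Set[str]) -> List[str]:
    """Return the reasons, from this section, why a vGPU VM may not migrate from src to dst."""
    reasons: List[str] = []
    for host in (src, dst):
        if host.hypervisor == "ubuntu":
            reasons.append("migration is not supported on Ubuntu")
        elif host.hypervisor == "rhel-kvm" and host.hypervisor_version < (9, 4):
            reasons.append("RHEL with KVM supports migration only since 9.4")
    if "rhel-kvm" in (src.hypervisor, dst.hypervisor) and (
        src.vgpu_manager_version != dst.vgpu_manager_version
    ):
        reasons.append("RHEL with KVM: Virtual GPU Manager versions differ")
    blocked = cuda_features & MIGRATION_BLOCKING_FEATURES
    if blocked:
        reasons.append("disabled by CUDA features: " + ", ".join(sorted(blocked)))
    if src.ecc_enabled != dst.ecc_enabled:
        reasons.append("known issue: hosts have different ECC memory configuration")
    # Drop duplicates (for example, when both hosts run Ubuntu) while keeping order.
    return list(dict.fromkeys(reasons))
```

An empty list means that none of the documented restrictions applies; it does not guarantee that the migration will succeed.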

vGPUs that Support Multiple vGPUs Assigned to a VM#

The supported vGPUs depend on the hypervisor:

For the generic Linux with KVM hypervisors listed in the NVIDIA AI Enterprise Infrastructure Support Matrix, Red Hat Enterprise Linux KVM, and Ubuntu, all NVIDIA vGPU (C-Series) types are supported with PCIe GPUs. On GPUs that support the Multi-Instance GPU (MIG) feature, both time-sliced and MIG-backed vGPUs are supported.

For VMware vSphere, the supported vGPUs depend on the hypervisor release:

VMware vSphere 8.0 and later: All NVIDIA vGPU (C-Series) types are supported. On GPUs that support the Multi-Instance GPU (MIG) feature, both time-sliced and MIG-backed vGPUs are supported.

VMware vSphere 7.x releases: Only NVIDIA vGPU (C-Series) types that are allocated all of the physical GPU’s framebuffer are supported.

You can assign multiple vGPUs with differing amounts of frame buffer to a single VM, provided the board type and the series of all the vGPUs are the same. For example, you can assign an A40-48C vGPU and an A40-16C vGPU to the same VM. However, you cannot assign an A30-8C vGPU and an A16-8C vGPU to the same VM.
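The board-and-series rule can be checked mechanically from vGPU type names. The following Python sketch assumes the naming pattern used in this section, where the board name comes before the first hyphen and the series letter is the last character (for example, A40-48C or the MIG-backed A100-2-10C). It is an illustration, not an NVIDIA utility, and it does not encode the per-hypervisor release restrictions listed above.

```python
from typing import Iterable, Tuple


def board_and_series(vgpu_type: str) -> Tuple[str, str]:
    """Split a vGPU type name such as 'A40-48C' into ('A40', 'C')."""
    board = vgpu_type.split("-", 1)[0]
    series = vgpu_type[-1]
    return board, series


def can_assign_together(vgpu_types: Iterable[str]) -> bool:
    """True if every vGPU type has the same board type and series."""
    return len({board_and_series(t) for t in vgpu_types}) <= 1


print(can_assign_together(["A40-48C", "A40-16C"]))  # True
print(can_assign_together(["A30-8C", "A16-8C"]))    # False
```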

| Board | vGPU |
|---|---|
| NVIDIA L40 | |
| NVIDIA L40S | |
| NVIDIA L20 | |
| NVIDIA L4 | |
| NVIDIA L2 | |
| NVIDIA RTX 6000 Ada | |
| NVIDIA RTX 5880 Ada | |
| NVIDIA RTX 5000 Ada | |

| Board | vGPU [1] |
|---|---|
| | |
| NVIDIA A800 PCIe 40GB active-cooled | |
| NVIDIA A800 HGX 80GB | |
| | |
| NVIDIA A100 HGX 80GB | |
| NVIDIA A100 PCIe 40GB | |
| NVIDIA A100 HGX 40GB | |
| NVIDIA A40 | |
| | |
| NVIDIA A16 | |
| NVIDIA A10 | |
| NVIDIA RTX A6000 | |
| NVIDIA RTX A5500 | |
| NVIDIA RTX A5000 | |

| Board | vGPU [1] |
|---|---|
| NVIDIA H800 PCIe 94GB (H800 NVL) | All NVIDIA vGPU (C-Series) |
| NVIDIA H800 PCIe 80GB | All NVIDIA vGPU (C-Series) |
| NVIDIA H800 SXM5 80GB | NVIDIA vGPU (C-Series) [6] |
| NVIDIA H200 PCIe 141GB (H200 NVL) | All NVIDIA vGPU (C-Series) |
| NVIDIA H200 SXM5 141GB | NVIDIA vGPU (C-Series) [6] |
| NVIDIA H100 PCIe 94GB (H100 NVL) | All NVIDIA vGPU (C-Series) |
| NVIDIA H100 SXM5 94GB | NVIDIA vGPU (C-Series) [6] |
| NVIDIA H100 PCIe 80GB | All NVIDIA vGPU (C-Series) |
| NVIDIA H100 SXM5 80GB | NVIDIA vGPU (C-Series) [6] |
| NVIDIA H100 SXM5 64GB | NVIDIA vGPU (C-Series) [6] |
| NVIDIA H20 SXM5 141GB | NVIDIA vGPU (C-Series) [6] |
| NVIDIA H20 SXM5 96GB | NVIDIA vGPU (C-Series) [6] |

| Board | vGPU |
|---|---|
| Tesla T4 | |
| Quadro RTX 6000 passive | |
| Quadro RTX 8000 passive | |

| Board | vGPU |
|---|---|
| Tesla V100 SXM2 | |
| Tesla V100 SXM2 32GB | |
| Tesla V100 PCIe | |
| Tesla V100 PCIe 32GB | |
| Tesla V100S PCIe 32GB | |
| Tesla V100 FHHL | |

vGPUs that Support Peer-to-Peer CUDA Transfers#

Only C-series time-sliced vGPUs that are allocated all of the physical GPU’s framebuffer, on physical GPUs that support NVLink, are supported.

| Board | vGPU |
|---|---|
| | A800D-80C |
| NVIDIA A800 PCIe 40GB active-cooled | A800-40C |
| NVIDIA A800 HGX 80GB | A800DX-80C [2] |
| | A100D-80C |
| NVIDIA A100 HGX 80GB | A100DX-80C [2] |
| NVIDIA A100 PCIe 40GB | A100-40C |
| NVIDIA A100 HGX 40GB | A100X-40C [2] |
| NVIDIA A40 | A40-48C |
| | A30-24C |
| NVIDIA A16 | A16-16C |
| NVIDIA A10 | A10-24C |
| NVIDIA RTX A6000 | A6000-48C |
| NVIDIA RTX A5500 | A5500-24C |
| NVIDIA RTX A5000 | A5000-24C |

| Board | vGPU |
|---|---|
| NVIDIA H800 PCIe 94GB (H800 NVL) | H800L-94C |
| NVIDIA H800 PCIe 80GB | H800-80C |
| NVIDIA H200 PCIe 141GB (H200 NVL) | H200-141C |
| NVIDIA H200 SXM5 141GB | H200X-141C |
| NVIDIA H100 PCIe 94GB (H100 NVL) | H100L-94C |
| NVIDIA H100 SXM5 94GB | H100XL-94C |
| NVIDIA H100 PCIe 80GB | H100-80C |
| NVIDIA H100 SXM5 80GB | H100XM-80C |
| NVIDIA H100 SXM5 64GB | H100XS-64C |
| NVIDIA H20 SXM5 141GB | H20X-141C |
| NVIDIA H20 SXM5 96GB | H20-96C |

| Board | vGPU |
|---|---|
| Quadro RTX 8000 passive | RTX8000P-48C |
| Quadro RTX 6000 passive | RTX6000P-24C |

| Board | vGPU |
|---|---|
| Tesla V100 SXM2 | V100X-16C |
| Tesla V100 SXM2 32GB | V100DX-32C |
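Peer-to-peer CUDA transfers are supported only on the single full-framebuffer, time-sliced vGPU type listed for each board, so a deployment script can validate a requested type with a lookup. This Python sketch copies a handful of rows from the tables above; the dictionary is illustrative and incomplete, and the footnote markers are omitted.

```python
# A subset of the peer-to-peer tables above: board -> the only supported vGPU type.
P2P_VGPU_BY_BOARD = {
    "NVIDIA A100 HGX 80GB": "A100DX-80C",
    "NVIDIA A100 HGX 40GB": "A100X-40C",
    "NVIDIA A40": "A40-48C",
    "NVIDIA H100 SXM5 80GB": "H100XM-80C",
    "NVIDIA H200 SXM5 141GB": "H200X-141C",
    "Tesla V100 SXM2 32GB": "V100DX-32C",
}


def supports_p2p(board: str, vgpu_type: str) -> bool:
    """True only if vgpu_type is the full-framebuffer type listed for the board."""
    return P2P_VGPU_BY_BOARD.get(board) == vgpu_type


print(supports_p2p("NVIDIA A40", "A40-48C"))  # True
print(supports_p2p("NVIDIA A40", "A40-24C"))  # False: not the full framebuffer
```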

GPUDirect Technology#

NVIDIA GPUDirect Remote Direct Memory Access (RDMA) technology enables network devices to access the vGPU frame buffer directly, bypassing CPU host memory altogether. GPUDirect Storage technology enables a direct data path for direct memory access (DMA) transfers between GPU and storage. GPUDirect technology is supported only on a subset of vGPUs and guest OS releases.

Supported vGPUs

GPUDirect RDMA and GPUDirect Storage technology are supported on all time-sliced and MIG-backed NVIDIA vGPU (C-Series) on physical GPUs that support single root I/O virtualization (SR-IOV).

The following physical GPUs are supported:

NVIDIA L40

NVIDIA L40S

NVIDIA L20

NVIDIA L20 liquid-cooled

NVIDIA L4

NVIDIA L2

NVIDIA RTX 6000 Ada

NVIDIA RTX 5880 Ada

NVIDIA RTX 5000 Ada

NVIDIA A800 PCIe 80GB

NVIDIA A800 PCIe 80GB liquid-cooled

NVIDIA A800 HGX 80GB

NVIDIA AX800

NVIDIA A800 PCIe 40GB active-cooled

NVIDIA A100 PCIe 80GB

NVIDIA A100 PCIe 80GB liquid-cooled

NVIDIA A100 HGX 80GB

NVIDIA A100 PCIe 40GB

NVIDIA A100 HGX 40GB

NVIDIA A100X

NVIDIA A40

NVIDIA A30

NVIDIA A30 liquid-cooled

NVIDIA A30X

NVIDIA A16

NVIDIA A10

NVIDIA A2

NVIDIA RTX A6000

NVIDIA RTX A5500

NVIDIA RTX A5000

NVIDIA H800 PCIe 94GB (H800 NVL)

NVIDIA H800 PCIe 80GB

NVIDIA H800 SXM5 80GB

NVIDIA H200 PCIe 141GB (H200 NVL)

NVIDIA H200 SXM5 141GB

NVIDIA H100 PCIe 94GB (H100 NVL)

NVIDIA H100 SXM5 94GB

NVIDIA H100 PCIe 80GB

NVIDIA H100 SXM5 80GB

NVIDIA H100 SXM5 64GB

NVIDIA H20 SXM5 141GB

NVIDIA H20 SXM5 96GB

Supported Guest OS Releases

Linux only. GPUDirect technology is not supported on Windows.

Supported Network Interface Cards

GPUDirect technology is supported on the following network interface cards:

NVIDIA ConnectX-7 SmartNIC

Mellanox ConnectX-6 SmartNIC

Mellanox ConnectX-5 Ethernet adapter card

Limitations

Starting with GPUDirect Storage technology release 1.7.2, the following limitations apply:

GPUDirect Storage technology is not supported on GPUs based on the NVIDIA Ampere GPU architecture.

On GPUs based on the NVIDIA Hopper GPU architecture and the NVIDIA Ada Lovelace GPU architecture, GPUDirect Storage technology is supported only with the guest driver for Linux based on NVIDIA Linux open GPU kernel modules.

GPUDirect Storage technology releases before 1.7.2 are supported only with guest drivers with Linux kernel versions earlier than 6.6.

GPUDirect Storage technology is supported only on the following guest OS releases:

Ubuntu 22.04 LTS

Ubuntu 20.04 LTS
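The GPUDirect Storage limitations above can be combined into a single support check. The Python sketch below is an illustration under assumptions: versions are modeled as integer tuples, the architecture and guest OS strings are hypothetical labels, and the check does not cover the network interface card requirements or GPUDirect RDMA.

```python
from typing import Tuple

# Architectures of the SR-IOV GPUs listed under "Supported vGPUs" above.
GDS_ARCHITECTURES = {"Ampere", "Hopper", "Ada Lovelace"}
GDS_GUEST_OS = {"Ubuntu 22.04 LTS", "Ubuntu 20.04 LTS"}


def gds_supported(
    gds_release: Tuple[int, int, int],
    architecture: str,
    guest_os: str,
    open_kernel_modules: bool,
    guest_kernel: Tuple[int, int],
) -> bool:
    """Apply the GPUDirect Storage limitations from this section."""
    # Linux only, and only the Ubuntu releases listed above.
    if guest_os not in GDS_GUEST_OS or architecture not in GDS_ARCHITECTURES:
        return False
    if gds_release >= (1, 7, 2):
        if architecture == "Ampere":
            return False
        # Hopper and Ada Lovelace need the guest driver based on the open GPU kernel modules.
        return open_kernel_modules
    # Releases before 1.7.2 require a guest kernel earlier than 6.6.
    return guest_kernel < (6, 6)
```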

NVIDIA NVSwitch On-Chip Memory Fabric#

NVIDIA NVSwitch on-chip memory fabric enables peer-to-peer vGPU communication within a single node over the NVLink fabric. It is supported only on a subset of hardware platforms, vGPUs, hypervisor software releases, and guest OS releases.

For information about using the NVSwitch on-chip memory fabric, refer to the Fabric Manager for NVIDIA NVSwitch Systems User Guide.

Hardware Platforms

NVIDIA HGX H800 8-GPU baseboard

NVIDIA HGX H100 8-GPU baseboard

NVIDIA HGX A100 8-GPU baseboard

Supported vGPUs

Only the following C-series time-sliced vGPUs that are allocated all of the physical GPU’s framebuffer are supported:

NVIDIA H800

NVIDIA H200 HGX

NVIDIA H100 SXM5

NVIDIA H20

NVIDIA A800

NVIDIA A100 HGX

| Board | vGPU |
|---|---|
| NVIDIA H800 SXM5 80GB | H800XM-80C |
| NVIDIA H200 SXM5 141GB | H200X-141C |
| NVIDIA H100 SXM5 80GB | H100XM-80C |
| NVIDIA H20 SXM5 141GB | H20X-141C |
| NVIDIA H20 SXM5 96GB | H20-96C |

| Board | vGPU |
|---|---|
| NVIDIA A800 HGX 80GB | A800DX-80C |
| NVIDIA A100 HGX 80GB | A100DX-80C |
| NVIDIA A100 HGX 40GB | A100X-40C |

Hypervisor Releases

Consult the documentation from your hypervisor vendor for information about which generic Linux with KVM hypervisor software releases support NVIDIA NVSwitch on-chip memory fabric.

All supported Red Hat Enterprise Linux KVM releases support NVIDIA NVSwitch on-chip memory fabric.

On the Ubuntu hypervisor, NVSwitch is not supported.

The earliest VMware vSphere Hypervisor (ESXi) release that supports NVIDIA NVSwitch on-chip memory fabric depends on the GPU architecture.

| GPU Architecture | Earliest Supported VMware vSphere Hypervisor (ESXi) Release |
|---|---|
| NVIDIA Hopper | VMware vSphere Hypervisor (ESXi) 8 update 2 |
| NVIDIA Ampere | VMware vSphere Hypervisor (ESXi) 8 update 1 |

Guest OS Releases

Linux only. NVIDIA NVSwitch on-chip memory fabric is not supported on Windows.

Limitations

Only time-sliced vGPUs are supported. MIG-backed vGPUs are not supported.

On the Ubuntu hypervisor, NVSwitch is not supported.

GPU passthrough is not supported.

SLI is not supported.

All vGPUs communicating peer-to-peer must be assigned to the same VM.

On GPUs based on the NVIDIA Hopper GPU architecture, multicast is not supported.
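Several of these conditions can be checked before a VM is configured. The following Python sketch is illustrative only: the hypervisor labels and the `VGpu` record are hypothetical, ESXi releases are modeled as (major, update) tuples such as (8, 2) for ESXi 8 update 2, and the hardware platform and full-framebuffer requirements are left to the tables above.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

# Earliest ESXi release per GPU architecture, as (major, update), from the table above.
ESXI_MINIMUM = {"Hopper": (8, 2), "Ampere": (8, 1)}


@dataclass
class VGpu:
    vm_name: str
    mig_backed: bool


def nvswitch_p2p_allowed(
    hypervisor: str,
    architecture: str,
    esxi_release: Optional[Tuple[int, int]],
    linux_guest: bool,
    peers: Sequence[VGpu],
) -> bool:
    """Check a subset of the NVSwitch on-chip memory fabric requirements above."""
    if hypervisor == "vsphere":
        minimum = ESXI_MINIMUM.get(architecture)
        if minimum is None or esxi_release is None or esxi_release < minimum:
            return False
    elif hypervisor != "rhel-kvm":
        # Ubuntu is not supported; for generic Linux with KVM, consult the vendor.
        return False
    # Linux guests only, and MIG-backed vGPUs are not supported.
    if not linux_guest or any(v.mig_backed for v in peers):
        return False
    # All vGPUs communicating peer-to-peer must be assigned to the same VM.
    return len({v.vm_name for v in peers}) == 1
```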

NVLink Multicast#

NVLink multicast support requires that unified memory is enabled. For more information about enabling unified memory, refer to the Enabling Unified Memory for a vGPU section.

Only full-sized, time-sliced NVIDIA vGPUs (C-Series) support NVLink multicast.

| Board | vGPU |
|---|---|
| NVIDIA HGX H800 | NVIDIA H800XM-80C |
| NVIDIA HGX H200 | NVIDIA H200X-141C |
| NVIDIA HGX H100 | NVIDIA H100XM-80C |
| NVIDIA H20 HGX 141GB | H20X-141C |
| HGX H20 96GB | NVIDIA H20-96C |
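The two prerequisites for NVLink multicast can be expressed as one predicate. This Python sketch is an illustration only. It drops the "NVIDIA" prefix that some vGPU type names in the table carry, and it takes the unified memory state as an input rather than querying it. The Enabling Unified Memory for a vGPU section describes how unified memory is actually enabled.

```python
# Full-sized, time-sliced vGPU types listed in the NVLink multicast table above.
MULTICAST_VGPU_TYPES = {"H800XM-80C", "H200X-141C", "H100XM-80C", "H20X-141C", "H20-96C"}


def nvlink_multicast_available(vgpu_type: str, unified_memory_enabled: bool) -> bool:
    """NVLink multicast needs a listed vGPU type and unified memory enabled on the vGPU."""
    return unified_memory_enabled and vgpu_type in MULTICAST_VGPU_TYPES
```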

vGPUs that Support Unified Memory#

All MIG-backed vGPUs are supported on GPUs that support the Multi-Instance GPU (MIG) feature. Of the time-sliced NVIDIA vGPUs (C-Series), only those that are allocated all of the physical GPU’s frame buffer, on physical GPUs that support unified memory, are supported.

| Board | vGPU |
|---|---|
| NVIDIA L40 | L40-48C |
| NVIDIA L40S | L40S-48C |
| | L20-48C |
| NVIDIA L4 | L4-24C |
| NVIDIA L2 | L2-24C |
| NVIDIA RTX 6000 Ada | RTX 6000 Ada-48C |
| NVIDIA RTX 5880 Ada | RTX 5880 Ada-48C |
| NVIDIA RTX 5000 Ada | RTX 5000 Ada-32C |

| Board | vGPU |
|---|---|
| | |
| NVIDIA A800 PCIe 40GB active-cooled | |
| NVIDIA A800 HGX 80GB | |
| | |
| NVIDIA A100 HGX 80GB | |
| NVIDIA A100 PCIe 40GB | |
| NVIDIA A100 HGX 40GB | |
| NVIDIA A40 | A40-48C |
| | |
| NVIDIA A16 | A16-16C |
| NVIDIA A10 | A10-24C |
| NVIDIA RTX A6000 | A6000-48C |
| NVIDIA RTX A5500 | A5500-24C |
| NVIDIA RTX A5000 | A5000-24C |

| Board | vGPU |
|---|---|
| NVIDIA H800 PCIe 94GB (H800 NVL) | |
| NVIDIA H800 PCIe 80GB | |
| NVIDIA H800 SXM5 80GB | |
| NVIDIA H200 SXM5 | |
| NVIDIA H200 NVL | |
| NVIDIA H100 PCIe 94GB (H100 NVL) | |
| NVIDIA H100 SXM5 94GB | |
| NVIDIA H100 PCIe 80GB | |
| NVIDIA H100 SXM5 80GB | |
| NVIDIA H100 SXM5 64GB | |
| NVIDIA H20 SXM5 141GB | |
| NVIDIA H20 SXM5 96GB | |

Limitations#

Total Frame Buffer for vGPUs is Less Than the Total Frame Buffer on the Physical GPU#

The hypervisor uses some of the physical GPU’s frame buffer on behalf of the VM for allocations that the guest OS would otherwise have made in its frame buffer. The frame buffer used by the hypervisor is not available for vGPUs on the physical GPU. In NVIDIA vGPU deployments, the frame buffer for the guest OS is reserved in advance, whereas in bare-metal deployments, the frame buffer for the guest OS is reserved based on the runtime needs of applications.

If error-correcting code (ECC) memory is enabled on a physical GPU that does not have HBM2 memory, the amount of frame buffer usable by vGPUs is further reduced. All types of vGPUs are affected, not just those that support ECC memory.

An additional frame buffer is allocated for dynamic page retirement on all GPUs that support ECC memory and, therefore, dynamic page retirement. The allocated amount is inversely proportional to the maximum number of vGPUs per physical GPU. All GPUs that support ECC memory are affected, even those with HBM2 memory or for which ECC memory is disabled.

The approximate amount of frame buffer that NVIDIA AI Enterprise reserves can be calculated from the following formula:

max-reserved-fb = vgpu-profile-size-in-mb÷16 + 16 + ecc-adjustments + page-retirement-allocation + compression-adjustment

max-reserved-fb - The total amount of reserved frame buffer in Mbytes that is unavailable for vGPUs.

vgpu-profile-size-in-mb - The amount of frame buffer allocated to a single vGPU in Mbytes. This amount depends on the vGPU type. For example, for the T4-16C vGPU type, vgpu-profile-size-in-mb is 16384.

ecc-adjustments - The amount of frame buffer in Mbytes that is not usable by vGPUs when ECC is enabled on a physical GPU that does not have HBM2 memory.

If ECC is enabled on a physical GPU that does not have HBM2 memory, ecc-adjustments is fb-without-ecc÷16, which is equivalent to 64 Mbytes for every Gbyte of frame buffer assigned to the vGPU. fb-without-ecc is the total amount of frame buffer with ECC disabled.

If ECC is disabled or the GPU has HBM2 memory, ecc-adjustments is 0.

page-retirement-allocation - The amount of frame buffer in Mbytes reserved for dynamic page retirement.

On GPUs based on the NVIDIA Maxwell GPU architecture, page-retirement-allocation = 4÷max-vgpus-per-gpu.

On GPUs based on NVIDIA GPU architectures after the Maxwell architecture, page-retirement-allocation = 128÷max-vgpus-per-gpu.

max-vgpus-per-gpu - The maximum number of vGPUs that can be created simultaneously on a physical GPU. This number varies according to the vGPU type. For example, for the T4-16C vGPU type, max-vgpus-per-gpu is 1.

compression-adjustment - The amount of frame buffer in Mbytes that is reserved for the higher compression overhead in vGPU types with 12 Gbytes or more of frame buffer on GPUs based on the Turing architecture. compression-adjustment depends on the vGPU type, as shown in the following table.

| vGPU Type | Compression Adjustment (MB) |
|---|---|
| T4-16C | 28 |
| RTX6000-12C | 32 |
| RTX6000-24C | 104 |
| RTX6000P-12C | 32 |
| RTX6000P-24C | 104 |
| RTX8000-12C | 32 |
| RTX8000-16C | 64 |
| RTX8000-24C | 96 |
| RTX8000-48C | 238 |
| RTX8000P-12C | 32 |
| RTX8000P-16C | 64 |
| RTX8000P-24C | 96 |
| RTX8000P-48C | 238 |

For all other vGPU types, compression-adjustment is 0.
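As a worked example, the formula can be evaluated for the T4-16C vGPU type: 16384 MB of frame buffer, one vGPU per physical GPU, a compression adjustment of 28 MB, and an architecture after Maxwell. The Python sketch below assumes that fb-without-ecc is the vGPU’s frame buffer with ECC disabled (16384 MB here), which matches the "64 Mbytes for every Gbyte" description. It also assumes that the GPU supports ECC memory and does not have HBM2 memory, as is the case for the Tesla T4. Like the formula itself, the result is approximate.

```python
def max_reserved_fb_mb(
    profile_mb: int,
    max_vgpus_per_gpu: int,
    ecc_enabled: bool,
    has_hbm2: bool,
    compression_adjustment_mb: int = 0,
    supports_ecc: bool = True,
    maxwell: bool = False,
) -> float:
    """Approximate frame buffer (MB) that is reserved and unavailable for vGPUs."""
    base = profile_mb / 16 + 16
    # 64 MB per GB of vGPU frame buffer when ECC is on and the GPU lacks HBM2.
    ecc_adjustment = profile_mb / 16 if (ecc_enabled and not has_hbm2) else 0
    # Page retirement applies to every ECC-capable GPU, even with ECC disabled.
    if supports_ecc:
        page_retirement = (4 if maxwell else 128) / max_vgpus_per_gpu
    else:
        page_retirement = 0
    return base + ecc_adjustment + page_retirement + compression_adjustment_mb


# T4-16C: 16384 MB, 1 vGPU per GPU, 28 MB compression adjustment.
print(max_reserved_fb_mb(16384, 1, ecc_enabled=True, has_hbm2=False, compression_adjustment_mb=28))   # 2220.0
print(max_reserved_fb_mb(16384, 1, ecc_enabled=False, has_hbm2=False, compression_adjustment_mb=28))  # 1196.0
```

With ECC enabled, the terms are 1024 + 16 + 1024 + 128 + 28 = 2220 MB. With ECC disabled, the ECC adjustment drops out, but the 128 MB page-retirement allocation still applies, which gives about 1196 MB.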

Single vGPU Benchmark Scores are Lower Than Passthrough GPU#

Description

A single vGPU configured on a physical GPU produces lower benchmark scores than the physical GPU run in passthrough mode.

Aside from performance differences that may be attributed to a vGPU’s smaller frame buffer size, vGPU incorporates a performance balancing feature known as a Frame Rate Limiter (FRL). On vGPUs that use the best-effort scheduler, FRL is enabled. On vGPUs that use the fixed share or equal share scheduler, FRL is disabled.

FRL ensures balanced performance across multiple vGPUs resident on the same physical GPU. The FRL setting is designed to give a good interactive remote graphics experience. Still, it may reduce scores in benchmarks that depend on measuring frame rendering rates compared to the same benchmarks running on a passthrough GPU.

Resolution (VMware vSphere)

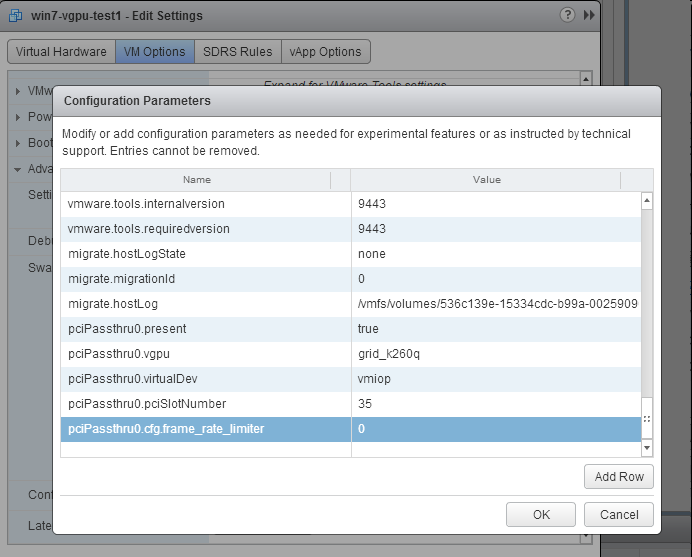

An internal vGPU setting controls FRL. On vGPUs that use the best-effort scheduler, NVIDIA does not validate a vGPU with FRL disabled. Still, for benchmark performance validation, FRL can be temporarily disabled by adding the configuration parameter pciPassthru0.cfg.frame_rate_limiter in the VM’s advanced configuration options.

Note

This setting can only be changed when the VM is powered off.

Select Edit Settings.

In the Edit Settings window, select the VM Options tab.

From the Advanced drop-down list, select Edit Configuration.

In the Configuration Parameters dialog box, click Add Row.

In the Name field, type the parameter pciPassthru0.cfg.frame_rate_limiter.

In the Value field, type 0 and click OK.

With this setting, the VM’s vGPU will run without any frame rate limit. The FRL can be reverted to its default setting by setting pciPassthru0.cfg.frame_rate_limiter to 1 or removing the parameter from the advanced settings.

Resolution (Linux with KVM hypervisors)

An internal vGPU setting controls FRL. On vGPUs that use the best-effort scheduler, NVIDIA does not validate a vGPU with FRL disabled, but for benchmark performance validation, FRL can be temporarily disabled by setting frame_rate_limiter=0 in the vGPU configuration file.

# echo "frame_rate_limiter=0" > /sys/bus/mdev/devices/vgpu-id/nvidia/vgpu_params

For example:

# echo "frame_rate_limiter=0" > /sys/bus/mdev/devices/aa618089-8b16-4d01-a136-25a0f3c73123/nvidia/vgpu_params

The setting takes effect the next time any VM using the given vGPU type is started.

With this setting, the VM’s vGPU will run without any frame rate limit.

The FRL can be reverted to its default setting as follows:

Clear all parameter settings in the vGPU configuration file.

# echo " " > /sys/bus/mdev/devices/vgpu-id/nvidia/vgpu_params

Note

You cannot clear specific parameter settings. If your vGPU configuration file contains other parameter settings that you want to keep, you must reinstate them in the next step.

Set frame_rate_limiter=1 in the vGPU configuration file.

# echo "frame_rate_limiter=1" > /sys/bus/mdev/devices/vgpu-id/nvidia/vgpu_params

If you need to reinstate other parameter settings, include them in the command to set frame_rate_limiter=1. For example:

# echo "frame_rate_limiter=1 disable_vnc=1" > /sys/bus/mdev/devices/aa618089-8b16-4d01-a136-25a0f3c73123/nvidia/vgpu_params
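The vgpu_params file can only be replaced as a whole, so a script that toggles FRL should always write the complete set of parameters in one operation. The following Python sketch does that. The function name is hypothetical, and `sysfs_root` is a parameter so that the function can be tested against a scratch directory. Like the echo commands above, it writes a single space-separated line ending in a newline. On a real host, it must run as root.

```python
from pathlib import Path
from typing import Dict


def write_vgpu_params(mdev_uuid: str, params: Dict[str, int],
                      sysfs_root: str = "/sys/bus/mdev/devices") -> str:
    """Replace all plugin parameters of one vGPU, e.g. {"frame_rate_limiter": 1}."""
    line = " ".join(f"{name}={value}" for name, value in params.items())
    path = Path(sysfs_root) / mdev_uuid / "nvidia" / "vgpu_params"
    # Mirrors: echo "<line>" > /sys/bus/mdev/devices/<vgpu-id>/nvidia/vgpu_params
    path.write_text(line + "\n")
    return line
```

For example, `write_vgpu_params("aa618089-8b16-4d01-a136-25a0f3c73123", {"frame_rate_limiter": 1, "disable_vnc": 1})` writes the same line as the last echo command above.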

User Guide#

Installing the NVIDIA Virtual GPU Manager#

NVIDIA Virtual GPU Manager for Red Hat Enterprise Linux KVM#

This topic assumes you want to set up a single Red Hat Enterprise Linux Kernel-based Virtual Machine (KVM) VM to use NVIDIA vGPU.

Caution

Output from the VM console is unavailable for VMs running vGPU. Before configuring vGPU, ensure you have installed an alternate means of accessing the VM (such as a VNC server).

Follow this sequence of instructions:

Install the Virtual GPU Manager Package for Red Hat Enterprise Linux KVM

Verify the Installation of the NVIDIA AI Enterprise for Red Hat Enterprise Linux KVM

MIG-backed vGPUs only: Configure a GPU for MIG-Backed vGPUs

vGPUs that support SR-IOV only: Prepare the Virtual Function for an NVIDIA vGPU that Supports SR-IOV on a Linux with KVM Hypervisor

Optional: Put a GPU into Mixed-Size Mode

Get the BDF and Domain of a GPU on a Linux with KVM Hypervisor

Create an NVIDIA vGPU on a Linux with KVM Hypervisor

Add one or more vGPUs to a Linux with KVM Hypervisor VM

Optional: Place a vGPU on a Physical GPU in Mixed-Size Mode

Set the vGPU Plugin Parameters on a Linux with KVM Hypervisor

After the process, you can install the NVIDIA vGPU (C-Series) Driver for your guest OS and license any NVIDIA AI Enterprise-licensed products you use.
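The "Get the BDF and Domain" step produces a PCI address that later steps use in sysfs paths. One common way to list PCI devices is `virsh nodedev-list --cap pci`, which prints libvirt node device names such as pci_0000_41_00_0. The following Python sketch converts such a name into the domain:bus:slot.function form. It then builds the `create` path of the kernel’s mediated-device (mdev) sysfs interface, which is the same interface behind the vgpu_params paths on this page. The vGPU type ID nvidia-558 is a hypothetical example. Some releases and hypervisors may use a different vGPU creation interface, so follow the linked procedure for your release.

```python
import re

_NODEDEV = re.compile(
    r"^pci_(?P<domain>[0-9a-f]{4})_(?P<bus>[0-9a-f]{2})_(?P<slot>[0-9a-f]{2})_(?P<function>[0-7])$"
)


def nodedev_to_pci_address(name: str) -> str:
    """Convert a libvirt node device name, e.g. 'pci_0000_41_00_0', to '0000:41:00.0'."""
    match = _NODEDEV.match(name.strip().lower())
    if match is None:
        raise ValueError(f"not a libvirt PCI node device name: {name!r}")
    return "{domain}:{bus}:{slot}.{function}".format(**match.groupdict())


def mdev_create_path(pci_address: str, vgpu_type_id: str) -> str:
    """sysfs file that creates a vGPU (mediated device) when a UUID is written to it."""
    return f"/sys/bus/pci/devices/{pci_address}/mdev_supported_types/{vgpu_type_id}/create"


print(nodedev_to_pci_address("pci_0000_41_00_0"))  # 0000:41:00.0
```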

NVIDIA Virtual GPU Manager for Ubuntu#

Caution

Output from the VM console is unavailable for VMs running vGPU. Before configuring vGPU, ensure you have installed an alternate means of accessing the VM (such as a VNC server).

Follow this sequence of instructions to set up a single Ubuntu VM to use NVIDIA vGPU.

Install the NVIDIA Virtual GPU Manager for Ubuntu

MIG-backed vGPUs only: Configure a GPU for MIG-Backed vGPUs

Get the BDF and Domain of a GPU on a Linux with KVM Hypervisor

vGPUs that support SR-IOV only: Prepare the Virtual Function for an NVIDIA vGPU that Supports SR-IOV on a Linux with KVM Hypervisor

Optional: Put a GPU Into Mixed-Size Mode

Create an NVIDIA vGPU on a Linux with KVM Hypervisor

Add one or more vGPUs to a Linux with KVM Hypervisor VM

Optional: Place a vGPU on a Physical GPU in Mixed-Size Mode

Set the vGPU Plugin Parameters on a Linux with KVM Hypervisor

After the process, you can install the NVIDIA vGPU (C-Series) Driver for your guest OS and license any NVIDIA AI Enterprise-licensed products you use.

NVIDIA Virtual GPU Manager for VMware vSphere#

You can use the NVIDIA Virtual GPU Manager for VMware vSphere to set up a VMware vSphere VM to use NVIDIA vGPU.

Note

Some servers, for example, the Dell R740, do not configure SR-IOV capability if the SR-IOV SBIOS setting is disabled on the server. If you are using the Tesla T4 GPU with VMware vSphere on such a server, you must ensure that the SR-IOV SBIOS setting is enabled on the server.

However, with any server hardware, do not enable SR-IOV in VMware vCenter Server for the Tesla T4 GPU. If SR-IOV is enabled in VMware vCenter Server for T4, the GPU’s status is listed as needing a reboot. You can ignore this status message.

Requirements for Configuring NVIDIA vGPU in a DRS Cluster

You can configure a VM with NVIDIA vGPU on an ESXi host in a VMware Distributed Resource Scheduler (DRS) cluster. However, to ensure that the cluster’s automation level supports VMs configured with NVIDIA vGPU, you must set the automation level to Partially Automated or Manual.

For more information about these settings, refer to Edit Cluster Settings in the VMware documentation.

Administering MIG-Backed NVIDIA vGPUs#

Architecture#

A MIG-backed vGPU is a vGPU that resides on a GPU instance in a MIG-capable physical GPU. Each MIG-backed vGPU resident on a GPU has exclusive access to the GPU instance’s engines, including the compute and video decode engines.

Processes that run on a MIG-backed vGPU run in parallel with processes running on other vGPUs on the same GPU. In other words, processes on all vGPUs resident on a physical GPU run simultaneously.

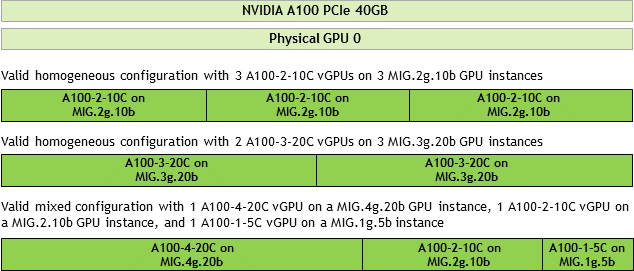

Configurations on a Single GPU#

NVIDIA vGPU supports homogeneous and mixed MIG-backed virtual GPUs based on the underlying GPU instance configuration.

For example, an NVIDIA A100 PCIe 40GB card has one physical GPU and can support several types of virtual GPU. The figure shows examples of valid homogeneous and mixed MIG-backed virtual GPU configurations on NVIDIA A100 PCIe 40GB.

- A valid homogeneous configuration with 3 A100-2-10C vGPUs on 3 MIG 2g.10gb GPU instances
- A valid homogeneous configuration with 2 A100-3-20C vGPUs on 2 MIG 3g.20gb GPU instances
- A valid mixed configuration with 1 A100-4-20C vGPU on a MIG 4g.20gb GPU instance, 1 A100-2-10C vGPU on a MIG 2g.10gb GPU instance, and 1 A100-1-5C vGPU on a MIG 1g.5gb GPU instance
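You can reason about configurations like these with simple slice arithmetic. On an A100 40GB GPU, each GPU instance profile consumes a fixed share of the GPU's 7 compute slices and 8 memory slices. The Python sketch below derives those shares from the SM counts and memory sizes in the nvidia-smi mig -lgip listing in Creating GPU Instances on a MIG-Enabled GPU.

The sketch also assumes that MIG-backed vGPU type names follow the pattern of the examples above, so that A100-2-10C runs on a 2g.10gb instance. The check is necessary but not sufficient: the hardware also imposes placement rules that the sketch does not model. The function names are hypothetical.

```python
import re
from collections import Counter

# Per-profile (compute slices, memory slices, maximum instances) for an A100 40GB
# GPU, derived from the SM counts (14 per slice), memory sizes (about 4.95 GiB per
# slice), and Free/Total columns of the nvidia-smi mig -lgip listing.
A100_40GB_PROFILES = {
    "1g.5gb": (1, 1, 7),
    "2g.10gb": (2, 2, 3),
    "3g.20gb": (3, 4, 2),
    "4g.20gb": (4, 4, 1),
    "7g.40gb": (7, 8, 1),
}
TOTAL_COMPUTE_SLICES = 7
TOTAL_MEMORY_SLICES = 8


def profile_for_vgpu_type(vgpu_type: str) -> str:
    """Map a MIG-backed type such as 'A100-2-10C' to its profile '2g.10gb'.

    Assumes the <board>-<compute slices>-<frame buffer GB>C naming used above.
    """
    match = re.fullmatch(r"[A-Za-z0-9]+-(\d+)-(\d+)C", vgpu_type)
    if match is None:
        raise ValueError(f"not a MIG-backed vGPU type name: {vgpu_type!r}")
    compute, memory_gb = match.groups()
    return f"{compute}g.{memory_gb}gb"


def fits_slice_budget(vgpu_types: list[str]) -> bool:
    """Necessary (not sufficient) check that a vGPU mix fits one A100 40GB GPU."""
    counts = Counter(profile_for_vgpu_type(t) for t in vgpu_types)
    compute = memory = 0
    for profile, count in counts.items():
        compute_slices, memory_slices, max_instances = A100_40GB_PROFILES[profile]
        if count > max_instances:
            return False
        compute += compute_slices * count
        memory += memory_slices * count
    return compute <= TOTAL_COMPUTE_SLICES and memory <= TOTAL_MEMORY_SLICES
```

All three configurations listed above pass this check, whereas four A100-2-10C vGPUs fail because only three 2g.10gb instances are available.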

Configuring the NVIDIA Virtual GPU Manager#

Modifying a MIG-Backed vGPU’s Configuration#

If compute instances weren’t created within the GPU instances when the GPU was configured for MIG-backed vGPUs, you can add the compute instances for an individual vGPU from within the guest VM. If you want to replace the compute instances created when the GPU was configured for MIG-backed vGPUs, you can delete them before adding the compute instances from within the guest VM.

Ensure that the following prerequisites are met:

You have root user privileges in the guest VM.

Other processes, such as CUDA applications, monitoring applications, or the nvidia-smi command, do not use the GPU instance.

Perform this task in a guest VM command shell.

Open a command shell as the root user in the guest VM. You can use a secure shell (SSH) on all supported hypervisors. Individual hypervisors may provide additional means for logging in. For details, refer to the documentation for your hypervisor.

List the available GPU instances.

    $ nvidia-smi mig -lgi
    +----------------------------------------------------+
    | GPU instances:                                     |
    | GPU   Name          Profile  Instance   Placement  |
    |                       ID       ID       Start:Size |
    |====================================================|
    |   0  MIG 2g.10gb       0        0          0:8     |
    +----------------------------------------------------+
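If you script this step, you can extract the GPU instance IDs from the table output. The Python sketch below assumes the row layout shown above. Because nvidia-smi's text format is designed for people, the regular expression may need adjusting for other driver releases. The GpuInstance type and both functions are hypothetical helpers.

```python
import re
import subprocess
from typing import NamedTuple


class GpuInstance(NamedTuple):
    gpu: int
    profile_name: str
    profile_id: int
    instance_id: int
    placement_start: int
    placement_size: int


# Data rows look like "|   0  MIG 2g.10gb       0        0          0:8     |".
_LGI_ROW = re.compile(r"^\|\s*(\d+)\s+MIG\s+(\S+)\s+(\d+)\s+(\d+)\s+(\d+):(\d+)\s*\|$")


def parse_lgi(output: str) -> list[GpuInstance]:
    """Extract one GpuInstance per data row of nvidia-smi mig -lgi output."""
    instances = []
    for line in output.splitlines():
        match = _LGI_ROW.match(line.strip())
        if match:
            gpu, name, profile_id, instance_id, start, size = match.groups()
            instances.append(GpuInstance(int(gpu), name, int(profile_id),
                                         int(instance_id), int(start), int(size)))
    return instances


def read_lgi() -> list[GpuInstance]:
    """Run the listing command (requires root and the NVIDIA driver) and parse it."""
    result = subprocess.run(["nvidia-smi", "mig", "-lgi"],
                            capture_output=True, text=True, check=True)
    return parse_lgi(result.stdout)
```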

Optional: If compute instances were created when the GPU was configured for MIG-backed vGPUs that you no longer require, delete them.

    $ nvidia-smi mig -dci -ci compute-instance-id -gi gpu-instance-id

compute-instance-id - The ID of the compute instance that you want to delete.

gpu-instance-id - The ID of the GPU instance from which you want to delete the compute instance.

Note

This command fails if another process is using the GPU instance. In this situation, stop all processes using the GPU instance and retry the command.

This example deletes compute instance 0 from GPU instance 0 on GPU 0.

    $ nvidia-smi mig -dci -ci 0 -gi 0
    Successfully destroyed compute instance ID 0 from GPU 0 GPU instance ID 0

List the compute instance profiles that are available for your GPU instance.

    $ nvidia-smi mig -lcip

This example shows that one MIG 2g.10gb compute instance or two MIG 1c.2g.10gb compute instances can be created within the GPU instance.

    $ nvidia-smi mig -lcip
    +-------------------------------------------------------------------------------+
    | Compute instance profiles:                                                    |
    | GPU     GPU       Name            Profile  Instances   Exclusive     Shared   |
    |       Instance                      ID     Free/Total     SM     DEC  ENC  OFA|
    |          ID                                                      CE   JPEG    |
    |===============================================================================|
    |   0      0       MIG 1c.2g.10gb     0        2/2         14       1    0     0|
    |                                                                   2      0    |
    +-------------------------------------------------------------------------------+
    |   0      0       MIG 2g.10gb        1*       1/1         28       1    0     0|
    |                                                                   2      0    |
    +-------------------------------------------------------------------------------+

Create the compute instances that you need within the available GPU instance. Run the following command to create each compute instance individually.

    $ nvidia-smi mig -cci compute-instance-profile-id -gi gpu-instance-id

compute-instance-profile-id - The compute instance profile ID that specifies the compute instance.

gpu-instance-id - The GPU instance ID that specifies the GPU instance within which you want to create the compute instance.

Note

This command fails if another process is using the GPU instance. In this situation, stop all GPU processes and retry the command.

This example creates a MIG 2g.10gb compute instance on GPU instance 0.

    $ nvidia-smi mig -cci 1 -gi 0
    Successfully created compute instance ID 0 on GPU 0 GPU instance ID 0 using profile MIG 2g.10gb (ID 1)

This example creates two MIG 1c.2g.10gb compute instances on GPU instance 0 by running the same command twice.

    $ nvidia-smi mig -cci 0 -gi 0
    Successfully created compute instance ID 0 on GPU 0 GPU instance ID 0 using profile MIG 1c.2g.10gb (ID 0)
    $ nvidia-smi mig -cci 0 -gi 0
    Successfully created compute instance ID 1 on GPU 0 GPU instance ID 0 using profile MIG 1c.2g.10gb (ID 0)
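The guest-side sequence above first optionally deletes unneeded compute instances, then creates each new compute instance with its own -cci call. The Python sketch below expresses that sequence as a list of command invocations.

The function names are hypothetical. Take the profile ID from nvidia-smi mig -lcip; in the listing above, it is 0 for MIG 1c.2g.10gb and 1 for MIG 2g.10gb. The run_all helper stops at the first failure, which is what happens if another process is still using the GPU instance.

```python
import subprocess


def guest_compute_instance_commands(
    gpu_instance_id: int,
    profile_id: int,
    count: int,
    delete_ci_ids: tuple[int, ...] = (),
) -> list[list[str]]:
    """Build nvidia-smi invocations that re-partition one GPU instance.

    Deletions come first (the optional step), then one -cci call per compute
    instance, because the guide creates each compute instance individually.
    """
    if count < 1:
        raise ValueError("count must be at least 1")
    gi = str(gpu_instance_id)
    commands = [
        ["nvidia-smi", "mig", "-dci", "-ci", str(ci), "-gi", gi] for ci in delete_ci_ids
    ]
    commands += [
        ["nvidia-smi", "mig", "-cci", str(profile_id), "-gi", gi] for _ in range(count)
    ]
    return commands


def run_all(commands: list[list[str]]) -> None:
    """Run each command in order; check=True raises on the first failure."""
    for argv in commands:
        subprocess.run(argv, check=True)
```

For example, guest_compute_instance_commands(0, 0, 2, delete_ci_ids=(0,)) reproduces the delete-then-create-twice sequence shown in the examples.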

Verify that the compute instances were created within the GPU instance. Use the nvidia-smi command for this purpose. This example confirms that a MIG 2g.10gb compute instance was created on GPU instance 0.

    $ nvidia-smi
    Mon Mar 25 19:01:24 2024
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 550.54.16    Driver Version: 550.54.16    CUDA Version: 12.3     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |                               |                      |               MIG M. |
    |===============================+======================+======================|
    |   0  GRID A100X-2-10C     On  | 00000000:00:08.0 Off |                   On |
    | N/A   N/A    P0    N/A /  N/A |   1058MiB / 10235MiB |     N/A      Default |
    |                               |                      |              Enabled |
    +-------------------------------+----------------------+----------------------+

    +-----------------------------------------------------------------------------+
    | MIG devices:                                                                |
    +------------------+----------------------+-----------+-----------------------+
    | GPU  GI  CI  MIG |         Memory-Usage |        Vol|         Shared        |
    |      ID  ID  Dev |           BAR1-Usage | SM     Unc| CE  ENC  DEC  OFA  JPG|
    |                  |                      |        ECC|                       |
    |==================+======================+===========+=======================|
    |  0    0   0   0  |   1058MiB / 10235MiB | 28      0 |  2   0    1    0    0 |
    |                  |      0MiB /  4096MiB |           |                       |
    +------------------+----------------------+-----------+-----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                                  |
    |  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
    |        ID   ID                                                   Usage      |
    |=============================================================================|
    |  No running processes found                                                 |
    +-----------------------------------------------------------------------------+

This example confirms that two MIG 1c.2g.10gb compute instances were created on GPU instance 0.

    $ nvidia-smi
    Mon Mar 25 19:01:24 2024
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 550.54.16    Driver Version: 550.54.16    CUDA Version: 12.3     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |                               |                      |               MIG M. |
    |===============================+======================+======================|
    |   0  GRID A100X-2-10C     On  | 00000000:00:08.0 Off |                   On |
    | N/A   N/A    P0    N/A /  N/A |   1058MiB / 10235MiB |     N/A      Default |
    |                               |                      |              Enabled |
    +-------------------------------+----------------------+----------------------+

    +-----------------------------------------------------------------------------+
    | MIG devices:                                                                |
    +------------------+----------------------+-----------+-----------------------+
    | GPU  GI  CI  MIG |         Memory-Usage |        Vol|         Shared        |
    |      ID  ID  Dev |           BAR1-Usage | SM     Unc| CE  ENC  DEC  OFA  JPG|
    |                  |                      |        ECC|                       |
    |==================+======================+===========+=======================|
    |  0    0   0   0  |   1058MiB / 10235MiB | 14      0 |  2   0    1    0    0 |
    |                  |      0MiB /  4096MiB |           |                       |
    +------------------+                      +-----------+-----------------------+
    |  0    0   1   1  |                      | 14      0 |  2   0    1    0    0 |
    |                  |                      |           |                       |
    +------------------+----------------------+-----------+-----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                                  |
    |  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
    |        ID   ID                                                   Usage      |
    |=============================================================================|
    |  No running processes found                                                 |
    +-----------------------------------------------------------------------------+

Configuring a GPU for MIG-Backed vGPUs#

To support GPU instances with NVIDIA vGPU, a GPU must be configured with MIG mode enabled, and GPU instances must be created and configured on the physical GPU. Optionally, you can create compute instances within the GPU instances. If you don’t create compute instances within the GPU instances, they can be added later for individual vGPUs from within the guest VMs.

Ensure that the following prerequisites are met:

The NVIDIA Virtual GPU Manager is installed on the hypervisor host.

You have root user privileges on your hypervisor host machine.

You have determined which GPU instances correspond to the vGPU types of the MIG-backed vGPUs you will create.

Other processes, such as CUDA applications, monitoring applications, or the nvidia-smi command, do not use the GPU.

To configure a GPU for MIG-backed vGPUs, follow these instructions:

Enable MIG mode for a GPU.

Note

For VMware vSphere, you only need to enable MIG mode. VMware vSphere creates the GPU instances, and one compute instance is created automatically in the VM after the VM is booted and the guest driver is installed.

Create a GPU instance on a MIG-enabled GPU.

Optional: Create a compute instance in a GPU instance.

After configuring a GPU for MIG-backed vGPUs, create the vGPUs you need and add them to their VMs.

Enabling MIG Mode for a GPU#

Perform this task in your hypervisor command shell.

Open a command shell as the root user on your hypervisor host machine. You can use a secure shell (SSH) on all supported hypervisors. Individual hypervisors may provide additional means for logging in. For details, refer to the documentation for your hypervisor.

Determine whether MIG mode is enabled. Use the nvidia-smi command for this purpose. By default, MIG mode is disabled. This example shows that MIG mode is disabled on GPU 0.

Note

In the output from nvidia-smi, the NVIDIA A100 HGX 40GB GPU is referred to as A100-SXM4-40GB.

    $ nvidia-smi -i 0
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 550.54.16    Driver Version: 550.54.16    CUDA Version: 12.3     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |                               |                      |               MIG M. |
    |===============================+======================+======================|
    |   0  A100-SXM4-40GB      On   | 00000000:36:00.0 Off |                    0 |
    | N/A   29C    P0    62W / 400W |      0MiB / 40537MiB |      6%      Default |
    |                               |                      |             Disabled |
    +-------------------------------+----------------------+----------------------+

If MIG mode is disabled, enable it.

    $ nvidia-smi -i [gpu-ids] -mig 1

gpu-ids - A comma-separated list of GPU indexes, PCI bus IDs, or UUIDs that specify the GPUs on which you want to enable MIG mode. If gpu-ids is omitted, MIG mode is enabled on all GPUs on the system.

This example enables MIG mode on GPU 0.

    $ nvidia-smi -i 0 -mig 1
    Enabled MIG Mode for GPU 00000000:36:00.0
    All done.
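The enable and disable commands differ only in the value passed to -mig, so one builder can cover both. The Python sketch below (mig_mode_command is a hypothetical helper) follows the gpu-ids rules described above: identifiers are joined with commas, and omitting them applies the change to every GPU. The sketch does not handle the pending-enable case. If another process is using the GPU, the command still fails.

```python
from typing import Optional, Sequence


def mig_mode_command(enable: bool, gpu_ids: Optional[Sequence[str]] = None) -> list[str]:
    """Build nvidia-smi [-i gpu-ids] -mig 1|0.

    gpu_ids may mix GPU indexes, PCI bus IDs, and UUIDs. When it is None, the
    -i option is omitted, which applies the change to all GPUs in the system.
    """
    argv = ["nvidia-smi"]
    if gpu_ids is not None:
        if not gpu_ids or any(not g or "," in g for g in gpu_ids):
            raise ValueError("gpu_ids must be non-empty identifiers without commas")
        argv += ["-i", ",".join(gpu_ids)]
    argv += ["-mig", "1" if enable else "0"]
    return argv
```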

Note

If another process is using the GPU, this command fails and displays a warning message that MIG mode for the GPU is in the pending enable state. In this situation, stop all GPU processes and retry the command.

VMware vSphere ESXi with GPUs based only on the NVIDIA Ampere architecture: Reboot the hypervisor host. If you are using a different hypervisor or GPUs based on the NVIDIA Hopper GPU architecture or a later architecture, omit this step.

Query the GPUs on which you enabled MIG mode to confirm that MIG mode is enabled. This example queries GPU 0 for the PCI bus ID and MIG mode in comma-separated values (CSV) format.

    $ nvidia-smi -i 0 --query-gpu=pci.bus_id,mig.mode.current --format=csv
    pci.bus_id, mig.mode.current
    00000000:36:00.0, Enabled
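Because the query uses --format=csv, a script can check the result instead of a person reading it. The Python sketch below parses the header and rows with the standard csv module; skipinitialspace handles the space after each comma. The function names are hypothetical.

```python
import csv
import io


def parse_mig_mode_csv(output: str) -> dict[str, str]:
    """Map PCI bus ID to current MIG mode from --format=csv query output."""
    reader = csv.reader(io.StringIO(output), skipinitialspace=True)
    header = next(reader)
    bus_col = header.index("pci.bus_id")
    mode_col = header.index("mig.mode.current")
    return {row[bus_col]: row[mode_col] for row in reader if row}


def mig_enabled_everywhere(output: str) -> bool:
    """True only if at least one GPU was reported and every one shows Enabled."""
    modes = parse_mig_mode_csv(output)
    return bool(modes) and all(mode == "Enabled" for mode in modes.values())
```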

Creating GPU Instances on a MIG-Enabled GPU#

Note

If you are using VMware vSphere, omit this task. VMware vSphere creates the GPU instances automatically.

Perform this task in your hypervisor command shell.

Open a command shell as the root user on your hypervisor host machine if necessary.

List the GPU instance profiles that are available on your GPU. When you create a GPU instance, you must specify its profile by ID, not by name.

    $ nvidia-smi mig -lgip
    +--------------------------------------------------------------------------+
    | GPU instance profiles:                                                   |
    | GPU   Name            ID    Instances   Memory    P2P    SM    DEC   ENC |
    |                            Free/Total    GiB             CE    JPEG  OFA |
    |==========================================================================|
    |   0  MIG 1g.5gb       19       7/7        4.95     No    14      0     0 |
    |                                                           1      0     0 |
    +--------------------------------------------------------------------------+
    |   0  MIG 2g.10gb      14       3/3        9.90     No    28      1     0 |
    |                                                           2      0     0 |
    +--------------------------------------------------------------------------+
    |   0  MIG 3g.20gb       9       2/2       19.79     No    42      2     0 |
    |                                                           3      0     0 |
    +--------------------------------------------------------------------------+
    |   0  MIG 4g.20gb       5       1/1       19.79     No    56      2     0 |
    |                                                           4      0     0 |
    +--------------------------------------------------------------------------+
    |   0  MIG 7g.40gb       0       1/1       39.59     No    98      5     0 |
    |                                                           7      1     1 |
    +--------------------------------------------------------------------------+

Create the GPU instances corresponding to the vGPU types of the MIG-backed vGPUs you will create.


    $ nvidia-smi mig -cgi gpu-instance-profile-ids

gpu-instance-profile-ids - A comma-separated list of GPU instance profile IDs that specify the GPU instances you want to create.

This example creates two GPU instances of type 2g.10gb with profile ID 14.

    $ nvidia-smi mig -cgi 14,14
    Successfully created GPU instance ID 5 on GPU 2 using profile MIG 2g.10gb (ID 14)
    Successfully created GPU instance ID 3 on GPU 2 using profile MIG 2g.10gb (ID 14)
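Because nvidia-smi mig -cgi accepts profile IDs rather than names, a script can translate names by reading the -lgip listing. The Python sketch below assumes the row layout of the listing shown above. It checks requested counts against the Free column only, so placement rules can still cause creation to fail. The function names are hypothetical.

```python
import re

# Data rows look like "|   0  MIG 2g.10gb      14       3/3        9.90 ...".
_PROFILE_ROW = re.compile(r"^\|\s*\d+\s+MIG\s+(\S+)\s+(\d+)\s+(\d+)/(\d+)\s")


def parse_lgip(output: str) -> dict[str, tuple[int, int]]:
    """Map profile name (for example '2g.10gb') to (profile ID, free instances)."""
    profiles = {}
    for line in output.splitlines():
        match = _PROFILE_ROW.match(line.strip())
        if match:
            name, profile_id, free, _total = match.groups()
            profiles[name] = (int(profile_id), int(free))
    return profiles


def create_gpu_instances_command(names: list[str],
                                 profiles: dict[str, tuple[int, int]]) -> list[str]:
    """Translate profile names into the ID list that nvidia-smi mig -cgi expects."""
    for name in set(names):
        if name not in profiles:
            raise KeyError(f"profile {name!r} is not offered by this GPU")
        if names.count(name) > profiles[name][1]:
            raise ValueError(f"not enough free {name} instances")
    ids = ",".join(str(profiles[name][0]) for name in names)
    return ["nvidia-smi", "mig", "-cgi", ids]
```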

Optional: Creating Compute Instances in a GPU Instance#

Creating compute instances within GPU instances is optional. If you don’t create compute instances within the GPU instances, they can be added later for individual vGPUs from within the guest VMs.

Note

If you are using VMware vSphere, omit this task. One compute instance is automatically created after the VM is booted and the guest driver is installed.

Perform this task in your hypervisor command shell.

Open a command shell as the root user on your hypervisor host machine if necessary.

List the available GPU instances.

    $ nvidia-smi mig -lgi
    +----------------------------------------------------+
    | GPU instances:                                     |
    | GPU   Name          Profile  Instance   Placement  |
    |                       ID       ID       Start:Size |
    |====================================================|
    |   2  MIG 2g.10gb      14        3          0:2     |
    +----------------------------------------------------+
    |   2  MIG 2g.10gb      14        5          4:2     |
    +----------------------------------------------------+

Create the compute instances that you need within each GPU instance.

    $ nvidia-smi mig -cci -gi gpu-instance-ids

gpu-instance-ids - A comma-separated list of GPU instance IDs that specify the GPU instances within which you want to create the compute instances.

Caution

To avoid an inconsistent state between a guest VM and the hypervisor host, do not create compute instances from the hypervisor on a GPU instance on which an active guest VM is running. Instead, create the compute instances from within the guest VM as explained in Modifying a MIG-Backed vGPU’s Configuration.

This example creates a compute instance on each of GPU instances 3 and 5.

    $ nvidia-smi mig -cci -gi 3,5
    Successfully created compute instance on GPU 0 GPU instance ID 1 using profile ID 2
    Successfully created compute instance on GPU 0 GPU instance ID 2 using profile ID 2
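Automation can enforce the caution above by refusing to build the host-side command for GPU instances that back a running guest VM. In the Python sketch below, the caller must supply the set of busy GPU instance IDs, for example from its own inventory of running VMs. How to obtain that set is outside the scope of this guide, and the function name is hypothetical.

```python
from typing import Iterable


def host_create_compute_instances_command(
    gpu_instance_ids: Iterable[int], gis_with_running_vms: Iterable[int] = ()
) -> list[str]:
    """Build nvidia-smi mig -cci -gi <ids> for the hypervisor host.

    Refuses GPU instances that back a running guest VM; for those, create the
    compute instances from within the guest VM instead.
    """
    ids = sorted(set(gpu_instance_ids))
    if not ids:
        raise ValueError("at least one GPU instance ID is required")
    busy = sorted(set(ids) & set(gis_with_running_vms))
    if busy:
        raise RuntimeError(
            f"GPU instances {busy} have active guest VMs; create compute instances from the guest"
        )
    return ["nvidia-smi", "mig", "-cci", "-gi", ",".join(map(str, ids))]
```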

Verify that the compute instances were created within each GPU instance.

    $ nvidia-smi
    +-----------------------------------------------------------------------------+
    | MIG devices:                                                                |
    +------------------+----------------------+-----------+-----------------------+
    | GPU  GI  CI  MIG |         Memory-Usage |        Vol|         Shared        |
    |      ID  ID  Dev |           BAR1-Usage | SM     Unc| CE  ENC  DEC  OFA  JPG|
    |                  |                      |        ECC|                       |
    |==================+======================+===========+=======================|
    |  2    3   0   0  |      0MiB /  9984MiB | 28      0 |  2   0    1    0    0 |
    |                  |      0MiB / 16383MiB |           |                       |
    +------------------+----------------------+-----------+-----------------------+
    |  2    5   0   1  |      0MiB /  9984MiB | 28      0 |  2   0    1    0    0 |
    |                  |      0MiB / 16383MiB |           |                       |
    +------------------+----------------------+-----------+-----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                                  |
    |  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
    |        ID   ID                                                   Usage      |
    |=============================================================================|

Note

Additional compute instances created in a VM are destroyed when the VM is shut down or rebooted. After the shutdown or reboot, only one compute instance remains in the VM. This compute instance is created automatically after installing the NVIDIA vGPU (C-Series) Driver.

Disabling MIG Mode for one or more GPUs#

If a GPU you want to use for time-sliced vGPUs or GPU passthrough has previously been configured for MIG-backed vGPUs, disable MIG mode on the GPU.

Ensure that the following prerequisites are met:

The NVIDIA Virtual GPU Manager is installed on the hypervisor host.

You have root user privileges on your hypervisor host machine.

Other processes, such as CUDA applications, monitoring applications, or the nvidia-smi command, do not use the GPU.

Perform this task in your hypervisor command shell.

Open a command shell as the root user on your hypervisor host machine. You can use a secure shell (SSH) on all supported hypervisors. Individual hypervisors may provide additional means for logging in. For details, refer to the documentation for your hypervisor.

Determine whether MIG mode is disabled. Use the nvidia-smi command for this purpose. By default, MIG mode is disabled, but it might have been enabled previously. This example shows that MIG mode is enabled on GPU 0.

Note

In the output from nvidia-smi, the NVIDIA A100 HGX 40GB GPU is referred to as A100-SXM4-40GB.

    $ nvidia-smi -i 0
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 550.54.16    Driver Version: 550.54.16    CUDA Version: 12.3     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |                               |                      |               MIG M. |
    |===============================+======================+======================|
    |   0  A100-SXM4-40GB      Off  | 00000000:36:00.0 Off |                    0 |
    | N/A   29C    P0    62W / 400W |      0MiB / 40537MiB |      6%      Default |
    |                               |                      |              Enabled |
    +-------------------------------+----------------------+----------------------+

If MIG mode is enabled, disable it.

    $ nvidia-smi -i [gpu-ids] -mig 0

gpu-ids - A comma-separated list of GPU indexes, PCI bus IDs, or UUIDs that specify the GPUs on which you want to disable MIG mode. If gpu-ids is omitted, MIG mode is disabled on all GPUs in the system.

This example disables MIG mode on GPU 0.

    $ sudo nvidia-smi -i 0 -mig 0
    Disabled MIG Mode for GPU 00000000:36:00.0
    All done.

Confirm that MIG mode was disabled. Use the nvidia-smi command for this purpose. This example shows that MIG mode is disabled on GPU 0.

    $ nvidia-smi -i 0
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 550.54.16    Driver Version: 550.54.16    CUDA Version: 12.3     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |                               |                      |               MIG M. |
    |===============================+======================+======================|
    |   0  A100-SXM4-40GB      Off  | 00000000:36:00.0 Off |                    0 |
    | N/A   29C    P0    62W / 400W |      0MiB / 40537MiB |      6%      Default |
    |                               |                      |             Disabled |
    +-------------------------------+----------------------+----------------------+

Monitoring MIG-backed vGPU Activity#

Note

MIG-backed vGPU activity cannot be monitored on GPUs based on the NVIDIA Ampere GPU architecture because the required hardware feature is absent.

To monitor MIG-backed vGPU activity across multiple vGPUs, run nvidia-smi vgpu with the --gpm-metrics ID-list option.

ID-list - A comma-separated list of integer IDs that specify the statistics to monitor, as shown in the following table. The table also shows the name of the output column under which each statistic is reported.

| Statistic | ID | Column |
|---|---|---|
| Graphics activity | 1 | gract |
| Streaming multiprocessor (SM) activity | 2 | smutil |
| SM occupancy | 3 | smocc |
| Integer activity | 4 | intutil |
| Tensor activity | 5 | |
| Double-precision fused multiply-add (DFMA) tensor activity | 6 | |
| Half matrix multiplication and accumulation (HMMA) tensor activity | 7 | |
| Integer matrix multiplication and accumulation (IMMA) tensor activity | 9 | |
| Dynamic random-access memory (DRAM) activity | 10 | |
| Double-precision 64-bit floating-point (FP64) activity | 11 | |
| Single-precision 32-bit floating-point (FP32) activity | 12 | |
| Half-precision 16-bit floating-point (FP16) activity | 13 | |

Each reported percentage is the percentage of the physical GPU’s capacity that a vGPU is using. For example, a vGPU that uses 20% of the GPU’s DRAM capacity will report 20%.

For each vGPU, the specified statistics are reported once every second.

To modify the reporting frequency, use the -l or --loop option.

To limit monitoring to a subset of the platform’s GPUs, use the -i or --id option to select one or more GPUs.
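Metric IDs are easy to mistype, so a script might map readable names to the IDs in the table. The Python sketch below uses hypothetical short keys for the statistics. It also makes two assumptions that you should verify against the nvidia-smi help output on your host:

- -i takes comma-separated GPU identifiers, as nvidia-smi -i does elsewhere in this guide.
- -l takes an interval in seconds.

```python
from typing import Optional, Sequence

# Statistic IDs from the table above (there is no ID 8). The short keys are
# hypothetical labels chosen for this sketch, not nvidia-smi names.
GPM_METRIC_IDS = {
    "graphics": 1,
    "sm_activity": 2,
    "sm_occupancy": 3,
    "integer": 4,
    "tensor": 5,
    "dfma_tensor": 6,
    "hmma_tensor": 7,
    "imma_tensor": 9,
    "dram": 10,
    "fp64": 11,
    "fp32": 12,
    "fp16": 13,
}


def gpm_command(metrics: Sequence[str],
                gpu_ids: Optional[Sequence[str]] = None,
                loop_seconds: Optional[int] = None) -> list[str]:
    """Build an nvidia-smi vgpu --gpm-metrics invocation from metric keys."""
    unknown = [m for m in metrics if m not in GPM_METRIC_IDS]
    if not metrics or unknown:
        raise ValueError(f"empty metric list or unknown keys: {unknown}")
    ids = ",".join(str(GPM_METRIC_IDS[m]) for m in metrics)
    argv = ["nvidia-smi", "vgpu", "--gpm-metrics", ids]
    if gpu_ids:
        argv += ["-i", ",".join(gpu_ids)]  # assumed comma-separated, as for nvidia-smi -i
    if loop_seconds is not None:
        argv += ["-l", str(loop_seconds)]  # assumed to be an interval in seconds
    return argv
```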

The following example reports graphics activity, SM activity, SM occupancy, and integer activity for one powered-on vGPU VM in which one application is running.

    [root@vgpu ~]# nvidia-smi vgpu --gpm-metrics 1,2,3,4
    # gpu        vgpu  mig_id  gi_id  ci_id  gract  smutil  smocc  intutil
    # Idx          Id     Idx    Idx    Idx      %       %      %        %
        0  3251634249       0      2      0      -       -      -        -
        0  3251634249       0      2      0     99      97     26       13
        0  3251634249       0      2      0     99      96     23       13
        0  3251634249       0      2      0     99      97     27       13

No activity is reported when no vGPUs are active on the hypervisor host.

    [root@vgpu ~]# nvidia-smi vgpu --gpm-metrics 1,2,3,4
    # gpu        vgpu  mig_id  gi_id  ci_id  gract  smutil  smocc  intutil
    # Idx          Id     Idx    Idx    Idx      %       %      %        %
        0           -       -      -      -      -       -      -        -
        0           -       -      -      -      -       -      -        -
        0           -       -      -      -      -       -      -        -
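To feed these samples into a monitoring pipeline, you can turn each data row into a record keyed by the column names from the first header line. The Python sketch below treats "-" (no activity) as None and skips the prompt line. It assumes the whitespace-separated layout shown in the examples, and the function name is hypothetical.

```python
from typing import Optional


def parse_gpm_output(output: str) -> list[dict[str, Optional[int]]]:
    """Turn nvidia-smi vgpu --gpm-metrics text into one dict per sample row.

    Column names come from the first '#' header line; '-' becomes None.
    """
    columns: list[str] = []
    rows = []
    for line in output.splitlines():
        fields = line.split()
        if not fields:
            continue
        if fields[0] == "#":
            if not columns:  # the first header line holds the column names
                columns = fields[1:]
            continue
        if not columns or len(fields) != len(columns):
            continue  # skip the command echo and malformed lines
        rows.append({c: (None if v == "-" else int(v)) for c, v in zip(columns, fields)})
    return rows
```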

Installing NVIDIA AI Enterprise Software Components#

Installing the NVIDIA AI Enterprise Software Components Using Kubernetes#

Perform this task if you are using one of the following combinations of guest operating system and container platform:

Ubuntu with Kubernetes

Ensure that the following prerequisites are met:

- Kubernetes is installed in the VM.
- The NVIDIA vGPU (C-Series) Virtual GPU Manager is installed.
- An NVIDIA vGPU License Server with licenses is installed.
- You have generated your NGC API key for accessing the NVIDIA AI Enterprise software in the NGC Catalog by using the URL provided to you by NVIDIA.

Transforming Container Images for AI and Data Science Applications and Frameworks into Kubernetes Pods#

The AI and data science applications and frameworks are distributed as NGC container images through the NGC private registry. If you are using Kubernetes or Red Hat OpenShift, you must transform each image that you want to use into a Kubernetes pod. Each container image contains the entire user-space software stack required to run the application or framework: the CUDA libraries, cuDNN, any required Magnum IO components, TensorRT, and the framework.
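As an illustration of that transformation, the Python sketch below builds a minimal Pod manifest as JSON, which kubectl apply -f accepts. It makes two assumptions:

- GPUs are exposed through the nvidia.com/gpu extended resource, as the NVIDIA GPU Operator's device plugin does.
- An image pull secret for the NGC registry already exists.

The image path and secret name in the example are placeholders for your own values, and ngc_pod_manifest is a hypothetical helper.

```python
import json


def ngc_pod_manifest(name: str, image: str, pull_secret: str, gpus: int = 1) -> dict:
    """Build a minimal Pod that runs one NGC container image on NVIDIA GPUs."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "imagePullSecrets": [{"name": pull_secret}],
            "containers": [
                {
                    "name": name,
                    "image": image,
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Placeholder values: substitute the image path from the NGC Catalog and
    # the name of your image pull secret.
    manifest = ngc_pod_manifest("nvaie-app", "nvcr.io/<org>/<image>:<tag>", "ngc-secret")
    print(json.dumps(manifest, indent=2))
```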

Installing the NVIDIA AI Enterprise Application Software and Deep Learning Framework Components Using Docker#

NVIDIA AI Enterprise Application Software is available through the NGC Catalog and identifiable by the NVIDIA AI Enterprise Supported label.

The container image for each application or framework contains the entire user-space software stack required to run it, namely, the CUDA libraries, cuDNN, any required Magnum IO components, TensorRT, and the framework.

Ensure that you have completed the following tasks in the NGC Private Registry User Guide:

Perform this task from the VM.

Obtain the Docker pull command for the NVIDIA AI Enterprise application software that you want to use from the NGC Catalog.
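Before a docker pull from the NGC private registry can succeed, Docker must be logged in to nvcr.io. The Python sketch below shows one way to perform both steps. It follows the NGC convention of logging in with the literal user name $oauthtoken and the API key as the password. It also assumes the key is in an NGC_API_KEY environment variable, which is a choice made for this sketch. Take the image path from the pull command that you obtain from the NGC Catalog.

```python
import os
import subprocess

NGC_REGISTRY = "nvcr.io"


def docker_login_command() -> list[str]:
    # NGC registry logins use the literal user name "$oauthtoken"; the API key
    # is passed on stdin so that it does not appear in the process list.
    return ["docker", "login", NGC_REGISTRY, "--username", "$oauthtoken", "--password-stdin"]


def docker_pull_command(image: str) -> list[str]:
    if not image.startswith(NGC_REGISTRY + "/"):
        raise ValueError(f"expected an {NGC_REGISTRY} image path, got {image!r}")
    return ["docker", "pull", image]


def login_and_pull(image: str) -> None:
    """Log in with the key from NGC_API_KEY, then pull the image."""
    api_key = os.environ["NGC_API_KEY"]
    subprocess.run(docker_login_command(), input=api_key, text=True, check=True)
    subprocess.run(docker_pull_command(image), check=True)
```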

Installing the NVIDIA GPU Operator Using a Bash Shell Script#

A bash shell script for installing the NVIDIA GPU Operator with the NVIDIA vGPU (C-Series) Driver is available for download from NVIDIA NGC.

Before performing this task, ensure that the following prerequisites are met:

A client configuration token has been generated for the client on which the script will install the NVIDIA vGPU (C-Series) Driver.

The NVIDIA NGC user’s API key to create the image pull secret has been generated.

The following environment variables are set:

NGC_API_KEY - The NVIDIA NGC user’s API key for creating the image pull secret. For example:

    export NGC_API_KEY="RLh1zerCiG4wPGWWt4Tyj2VMyd7T8MnDyCT95pygP5VJFv8en4eLvdXVZzjm"

NGC_USER_EMAIL - The email address of the NVIDIA NGC user to be used for creating the image pull secret. For example:

    export NGC_USER_EMAIL="ada.lovelace@example.com"
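A short preflight check can catch a missing variable before the installer runs. The Python sketch below checks only that both variables are set and that the email address is plausibly formed. It does not validate the API key with NGC, and the function name is hypothetical.

```python
import os
import re

REQUIRED_VARS = ("NGC_API_KEY", "NGC_USER_EMAIL")


def missing_prerequisites(env=None) -> list[str]:
    """Return human-readable problems with the installer's environment variables."""
    env = os.environ if env is None else env
    problems = [f"{name} is not set" for name in REQUIRED_VARS if not env.get(name)]
    email = env.get("NGC_USER_EMAIL", "")
    # A loose sanity check only; it does not confirm the address with NGC.
    if email and not re.fullmatch(r"[^@\s]+@[^@\s]+\.[^@\s]+", email):
        problems.append("NGC_USER_EMAIL does not look like an email address")
    return problems
```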

Download the NVIDIA GPU Operator - Deploy Installer Script from NVIDIA NGC.

Ensure that the file access modes of the script allow the owner to execute the script.

Change to the directory that contains the script.

    # cd script-directory

script-directory - The directory to which you downloaded the script in the previous step.

Determine the current file access modes of the script.

    # ls -l gpu-operator-nvaie.sh

If necessary, grant execute permission to the owner of the script.

    # chmod u+x gpu-operator-nvaie.sh

Copy the client configuration token to the directory that contains the script.

Rename the client configuration token to client_configuration_token.tok. The client configuration token is generated with a file name that includes a time stamp: client_configuration_token_mm-dd-yyyy-hh-mm-ss.tok.

Start the script from the directory that contains it, specifying the option to install the NVIDIA vGPU (C-Series) Driver.

    # bash gpu-operator-nvaie.sh install
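You can combine the copy and rename steps for the client configuration token into one helper that picks the newest token when several have been downloaded. The Python sketch below assumes the time stamp has the form month-day-year-hour-minute-second with a four-digit year. It copies rather than moves the file, so the original download stays intact. The function name is hypothetical.

```python
import re
import shutil
from pathlib import Path

# Downloaded tokens carry a time stamp, for example
# client_configuration_token_03-25-2024-19-01-24.tok (illustrative name).
_TOKEN = re.compile(
    r"client_configuration_token_(\d{2})-(\d{2})-(\d{4})-(\d{2})-(\d{2})-(\d{2})\.tok"
)
TARGET_NAME = "client_configuration_token.tok"


def stage_newest_token(download_dir: Path, script_dir: Path) -> Path:
    """Copy the most recent time-stamped token into script_dir as TARGET_NAME."""
    candidates = []
    for path in download_dir.iterdir():
        match = _TOKEN.fullmatch(path.name)
        if match:
            month, day, year, hour, minute, second = match.groups()
            # Reorder mm-dd-yyyy-hh-mm-ss into a key that sorts chronologically.
            candidates.append(((year, month, day, hour, minute, second), path))
    if not candidates:
        raise FileNotFoundError(f"no client configuration token found in {download_dir}")
    newest = max(candidates)[1]
    target = script_dir / TARGET_NAME
    shutil.copyfile(newest, target)
    return target
```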

Virtual GPU Types for Supported GPUs#

NVIDIA Ada Lovelace GPU Architecture#

Physical GPUs per board: 1

The maximum number of vGPUs per board is the product of the maximum number of vGPUs per GPU and the number of physical GPUs per board.

Required license edition: NVIDIA AI Enterprise

Intended use cases:

vGPUs with more than 4096 MB of frame buffer: Training Workloads

vGPUs with 4096 MB of frame buffer: Inference Workloads

These vGPU types support a single display with a fixed maximum resolution.

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| L40-48C | 49152 | 1 | 1 | 3840x2400 | 1 |
| L40-24C | 24576 | 2 | 2 | 3840x2400 | 1 |
| L40-16C | 16384 | 3 | 2 | 3840x2400 | 1 |
| L40-12C | 12288 | 4 | 4 | 3840x2400 | 1 |
| L40-8C | 8192 | 6 | 4 | 3840x2400 | 1 |
| L40-6C | 6144 | 8 | 8 | 3840x2400 | 1 |
| L40-4C | 4096 | 12 [5] | 8 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| L40S-48C | 49152 | 1 | 1 | 3840x2400 | 1 |
| L40S-24C | 24576 | 2 | 2 | 3840x2400 | 1 |
| L40S-16C | 16384 | 3 | 2 | 3840x2400 | 1 |
| L40S-12C | 12288 | 4 | 4 | 3840x2400 | 1 |
| L40S-8C | 8192 | 6 | 4 | 3840x2400 | 1 |
| L40S-6C | 6144 | 8 | 8 | 3840x2400 | 1 |
| L40S-4C | 4096 | 12 [5] | 8 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| L20-48C | 49152 | 1 | 1 | 3840x2400 | 1 |
| L20-24C | 24576 | 2 | 2 | 3840x2400 | 1 |
| L20-16C | 16384 | 3 | 2 | 3840x2400 | 1 |
| L20-12C | 12288 | 4 | 4 | 3840x2400 | 1 |
| L20-8C | 8192 | 6 | 4 | 3840x2400 | 1 |
| L20-6C | 6144 | 8 | 8 | 3840x2400 | 1 |
| L20-4C | 4096 | 12 [5] | 8 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| L20-48C | 49152 | 1 | 1 | 3840x2400 | 1 |
| L20-24C | 24576 | 2 | 2 | 3840x2400 | 1 |
| L20-16C | 16384 | 3 | 2 | 3840x2400 | 1 |
| L20-12C | 12288 | 4 | 4 | 3840x2400 | 1 |
| L20-8C | 8192 | 6 | 4 | 3840x2400 | 1 |
| L20-6C | 6144 | 8 | 8 | 3840x2400 | 1 |
| L20-4C | 4096 | 12 [5] | 8 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| L4-24C | 24576 | 1 | 1 | 3840x2400 | 1 |
| L4-12C | 12288 | 2 | 2 | 3840x2400 | 1 |
| L4-8C | 8192 | 3 | 2 | 3840x2400 | 1 |
| L4-6C | 6144 | 4 | 4 | 3840x2400 | 1 |
| L4-4C | 4096 | 6 | 4 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| L2-24C | 24576 | 1 | 1 | 3840x2400 | 1 |
| L2-12C | 12288 | 2 | 2 | 3840x2400 | 1 |
| L2-8C | 8192 | 3 | 2 | 3840x2400 | 1 |
| L2-6C | 6144 | 4 | 4 | 3840x2400 | 1 |
| L2-4C | 4096 | 6 | 4 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| RTX 6000 Ada-48C | 49152 | 1 | 1 | 3840x2400 | 1 |
| RTX 6000 Ada-24C | 24576 | 2 | 2 | 3840x2400 | 1 |
| RTX 6000 Ada-16C | 16384 | 3 | 2 | 3840x2400 | 1 |
| RTX 6000 Ada-12C | 12288 | 4 | 4 | 3840x2400 | 1 |
| RTX 6000 Ada-8C | 8192 | 6 | 4 | 3840x2400 | 1 |
| RTX 6000 Ada-6C | 6144 | 8 | 8 | 3840x2400 | 1 |
| RTX 6000 Ada-4C | 4096 | 12 [5] | 8 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| RTX 5880 Ada-48C | 49152 | 1 | 1 | 3840x2400 | 1 |
| RTX 5880 Ada-24C | 24576 | 2 | 2 | 3840x2400 | 1 |
| RTX 5880 Ada-16C | 16384 | 3 | 2 | 3840x2400 | 1 |
| RTX 5880 Ada-12C | 12288 | 4 | 4 | 3840x2400 | 1 |
| RTX 5880 Ada-8C | 8192 | 6 | 4 | 3840x2400 | 1 |
| RTX 5880 Ada-6C | 6144 | 8 | 8 | 3840x2400 | 1 |
| RTX 5880 Ada-4C | 4096 | 12 [5] | 8 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| RTX 5000 Ada-32C | 32768 | 1 | 1 | 3840x2400 | 1 |
| RTX 5000 Ada-16C | 16384 | 2 | 2 | 3840x2400 | 1 |
| RTX 5000 Ada-8C | 8192 | 4 | 4 | 3840x2400 | 1 |
| RTX 5000 Ada-4C | 4096 | 8 | 8 | 3840x2400 | 1 |
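The time-sliced type names in these tables end with a number followed by `C`. The listed frame buffer is that number multiplied by 1024 MB; for example, RTX 5000 Ada-32C has 32768 MB. The helper below derives the frame buffer from a type name. It assumes this naming pattern, which is inferred from the rows here and is not an official naming specification. `frame_buffer_mb` is a hypothetical function name.

```python
import re

# Assumed naming pattern (inferred from the tables): "<board>-<N>C", where N is
# the vGPU frame buffer in GB. The greedy board group lets names with spaces,
# such as "RTX 5000 Ada", match correctly.
_TIME_SLICED_NAME = re.compile(r"^(?P<board>.+)-(?P<gb>\d+)C$")


def frame_buffer_mb(vgpu_type: str) -> int:
    """Derive the frame buffer in MB from a time-sliced C-series type name."""
    match = _TIME_SLICED_NAME.match(vgpu_type)
    if match is None:
        raise ValueError(f"unrecognized time-sliced vGPU type: {vgpu_type!r}")
    return int(match.group("gb")) * 1024


print(frame_buffer_mb("RTX 5000 Ada-32C"))  # 32768
print(frame_buffer_mb("A10-6C"))  # 6144
```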

NVIDIA Ampere GPU Architecture#

Physical GPUs per board: 1 (with the exception of NVIDIA A16)

The maximum number of vGPUs per board is the product of the maximum number of vGPUs per GPU and the number of physical GPUs per board.
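As a worked example of this rule, consider the A40-8C row of the A40 table. It allows 6 vGPUs per GPU in equal-size mode, and the board has 1 physical GPU, so the per-board maximum is 6. The 4-GPU case below is hypothetical and only illustrates the multiplication. `max_vgpus_per_board` is an illustrative helper name.

```python
def max_vgpus_per_board(max_vgpus_per_gpu: int, physical_gpus_per_board: int) -> int:
    """Maximum vGPUs per board = max vGPUs per GPU x physical GPUs per board."""
    return max_vgpus_per_gpu * physical_gpus_per_board


# A40-8C: 6 vGPUs per GPU (equal-size mode), 1 physical GPU per board.
print(max_vgpus_per_board(6, 1))  # 6
# Hypothetical board with 4 physical GPUs and the same per-GPU limit.
print(max_vgpus_per_board(6, 4))  # 24
```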

Required license edition: NVIDIA AI Enterprise

Intended use cases:

- vGPUs with more than 4096 MB of frame buffer: Training Workloads
- vGPUs with 4096 MB of frame buffer: Inference Workloads
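The use-case guidance above can be expressed as a simple rule on frame buffer size. In the sketch below, `intended_use_case` is a hypothetical helper. The guidance covers only frame buffers of 4096 MB and larger, so the sketch rejects smaller values rather than guessing.

```python
def intended_use_case(frame_buffer_mb: int) -> str:
    """Map a C-series vGPU frame buffer size (MB) to its listed intended use case."""
    if frame_buffer_mb > 4096:
        return "Training"
    if frame_buffer_mb == 4096:
        return "Inference"
    # The listed guidance does not cover frame buffers smaller than 4096 MB.
    raise ValueError(f"no intended use case listed for {frame_buffer_mb} MB")


print(intended_use_case(24576))  # Training (for example, A40-24C)
print(intended_use_case(4096))  # Inference (for example, A40-4C)
```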

These vGPU types support a single display with a fixed maximum resolution.

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| A40-48C | 49152 | 1 | 1 | 3840x2400 | 1 |
| A40-24C | 24576 | 2 | 2 | 3840x2400 | 1 |
| A40-16C | 16384 | 3 | 2 | 3840x2400 | 1 |
| A40-12C | 12288 | 4 | 4 | 3840x2400 | 1 |
| A40-8C | 8192 | 6 | 4 | 3840x2400 | 1 |
| A40-6C | 6144 | 8 | 8 | 3840x2400 | 1 |
| A40-4C | 4096 | 12 [5] | 8 | 3840x2400 | 1 |

Physical GPUs per board: 4

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| A10-24C | 24576 | 1 | 1 | 3840x2400 | 1 |
| A10-12C | 12288 | 2 | 2 | 3840x2400 | 1 |
| A10-8C | 8192 | 3 | 2 | 3840x2400 | 1 |
| A10-6C | 6144 | 4 | 4 | 3840x2400 | 1 |
| A10-4C | 4096 | 6 | 4 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| RTXA6000-48C | 49152 | 1 | 1 | 3840x2400 | 1 |
| RTXA6000-24C | 24576 | 2 | 2 | 3840x2400 | 1 |
| RTXA6000-16C | 16384 | 3 | 2 | 3840x2400 | 1 |
| RTXA6000-12C | 12288 | 4 | 4 | 3840x2400 | 1 |
| RTXA6000-8C | 8192 | 6 | 4 | 3840x2400 | 1 |
| RTXA6000-6C | 6144 | 8 | 8 | 3840x2400 | 1 |
| RTXA6000-4C | 4096 | 12 [5] | 8 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| RTXA5500-24C | 24576 | 1 | 1 | 3840x2400 | 1 |
| RTXA5500-12C | 12288 | 2 | 2 | 3840x2400 | 1 |
| RTXA5500-8C | 8192 | 3 | 2 | 3840x2400 | 1 |
| RTXA5500-6C | 6144 | 4 | 4 | 3840x2400 | 1 |
| RTXA5500-4C | 4096 | 6 | 4 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| RTXA5000-24C | 24576 | 1 | 1 | 3840x2400 | 1 |
| RTXA5000-12C | 12288 | 2 | 2 | 3840x2400 | 1 |
| RTXA5000-8C | 8192 | 3 | 2 | 3840x2400 | 1 |
| RTXA5000-6C | 6144 | 4 | 4 | 3840x2400 | 1 |
| RTXA5000-4C | 4096 | 6 | 4 | 3840x2400 | 1 |

MIG-Backed and Time-Sliced NVIDIA vGPU (C-Series) for the NVIDIA Ampere GPU Architecture#

Physical GPUs per board: 1

The maximum number of vGPUs per board is the product of the maximum number of vGPUs per GPU and the number of physical GPUs per board.

Required license edition: NVIDIA AI Enterprise

MIG-Backed NVIDIA vGPU (C-Series)

For details on GPU instance profiles, refer to the NVIDIA Multi-Instance GPU User Guide.

Time-Sliced NVIDIA vGPU (C-Series)

Intended use cases:

- vGPUs with more than 4096 MB of frame buffer: Training Workloads
- vGPUs with 4096 MB of frame buffer: Inference Workloads

These vGPU types support a single display with a fixed maximum resolution.

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU | Slices per vGPU | Compute Instances per vGPU | Corresponding GPU Instance Profile |
|---|---|---|---|---|---|
| A800D-7-80C | 81920 | 1 | 7 | 7 | MIG 7g.80gb |
| A800D-4-40C | 40960 | 1 | 4 | 4 | MIG 4g.40gb |
| A800D-3-40C | 40960 | 2 | 3 | 3 | MIG 3g.40gb |
| A800D-2-20C | 20480 | 3 | 2 | 2 | MIG 2g.20gb |
| A800D-1-20C [3] | 20480 | 4 | 1 | 1 | MIG 1g.20gb |
| A800D-1-10C | 10240 | 7 | 1 | 1 | MIG 1g.10gb |
| A800D-1-10CME [3] | 10240 | 1 | 1 | 1 | MIG 1g.10gb+me |
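In the MIG-backed table, each vGPU type name appears to encode its GPU instance profile. `A800D-<slices>-<GB>C` corresponds to `MIG <slices>g.<GB>gb`, and a trailing `ME` corresponds to the `+me` profile. The sketch below implements that mapping. It is inferred from the table rows, not from an official naming rule, and `gpu_instance_profile` is a hypothetical helper. Footnote markers such as `[3]` should be stripped from the name before it is passed in.

```python
import re

# Inferred pattern: "<board>-<slices>-<GB>C" with an optional "ME" suffix that
# maps to the "+me" GPU instance profile. Time-sliced names such as
# "A800D-80C" have only one numeric part and therefore do not match.
_MIG_NAME = re.compile(r"^(?P<board>.+?)-(?P<slices>\d+)-(?P<gb>\d+)C(?P<me>ME)?$")


def gpu_instance_profile(vgpu_type: str) -> str:
    """Return the MIG GPU instance profile implied by a MIG-backed type name."""
    match = _MIG_NAME.match(vgpu_type)
    if match is None:
        raise ValueError(f"not a MIG-backed C-series type name: {vgpu_type!r}")
    profile = f"MIG {match.group('slices')}g.{match.group('gb')}gb"
    return profile + "+me" if match.group("me") else profile


print(gpu_instance_profile("A800D-3-40C"))  # MIG 3g.40gb
print(gpu_instance_profile("A800D-1-10CME"))  # MIG 1g.10gb+me
```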

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| A800D-80C | 81920 | 1 | 1 | 3840x2400 | 1 |
| A800D-40C | 40960 | 2 | 2 | 3840x2400 | 1 |
| A800D-20C | 20480 | 4 | 4 | 3840x2400 | 1 |
| A800D-16C | 16384 | 5 | 4 | 3840x2400 | 1 |
| A800D-10C | 10240 | 8 | 8 | 3840x2400 | 1 |
| A800D-8C | 8192 | 10 | 8 | 3840x2400 | 1 |
| A800D-4C | 4096 | 20 | 16 | 3840x2400 | 1 |
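When sizing a deployment, a common question is which time-sliced type is the smallest one that still covers a workload's frame buffer needs, and how many of that type fit on one GPU. The sketch below answers this from the A800D rows above. The `required_mb` input and the `smallest_fitting_type` helper are hypothetical and for illustration only. Real sizing also depends on factors these tables do not capture.

```python
from typing import Optional, Tuple

# (vGPU type, frame buffer in MB, max vGPUs per GPU in equal-size mode),
# copied from the A800D time-sliced table.
A800D_TIME_SLICED = [
    ("A800D-80C", 81920, 1),
    ("A800D-40C", 40960, 2),
    ("A800D-20C", 20480, 4),
    ("A800D-16C", 16384, 5),
    ("A800D-10C", 10240, 8),
    ("A800D-8C", 8192, 10),
    ("A800D-4C", 4096, 20),
]


def smallest_fitting_type(required_mb: int) -> Optional[Tuple[str, int]]:
    """Return (type, max vGPUs per GPU) for the smallest type with >= required_mb."""
    candidates = [row for row in A800D_TIME_SLICED if row[1] >= required_mb]
    if not candidates:
        return None  # No single A800D time-sliced type is large enough.
    name, _, max_per_gpu = min(candidates, key=lambda row: row[1])
    return name, max_per_gpu


print(smallest_fitting_type(12000))  # ('A800D-16C', 5)
```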

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU | Slices per vGPU | Compute Instances per vGPU | Corresponding GPU Instance Profile |
|---|---|---|---|---|---|
| A800D-7-80C | 81920 | 1 | 7 | 7 | MIG 7g.80gb |
| A800D-4-40C | 40960 | 1 | 4 | 4 | MIG 4g.40gb |
| A800D-3-40C | 40960 | 2 | 3 | 3 | MIG 3g.40gb |
| A800D-2-20C | 20480 | 3 | 2 | 2 | MIG 2g.20gb |
| A800D-1-20C [3] | 20480 | 4 | 1 | 1 | MIG 1g.20gb |
| A800D-1-10C | 10240 | 7 | 1 | 1 | MIG 1g.10gb |
| A800D-1-10CME [3] | 10240 | 1 | 1 | 1 | MIG 1g.10gb+me |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU in Equal-Size Mode | Maximum vGPUs per GPU in Mixed-Size Mode | Maximum Display Resolution [4] | Virtual Displays per vGPU |
|---|---|---|---|---|---|
| A800D-80C | 81920 | 1 | 1 | 3840x2400 | 1 |
| A800D-40C | 40960 | 2 | 2 | 3840x2400 | 1 |
| A800D-20C | 20480 | 4 | 4 | 3840x2400 | 1 |
| A800D-16C | 16384 | 5 | 4 | 3840x2400 | 1 |
| A800D-10C | 10240 | 8 | 8 | 3840x2400 | 1 |
| A800D-8C | 8192 | 10 | 8 | 3840x2400 | 1 |
| A800D-4C | 4096 | 20 | 16 | 3840x2400 | 1 |

| Virtual GPU Type | Frame Buffer (MB) | Maximum vGPUs per GPU | Slices per vGPU | Compute Instances per vGPU | Corresponding GPU Instance Profile |
|---|---|---|---|---|---|
| A800D-7-80C | 81920 | 1 | 7 | 7 | MIG 7g.80gb |
| A800D-4-40C | 40960 | 1 | 4 | 4 | MIG 4g.40gb |
| A800D-3-40C | 40960 | 2 | 3 | 3 | MIG 3g.40gb |
| A800D-2-20C | 20480 | 3 | 2 | 2 | MIG 2g.20gb |
| A800D-1-20C [3] | 20480 | 4 | 1 | 1 | MIG 1g.20gb |
| A800D-1-10C | 10240 | 7 | 1 | 1 | MIG 1g.10gb |

A800D-1-10CME [3] |

10240 |

1 |

1 |