AI Grid Software Reference Design

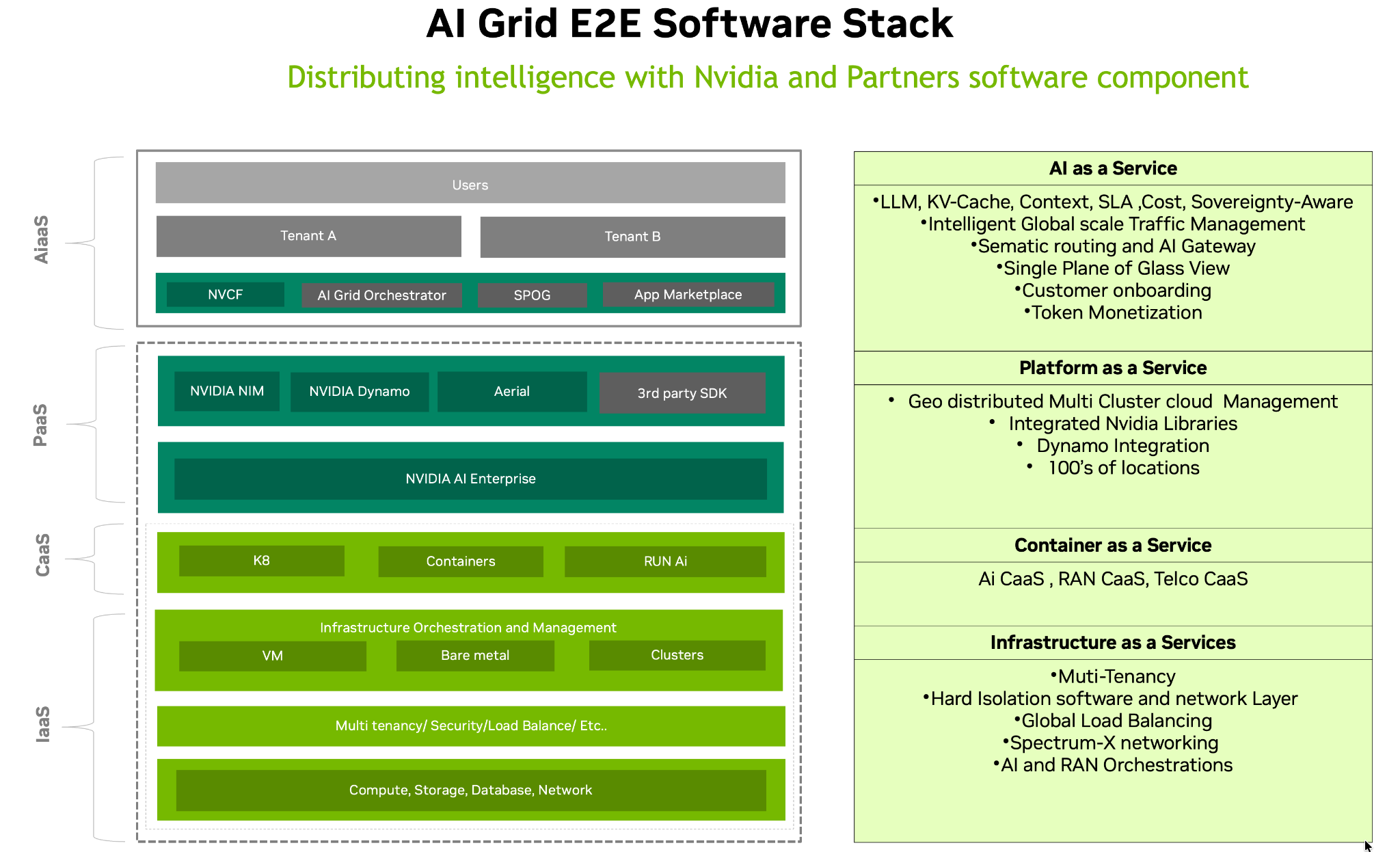

Figure 9. NVIDIA AI Grid end‑to‑end software stack outlining infrastructure, container, platform, and AI‑as‑a‑Service layers that unify NVIDIA and partner software for multi‑tenant AI operations.

The AI Grid end-to-end software stack lets operators deliver AI as a fully managed, multi‑tenant cloud service, spanning everything from bare‑metal infrastructure up to LLM‑powered, multi‑model agentic applications. At the foundation, the Infrastructure-as-a-Service (IaaS) layer standardizes compute, storage, database, and networking, then adds security, load balancing, and cluster lifecycle management on top. Above it, a Containers-as-a-Service (CaaS) layer built on containers and Kubernetes abstracts deployment details, so teams can run AI and telco workloads consistently across VMs, bare metal, and heterogeneous clusters.

The Platform-as-a-Service (PaaS) tier adds NVIDIA AI Enterprise, NVIDIA NIM, NVIDIA Dynamo, and third‑party SDKs, exposing optimized libraries and runtime services that simplify integrating accelerated AI into existing platforms. At the top of the stack, the AIaaS (AI-as-a-Service) layer provides optional deployment through NVIDIA Cloud Functions (NVCF), along with the AI Grid Orchestrator, a single‑pane‑of‑glass management experience, and an application marketplace. Together, these let providers expose SLA‑aware, globally routed, token‑monetized AI services to tenants without requiring each customer to manage the underlying complexity.
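As a rough mental model, the four layers and the components the text assigns to each can be captured as an ordered data structure. The sketch below is purely illustrative: `Layer`, `AI_GRID_STACK`, `layer_of`, and `depends_on` are hypothetical names, not part of any NVIDIA API, and treating the stack as a strict bottom-to-top dependency chain is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    """One tier of the stack; names come from the prose above (hypothetical model)."""
    name: str
    components: tuple[str, ...]


# Ordered bottom to top: each layer is assumed to build on the ones listed before it.
AI_GRID_STACK: tuple[Layer, ...] = (
    Layer("IaaS", ("compute", "storage", "database", "networking",
                   "security", "load balancing", "cluster lifecycle management")),
    Layer("CaaS", ("containers", "Kubernetes")),
    Layer("PaaS", ("NVIDIA AI Enterprise", "NVIDIA NIM", "NVIDIA Dynamo",
                   "third-party SDKs")),
    # NVCF deployment is optional in the AIaaS layer.
    Layer("AIaaS", ("NVCF", "AI Grid Orchestrator",
                    "single-pane-of-glass management", "application marketplace")),
)


def layer_of(component: str) -> str:
    """Return the name of the layer containing `component` (case-insensitive match)."""
    wanted = component.casefold()
    for layer in AI_GRID_STACK:
        if any(c.casefold() == wanted for c in layer.components):
            return layer.name
    raise KeyError(component)


def depends_on(name: str) -> list[str]:
    """Return the names of all layers beneath layer `name`, bottom first.

    Raises ValueError if `name` is not a layer in the model.
    """
    names = [layer.name for layer in AI_GRID_STACK]
    return names[: names.index(name)]


if __name__ == "__main__":
    for layer in AI_GRID_STACK:
        print(f"{layer.name}: {', '.join(layer.components)}")
```

For example, `layer_of("NVIDIA NIM")` returns `"PaaS"`, and `depends_on("AIaaS")` returns `["IaaS", "CaaS", "PaaS"]`, reflecting the idea that tenant-facing AI services sit on top of the platform, container, and infrastructure tiers.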