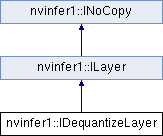

A Dequantize layer in a network definition.

#include <NvInfer.h>

Public Member Functions

| Return type | Member | Description |
| --- | --- | --- |
| int32_t | getAxis () const noexcept | Get the quantization axis. |
| void | setAxis (int32_t axis) noexcept | Set the quantization axis. |
| void | setToType (DataType toType) noexcept | Set the Dequantize layer output type. |
| DataType | getToType () const noexcept | Return the Dequantize layer output type. |

Public Member Functions inherited from nvinfer1::ILayer

| Return type | Member | Description |
| --- | --- | --- |
| LayerType | getType () const noexcept | Return the type of a layer. |
| void | setName (char const *name) noexcept | Set the name of a layer. |
| char const * | getName () const noexcept | Return the name of a layer. |
| int32_t | getNbInputs () const noexcept | Get the number of inputs of a layer. |
| ITensor * | getInput (int32_t index) const noexcept | Get the layer input corresponding to the given index. |
| int32_t | getNbOutputs () const noexcept | Get the number of outputs of a layer. |
| ITensor * | getOutput (int32_t index) const noexcept | Get the layer output corresponding to the given index. |
| void | setInput (int32_t index, ITensor &tensor) noexcept | Replace an input of this layer with a specific tensor. |
| void | setPrecision (DataType dataType) noexcept | Set the preferred or required computational precision of this layer in a weakly-typed network. |
| DataType | getPrecision () const noexcept | Get the computational precision of this layer. |
| bool | precisionIsSet () const noexcept | Whether the computational precision has been set for this layer. |
| void | resetPrecision () noexcept | Reset the computational precision for this layer. |
| void | setOutputType (int32_t index, DataType dataType) noexcept | Set the output type of this layer in a weakly-typed network. |
| DataType | getOutputType (int32_t index) const noexcept | Get the output type of this layer. |
| bool | outputTypeIsSet (int32_t index) const noexcept | Whether the output type has been set for this layer. |
| void | resetOutputType (int32_t index) noexcept | Reset the output type for this layer. |
| void | setMetadata (char const *metadata) noexcept | Set the metadata for this layer. |
| char const * | getMetadata () const noexcept | Get the metadata of the layer. |

Protected Member Functions

| Specifier / type | Member |
| --- | --- |
| virtual | ~IDequantizeLayer () noexcept=default |

Protected Member Functions inherited from nvinfer1::ILayer

| Specifier / type | Member |
| --- | --- |
| virtual | ~ILayer () noexcept=default |

Protected Member Functions inherited from nvinfer1::INoCopy

| Specifier / type | Member |
| --- | --- |
| | INoCopy ()=default |
| virtual | ~INoCopy ()=default |
| | INoCopy (INoCopy const &other)=delete |
| INoCopy & | operator= (INoCopy const &other)=delete |
| | INoCopy (INoCopy &&other)=delete |
| INoCopy & | operator= (INoCopy &&other)=delete |

Protected Attributes

| Type | Member |
| --- | --- |
| apiv::VDequantizeLayer * | mImpl |

Protected Attributes inherited from nvinfer1::ILayer

| Type | Member |
| --- | --- |
| apiv::VLayer * | mLayer |

Detailed Description

A Dequantize layer in a network definition.

This layer accepts a quantized type input tensor, and uses the configured scale and zeroPt inputs to dequantize the input according to: output = (input - zeroPt) * scale

The first input (index 0) is the tensor to be dequantized. The second (index 1) and third (index 2) are the scale and zero point respectively. scale and zeroPt should have identical dimensions, and rank less than or equal to 2.

The zeroPt tensor is optional, and if not set, will be assumed to be zero. Its data type must be identical to the input's data type. zeroPt must only contain zero-valued coefficients, because only symmetric quantization is supported. The scale value must be either a scalar for per-tensor quantization, a 1-D tensor for per-channel quantization, or a 2-D tensor for block quantization (supported for DataType::kINT4 only). All scale coefficients must have positive values. The size of the 1-D scale tensor must match the size of the quantization axis. For block quantization, the shape of scale tensor must match the shape of the input, except for one dimension in which blocking occurs. The size of zeroPt must match the size of scale.
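The shape rules above can be sketched as a small standalone check. This is an illustrative helper only: `QuantMode` and `classifyScale` are hypothetical names that are not part of TensorRT, and the requirement that the blocked dimension divide evenly is an assumption of this sketch rather than something stated here.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper, NOT part of the TensorRT API.
enum class QuantMode { kPerTensor, kPerChannel, kBlock, kInvalid };

// Classify a scale shape against an input shape, following the rules above.
QuantMode classifyScale(std::vector<int64_t> const& inputDims,
                        std::vector<int64_t> const& scaleDims,
                        int32_t axis)
{
    // The input tensor must not be a scalar.
    assert(!inputDims.empty());

    // scale must have rank lower than or equal to 2.
    if (scaleDims.size() > 2)
    {
        return QuantMode::kInvalid;
    }

    // Exactly one coefficient: per-tensor quantization, axis is ignored.
    int64_t volume = 1;
    for (int64_t d : scaleDims)
    {
        volume *= d;
    }
    if (volume == 1)
    {
        return QuantMode::kPerTensor;
    }

    // 1-D scale: its size must match the size of the quantization axis.
    if (scaleDims.size() == 1)
    {
        bool const axisValid = axis >= 0 && static_cast<std::size_t>(axis) < inputDims.size();
        return (axisValid && scaleDims[0] == inputDims[static_cast<std::size_t>(axis)])
            ? QuantMode::kPerChannel
            : QuantMode::kInvalid;
    }

    // 2-D scale: block quantization of a 2-D input. Shapes must match
    // except for one dimension (the blocked one).
    if (inputDims.size() != 2)
    {
        return QuantMode::kInvalid;
    }
    int32_t blockedDims = 0;
    for (std::size_t i = 0; i < 2; ++i)
    {
        if (scaleDims[i] == inputDims[i])
        {
            continue;
        }
        // Assumption of this sketch: the blocked dimension divides evenly.
        if (scaleDims[i] <= 0 || inputDims[i] % scaleDims[i] != 0)
        {
            return QuantMode::kInvalid;
        }
        ++blockedDims;
    }
    return blockedDims == 1 ? QuantMode::kBlock : QuantMode::kInvalid;
}
```

For instance, under these assumptions a `{128, 16}` input with a `{4, 16}` scale is classified as block quantization with a block size of 32 along dimension 0.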

The subgraph which terminates with the scale tensor must be a build-time constant. The same restrictions apply to the zeroPt. The output type, if constrained, must be constrained to DataType::kFLOAT, DataType::kHALF, or DataType::kBF16. The input type, if constrained, must be constrained to DataType::kINT8, DataType::kFP8 or DataType::kINT4. The output size is the same as the input size. The quantization axis is in reference to the input tensor's dimensions.

IDequantizeLayer supports DataType::kINT8, DataType::kFP8 or DataType::kINT4 precision and will default to DataType::kINT8 precision during instantiation. For strongly typed networks, the input data type must be the same as the zeroPt data type.

IDequantizeLayer supports DataType::kFLOAT, DataType::kHALF, or DataType::kBF16 output. For strongly typed networks, output data type is inferred from scale data type.

As an example of the operation of this layer, imagine a 4D NCHW activation input which can be quantized using a single scale coefficient (referred to as per-tensor quantization):

    For each n in N:
        For each c in C:
            For each h in H:
                For each w in W:
                    output[n,c,h,w] = (input[n,c,h,w] - zeroPt) * scale
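A minimal CPU reference for the per-tensor case might look like the sketch below. It is not TensorRT code: `dequantizePerTensor` is a hypothetical name, the input is assumed to be INT8 stored in a flat buffer, and the output is assumed to be FP32. Because the same scale applies to every element, the four nested loops collapse into a single pass over the buffer.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical reference implementation, NOT part of the TensorRT API.
// Computes output = (input - zeroPt) * scale for every element.
std::vector<float> dequantizePerTensor(std::vector<int8_t> const& input,
                                       float scale,
                                       int8_t zeroPt = 0)
{
    // All scale coefficients must have positive values.
    assert(scale > 0.0F);

    std::vector<float> output(input.size());
    for (std::size_t i = 0; i < input.size(); ++i)
    {
        // Widen before subtracting so the difference cannot overflow int8_t.
        int32_t const centered = static_cast<int32_t>(input[i]) - static_cast<int32_t>(zeroPt);
        output[i] = static_cast<float>(centered) * scale;
    }
    return output;
}
```

Since only symmetric quantization is supported, `zeroPt` is expected to stay at its default of zero; the parameter is kept only to mirror the formula.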

Per-channel dequantization is supported only for input that is rooted at an IConstantLayer (i.e. weights). Activations cannot be quantized per-channel. As an example of per-channel operation, imagine a 4D KCRS weights input and K (dimension 0) as the quantization axis. The scale is an array of coefficients, which is the same size as the quantization axis.

    For each k in K:
        For each c in C:
            For each r in R:
                For each s in S:
                    output[k,c,r,s] = (input[k,c,r,s] - zeroPt[k]) * scale[k]
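The per-channel loop nest can be written out as a CPU reference along these lines. This is a sketch under stated assumptions: `dequantizePerChannel` is a hypothetical name, the weights are INT8 in a row-major KCRS buffer, the quantization axis is K (dimension 0), and `zeroPt` is omitted because it must be all zeros.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical reference implementation, NOT part of the TensorRT API.
// Weights are row-major KCRS; scale holds one coefficient per k.
std::vector<float> dequantizePerChannel(std::vector<int8_t> const& weights,
                                        std::vector<float> const& scale,
                                        std::size_t K, std::size_t C,
                                        std::size_t R, std::size_t S)
{
    assert(weights.size() == K * C * R * S);
    // The 1-D scale must match the size of the quantization axis.
    assert(scale.size() == K);

    std::vector<float> output(weights.size());
    for (std::size_t k = 0; k < K; ++k)
    {
        for (std::size_t c = 0; c < C; ++c)
        {
            for (std::size_t r = 0; r < R; ++r)
            {
                for (std::size_t s = 0; s < S; ++s)
                {
                    std::size_t const idx = ((k * C + c) * R + r) * S + s;
                    // zeroPt[k] is zero (symmetric quantization), so it drops out.
                    output[idx] = static_cast<float>(weights[idx]) * scale[k];
                }
            }
        }
    }
    return output;
}
```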

Block dequantization is supported only for 2-D input tensors with DataType::kINT4 that are rooted at an IConstantLayer (i.e. weights). As an example of blocked operation, imagine a 2-D RS weights input with R (dimension 0) as the blocking axis and B as the block size. The scale is a 2-D array of coefficients, with dimensions (R//B, S).

    For each r in R:
        For each s in S:
            output[r,s] = (input[r,s] - zeroPt[r//B, s]) * scale[r//B, s]
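The blocked indexing can be illustrated with a CPU reference such as the following sketch. The name `dequantizeBlocked` is hypothetical, and for readability each INT4 value is assumed to be stored unpacked in its own `int8_t` (range -8 to 7); this page does not describe the in-memory packing of INT4 data, so that layout is purely an assumption of the example.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical reference implementation, NOT part of the TensorRT API.
// weights: row-major R x S, one INT4 value per int8_t (assumed unpacked).
// scale:   row-major (R / B) x S, one coefficient per block of B rows.
std::vector<float> dequantizeBlocked(std::vector<int8_t> const& weights,
                                     std::vector<float> const& scale,
                                     std::size_t R, std::size_t S, std::size_t B)
{
    assert(B > 0 && R % B == 0);
    assert(weights.size() == R * S);
    assert(scale.size() == (R / B) * S);

    std::vector<float> output(weights.size());
    for (std::size_t r = 0; r < R; ++r)
    {
        for (std::size_t s = 0; s < S; ++s)
        {
            // Rows r in [b*B, (b+1)*B) share the scale row b = r / B.
            float const blockScale = scale[(r / B) * S + s];
            // zeroPt is zero (symmetric quantization), so it drops out.
            output[r * S + s] = static_cast<float>(weights[r * S + s]) * blockScale;
        }
    }
    return output;
}
```

With R = 4, S = 2 and B = 2, rows 0 and 1 use the first scale row and rows 2 and 3 use the second.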

- Note

- Only symmetric quantization is supported.

- Currently the only allowed build-time constant scale and zeroPt subgraphs are:

  - Constant -> Quantize

  - Constant -> Cast -> Quantize

- The input tensor for this layer must not be a scalar.

- Warning

- Do not inherit from this class, as doing so will break forward-compatibility of the API and ABI.

Constructor & Destructor Documentation

◆ ~IDequantizeLayer()

protected virtual default noexcept

Member Function Documentation

◆ getAxis()

inline noexcept

Get the quantization axis.

- Returns

- axis parameter set by setAxis(). The return value is the index of the quantization axis in the input tensor's dimensions. A value of -1 indicates per-tensor quantization. The default value is -1.

◆ getToType()

inline noexcept

Return the Dequantize layer output type.

- Returns

- toType parameter set during layer creation or by setToType(). The return value is the output type of the dequantize layer. The default value is DataType::kFLOAT.

◆ setAxis()

inline noexcept

Set the quantization axis.

Set the index of the quantization axis (with reference to the input tensor's dimensions). The axis must be a valid axis if the scale tensor has more than one coefficient. The axis value will be ignored if the scale tensor has exactly one coefficient (per-tensor quantization).

◆ setToType()

inline noexcept

Set the Dequantize layer output type.

- Parameters

- toType: The DataType of the output tensor.

Set the output type of the dequantize layer. Valid values are DataType::kFLOAT and DataType::kHALF. If the network is strongly typed, setToType must be used to set the output type, and use of setOutputType is an error. Otherwise, types passed to setOutputType and setToType must be the same.

Member Data Documentation

◆ mImpl

protected
