An MoE layer in a network definition. A Mixture of Experts (MoE) is a collection of experts, each specializing in processing a different subset of the input data. The key idea is a router that activates only the experts needed for a given input, rather than engaging the entire network for every input. In this layer, the routing decisions are supplied as inputs (selectedExpertsForTokens and scoresForSelectedExperts), and the layer evaluates only the selected experts.


Public Member Functions

| Return type | Member function | Description |
|---|---|---|
| `void` | `setGatedWeights(ITensor &fcGateWeights, ITensor &fcUpWeights, ITensor &fcDownWeights, MoEActType activationType) noexcept` | Set the weights of the experts when each expert is a GLU (gated linear unit). Each GLU has 3 linear layers and 1 activation function, so this function takes 3 weight tensors and 1 activation type. |
| `void` | `setGatedBiases(ITensor &fcGateBiases, ITensor &fcUpBiases, ITensor &fcDownBiases) noexcept` | Set the biases of the experts when each expert is a GLU (gated linear unit). Each GLU has 3 linear layers, so this function takes 3 bias tensors. |
| `void` | `setActivationType(MoEActType activationType) noexcept` | Set the activation type for the MoE layer. |
| `MoEActType` | `getActivationType() const noexcept` | Get the activation type for the MoE layer. |
| `void` | `setQuantizationStatic(ITensor &fcDownActivationScale, DataType dataType) noexcept` | Configure static quantization after the mul op: hiddenStates feeds fcGate → activation and fcUp, mul combines the two branches, and {Q → DQ} is inserted between mul and fcDown (mul → {Q → DQ} → fcDown → output). When using mul output static quantization, the user must provide: |
| `void` | `setQuantizationDynamicDblQ(ITensor &fcDownActivationDblQScale, DataType dataType, Dims const &blockShape, DataType dynQOutputScaleType) noexcept` | Configure dynamic quantization (with double quantization) after the mul op: {DynQ → DQ} is inserted between mul and fcDown (mul → {DynQ → DQ} → fcDown → output), with an additional DQ on a side branch from DynQ to the data-path DQ. When using mul output dynamic quantization (with double quantization), the user must provide: |
| `void` | `setQuantizationToType(DataType type) noexcept` | Set the data type the mul output is quantized to. |
| `DataType` | `getQuantizationToType() const noexcept` | Get the data type the mul output is quantized to. |
| `void` | `setQuantizationBlockShape(Dims const &blockShape) noexcept` | Set the block shape for the quantization of the mul output. |
| `Dims` | `getQuantizationBlockShape() const noexcept` | Get the block shape for the quantization of the mul output. |
| `void` | `setDynQOutputScaleType(DataType type) noexcept` | Set the dynamic quantization output scale type. |
| `DataType` | `getDynQOutputScaleType() const noexcept` | Get the dynamic quantization output scale type. |
| `void` | `setSwigluParams(float limit, float alpha, float beta) noexcept` | Set the SwiGLU parameters. |
| `void` | `setSwigluParamLimit(float limit) noexcept` | Set the SwiGLU parameter limit. |
| `float` | `getSwigluParamLimit() const noexcept` | Get the SwiGLU parameter limit. |
| `void` | `setSwigluParamAlpha(float alpha) noexcept` | Set the SwiGLU parameter alpha. |
| `float` | `getSwigluParamAlpha() const noexcept` | Get the SwiGLU parameter alpha. |
| `void` | `setSwigluParamBeta(float beta) noexcept` | Set the SwiGLU parameter beta. |
| `float` | `getSwigluParamBeta() const noexcept` | Get the SwiGLU parameter beta. |
| `void` | `setInput(int32_t index, ITensor &tensor) noexcept` | Set the input of the MoE layer. |

Public Member Functions inherited from nvinfer1::ILayer

| Return type | Member function | Description |
|---|---|---|
| `LayerType` | `getType() const noexcept` | Return the type of a layer. |
| `void` | `setName(char const *name) noexcept` | Set the name of a layer. |
| `char const *` | `getName() const noexcept` | Return the name of a layer. |
| `int32_t` | `getNbInputs() const noexcept` | Get the number of inputs of a layer. |
| `ITensor *` | `getInput(int32_t index) const noexcept` | Get the layer input corresponding to the given index. |
| `int32_t` | `getNbOutputs() const noexcept` | Get the number of outputs of a layer. |
| `ITensor *` | `getOutput(int32_t index) const noexcept` | Get the layer output corresponding to the given index. |
| `void` | `setInput(int32_t index, ITensor &tensor) noexcept` | Replace an input of this layer with a specific tensor. |
| `TRT_DEPRECATED void` | `setPrecision(DataType dataType) noexcept` | Set the preferred or required computational precision of this layer in a weakly-typed network. |
| `DataType` | `getPrecision() const noexcept` | Get the computational precision of this layer. |
| `TRT_DEPRECATED bool` | `precisionIsSet() const noexcept` | Whether the computational precision has been set for this layer. |
| `TRT_DEPRECATED void` | `resetPrecision() noexcept` | Reset the computational precision for this layer. |
| `TRT_DEPRECATED void` | `setOutputType(int32_t index, DataType dataType) noexcept` | Set the output type of this layer in a weakly-typed network. |
| `DataType` | `getOutputType(int32_t index) const noexcept` | Get the output type of this layer. |
| `TRT_DEPRECATED bool` | `outputTypeIsSet(int32_t index) const noexcept` | Whether the output type has been set for this layer. |
| `TRT_DEPRECATED void` | `resetOutputType(int32_t index) noexcept` | Reset the output type for this layer. |
| `void` | `setMetadata(char const *metadata) noexcept` | Set the metadata for this layer. |
| `char const *` | `getMetadata() const noexcept` | Get the metadata of the layer. |
| `bool` | `setNbRanks(int32_t nbRanks) noexcept` | Set the number of ranks for multi-device execution. |
| `int32_t` | `getNbRanks() const noexcept` | Get the number of ranks for multi-device execution. |
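To illustrate what the {Q → DQ} and {DynQ → DQ} steps do to the mul output, here is a fake-quantization sketch (quantize, then immediately dequantize). It is a hypothetical reference, not TensorRT code: `fakeQuant` and `dynamicBlockFakeQuant` are illustrative names, it assumes a signed 8-bit grid [-128, 127] and blocks of consecutive values along the last dimension, and it does not model the double quantization of the dynamic scales (the role of `fcDownActivationDblQScale`). The real target type is whatever `dataType` / `setQuantizationToType` specifies.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

// Static Q -> DQ with one precomputed scale (the role of fcDownActivationScale).
// Assumes an INT8-style grid; nearbyint rounds half to even in the default mode.
float fakeQuant(float x, float scale) {
    float q = std::nearbyint(x / scale);    // Q: scale and round
    q = std::clamp(q, -128.0f, 127.0f);     // Q: saturate to the assumed range
    return q * scale;                       // DQ: back to floating point
}

// Dynamic block-wise Q -> DQ: each block of blockSize consecutive values gets
// its own scale, computed at runtime from the block's absolute maximum.
// Double quantization of these per-block scales is intentionally omitted.
std::vector<float> dynamicBlockFakeQuant(const std::vector<float>& x, std::size_t blockSize) {
    std::vector<float> y(x.size());
    for (std::size_t start = 0; start < x.size(); start += blockSize) {
        const std::size_t end = std::min(start + blockSize, x.size());
        float amax = 0.0f;
        for (std::size_t i = start; i < end; ++i) amax = std::max(amax, std::fabs(x[i]));
        const float scale = amax > 0.0f ? amax / 127.0f : 1.0f;  // avoid division by zero
        for (std::size_t i = start; i < end; ++i) y[i] = fakeQuant(x[i], scale);
    }
    return y;
}
```

The static path uses one scale fixed at build time, while the dynamic path derives a scale per block from the data it sees, which is why the dynamic variant needs a block shape and an output scale type.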


    ┌──────────────┐ ┌────────────────────────┐ ┌────────────────────────┐
    │ hiddenStates │ │selectedExpertsForTokens│ │scoresForSelectedExperts│
    └──────┬───────┘ └───────────┬────────────┘ └───────────┬────────────┘
           │                     │                          │
    ┌──────▼─────────────────────▼──────────────────────────▼────────────┐
    │ MOE                                                                │
    │   ┌──────────────────────┐              ┌──────────────────────┐   │
    │   │ Expert 0             │              │ Expert i             │   │
    │   │ ┌────────┐┌────────┐ │              │ ┌────────┐┌────────┐ │   │
    │   │ │ fcGate ││  fcUp  │ │              │ │ fcGate ││  fcUp  │ │   │
    │   │ └───┬────┘└───┬────┘ │              │ └───┬────┘└───┬────┘ │   │
    │   │┌────▼─────┐   │      │              │┌────▼─────┐   │      │   │
    │   ││activation│   │      │              ││activation│   │      │   │
    │   │└────┬─────┘   │      │              │└────┬─────┘   │      │   │
    │   │     └────┬────┘      │              │     └────┬────┘      │   │
    │   │      ┌───▼────┐      │              │      ┌───▼────┐      │   │
    │   │      │  mul   │      │   .......    │      │  mul   │      │   │
    │   │      └───┬────┘      │              │      └───┬────┘      │   │
    │   │      ┌───▼────┐      │              │      ┌───▼────┐      │   │
    │   │      │ fcDown │      │              │      │ fcDown │      │   │
    │   │      └───┬────┘      │              │      └───┬────┘      │   │
    │   │      ┌───▼────┐      │              │      ┌───▼────┐      │   │
    │   │      │output 0│      │              │      │output i│      │   │
    │   │      └───┬────┘      │              │      └───┬────┘      │   │
    │   └──────────┼───────────┘              └──────────┼───────────┘   │
    │              └──────────────────┬──────────────────┘               │
    │                          ┌──────▼──────┐                           │
    │                          │ weightedSum │                           │
    │                          └──────┬──────┘                           │
    └─────────────────────────────────┼──────────────────────────────────┘
                                      ▼
                               ┌─────────────┐
                               │  moeOutput  │
                               └─────────────┘

Definitions in the MoE layer: fcGate, fcUp, and fcDown are three linear layers, fc(x) = x * w + b, where x is the input, w is the weight, b is the bias, and * is matrix multiplication. activation is the activation function. mul is the element-wise multiplication of the output of fcUp and the activated output of fcGate, activation(fcGate(x)). weightedSum is the score-weighted sum of the outputs of the selected experts. moeOutput is the output of the MoE layer.

An MoE layer is a collection of experts. Each expert is a GLU (gated linear unit) consisting of fcGate, fcUp, fcDown, activation, and mul.
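The expert definition above can be sketched in plain C++ for a single token. This is a minimal reference sketch, not TensorRT code: `silu`, `fc`, and `expertForward` are hypothetical names; SiLU is used because it is the activation listed as supported; biases are omitted (their default); and the weights are assumed to be contiguous row-major arrays with the weight shapes listed for this layer.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// SiLU activation: silu(v) = v * sigmoid(v).
float silu(float v) { return v / (1.0f + std::exp(-v)); }

// fc(x) = x * w for one expert slice (bias omitted); w is [inSize, outSize], row-major.
std::vector<float> fc(const std::vector<float>& x, const float* w,
                      std::size_t inSize, std::size_t outSize) {
    std::vector<float> y(outSize, 0.0f);
    for (std::size_t i = 0; i < inSize; ++i)
        for (std::size_t j = 0; j < outSize; ++j)
            y[j] += x[i] * w[i * outSize + j];
    return y;
}

// One GLU expert for one token (hypothetical helper, not a TensorRT API).
// gateW, upW: [numExperts, hiddenSize, interSize]; downW: [numExperts, interSize, hiddenSize].
std::vector<float> expertForward(const std::vector<float>& hidden,
                                 const std::vector<float>& gateW,
                                 const std::vector<float>& upW,
                                 const std::vector<float>& downW,
                                 std::size_t expert, std::size_t hiddenSize,
                                 std::size_t interSize) {
    const std::size_t slice = hiddenSize * interSize;  // weight elements per expert
    std::vector<float> gate = fc(hidden, gateW.data() + expert * slice, hiddenSize, interSize);
    std::vector<float> up = fc(hidden, upW.data() + expert * slice, hiddenSize, interSize);
    // mul: element-wise product of the fcUp output and activation(fcGate output).
    for (std::size_t j = 0; j < interSize; ++j) up[j] *= silu(gate[j]);
    // fcDown maps the interSize-wide product back to hiddenSize.
    return fc(up, downW.data() + expert * slice, interSize, hiddenSize);
}
```

Because gateW, upW, and downW hold all experts back to back, selecting an expert is just an offset of `expert * hiddenSize * interSize` elements into each array.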

Definitions and abbreviations:

- batchSize: batch size
- seqLen: sequence length
- hiddenSize: the size of the hidden states
- numExperts: the number of experts in the MoE layer
- moeInterSize: the intermediate size of the MoE layer
- topK: the number of experts to select for each token

This layer takes three activation inputs:

- hiddenStates: the hidden states of the layer, with shape [batchSize, seqLen, hiddenSize]
- selectedExpertsForTokens: the indices of the topK experts selected for each token, with shape [batchSize, seqLen, topK]
- scoresForSelectedExperts: the scores (weights) of the selected experts for each token, with shape [batchSize, seqLen, topK]

The layer uses the selected experts and their corresponding scores to compute the output.
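The layer does not compute the routing itself; selectedExpertsForTokens and scoresForSelectedExperts come from a router outside the layer. As an assumption for illustration only, the sketch below shows one common way such a router could produce both arrays for one token: keep the topK largest router logits and apply a softmax over them, which equals a full softmax renormalized over the selected experts. `routeToken` and its `routerLogits` input are hypothetical, and other routing schemes exist.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <numeric>
#include <utility>
#include <vector>

// Hypothetical router for one token (not part of the MoE layer).
// Precondition: 1 <= topK <= routerLogits.size().
// Returns {selected expert ids, scores}: one token's slice of
// selectedExpertsForTokens and scoresForSelectedExperts.
std::pair<std::vector<int32_t>, std::vector<float>>
routeToken(const std::vector<float>& routerLogits, std::size_t topK) {
    std::vector<int32_t> ids(routerLogits.size());
    std::iota(ids.begin(), ids.end(), 0);
    // Order expert ids by descending logit (lower id first on ties); keep the first topK.
    std::partial_sort(ids.begin(), ids.begin() + topK, ids.end(),
                      [&](int32_t a, int32_t b) {
                          if (routerLogits[a] != routerLogits[b]) return routerLogits[a] > routerLogits[b];
                          return a < b;
                      });
    std::vector<int32_t> selected(ids.begin(), ids.begin() + topK);

    // Numerically stable softmax over the selected logits only.
    const float maxLogit = routerLogits[selected[0]];
    std::vector<float> scores(topK);
    float sum = 0.0f;
    for (std::size_t k = 0; k < topK; ++k) {
        scores[k] = std::exp(routerLogits[selected[k]] - maxLogit);
        sum += scores[k];
    }
    for (float& s : scores) s /= sum;
    return {selected, scores};
}
```

Repeating this for every token and stacking the results gives tensors of shape [batchSize, seqLen, topK], matching the two routing inputs above.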

The weights in the MoE layer:

- fcGateWeights, with shape [numExperts, hiddenSize, moeInterSize]: the weight matrix for fcGate
- fcUpWeights, with shape [numExperts, hiddenSize, moeInterSize]: the weight matrix for fcUp
- fcDownWeights, with shape [numExperts, moeInterSize, hiddenSize]: the weight matrix for fcDown

Several optional inputs are supported:

- fcGateBias: the bias for fcGate, with shape [numExperts, moeInterSize]
- fcUpBias: the bias for fcUp, with shape [numExperts, moeInterSize]
- fcDownBias: the bias for fcDown, with shape [numExperts, hiddenSize]
- activation: the activation type for the MoE layer; currently only SILU is supported.

All biases are unset by default. You must either set all three biases or none of them.
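The shape rules above, including the all-or-none rule for biases, can be encoded as a small checker. This is a hypothetical sketch (`Shape`, `MoEDims`, `MoEShapes`, and `checkMoEShapes` are illustrative names, not TensorRT APIs) operating on plain dimension vectors, not the layer's actual validation logic.

```cpp
#include <cstdint>
#include <optional>
#include <vector>

using Shape = std::vector<int64_t>;

struct MoEDims {
    int64_t batchSize, seqLen, hiddenSize, numExperts, moeInterSize, topK;
};

struct MoEShapes {
    Shape hiddenStates, selectedExperts, scores;
    Shape fcGateWeights, fcUpWeights, fcDownWeights;
    std::optional<Shape> fcGateBias, fcUpBias, fcDownBias;  // unset by default
};

bool checkMoEShapes(const MoEShapes& s, const MoEDims& d) {
    const bool ok = s.hiddenStates == Shape{d.batchSize, d.seqLen, d.hiddenSize} &&
                    s.selectedExperts == Shape{d.batchSize, d.seqLen, d.topK} &&
                    s.scores == Shape{d.batchSize, d.seqLen, d.topK} &&
                    s.fcGateWeights == Shape{d.numExperts, d.hiddenSize, d.moeInterSize} &&
                    s.fcUpWeights == Shape{d.numExperts, d.hiddenSize, d.moeInterSize} &&
                    s.fcDownWeights == Shape{d.numExperts, d.moeInterSize, d.hiddenSize};
    // Biases: either all three are set or none of them.
    const int nbBiases = s.fcGateBias.has_value() + s.fcUpBias.has_value() + s.fcDownBias.has_value();
    if (nbBiases == 0) return ok;
    if (nbBiases != 3) return false;
    return ok && *s.fcGateBias == Shape{d.numExperts, d.moeInterSize} &&
           *s.fcUpBias == Shape{d.numExperts, d.moeInterSize} &&
           *s.fcDownBias == Shape{d.numExperts, d.hiddenSize};
}
```

For example, with numExperts = 3, hiddenSize = 8, and moeInterSize = 16, setting only fcGateBias fails the check, while setting all three biases with shapes [3, 16], [3, 16], and [3, 8] passes.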

MoE computation process: for each token, the MoE layer computes its output as follows:

- Input processing: receive hiddenStates, selectedExpertsForTokens, and scoresForSelectedExperts.
- Expert computation: for each selected expert i, output_i = fcDown_i(fcUp_i(hiddenStates) ⊙ activation(fcGate_i(hiddenStates))), where fcGate_i, fcUp_i, and fcDown_i use expert i's weights (and biases, if set) and ⊙ is the element-wise mul.
- Expert output aggregation: for each token, first select the experts that must be activated, then:
  - compute each selected expert's output, according to the expert ids in selectedExpertsForTokens for that token;
  - sum the expert outputs, weighted by the scores in scoresForSelectedExperts for that token.
- Final output for the token: moeOutput = Σ_i score_i · output_i, summed over the topK selected experts, where score_i is the score of the i-th selected expert. The output of the MoE layer has the same shape as the input hiddenStates, [batchSize, seqLen, hiddenSize].
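The per-token aggregation described above can be sketched as follows. In this hypothetical reference (`moeToken` and `ExpertFn` are illustrative names, not TensorRT APIs), the expert computation is passed in as a callable, so the sketch isolates the routing and weightedSum steps: only the experts listed in selectedExpertsForTokens are evaluated, and their outputs are scaled by the matching scores and summed.

```cpp
#include <cstddef>
#include <cstdint>
#include <functional>
#include <vector>

// Stand-in for one expert: (expertId, hiddenState[hiddenSize]) -> output[hiddenSize].
using ExpertFn = std::function<std::vector<float>(int32_t, const std::vector<float>&)>;

std::vector<float> moeToken(const std::vector<float>& hidden,      // [hiddenSize]
                            const std::vector<int32_t>& selected,  // [topK] expert ids
                            const std::vector<float>& scores,      // [topK]
                            const ExpertFn& expert) {
    std::vector<float> out(hidden.size(), 0.0f);  // same shape as the token's hiddenStates
    for (std::size_t k = 0; k < selected.size(); ++k) {
        // Only the experts selected for this token are evaluated.
        const std::vector<float> y = expert(selected[k], hidden);
        // weightedSum: accumulate score_k * output_k.
        for (std::size_t h = 0; h < out.size(); ++h) out[h] += scores[k] * y[h];
    }
    return out;
}
```

Keeping the expert as a callable makes the sketch independent of the weight layout; in a full reference it would be the GLU expert computation described above, applied with the weights of `selected[k]`.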

Warning:

- MoE is only supported on Thor, and performance is limited when seqLen > 16.
- Do not inherit from this class, as doing so will break forward-compatibility of the API and ABI.

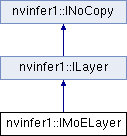

Inherited members: public member functions, protected member functions, and protected attributes from nvinfer1::ILayer; protected member functions from nvinfer1::INoCopy.