DOCA Flow

This guide describes how to deploy the DOCA Flow library, the philosophy of the DOCA Flow API, and how to use it. The guide is intended for developers writing network function applications that focus on packet processing (such as gateways). It assumes familiarity with the network stack and DPDK.

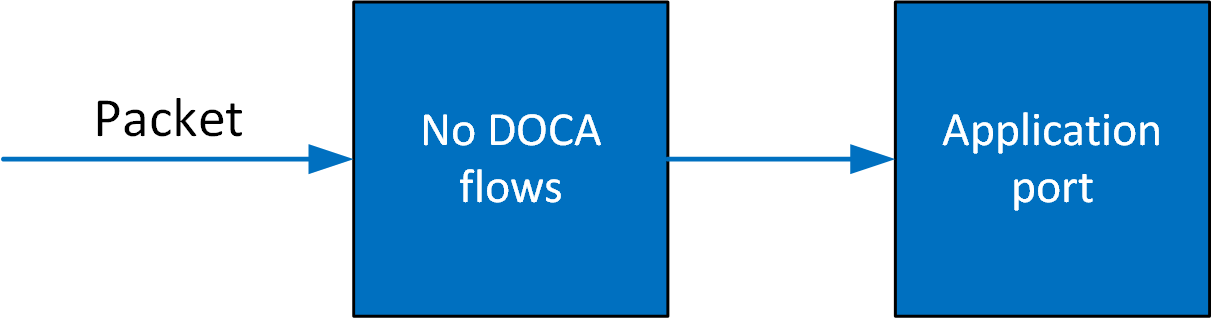

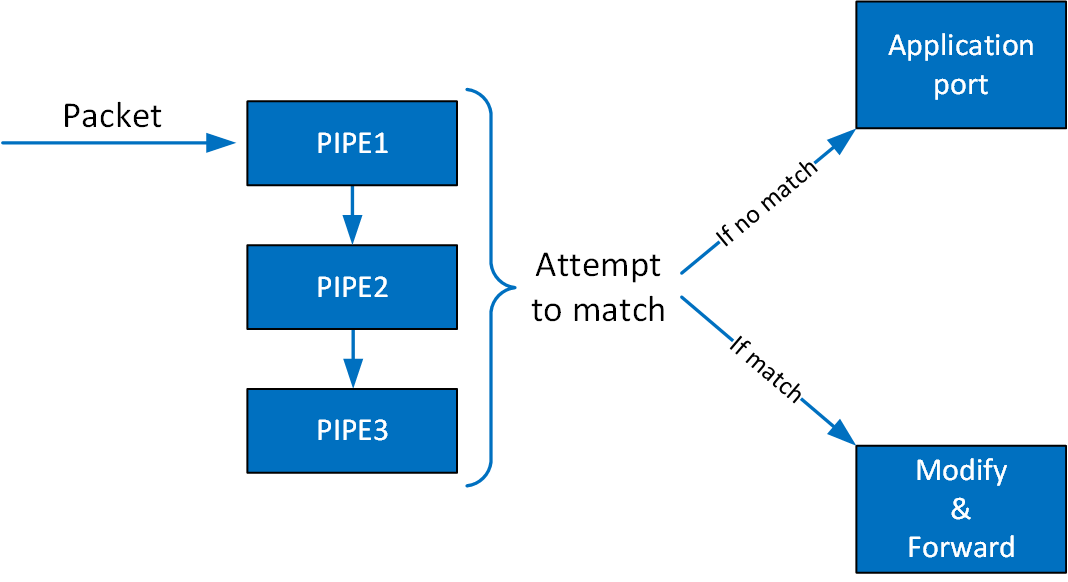

DOCA Flow is the most fundamental API for building generic packet processing pipes in hardware. The DOCA Flow library provides an API for building a set of pipes, where each pipe consists of match criteria, monitoring, and a set of actions. Pipes can be chained so that after a pipe-defined action is executed, the packet may proceed to another pipe.

Using the DOCA Flow API, it is easy to develop hardware-accelerated applications that match on up to two layers of packets (tunneled), including the following:

MAC/VLAN/ETHERTYPE

IPv4/IPv6

TCP/UDP/ICMP

GRE/VXLAN/GTP-U

Metadata

The execution pipe can include packet modification actions such as the following:

Modify MAC address

Modify IP address

Modify L4 (ports, TCP sequences and acknowledgments)

Strip tunnel

Add tunnel

Set metadata

The execution pipe can also have monitoring actions such as the following:

Count

Policers

The pipe also has a forwarding target which can be any of the following:

Software (RSS to subset of queues)

Port

Another pipe

Drop packets

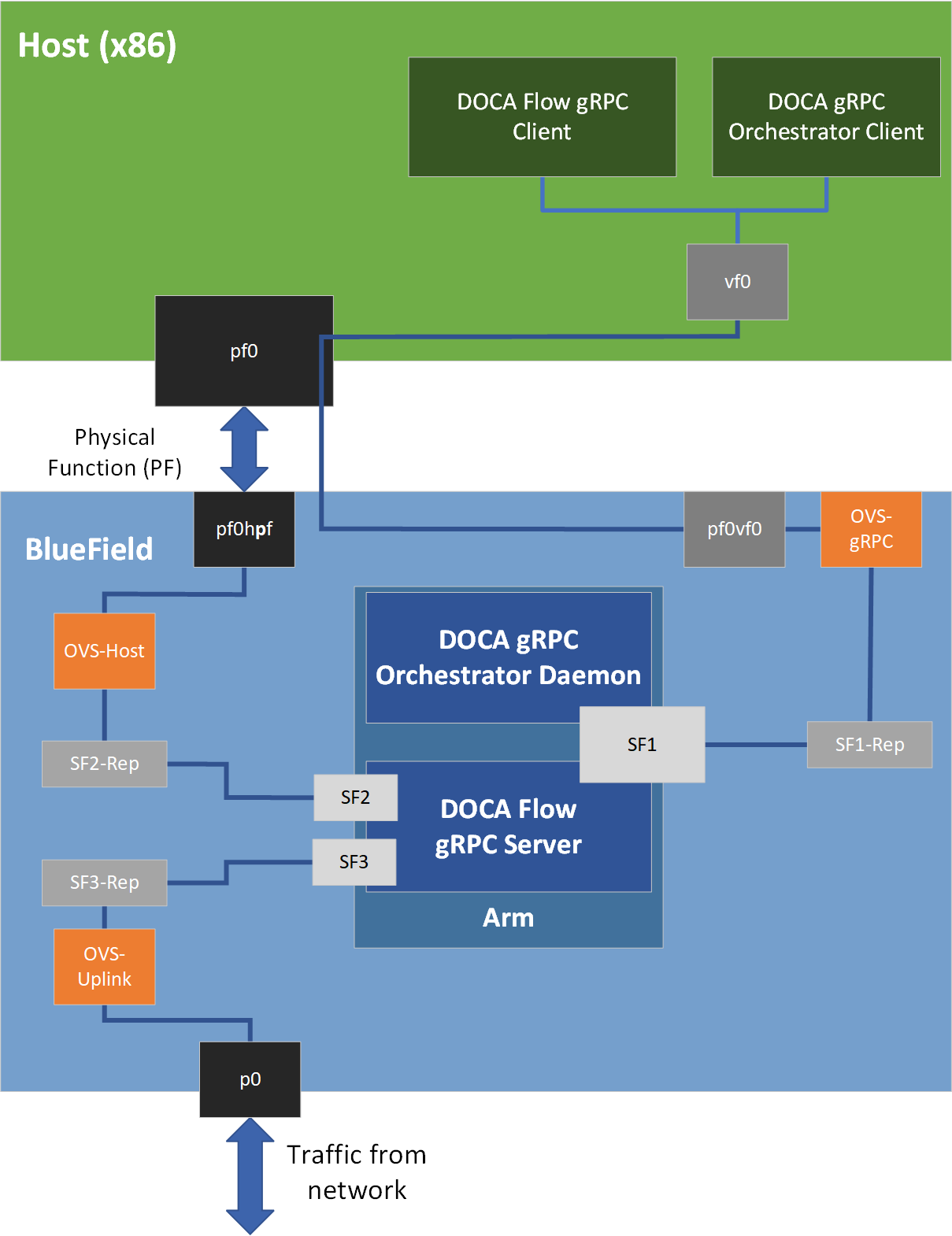

A DOCA Flow-based application can run either on the host machine or on an NVIDIA® BlueField® DPU target. Flow-based programs require an allocation of huge pages, hence the following commands are required:

echo '1024' | sudo tee -a /sys/kernel/mm/hugepages/hugepages-2048kB/nr_hugepages

sudo mkdir /mnt/huge

sudo mount -t hugetlbfs nodev /mnt/huge

On some operating systems (RockyLinux, OpenEuler, CentOS 8.2) the default huge page size on the DPU (and Arm hosts) is larger than 2MB, and is often 512MB instead. In such cases, the guiding principle is to allocate 2GB of RAM, so instead of allocating 1024 pages, one should allocate the matching amount (4 pages):

echo 4 | sudo tee /proc/sys/vm/nr_hugepages
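The page counts in both cases follow from dividing the 2GB target by the huge page size. A minimal sketch of that arithmetic is shown below; the function name `hugepages_needed` is hypothetical and is not part of DOCA.

```c
#include <assert.h>
#include <stdint.h>

/* Number of huge pages needed to reserve total_bytes of RAM,
 * rounding up when the page size does not divide the total evenly.
 * Hypothetical helper, not part of DOCA. */
uint64_t hugepages_needed(uint64_t total_bytes, uint64_t page_bytes)
{
    assert(page_bytes > 0);
    return (total_bytes + page_bytes - 1) / page_bytes;
}
```

With a 2GB target, a 2MB page size gives 1024 pages and a 512MB page size gives 4 pages, matching the commands above.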

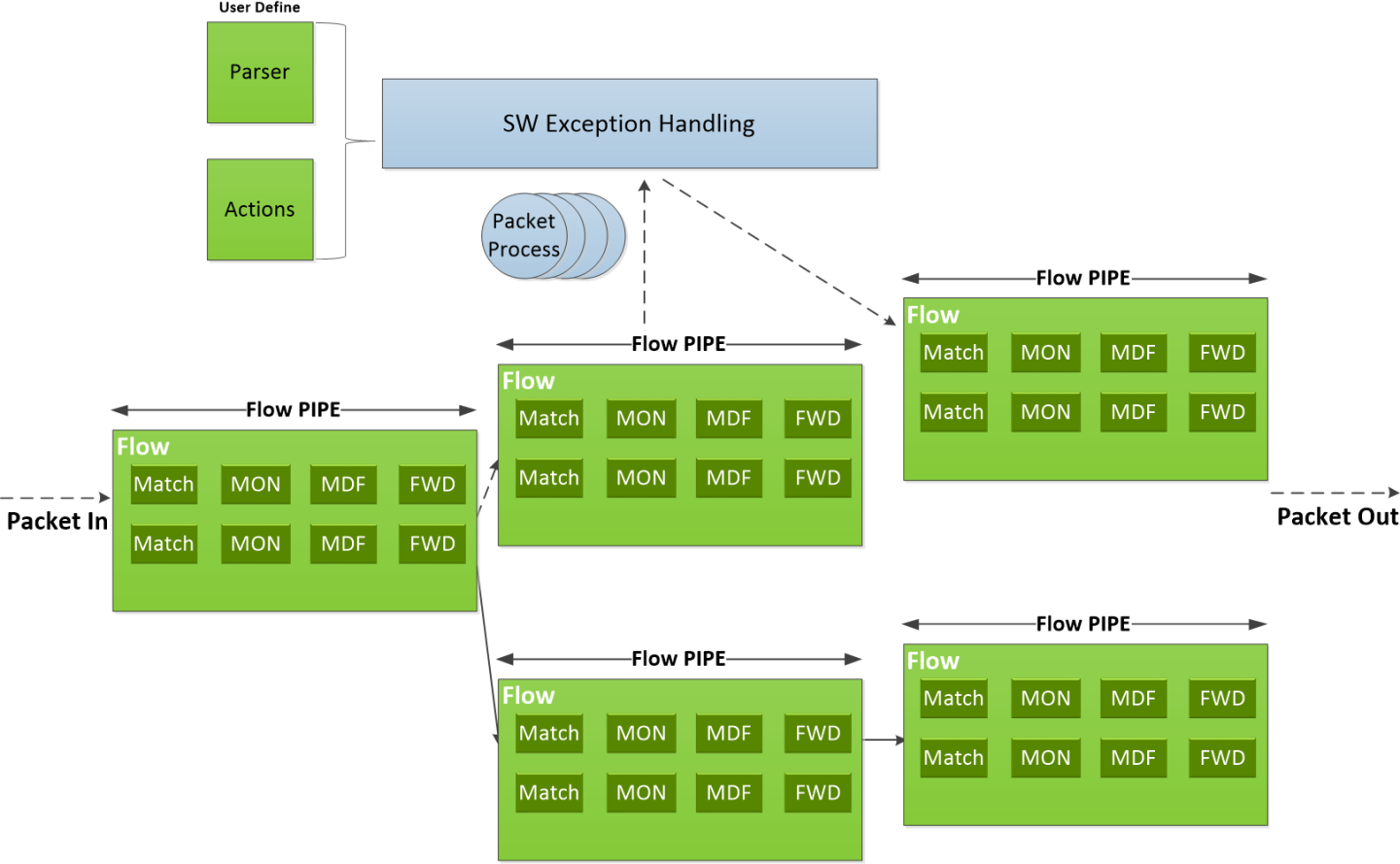

The following diagram shows how the DOCA Flow library defines a pipe template, receives a packet for processing, creates the pipe entry, and offloads the flow rule in NIC hardware.

Features of DOCA Flow:

User-defined set of matches parser and actions

DOCA Flow pipes can be created or destroyed dynamically

Packet processing is fully accelerated by hardware with a specific entry in a flow pipe

Packets that do not match any of the pipe entries in hardware can be sent to Arm cores for exception handling and then reinjected back to hardware

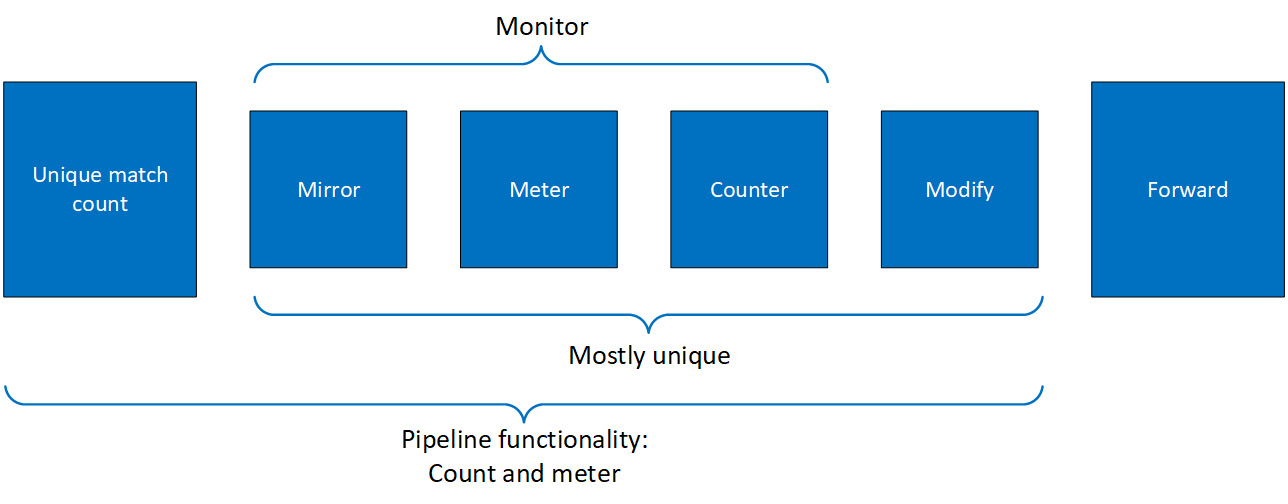

The DOCA Flow pipe consists of the following components:

Monitor (MON in the diagram) - counts, meters, or mirrors

Modify (MDF in the diagram) - modifies a field

Forward (FWD in the diagram) - forwards to the next stage in packet processing

DOCA Flow organizes pipes into high-level containers named domains to address the specific needs of the underlying architecture.

A key element in defining a domain is the packet direction and a set of allowed actions.

A domain is a pipe attribute (also relates to shared objects)

A domain restricts the set of allowed actions

Transition between domains is well-defined (packets cannot cross domains arbitrarily)

A domain may restrict the sharing of objects between packet directions

Packet direction can restrict the move between domains

List of Steering Domains

DOCA Flow provides the following set of predefined steering domains:

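| Domain | Description |
|---|---|
| DOCA_FLOW_PIPE_DOMAIN_DEFAULT | Default pipe domain for actions on ingress traffic |
| DOCA_FLOW_PIPE_DOMAIN_SECURE_INGRESS | Pipe domain for secure actions on ingress traffic |
| DOCA_FLOW_PIPE_DOMAIN_EGRESS | Pipe domain for actions on egress traffic |
| DOCA_FLOW_PIPE_DOMAIN_SECURE_EGRESS | Pipe domain for secure actions on egress traffic |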

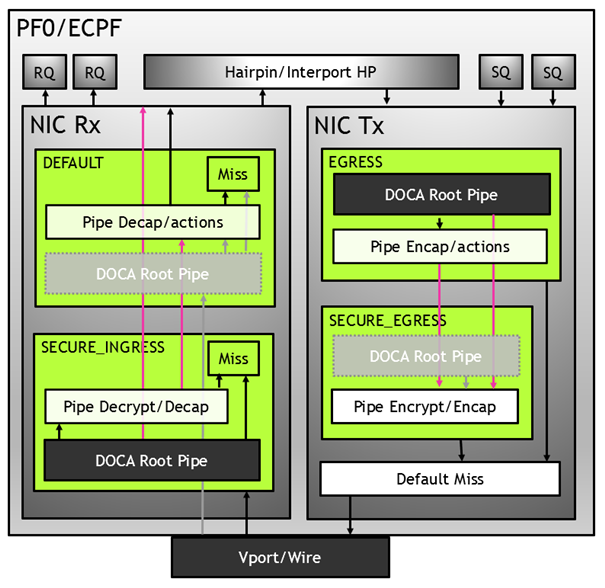

Domains in VNF Mode

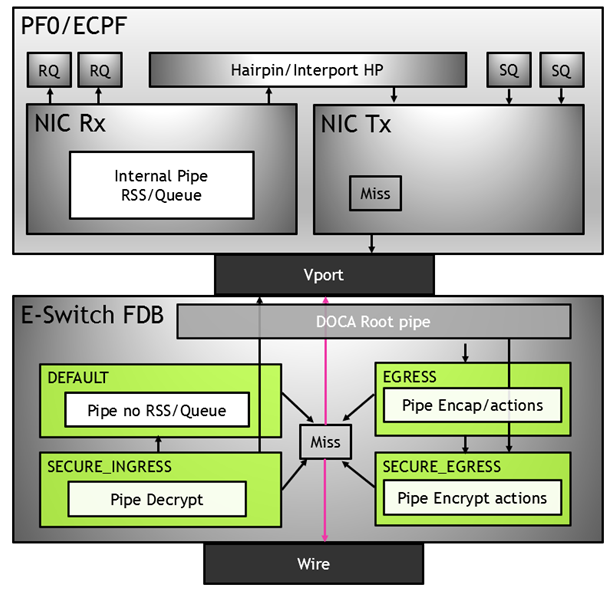

Domains in Switch Mode

You can find more detailed information on DOCA Flow API in NVIDIA DOCA Libraries API Reference Manual.

The pkg-config (*.pc file) for the DOCA Flow library is included in DOCA's regular definitions (i.e., doca).

The following sections provide additional details about the library API.

doca_flow_cfg

This structure is required input for the DOCA Flow global initialization function - doca_flow_init.

struct doca_flow_cfg {

uint64_t flags;

uint16_t queues;

struct doca_flow_resources resource;

uint8_t nr_acl_collisions;

const char *mode_args;

uint32_t nr_shared_resources[DOCA_FLOW_SHARED_RESOURCE_MAX];

uint32_t queue_depth;

doca_flow_entry_process_cb cb;

doca_flow_shared_resource_unbind_cb unbind_cb;

};

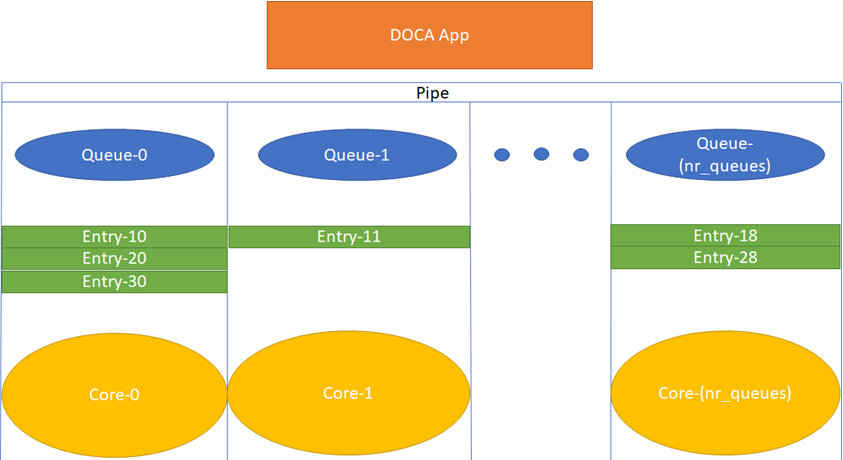

flags – configuration flags

queues – the number of hardware acceleration control queues. It is expected that the same core always uses the same queue_id. In cases where multiple cores access the API using the same queue_id, it is up to the application to use locks between different cores/threads.

resource – resource quota. This field includes the flow resource quota defined in the following structs:

uint32_t nb_counters – number of regular (non-shared) counters to configure

uint32_t nb_meters – number of regular (non-shared) traffic meters to configure

nr_acl_collisions – number of collisions for the ACL module. Default value is 3. Maximum value is 8.

mode_args – sets the DOCA Flow architecture mode.

nr_shared_resources – total shared resource per type. See "Shared Counter Resource" section for more information.

Index DOCA_FLOW_SHARED_RESOURCE_METER – number of meters that can be shared among flows

Index DOCA_FLOW_SHARED_RESOURCE_COUNT – number of counters that can be shared among flows

Index DOCA_FLOW_SHARED_RESOURCE_RSS – number of RSS that can be shared among flows

Index DOCA_FLOW_SHARED_RESOURCE_CRYPTO – number of crypto actions that can be shared among flows

queue_depth – number of flow rule operations a queue can hold. This value is preconfigured at port start (queue_size). Default value is 128 (configuring 0 sets default)

cb – callback function for entry to be called during doca_flow_entries_process to complete entry operation (add, update, delete, and aged)

unbind_cb – callback for unbinding of a shared resource
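The defaults and limits stated above for queue_depth and nr_acl_collisions can be checked before calling doca_flow_init. The following is a minimal sketch, assuming a hypothetical stand-in struct `sketch_cfg` that carries only those two fields; it does not use the real struct doca_flow_cfg and is not how the library itself validates its input.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical stand-in for the two doca_flow_cfg fields discussed here. */
struct sketch_cfg {
    uint8_t nr_acl_collisions;
    uint32_t queue_depth;
};

/* Apply the documented queue_depth default (0 selects 128) and report
 * whether nr_acl_collisions stays within the documented maximum of 8. */
bool sketch_cfg_normalize(struct sketch_cfg *cfg)
{
    assert(cfg != NULL);
    if (cfg->queue_depth == 0)
        cfg->queue_depth = 128;
    return cfg->nr_acl_collisions <= 8;
}
```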

doca_flow_entry_process_cb

typedef void (*doca_flow_entry_process_cb)(struct doca_flow_pipe_entry *entry,

uint16_t pipe_queue, enum doca_flow_entry_status status,

enum doca_flow_entry_op op, void *user_ctx);

entry [in] – pointer to pipe entry

pipe_queue [in] – queue identifier

status [in] – entry processing status (see doca_flow_entry_status)

op [in] – entry's operation, defined in the following enum:

DOCA_FLOW_ENTRY_OP_ADD – Add entry operation

DOCA_FLOW_ENTRY_OP_DEL – Delete entry operation

DOCA_FLOW_ENTRY_OP_UPD – Update entry operation

DOCA_FLOW_ENTRY_OP_AGED – Aged entry operation

user_ctx [in] – user context as provided to doca_flow_pipe_add_entry

Warning: User context is set once to the value provided to doca_flow_pipe_add_entry (or to any doca_flow_pipe_*_add_entry variant) as the usr_ctx parameter, and is then reused in subsequent callback invocations for all operations. This user context must remain available for all potential invocations of the callback that depend on it, as it is stored as part of the entry and provided each time.
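The lifetime requirement on the user context can be illustrated with a callback that accumulates per-operation statistics. The sketch below mirrors the parameter list of doca_flow_entry_process_cb but uses stand-in types (`sketch_pipe_entry`, `sketch_entry_status`, `sketch_entry_op`, `sketch_entry_stats`) so that it compiles without the DOCA headers. All of these names and enum values are assumptions made for illustration; a real callback uses the DOCA types.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Stand-ins for the DOCA types so the sketch compiles on its own. */
struct sketch_pipe_entry; /* opaque, like struct doca_flow_pipe_entry */
enum sketch_entry_status { SKETCH_STATUS_OK, SKETCH_STATUS_ERROR };
enum sketch_entry_op { SKETCH_OP_ADD, SKETCH_OP_DEL, SKETCH_OP_UPD, SKETCH_OP_AGED };

/* Application context; it must outlive every entry that refers to it,
 * because the same pointer is handed back on each callback invocation. */
struct sketch_entry_stats {
    uint32_t completed[4]; /* indexed by enum sketch_entry_op */
    uint32_t failed;
};

/* Same parameter order as doca_flow_entry_process_cb, with stand-in types. */
void sketch_entry_cb(struct sketch_pipe_entry *entry, uint16_t pipe_queue,
                     enum sketch_entry_status status, enum sketch_entry_op op,
                     void *user_ctx)
{
    struct sketch_entry_stats *stats = user_ctx;

    (void)entry;
    (void)pipe_queue;
    assert(stats != NULL);
    assert((unsigned)op < 4);
    if (status != SKETCH_STATUS_OK)
        stats->failed++;
    else
        stats->completed[op]++;
}
```

Keeping the statistics in a long-lived object (static storage or heap memory released only after the entries are gone) avoids the callback dereferencing a context that no longer exists.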

shared_resource_unbind_cb

typedef void (*shared_resource_unbind_cb)(enum engine_shared_resource_type type,

uint32_t shared_resource_id,

struct engine_bindable *bindable);

type [in] – engine shared resource type. Supported types are: meter, counter, rss, crypto, mirror.

shared_resource_id [in] – unique ID of the shared resource

bindable [in] – pointer to bindable object (e.g., port, pipe)

doca_flow_port_cfg

This struct is required input for the DOCA Flow port initialization function - doca_flow_port_start.

struct doca_flow_port_cfg {

uint16_t port_id;

enum doca_flow_port_type type;

const char *devargs;

uint16_t priv_data_size;

void *dev;

};

The struct doca_flow_port_cfg contains the following elements:

port_id – DPDK port ID.

type – depends on underlying API. This field includes the following port type:

DOCA_FLOW_PORT_DPDK_BY_ID – DPDK port by mapping ID

devargs – a string containing the exact configuration needed according to the type

Note: For usage information of the type and devargs fields, refer to section "Start Point".

priv_data_size – per port, users may define private data where application-specific info can be stored

dev – port's doca_dev; used to create internal hairpin resource for switch mode if it is set

doca_flow_pipe_cfg

This is a pipe configuration that contains the user-defined template for the packet process.

struct doca_flow_pipe_attr {

const char *name;

enum doca_flow_pipe_type type;

enum doca_flow_pipe_domain domain;

bool is_root;

uint32_t nb_flows;

bool enable_strict_matching;

uint8_t nb_actions;

uint8_t nb_ordered_lists;

enum doca_flow_direction_info dir_info;

bool miss_counter;

};

name – a string containing the name of the pipeline.

type – type of pipe (enum doca_flow_pipe_type). This field includes the following pipe types:

DOCA_FLOW_PIPE_BASIC – flow pipe

DOCA_FLOW_PIPE_CONTROL – control pipe

DOCA_FLOW_PIPE_LPM – LPM pipe

DOCA_FLOW_PIPE_ACL – ACL pipe

DOCA_FLOW_PIPE_ORDERED_LIST – ordered list pipe

DOCA_FLOW_PIPE_HASH – hash pipe

domain – pipe steering domain:

DOCA_FLOW_PIPE_DOMAIN_DEFAULT – default pipe domain for actions on ingress traffic

DOCA_FLOW_PIPE_DOMAIN_SECURE_INGRESS – pipe domain for secure actions on ingress traffic

DOCA_FLOW_PIPE_DOMAIN_EGRESS – pipe domain for actions on egress traffic

DOCA_FLOW_PIPE_DOMAIN_SECURE_EGRESS – pipe domain for secure actions on egress traffic

is_root – determines whether or not the pipeline is root. If true, then the pipe is a root pipe executed on packet arrival

Warning: Only one root pipe is allowed per port of any type.

enable_strict_matching – if true, relaxed matching (enabled by default) is disabled for this pipe

nb_flows – maximum number of flow rules. Default is 8k if not set

nb_actions – maximum number of elements in the DOCA Flow actions array. Default is 1 if not set

Warning: nb_actions is mutually exclusive with nb_ordered_lists.

nb_ordered_lists – number of ordered lists in the array. Default is 0.

Warning: nb_ordered_lists is mutually exclusive with nb_actions.

dir_info – pipe direction information:

DOCA_FLOW_DIRECTION_BIDIRECTIONAL – default for traffic in both directions

DOCA_FLOW_DIRECTION_NETWORK_TO_HOST – network to host traffic

DOCA_FLOW_DIRECTION_HOST_TO_NETWORK – host to network traffic

Warning: dir_info is supported in Switch Mode only.

Warning: dir_info is optional. It can provide potential optimization at the driver layer. The driver may ignore it silently.

miss_counter – determines whether or not to add a miss counter to the pipe. If true, then the pipe will have a miss counter and the user can query it using doca_flow_query_pipe_miss.

Warning: Miss counter may affect performance and should be avoided if not required by the application.

struct doca_flow_ordered_list {

uint32_t idx;

uint32_t size;

const void **elements;

enum doca_flow_ordered_list_element_type *types;

};

idx – list index among the lists of the pipe.

At pipe creation, it must match the list position in the array of lists

At entry insertion, it determines which list to use

size – number of elements in the list.

elements – an array of DOCA flow structure pointers, depending on the types.

types – types of DOCA Flow structures each of the elements is pointing to. This field includes the following ordered list element types:

DOCA_FLOW_ORDERED_LIST_ELEMENT_ACTIONS – ordered list element is struct doca_flow_actions. The next element is struct doca_flow_action_descs which is associated with the current element

DOCA_FLOW_ORDERED_LIST_ELEMENT_ACTION_DESCS – ordered list element is struct doca_flow_action_descs. If the previous element type is ACTIONS, the current element is associated with it. Otherwise, the current element is ordered with regards to the previous one

DOCA_FLOW_ORDERED_LIST_ELEMENT_MONITOR – ordered list element is struct doca_flow_monitor
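The rule that idx must match the list position at pipe creation can be verified before the lists are handed to the pipe configuration. The following is a minimal sketch, assuming a hypothetical stand-in struct `sketch_ordered_list` that carries only the idx field; it is not part of the DOCA API.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Stand-in carrying only the idx field of struct doca_flow_ordered_list. */
struct sketch_ordered_list {
    uint32_t idx;
};

/* At pipe creation, each list's idx must equal its position in the array. */
bool sketch_ordered_lists_idx_ok(struct sketch_ordered_list *const *lists,
                                 size_t nb_lists)
{
    assert(lists != NULL || nb_lists == 0);
    for (size_t i = 0; i < nb_lists; i++) {
        if (lists[i] == NULL || lists[i]->idx != i)
            return false;
    }
    return true;
}
```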

struct doca_flow_pipe_cfg {

struct doca_flow_pipe_attr attr;

struct doca_flow_port *port;

struct doca_flow_match *match;

struct doca_flow_match *match_mask;

struct doca_flow_actions **actions;

struct doca_flow_actions **actions_masks;

struct doca_flow_action_descs **action_descs;

struct doca_flow_monitor *monitor;

struct doca_flow_ordered_list **ordered_lists;

};

attr – attributes for the pipeline

port – port for the pipeline

match – matcher for the pipeline except for DOCA_FLOW_PIPE_HASH

match_mask – match mask for the pipeline. Only for DOCA_FLOW_PIPE_BASIC, DOCA_FLOW_PIPE_CONTROL, DOCA_FLOW_PIPE_HASH, and DOCA_FLOW_PIPE_ORDERED_LIST

actions – action references array for the pipeline. Only for DOCA_FLOW_PIPE_BASIC and DOCA_FLOW_PIPE_HASH

actions_masks – actions' masks array for the pipeline. Only for DOCA_FLOW_PIPE_BASIC and DOCA_FLOW_PIPE_HASH

action_descs – action descriptors array. Only for DOCA_FLOW_PIPE_BASIC, DOCA_FLOW_PIPE_CONTROL, and DOCA_FLOW_PIPE_HASH

monitor – monitor for the pipeline. Only for DOCA_FLOW_PIPE_BASIC, DOCA_FLOW_PIPE_CONTROL, and DOCA_FLOW_PIPE_HASH

ordered_lists – array of ordered list types. Only for DOCA_FLOW_PIPE_ORDERED_LIST

doca_flow_parser_geneve_opt_cfg

This is a parser configuration that contains the user-defined template for a single GENEVE TLV option.

struct doca_flow_parser_geneve_opt_cfg {

enum doca_flow_parser_geneve_opt_mode match_on_class_mode;

doca_be16_t option_class;

uint8_t option_type;

uint8_t option_len;

doca_be32_t data_mask[DOCA_FLOW_GENEVE_DATA_OPTION_LEN_MAX];

};

match_on_class_mode – role of option_class in this option (enum doca_flow_parser_geneve_opt_mode). This field includes the following class modes:

DOCA_FLOW_PARSER_GENEVE_OPT_MODE_IGNORE – class is ignored, its value is neither part of the option identifier nor changeable per pipe/entry

DOCA_FLOW_PARSER_GENEVE_OPT_MODE_FIXED – class is fixed (the class defines the option along with the type)

DOCA_FLOW_PARSER_GENEVE_OPT_MODE_MATCHABLE – class is the field of this option; different values can be matched for the same option (defined by type only)

option_class – option class ID (must be set when class mode is fixed)

option_type – option type

option_len – length of the option data (in 4-byte granularity)

data_mask – mask for indicating which dwords (DWs) should be configured on this option

Warning: This is not a bit mask. Each DW can contain either 0xffffffff for configure or 0x0 for ignore. Other values are not valid.
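Because data_mask is a per-DW selector rather than a bit mask, it can be sanity-checked before creating the parser. A minimal sketch follows; the helper name `sketch_geneve_data_mask_ok` is hypothetical, and plain uint32_t is used in place of doca_be32_t, which is safe here because the two legal values look the same in any byte order.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* True when every DW of the mask is either all ones (configure)
 * or zero (ignore); any other value is rejected. */
bool sketch_geneve_data_mask_ok(const uint32_t *data_mask, size_t nb_dws)
{
    assert(data_mask != NULL || nb_dws == 0);
    for (size_t i = 0; i < nb_dws; i++) {
        if (data_mask[i] != 0xffffffffu && data_mask[i] != 0x0u)
            return false;
    }
    return true;
}
```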

doca_flow_meta

There is a maximum DOCA_FLOW_META_MAX-byte scratch area which exists throughout the pipeline.

The user can set a value to metadata, copy from a packet field, then match in later pipes. Mask is supported in both match and modification actions.

The user can modify the metadata in different ways based on its actions' masks or descriptors:

ADD – set metadata scratch value from a pipe action or an action of a specific entry. Width is specified by the descriptor.

COPY – copy metadata scratch value from a packet field (including the metadata scratch itself). Width is specified by the descriptor.

Warning: In a real application, it is encouraged to create a union of doca_flow_meta defining the application's scratch fields to use as metadata.

struct doca_flow_meta {

union {

uint32_t pkt_meta; /**< Shared with application via packet. */

struct {

uint32_t lag_port :2; /**< Bits of LAG member port. */

uint32_t type :2; /**< 0: traffic 1: SYN 2: RST 3: FIN. */

uint32_t zone :28; /**< Zone ID for CT processing. */

} ct;

};

uint32_t u32[DOCA_FLOW_META_MAX / 4 - 1]; /**< Programmable user data. */

uint32_t mark; /**< Mark id. */

};

pkt_meta – Metadata can be received along with the packet

u32[] – Scratch area

u32[0] contains the IPsec syndrome in the lower 8 bits if the packet passes the pipe with IPsec crypto action configured in full offload mode:

0 – signifies a successful IPsec operation on the packet

1 – bad replay. Ingress packet sequence number is beyond anti-replay window boundaries.

u32[1] contains the IPsec packet sequence number (lower 32 bits) if the packet passes the pipe with IPsec crypto action configured in full offload mode

mark – Optional parameter that may be communicated to the software. If it is set and the packet arrives to the software, the value can be examined using the software API.

When DPDK is used, MARK is placed on the struct rte_mbuf (see "Action: MARK" section in official DPDK documentation)

When the Kernel is used, MARK is placed on the struct sk_buff's MARK field

Some DOCA pipe types (or actions) use several bytes in the scratch area for internal usage. So, if the user has set these bytes in PIPE-1 and read them in PIPE-2, and between PIPE-1 and PIPE-2 there is PIPE-A which also uses these bytes for internal purposes, then these bytes are overwritten by PIPE-A. This must be considered when designing the pipe tree.

The bytes used in the scratch area are presented by pipe type in the following table:

| Pipe Type/Action | Bytes Used in Scratch |
|---|---|
| ordered_list | [0, 1, 2, 3] |
| LPM | [0, 1, 2, 3] |
| mirror | [0, 1, 2, 3] |
| ACL | [0, 1, 2, 3] |
| Fwd from ingress to egress | [0, 1, 2, 3] |

doca_flow_parser_meta

This structure contains all metadata information which hardware extracts from the packet.

These fields contain read-only hardware data which can be used to match on.

struct doca_flow_parser_meta {

uint32_t port_meta;

uint16_t random;

uint8_t ipsec_syndrome;

enum doca_flow_meter_color meter_color;

enum doca_flow_l2_meta outer_l2_type;

enum doca_flow_l3_meta outer_l3_type;

enum doca_flow_l4_meta outer_l4_type;

enum doca_flow_l2_meta inner_l2_type;

enum doca_flow_l3_meta inner_l3_type;

enum doca_flow_l4_meta inner_l4_type;

uint8_t outer_ip_fragmented;

uint8_t inner_ip_fragmented;

uint8_t outer_l3_ok;

uint8_t outer_ip4_checksum_ok;

uint8_t outer_l4_ok;

uint8_t inner_l3_ok;

uint8_t inner_ip4_checksum_ok;

uint8_t inner_l4_ok;

};

port_meta – Programmable source vport.

random – Random value to match regardless of packet data/headers content. The application should not assume that this value is kept during the lifetime of the packet. It holds a different random value for each matching.

Warning: When random matching is used for sampling, the number of entries in the pipe must be 1 (doca_flow_pipe_attr.nb_flows = 1).

ipsec_syndrome – IPsec decrypt/authentication syndrome

meter_color – Meter colors (enum doca_flow_meter_color). Valid colors:

DOCA_FLOW_METER_COLOR_GREEN – Meter marking packet color as green

DOCA_FLOW_METER_COLOR_YELLOW – Meter marking packet color as yellow

DOCA_FLOW_METER_COLOR_RED – Meter marking packet color as red.

outer_l2_type – Outer L2 packet type (enum doca_flow_l2_meta). Valid L2 types:

DOCA_FLOW_L2_META_NO_VLAN – No VLAN present

DOCA_FLOW_L2_META_SINGLE_VLAN – Single VLAN present

DOCA_FLOW_L2_META_MULTI_VLAN – Multiple VLAN present

outer_l3_type – Outer L3 packet type (enum doca_flow_l3_meta). Valid L3 types:

DOCA_FLOW_L3_META_NONE – L3 type is none of the below

DOCA_FLOW_L3_META_IPV4 – L3 type is IPv4

DOCA_FLOW_L3_META_IPV6 – L3 type is IPv6

outer_l4_type – Outer L4 packet type (enum doca_flow_l4_meta). Valid L4 types:

DOCA_FLOW_L4_META_NONE – L4 type is none of the below

DOCA_FLOW_L4_META_TCP – L4 type is TCP

DOCA_FLOW_L4_META_UDP – L4 type is UDP

DOCA_FLOW_L4_META_ICMP – L4 type is ICMP

DOCA_FLOW_L4_META_ESP – L4 type is ESP

inner_l2_type – Inner L2 packet type (enum doca_flow_l2_meta). Valid L2 types:

DOCA_FLOW_L2_META_NO_VLAN – No VLAN present

DOCA_FLOW_L2_META_SINGLE_VLAN – Single VLAN present

DOCA_FLOW_L2_META_MULTI_VLAN – Multiple VLAN present

inner_l3_type – Inner L3 packet type (enum doca_flow_l3_meta). Valid L3 types:

DOCA_FLOW_L3_META_NONE – L3 type is none of the below

DOCA_FLOW_L3_META_IPV4 – L3 type is IPv4

DOCA_FLOW_L3_META_IPV6 – L3 type is IPv6

inner_l4_type – Inner L4 packet type (enum doca_flow_l4_meta). Valid L4 types:

DOCA_FLOW_L4_META_NONE – L4 type is none of the below

DOCA_FLOW_L4_META_TCP – L4 type is TCP

DOCA_FLOW_L4_META_UDP – L4 type is UDP

DOCA_FLOW_L4_META_ICMP – L4 type is ICMP

DOCA_FLOW_L4_META_ESP – L4 type is ESP

outer_ip_fragmented – Whether outer IP packet is fragmented

inner_ip_fragmented – Whether inner IP packet is fragmented

outer_l3_ok – Whether outer network layer is valid regardless of IPv4 checksum

outer_ip4_checksum_ok – Whether outer IPv4 checksum is valid; packets without an outer IPv4 header are treated as having an invalid checksum

outer_l4_ok – Whether outer transport layer is valid including L4 checksum

inner_l3_ok – Whether inner network layer is valid regardless of IPv4 checksum

inner_ip4_checksum_ok – Whether inner IPv4 checksum is valid; packets without an inner IPv4 header are treated as having an invalid checksum

inner_l4_ok – Whether inner transport layer is valid including L4 checksum

Matching on either outer_l4_ok=1 or inner_l4_ok=1 means that all L4 checks (length, checksum, etc.) are ok.

Matching on either outer_l4_ok=0 or inner_l4_ok=0 means that all L4 checks are not ok.

It is not possible to match using these fields for cases where a part of the checks is okay and a part is not ok.

doca_flow_header_format

This structure defines each layer of the packet header format.

struct doca_flow_header_format {

struct doca_flow_header_eth eth;

uint16_t l2_valid_headers;

struct doca_flow_header_eth_vlan eth_vlan[DOCA_FLOW_VLAN_MAX];

enum doca_flow_l3_type l3_type;

union {

struct doca_flow_header_ip4 ip4;

struct doca_flow_header_ip6 ip6;

};

enum doca_flow_l4_type_ext l4_type_ext;

union {

struct doca_flow_header_icmp icmp;

struct doca_flow_header_udp udp;

struct doca_flow_header_tcp tcp;

struct doca_flow_header_l4_port transport;

};

};

eth – Ethernet header format, including source and destination MAC address and the Ethernet layer type. If a VLAN header is present then eth.type represents the type following the last VLAN tag.

l2_valid_headers – bitwise OR of the following options: DOCA_FLOW_L2_VALID_HEADER_VLAN_0, DOCA_FLOW_L2_VALID_HEADER_VLAN_1

eth_vlan – VLAN tag control information for each VLAN header.

l3_type – Layer 3 type, indicates the next layer is IPv4 or IPv6.

ip4 – IPv4 header format including source and destination IP address, type of service (dscp and ecn), and the next protocol.

ip6 – IPv6 header format including source and destination IP address, traffic class (dscp and ecn), and the next protocol.

l4_type_ext – The next layer type after the layer 3.

icmp – ICMP header format.

udp – UDP header format.

tcp – TCP header format.

transport – header format for source and destination ports; used for defining match or actions with relaxed matching while not caring about the L4 protocol (whether TCP or UDP). This is used only if the l4_type_ext is DOCA_FLOW_L4_TYPE_EXT_TRANSPORT.

doca_flow_tun

This structure defines tunnel headers.

struct doca_flow_tun {

enum doca_flow_tun_type type;

union {

struct {

doca_be32_t vxlan_tun_id;

};

struct {

bool key_present;

doca_be16_t protocol;

doca_be32_t gre_key;

};

struct {

doca_be32_t gtp_teid;

};

struct {

doca_be32_t esp_spi;

doca_be32_t esp_sn;

};

struct {

struct doca_flow_header_mpls mpls[DOCA_FLOW_MPLS_LABELS_MAX];

};

struct {

struct doca_flow_header_geneve geneve;

union doca_flow_geneve_option geneve_options[DOCA_FLOW_GENEVE_OPT_LEN_MAX];

};

};

};

type – type of tunnel (enum doca_flow_tun_type). Valid tunnel types:

DOCA_FLOW_TUN_VXLAN – VXLAN tunnel

DOCA_FLOW_TUN_GRE – GRE tunnel with option KEY (optional)

DOCA_FLOW_TUN_GTP – GTP tunnel

DOCA_FLOW_TUN_ESP – ESP tunnel

DOCA_FLOW_TUN_MPLS_O_UDP – MPLS tunnel (supports up to 5 headers)

DOCA_FLOW_TUN_GENEVE – GENEVE header format including option length, VNI, next protocol, and options.

vxlan_tun_id – VNI (24) + reserved (8)

key_present – GRE option KEY is present

protocol – GRE next protocol

gre_key – GRE key option, match on this field only when key_present is true

gtp_teid – GTP TEID

esp_spi – IPsec security parameter index (SPI)

esp_sn – IPsec sequence number

mpls – List of MPLS header format

geneve – GENEVE header format

geneve_options – List of DWs describing GENEVE TLV options

The following table details which tunnel types support which operation on the tunnel header:

| Tunnel Type | Match(a) | L2 encap(b) | L2 decap(b) | L3 encap(b) | L3 decap(b) | Modify(c) | Copy(d) |
|---|---|---|---|---|---|---|---|
| DOCA_FLOW_TUN_VXLAN | ✔ | ✔ | ✔ | ✘ | ✘ | ✔ | ✔ |
| DOCA_FLOW_TUN_GRE | ✔ | ✔ | ✔ | ✔ | ✔ | ✘ | ✘ |
| DOCA_FLOW_TUN_GTP | ✔ | ✘ | ✘ | ✔ | ✔ | ✔ | ✔ |
| DOCA_FLOW_TUN_ESP | ✔ | ✘ | ✘ | ✘ | ✘ | ✘ | ✘ |
| DOCA_FLOW_TUN_MPLS_O_UDP | ✔ | ✘ | ✘ | ✔ | ✔ | ✘ | ✔ |
| DOCA_FLOW_TUN_GENEVE | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ | ✔ |

(a) Support for matching on this tunnel header, configured in the tun field in struct doca_flow_match.

(b) Decapsulation/encapsulation of the tunnel header is enabled in struct doca_flow_actions using the Boolean fields decap and has_encap. An action descriptor of type DOCA_FLOW_ACTION_DECAP_ENCAP must be provided if decap/encap is enabled. The action descriptor's Boolean field is_l2 determines the decapsulation/encapsulation tunnel type. It is the user's responsibility to determine whether a tunnel is type L2 or L3. If these settings do not match the incoming packets, anomalous behavior may occur.

(c) Support for modifying this tunnel header, configured in the tun field in struct doca_flow_actions.

(d) Support for copying fields to/from this tunnel header, configured in struct doca_flow_action_descs.
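An application that builds pipes from user configuration may want to reject unsupported combinations early. The sketch below encodes the support table as a lookup array. The enum names (`sketch_tun`, `sketch_tun_op`) and their numeric values are hypothetical stand-ins, not the DOCA enums, and the table content is copied from the table above rather than queried from the library.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical stand-ins for the tunnel types listed in the table. */
enum sketch_tun {
    SKETCH_TUN_VXLAN, SKETCH_TUN_GRE, SKETCH_TUN_GTP,
    SKETCH_TUN_ESP, SKETCH_TUN_MPLS_O_UDP, SKETCH_TUN_GENEVE,
    SKETCH_TUN_COUNT
};

/* One bit per table column. */
enum sketch_tun_op {
    SKETCH_TUNOP_MATCH    = 1 << 0,
    SKETCH_TUNOP_L2_ENCAP = 1 << 1,
    SKETCH_TUNOP_L2_DECAP = 1 << 2,
    SKETCH_TUNOP_L3_ENCAP = 1 << 3,
    SKETCH_TUNOP_L3_DECAP = 1 << 4,
    SKETCH_TUNOP_MODIFY   = 1 << 5,
    SKETCH_TUNOP_COPY     = 1 << 6
};

/* Each row of the support table as a bit set. */
static const uint8_t sketch_tun_caps[SKETCH_TUN_COUNT] = {
    [SKETCH_TUN_VXLAN] = SKETCH_TUNOP_MATCH | SKETCH_TUNOP_L2_ENCAP |
                         SKETCH_TUNOP_L2_DECAP | SKETCH_TUNOP_MODIFY |
                         SKETCH_TUNOP_COPY,
    [SKETCH_TUN_GRE] = SKETCH_TUNOP_MATCH | SKETCH_TUNOP_L2_ENCAP |
                       SKETCH_TUNOP_L2_DECAP | SKETCH_TUNOP_L3_ENCAP |
                       SKETCH_TUNOP_L3_DECAP,
    [SKETCH_TUN_GTP] = SKETCH_TUNOP_MATCH | SKETCH_TUNOP_L3_ENCAP |
                       SKETCH_TUNOP_L3_DECAP | SKETCH_TUNOP_MODIFY |
                       SKETCH_TUNOP_COPY,
    [SKETCH_TUN_ESP] = SKETCH_TUNOP_MATCH,
    [SKETCH_TUN_MPLS_O_UDP] = SKETCH_TUNOP_MATCH | SKETCH_TUNOP_L3_ENCAP |
                              SKETCH_TUNOP_L3_DECAP | SKETCH_TUNOP_COPY,
    [SKETCH_TUN_GENEVE] = 0x7f /* every column is supported */
};

/* True when the table marks the operation as supported for the tunnel type. */
bool sketch_tun_supports(enum sketch_tun type, enum sketch_tun_op op)
{
    assert((unsigned)type < SKETCH_TUN_COUNT);
    return (sketch_tun_caps[type] & op) != 0;
}
```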

DOCA Flow Tunnel GENEVE

The DOCA_FLOW_TUN_GENEVE type includes the basic header for GENEVE and an array for GENEVE TLV options.

The GENEVE TLV options must be configured beforehand, at parser creation (doca_flow_parser_geneve_opt_create).

doca_flow_header_geneve

This structure defines GENEVE protocol header.

struct doca_flow_header_geneve {

uint8_t ver_opt_len;

uint8_t o_c;

doca_be16_t next_proto;

doca_be32_t vni;

};

ver_opt_len – version (2) + options length (6). The length is expressed in 4-byte multiples, excluding the GENEVE header.

o_c – OAM packet (1) + critical options present (1) + reserved (6).

next_proto – GENEVE next protocol. When GENEVE has options, it describes the protocol after the options.

vni – GENEVE VNI (24) + reserved (8).
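The packed one-byte fields can be composed with shifts and masks. The sketch below assumes the bit order of the GENEVE wire format (RFC 8926), where the sub-fields are listed from most significant to least significant bit, as in the descriptions above. The helper names are hypothetical and not part of DOCA.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* ver_opt_len: version in the top 2 bits, options length
 * (in 4-byte multiples) in the low 6 bits. */
uint8_t sketch_geneve_ver_opt_len(uint8_t version, uint8_t opt_len_dws)
{
    assert(version <= 0x3 && opt_len_dws <= 0x3f);
    return (uint8_t)((version << 6) | opt_len_dws);
}

/* o_c: OAM flag in the top bit, critical-options flag in the next bit,
 * and the 6 reserved bits left as zero. */
uint8_t sketch_geneve_o_c(bool oam, bool critical)
{
    return (uint8_t)((oam ? 0x80 : 0) | (critical ? 0x40 : 0));
}
```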

doca_flow_geneve_option

This object describes a single DW (4-bytes) from the GENEVE option header. It describes either the first DW in the option including class, type, and length, or any other data DW.

union doca_flow_geneve_option {

struct {

doca_be16_t class_id;

uint8_t type;

uint8_t length;

};

doca_be32_t data;

};

class_id – Option class ID

type – Option type

length – Reserved (3) + option data length (5). The length is expressed in 4-byte multiples, excluding the option header.

data – 4 bytes of option data
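Since each option consists of one header DW followed by its data DWs, the number of array elements an option occupies is its data length plus one. The sketch below computes that count and the total across several options, which is the quantity the options-length bits of ver_opt_len express per the GENEVE wire format (RFC 8926). The helper names are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* DWs occupied by one option: one header DW plus the data DWs.
 * Only the low 5 bits of the length byte carry the data length;
 * the 3 reserved bits are ignored. */
uint8_t sketch_geneve_option_dws(uint8_t length_field)
{
    return (uint8_t)(1 + (length_field & 0x1f));
}

/* Total DWs occupied by a sequence of options. */
unsigned sketch_geneve_total_opt_dws(const uint8_t *length_fields,
                                     size_t nb_options)
{
    unsigned total = 0;

    assert(length_fields != NULL || nb_options == 0);
    for (size_t i = 0; i < nb_options; i++)
        total += sketch_geneve_option_dws(length_fields[i]);
    return total;
}
```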

GENEVE Matching Notes

Option type and length must be provided for each option at pipe creation time in a match structure

When class mode is DOCA_FLOW_PARSER_GENEVE_OPT_MODE_FIXED, option class must also be provided for each option at pipe creation time in a match structure

Option length field cannot be matched

Type field is the option identifier, it must be provided as a specific value upon pipe creation

Option data is taken as changeable when all data is filled with 0xffffffff including DWs which were not configured at parser creation time

In the match_mask structure, the DWs which have not been configured at parser creation time must be zero

GENEVE Encapsulation Notes

The encapsulation size is constant per doca_flow_actions structure. The size is determined by the tun.geneve.ver_opt_len field at pipe creation time. The default is 0 (no options).

The total encapsulation size is limited to 128 bytes. The tun.geneve.ver_opt_len field should respect this limit according to the requested outer header sizes:

| Headers Included in Outer | Maximal tun.geneve.ver_opt_len Value |
|---|---|
| ETH + VLAN + IPV4 + UDP + GENEVE | 18 |
| ETH + VLAN + IPV6 + UDP + GENEVE | 13 |
| ETH + IPV4 + UDP + GENEVE | 19 |
| ETH + IPV6 + UDP + GENEVE | 14 |

Options in encapsulation data do not have to be configured at parser creation time
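The maximal values listed above are consistent with subtracting the outer header sizes from the 128-byte limit and dividing the remainder by 4. The sketch below reproduces that arithmetic, assuming the standard option-free header sizes (Ethernet 14, VLAN tag 4, IPv4 20, IPv6 40, UDP 8, GENEVE fixed header 8). The names are hypothetical and the library is not claimed to compute the limit this way.

```c
#include <assert.h>

/* Standard header sizes in bytes, without IP options or extension headers. */
enum {
    SKETCH_ENCAP_LIMIT = 128,
    SKETCH_ETH = 14,
    SKETCH_VLAN = 4,
    SKETCH_IPV4 = 20,
    SKETCH_IPV6 = 40,
    SKETCH_UDP = 8,
    SKETCH_GENEVE = 8
};

/* Largest options length (in 4-byte multiples) that still fits once
 * the outer headers have been accounted for. */
unsigned sketch_max_geneve_opt_len(unsigned outer_hdr_bytes)
{
    assert(outer_hdr_bytes <= SKETCH_ENCAP_LIMIT);
    return (SKETCH_ENCAP_LIMIT - outer_hdr_bytes) / 4;
}
```

For example, ETH + VLAN + IPV4 + UDP + GENEVE adds up to 54 bytes, leaving 74 bytes, which holds 18 whole DWs.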

GENEVE Decapsulation Notes

The options to decapsulate do not have to be configured at parser creation time.

doca_flow_match

This structure is a match configuration that contains the user-defined fields that should be matched on the pipe.

struct doca_flow_match {

uint32_t flags;

struct doca_flow_meta meta;

struct doca_flow_parser_meta parser_meta;

struct doca_flow_header_format outer;

struct doca_flow_tun tun;

struct doca_flow_header_format inner;

};

flags – Match items that do not require a value.

meta – Programmable metadata.

parser_meta – Read-only metadata.

outer – Outer packet header format.

tun – Tunnel info

inner – Inner packet header format.

doca_flow_actions

This structure is a flow actions configuration.

struct doca_flow_actions {

uint8_t action_idx;

uint32_t flags;

bool decap;

bool pop;

struct doca_flow_meta meta;

struct doca_flow_header_format outer;

struct doca_flow_tun tun;

bool has_encap;

struct doca_flow_encap_action encap;

bool has_push;

struct doca_flow_push_action push;

bool has_crypto_encap;

struct doca_flow_crypto_encap_action crypto_encap;

struct doca_flow_crypto_action crypto;

};

action_idx – Index according to place provided on creation.

flags – Action flags.

decap – Decapsulate when set to true. In that case, struct doca_flow_action_descs must be provided and an action descriptor of type DOCA_FLOW_ACTION_DECAP_ENCAP must be a part of it.

pop – Pop header when set to true.

meta – Modify meta value.

outer – Modify outer header.

tun – Modify tunnel header.

has_encap – Encapsulate when set to true. In that case, struct doca_flow_action_descs must be provided and an action descriptor of type DOCA_FLOW_ACTION_DECAP_ENCAP must be a part of it.

encap – Encap data information.

has_push – Push header when set to true.

push – Push header data information.

has_crypto_encap – Perform packet reformat for crypto protocols when set to true. In that case, the structure doca_flow_crypto_encap_action describes the header and trailer to be inserted or removed.

crypto_encap – Crypto protocols header and trailer data information.

crypto – Contains crypto action information.

doca_flow_encap_action

This structure is an encapsulation action configuration.

struct doca_flow_encap_action {

struct doca_flow_header_format outer;

struct doca_flow_tun tun;

};

outer – L2/3/4 layers of the outer tunnel header.

L2 - src/dst MAC addresses, ether type, VLAN

L3 - IPv4/6 src/dst IP addresses, TTL/hop_limit, dscp_ecn

L4 - the UDP dst port is determined by the tunnel type

tun – The specific fields of the used tunnel protocol. Supported tunnel types: GRE, GTP, VXLAN, MPLS, GENEVE.

doca_flow_push_action

This structure is a push action configuration.

struct doca_flow_push_action {

enum doca_flow_push_action_type type;

union {

struct doca_flow_header_eth_vlan vlan;

};

};

type – Push action type.

vlan – VLAN data.

The type field includes the following push action types:

DOCA_FLOW_PUSH_ACTION_VLAN – push VLAN.

doca_flow_crypto_encap_action

This structure is a crypto protocol packet reformat action configuration.

struct doca_flow_crypto_encap_action {

enum doca_flow_crypto_encap_action_type action_type;

enum doca_flow_crypto_encap_net_type net_type;

uint16_t icv_size;

uint16_t data_size;

uint8_t encap_data[DOCA_FLOW_CRYPTO_HEADER_LEN_MAX];

};

action_type – Reformat action type.

net_type – Protocol mode, network header type.

icv_size – Integrity check value size, in bytes; defines the trailer size.

data_size – Header size in bytes to be inserted from encap_data.

encap_data – Header data to be inserted.

The action_type field includes the following crypto encap action types:

DOCA_FLOW_CRYPTO_REFORMAT_NONE – No crypto encap action performed.

DOCA_FLOW_CRYPTO_REFORMAT_ENCAP – Add/insert the crypto header and trailer to the packet. data_size and encap_data should provide the data for the headers being inserted.

DOCA_FLOW_CRYPTO_REFORMAT_DECAP – Remove the crypto header and trailer from the packet. data_size and encap_data should provide the data for the headers being inserted for tunnel mode.

The net_type field includes the following protocol/network header types:

DOCA_FLOW_CRYPTO_HEADER_NONE – No header type specified.

DOCA_FLOW_CRYPTO_HEADER_ESP_TUNNEL – ESP tunnel mode header type. On encap, the full tunnel header data for new L2+L3+ESP should be provided. On decap, the new L2 header data should be provided.

DOCA_FLOW_CRYPTO_HEADER_ESP_OVER_IP – ESP transport over IP mode header type. On encap, the data for ESP header being inserted should be provided.

DOCA_FLOW_CRYPTO_HEADER_UDP_ESP_OVER_IP – UDP+ESP transport over IP mode header type. On encap, the data for the UDP+ESP headers being inserted should be provided.

DOCA_FLOW_CRYPTO_HEADER_ESP_OVER_LAN – ESP transport over UDP/TCP mode header type. On encap, the data for ESP header being inserted should be provided.

The icv_size field can be either 8, 12 or 16 for ESP.

The data_size field should not exceed DOCA_FLOW_CRYPTO_HEADER_LEN_MAX; can be zero for decap in non-tunnel modes.
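The size constraints above can be checked in software before an action is submitted. The following is a minimal sketch only: every SKETCH_/sketch_ name is hypothetical, the buffer limit of 64 is a placeholder rather than the real value of DOCA_FLOW_CRYPTO_HEADER_LEN_MAX, and treating a zero data_size as invalid outside of non-tunnel decap is an interpretation of the text above, not a documented rule.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical stand-ins for illustration only; the real definitions
 * come from the DOCA Flow headers and may differ. */
#define SKETCH_CRYPTO_HEADER_LEN_MAX 64u /* assumed placeholder value */

enum sketch_net_type {
	SKETCH_HEADER_NONE,
	SKETCH_HEADER_ESP_TUNNEL,
	SKETCH_HEADER_ESP_TRANSPORT /* stands for any non-tunnel mode */
};

/* The ICV size must be 8, 12, or 16 bytes for ESP. */
static bool sketch_icv_size_ok(uint16_t icv_size)
{
	return icv_size == 8 || icv_size == 12 || icv_size == 16;
}

/* data_size must fit in the header buffer. Zero is accepted only for
 * decap in a non-tunnel mode, where no header data is needed
 * (assumed interpretation of the rule above). */
static bool sketch_data_size_ok(uint16_t data_size, bool is_decap,
				enum sketch_net_type net_type)
{
	if (data_size > SKETCH_CRYPTO_HEADER_LEN_MAX)
		return false;
	if (data_size == 0)
		return is_decap && net_type != SKETCH_HEADER_ESP_TUNNEL;
	return true;
}
```

In real code, the same checks would be applied to the icv_size and data_size fields of struct doca_flow_crypto_encap_action, using the constants from the DOCA Flow headers.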

doca_flow_crypto_action

This structure is a crypto action configuration to perform packet data encryption and decryption.

struct doca_flow_crypto_action {

enum doca_flow_crypto_action_type action_type;

enum doca_flow_crypto_protocol_type proto_type;

union {

struct {

bool sn_en;

} esp;

};

uint32_t crypto_id;

};

action_type – Crypto action type.

proto_type – Protocol type.

esp.sn_en – Enable ESP sequence number generation/checking.

crypto_id – Shared crypto resource ID.

The action_type field includes the following crypto action types:

DOCA_FLOW_CRYPTO_ACTION_NONE – No crypto action specified.

DOCA_FLOW_CRYPTO_ACTION_ENCRYPT – Encrypt packet data according to the chosen protocol.

DOCA_FLOW_CRYPTO_ACTION_DECRYPT – Decrypt packet data according to the chosen protocol.

The proto_type field includes the following protocols supported:

DOCA_FLOW_CRYPTO_PROTOCOL_NONE – No crypto protocol specified.

DOCA_FLOW_CRYPTO_PROTOCOL_ESP – IPsec ESP protocol.

doca_flow_action_descs

This structure describes operations executed on packets matched by the pipe.

Detailed compatibility matrix and usage can be found in section "Summary of Action Types".

struct doca_flow_action_descs {

uint8_t nb_action_desc;

struct doca_flow_action_desc *desc_array;

};

nb_action_desc – Number of elements in the action descriptor array

desc_array – Action descriptor array pointer

struct doca_flow_action_desc

struct doca_flow_action_desc {

enum doca_flow_action_type type;

union {

struct {

struct doca_flow_action_desc_field src;

struct doca_flow_action_desc_field dst;

uint32_t width;

} field_op;

struct {

bool is_l2;

} decap_encap;

};

};

type – Action type.

field_op – Source and destination field descriptors for the copy/add operation. Add always applies from field bit 0.

decap_encap – Descriptor of decap or encap action.

The type field includes the following forwarding modification types:

DOCA_FLOW_ACTION_AUTO – modification type derived from pipe action

DOCA_FLOW_ACTION_ADD – add from field value or packet field. Supports meta scratch, ipv4_ttl, ipv6_hop, tcp_seq, and tcp_ack.

DOCA_FLOW_ACTION_COPY – copy field

DOCA_FLOW_ACTION_DECAP_ENCAP – configures decap/encap for L2 or L3 level. Descriptor of this type is mandatory when decap or has_encap is true.

doca_flow_action_desc_field

This struct is the action descriptor's field configuration.

struct doca_flow_action_desc_field {

const char *field_string;

/**< Field selection by string. */

uint32_t bit_offset;

/**< Field bit offset. */

};

field_string – Field string. Describes which packet field is selected in string format.

bit_offset – Bit offset in the field.

Warning: The complete list of supported field strings can be found in the "Field String Support" appendix.

doca_flow_monitor

This structure is a monitor configuration.

struct doca_flow_monitor {

enum doca_flow_resource_type meter_type;

/**< Type of meter configuration. */

union {

struct {

enum doca_flow_meter_limit_type limit_type;

/**< Meter rate limit type: bytes / packets per second */

uint64_t cir;

/**< Committed Information Rate (bytes/second). */

uint64_t cbs;

/**< Committed Burst Size (bytes). */

} non_shared_meter;

struct {

uint32_t shared_meter_id;

/**< shared meter id */

enum doca_flow_meter_color meter_init_color;

/**< meter initial color */

} shared_meter;

};

enum doca_flow_resource_type counter_type;

/**< Type of counter configuration. */

union {

struct {

uint32_t shared_counter_id;

/**< shared counter id */

} shared_counter;

};

uint32_t shared_mirror_id;

/**< shared mirror id. */

bool aging_enabled;

/**< Specify if aging is enabled */

uint32_t aging_sec;

/**< aging time in seconds.*/

};

meter_type – Defines the type of meter. Meters can be shared, non-shared, or not used at all.

non_shared_meter – non-shared meter params

limit_type – bytes versus packets measurement

cir – committed information rate of non-shared meter

cbs – committed burst size of non-shared meter

shared_meter – shared meter params

shared_meter_id – meter ID that can be shared among multiple pipes

meter_init_color – the initial color assigned to a packet entering the meter

counter_type – defines the type of counter. Counters can be shared, or not used at all.

shared_counter_id – counter ID that can be shared among multiple pipes

shared_mirror_id – mirror ID that can be shared among multiple pipes

aging_enabled – set to true to enable aging

aging_sec – number of seconds from the last hit after which an entry is aged out

doca_flow_resource_type is defined as follows:

enum doca_flow_resource_type {

DOCA_FLOW_RESOURCE_TYPE_NONE,

DOCA_FLOW_RESOURCE_TYPE_SHARED,

DOCA_FLOW_RESOURCE_TYPE_NON_SHARED

};

t(c) is the number of available tokens. For each packet, where b equals the number of bytes in the packet, if t(c)-b≥0 the packet can continue and tokens are consumed so that t(c)=t(c)-b. If t(c)-b<0, the packet is dropped.

CIR is the maximum bandwidth at which packets are still considered conforming. Packets exceeding this bandwidth are dropped. CBS is the maximum number of bytes by which a burst may exceed the CIR while still being considered conforming. Conforming packets are handled based on the fwd parameter.

The number of distinct <cir,cbs> pair combinations is limited to 128.
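The token accounting described above can be modeled in software as follows. This is an illustrative sketch, not the hardware implementation: the sketch_ names are hypothetical, the bucket is assumed to start full, and tokens are assumed to refill at CIR bytes per second up to a capacity of CBS bytes.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Illustrative software model only; actual metering is done in hardware. */
struct sketch_token_bucket {
	uint64_t cir;    /* refill rate, bytes per second */
	uint64_t cbs;    /* bucket capacity, bytes */
	uint64_t tokens; /* t(c): tokens currently available, bytes */
};

static void sketch_bucket_init(struct sketch_token_bucket *tb,
			       uint64_t cir, uint64_t cbs)
{
	assert(tb != NULL);
	tb->cir = cir;
	tb->cbs = cbs;
	tb->tokens = cbs; /* assumption: the bucket starts full */
}

/* Add the tokens earned over elapsed_us microseconds, capped at CBS.
 * Note: cir * elapsed_us can overflow for very large inputs. */
static void sketch_bucket_refill(struct sketch_token_bucket *tb,
				 uint64_t elapsed_us)
{
	uint64_t earned = tb->cir * elapsed_us / 1000000u;
	uint64_t room = tb->cbs - tb->tokens;

	tb->tokens += earned < room ? earned : room;
}

/* Returns true and consumes b tokens if t(c) - b >= 0;
 * returns false (packet dropped) otherwise. */
static bool sketch_bucket_admit(struct sketch_token_bucket *tb, uint64_t b)
{
	if (tb->tokens < b)
		return false;
	tb->tokens -= b;
	return true;
}
```

With cir = 1000 and cbs = 1500, for example, a first 1000-byte packet is admitted, an immediately following 1000-byte packet is dropped, and a third is admitted again once half a second of refill has elapsed.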

Metering packets can be individual (i.e., per entry) or shared among multiple entries:

For the individual use case, set meter_type to DOCA_FLOW_RESOURCE_TYPE_NON_SHARED

For the shared use case, set meter_type to DOCA_FLOW_RESOURCE_TYPE_SHARED and shared_meter_id to the meter identifier

Counting packets can be individual (i.e., per entry) or shared among multiple entries:

For the individual use case, set counter_type to DOCA_FLOW_RESOURCE_TYPE_NON_SHARED

For the shared use case, set counter_type to DOCA_FLOW_RESOURCE_TYPE_SHARED and shared_counter_id to the counter identifier

Mirroring packets can only be used as shared with a non-zero shared_mirror_id.

doca_flow_fwd

This structure is a forward configuration which directs where the packet goes next.

struct doca_flow_fwd {

enum doca_flow_fwd_type type;

union {

struct {

uint32_t rss_outer_flags;

uint32_t rss_inner_flags;

uint32_t *rss_queues;

int num_of_queues;

};

struct {

uint16_t port_id;

};

struct {

struct doca_flow_pipe *next_pipe;

};

struct {

struct doca_flow_pipe *pipe;

uint32_t idx;

} ordered_list_pipe;

struct {

struct doca_flow_target *target;

};

};

};

type – indicates the forwarding type

rss_outer_flags – RSS offload types on the outer-most layer (tunnel or non-tunnel).

rss_inner_flags – RSS offload types on the inner layer of a tunneled packet.

rss_queues – RSS queues array

num_of_queues – number of queues

port_id – destination port ID

next_pipe – next pipe pointer

ordered_list_pipe.pipe – ordered list pipe to select an entry from

ordered_list_pipe.idx – index of the ordered list pipe entry

target – target pointer

The type field includes the forwarding action types defined in the following enum:

DOCA_FLOW_FWD_RSS – forwards packets to RSS

DOCA_FLOW_FWD_PORT – forwards packets to port

DOCA_FLOW_FWD_PIPE – forwards packets to another pipe

DOCA_FLOW_FWD_DROP – drops packets

DOCA_FLOW_FWD_ORDERED_LIST_PIPE – forwards packets to a specific entry in an ordered list pipe

DOCA_FLOW_FWD_TARGET – forwards packets to a target

The rss_outer_flags and rss_inner_flags fields must be configured exclusively (either outer or inner).

Each outer/inner field is a bitwise OR of the RSS fields defined in the following enum:

DOCA_FLOW_RSS_IPV4 – RSS by IPv4 header

DOCA_FLOW_RSS_IPV6 – RSS by IPv6 header

DOCA_FLOW_RSS_UDP – RSS by UDP header

DOCA_FLOW_RSS_TCP – RSS by TCP header

When specifying an RSS L4 type (DOCA_FLOW_RSS_TCP or DOCA_FLOW_RSS_UDP), it must be combined by bitwise OR with an RSS L3 type (DOCA_FLOW_RSS_IPV4 or DOCA_FLOW_RSS_IPV6).
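The two RSS rules above (outer and inner flags are mutually exclusive, and an L4 type requires an L3 type) can be expressed as a small check. The sketch below is illustrative: the SKETCH_RSS_* bit values are hypothetical placeholders for the DOCA_FLOW_RSS_* definitions, and accepting the case where both flag sets are zero is an assumption.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical bit values for illustration; use the DOCA_FLOW_RSS_*
 * definitions from the DOCA Flow headers in real code. */
#define SKETCH_RSS_IPV4 (1u << 0)
#define SKETCH_RSS_IPV6 (1u << 1)
#define SKETCH_RSS_UDP  (1u << 2)
#define SKETCH_RSS_TCP  (1u << 3)

/* An L4 type is valid only together with at least one L3 type. */
static bool sketch_rss_layer_ok(uint32_t flags)
{
	const uint32_t l3 = SKETCH_RSS_IPV4 | SKETCH_RSS_IPV6;
	const uint32_t l4 = SKETCH_RSS_UDP | SKETCH_RSS_TCP;

	return (flags & l4) == 0 || (flags & l3) != 0;
}

/* Outer and inner flags are mutually exclusive: at most one set may be
 * non-zero (both zero is accepted here, which is an assumption). */
static bool sketch_rss_flags_ok(uint32_t outer, uint32_t inner)
{
	if (outer != 0 && inner != 0)
		return false;
	return sketch_rss_layer_ok(outer) && sketch_rss_layer_ok(inner);
}
```

For instance, an outer setting of IPv4 combined with UDP passes the check, while UDP alone, or any non-zero outer setting combined with a non-zero inner setting, does not.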

doca_flow_query

This struct is a flow query result.

struct doca_flow_query {

uint64_t total_bytes;

uint64_t total_pkts;

};

The struct doca_flow_query contains the following elements:

total_bytes – total bytes that hit this flow.

total_pkts – total packets that hit this flow.

doca_flow_init

This function is the global initialization function for DOCA Flow.

doca_error_t doca_flow_init(const struct doca_flow_cfg *cfg);

cfg [in] – Pointer to flow config structure.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input

DOCA_ERROR_NO_MEMORY – Memory allocation failed

DOCA_ERROR_NOT_SUPPORTED – Unsupported pipe type

DOCA_ERROR_UNKNOWN – Otherwise

doca_flow_init must be invoked first before any other function in this API. This is a one-time call used for DOCA Flow initialization and global configurations.

doca_flow_destroy

This function is the global destroy function for DOCA Flow.

void doca_flow_destroy(void);

doca_flow_destroy must be invoked last to stop using DOCA Flow.

doca_flow_port_start

This function starts a port with its given configuration. It creates one port in the DOCA Flow layer, allocates all resources used by this port, and creates the default offload flow rules to redirect packets into software queues.

doca_error_t doca_flow_port_start(const struct doca_flow_port_cfg *cfg,

struct doca_flow_port **port);

cfg [in] – Pointer to DOCA Flow config structure.

port [out] – Pointer to DOCA Flow port handler on success.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input

DOCA_ERROR_NO_MEMORY – Memory allocation failed

DOCA_ERROR_NOT_SUPPORTED – Unsupported pipe type

DOCA_ERROR_UNKNOWN – Otherwise

doca_flow_port_start modifies the state of the underlying DPDK port implementing the DOCA port. The DPDK port is stopped, then the flow configuration is applied, calling rte_flow_configure before starting the port again.

doca_flow_port_start must be called after doca_flow_init and before any other DOCA Flow API that operates on the port, to avoid conflicts.

In switch mode, the representor port must be stopped before the switch port is stopped.

doca_flow_port_stop

This function releases all resources used by a DOCA flow port and removes the port's default offload flow rules.

doca_error_t doca_flow_port_stop(struct doca_flow_port *port);

port [in] – Pointer to DOCA Flow port handler.

doca_flow_port_pair

This function pairs two DOCA ports. After successfully pairing the two ports, traffic received on either port is transmitted via the other port by default.

For a pair of non-representor ports, this operation is required before port-based forwarding flows can be created. It is optional, however, if either port is a representor.

The two paired ports have no order.

A port cannot be paired with itself.

doca_error_t doca_flow_port_pair(struct doca_flow_port *port,

struct doca_flow_port *pair_port);

port [in] – Pointer to the DOCA Flow port structure.

pair_port [in] – Pointer to another DOCA Flow port structure.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input.

DOCA_ERROR_NO_MEMORY – Memory allocation failed.

DOCA_ERROR_UNKNOWN – Otherwise.

doca_flow_pipe_create

This function creates a new pipeline to match and offload specific packets. The pipeline configuration is defined in the doca_flow_pipe_cfg. The API creates a new pipe, but does not start the hardware offload.

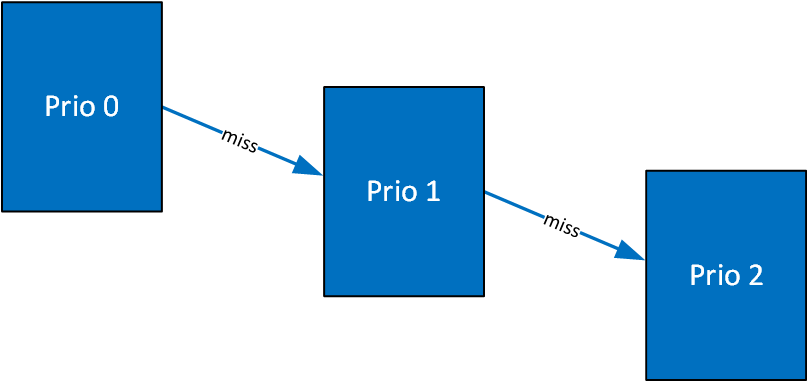

When cfg type is DOCA_FLOW_PIPE_CONTROL, the function creates a special type of pipe that can have dynamic matches and forwards with priority.

doca_error_t

doca_flow_pipe_create(const struct doca_flow_pipe_cfg *cfg,

const struct doca_flow_fwd *fwd,

const struct doca_flow_fwd *fwd_miss,

struct doca_flow_pipe **pipe);

cfg [in] – Pointer to flow pipe config structure.

fwd [in] – Pointer to flow forward config structure.

fwd_miss [in] – Pointer to flow forward miss config structure. NULL for no fwd_miss.

Warning: When a fwd_miss configuration is provided for basic and hash pipes, it is executed on miss. For any other pipe type, the configuration is ignored.

pipe [out] – Pointer to pipe handler on success.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input

DOCA_ERROR_NOT_SUPPORTED – Unsupported pipe type

DOCA_ERROR_DRIVER – Driver error

doca_flow_pipe_add_entry

This function adds a new entry to a pipe. When a packet matches a single pipe, it starts hardware offload. The pipe defines which fields to match. This API does the actual hardware offload, with the information from the fields of the input packets.

doca_error_t

doca_flow_pipe_add_entry(uint16_t pipe_queue,

struct doca_flow_pipe *pipe,

const struct doca_flow_match *match,

const struct doca_flow_actions *actions,

const struct doca_flow_monitor *monitor,

const struct doca_flow_fwd *fwd,

uint32_t flags,

void *usr_ctx,

struct doca_flow_pipe_entry **entry);

pipe_queue [in] – Queue identifier

pipe [in] – Pointer to flow pipe

match [in] – Pointer to flow match. Indicates specific packet match information.

actions [in] – Pointer to modify actions. Indicates specific modify information.

monitor [in] – Pointer to monitor actions

fwd [in] – Pointer to flow forward actions

flags [in] – can be set as DOCA_FLOW_WAIT_FOR_BATCH or DOCA_FLOW_NO_WAIT

DOCA_FLOW_WAIT_FOR_BATCH – this entry waits to be pushed to hardware

DOCA_FLOW_NO_WAIT – this entry is pushed to hardware immediately

usr_ctx [in] – Pointer to user context (see note at doca_flow_entry_process_cb)

entry [out] – Pointer to pipe entry handler on success

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input

DOCA_ERROR_DRIVER – Driver error

Some sanity checks may be omitted to avoid extra delays during flow insertion. For example, when forwarding to a pipe, the next_pipe field of struct doca_flow_fwd must contain a valid pointer. DOCA does not detect misconfigurations like these unless doca_flow_init() is configured with the verify option.

doca_flow_pipe_update_entry

This function updates an entry with a new set of actions.

doca_error_t

doca_flow_pipe_update_entry(uint16_t pipe_queue,

struct doca_flow_pipe *pipe,

const struct doca_flow_actions *actions,

const struct doca_flow_monitor *mon,

const struct doca_flow_fwd *fwd,

const enum doca_flow_flags_type flags,

struct doca_flow_pipe_entry *entry);

pipe_queue [in] – Queue identifier.

pipe [in] – Pointer to flow pipe.

actions [in] – Pointer to modify actions. Indicates specific modify information.

mon [in] – Pointer to monitor actions.

fwd [in] – Pointer to flow forward actions.

flags [in] – can be set as DOCA_FLOW_WAIT_FOR_BATCH or DOCA_FLOW_NO_WAIT.

DOCA_FLOW_WAIT_FOR_BATCH – this entry waits to be pushed to hardware.

DOCA_FLOW_NO_WAIT – this entry is pushed to hardware immediately.

entry [in] – Pointer to pipe entry to update.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input

DOCA_ERROR_DRIVER – Driver error

doca_flow_pipe_control_add_entry

This function adds a new entry to a control pipe. When a packet matches a single pipe, it starts hardware offload. The pipe defines which fields to match. This API does the actual hardware offload with the information from the fields of the input packets.

doca_error_t

doca_flow_pipe_control_add_entry(uint16_t pipe_queue,

uint32_t priority,

struct doca_flow_pipe *pipe,

const struct doca_flow_match *match,

const struct doca_flow_match *match_mask,

const struct doca_flow_actions *actions,

const struct doca_flow_actions *actions_mask,

const struct doca_flow_action_descs *action_descs,

const struct doca_flow_monitor *monitor,

const struct doca_flow_fwd *fwd,

struct doca_flow_pipe_entry **entry);

pipe_queue [in] – Queue identifier.

priority [in] – Priority value.

pipe [in] – Pointer to flow pipe.

match [in] – Pointer to flow match. Indicates specific packet match information.

match_mask [in] – Pointer to flow match mask information.

actions [in] – Pointer to modify actions. Indicates specific modify information.

actions_mask [in] – Pointer to modify actions' mask. Indicates specific modify mask information.

action_descs [in] – Pointer to action descriptors.

monitor [in] – Pointer to monitor actions.

fwd [in] – Pointer to flow forward actions.

entry [out] – Pointer to pipe entry handler on success.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input

DOCA_ERROR_DRIVER – Driver error

doca_flow_pipe_lpm_add_entry

This function adds a new entry to an LPM pipe. This API performs the actual hardware offload for all entries when flags is set to DOCA_FLOW_NO_WAIT.

doca_error_t

doca_flow_pipe_lpm_add_entry(uint16_t pipe_queue,

struct doca_flow_pipe *pipe,

const struct doca_flow_match *match,

const struct doca_flow_match *match_mask,

const struct doca_flow_actions *actions,

const struct doca_flow_monitor *monitor,

const struct doca_flow_fwd *fwd,

uint32_t flags,

void *usr_ctx,

struct doca_flow_pipe_entry **entry);

pipe_queue [in] – Queue identifier.

pipe [in] – Pointer to flow pipe.

match [in] – Pointer to flow match. Indicates specific packet match information.

match_mask [in] – Pointer to flow match mask information.

actions [in] – Pointer to modify actions. Indicates specific modify information.

monitor [in] – Pointer to monitor actions.

fwd [in] – Pointer to flow FWD actions.

flags [in] – Can be set as DOCA_FLOW_WAIT_FOR_BATCH or DOCA_FLOW_NO_WAIT.

DOCA_FLOW_WAIT_FOR_BATCH – LPM collects this flow entry

DOCA_FLOW_NO_WAIT – LPM adds this entry, builds the LPM software tree, and pushes all entries to hardware immediately

usr_ctx [in] – Pointer to user context (see note at doca_flow_entry_process_cb)

entry [out] – Pointer to pipe entry handler on success.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input.

DOCA_ERROR_DRIVER – Driver error.

doca_flow_pipe_lpm_update_entry

This function updates an LPM entry with a new set of actions.

doca_error_t

doca_flow_pipe_lpm_update_entry(uint16_t pipe_queue,

struct doca_flow_pipe *pipe,

const struct doca_flow_actions *actions,

const struct doca_flow_monitor *monitor,

const struct doca_flow_fwd *fwd,

const enum doca_flow_flags_type flags,

struct doca_flow_pipe_entry *entry);

pipe_queue [in] – Queue identifier.

pipe [in] – Pointer to flow pipe.

actions [in] – Pointer to modify actions. Indicates specific modify information.

monitor [in] – Pointer to monitor actions.

fwd [in] – Pointer to flow FWD actions.

flags [in] – Can be set as DOCA_FLOW_WAIT_FOR_BATCH or DOCA_FLOW_NO_WAIT.

DOCA_FLOW_WAIT_FOR_BATCH – LPM collects this flow entry

DOCA_FLOW_NO_WAIT – LPM updates this entry and pushes all entries to hardware immediately

entry [in] – Pointer to pipe entry to update.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input.

DOCA_ERROR_DRIVER – Driver error.

doca_flow_pipe_acl_add_entry

This function adds a new entry to an ACL pipe. This API performs the actual hardware offload for all entries when flags is set to DOCA_FLOW_NO_WAIT.

doca_error_t

doca_flow_pipe_acl_add_entry(uint16_t pipe_queue,

struct doca_flow_pipe *pipe,

const struct doca_flow_match *match,

const struct doca_flow_match *match_mask,

uint8_t priority,

const struct doca_flow_fwd *fwd,

uint32_t flags,

void *usr_ctx,

struct doca_flow_pipe_entry **entry);

pipe_queue [in] – Queue identifier.

pipe [in] – Pointer to flow pipe.

match [in] – Pointer to flow match. Indicates specific packet match information.

match_mask [in] – Pointer to flow match mask information.

priority [in] – Priority value.

fwd [in] – Pointer to flow FWD actions.

flags [in] – Can be set as DOCA_FLOW_WAIT_FOR_BATCH or DOCA_FLOW_NO_WAIT.

DOCA_FLOW_WAIT_FOR_BATCH – ACL collects this flow entry

DOCA_FLOW_NO_WAIT – ACL adds this entry, builds the ACL software model, and pushes all entries to hardware immediately

usr_ctx [in] – Pointer to user context (see note at doca_flow_entry_process_cb)

entry [out] – Pointer to pipe entry handler on success.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input

DOCA_ERROR_DRIVER – Driver error

doca_flow_pipe_ordered_list_add_entry

This function adds a new entry to an ordered list pipe. When a packet matches a single pipe, it starts hardware offload. The pipe defines which fields to match. This API does the actual hardware offload, with the information from the fields of the input packets.

doca_error_t

doca_flow_pipe_ordered_list_add_entry(uint16_t pipe_queue,

struct doca_flow_pipe *pipe,

uint32_t idx,

const struct doca_flow_ordered_list *ordered_list,

const struct doca_flow_fwd *fwd,

enum doca_flow_flags_type flags,

void *user_ctx,

struct doca_flow_pipe_entry **entry);

pipe_queue [in] – Queue identifier.

pipe [in] – Pointer to flow pipe.

idx [in] – A unique entry index. It is the user's responsibility to ensure uniqueness.

ordered_list [in] – Pointer to an ordered list structure with pointers to struct doca_flow_actions and struct doca_flow_monitor at the same indices as they were at the pipe creation time. If the configuration contained an element of struct doca_flow_action_descs, the corresponding array element is ignored and can be NULL.

fwd [in] – Pointer to flow FWD actions.

flags [in] – Can be set as DOCA_FLOW_WAIT_FOR_BATCH or DOCA_FLOW_NO_WAIT.

DOCA_FLOW_WAIT_FOR_BATCH – this entry waits to be pushed to hardware

DOCA_FLOW_NO_WAIT – this entry is pushed to hardware immediately

user_ctx [in] – Pointer to user context (see note at doca_flow_entry_process_cb).

entry [out] – Pointer to pipe entry handler to fill.

Returns – DOCA_SUCCESS in case of success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input.

DOCA_ERROR_NO_MEMORY – Memory allocation failed.

DOCA_ERROR_DRIVER – Driver error.

doca_flow_pipe_hash_add_entry

This function adds a new entry to a hash pipe. When a packet matches a single pipe, it starts hardware offload. The pipe defines which fields to match. This API does the actual hardware offload with the information from the fields of the input packets.

doca_error_t

doca_flow_pipe_hash_add_entry(uint16_t pipe_queue,

struct doca_flow_pipe *pipe,

uint32_t entry_index,

const struct doca_flow_actions *actions,

const struct doca_flow_monitor *monitor,

const struct doca_flow_fwd *fwd,

const enum doca_flow_flags_type flags,

void *usr_ctx,

struct doca_flow_pipe_entry **entry);

pipe_queue [in] – Queue identifier.

pipe [in] – Pointer to flow pipe.

entry_index [in] – A unique entry index. If the index is not unique, the function returns an error.

actions [in] – Pointer to modify actions. Indicates specific modify information.

monitor [in] – Pointer to monitor actions.

fwd [in] – Pointer to flow FWD actions.

flags [in] – Can be set as DOCA_FLOW_WAIT_FOR_BATCH or DOCA_FLOW_NO_WAIT

DOCA_FLOW_WAIT_FOR_BATCH – This entry waits to be pushed to hardware

DOCA_FLOW_NO_WAIT – This entry is pushed to hardware immediately

usr_ctx [in] – Pointer to user context (see note at doca_flow_entry_process_cb)

entry [out] – Pointer to pipe entry handler to fill.

Returns – DOCA_SUCCESS in case of success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input.

DOCA_ERROR_DRIVER – Driver error.

doca_flow_pipe_resize

This function resizes a pipe. Currently, only pipes of type DOCA_FLOW_PIPE_CONTROL are supported, and only if the pipe configuration had is_resizable == true on creation. The new congestion level is the percentage used to determine the new pipe size. For example, if there are 800 entries in the pipe and the congestion level is 50%, the new size of the pipe would be 1600 entries, rounded up to the nearest power of 2. The nr_entries_changed_cb and entry_relocation_cb callbacks are optional. The first callback reports the exact new number of entries in the pipe. The second callback reports entries that have been relocated from the smaller pipe to the resized pipe.

doca_error_t

doca_flow_pipe_resize(struct doca_flow_pipe *pipe,

uint8_t new_congestion_level,

doca_flow_pipe_resize_nr_entries_changed_cb nr_entries_changed_cb,

doca_flow_pipe_resize_entry_relocate_cb entry_relocation_cb);

pipe [in] – Pointer to pipe to be resized

new_congestion_level [in] – Percentage used to calculate the new pipe size based on the current number of entries. The final size may be rounded up to the nearest power of 2.

nr_entries_changed_cb [in] – Pointer to callback when the new pipe size is set.

entry_relocation_cb [in] – Pointer to callback for each entry in the pipe that will be part of the resized pipe.

Warning: Callbacks are optional. Either both are set or neither.

Returns – DOCA_SUCCESS on success. Error code in case of failure.
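The sizing arithmetic in the example for doca_flow_pipe_resize (800 entries at a 50% congestion level) can be sketched as below. This is an assumed reading of the rule, not the library's actual computation: the pipe grows until the current entries occupy the given percentage of it, and the result is rounded up to a power of 2. The sketch_ function names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Round v up to the next power of two (v itself if already a power of
 * two). The caller guarantees v <= 2^31 so the shift cannot overflow. */
static uint32_t sketch_round_up_pow2(uint32_t v)
{
	uint32_t p = 1;

	assert(v <= (UINT32_C(1) << 31));
	while (p < v)
		p <<= 1;
	return p;
}

/* Assumed sizing rule: grow the pipe until the current entries fill
 * congestion_level percent of it, then round up to a power of two.
 * Returns 0 for an invalid percentage or an unrepresentable size. */
static uint32_t sketch_resized_pipe_size(uint32_t nr_entries,
					 uint8_t congestion_level)
{
	uint64_t target;

	if (congestion_level == 0 || congestion_level > 100)
		return 0;
	/* Ceiling division so the pipe is never sized below the target. */
	target = ((uint64_t)nr_entries * 100 + congestion_level - 1) /
		 congestion_level;
	if (target > (UINT64_C(1) << 31))
		return 0;
	return sketch_round_up_pow2((uint32_t)target);
}
```

Under this reading, sketch_resized_pipe_size(800, 50) computes a target of 1600 entries and rounds it up to 2048. The exact size chosen by DOCA Flow is the one reported through nr_entries_changed_cb.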

doca_flow_pipe_process_cb

This callback typedef defines the notification API invoked once congestion is reached or once the resize operation is completed.

enum doca_flow_pipe_op {

DOCA_FLOW_PIPE_OP_CONGESTION_REACHED,

DOCA_FLOW_PIPE_OP_RESIZED,

...

};

enum doca_flow_pipe_status {

DOCA_FLOW_PIPE_STATUS_SUCCESS = 1,

DOCA_FLOW_PIPE_STATUS_ERROR,

};

typedef void (*doca_flow_pipe_process_cb)(struct doca_flow_pipe *pipe,

enum doca_flow_pipe_status status,

enum doca_flow_pipe_op op, void *user_ctx);

pipe [in] – Pointer to the pipe whose process is reported.

status [in] – Process completion status (i.e., success or error).

op [in] – Operation reported in process, such as CONGESTION_REACHED, RESIZED.

user_ctx [in] – Pointer to user pipe context as configured on pipe creation.

doca_flow_entries_process

This function processes entries in the queue. The application must invoke this function to complete flow rule offloading and to receive the flow rule's operation status.

If doca_flow_pipe_resize() is called, this function must also be invoked to complete the resize process (i.e., until DOCA_FLOW_PIPE_OP_RESIZED is received as part of the doca_flow_pipe_process_cb() callback).

doca_error_t

doca_flow_entries_process(struct doca_flow_port *port,

uint16_t pipe_queue,

uint64_t timeout,

uint32_t max_processed_entries);

port [in] – Pointer to the flow port structure.

pipe_queue [in] – Queue identifier.

timeout [in] – Timeout value in microseconds.

max_processed_entries [in] – Maximum number of flow entries to process.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_DRIVER – Driver error.

doca_flow_entry_status

This function gets the status of a pipe entry.

enum doca_flow_entry_status

doca_flow_entry_get_status(struct doca_flow_pipe_entry *entry);

entry [in] – Pointer to the flow pipe entry to query.

Returns – Entry's status, defined in the following enum:

DOCA_FLOW_ENTRY_STATUS_IN_PROCESS – the operation is in progress

DOCA_FLOW_ENTRY_STATUS_SUCCESS – the operation completed successfully

DOCA_FLOW_ENTRY_STATUS_ERROR – the operation failed

doca_flow_query_entry

This function queries packet statistics about a specific pipe entry.

The pipe must have been created with the DOCA_FLOW_MONITOR_COUNT flag or the query will return an error.

doca_error_t doca_flow_query_entry(struct doca_flow_pipe_entry *entry, struct doca_flow_query *query_stats);

entry [in] – Pointer to the flow pipe entry to query

query_stats [out] – Pointer to the data retrieved by the query

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input

DOCA_ERROR_UNKNOWN – Otherwise

doca_flow_query_pipe_miss

This function queries packet statistics about a specific pipe miss flow.

The pipe must have been created with the miss_counter set to true or the query will return an error.

doca_error_t doca_flow_query_pipe_miss(struct doca_flow_pipe *pipe, struct doca_flow_query *query_stats);

pipe [in] – Pointer to the flow pipe to query.

query_stats [out] – Pointer to the data retrieved by the query.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input

DOCA_ERROR_UNKNOWN – Otherwise

doca_flow_aging_handle

This function handles the aging of all the pipes of a given port. It goes over all flows and releases aged flows from being tracked. The user gets a notification in the callback about the aged entries. Since the number of flows can be very large, it can take a significant amount of time to go over all flows, so this function is limited by a time quota. This means it might return without handling all flows which requires the user to call it again.

The pipe needs to have been created with the DOCA_FLOW_MONITOR_COUNT flag or the query will return an error.

int doca_flow_aging_handle(struct doca_flow_port *port,

uint16_t queue,

uint64_t quota);

port [in] – Pointer to the DOCA Flow port structure.

queue [in] – Queue identifier.

quota [in] – Max time quota in microseconds for this function to handle aging.

Returns

>0 – the number of aged flows filled in entries array.

0 – no aged entries in current call.

-1 – full cycle is done.
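Because the function is bounded by a time quota, callers typically invoke it repeatedly until a full cycle is reported. The loop below sketches that pattern under stated assumptions: sketch_aging_fn is a hypothetical function-pointer type that mirrors only the return convention listed above (with the port passed as an opaque pointer), and sketch_fake_aging is a demonstration stub, not part of DOCA Flow.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical stand-in with the same return convention as
 * doca_flow_aging_handle(): >0 aged entries handled, 0 none in this
 * call, -1 full cycle done. */
typedef int (*sketch_aging_fn)(void *port, uint16_t queue, uint64_t quota_us);

/* Calls handle until it reports a full cycle or max_calls is reached.
 * Returns the total number of aged entries reported and sets
 * *cycle_done accordingly. */
static uint64_t sketch_run_aging_cycle(sketch_aging_fn handle, void *port,
				       uint16_t queue, uint64_t quota_us,
				       unsigned int max_calls, bool *cycle_done)
{
	uint64_t total = 0;
	unsigned int i;

	assert(handle != NULL && cycle_done != NULL);
	*cycle_done = false;
	for (i = 0; i < max_calls; i++) {
		int ret = handle(port, queue, quota_us);

		if (ret < 0) {
			*cycle_done = true;
			break;
		}
		total += (uint64_t)ret;
	}
	return total;
}

/* Demonstration stub: reports 3 aged entries on each of the first two
 * calls, then signals that the full cycle is done. The port argument
 * is reused here as a pointer to a call counter. */
static int sketch_fake_aging(void *port, uint16_t queue, uint64_t quota_us)
{
	int *calls = port;

	(void)queue;
	(void)quota_us;
	return (*calls)++ < 2 ? 3 : -1;
}
```

The max_calls bound keeps the caller from spinning indefinitely when the cycle does not complete, so remaining work is picked up on the next invocation.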

doca_flow_mpls_label_encode

This function prepares an MPLS label header in big-endian. Input variables are provided in CPU-endian.

doca_error_t

doca_flow_mpls_label_encode(uint32_t label, uint8_t traffic_class, uint8_t ttl, bool bottom_of_stack,

struct doca_flow_header_mpls *mpls);

label [in] – Label value (20 bits).

traffic_class [in] – Traffic class (3 bits).

ttl [in] – Time to live (8 bits).

bottom_of_stack [in] – Whether this MPLS is bottom-of-stack.

mpls [out] – Pointer to MPLS structure to fill.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input.

doca_flow_mpls_label_decode

This function decodes an MPLS label header. Output variables are returned in CPU-endian.

doca_error_t

doca_flow_mpls_label_decode(const struct doca_flow_header_mpls *mpls, uint32_t *label,

uint8_t *traffic_class, uint8_t *ttl, bool *bottom_of_stack);

mpls [in] – Pointer to MPLS structure to decode.

label [out] – Pointer to fill MPLS label value.

traffic_class [out] – Pointer to fill MPLS traffic class value.

ttl [out] – Pointer to fill MPLS TTL value.

bottom_of_stack [out] – Pointer to fill whether this MPLS is bottom-of-stack.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input
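
To make the field layout concrete, the following sketch packs and unpacks the standard 32-bit MPLS label stack entry (20-bit label, 3-bit traffic class, 1-bit bottom-of-stack, 8-bit TTL) in big-endian byte order. It is an illustration of the encoding only, not the DOCA implementation: struct mpls_hdr_sketch, mpls_encode_sketch, and mpls_decode_sketch are hypothetical names, and the real struct doca_flow_header_mpls layout is not assumed.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical stand-in for a 4-byte MPLS header kept in network byte order. */
struct mpls_hdr_sketch {
    uint8_t bytes[4];
};

/* Returns 0 on success, -1 when an input does not fit its field width. */
static int mpls_encode_sketch(uint32_t label, uint8_t tc, uint8_t ttl, bool bos,
                              struct mpls_hdr_sketch *out)
{
    uint32_t word;

    if (out == NULL || label > 0xFFFFFu || tc > 0x7u)
        return -1;

    /* label[31:12] | traffic class[11:9] | bottom-of-stack[8] | TTL[7:0] */
    word = (label << 12) | ((uint32_t)tc << 9) | ((bos ? 1u : 0u) << 8) | ttl;

    /* Store most significant byte first (big-endian), independent of CPU endianness. */
    out->bytes[0] = (uint8_t)(word >> 24);
    out->bytes[1] = (uint8_t)(word >> 16);
    out->bytes[2] = (uint8_t)(word >> 8);
    out->bytes[3] = (uint8_t)word;
    return 0;
}

/* NULL pointers are treated as programming errors in this sketch. */
static void mpls_decode_sketch(const struct mpls_hdr_sketch *in, uint32_t *label,
                               uint8_t *tc, uint8_t *ttl, bool *bos)
{
    uint32_t word;

    assert(in != NULL && label != NULL && tc != NULL && ttl != NULL && bos != NULL);
    word = ((uint32_t)in->bytes[0] << 24) | ((uint32_t)in->bytes[1] << 16) |
           ((uint32_t)in->bytes[2] << 8) | (uint32_t)in->bytes[3];

    *label = word >> 12;
    *tc = (uint8_t)((word >> 9) & 0x7u);
    *bos = ((word >> 8) & 0x1u) != 0;
    *ttl = (uint8_t)(word & 0xFFu);
}
```

For example, label 16 with traffic class 0, TTL 64, and bottom-of-stack set encodes to the bytes 00 01 01 40, and decoding those bytes returns the original values.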

doca_flow_parser_geneve_opt_create

This function must be called before creation of any pipe using GENEVE option.

This operation is only supported when FLEX_PARSER_PROFILE_ENABLE = 8. To do that, run:

mlxconfig -d <device-id> set FLEX_PARSER_PROFILE_ENABLE=8

This function prepares a GENEVE TLV parser for a selected port.

This API is port oriented, but the configuration is done once for all ports under the same physical device. Each port should call this API before using GENEVE options, but it must use the same options in the same order in the list.

Each physical device has 7 DWs for GENEVE TLV options. Each non-zero element in the data_mask array consumes one DW, and choosing a matchable mode per class consumes one additional DW.

Calling this API for a second port under the same physical device does not consume more DWs, as it uses the same configuration.

doca_error_t

doca_flow_parser_geneve_opt_create(const struct doca_flow_port *port,

const struct doca_flow_parser_geneve_opt_cfg tlv_list[],

uint8_t nb_options, struct doca_flow_parser **parser);

port [in] – Pointer to DOCA Flow port.

tlv_list [in] – Array of GENEVE TLV options to create the parser for. The index of an option in this array is used as the option identifier in the action descriptor string.

nb_options [in] – The number of options in the TLV array.

parser [out] – Pointer to parser handler to fill on success.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input

DOCA_ERROR_NO_MEMORY – Memory allocation failed

DOCA_ERROR_NOT_SUPPORTED – Unsupported configuration

DOCA_ERROR_ALREADY_EXIST – Physical device already has parser, by either same or another port

DOCA_ERROR_UNKNOWN – Otherwise
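
The DW budget described for this function can be checked before the parser is created. The sketch below counts the DWs a TLV list would consume, assuming one DW for each non-zero data_mask element and one extra DW for each option whose class is matchable. struct geneve_opt_sketch and both helper functions are hypothetical and deliberately do not mirror the real struct doca_flow_parser_geneve_opt_cfg layout.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define GENEVE_DW_BUDGET 7u     /* DWs per physical device, as stated in this section */
#define SKETCH_MAX_DATA_DWS 31u /* a GENEVE option carries at most 31 DWs of data */

/* Hypothetical, simplified view of one TLV option for budgeting purposes only. */
struct geneve_opt_sketch {
    bool class_matchable;                    /* assumption: matchable class costs one DW */
    uint8_t data_len;                        /* number of valid DWs in data_mask */
    uint32_t data_mask[SKETCH_MAX_DATA_DWS]; /* non-zero element: that DW is parsed */
};

/* Count the DWs consumed by the whole option list. */
static unsigned geneve_dw_cost(const struct geneve_opt_sketch *opts, size_t nb_options)
{
    unsigned cost = 0;

    assert(opts != NULL || nb_options == 0);
    for (size_t i = 0; i < nb_options; i++) {
        assert(opts[i].data_len <= SKETCH_MAX_DATA_DWS);
        if (opts[i].class_matchable)
            cost++;
        for (uint8_t j = 0; j < opts[i].data_len; j++) {
            if (opts[i].data_mask[j] != 0)
                cost++;
        }
    }
    return cost;
}

/* True when the list fits into the per-device budget. */
static bool geneve_fits_budget(const struct geneve_opt_sketch *opts, size_t nb_options)
{
    return geneve_dw_cost(opts, nb_options) <= GENEVE_DW_BUDGET;
}
```

Under these assumptions, an option with a matchable class and one non-zero mask DW costs 2 DWs, so a list of two such options uses 4 of the 7 available DWs.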

doca_flow_parser_geneve_opt_destroy

This function must be called after the last use of the GENEVE option and before port closing.

This function destroys GENEVE TLV parser.

doca_error_t

doca_flow_parser_geneve_opt_destroy(struct doca_flow_parser *parser);

parser [in] – Pointer to parser to be destroyed.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input.

DOCA_ERROR_IN_USE – One of the options is being used by a pipe.

DOCA_ERROR_DRIVER – There is no valid GENEVE TLV parser in this handle.

DOCA_ERROR_UNKNOWN – Otherwise.

doca_flow_get_target

This function gets a target handler.

doca_error_t

doca_flow_get_target(enum doca_flow_target_type type, struct doca_flow_target **target);

type [in] – Target type.

target [out] – Pointer to target handler.

Returns – DOCA_SUCCESS on success. Error code in case of failure:

DOCA_ERROR_INVALID_VALUE – Received invalid input.

DOCA_ERROR_NOT_SUPPORTED – Unsupported type.

The type field includes the following target type:

DOCA_FLOW_TARGET_KERNEL

doca_flow_port_switch_get

struct doca_flow_port *

doca_flow_port_switch_get(const struct doca_flow_port *port);

port [in] – The port for which to get the associated switch port.

Returns – The switch port, or NULL if none exists.

doca_flow_pipe_calc_hash

doca_error_t

doca_flow_pipe_calc_hash(struct doca_flow_pipe *pipe, const struct doca_flow_match *match, uint32_t *hash);

pipe [in] – Pointer to flow pipe.

match [in] – Pointer to flow match. Indicates specific packet match information.

hash [out] – Holds the calculation result for a given packet.

Limitations:

Can only be used by hash pipe

Must have only fixed actions

Only fully masked items are supported. Partial masks are treated as full masks.

A shared counter can be used in multiple pipe entries. The following are the steps for configuring and using shared counters.

On doca_flow_init()

Specify the total number of shared counters to be used, nb_shared_counters.

This call implicitly defines the shared counter IDs in the range 0 to nb_shared_counters-1.

.nr_shared_resources = {

[DOCA_FLOW_SHARED_RESOURCE_COUNT] = nb_shared_counters

},

On doca_flow_shared_resource_cfg()

This call can be skipped for shared counters.

On doca_flow_shared_resources_bind()

This call binds a batch of shared counter IDs to a specific pipe or port.

doca_error_t

doca_flow_shared_resources_bind(enum doca_flow_shared_resource_type type, uint32_t *res_array,

uint32_t res_array_len, void *bindable_obj);

res_array [in] – Array of shared counters IDs to be bound.

res_array_len [in] – Array length.

bindable_obj [in] – Pointer to either a pipe or port.

This call allocates the counter objects. A counter ID specified in this array can only be used later by the corresponding bindable object (pipe or port).

The following example binds counter IDs 2, 4, and 7 to a pipe. The counter IDs must be within the range 0 to nb_shared_counters-1.

uint32_t shared_counters_ids[] = {2, 4, 7};

struct doca_flow_pipe *pipe = ...

doca_flow_shared_resources_bind(

DOCA_FLOW_SHARED_RESOURCE_COUNT,

shared_counters_ids, 3, pipe);
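
Since the IDs must fall inside the range declared at initialization, it can be useful to validate an ID array before binding it. A minimal sketch of such a check follows. shared_ids_in_range is a hypothetical helper, not a DOCA function, and nb_shared stands for the value configured as nb_shared_counters.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/*
 * Hypothetical pre-bind check: every ID must be in the range
 * 0 to nb_shared-1, where nb_shared is the count given at init time.
 */
static bool shared_ids_in_range(const uint32_t *ids, size_t len, uint32_t nb_shared)
{
    assert(ids != NULL || len == 0);
    for (size_t i = 0; i < len; i++) {
        if (ids[i] >= nb_shared)
            return false; /* this ID was never defined by the init call */
    }
    return true;
}
```

With the IDs 2, 4, and 7 from the example above, the check passes when at least 8 shared counters were configured and fails when only 7 were.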

On doca_flow_pipe_add_entry() or Pipe Configuration (struct doca_flow_pipe_cfg)

The shared counter ID is included in the monitor parameter. It must be bound in advance to the pipe object.

struct doca_flow_monitor {

...

uint32_t shared_counter_id;

/**< shared counter id */

...

};

Packets matching the pipe entry are counted on the shared_counter_id. In the pipe configuration, shared_counter_id can be marked as changeable (all FFs), in which case each pipe entry holds its specific shared counter ID.

In switch mode, verifying counter domain is skipped.

Querying Bulk of Shared Counter IDs

Use this API:

int

doca_flow_shared_resources_query(enum doca_flow_shared_resource_type type,

uint32_t *res_array,

struct doca_flow_shared_resource_result *query_results_array,

uint32_t array_len,

struct doca_flow_error *error);

res_array [in] – Array of shared counters IDs to be queried.

array_len [in] – Array length.

query_results_array [out] – Query results array. The user must have allocated it prior to calling this API.

The type parameter is DOCA_FLOW_SHARED_RESOURCE_COUNT.

On doca_flow_pipe_destroy() or doca_flow_port_stop()

All bound resource IDs of this pipe or port are destroyed.

A shared meter can be used in multiple pipe entries (supported in hardware steering mode only).

The shared meter action marks a packet with one of three colors: green, yellow, or red. The packet color can then be matched in the next pipe, and an appropriate action may be taken. For example, packets marked red are usually dropped, so the pipe that follows the meter action may have an entry which matches on red and has fwd type DOCA_FLOW_FWD_DROP.

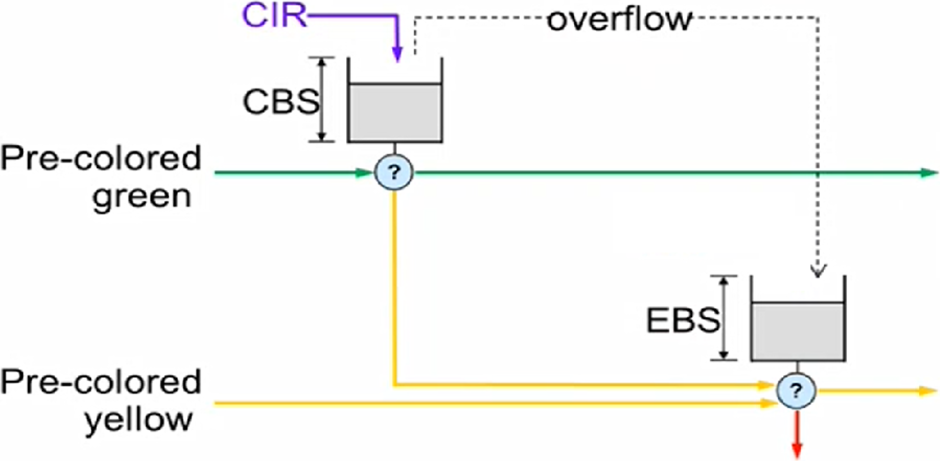

DOCA Flow supports three marking algorithms based on RFCs: 2697, 2698, and 4115.

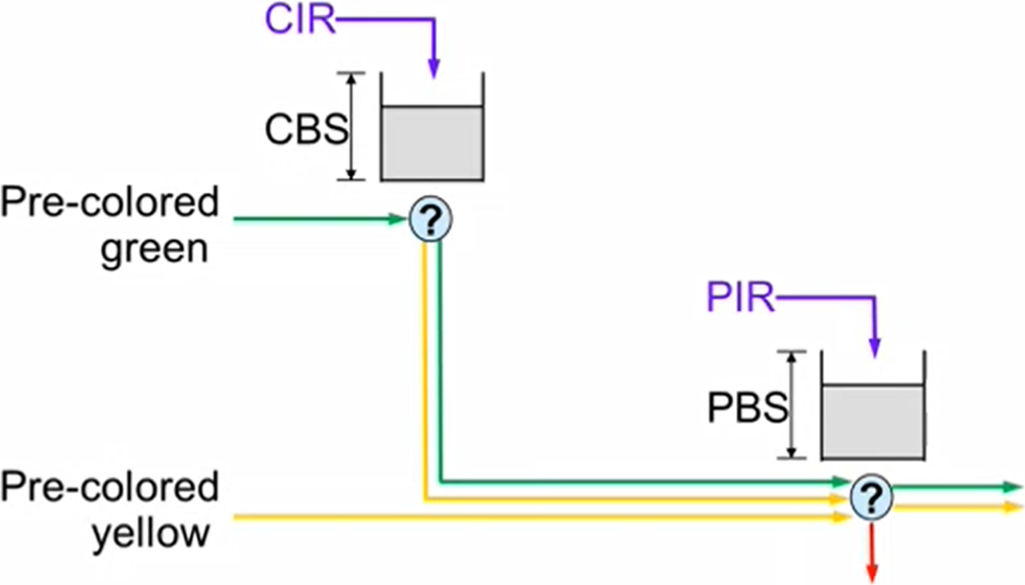

RFC 2697 – Single-rate Three Color Marker (srTCM)

CBS (committed burst size) is the bucket size which is granted credentials at a CIR (committed information rate). If CBS overflow occurs, credentials are passed to the EBS (excess burst size) bucket. Packets passing through the meter consume credentials. A packet is marked green if it does not exceed the CBS, yellow if it exceeds the CBS but not the EBS, and red otherwise. A packet can have an initial color upon entering the meter. A pre-colored yellow packet will start consuming credentials from the EBS.
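
The marking rule can be illustrated with a small software model. The following is a sketch of a color-blind srTCM, assuming byte-based buckets, a caller-supplied monotonic timestamp in nanoseconds, and buckets that start full. All names are hypothetical; it does not describe how the hardware meter is implemented, and it ignores pre-colored packets.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

enum srtcm_color { SRTCM_GREEN, SRTCM_YELLOW, SRTCM_RED };

struct srtcm {
    uint64_t cir;     /* committed information rate, bytes per second */
    uint64_t cbs;     /* committed burst size, bytes */
    uint64_t ebs;     /* excess burst size, bytes */
    uint64_t tc;      /* credits currently in the CBS bucket */
    uint64_t te;      /* credits currently in the EBS bucket */
    uint64_t last_ns; /* timestamp of the last refill */
};

static void srtcm_init(struct srtcm *m, uint64_t cir, uint64_t cbs, uint64_t ebs,
                       uint64_t now_ns)
{
    assert(m != NULL);
    m->cir = cir;
    m->cbs = cbs;
    m->ebs = ebs;
    m->tc = cbs; /* both buckets start full */
    m->te = ebs;
    m->last_ns = now_ns;
}

/* Grant credits at CIR; what does not fit in the CBS bucket spills into EBS. */
static void srtcm_refill(struct srtcm *m, uint64_t now_ns)
{
    /* The product may overflow for extreme rates or gaps; fine for a sketch. */
    uint64_t credits = m->cir * (now_ns - m->last_ns) / 1000000000ull;
    uint64_t room;

    if (credits == 0)
        return; /* keep last_ns so that short gaps still accumulate */
    m->last_ns = now_ns;

    room = m->cbs - m->tc;
    if (credits <= room) {
        m->tc += credits;
    } else {
        uint64_t overflow = credits - room;
        uint64_t ebs_room = m->ebs - m->te;

        m->tc = m->cbs;
        m->te += overflow < ebs_room ? overflow : ebs_room;
    }
}

/* Color-blind marking: green from CBS, else yellow from EBS, else red. */
static enum srtcm_color srtcm_mark(struct srtcm *m, uint32_t pkt_len, uint64_t now_ns)
{
    assert(m != NULL);
    srtcm_refill(m, now_ns);
    if (m->tc >= pkt_len) {
        m->tc -= pkt_len;
        return SRTCM_GREEN;
    }
    if (m->te >= pkt_len) {
        m->te -= pkt_len;
        return SRTCM_YELLOW;
    }
    return SRTCM_RED;
}
```

With CIR 1000 bytes/second, CBS 1500, and EBS 3000, a burst of five 1000-byte packets at the same instant is marked green, yellow, yellow, yellow, red. One second later the CBS bucket has been refilled and the next 1000-byte packet is green again.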

RFC 2698 – Two-rate Three Color Marker (trTCM)

CBS and CIR are defined as in RFC 2697. PBS (peak burst size) is a second bucket which is granted credentials at a PIR (peak information rate). There is no overflow of credentials from the CBS bucket to the PBS bucket. The PIR must be equal to or greater than the CIR. Packets consuming CBS credentials consume PBS credentials as well. A packet is marked red if it exceeds the PIR. Otherwise, it is marked either yellow or green depending on whether it exceeds the CIR or not. A packet can have an initial color upon entering the meter. A pre-colored yellow packet starts consuming credentials from the PBS.
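
A comparable software model for the two-rate marker is sketched below. It is color-blind, assumes byte-based buckets that start full and a caller-supplied monotonic timestamp in nanoseconds, and drops fractional credits on each refill. All names are hypothetical and the code is an illustration of the marking rule, not of the hardware meter.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

enum trtcm_color { TRTCM_GREEN, TRTCM_YELLOW, TRTCM_RED };

struct trtcm {
    uint64_t cir;     /* committed information rate, bytes per second */
    uint64_t cbs;     /* committed burst size, bytes */
    uint64_t pir;     /* peak information rate, bytes per second */
    uint64_t pbs;     /* peak burst size, bytes */
    uint64_t tc;      /* credits currently in the CBS bucket */
    uint64_t tp;      /* credits currently in the PBS bucket */
    uint64_t last_ns; /* timestamp of the last refill */
};

static void trtcm_init(struct trtcm *m, uint64_t cir, uint64_t cbs, uint64_t pir,
                       uint64_t pbs, uint64_t now_ns)
{
    assert(m != NULL);
    assert(pir >= cir); /* the PIR must be equal to or greater than the CIR */
    m->cir = cir;
    m->cbs = cbs;
    m->pir = pir;
    m->pbs = pbs;
    m->tc = cbs; /* both buckets start full */
    m->tp = pbs;
    m->last_ns = now_ns;
}

/* Each bucket is refilled at its own rate; nothing spills between them. */
static void trtcm_refill(struct trtcm *m, uint64_t now_ns)
{
    uint64_t elapsed_ns = now_ns - m->last_ns;
    /* The products may overflow for extreme rates or gaps; fine for a sketch. */
    uint64_t c = m->cir * elapsed_ns / 1000000000ull;
    uint64_t p = m->pir * elapsed_ns / 1000000000ull;

    m->last_ns = now_ns;
    m->tc = (c < m->cbs - m->tc) ? m->tc + c : m->cbs;
    m->tp = (p < m->pbs - m->tp) ? m->tp + p : m->pbs;
}

/* Color-blind marking: red above the peak rate, yellow above the committed rate. */
static enum trtcm_color trtcm_mark(struct trtcm *m, uint32_t pkt_len, uint64_t now_ns)
{
    assert(m != NULL);
    trtcm_refill(m, now_ns);
    if (m->tp < pkt_len)
        return TRTCM_RED;
    if (m->tc < pkt_len) {
        m->tp -= pkt_len;
        return TRTCM_YELLOW;
    }
    m->tc -= pkt_len; /* green packets consume from both buckets */
    m->tp -= pkt_len;
    return TRTCM_GREEN;
}
```

With CIR 1000 bytes/second, CBS 1000, PIR 2000 bytes/second, and PBS 2000, three back-to-back 1000-byte packets are marked green, yellow, and red. After one second both buckets are full again and the next packet is green.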

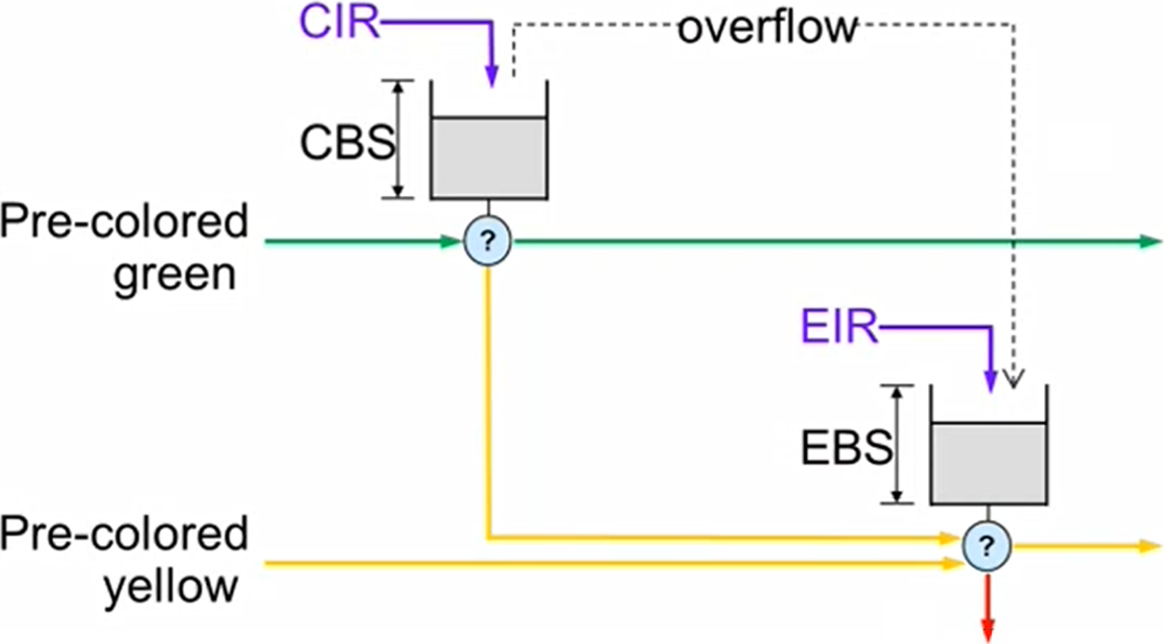

RFC 4115 – trTCM without Peak-rate Dependency

EBS is a second bucket which is granted credentials at an EIR (excess information rate) and also receives credentials that overflow from the CBS bucket. For the packet marking algorithm, refer to RFC 4115.

The following sections present the steps for configuring and using shared meters to mark packets.

On doca_flow_init()

Specify the total number of shared meters to be used, nb_shared_meters.

The following call is an example of how to initialize both the shared counter and shared meter ranges. It implicitly defines the shared counter IDs in the range 0 to nb_shared_counters-1 and the shared meter IDs in the range 0 to nb_shared_meters-1.

struct doca_flow_cfg cfg = {

.queues = queues,

...

.nr_shared_resources = {nb_shared_meters, nb_shared_counters, ...},

};

doca_flow_init(&cfg, &error);

On doca_flow_shared_resource_cfg()

This call binds a specific meter ID with its committed information rate (CIR) and committed burst size (CBS).

struct doca_flow_resource_meter_cfg {

...

uint64_t cir;

/**< Committed Information Rate (bytes/second). */

uint64_t cbs;

/**< Committed Burst Size (bytes). */

...

};

struct doca_flow_shared_resource_cfg {

union {

struct doca_flow_resource_meter_cfg meter_cfg;

...

};

};

int

doca_flow_shared_resource_cfg(enum doca_flow_shared_resource_type type, uint32_t id,