NVIDIA DRIVE OS 5.1 Linux SDK Developer Guide 5.1.12.0 Release |

NVIDIA DRIVE OS 5.1 Linux SDK Developer Guide 5.1.12.0 Release |

Component | Description |

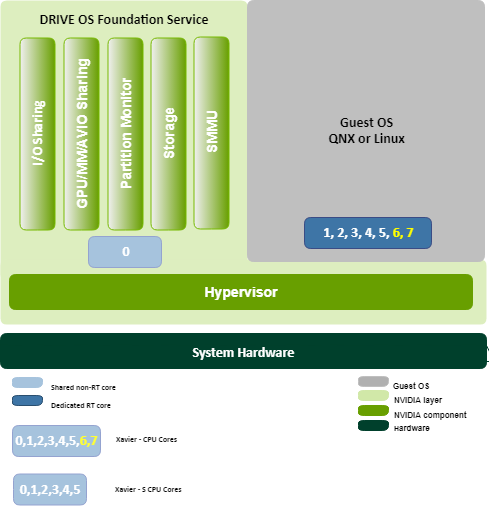

Hypervisor | Trusted Software server that separates the system into partitions. Each partition can contain an operating system or a bare-metal application. The Hypervisor manages: • Guest OS partitions and the isolation between them. • Partitions’ virtual views of the CPU and memory resources. • Hardware interactions • Run-lists • Channel recovery Hypervisor is optimized to run on the ARMv8.2 Architecture. |

I/O Sharing Server | Allocates peripherals that Guest OS need to control. |

Storage | Virtualizes storage devices on SoC. |

GPU/MM/AVIO Sharing | Components that manage isolated hardware services for: • Graphics and computation (GPU) • Codecs (MM) • Display, video capture and audio I/O (AVIO) |

Partition Monitor | Monitors partitions, including: • Dynamically loads/unloads and starts/stops partitions and virtual memory allocations. • Logs partition use. • Provides interactive debugging of guest operating systems. |

Note: | Starting in DRIVE OS 5.1.9.0, the Guest OS is assigned 7 dedicated CPU cores and the other VMs depicted are assigned to 1 core. Earlier releases had all functions shared across all 8 cores. Previous releases had all functions shared across all 8 cores (prior to 5.1.6.0) or across 6 cores to Guest and 2 cores to VM servers in 5.1.6.0. |

Component | Description |

The NVIDIA CUDA® Deep Neural Network library (cuDNN) is a GPU-accelerated library of primitives for deep neural networks. cuDNN provides highly tuned implementations for standard routines such as forward and backward convolution, pooling, normalization, and activation layers. cuDNN is part of the NVIDIA Deep Learning SDK. Consult the cuDNN Deep Neural Network Library of primitives for deep neural network development. | |

NVIDIA TensorRT™ is a high-performance deep learning inference optimizer and runtime that delivers low latency, high-throughput inference for deep learning applications. Consult the TensorRT Documentation for deep learning development. | |

CUDA® is a parallel computing platform and programming model developed by NVIDIA for general computing on graphical processing units (GPUs). With CUDA, developers are able to dramatically speed up computing applications by harnessing the power of GPUs. Consult the CUDA Samples provided as an educational resource. Consult the CUDA Computing Platform Development Guide for general purpose computing development. |