
With NVIDIA Nsight 4.7, you can set parameters of your CUDA project in order to customize your debugging experience.

To configure your project's CUDA properties page:

- In the Solution Explorer, click on the project name so that it is highlighted.

- From the Project menu, choose Properties. The Property Pages window opens.

- Select CUDA C/C++ in the left pane.

Common

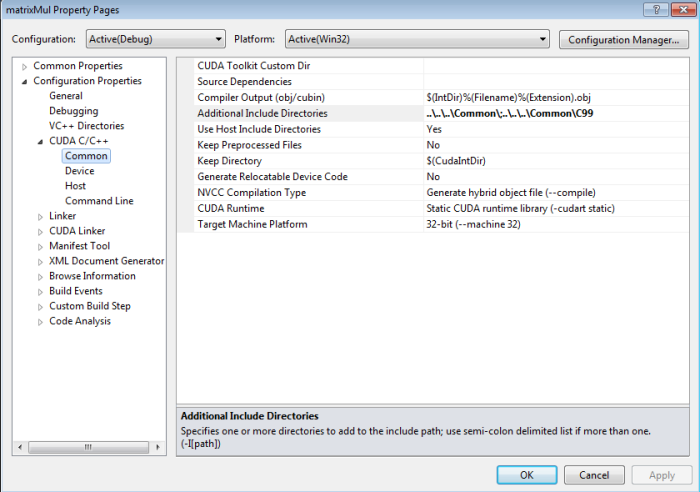

On the Common page, you can configure the following options:

- CUDA Toolkit Custom Dir – This option sets a custom path to the CUDA toolkit. You can edit the path, select "Browse" to choose the path, or select "inherit from parent or project defaults."

- Source Dependencies – This option lets you add source file dependencies. A dependency that is already pulled in by an #include statement does not need to be explicitly specified in this field.

- Compiler Output (obj/cubin) – This sets the output as an .obj or a .cubin file. The default setting is $(IntDir)%(Filename)%(Extension).obj.

- Additional Include Directories – This option lists one or more additional directories to add to the include path. If you have more than one, separate them with semicolons.

- Use Host Include Directories –

- Keep Preprocessed Files – This option chooses whether the preprocessor files generated by the CUDA compiler (for example, .ptx, .cubin, and .cudafe1.c) are kept or deleted.

- Keep Directory – This option sets the path to the directory where the preprocessor files generated by the CUDA compiler will be kept.

- Generate Relocatable Device Code – This setting chooses whether or not to compile the input file into an object file that contains relocatable device code.

- NVCC Compilation Type – This option sets your desired output of NVCC compilation. Choices here include the following:

  - Generate hybrid object file (--compile)

  - Generate hybrid .c file (-cuda)

  - Generate .gpu file (-gpu)

  - Generate .cubin file (-cubin)

  - Generate .ptx file (-ptx)

- CUDA Runtime – This option allows you to specify the type of CUDA runtime library to be used. The choices here include the following:

  - No CUDA runtime library (-cudart none)

  - Shared/dynamic CUDA runtime library (-cudart shared)

  - Static CUDA runtime library (-cudart static)

- Target Machine Platform – This sets the platform of the target machine (either x86 or x64).
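As a rough illustration of how some of the Common settings relate to an nvcc invocation, the sketch below turns a semicolon-separated Additional Include Directories value, an NVCC Compilation Type, and a CUDA Runtime choice into command-line arguments. The function `common_page_args`, its parameters, and the short keys used for the compilation types are hypothetical and not part of Nsight. The compilation-type and -cudart flags are the ones listed above, and -I is the usual nvcc include-path option. The command line that Nsight actually generates may differ.

```python
# Hypothetical helper for illustration only; not part of Nsight or the CUDA toolkit.

# Flags as listed for the NVCC Compilation Type option (keys are made-up short names).
COMPILATION_TYPE_FLAGS = {
    "object": "--compile",
    "c": "-cuda",
    "gpu": "-gpu",
    "cubin": "-cubin",
    "ptx": "-ptx",
}

# Values as listed for the CUDA Runtime option.
CUDART_CHOICES = ("none", "shared", "static")


def common_page_args(include_dirs, compilation_type, cudart):
    """Build a list of nvcc arguments from Common-page style settings."""
    if compilation_type not in COMPILATION_TYPE_FLAGS:
        raise ValueError(f"unknown compilation type {compilation_type!r}")
    if cudart not in CUDART_CHOICES:
        raise ValueError(f"unknown CUDA runtime choice {cudart!r}")

    args = [COMPILATION_TYPE_FLAGS[compilation_type], "-cudart", cudart]

    # The property value separates directories with semicolons; skip empty entries.
    for directory in include_dirs.split(";"):
        directory = directory.strip()
        if directory:
            args.append("-I" + directory)
    return args


if __name__ == "__main__":
    print(common_page_args(r"C:\sdk\inc;..\common", "ptx", "shared"))
```

Returning a list of arguments rather than one joined string avoids quoting problems when a directory contains spaces.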

Device

On the Device page, you can configure the following options:

- C interleaved in PTXAS Output – This setting chooses whether or not to insert source code into generated PTX.

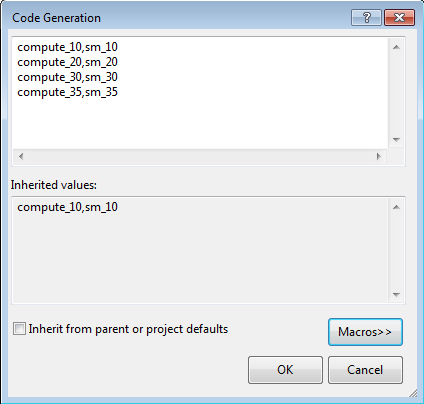

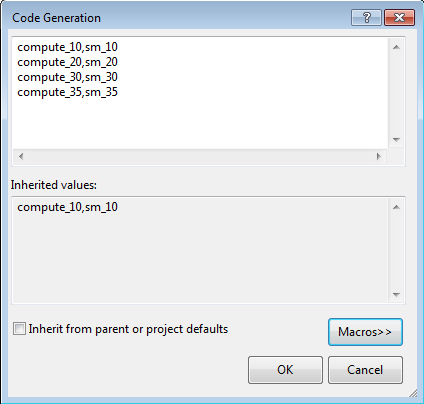

- Code Generation – This option specifies the names of the NVIDIA GPU architectures to generate code for. If you click Edit from the drop-down menu, the following pop-up appears:

If you edit this field, the correct syntax to use is [arch],[code] (for example, compute_10,sm_10). If the selected NVCC Compilation Type is compile, then multiple arch/code pairs may be listed, separated by semicolons (for example, compute_10,sm_10;compute_20,sm_20).

Valid values for arch and code include the following: compute_10, compute_11, compute_12, compute_13, compute_20, compute_30, sm_10, sm_11, sm_12, sm_13, sm_20, sm_21, sm_30, sm_35.

- Generate GPU Debug Information – This setting selects whether or not GPU debugging information is generated by the CUDA compiler.

- Generate Line Number Information – This option chooses whether or not to generate line number information for device code. If Generate GPU Debug Information is on (-G), line information (-lineinfo) is automatically generated as well.

- Max Used Register – This option specifies the maximum number of registers that GPU functions can use.

- Verbose PTXAS Output – This option selects whether or not to use verbose PTXAS output.
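The [arch],[code] syntax of the Code Generation field can be sketched as a small parser. The example below splits a field value into arch/code pairs, checks each name against the values listed above, and emits one nvcc -gencode argument per pair. The function names and the single `VALID_NAMES` set are assumptions made for this illustration; Nsight's own validation and the exact arguments it generates may differ.

```python
# Hypothetical helpers for illustration only; not part of Nsight or the CUDA toolkit.

# Names listed as valid for this field (treated here as one combined set).
VALID_NAMES = {
    "compute_10", "compute_11", "compute_12", "compute_13",
    "compute_20", "compute_30",
    "sm_10", "sm_11", "sm_12", "sm_13", "sm_20", "sm_21", "sm_30", "sm_35",
}


def parse_code_generation(value):
    """Split '[arch],[code];[arch],[code]' into a list of (arch, code) tuples."""
    pairs = []
    for entry in value.split(";"):
        entry = entry.strip()
        if not entry:
            continue  # tolerate a trailing semicolon
        parts = [part.strip() for part in entry.split(",")]
        if len(parts) != 2:
            raise ValueError(f"expected '[arch],[code]', got {entry!r}")
        arch, code = parts
        for name in (arch, code):
            if name not in VALID_NAMES:
                raise ValueError(f"unknown architecture name {name!r}")
        pairs.append((arch, code))
    return pairs


def gencode_args(value):
    """Turn a Code Generation field value into nvcc -gencode arguments."""
    args = []
    for arch, code in parse_code_generation(value):
        args += ["-gencode", f"arch={arch},code={code}"]
    return args


if __name__ == "__main__":
    print(gencode_args("compute_10,sm_10;compute_20,sm_20"))
```

A malformed entry such as `compute_10` without a code part raises `ValueError` instead of being passed through to the compiler.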

Host

On the Host page, you can configure the following options:

- Additional Compiler Options – This setting lists additional host compiler options that are not supported by the host's project properties.

- Preprocessor Definitions – This option allows you to list preprocessor defines.

- Use Host Preprocessor Definitions – This option selects whether or not to use the defines that were used by the host compiler for device code.

- Emulation – This option specifies whether or not to generate emulated code.

- Generate Host Debug Information – This option specifies whether or not the host debugging information will be generated by the CUDA compiler.

- Use Fast Math – This option selects whether or not to make use of the fast math library.

- Optimization – This field selects the option for code optimization. Available choices include the following:

  - <inherit from host>

  - Disabled (/Od)

  - Minimize Size (/O1)

  - Maximize Speed (/O2)

  - Full Optimization (/Ox)

- Runtime Library – This field selects the runtime library to use for linking. Available choices include the following:

  - <inherit from host>

  - Multi-Threaded (/MT)

  - Multi-Threaded Debug (/MTd)

  - Multi-Threaded DLL (/MD)

  - Multi-Threaded Debug DLL (/MDd)

  - Single-Threaded (/ML)

  - Single-Threaded Debug (/MLd)

- Basic Runtime Checks – This field enables basic run-time error checks, which are incompatible with any optimization type other than debug. Available choices include the following:

  - <inherit from host>

  - Default

  - Stack Frames (/RTCs)

  - Uninitialized Variables (/RTCu)

  - Both (/RTC1)

- Enable Run-Time Type Info – This option chooses whether or not to add code for checking C++ object types at run time.

- Warning Level – This option selects how strictly you want the compiler to be when checking for potentially suspect constructs. Available choices here include the following:

  - <inherit from host>

  - Off: Turn Off All Warnings (/W0)

  - Level 1 (/W1)

  - Level 2 (/W2)

  - Level 3 (/W3)

  - Level 4 (/W4)

  - Enable All Warnings (/Wall)
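The Host settings above map onto host compiler switches, which nvcc can forward through its -Xcompiler option as a comma-separated list. The sketch below combines an Optimization choice with an optional Basic Runtime Checks choice and rejects combinations that the Basic Runtime Checks description rules out. The function `host_compiler_arg` is hypothetical, and the sketch assumes that the "debug" optimization type corresponds to Disabled (/Od); it is not a description of how Nsight builds its command line.

```python
# Hypothetical helper for illustration only; not part of Nsight or the CUDA toolkit.

# Switches as listed for the Optimization option.
OPTIMIZATION_FLAGS = {
    "Disabled": "/Od",
    "Minimize Size": "/O1",
    "Maximize Speed": "/O2",
    "Full Optimization": "/Ox",
}

# Switches as listed for the Basic Runtime Checks option.
RUNTIME_CHECK_FLAGS = {
    "Stack Frames": "/RTCs",
    "Uninitialized Variables": "/RTCu",
    "Both": "/RTC1",
}


def host_compiler_arg(optimization, runtime_checks=None):
    """Return nvcc arguments that forward host switches via -Xcompiler."""
    flags = [OPTIMIZATION_FLAGS[optimization]]
    if runtime_checks is not None:
        # Assumption: only the Disabled (/Od) setting counts as "debug".
        if optimization != "Disabled":
            raise ValueError(
                "basic runtime checks require optimization to be Disabled (/Od)"
            )
        flags.append(RUNTIME_CHECK_FLAGS[runtime_checks])
    # -Xcompiler accepts a comma-separated list of host compiler options.
    return ["-Xcompiler", ",".join(flags)]


if __name__ == "__main__":
    print(host_compiler_arg("Disabled", "Both"))
```

Checking the combination up front gives a clearer error than letting the host compiler reject the conflicting switches later in the build.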

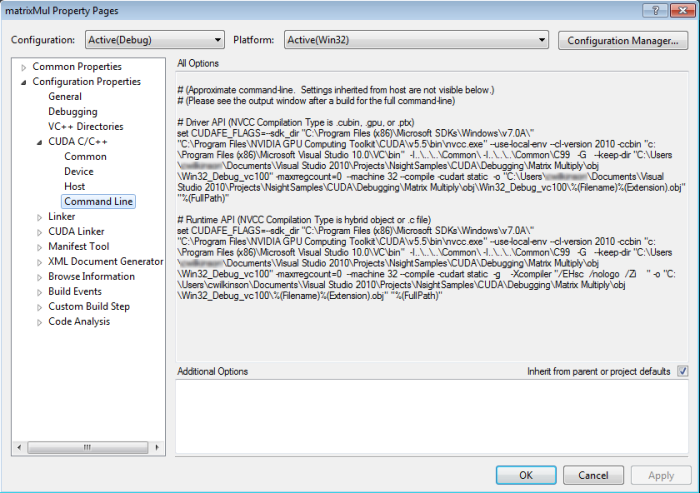

Command Line

The Command Line page shows the approximate command line parameters, given the settings you've chosen.

NVIDIA GameWorks Documentation Rev. 1.0.150630 ©2015. NVIDIA Corporation. All Rights Reserved.