TAO Toolkit Integration with DeepStream

NVIDIA TAO toolkit is an easy-to-use training toolkit that requires minimal coding to create vision AI models from the user’s own data. With TAO toolkit, users can apply transfer learning to NVIDIA pre-trained models to create their own models. They can add new classes to an existing pre-trained model or re-train the model to adapt it to their use case, and they can use the model pruning capability to reduce the overall size of the model.

Pre-trained models

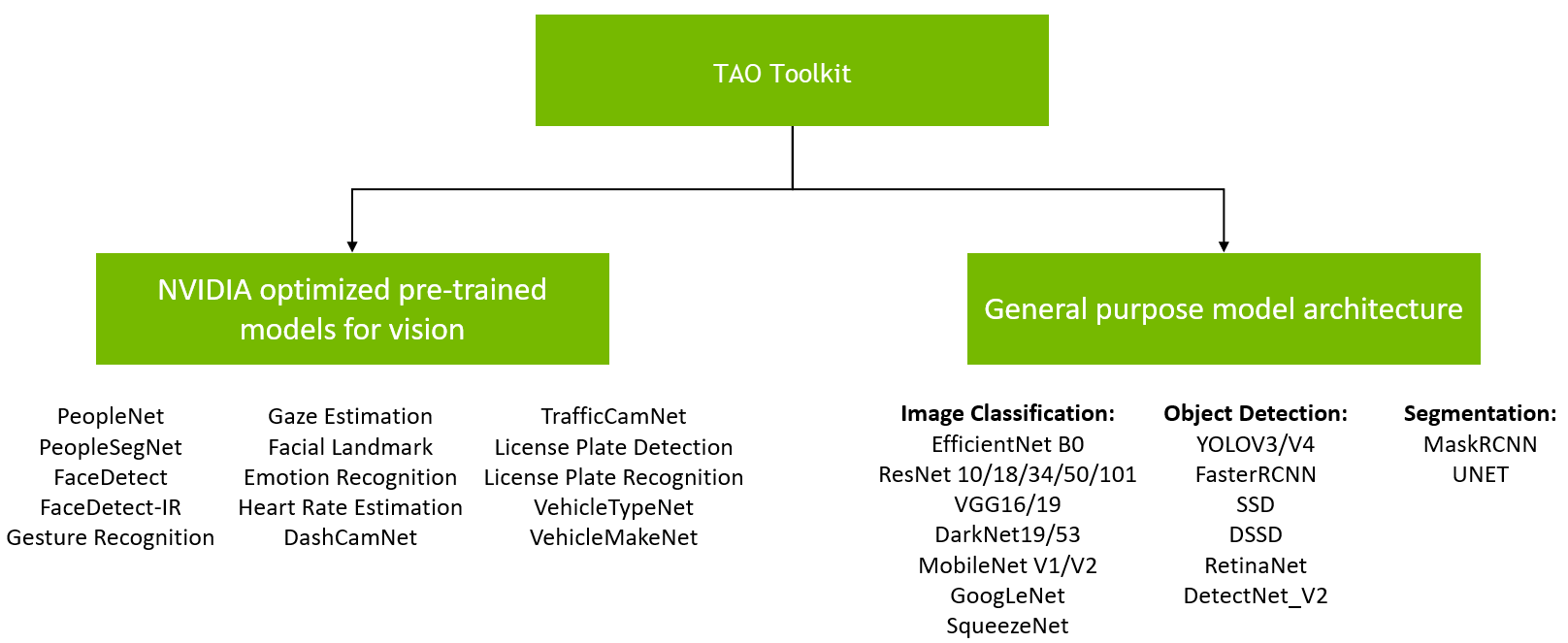

There are two types of pre-trained models that users can start with: purpose-built pre-trained models and meta-architecture vision models. Purpose-built pre-trained models are highly accurate models trained on millions of objects for a specific task. The pre-trained weights for meta-architecture vision models act only as a starting point for building more complex models. These weights are trained on the Open Images dataset and provide a much better starting point for training than starting from scratch or from random weights. With meta-architecture vision models, users can choose from 100+ permutations of model architecture and backbone. See the illustration above.

The purpose-built models are built for high accuracy and performance. They can be deployed out of the box for smart city or smart places applications, or re-trained with the user’s own data. All models are trained on millions of objects and achieve very high accuracy on our test data. More information about each of these models is available in the Purpose-built models chapter of the TAO toolkit documentation or in the individual model cards. Typical use cases and some model KPIs are provided in the table below. PeopleNet can be used for detecting and counting people in smart buildings, retail, hospitals, etc. For smart traffic applications, TrafficCamNet and DashCamNet can be used to detect and track vehicles on the road.

TAO toolkit pretrained models - use cases

| Model Name | Network Architecture | Number of classes | Accuracy | Use case |
|---|---|---|---|---|
| | DetectNet_v2-ResNet18 | 4 | 83.50% | Detect and track cars |
| | DetectNet_v2-ResNet18/34 | 3 | 84% | People counting, heatmap generation, social distancing |
| | DetectNet_v2-ResNet18 | 4 | 80% | Identify objects from a moving object |
| | DetectNet_v2-ResNet18 | 1 | 96% | Detect face in a dark environment with IR camera |
| | ResNet18 | 20 | 91% | Classifying car models |
| | ResNet18 | 6 | 96% | Classifying type of cars as coupe, sedan, truck, etc. |
| | DetectNet_v2-ResNet18 | 1 | 98% | Detect license plates on vehicles |
| | Tuned ResNet18 | 36 (US) / 68 (CH) | 97% (US) / 99% (CH) | Recognize characters in license plates. Available for American and Chinese license plates |
| | MaskRCNN-ResNet50 | 1 | 85% | Detect and segment people in crowded environment |
| | UNET | 3 | 94.01 | Detect people and provide a semantic segmentation mask in an image |

Most models trained with TAO toolkit are natively integrated for inference with DeepStream. If a model is natively integrated, it is supported by the reference deepstream-app. If it is not natively integrated in the SDK, you can find a reference application on the GitHub repo. The tables below list the supported models. For models integrated into deepstream-app, sample config files are provided for each network in the samples/configs/tao_pretrained_models folder. The tables below also list the config files for each model.

Note

Refer to the README at /opt/nvidia/deepstream/deepstream/samples/configs/tao_pretrained_models/README.md to obtain the TAO toolkit config files and models mentioned in the following table.

The TAO toolkit pre-trained models table shows the deployment information of purpose-built pre-trained models.

TAO toolkit pre-trained models in DeepStream

| Pre-Trained model | DeepStream reference app | Config files | DLA supported |
|---|---|---|---|
| | deepstream-app | deepstream_app_source1_trafficcamnet, config_infer_primary_trafficcamnet.txt, labels_trafficnet.txt | Yes |
| | deepstream-app | deepstream_app_source1_peoplenet.txt, config_infer_primary_peoplenet.txt, labels_peoplenet.txt | Yes |
| | deepstream-app | deepstream_app_source1_dashcamnet_vehiclemakenet_vehicletypenet.txt, config_infer_primary_dashcamnet.txt, labels_dashcamnet.txt | Yes |
| | deepstream-app | deepstream_app_source1_faceirnet.txt, config_infer_primary_faceirnet.txt, labels_faceirnet.txt | Yes |
| | deepstream-app | deepstream_app_source1_dashcamnet_vehiclemakenet_vehicletypenet.txt, config_infer_secondary_vehiclemakenet.txt, labels_vehiclemakenet.txt | Yes |
| | deepstream-app | deepstream_app_source1_dashcamnet_vehiclemakenet_vehicletypenet.txt, config_infer_secondary_vehicletypenet.txt, labels_vehicletypenet.txt | Yes |
| | | | Yes |
| | | | No |
| | | | No |
| | | | No |
| | | | No |
| | | | No |
| | | | No |

The TAO toolkit model arch table shows the deployment information of the open model architecture models from TAO toolkit.

TAO Toolkit 2.0 model architecture in DeepStream

| TLT 2.0 Model Architecture | Location | Reference app | Config files | DS Version |
|---|---|---|---|---|
| YoloV3 | | deepstream-app | config_infer_primary_yolov3.txt, yolov3_labels.txt | DS5.0 onwards |
| RetinaNet | | deepstream-app | config_infer_primary_retinanet.txt, retinanet_labels.txt | DS5.0 onwards |
| SSD | | deepstream-app | config_infer_primary_ssd.txt, ssd_labels.txt | DS5.0 onwards |
| DSSD | | deepstream-app | config_infer_primary_dssd.txt, dssd_labels.txt | DS5.0 onwards |
| FasterRCNN | | deepstream-app | config_infer_primary_frcnn.txt, frcnn_labels.txt | DS5.0 onwards |
| MaskRCNN | | deepstream-app | config_infer_primary_mrcnn.txt, mrcnn_labels.txt | DS5.0 onwards |

Note

For YoloV3/RetinaNet/SSD/DSSD/FasterRCNN:

cd $DS_TOP/samples/configs/tao_pretrained_models/

Then edit deepstream_app_source1_detection_models.txt so that config-file under the primary-gie section points to the model config file you want, and run:

deepstream-app -c deepstream_app_source1_detection_models.txt

For MaskRCNN:

deepstream-app -c deepstream_app_source1_mrcnn.txt
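The manual edit described in this note can also be scripted. The following is a minimal sketch, assuming the deepstream-app config file is a plain key-file that Python's `configparser` can read (no duplicate keys within a section). The helper name `point_primary_gie` and the sample file contents are hypothetical and only illustrate the idea. Note that `configparser` drops comments when it writes the file back, so keep a copy of the original.

```python
import configparser
import io


def point_primary_gie(app_config_text: str, infer_config: str) -> str:
    """Return the app config text with [primary-gie] config-file set to infer_config."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.optionxform = str  # keep key names exactly as written
    parser.read_string(app_config_text)
    if not parser.has_section("primary-gie"):
        raise KeyError("no [primary-gie] section found")
    parser.set("primary-gie", "config-file", infer_config)
    out = io.StringIO()
    parser.write(out, space_around_delimiters=False)
    return out.getvalue()


# Hypothetical, shortened stand-in for deepstream_app_source1_detection_models.txt
sample = """\
[primary-gie]
enable=1
config-file=config_infer_primary_yolov3.txt
"""

updated = point_primary_gie(sample, "config_infer_primary_ssd.txt")
print(updated)
```

In practice you would read the real file, pass its text to the helper, write the result to a new file, and pass that file to deepstream-app with -c.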

TAO toolkit 3.0 model architecture in DeepStream

| TAO 3.0 Model Architecture | Location | Reference app | Config file | DS Version |
|---|---|---|---|---|
| YoloV3 | https://github.com/NVIDIA-AI-IOT/deepstream_tao_apps/tree/release/tao3.0 | | | DS5.0 onwards |
| YoloV4 | https://github.com/NVIDIA-AI-IOT/deepstream_tao_apps/tree/release/tao3.0 | | | DS5.0 onwards |
| RetinaNet | https://github.com/NVIDIA-AI-IOT/deepstream_tao_apps/tree/release/tao3.0 | | | DS5.0 onwards |
| SSD | https://github.com/NVIDIA-AI-IOT/deepstream_tao_apps/tree/release/tao3.0 | | | DS5.0 onwards |
| DSSD | https://github.com/NVIDIA-AI-IOT/deepstream_tao_apps/tree/release/tao3.0 | | | DS5.0 onwards |
| FasterRCNN | https://github.com/NVIDIA-AI-IOT/deepstream_tao_apps/tree/release/tao3.0 | | | DS5.0 onwards |
| UNET | https://github.com/NVIDIA-AI-IOT/deepstream_tao_apps/tree/release/tao3.0 | | | DS5.1 onwards |
| PeopleSegNet | https://github.com/NVIDIA-AI-IOT/deepstream_tao_apps/tree/release/tao3.0 | | | DS5.0 onwards |

Note

Learn more about running the tao3.0 models here: https://github.com/NVIDIA-AI-IOT/deepstream_tao_apps/tree/release/tao3.0

Except for UNET and License plate recognition (LPR), all models can be deployed in the native etlt format, which is the TAO export format. For the UNET and LPR models, you need to convert the etlt file to a TensorRT engine before running it with DeepStream. Use tao-converter to convert the etlt file to a TensorRT engine. tao-converter builds are hardware specific; see the table below for the appropriate version for your hardware.
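As a rough illustration of the conversion step, the sketch below assembles a tao-converter command line in Python. It assumes the `-k` (model key), `-t` (engine precision), and `-e` (output engine path) options listed in the tao-converter help text; verify them with `tao-converter -h` for your build. Model-specific options, such as input dimensions or optimization profiles, are not covered here and would be passed through `extra_args`. The helper name, file names, and key are hypothetical placeholders.

```python
import shlex


def tao_converter_command(etlt_path, key, engine_path, precision="fp16", extra_args=()):
    """Build an argv list for tao-converter (assumed flags: -k, -t, -e)."""
    if precision not in {"fp32", "fp16"}:
        # int8 is left out of this sketch because it needs calibration data
        raise ValueError(f"unsupported precision in this sketch: {precision}")
    return [
        "tao-converter",
        "-k", key,           # key used when the model was exported
        "-t", precision,     # precision of the generated engine
        "-e", engine_path,   # where to write the TensorRT engine
        *extra_args,         # model-specific options, if any
        etlt_path,           # input etlt file goes last
    ]


# Hypothetical file names and key, for illustration only
cmd = tao_converter_command("model.etlt", "MY_KEY", "model.engine")
print(shlex.join(cmd))
```

The resulting list can be handed to `subprocess.run(cmd, check=True)` on the target machine. The engine must be generated on the same hardware and software stack that will run it, which is why the converter build has to match your platform.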

TAO converter

| Platform | Compute | Link |
|---|---|---|
| x86 + GPU | CUDA 10.2 / cuDNN 8.0 / TensorRT 7.1 | |
| x86 + GPU | CUDA 10.2 / cuDNN 8.0 / TensorRT 7.1 | |
| x86 + GPU | CUDA 11.0 / cuDNN 8.0 / TensorRT 7.1 | |
| x86 + GPU | CUDA 11.0 / cuDNN 8.0 / TensorRT 7.1 | |
| x86 + GPU | CUDA 11.1 / cuDNN 8.0 / TensorRT 7.2 | |
| x86 + GPU | CUDA 11.3 / cuDNN 8.0 / TensorRT 8.0 | |
| Jetson | JetPack 4.4 | |
| Jetson | JetPack 4.5 | |
| Jetson | JetPack 4.6 | |
| Clara AGX | CUDA 11.1 / cuDNN 8.0.5 / TensorRT 7.2.2 | |

For more information about TAO and how to deploy TAO models with DeepStream, refer to the Integrating TAO Models into DeepStream chapter of the TAO toolkit user guide.

For more information about deploying architecture-specific models, refer to the https://github.com/NVIDIA-AI-IOT/deepstream_tao_apps and https://github.com/NVIDIA-AI-IOT/deepstream_lpr_app GitHub repos.