Detailed Description

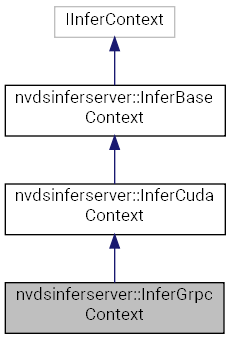

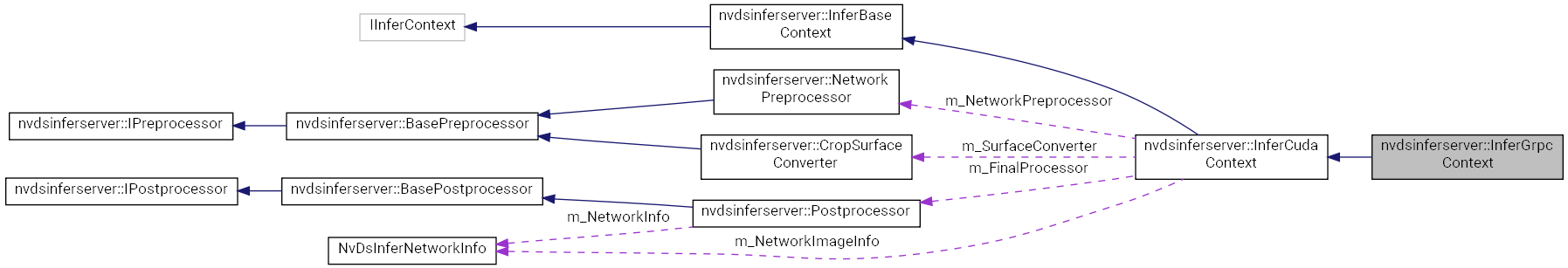

Inference context class accessing Triton Inference Server in gRPC mode.

Definition at line 37 of file sources/libs/nvdsinferserver/infer_grpc_context.h.

Public Member Functions | |

| InferGrpcContext () | |

| Constructor, default. More... | |

| ~InferGrpcContext () override | |

| Destructor, default. More... | |

| SharedSysMem | acquireTensorHostBuf (const std::string &name, size_t bytes) |

| Allocator. More... | |

| SharedCuEvent | acquireTensorHostEvent () |

| Acquire a CUDA event from the events pool. More... | |

| NvDsInferStatus | initialize (const std::string &prototxt, InferLoggingFunc logFunc) final |

| NvDsInferStatus | run (SharedIBatchArray input, InferOutputCb outputCb) final |

Protected Member Functions | |

| NvDsInferStatus | createNNBackend (const ic::BackendParams &params, int maxBatchSize, UniqBackend &backend) |

| Create the Triton gRPC mode inference processing backend. More... | |

| NvDsInferStatus | deinit () override |

| Synchronize on the CUDA stream and call InferCudaContext::deinit(). More... | |

| SharedCuStream & | mainStream () override |

| Get the main processing CUDA stream. More... | |

| NvDsInferStatus | fixateInferenceInfo (const ic::InferenceConfig &config, BaseBackend &backend) override |

| Check the tensor order, media format, and datatype for the input tensor. More... | |

| NvDsInferStatus | createPreprocessor (const ic::PreProcessParams &params, std::vector< UniqPreprocessor > &processors) override |

| Create the surface converter and network preprocessor. More... | |

| NvDsInferStatus | createPostprocessor (const ic::PostProcessParams &params, UniqPostprocessor &processor) override |

| Create the post-processor as per the network output type. More... | |

| NvDsInferStatus | preInference (SharedBatchArray &inputs, const ic::InferenceConfig &config) override |

| Initialize non-image input layers if the custom library has implemented the interface. More... | |

| NvDsInferStatus | extraOutputTensorCheck (SharedBatchArray &outputs, SharedOptions inOptions) override |

| Post inference steps for the custom processor and LSTM controller. More... | |

| void | notifyError (NvDsInferStatus status) override |

| In case of error, notify the waiting threads. More... | |

| void | getNetworkInputInfo (NvDsInferNetworkInfo &networkInfo) override |

| Get the network input layer information. More... | |

| int | tensorPoolSize () const |

| Get the size of the tensor pool. More... | |

| virtual void | backendConsumedInputs (SharedBatchArray inputs) |

| const ic::InferenceConfig & | config () const |

| int | maxBatchSize () const |

| int | uniqueId () const |

| BaseBackend * | backend () |

| const SharedDllHandle & | customLib () const |

| bool | needCopyInputToHost () const |

| void | print (NvDsInferLogLevel l, const char *msg) |

| bool | needPreprocess () const |

Protected Attributes | |

| NvDsInferNetworkInfo | m_NetworkImageInfo {0, 0, 0} |

| Network input height, width, channels for preprocessing. More... | |

| InferMediaFormat | m_NetworkImageFormat = InferMediaFormat::kRGB |

| The input layer media format. More... | |

| std::string | m_NetworkImageName |

| The input layer name. More... | |

| InferTensorOrder | m_InputTensorOrder = InferTensorOrder::kNone |

| The input layer tensor order. More... | |

| InferDataType | m_InputDataType = InferDataType::kFp32 |

| The input layer datatype. More... | |

| std::vector< SharedCudaTensorBuf > | m_ExtraInputs |

| Array of buffers of the additional inputs. More... | |

| MapBufferPool< std::string, UniqSysMem > | m_HostTensorPool |

| Map of pools for the output tensors. More... | |

| SharedBufPool< std::unique_ptr< CudaEventInPool > > | m_HostTensorEvents |

| Pool of CUDA events for host tensor copy. More... | |

| UniqLstmController | m_LstmController |

| LSTM controller. More... | |

| UniqStreamManager | m_MultiStreamManager |

| Stream-id based management. More... | |

| UniqInferExtraProcessor | m_ExtraProcessor |

| Extra and custom processing pre/post inference. More... | |

Constructor & Destructor Documentation

◆ InferGrpcContext()

| nvdsinferserver::InferGrpcContext::InferGrpcContext | ( | ) |

Constructor, default.

◆ ~InferGrpcContext()

| nvdsinferserver::InferGrpcContext::~InferGrpcContext | ( | ) | override |

Destructor, default.

Member Function Documentation

◆ acquireTensorHostBuf()

| SharedSysMem acquireTensorHostBuf (const std::string &name, size_t bytes) | inherited |

Allocator.

Acquire a host buffer for the inference output.

- Parameters

- [in] name: Name of the output layer.
- [in] bytes: Size of the buffer.

- Returns

- Pointer to the memory buffer.
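
The per-layer pooling behind this allocator can be pictured with a minimal sketch. It assumes a map from output layer name to a free list of host buffers, where a buffer returns to its free list once the last shared reference is dropped. `HostBufPool`, `HostBuf`, `acquire`, and `idleCount` are hypothetical names chosen for illustration; this is not the nvdsinferserver implementation or its types.

```cpp
#include <cstddef>
#include <map>
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-in for a host buffer; not the nvdsinferserver SysMem type.
using HostBuf = std::vector<unsigned char>;

// Illustrative pool: one free list of buffers per output layer name.
// The pool must outlive every buffer handed out by acquire().
class HostBufPool {
public:
    // Return a shared buffer of at least `bytes` bytes for layer `name`.
    // When the last reference is released, the buffer goes back to the pool.
    std::shared_ptr<HostBuf> acquire(const std::string& name, std::size_t bytes) {
        auto& freeList = m_free[name];
        std::unique_ptr<HostBuf> buf;
        if (!freeList.empty()) {
            buf = std::move(freeList.back());  // reuse an idle buffer
            freeList.pop_back();
        } else {
            buf = std::make_unique<HostBuf>();  // pool is empty: allocate
        }
        if (buf->size() < bytes) {
            buf->resize(bytes);
        }
        HostBuf* raw = buf.release();
        // Custom deleter recycles the buffer instead of freeing it.
        return std::shared_ptr<HostBuf>(raw, [this, name](HostBuf* p) {
            m_free[name].emplace_back(p);
        });
    }

    // Number of idle buffers currently pooled for layer `name`.
    std::size_t idleCount(const std::string& name) const {
        auto it = m_free.find(name);
        return it == m_free.end() ? 0 : it->second.size();
    }

private:
    std::map<std::string, std::vector<std::unique_ptr<HostBuf>>> m_free;
};
```

Keying the pool by layer name means a buffer sized for one output tensor is only ever reused for the same tensor, so repeated calls with the same `name` and `bytes` avoid reallocation in this sketch.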

◆ acquireTensorHostEvent()

| SharedCuEvent acquireTensorHostEvent () | inherited |

Acquire a CUDA event from the events pool.

◆ backend()

| BaseBackend * backend () | inline, protected, inherited |

Definition at line 101 of file sources/libs/nvdsinferserver/infer_base_context.h.

◆ backendConsumedInputs()

| virtual void backendConsumedInputs (SharedBatchArray inputs) | inline, protected, virtual, inherited |

Definition at line 93 of file sources/libs/nvdsinferserver/infer_base_context.h.

◆ config()

| const ic::InferenceConfig & config () const | inline, protected, inherited |

Definition at line 98 of file sources/libs/nvdsinferserver/infer_base_context.h.

◆ createNNBackend()

| NvDsInferStatus createNNBackend (const ic::BackendParams &params, int maxBatchSize, UniqBackend &backend) | protected, virtual |

Create the Triton gRPC mode inference processing backend.

- Parameters

- [in] params: The backend configuration parameters.
- [in] maxBatchSize: The maximum batch size configuration.
- [out] backend: Pointer for the backend handle.

- Returns

- Status code.

Implements nvdsinferserver::InferBaseContext.

◆ createPostprocessor()

| NvDsInferStatus createPostprocessor (const ic::PostProcessParams &params, UniqPostprocessor &processor) | override, protected, virtual, inherited |

Create the post-processor as per the network output type.

- Parameters

- [in] params: The post processing configuration parameters.
- [out] processor: The handle to the created post processor.

- Returns

- Status code.

Implements nvdsinferserver::InferBaseContext.

◆ createPreprocessor()

| NvDsInferStatus createPreprocessor (const ic::PreProcessParams &params, std::vector< UniqPreprocessor > &processors) | override, protected, virtual, inherited |

Create the surface converter and network preprocessor.

- Parameters

- params: The preprocessor configuration.
- processors: List of the created preprocessor handles.

- Returns

- Status code.

Implements nvdsinferserver::InferBaseContext.

◆ customLib()

| const SharedDllHandle & customLib () const | inline, protected, inherited |

Definition at line 102 of file sources/libs/nvdsinferserver/infer_base_context.h.

◆ deinit()

| NvDsInferStatus deinit () | override, protected |

Synchronize on the CUDA stream and call InferCudaContext::deinit().
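
The ordering described here (drain the stream first, then delegate to the base class) can be sketched without CUDA. Everything below is a hypothetical stand-in: `FakeStream`, `CudaContextSketch`, `GrpcContextSketch`, and `Status` are illustrative names, the methods are public only to keep the sketch short, and the error-handling policy is an assumption rather than the documented behavior.

```cpp
#include <string>
#include <vector>

// Hypothetical stand-ins; the real classes live in nvdsinferserver.
enum class Status { kSuccess, kError };

struct FakeStream {
    std::vector<std::string>* log;
    // Stands in for a blocking CUDA stream synchronization.
    Status synchronize() {
        log->push_back("sync");
        return Status::kSuccess;
    }
};

class CudaContextSketch {
public:
    explicit CudaContextSketch(std::vector<std::string>* log) : m_log(log) {}
    virtual ~CudaContextSketch() = default;

    // Base-class teardown: releases resources shared by all derived contexts.
    virtual Status deinit() {
        m_log->push_back("base deinit");
        return Status::kSuccess;
    }

protected:
    std::vector<std::string>* m_log;
};

class GrpcContextSketch : public CudaContextSketch {
public:
    explicit GrpcContextSketch(std::vector<std::string>* log)
        : CudaContextSketch(log), m_stream{log} {}

    // Wait for pending stream work first, then run the base-class teardown.
    // Assumption: the base teardown still runs if synchronization fails,
    // and the synchronization error is the one reported.
    Status deinit() override {
        Status syncStatus = m_stream.synchronize();
        Status baseStatus = CudaContextSketch::deinit();
        return syncStatus == Status::kSuccess ? baseStatus : syncStatus;
    }

private:
    FakeStream m_stream;
};
```

Synchronizing before the base teardown ensures that no queued work still refers to buffers the base class is about to release.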

◆ extraOutputTensorCheck()

| NvDsInferStatus extraOutputTensorCheck (SharedBatchArray &outputs, SharedOptions inOptions) | override, protected, virtual, inherited |

Post inference steps for the custom processor and LSTM controller.

- Parameters

- [in,out] outputs: The output batch buffers array.
- [in] inOptions: The configuration options for the buffers.

- Returns

- Status code.

Reimplemented from nvdsinferserver::InferBaseContext.

◆ fixateInferenceInfo()

| NvDsInferStatus fixateInferenceInfo (const ic::InferenceConfig &config, BaseBackend &backend) | override, protected, virtual, inherited |

Check the tensor order, media format, and datatype for the input tensor.

Initialize the extra processor and LSTM controller, if configured.

- Parameters

- [in] config: The inference configuration protobuf message.
- [in] backend: The inference backend instance.

- Returns

- Status code.

Implements nvdsinferserver::InferBaseContext.

◆ getNetworkInputInfo()

| void getNetworkInputInfo (NvDsInferNetworkInfo &networkInfo) | inline, override, protected, inherited |

Get the network input layer information.

Definition at line 132 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

References nvdsinferserver::InferCudaContext::m_NetworkImageInfo.

◆ initialize()

| NvDsInferStatus initialize (const std::string &prototxt, InferLoggingFunc logFunc) | final, inherited |

◆ mainStream()

| SharedCuStream & mainStream () | inline, override, protected, virtual |

Get the main processing CUDA stream.

Implements nvdsinferserver::InferBaseContext.

Definition at line 69 of file sources/libs/nvdsinferserver/infer_grpc_context.h.

◆ maxBatchSize()

| int maxBatchSize () const | inline, protected, inherited |

Definition at line 99 of file sources/libs/nvdsinferserver/infer_base_context.h.

◆ needCopyInputToHost()

| bool needCopyInputToHost () const | protected, inherited |

◆ needPreprocess()

| bool needPreprocess () const | protected, inherited |

◆ notifyError()

| void notifyError (NvDsInferStatus status) | override, protected, virtual, inherited |

In case of error, notify the waiting threads.

Implements nvdsinferserver::InferBaseContext.
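
How an error might be propagated to waiting threads can be illustrated with a condition variable. This is a sketch under assumptions, not the library's code: `CompletionSketch`, `ErrStatus`, and `waitFinished` are hypothetical names, and the real class may track its waiters differently.

```cpp
#include <condition_variable>
#include <mutex>

// Hypothetical status type; not NvDsInferStatus.
enum class ErrStatus { kSuccess, kUnknownError };

class CompletionSketch {
public:
    // Record the error and wake every thread blocked in waitFinished().
    void notifyError(ErrStatus status) {
        {
            std::lock_guard<std::mutex> lock(m_mutex);
            m_status = status;
            m_finished = true;
        }
        // Notify after releasing the lock so woken threads can proceed at once.
        m_cond.notify_all();
    }

    // Block until an error (or completion) has been signalled,
    // then return the recorded status.
    ErrStatus waitFinished() {
        std::unique_lock<std::mutex> lock(m_mutex);
        m_cond.wait(lock, [this] { return m_finished; });
        return m_status;
    }

private:
    std::mutex m_mutex;
    std::condition_variable m_cond;
    bool m_finished = false;
    ErrStatus m_status = ErrStatus::kSuccess;
};
```

The predicate passed to `wait` guards against spurious wakeups and also covers the case where the error is signalled before any thread starts waiting.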

◆ preInference()

| NvDsInferStatus preInference (SharedBatchArray &inputs, const ic::InferenceConfig &config) | override, protected, virtual, inherited |

Initialize non-image input layers if the custom library has implemented the interface.

- Parameters

- [in,out] inputs: Array of the input batch buffers.
- [in] config: The inference configuration settings.

- Returns

- Status code.

Reimplemented from nvdsinferserver::InferBaseContext.

◆ print()

| void print (NvDsInferLogLevel l, const char *msg) | protected, inherited |

◆ run()

| NvDsInferStatus run (SharedIBatchArray input, InferOutputCb outputCb) | final, inherited |
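
The entries for initialize() and run() carry no description on this page. Judging only from their signatures in the member list, a context is set up once from a configuration string and is then handed input batches, with results delivered through a callback. The sketch below mimics that calling pattern with hypothetical stand-in types (`ContextSketch`, `Batch`, `SharedBatch`, `OutputCb`, `RunStatus`). It is not the nvdsinferserver API; the real callback signature, threading model, and status codes may differ.

```cpp
#include <functional>
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-ins for the status, batch, and callback types.
enum class RunStatus { kSuccess, kInvalidParams };
using Batch = std::vector<float>;
using SharedBatch = std::shared_ptr<Batch>;
using OutputCb = std::function<void(RunStatus, SharedBatch)>;

class ContextSketch {
public:
    // One-time setup from a configuration string.
    RunStatus initialize(const std::string& configText) {
        if (configText.empty()) {
            return RunStatus::kInvalidParams;
        }
        m_initialized = true;
        return RunStatus::kSuccess;
    }

    // Fake "inference": doubles every input value and reports the result
    // through the callback. A real context would typically invoke the
    // callback asynchronously; this sketch calls it inline.
    RunStatus run(SharedBatch input, OutputCb outputCb) {
        if (!m_initialized || !input || !outputCb) {
            return RunStatus::kInvalidParams;
        }
        auto output = std::make_shared<Batch>();
        for (float v : *input) {
            output->push_back(v * 2.0f);
        }
        outputCb(RunStatus::kSuccess, std::move(output));
        return RunStatus::kSuccess;
    }

private:
    bool m_initialized = false;
};
```

In this sketch a caller invokes `initialize` once, then calls `run` per batch and reads the results inside the callback rather than from the return value, which only reports whether the request was accepted.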

◆ tensorPoolSize()

| int tensorPoolSize () const | protected, inherited |

Get the size of the tensor pool.

◆ uniqueId()

| int uniqueId () const | inline, protected, inherited |

Definition at line 100 of file sources/libs/nvdsinferserver/infer_base_context.h.

Field Documentation

◆ m_ExtraInputs

| std::vector< SharedCudaTensorBuf > m_ExtraInputs | protected, inherited |

Array of buffers of the additional inputs.

Definition at line 213 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_ExtraProcessor

| UniqInferExtraProcessor m_ExtraProcessor | protected, inherited |

Extra and custom processing pre/post inference.

Definition at line 228 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_FinalProcessor

| m_FinalProcessor | protected, inherited |

Definition at line 234 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_HostTensorEvents

| SharedBufPool< std::unique_ptr< CudaEventInPool > > m_HostTensorEvents | protected, inherited |

Pool of CUDA events for host tensor copy.

Definition at line 221 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_HostTensorPool

| MapBufferPool< std::string, UniqSysMem > m_HostTensorPool | protected, inherited |

Map of pools for the output tensors.

Definition at line 217 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_InputDataType

| InferDataType m_InputDataType = InferDataType::kFp32 | protected, inherited |

The input layer datatype.

Definition at line 208 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_InputTensorOrder

| InferTensorOrder m_InputTensorOrder = InferTensorOrder::kNone | protected, inherited |

The input layer tensor order.

Definition at line 204 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_LstmController

| UniqLstmController m_LstmController | protected, inherited |

LSTM controller.

Definition at line 224 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_MultiStreamManager

| UniqStreamManager m_MultiStreamManager | protected, inherited |

Stream-id based management.

Definition at line 226 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_NetworkImageFormat

| InferMediaFormat m_NetworkImageFormat = InferMediaFormat::kRGB | protected, inherited |

The input layer media format.

Definition at line 196 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_NetworkImageInfo

| NvDsInferNetworkInfo m_NetworkImageInfo {0, 0, 0} | protected, inherited |

Network input height, width, channels for preprocessing.

Definition at line 192 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

Referenced by nvdsinferserver::InferCudaContext::getNetworkInputInfo().

◆ m_NetworkImageName

| std::string m_NetworkImageName | protected, inherited |

The input layer name.

Definition at line 200 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_NetworkPreprocessor

| m_NetworkPreprocessor | protected, inherited |

Definition at line 233 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

◆ m_SurfaceConverter

| m_SurfaceConverter | protected, inherited |

Preprocessor and post-processor handles.

Definition at line 232 of file sources/libs/nvdsinferserver/infer_cuda_context.h.

The documentation for this class was generated from the following file: