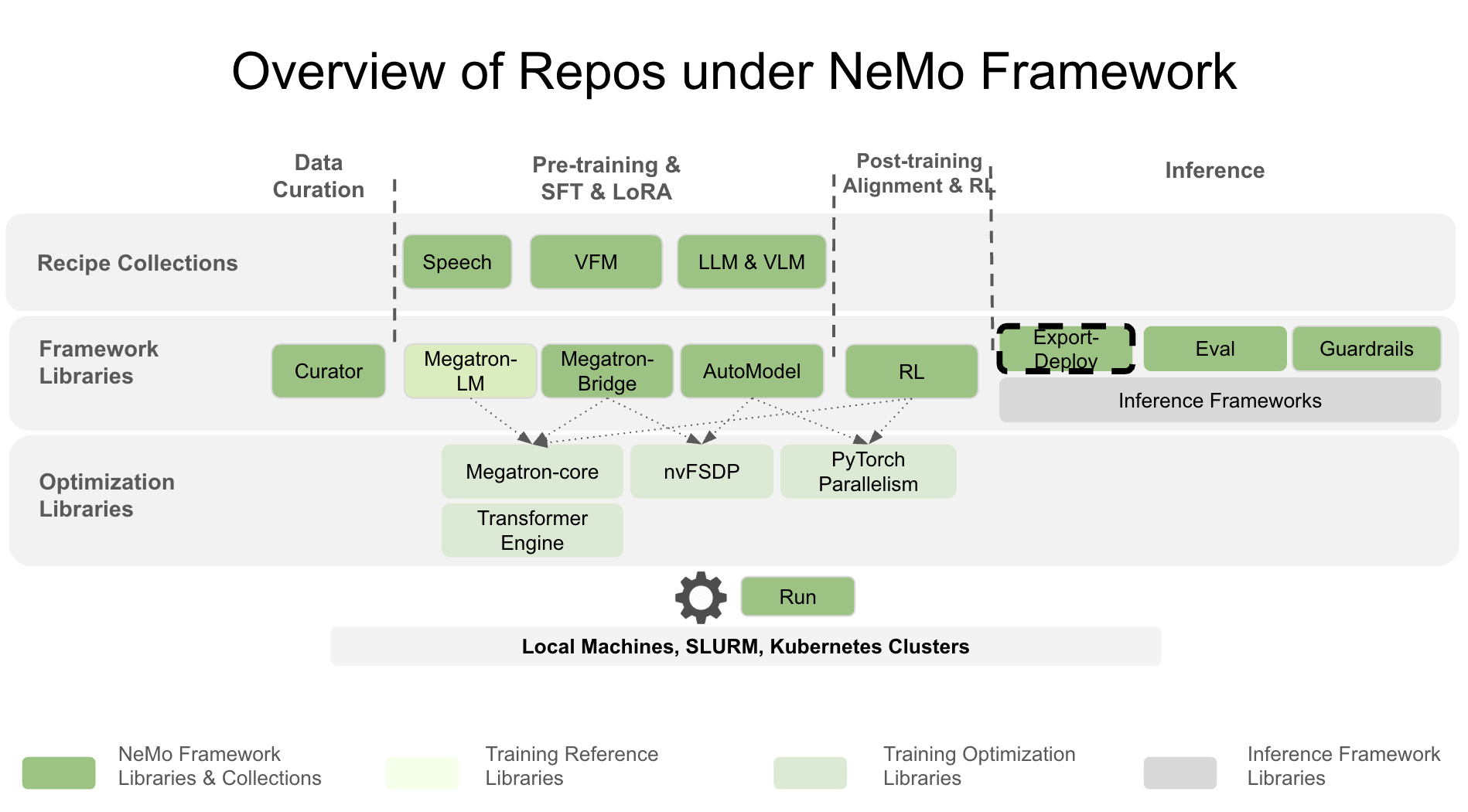

NeMo Export-Deploy#

The Export-Deploy library (“NeMo Export-Deploy”) provides tools and APIs for exporting and deploying NeMo and 🤗Hugging Face models to production environments. It supports various deployment paths including TensorRT and vLLM deployment through NVIDIA Triton Inference Server and Ray Serve.

📣 News#

[03/12/2026] Deprecating Python 3.10 support: We’re officially dropping Python 3.10 support with the upcoming 0.4.0 release. Downstream applications must raise their lower boundary to 3.12 to stay compatible with Export-Deploy.

🚀 Key Features#

Support for Large Language Models (LLMs) and Multimodal Models (MMs)

Export Megatron-Brdige and Hugging Face models to optimized inference formats including vLLM

Deploy Megatron-Brdige and Hugging Face models using Ray Serve or NVIDIA Triton Inference Server

Multi-GPU and distributed inference capabilities

Multi-instance deployment options

Feature Support Matrix#

Model Export Capabilities#

Model / Checkpoint |

vLLM |

ONNX |

TensorRT |

|---|---|---|---|

bf16 |

N/A |

N/A |

|

N/A |

bf16, fp8, int8 (PTQ) |

bf16, fp8, int8 (PTQ) |

|

N/A |

Coming Soon |

Coming Soon |

The support matrix above outlines the export capabilities for each model or checkpoint, including the supported precision options across various inference-optimized libraries. The export module enables exporting models that have been quantized using post-training quantization (PTQ) with the TensorRT Model Optimizer library, as shown above. Models trained with low precision or quantization-aware training are also supported, as indicated in the table.

The inference-optimized libraries listed above also support on-the-fly quantization during model export, with configurable parameters available in the export APIs. However, please note that the precision options shown in the table above indicate support for exporting models that have already been quantized, rather than the ability to quantize models during export.

Please note that not all large language models (LLMs) and multimodal models (MMs) are currently supported. For the most complete and up-to-date information, please refer to the LLM documentation and MM documentation.

Model Deployment Capabilities#

Model / Checkpoint |

RayServe |

PyTriton |

|---|---|---|

Limited |

Limited |

|

Single-Node Multi-GPU, |

Single-Node Multi-GPU |

|

N/A |

Single-Node Multi-GPU |

The support matrix above outlines the available deployment options for each model or checkpoint, highlighting multi-node and multi-GPU support where applicable. For comprehensive details, please refer to the documentation.

Refer to the table below for an overview of optimized inference and deployment support for NeMo Framework and Hugging Face models with Triton Inference Server.

Model / Checkpoint |

vLLM + Triton Inference Server |

Direct Triton Inference Server |

|---|---|---|

Hugging Face |

☑ |

☑ |

🔧 Install#

For quick exploration of NeMo Export-Deploy, we recommend installing our pip package:

pip install nemo-export-deploy

This installation comes without extra dependencies like TransformerEngine or vLLM. The installation serves for navigating around and for exploring the project.

For a feature-complete install, please refer to the following sections.

Use NeMo-FW Container#

Best experience, highest performance and full feature support is guaranteed by the NeMo Framework container. Please fetch the most recent $TAG and run the following command to start a container:

docker run --rm -it -w /workdir -v $(pwd):/workdir \

--entrypoint bash \

--gpus all \

nvcr.io/nvidia/nemo:${TAG}

Build with Dockerfile#

For containerized development, use our Dockerfile for building your own container. There are two flavors: INFERENCE_FRAMEWORK=inframework and INFERENCE_FRAMEWORK=vllm:

docker build \

-f docker/Dockerfile.pytorch \

-t nemo-export-deploy \

--build-arg INFERENCE_FRAMEWORK=$INFERENCE_FRAMEWORK \

.

Start your container:

docker run --rm -it -w /workdir -v $(pwd):/workdir \

--entrypoint bash \

--gpus all \

nemo-export-deploy

Install from Source#

For complete feature coverage, we recommend to install TransformerEngine and additionally vLLM.

Recommended Requirements#

Python 3.12

PyTorch 2.7

CUDA 12.9

Ubuntu 24.04

Install TransformerEngine + InFramework#

For highly optimized TransformerEngine path with PyTriton backend, please make sure to install the following prerequisites first:

pip install torch==2.7.0 setuptools pybind11 wheel_stub # Required for TE

Now proceed with the main installation:

git clone https://github.com/NVIDIA-NeMo/Export-Deploy

cd Export-Deploy/

pip install --no-build-isolation .

Install TransformerEngine + vLLM#

For highly optimized TransformerEngine path with vLLM backend, please make sure to install the following prerequisites first:

pip install torch==2.7.0 setuptools pybind11 wheel_stub # Required for TE

Now proceed with the main installation:

pip install --no-build-isolation .[vllm]

Install TransformerEngine + TRT-ONNX#

For highly optimized TransformerEngine path with TRT-ONNX backend, please make sure to install the following prerequisites first:

pip install torch==2.7.0 setuptools pybind11 wheel_stub # Required for TE

Now proceed with the main installation:

pip install --no-build-isolation .[trt-onnx]

🤝 Contributing#

We welcome contributions to NeMo Export-Deploy! Please see our Contributing Guidelines for more information on how to get involved.

License#

NeMo Export-Deploy is licensed under the Apache License 2.0.