Introduction

This is the User Guide for the InfiniBand/Ethernet adapter card kit based on the ConnectX®-5 integrated circuit device. The adapter card kit provides the highest-performing and most flexible interconnect solution for PCI Express Gen 3.0 servers used in Enterprise Data Centers, High-Performance Computing, and Embedded environments.

Caution: Powering up the PCIe auxiliary cards before the ConnectX-5 main adapter card is powered up may cause serious damage to the cards.

NVIDIA Multi-Host technology connects up to 4 compute or storage hosts to a single ConnectX® network adapter. Deploying multi-host platforms significantly reduces the overall number of data-center network connections, which improves infrastructure efficiency and simplicity and lowers CAPEX and OPEX. NVIDIA Multi-Host technology is built into intelligent ConnectX adapters and is ideal for high-performance, compute-intensive data-center environments delivering cloud, Web 2.0, and telecom services.

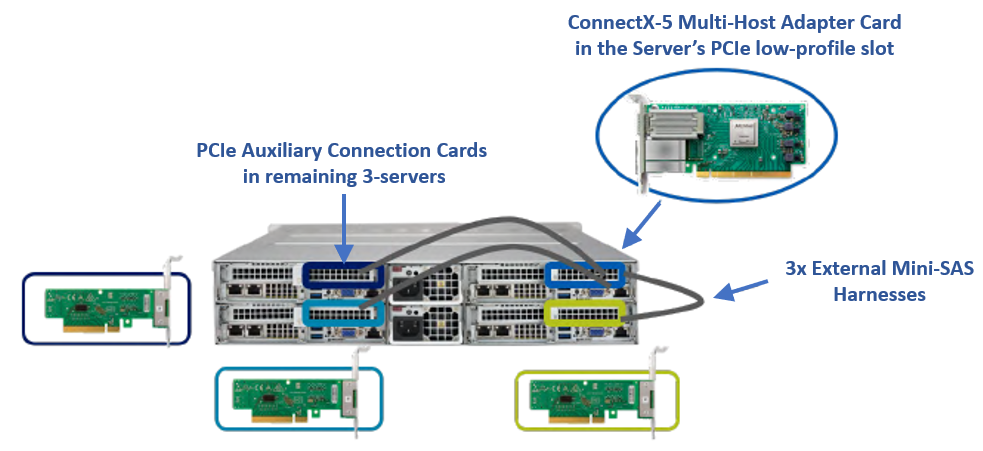

The externally connected multi-host solution leverages the same multi-host technology built into the network adapter ASIC. External Mini-SAS harnesses provide the connectivity between each host and the network card. The solution is well suited to highly dense 4-node 2U chassis systems, enabling 25Gb/s connectivity per connected node, as illustrated in the figure below.

The externally connected NVIDIA Multi-Host solution offers a superior price/performance ratio for both new and existing scale-out computing fabrics, while dramatically reducing total cost of ownership in the following areas:

• Network adapters, as a single network adapter serves up to 4 nodes.

• Network switch ports, as a single switch port serves up to 4 nodes.

• Active cabling, as a single cable serves up to 4 nodes.

• Rack space, power, and cooling, owing to the overall reduction in network connections in the data center.
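
The consolidation described in the list above can be sketched as simple arithmetic. This is a minimal illustration, not a sizing tool: the function name is hypothetical, the baseline of one adapter, switch port, and cable per node is an assumption, and the per-node figure assumes a 100Gb/s port shared equally by 4 hosts.

```python
import math

HOSTS_PER_ADAPTER = 4    # from the text: up to 4 hosts per ConnectX adapter
PORT_SPEED_GBPS = 100    # assumed single 100Gb/s network port


def multi_host_footprint(nodes: int) -> dict:
    """Count network connections for `nodes` hosts (hypothetical helper)."""
    if nodes < 0:
        raise ValueError("nodes must be non-negative")
    return {
        # Assumed baseline: one adapter, switch port, and cable per node.
        "conventional": nodes,
        # Multi-Host: one adapter, switch port, and cable per group of 4 nodes.
        "multi_host": math.ceil(nodes / HOSTS_PER_ADAPTER),
        # Equal share of the port across a fully populated group.
        "per_node_gbps": PORT_SPEED_GBPS / HOSTS_PER_ADAPTER,
    }


print(multi_host_footprint(4))
```

For a 4-node chassis this gives one adapter, one switch port, and one cable instead of four of each, with 25Gb/s available per node, which matches the figure quoted earlier.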

The following table provides the ordering part number, port speed, number of ports, and PCI Express speed.

Configuration | Ordering Part Number | Marketing Description |
--- | --- | --- |
ConnectX-5 Adapter Card Kit | MCX553Q-ECAS-AK70 | ConnectX-5 InfiniBand/Ethernet adapter card kit with Multi-Host, EDR IB (100Gb/s) and 100GbE, Single-port QSFP28, PCIe3.0 x4 on board, with 3x auxiliary cards, with 3x 70 CM PCIe cables, Short brackets |

ConnectX-5 Adapter Card Kit

Model | ConnectX-5 Adapter Card Kit |
--- | --- |
Part Number | MCX553Q-ECAS-AK70 |
Data Rate | InfiniBand: SDR/DDR/QDR/FDR/EDR |
Network Connector Type | Single-port QSFP28 |
PCI Express Connectors | 4x PCIe Gen 3.0 x4; SerDes @ 8.0GT/s |
Dimensions | Adapter Card: 2.71 in. x 5.85 in. (68.90 mm x 148.65 mm) – low profile |
RoHS | RoHS Compliant |
Adapter IC Part Number | MT28808A0-FCCF-EV |
Device ID (decimal) | 4119 for Physical Function (PF) and 4120 for Virtual Function (VF) |
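
Operating-system tools usually report PCI identifiers in hexadecimal rather than decimal, so it can help to convert the Device ID values listed above. The sketch below is illustrative only: the helper name is hypothetical, and the vendor ID 0x15B3 (the Mellanox/NVIDIA networking PCI vendor ID) is not stated in this guide and is included as an assumption.

```python
VENDOR_ID = 0x15B3  # assumption: Mellanox/NVIDIA networking PCI vendor ID

# Decimal device IDs as listed in the specification table.
DEVICE_IDS = {"PF": 4119, "VF": 4120}


def pci_id(device_id_decimal: int, vendor_id: int = VENDOR_ID) -> str:
    """Format a vendor:device pair as four-digit lowercase hex (hypothetical helper)."""
    return f"{vendor_id:04x}:{device_id_decimal:04x}"


for function, device_id in DEVICE_IDS.items():
    print(function, pci_id(device_id))
```

The resulting `vendor:device` strings can be compared against the IDs that a tool such as `lspci -nn` prints on Linux hosts.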

For more detailed information, see Specifications.

This section describes hardware features and capabilities. Please refer to the relevant driver and/or firmware release notes for feature availability.

For virtualization scenarios, please contact NVIDIA support.

Feature | Description |

PCI Express (PCIe) | Uses the following PCIe interfaces: |

EDR InfiniBand | A standard InfiniBand data rate, where each lane of a 4X port runs at a bit rate of 25.78125Gb/s with 64b/66b encoding, resulting in an effective bandwidth of 100Gb/s. |

100Gb/s InfiniBand/Ethernet Adapter | ConnectX-5 offers the highest throughput InfiniBand/Ethernet adapter, supporting EDR 100Gb/s InfiniBand and 100Gb/s Ethernet and enabling any standard networking, clustering, or storage to operate seamlessly over any converged network leveraging a consolidated software stack. |

InfiniBand Architecture Specification v1.3 compliant | ConnectX-5 delivers low latency, high bandwidth, and computing efficiency for performance-driven server and storage clustering applications. ConnectX-5 is InfiniBand Architecture Specification v1.3 compliant. |

Up to 100 Gigabit Ethernet | NVIDIA adapters comply with the following IEEE 802.3 standards: |

Memory | |

Overlay Networks | To better scale their networks, data center operators often create overlay networks that carry traffic from individual virtual machines over logical tunnels in encapsulated formats such as NVGRE and VXLAN. While this solves network scalability issues, it hides the TCP packet from the hardware offloading engines, placing higher loads on the host CPU. ConnectX-5 effectively addresses this by providing advanced NVGRE and VXLAN hardware offloading engines that encapsulate and decapsulate the overlay protocol. |

RDMA and RDMA over Converged Ethernet (RoCE) | ConnectX-5, utilizing IBTA RDMA (Remote Direct Memory Access) and RoCE (RDMA over Converged Ethernet) technology, delivers low latency and high performance over InfiniBand and Ethernet networks. Leveraging data center bridging (DCB) capabilities as well as ConnectX-5 advanced congestion control hardware mechanisms, RoCE provides efficient low-latency RDMA services over Layer 2 and Layer 3 networks. |

NVIDIA PeerDirect™ | PeerDirect™ communication provides high-efficiency RDMA access by eliminating unnecessary internal data copies between components on the PCIe bus (for example, from GPU to CPU), and therefore significantly reduces application run time. ConnectX-5 advanced acceleration technology enables higher cluster efficiency and scalability to tens of thousands of nodes. |

CPU Offload | Adapter functionality that reduces CPU overhead, leaving more CPU capacity available for computation tasks. |

Open vSwitch (OVS) offload using ASAP² | • Flexible match-action flow tables |

Quality of Service (QoS) | Support for port-based Quality of Service to meet various application requirements for latency and SLA. |

Storage Acceleration | A consolidated compute and storage network achieves significant cost-performance advantages over multi-fabric networks. Standard block and file access protocols can leverage InfiniBand RDMA for high-performance storage access. |

SR-IOV | ConnectX-5 SR-IOV technology provides dedicated adapter resources and guaranteed isolation and protection for virtual machines (VM) within the server. |

High-Performance Accelerations | • Tag Matching and Rendezvous Offloads |
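
The EDR InfiniBand row in the table above derives 100Gb/s from the per-lane bit rate and the 64b/66b encoding. The following sketch reproduces that arithmetic and, for comparison, applies the same calculation to one PCIe Gen 3.0 x4 host link. The function name is hypothetical, and the 128b/130b encoding used for PCIe Gen 3.0 is an assumption taken from the PCIe 3.0 standard rather than from this guide.

```python
def effective_gbps(lane_gbps: float, payload_bits: int, coded_bits: int, lanes: int) -> float:
    """Payload bandwidth of a multi-lane link after line-encoding overhead."""
    return lane_gbps * payload_bits / coded_bits * lanes


# EDR InfiniBand 4X port: values taken from the feature table.
edr = effective_gbps(25.78125, 64, 66, lanes=4)

# PCIe Gen 3.0 x4: 8.0GT/s per lane is from the specification table;
# the 128b/130b encoding is an assumption based on the PCIe 3.0 standard.
pcie_gen3_x4 = effective_gbps(8.0, 128, 130, lanes=4)

print(f"EDR 4X port:     {edr:.1f} Gb/s")
print(f"PCIe Gen 3.0 x4: {pcie_gen3_x4:.2f} Gb/s")
```

Under these assumptions each host's x4 link carries roughly 31.5Gb/s before PCIe packet overhead, which is above the 25Gb/s per-node share of the 100Gb/s port.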

OpenFabrics Enterprise Distribution (OFED)

Interoperable with 1/10/25/40/50/100 Gb/s Ethernet switches

Passive copper cable with ESD protection

Powered connectors for optical and active cable support