User Guide

NVIDIA Nsight Systems user guide

Profiling from the CLI

Installing the CLI on Your Target

The Nsight Systems CLI provides a simple interface to collect data on a target without using the GUI. The collected data can then be copied to any system and analyzed later.

The CLI is distributed in the Target directory of the standard Nsight Systems download package.

If you wish to run the CLI without root (recommended mode), install it in a directory where you have full access.

Note

You must run the CLI on Windows as administrator.

Command Line Options

The Nsight Systems command lines can have one of two forms:

nsys [global_option]

or

nsys [command_switch][optional command_switch_options][application] [optional application_options]

All command line options are case-sensitive. For command switch options, when short options are used, the parameters should follow the switch after a space; e.g., -s process-tree. When long options are used, the switch should be followed by an equal sign and then the parameter(s); e.g., --sample=process-tree.
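As a minimal sketch of the short/long convention above, the Python helper below turns a switch and its parameter into command-line arguments. `format_switch` and the `./my_app` target are hypothetical names used only for illustration; only `-s`/`--sample` and `process-tree` come from this guide.

```python
from typing import List


def format_switch(name: str, value: str) -> List[str]:
    """Render one nsys switch and its parameter as argv entries.

    Short options (single dash, e.g. "-s") take the parameter as a separate
    argument after a space; long options (e.g. "--sample") join it with "=".
    Case is preserved because all options are case-sensitive.
    """
    if name.startswith("--"):
        return [f"{name}={value}"]
    if name.startswith("-"):
        return [name, value]
    raise ValueError(f"not a command switch option: {name!r}")


# Both command lines request the same process-tree sampling scope.
# "./my_app" is a hypothetical application path.
short_form = ["nsys", "profile", *format_switch("-s", "process-tree"), "./my_app"]
long_form = ["nsys", "profile", *format_switch("--sample", "process-tree"), "./my_app"]

print(" ".join(short_form))  # nsys profile -s process-tree ./my_app
print(" ".join(long_form))   # nsys profile --sample=process-tree ./my_app
```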

For this version of Nsight Systems, if you launch a process from the command line to begin analysis, the launched process will be terminated when collection is complete, including runs with --duration set, unless the user specifies the --kill none option (details below). The exception is that if the user uses NVTX, cudaProfilerStart/Stop, or hotkeys to control the duration, the application will continue unless --kill is set.
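The termination rule above can be summarized as a small decision function. This is a hedged model of the documented behavior, not Nsight Systems code: `app_terminated_after_collection` is a hypothetical name, its `capture_range` argument uses the capture-range values listed in the profile options table (none, cudaProfilerApi, hotkey, nvtx), and `kill` stands for the value given to --kill, with `None` meaning the switch was not given.

```python
from typing import Optional

# Capture-range values that let the application itself control the
# collection window (NVTX, cudaProfilerStart/Stop, or hotkeys).
APP_CONTROLLED_RANGES = {"cudaProfilerApi", "hotkey", "nvtx"}


def app_terminated_after_collection(capture_range: str = "none",
                                    kill: Optional[str] = None) -> bool:
    """Model whether a process launched by nsys is terminated when collection ends.

    capture_range: "none", "cudaProfilerApi", "hotkey", or "nvtx".
    kill: value passed to --kill ("none", "sigkill", "sigterm", or a signal
          number as a string), or None when --kill was not given.
    """
    if kill is not None:
        # An explicit --kill wins: "none" keeps the app alive, anything else signals it.
        return kill != "none"
    # Without --kill, an app whose collection window it controls keeps running;
    # any other launched process is terminated when collection completes.
    return capture_range not in APP_CONTROLLED_RANGES
```

For example, `app_terminated_after_collection("nvtx")` returns `False`, while adding `kill="sigterm"` makes it return `True`.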

The Nsight Systems CLI supports concurrent analysis by using sessions. Each Nsight Systems session is defined by a sequence of CLI commands that define one or more collections (e.g., when and what data is collected). A session begins with either a start, launch, or profile command. A session ends with a shutdown command, when a profile command terminates, or, if requested, when all the process tree(s) launched in the session exit. Multiple sessions can run concurrently on the same system.

CLI Global Options

| Short | Long | Description |
|---|---|---|
| -h | --help | Help message providing information about available command switches and their options. |
| -v | --version | Output Nsight Systems CLI version information. |

CLI Command Switches

The Nsight Systems command line interface can be used in two modes. You may launch your application and begin analysis with options specified to the nsys profile command. Alternatively, you can control the launch of an application and data collection using interactive CLI commands.

| Command | Description |
|---|---|
| analyze | Post-process an existing Nsight Systems result, either in .nsys-rep or SQLite format, to generate an expert systems report. |
| cancel | Cancels an existing collection started in interactive mode. All data already collected in the current collection is discarded. |
| export | Generates an export file from an existing .nsys-rep file. |
| launch | In interactive mode, launches an application in an environment that supports the requested options. The launch command can be executed before or after a start command. |
| nvprof | Special option to help with transition from legacy NVIDIA nvprof tool. Calling |
| profile | A fully formed profiling description requiring and accepting no further input. The command switch options used (see the table below) determine when the collection starts and stops, which collectors are used (e.g., API trace, IP sampling), which processes are monitored, etc. |
| recipe | Post-process multiple existing Nsight Systems results to generate statistical information and create various plots. See the Multi-Report Analysis topic for details. |
| sessions | Gives information about all sessions running on the system. |
| shutdown | Disconnects the CLI process from the launched application and forces the CLI process to exit. If a collection is pending or active, it is canceled. |
| start | Starts a collection in interactive mode. The start command can be executed before or after a launch command. |
| stats | Post process existing Nsight Systems result, either in |
| status | Reports on the status of a CLI-based collection or the suitability of the profiling environment. |
| stop | Stops a collection that was started in interactive mode. When executed, all active collections stop and the CLI process terminates, but the application continues running. |
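As an illustration of interactive mode, the sketch below lists, in order, the commands of one minimal session built only from the launch, start, stop, and shutdown commands in the table above. It just builds argument lists and omits all switch options; `interactive_session` and `./my_app` are hypothetical. It assumes the commands are typed into a shell while the application runs (for example, from a second terminal if launch keeps the first one busy).

```python
from typing import List, Sequence


def interactive_session(app: Sequence[str]) -> List[List[str]]:
    """Return, in order, the nsys commands for one minimal interactive session."""
    return [
        ["nsys", "launch", *app],  # start the app in a profiling-ready environment
        ["nsys", "start"],         # begin a collection
        ["nsys", "stop"],          # end the collection; the app keeps running
        ["nsys", "shutdown"],      # detach the CLI and end the session
    ]


for cmd in interactive_session(["./my_app"]):
    print(" ".join(cmd))
```

Because launch and start may be issued in either order, swapping the first two entries would describe an equally valid session.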

CLI Profile Command Switch Options

After choosing the profile command switch, the following options are available. Usage:

nsys [global-options] profile [options] [application] [application-arguments]
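As a hedged sketch, the helper below assembles an nsys profile command line from three short options described in the table below: -t (APIs to trace, comma-separated with no spaces), -d (duration in seconds, which must be greater than zero), and -o (report file name). `profile_command`, `./my_app`, and `my_report` are hypothetical; the default trace set mirrors the documented -t default.

```python
from typing import List, Optional, Sequence

# Default -t value listed in the profile options table.
DEFAULT_TRACE = ("cuda", "opengl", "nvtx", "osrt")


def profile_command(app: Sequence[str],
                    trace: Sequence[str] = DEFAULT_TRACE,
                    duration_s: Optional[int] = None,
                    output: Optional[str] = None) -> List[str]:
    """Build an `nsys profile` argv using short options."""
    for api in trace:
        if not api or " " in api or "," in api:
            raise ValueError(f"invalid trace API name: {api!r}")
    # -t takes a comma-separated list with no spaces.
    cmd = ["nsys", "profile", "-t", ",".join(trace)]
    if duration_s is not None:
        if duration_s <= 0:
            raise ValueError("duration must be greater than zero")
        cmd += ["-d", str(duration_s)]
    if output is not None:
        cmd += ["-o", output]
    # The application and its arguments come last.
    return cmd + list(app)


print(" ".join(profile_command(["./my_app"], trace=["cuda", "nvtx", "osrt"],
                               duration_s=30, output="my_report")))
# nsys profile -t cuda,nvtx,osrt -d 30 -o my_report ./my_app
```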

Short |

Long |

Possible Parameters |

Default |

Switch Description |

|---|---|---|---|---|

|

none,tegra-accelerators |

none |

Collect other accelerators workload trace from the hardware engine units. Available in Nsight Systems Embedded Platforms Edition only. |

|

|

true, false |

false |

Derive report file name from collected data using details of the profiled graphics application. Format:

|

|

-b |

|

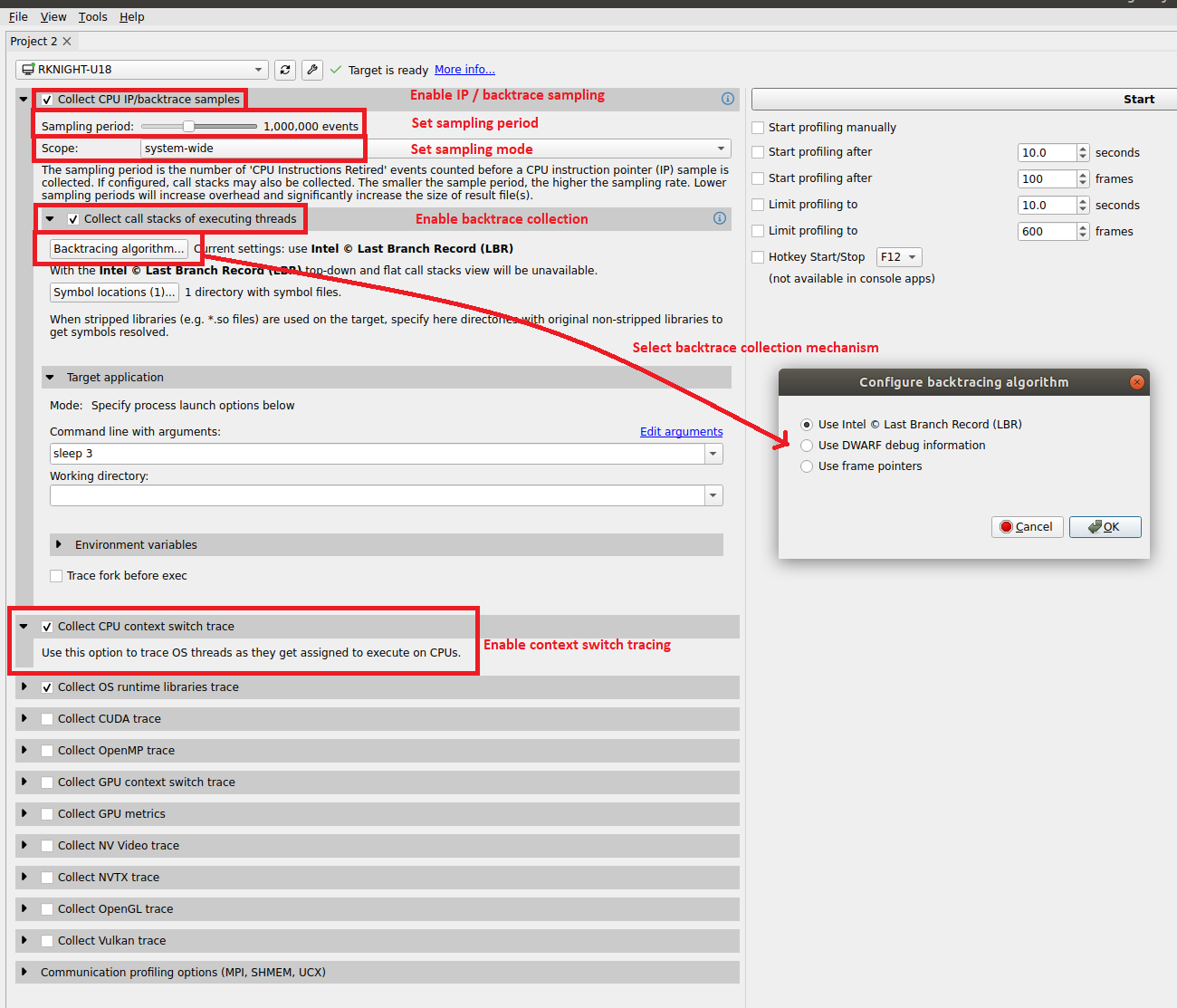

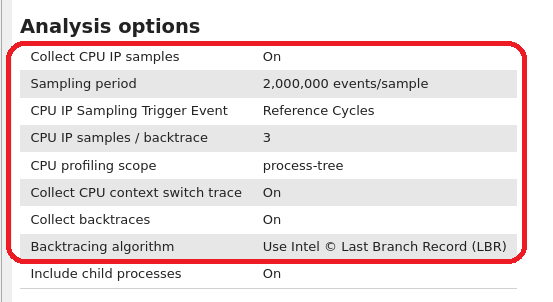

auto,fp,lbr,dwarf,none |

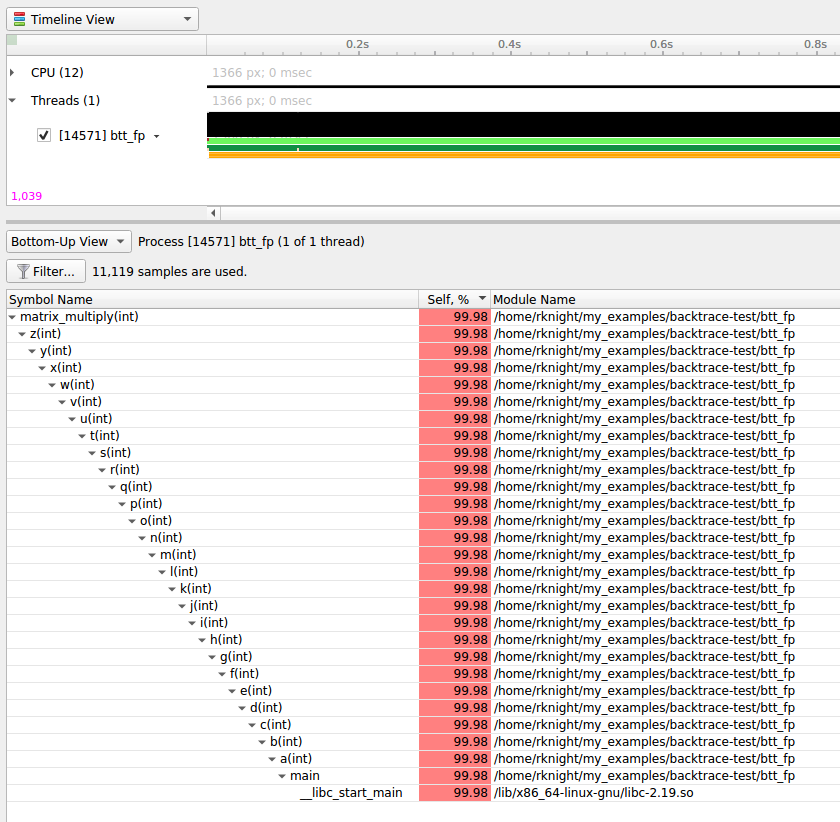

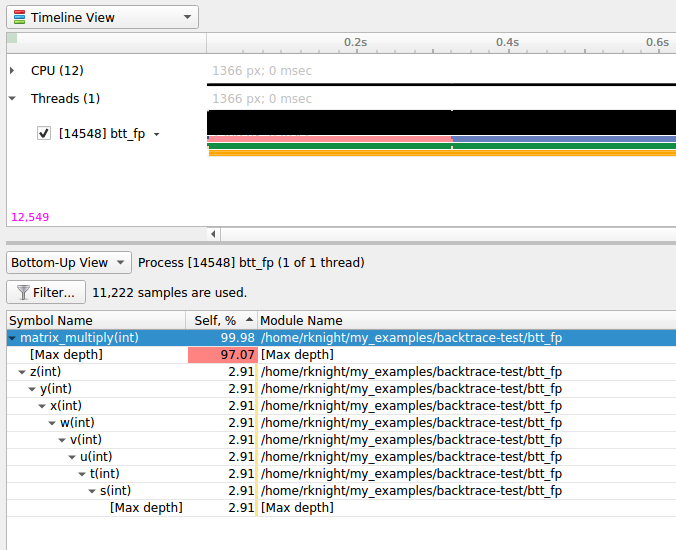

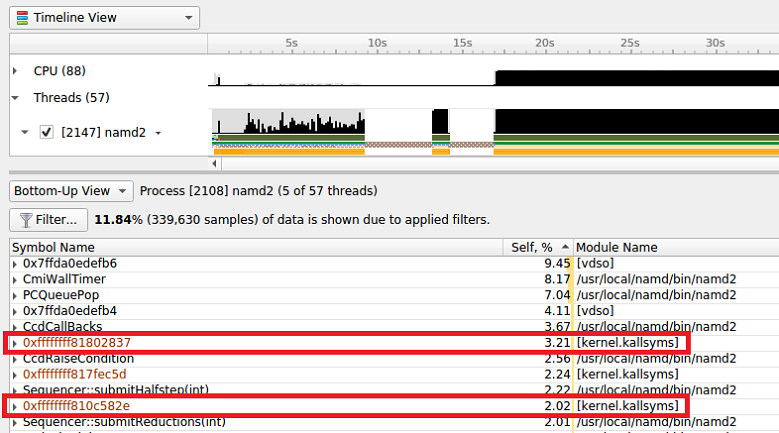

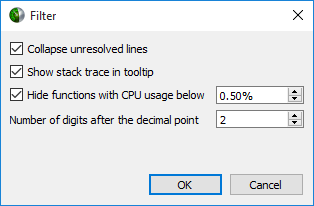

Select the backtrace method to use while sampling. The option |

|

-c |

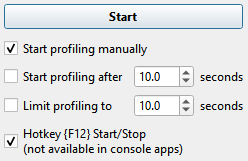

|

none, cudaProfilerApi, hotkey, nvtx |

none |

When Note Hotkey works for graphic applications only. |

|

none, stop, stop-shutdown, repeat[:N], repeat-shutdown:N |

stop-shutdown |

Specify the desired behavior when a capture range ends. Applicable only when used along with the

|

|

|

true, false |

false |

Collect clock frequency changes. Available only in Nsight Systems Embedded Platforms Edition and Arm server (SBSA) platforms. |

|

|

< filename > |

none |

Open a file that contains profile switches and parse the switches. Note additional switches on the command line will override switches in the file. This flag can be specified more than once. |

|

|

0x16, 0x17, …, none |

none |

Collect per-cluster Uncore PMU counters. Multiple values can be selected, separated by commas only (no spaces). Use the |

|

|

0x11,0x13,…,none |

none |

Collect per-core PMU counters. Multiple values can be selected, separated by commas only (no spaces). Use the |

|

|

‘help’ or the end user’s selected events in the format ‘x,y’ |

‘2’ i.e., Instructions Retired |

Select the CPU Core events to sample. Use the |

|

|

0,1,2,…,none |

none |

Collect metrics on the CPU core. Multiple values can be selected, separated by commas only (no spaces). Use the Note Only available on Grace. |

|

|

0x2a,0x2c,…,none |

none |

Collect per-socket Uncore PMU counters. Multiple values can be selected, separated by commas only (no spaces). Use the |

|

|

‘help’ or the end user’s selected events in the format ‘x,y’ |

none |

Select the Uncore CPU Socket events to sample. Use the |

|

|

0,1,2,…,none |

none |

Collect Uncore metrics on the CPU socket. Multiple values can be selected, separated by commas only (no spaces). Use the Note Only available on Grace. |

|

|

process-tree, system-wide, none |

process-tree |

Trace OS thread scheduling activity. Select Note If the |

|

|

milliseconds |

See Description |

Set the interval, in milliseconds, at which buffered CUDA data is automatically saved to storage. CUDA data buffer saves may cause profiler overhead; buffer save behavior can be controlled with this switch. If the CUDA flush interval is set to 0 on systems running CUDA 11.0 or newer, buffers are saved when they fill. If a flush interval is set to a non-zero value on such systems, buffers are saved only when the flush interval expires. If a flush interval is set and the profiler runs out of available buffers before the flush interval expires, additional buffers will be allocated as needed. In this case, setting a flush interval can reduce buffer save overhead but increase memory use by the profiler. If the flush interval is set to 0 on systems running older versions of CUDA, buffers are saved at the end of the collection. If the profiler runs out of available buffers, additional buffers are allocated as needed. If a flush interval is set to a non-zero value on such systems, buffers are saved when the flush interval expires. A

|

|

|

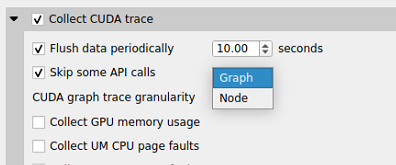

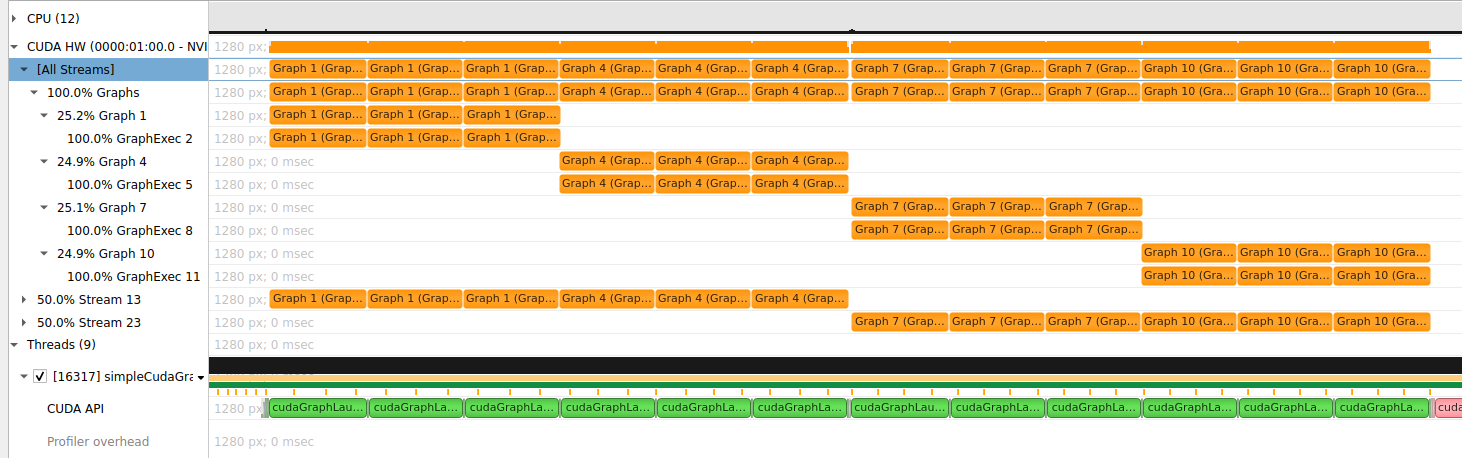

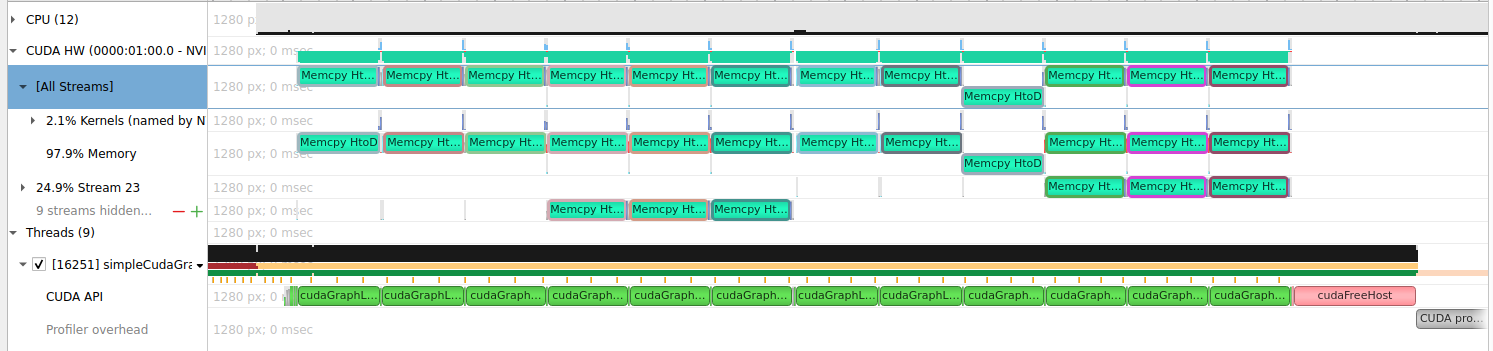

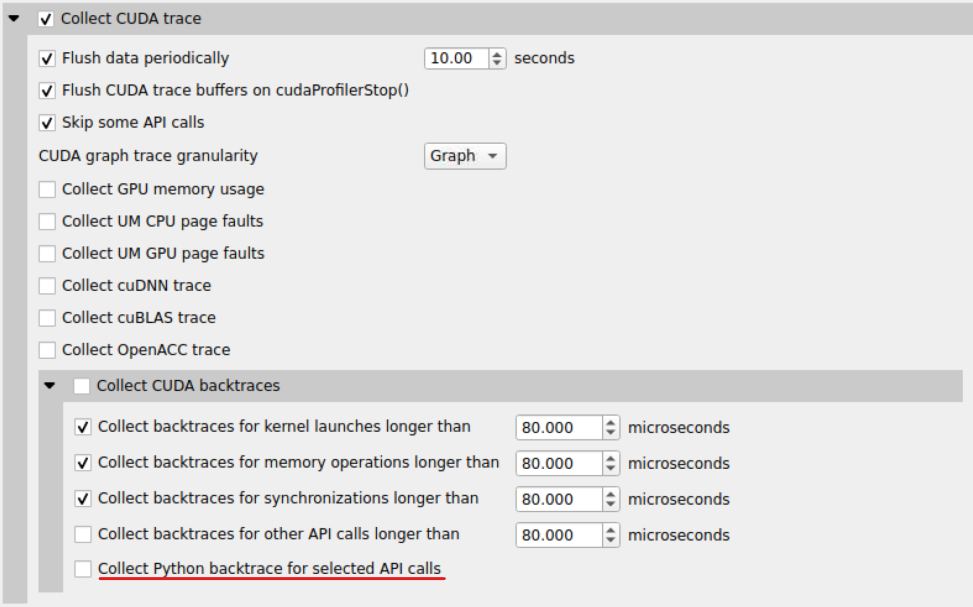

graph, node |

graph |

If |

|

|

true, false |

false |

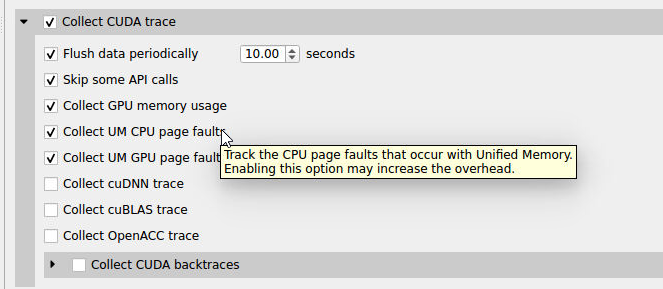

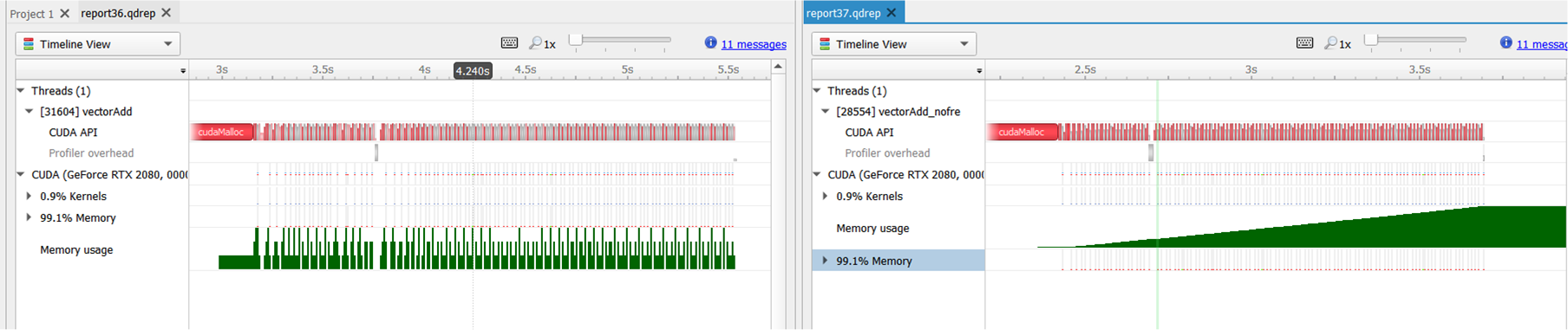

Track the GPU memory usage by CUDA kernels. Applicable only when CUDA tracing is enabled. Note This feature may cause significant runtime overhead. |

|

|

true, false |

false |

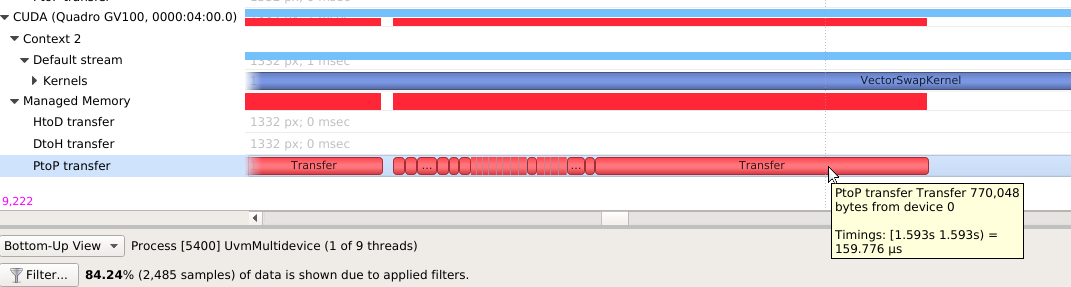

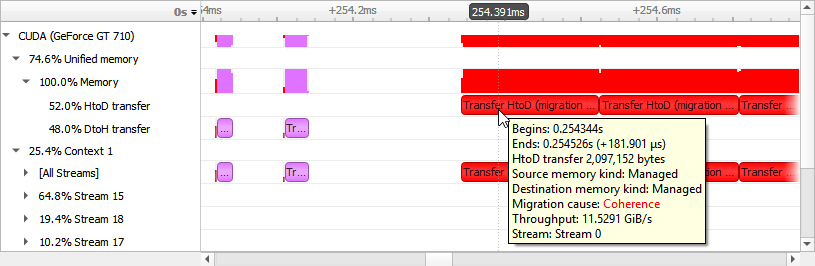

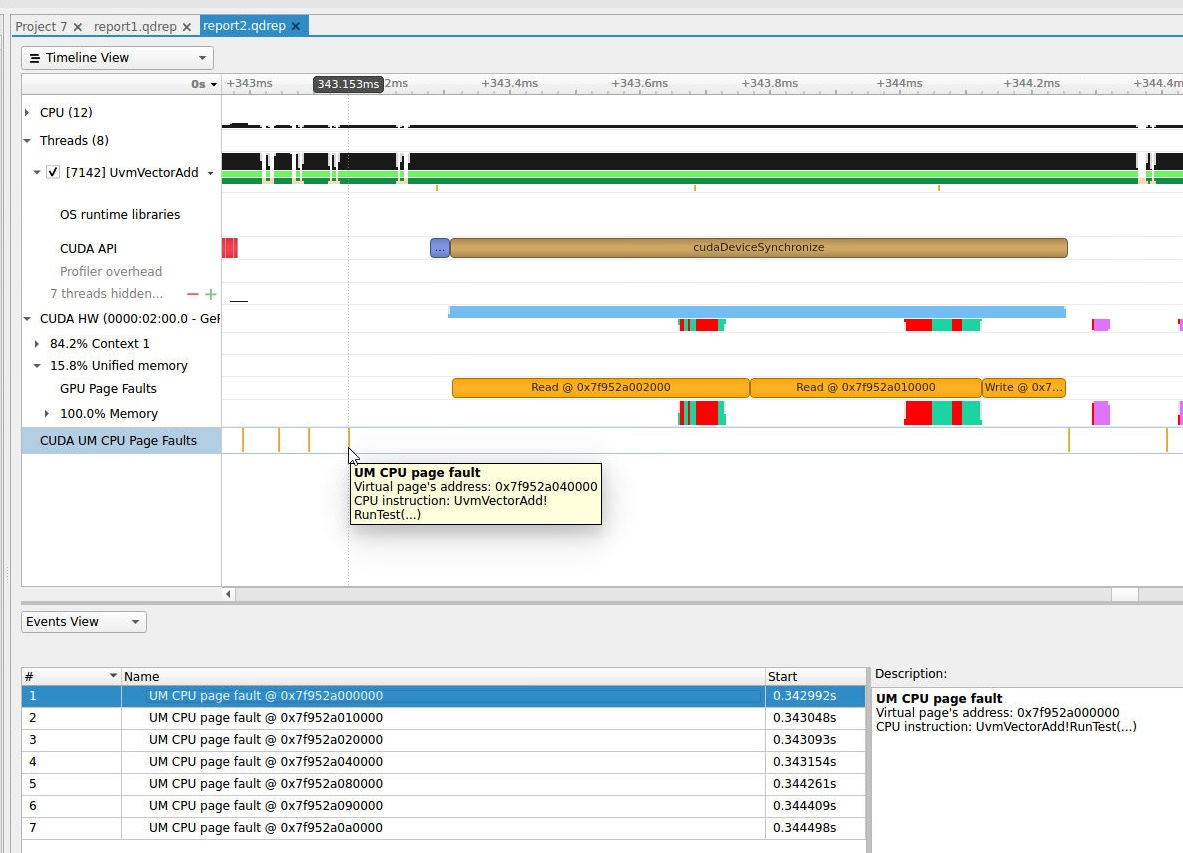

This switch tracks the page faults that occur when CPU code tries to access a memory page that resides on the device. Note that this feature may cause significant runtime overhead. Not available on Nsight Systems Embedded Platforms Edition. |

|

|

true, false |

false |

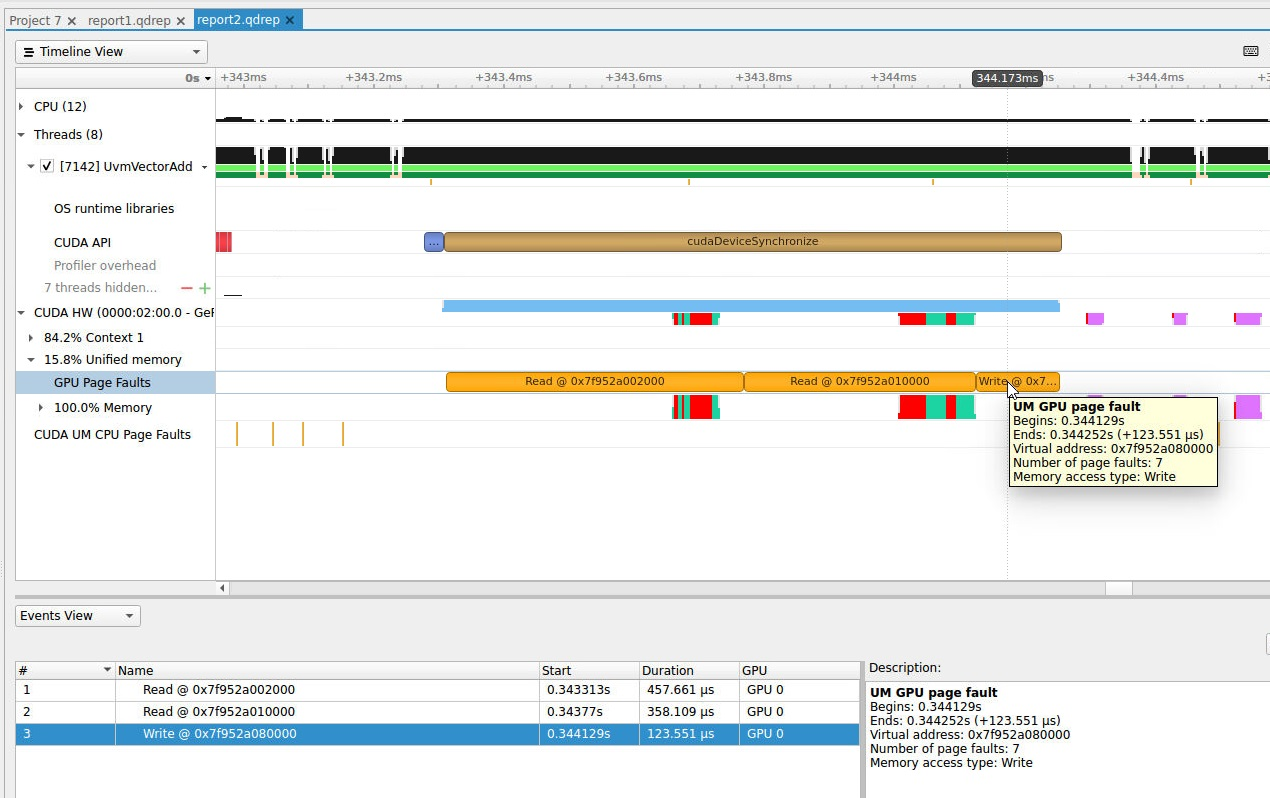

This switch tracks the page faults that occur when GPU code tries to access a memory page that resides on the host. Note that this feature may cause significant runtime overhead. Not available on Nsight Systems Embedded Platforms Edition. |

|

|

all, none, kernel, memory, sync, other |

none |

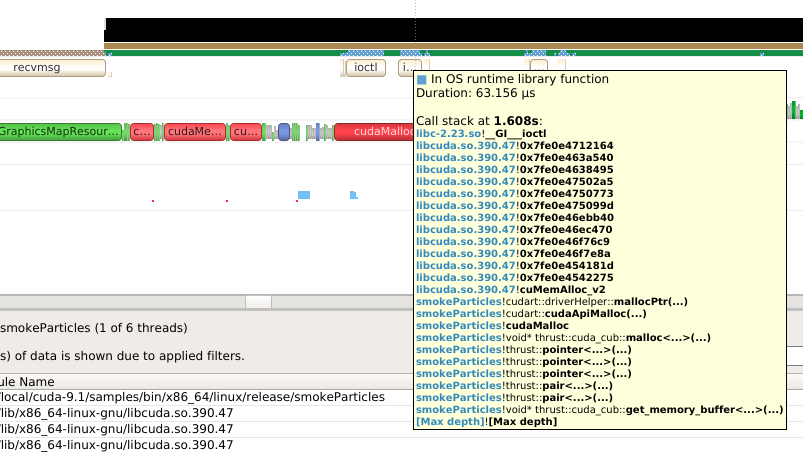

When tracing CUDA APIs, enable the collection of a backtrace when a CUDA API is invoked. Significant runtime overhead may occur. Values may be combined using Note CPU sampling must be enabled. |

|

-y |

|

< seconds > |

0 |

Collection start delay in seconds. |

-d |

|

< seconds > |

NA |

Collection duration in seconds; duration must be greater than zero. The launched process will be terminated when the specified profiling duration expires unless the user specifies the |

|

60 <= integer |

Stop the recording session after this many frames have been captured. When it is selected, the command cannot include any other stop options. If not specified, this option is disabled. |

||

|

true, false |

false |

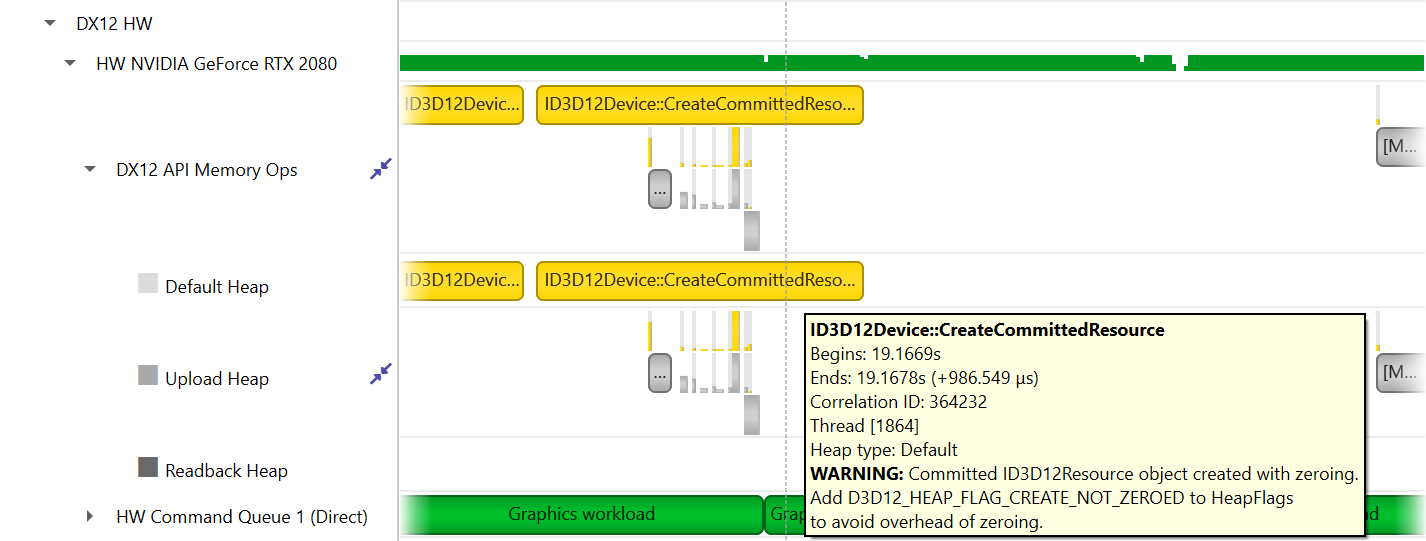

The Nsight Systems trace initialization involves creating a D3D device and discarding it. Enabling this flag makes a call to |

|

|

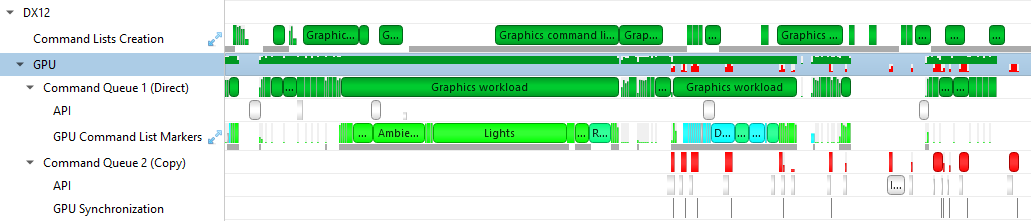

true, false, individual, batch, none |

individual |

If individual or true, trace each DX12 workload’s GPU activity individually. If batch, trace DX12 workloads’ GPU activity in |

|

|

true, false |

true |

If true, trace wait calls that block on fences for DX12. Note that this switch is applicable only when

|

|

|

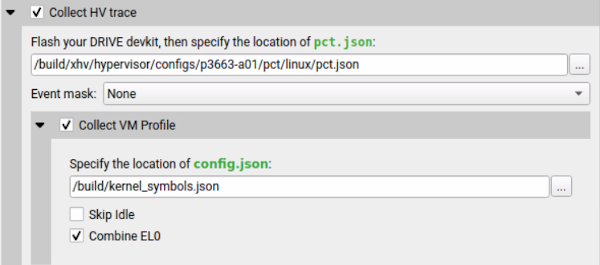

<filepath kernel_symbols.json> |

none |

XHV sampling config file. Available in Nsight Systems Embedded Platforms Edition only. |

|

-e |

|

A=B |

NA |

Set environment variable(s) for the application process to be launched. Environment variables should be defined as A=B. Multiple environment variables can be specified as A=B,C=D. |

|

<plugin_name>[,arg1,arg2,…] |

NA |

Use the specified plugin. The option can be specified multiple times to enable multiple plugins. Plugin arguments are separated by commas only (no spaces). Commas can be escaped with a backslash |

|

|

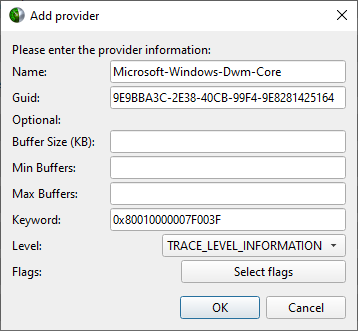

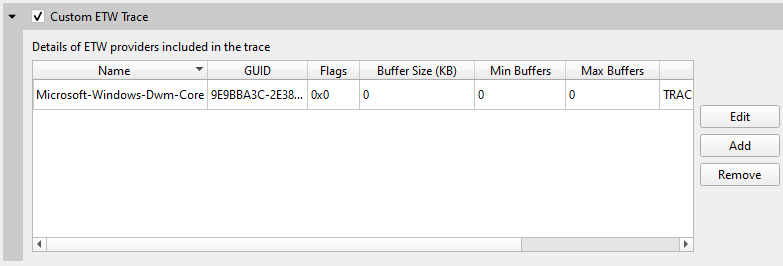

“<name>,<guid>”, or path to JSON file |

none |

Add custom ETW trace provider(s). If you want to specify more attributes than Name and GUID, provide a JSON configuration file as outlined below. This switch can be used multiple times to add multiple providers. Note: Only available for Windows targets. |

|

|

system-wide, none |

none |

Use the |

|

|

Integers from 1 to 20 Hz |

3 |

The sampling frequency used to collect event counts. Minimum event sampling frequency is 1 Hz. Maximum event sampling frequency is 20 Hz. Not available in Nsight Systems Embedded Platforms Edition. |

|

|

arrow, arrowdir, hdf, json, parquetdir, sqlite, text, none |

none |

Create additional output file(s) based on the data collected. This option can be given more than once. Warning If the collection captures a large amount of data, creating the export file may take several minutes to complete. |

|

|

true, false |

true |

If set to true, any call to |

|

-f |

|

true, false |

false |

If true, overwrite all existing result files with same output filename (.nsys-rep, .sqlite, .h5, .txt, .json, .arrows, _arwdir, _pqtdir). |

|

Collect ftrace events. Argument should list events to collect as: subsystem1/event1,subsystem2/event2. Requires root. No ftrace events are collected by default. |

|||

|

Skip initial ftrace setup and collect already configured events. Default resets the ftrace configuration. |

|||

|

GPU ID, help, all, none |

none |

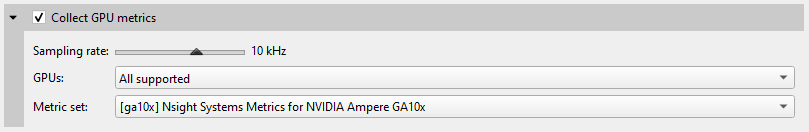

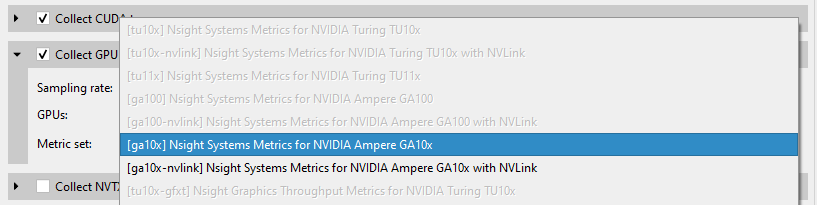

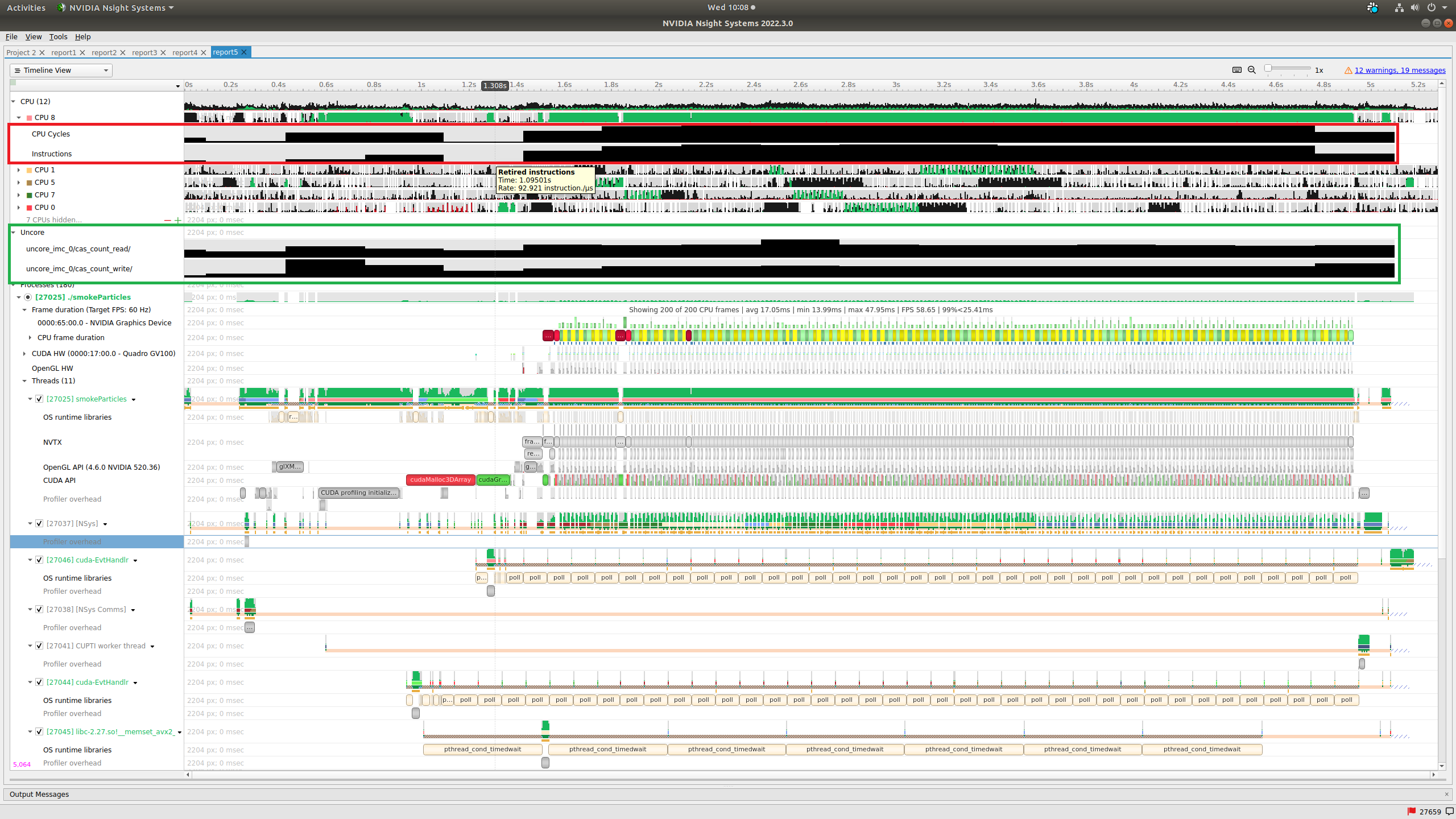

Collect GPU Metrics from specified devices. Determine GPU IDs by using |

|

|

integer |

10000 |

Specify GPU Metrics sampling frequency. Minimum supported frequency is 10 (Hz). Maximum supported frequency is 200000 (Hz). |

|

|

alias, file:<file name> |

see description |

Specify metric set for GPU Metrics. The argument must be one of the aliases reported by

|

|

|

help, <id1,id2,…>, all, none |

none |

Analyze video devices. |

|

|

true,false |

false |

Trace GPU context switches. Note that this requires driver r435.17 or later and root permission. |

|

|

<tag> |

none |

Print the help message. The option can take one optional argument that will be used as a tag. If a tag is provided, only options relevant to the tag will be printed. |

|

|

‘F1’ to ‘F12’ |

‘F12’ |

Hotkey to trigger the profiling session. Note that this switch is applicable only when

|

|

|

<NIC names> |

none |

A comma-separated list of NIC names. The NICs which |

|

|

<file paths> |

none |

A comma-separated list of file paths of existing ibdiagnet db_csv files containing network information data. Nsight Systems will read the network information from these files. Don’t use |

|

|

<directory path> |

none |

Sets the path of a directory into which ibdiagnet network discovery data will be written. Use this option together with the |

|

|

<IB switch GUIDs> |

none |

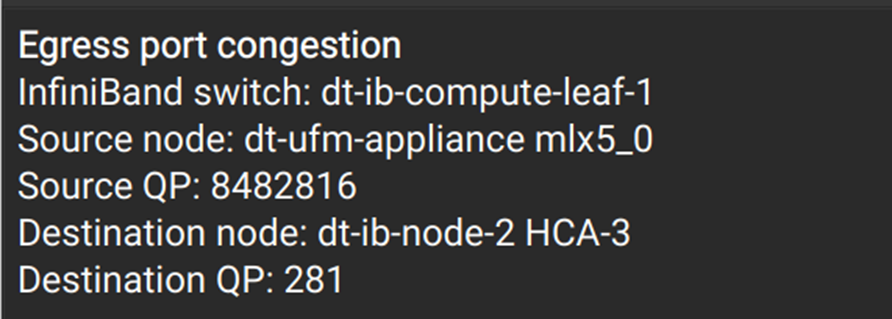

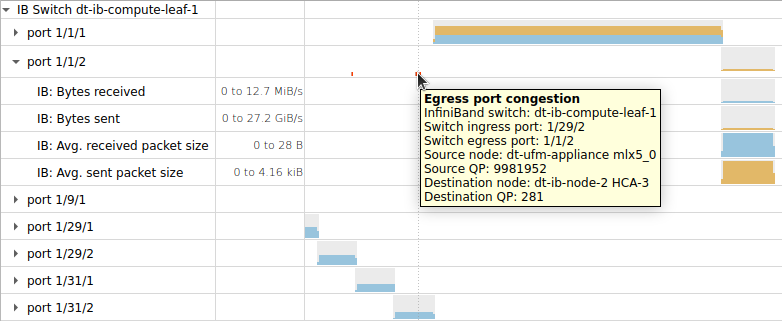

A comma-separated list of InfiniBand switch GUIDs. Collect InfiniBand switch congestion events from switches identified by the specified GUIDs. This switch can be used multiple times. System scope. Use the |

|

|

<NIC name> |

none |

The name of the NIC (HCA) through which InfiniBand switches will be accessed. By default, the first active NIC will be used. One way to find a NIC’s name is via the |

|

|

1 <= integer <= 100 |

50 |

Percent of InfiniBand switch congestion events to be collected. This option enables reducing the network bandwidth consumed by reporting congestion events. |

|

|

1 < integer <= 1023 |

75 |

High threshold percentage for InfiniBand switch egress port buffer size. Before a packet leaves an InfiniBand switch, it is stored at an egress port buffer. The buffer’s size is checked and if it exceeds the given threshold percentage, a congestion event is reported. The percentage can be greater than 100. |

|

|

<IB switch GUIDs> |

none |

A comma-separated list of InfiniBand switch GUIDs. Collect metrics from the specified InfiniBand switches. This switch can be used multiple times. System scope. |

|

|

<NIC name> |

none |

The name of the NIC (HCA) through which InfiniBand switches will be accessed for performance metrics. By default, the first active NIC will be used. One way to find a NIC’s name is via the |

|

-n |

|

true, false |

true |

When true, the current environment variables and the tool’s environment variables will be specified for the launched process. When false, only the tool’s environment variables will be specified for the launched process. |

|

true,false |

true |

Use detours for injection. If false, process injection will be performed by Windows hooks, which allows it to bypass anti-cheat software. |

|

|

true, false |

false |

Trace Interrupt Service Routines (ISRs) and Deferred Procedure Calls (DPCs). Requires administrative privileges. Available only on Windows devices. |

|

|

none, sigkill, sigterm, signal number |

sigterm |

Send signal to the target application’s process group. Can be used with |

|

|

openmpi,mpich |

openmpi |

When using |

|

|

true, false |

false |

Collect metrics from supported NIC/HCA devices. System scope. Not available on Nsight Systems Embedded Platforms Edition. |

|

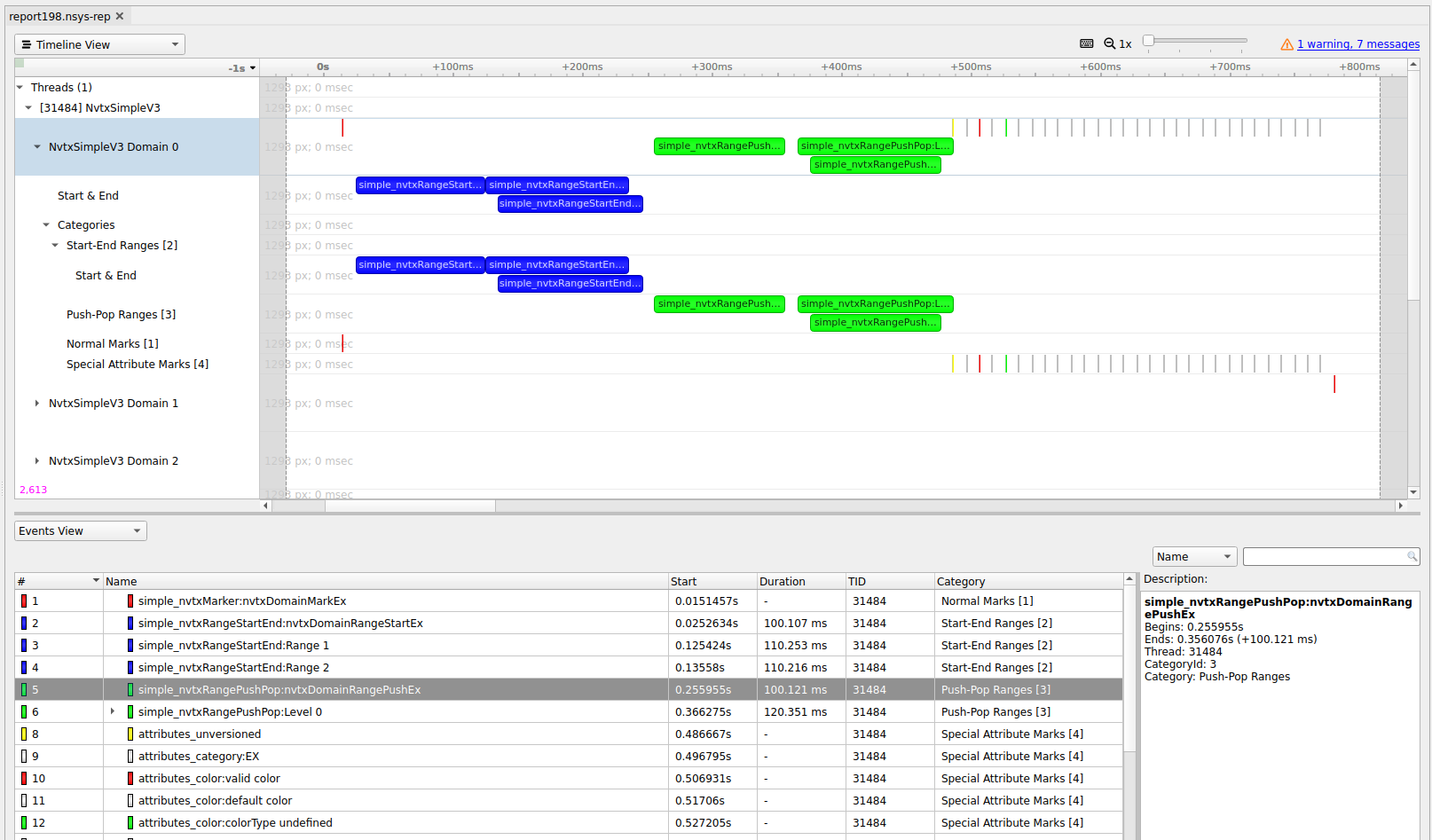

-p |

|

range@domain, range, range@* |

none |

Specify NVTX range and domain to trigger the profiling session. This option is applicable only when used along with |

|

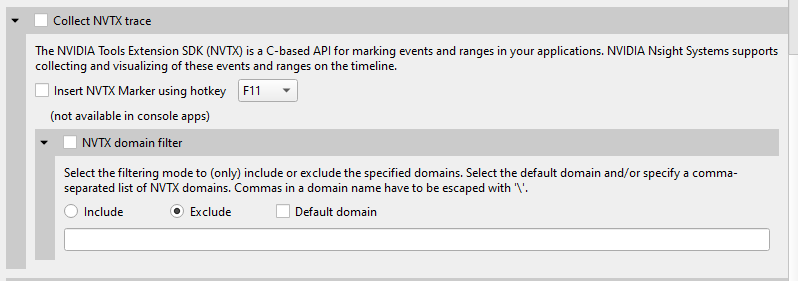

default, <domain_names> |

Choose to exclude NVTX events from a comma separated list of domains. Note Only one of |

||

|

default, <domain_names> |

Choose to only include NVTX events from a comma separated list of domains. Note Only one of |

||

|

<json_file> |

Specify the path to the JSON file containing the requested NVTX annotations. |

||

|

true, false |

true |

If true, trace the OpenGL workloads’ GPU activity. Note that this switch is applicable only when

|

|

|

‘help’ or the end user’s selected events in the format ‘x,y’ |

Select the OS events to sample. Use the |

||

|

integer |

24 |

Set the depth for the backtraces collected for OS runtime libraries calls. |

|

|

integer |

6144 |

Set the stack dump size, in bytes, to generate backtraces for OS runtime libraries calls. |

|

|

nanoseconds |

80000 |

Set the duration, in nanoseconds, that all OS runtime libraries calls must execute before backtraces are collected. |

|

|

< nanoseconds > |

1000 ns |

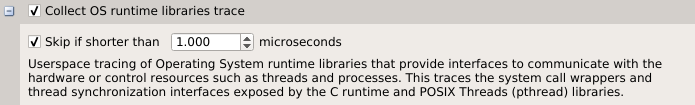

Set the duration, in nanoseconds, that Operating System Runtime (osrt) APIs must execute before they are traced. Values significantly less than 1000 may cause significant overhead and result in extremely large result files. Note This setting is ignored for APIs that interact with files when |

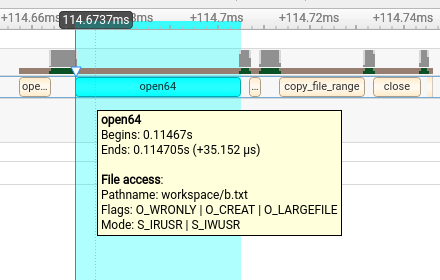

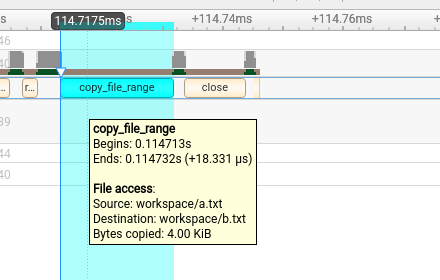

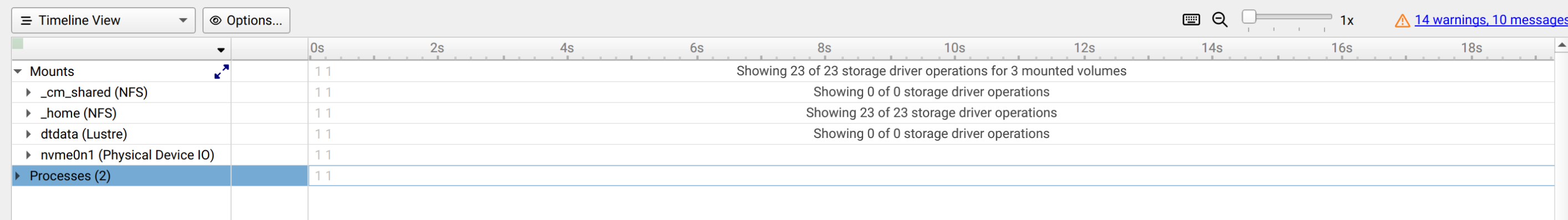

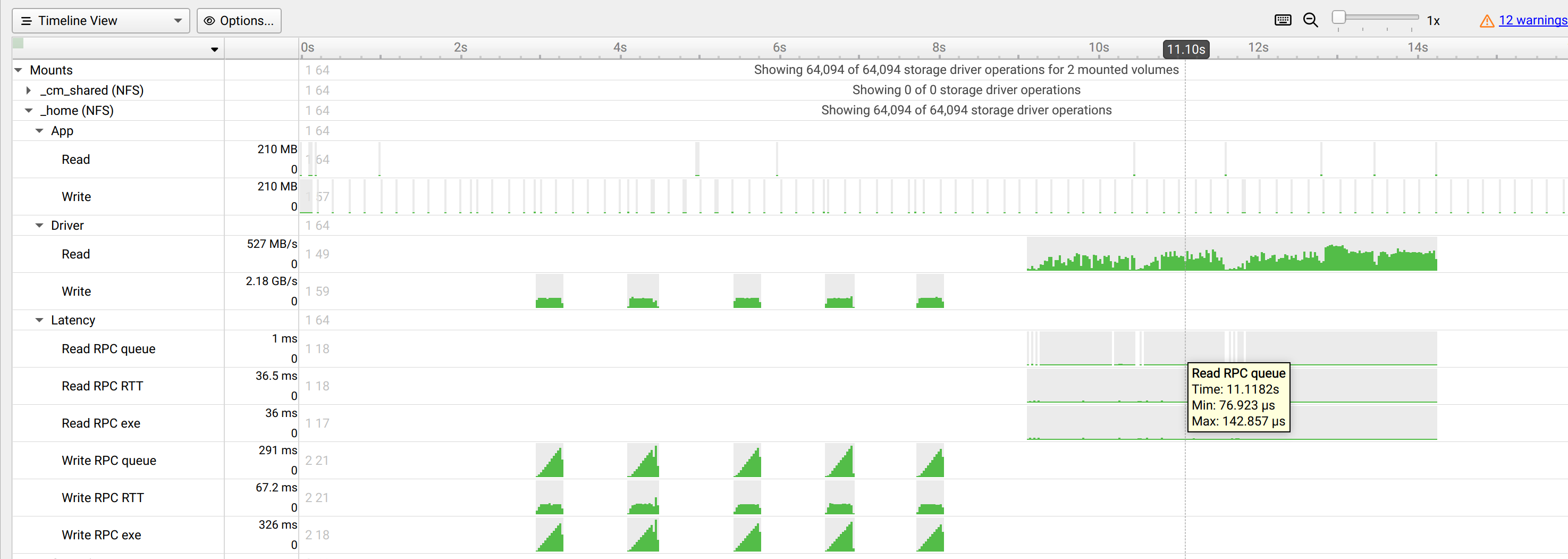

|

|

true, false |

false |

Collect file access data when tracing Operating System Runtime (osrt) APIs that interact with files. Note When this setting is set to true the |

|

-o |

|

< filename > |

report# |

Set the report file name. Any |

|

main, process-tree, system-wide |

main |

Select which process(es) to trace. Available in Nsight Systems Embedded Platforms Edition only. Nsight Systems Workstation Edition will always trace system-wide in this version of the tool. |

|

|

cuda, none |

none |

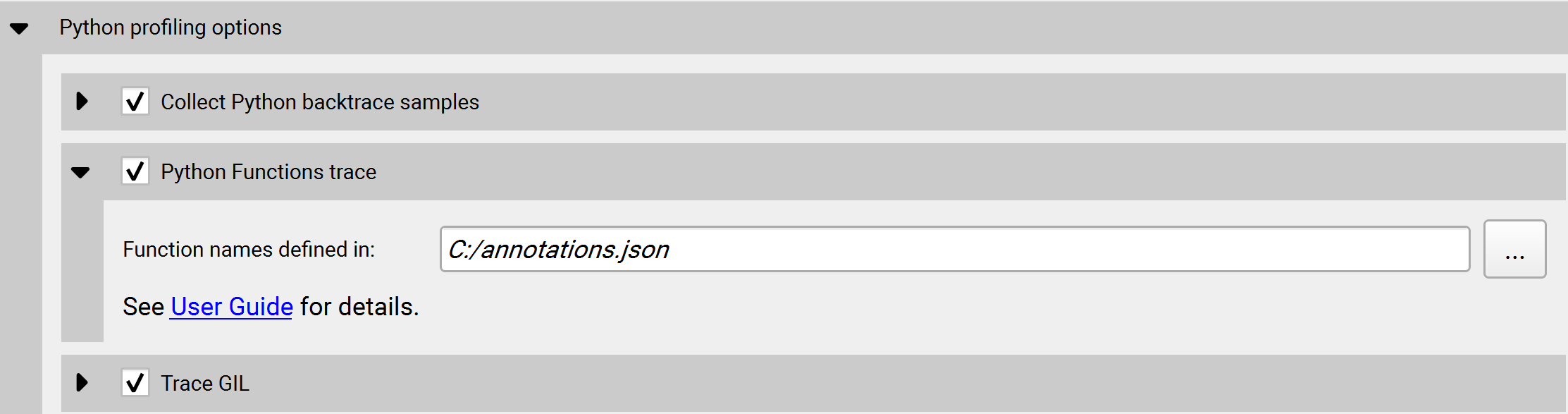

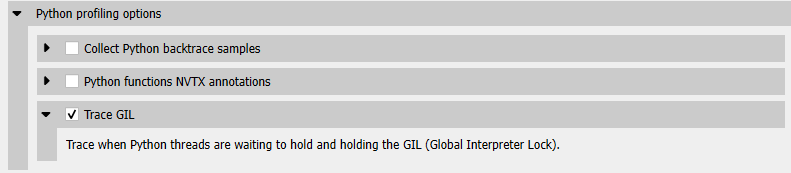

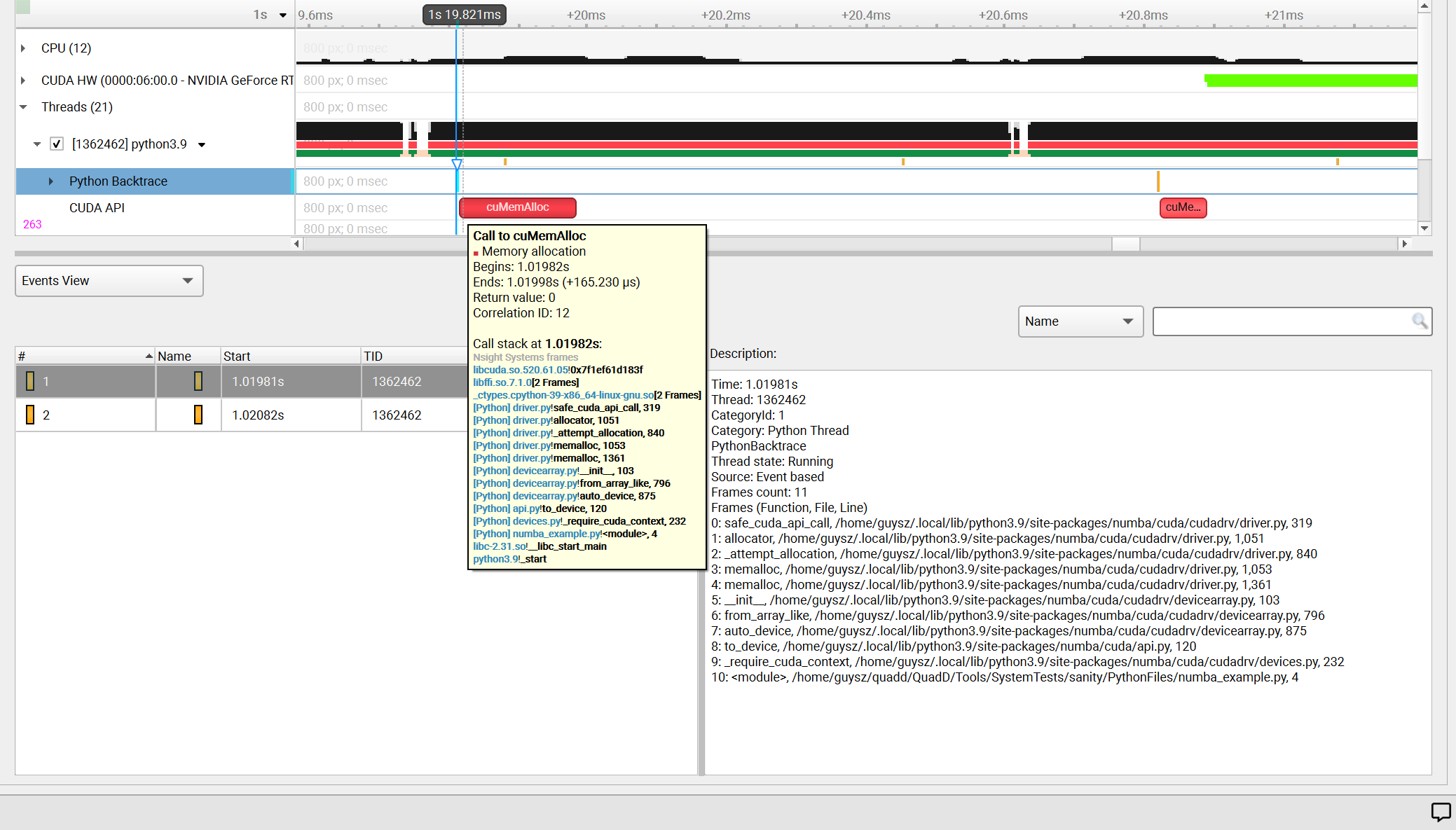

Collect Python backtrace events when tracing the selected API’s trigger. This option is supported on Arm server (SBSA) platforms and x86 Linux targets. Note: tracing and backtraces of the selected API and CPU sampling must be enabled. For example, |

|

|

true, false |

false |

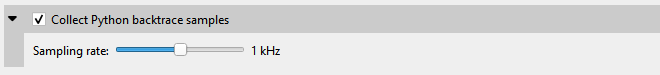

Collect Python backtrace sampling events. This option is supported on Arm server (SBSA) platforms, x86 Linux and Windows targets. Note: When profiling Python-only workflows, consider disabling the CPU sampling option to reduce overhead. |

|

|

1 <= integer <= 2000 |

1000 |

Specify the Python sampling frequency. The minimum supported frequency is 1 Hz. The maximum supported frequency is 2 kHz. This option is ignored if the |

|

|

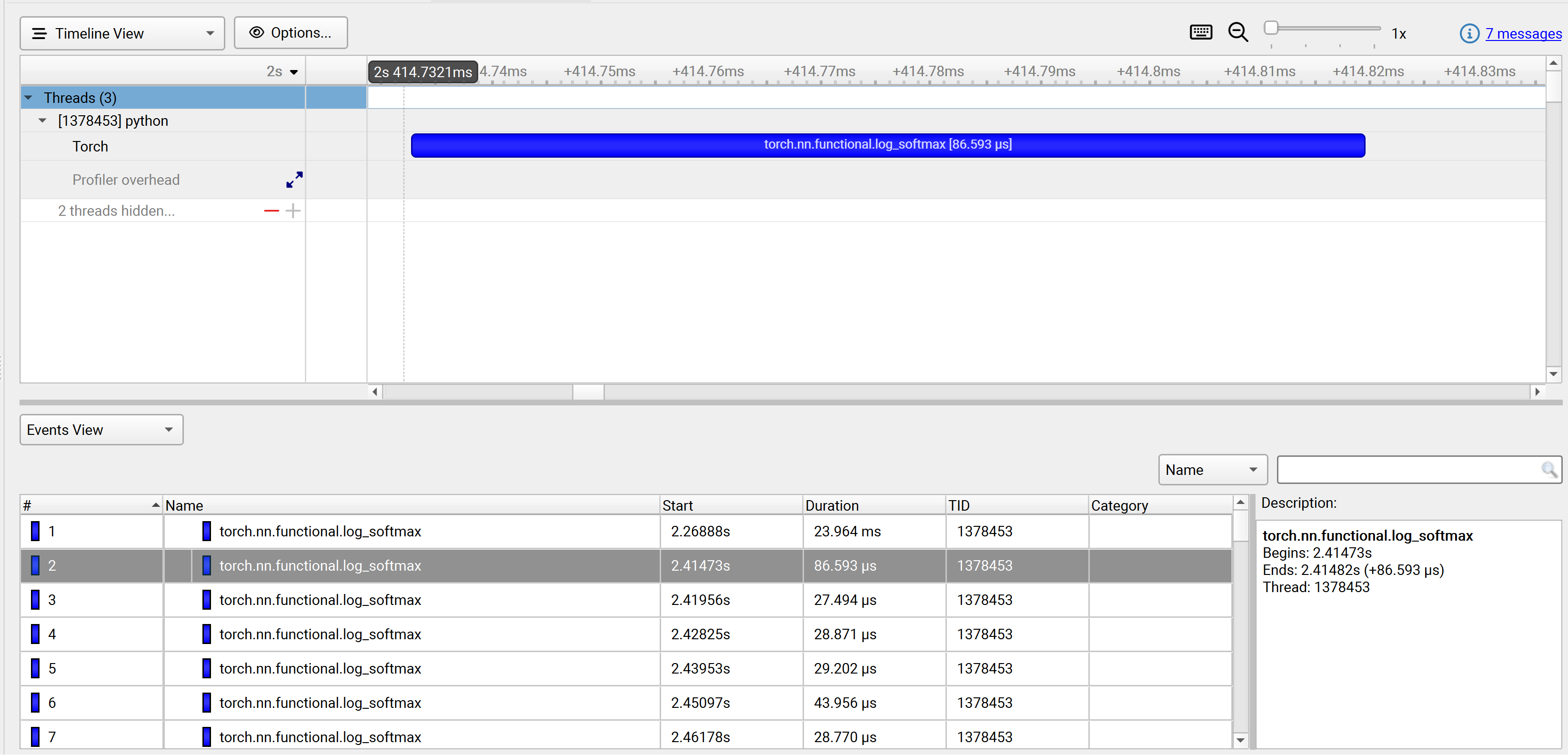

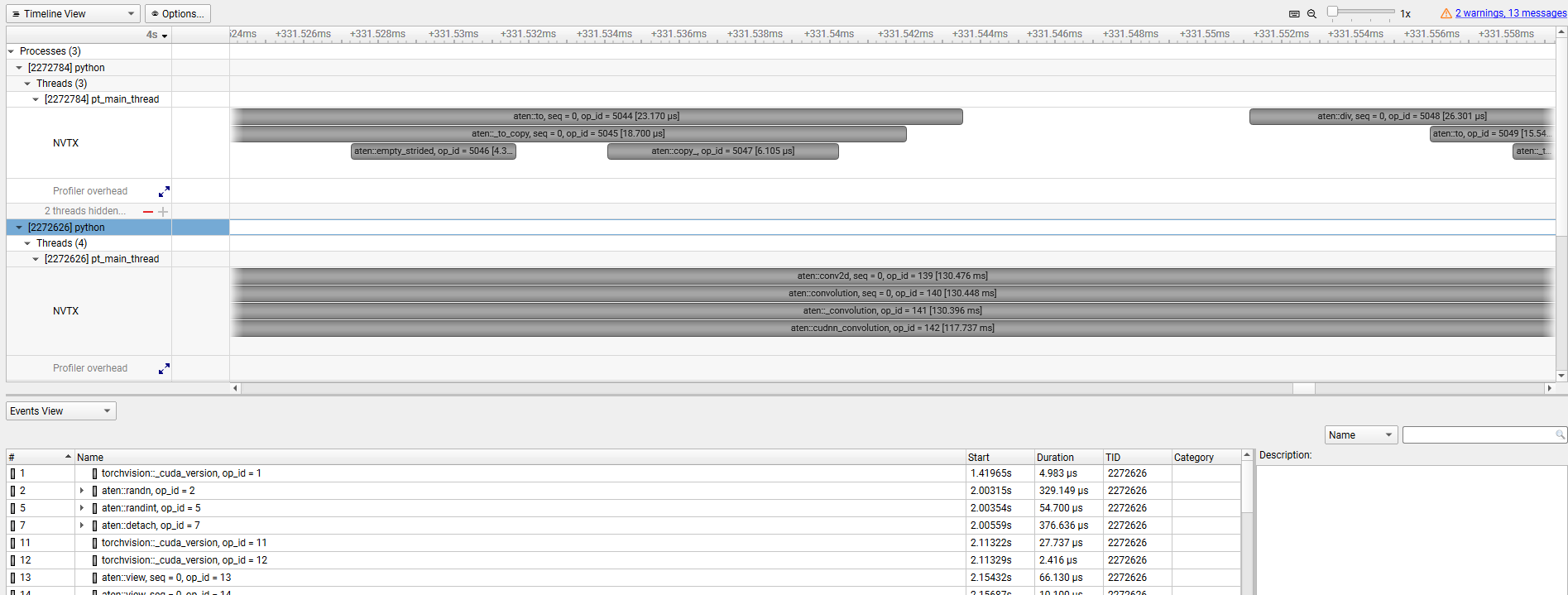

autograd-nvtx, autograd-shapes-nvtx, functions-trace, none |

none |

Enable automatic annotations of PyTorch functions. |

|

|

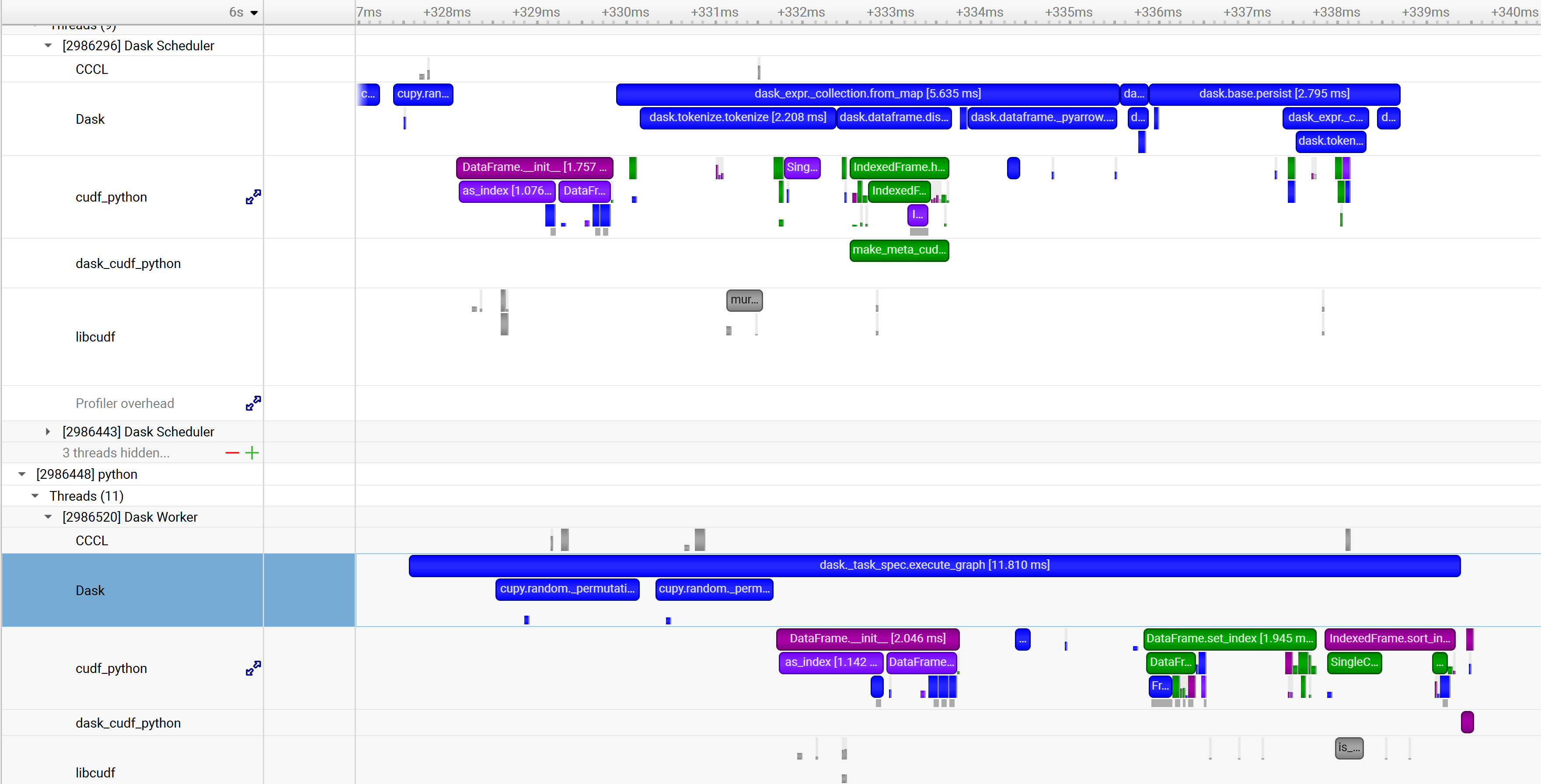

functions-trace, none |

none |

Enable automatic annotations of Dask functions. |

|

|

class/event,event, class/event:mode, class:mode,help,none |

none |

Multiple values can be selected, separated by commas only (no spaces). See the

|

|

|

system,process,fast,wide |

system:fast |

Values are separated by a colon ( |

|

|

true,false |

true |

Resolve symbols of captured samples and backtraces. |

|

|

true, false |

false |

Retain ETW files generated by the trace, merge and move the files to the output directory. |

|

|

< username > |

none |

Run the target application as the specified username. If not specified, the target application will be run by the same user as Nsight Systems. Requires root privileges. Available for Linux targets only. |

|

-s |

|

process-tree, system-wide, xhv, xhv-system-wide, none |

process-tree |

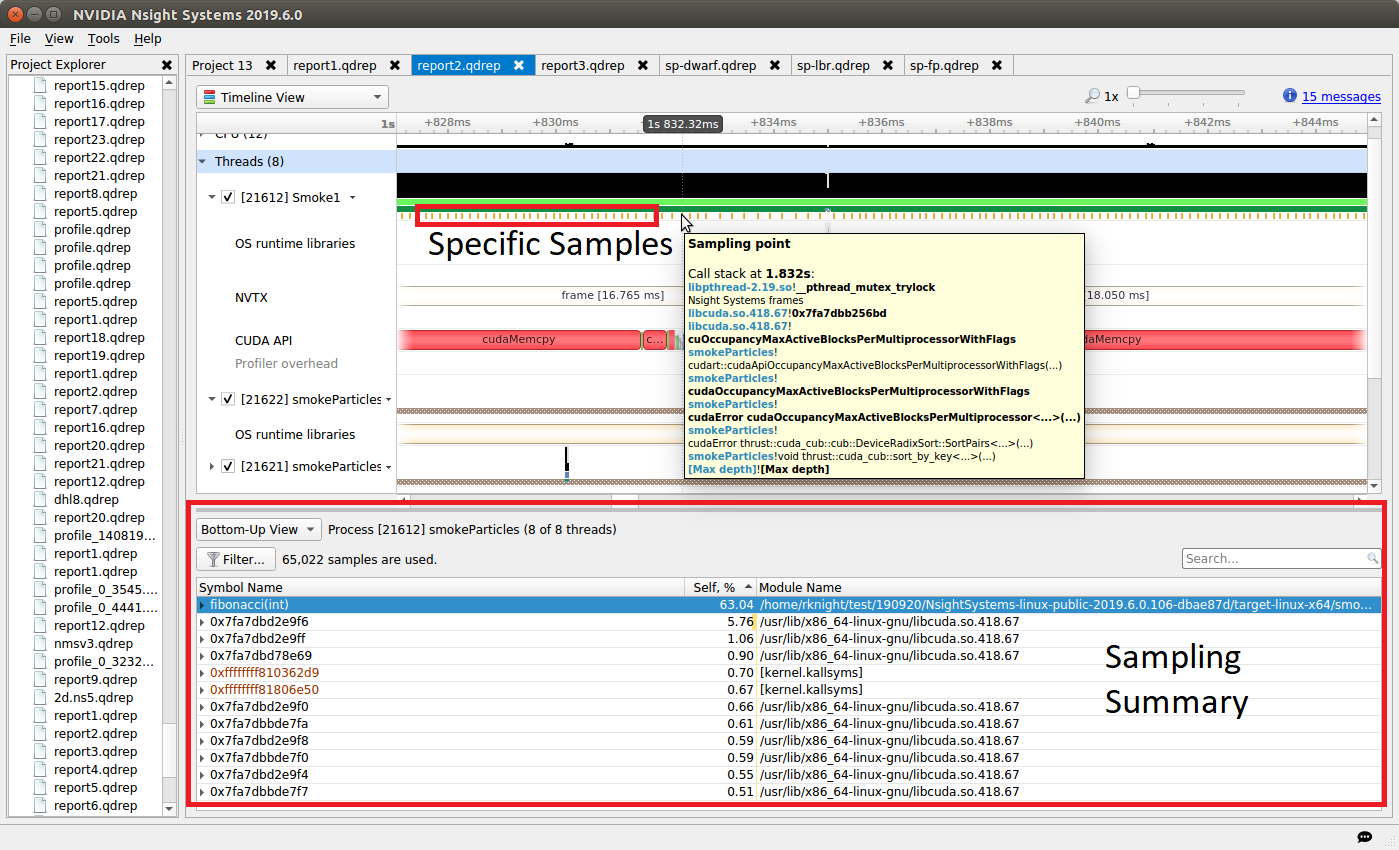

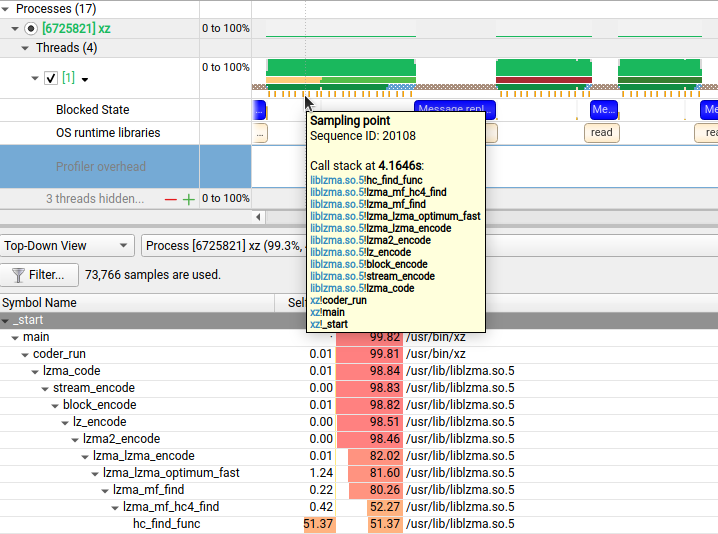

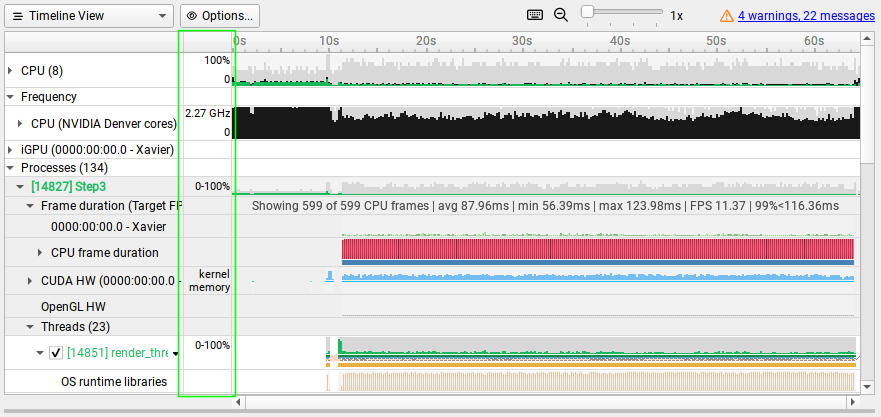

Select how to collect CPU IP/backtrace samples. If Note

Note If set to |

|

integer <= 32 |

1 |

The number of CPU IP samples collected for every CPU IP/backtrace sample collected. For example, if set to 4, on the fourth CPU IP sample collected, a backtrace will also be collected. Lower values increase the amount of data collected. Higher values can reduce collection overhead and reduce the number of CPU IP samples dropped. If DWARF backtraces are collected, the default is 4, otherwise the default is 1. This option is not available on Nsight Systems Embedded Platforms Edition or on non-Linux targets. |

|

|

100 <= integer <= 8000 |

1000 |

Specify the sampling/backtracing frequency. The minimum supported frequency is 100 Hz. The maximum supported frequency is 8000 Hz. This option is supported only on QNX, Linux for Tegra, and Windows targets. |

|

|

integer |

determined dynamically |

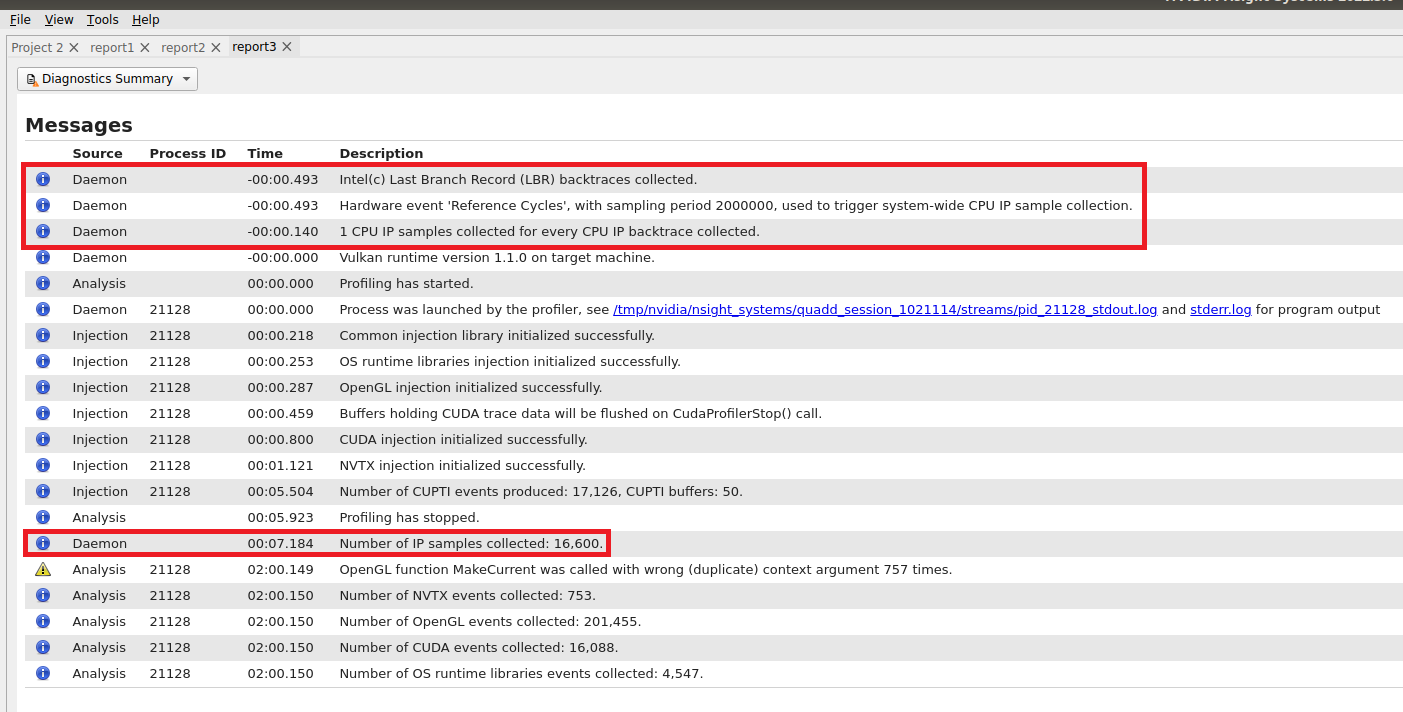

The number of CPU Cycle events counted before a CPU instruction pointer (IP) sample is collected. If configured, backtraces may also be collected. The smaller the sampling period, the higher the sampling rate. Note that smaller sampling periods will increase overhead and significantly increase the size of the result file(s). Requires the --sampling-trigger=perf switch. |

|

|

integer |

determined dynamically |

The number of events counted before a CPU instruction pointer (IP) sample is collected. The event used to trigger the collection of a sample is determined dynamically. For example, on Intel based platforms, it will probably be “Reference Cycles” and on AMD platforms, “CPU Cycles”. If configured, backtraces may also be collected. The smaller the sampling period, the higher the sampling rate. Note that smaller sampling periods will increase overhead and significantly increase the size of the result file(s). This option is available only on Linux targets. |

|

|

timer, sched, perf, cuda |

timer,sched |

Specify backtrace collection trigger. Multiple APIs can be selected, separated by commas only (no spaces). Available on Nsight Systems Embedded Platforms Edition targets only. |

|

|

[a-Z][0-9,a-Z,spaces] |

profile-<id>-<application> |

Name the session created by the command. Name must start with an alphabetical character followed by printable or space characters. Any |

|

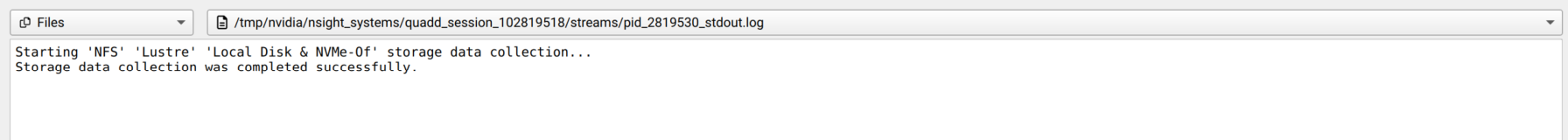

-w |

|

true, false |

true |

If true, send the target process’s stdout and stderr streams to both the console and stdout/stderr files which are added to the report file. If false, only send the target process stdout and stderr streams to the stdout/stderr files which are added to the report file. |

|

true,false |

false |

Collect SoC Metrics. Available in Nsight Systems Embedded Platforms Edition only. |

|

|

integer |

10000 |

Specify SoC Metrics sampling frequency. Minimum supported frequency is ‘100’ (Hz). Maximum supported frequency is ‘1000000’ (Hz). Available in Nsight Systems Embedded Platforms Edition only. |

|

|

alias, file:<file name> |

see description |

Specify metric set for SoC Metrics. The argument must be one of the aliases reported by

|

|

|

1 <= integer |

Start the recording session when the frame index reaches the frame number preceding the start frame index. Note: When it is selected, the command cannot include any other start options. If not specified, this option is disabled. |

||

-Y |

|

true, false |

false |

Delays collection indefinitely until the nsys start command is executed for this session. Enabling this option overrides the |

|

true, false |

false |

Generate summary statistics after the collection. Warning When set to true, an SQLite database will be created after the collection. If the collection captures a large amount of data, creating the database file may take several minutes to complete. |

|

-x |

|

true, false |

true |

If true, stop collecting automatically when the launched process has exited or when the duration expires - whichever occurs first. If false, duration must be set and the collection stops only when the duration expires. Nsight Systems does not officially support runs longer than 5 minutes. |

|

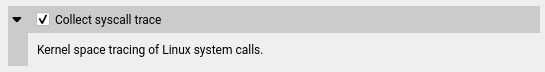

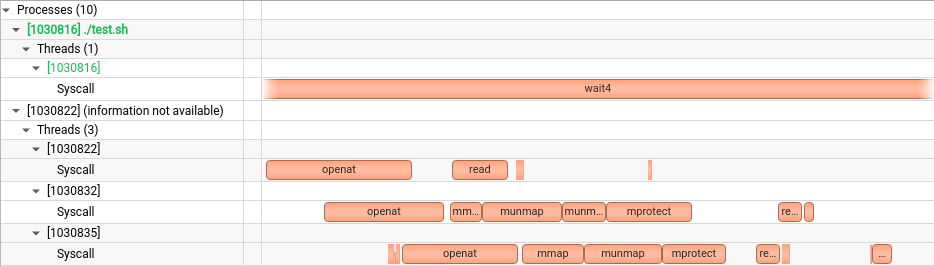

process-tree, pid-namespace, none |

none |

Collect system calls. The value defines the collection scope: |

|

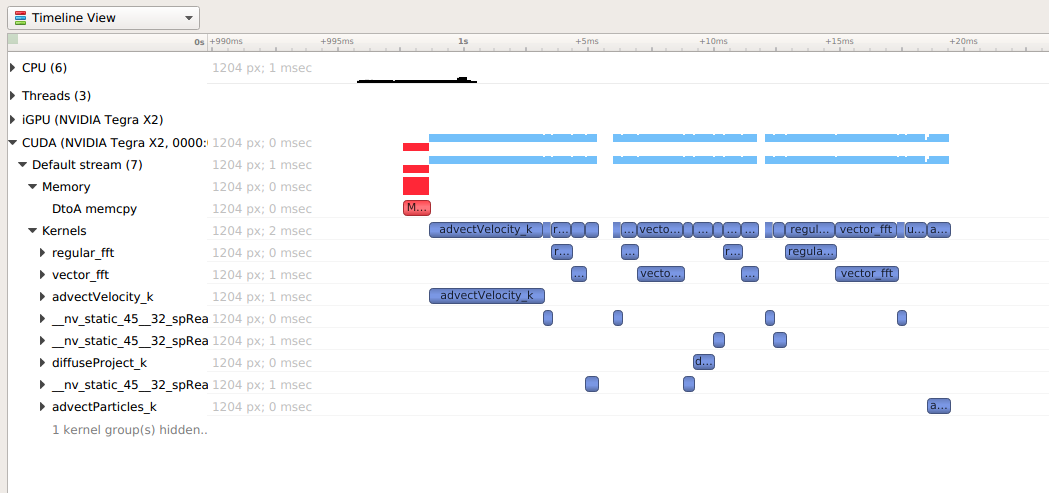

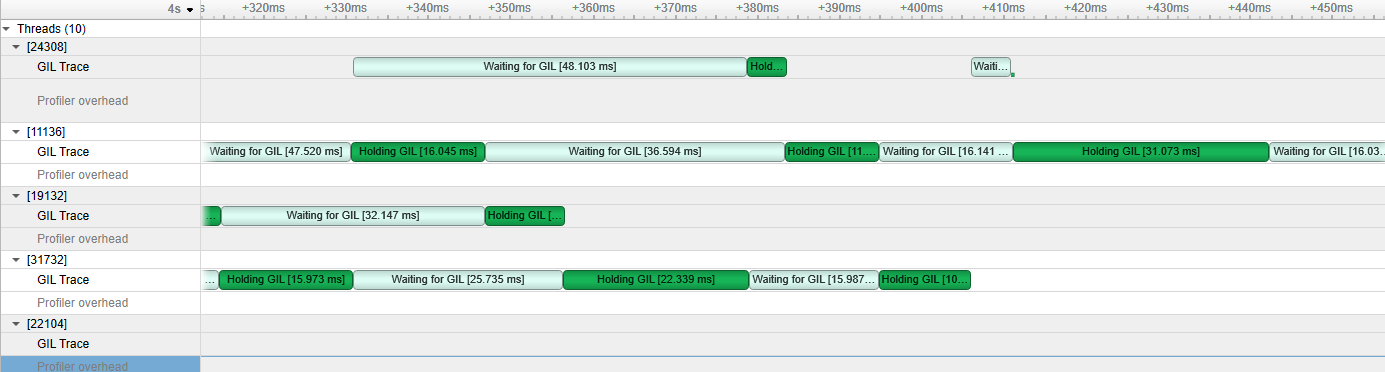

-t |

|

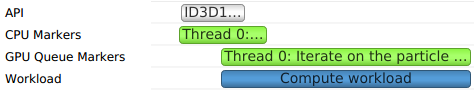

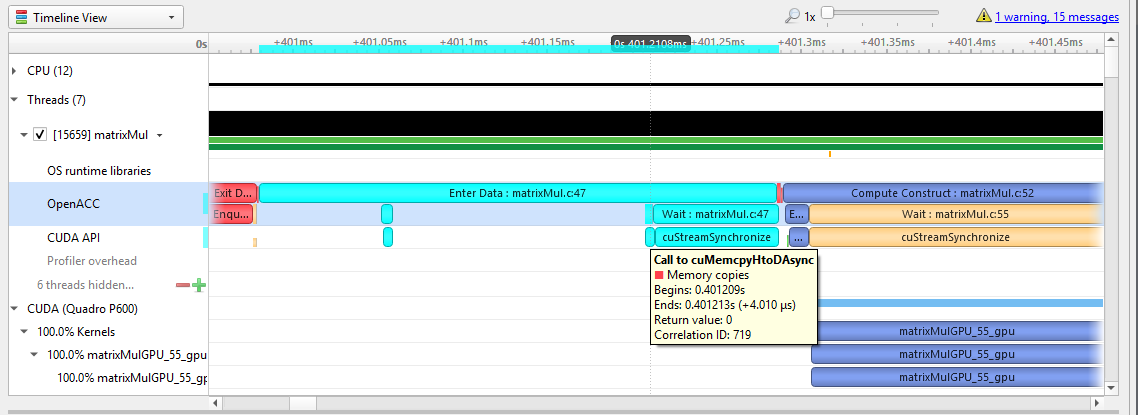

cuda, nvtx, cuda-hw, cublas, cublas-verbose, cusparse, cusparse-verbose, cudnn, cudla, cudla-verbose, cusolver, cusolver-verbose, opengl, opengl-annotations, openacc, openmp, osrt, mpi, nvvideo, vulkan, vulkan-annotations, dx11, dx11-annotations, dx12, dx12-annotations, openxr, openxr-annotations, oshmem, ucx, wddm, tegra-accelerators, python-gil, gds(experimental), none |

cuda, opengl, nvtx, osrt |

Select the API(s) to be traced. The osrt switch controls the OS runtime libraries tracing. Multiple

APIs can be selected, separated by commas only (no spaces). Since OpenACC and cuXXX APIs

are tightly linked with CUDA, selecting one of those APIs will automatically enable CUDA tracing.

cublas, cudla, cusparse and cusolver all have XXX-verbose options available.

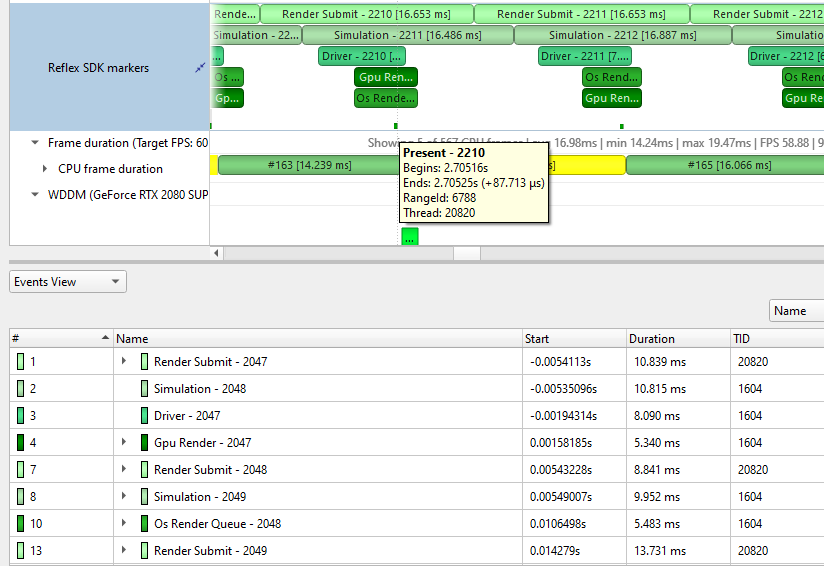

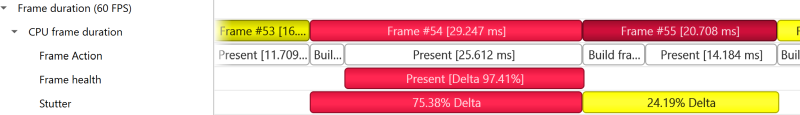

Reflex SDK latency markers will be automatically collected when DX or vulkan API trace is enabled.

See information on Note cuDNN is not available on Windows target. Note cuda-hw is not available for hardware before Blackwell, nor for Windows before 11.0 |

|

true, false |

false |

If true, trace any child process after fork and before they call one of the exec functions. Beware, tracing in this interval relies on undefined behavior and might cause your application to crash or deadlock. This option is only available on Linux target platforms. |

|

|

true, false |

false |

Collect vsync events. If collection of vsync events is enabled, display/display_scanline ftrace events will also be captured. Available in Nsight Systems Embedded Platforms Edition only. |

|

|

true, false, individual, batch, none |

individual |

If individual or true, trace each Vulkan workload’s GPU activity individually. If batch, trace Vulkan workloads’ GPU activity in |

|

|

primary,all |

all |

If |

|

|

true, false |

true |

If |

|

|

true, false |

false |

If |

|

|

< filepath pct.json > |

none |

Collect hypervisor trace. Available in Nsight Systems Embedded Platforms Edition only. |

|

|

all, none, core, sched, irq, trap |

all |

Available in Nsight Systems Embedded Platforms Edition only. |
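The CUDA flush-interval behavior described in the profile options table (interval in milliseconds) depends on both the interval and the CUDA version, which is easy to misread. The function below is a hedged restatement of that description as a lookup, not profiler code; `cuda_buffer_save_policy` and its return strings are hypothetical.

```python
from typing import Tuple


def cuda_buffer_save_policy(cuda_version: Tuple[int, int], flush_interval_ms: int) -> str:
    """Return when buffered CUDA data is saved, per the flush-interval description."""
    if flush_interval_ms < 0:
        raise ValueError("flush interval must be non-negative")
    cuda_11_or_newer = cuda_version >= (11, 0)
    if flush_interval_ms == 0:
        # 0: CUDA 11.0+ saves full buffers; older CUDA saves at the end.
        return "when buffers fill" if cuda_11_or_newer else "at the end of the collection"
    # Non-zero interval: on CUDA 11.0+ the interval is the only save trigger,
    # and extra buffers are allocated if the profiler runs out before it expires.
    if cuda_11_or_newer:
        return "only when the flush interval expires"
    return "when the flush interval expires"
```

The design trade-off from the description carries over: on CUDA 11.0 or newer, a non-zero interval can reduce save overhead at the cost of extra profiler memory.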

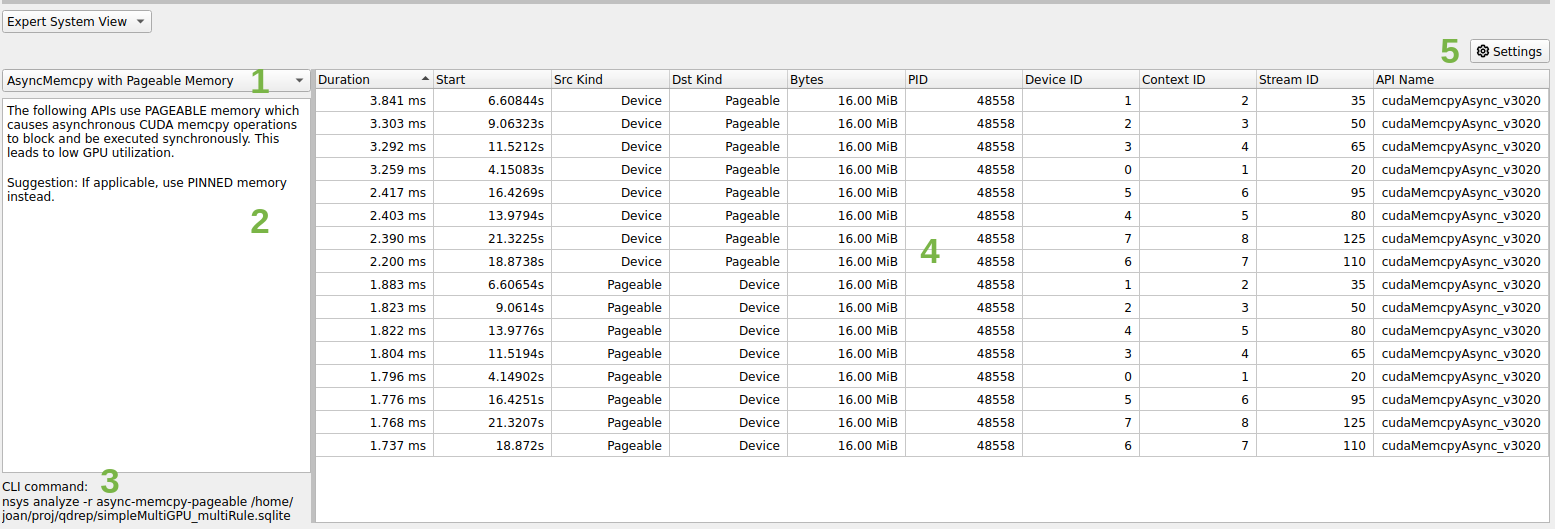
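The profile option that sets how many CPU IP samples are collected for every CPU IP/backtrace sample (integer <= 32) comes with an example: with a value of 4, every fourth IP sample also carries a backtrace. The sketch below models that ratio. `backtrace_sample_numbers` is a hypothetical helper, and the lower bound of 1 is an assumption.

```python
from typing import List


def backtrace_sample_numbers(total_ip_samples: int, samples_per_backtrace: int) -> List[int]:
    """Return the 1-based IP sample numbers that also carry a backtrace."""
    if not 1 <= samples_per_backtrace <= 32:
        raise ValueError("samples_per_backtrace must be between 1 and 32")
    # Every Nth IP sample (N, 2N, 3N, ...) also collects a backtrace.
    return list(range(samples_per_backtrace, total_ip_samples + 1, samples_per_backtrace))


print(backtrace_sample_numbers(10, 4))  # [4, 8]
print(backtrace_sample_numbers(3, 1))   # [1, 2, 3]
```

Higher values mean fewer backtraces per IP sample, which matches the table's note that they reduce collection overhead and dropped samples.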

CLI Analyze Command Switch Options

The nsys analyze command generates and outputs a report to the terminal using expert system rules on existing results. Reports are generated from an SQLite export of a .nsys-rep file. If a .nsys-rep file is specified, Nsight Systems will look for an accompanying SQLite file and use it. If no SQLite export file exists, one will be created.
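The lookup described above can be sketched as follows. This is a model of the documented behavior, not Nsight Systems code: `resolve_sqlite` is hypothetical, the `force_export` parameter stands in for the force re-export switch listed in the table below, and the assumption that the accompanying export sits next to the report with the same stem and a .sqlite suffix is ours.

```python
import tempfile
from pathlib import Path
from typing import Tuple


def resolve_sqlite(input_file: str, force_export: bool = False) -> Tuple[Path, bool]:
    """Return (sqlite_path, needs_export) for an analyze input file."""
    path = Path(input_file)
    if path.suffix == ".sqlite":
        return path, False  # already an SQLite export: use it directly
    if path.suffix != ".nsys-rep":
        raise ValueError(f"expected a .nsys-rep or .sqlite file: {input_file}")
    # Assumption: the accompanying export shares the report's stem.
    sqlite_path = path.with_suffix(".sqlite")
    return sqlite_path, force_export or not sqlite_path.exists()


with tempfile.TemporaryDirectory() as tmp:
    report = Path(tmp) / "report1.nsys-rep"
    report.touch()
    print(resolve_sqlite(str(report))[1])  # True: no export yet, so one is created
    report.with_suffix(".sqlite").touch()
    print(resolve_sqlite(str(report))[1])  # False: the existing export is reused
    print(resolve_sqlite(str(report), force_export=True)[1])  # True
```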

After choosing the analyze command switch, the following options are available. Usage:

nsys [global-options] analyze [options] [input-file]
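As a minimal sketch, the helper below builds an nsys analyze command from the -r, -f, and -o options in the table below, checking rule names against the rules that table lists. `analyze_command` and `report1.nsys-rep` are hypothetical, the rule set may differ between Nsight Systems versions, and rule arguments are not handled.

```python
from typing import List, Sequence

# Rule names listed for -r in the analyze options table.
KNOWN_RULES = {
    "cuda_memcpy_async", "cuda_memcpy_sync", "cuda_memset_sync",
    "cuda_api_sync", "gpu_gaps", "gpu_time_util", "dx12_mem_ops",
}


def analyze_command(input_file: str, rules: Sequence[str],
                    fmt: str = "column", output: str = "-") -> List[str]:
    """Build an `nsys analyze` argv; output "-" prints to the console."""
    unknown = [r for r in rules if r not in KNOWN_RULES]
    if unknown:
        raise ValueError(f"unknown rule(s): {unknown}")
    # Multiple rules may be given as one comma-separated list.
    return ["nsys", "analyze", "-r", ",".join(rules), "-f", fmt, "-o", output, input_file]


print(" ".join(analyze_command("report1.nsys-rep", ["gpu_gaps", "gpu_time_util"])))
# nsys analyze -r gpu_gaps,gpu_time_util -f column -o - report1.nsys-rep
```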

Short |

Long |

Possible Parameters |

Default |

Switch Description |

|---|---|---|---|---|

|

<tag> |

none |

Print the help message. The option can take one optional argument that will be used as a tag. If a tag is provided, only options relevant to the tag will be printed. |

|

-f |

|

column, table, csv, tsv, json, hdoc, htable, . |

Specify the output format. The special name “.” indicates the default format for the given output. The default format for console is column, while files and process outputs default to csv. This option may be used multiple times. Multiple formats may also be specified using a comma-separated list (<name[:args…][,name[:args…]…]>). See Report Formatters for options available with each format. |

|

|

true, false |

false |

Force a re-export of the SQLite file from the specified .nsys-rep file, even if an SQLite file already exists. |

|

|

true, false |

false |

Overwrite any existing output files. |

|

|

<format_name>, ALL, [none] |

none |

With no argument, list a summary of the available output formats. If a format name is given, a more detailed explanation of the format is displayed. If |

|

|

<rule_name>, ALL, [none] |

none |

With no argument, list available rules with a short description. If a rule name is given, a more detailed explanation of the rule is displayed. If |

|

-o |

|

-, @<command>, <basename>, . |

|

Specify the output mechanism. There are three output mechanisms: print to console, output to file, or output to command. This option may be used multiple times. Multiple outputs may also be specified using a comma-separated list. If the given output name is “-”, the output will be displayed on the console. If the output name starts with “@”, the output designates a command to run. The nsys command will be executed and the analysis output will be piped into the command. Any other output is assumed to be the base path and name for a file. If a file basename is given, the filename used will be: <basename>_<analysis&args>.<output_format>. The default base (including path) is the name of the SQLite file (as derived from the input file or |

-q |

|

Do not display verbose messages, only display errors. |

||

-r |

|

cuda_memcpy_async, cuda_memcpy_sync, cuda_memset_sync, cuda_api_sync, gpu_gaps, gpu_time_util, dx12_mem_ops |

all |

Specify the rule(s) to execute, including any arguments. This option may be used multiple times. Multiple rules may also be specified using a comma-separated list. See Expert Systems section and |

|

<file.sqlite> |

Specify the SQLite export filename. If this file exists, it will be used. If this file doesn’t exist (or if |

||

|

nsec, nanoseconds, usec, microseconds, msec, milliseconds, seconds |

nanoseconds |

Set the basic unit of time. The switch argument is matched using longest-prefix matching, so it is not necessary to write out the whole word. This is similar to passing a “:time=<unit>” argument to every formatter, although the formatter uses stricter naming conventions. See |
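The three output mechanisms of the analyze -o option can be modeled as below. This is a hedged sketch of the documented rules: `classify_output` is hypothetical, the "." default-name value is not modeled, and the assumption that the <analysis&args> part is just the rule name (no arguments) is ours.

```python
from typing import Tuple


def classify_output(name: str, analysis: str, output_format: str) -> Tuple[str, str]:
    """Map an analyze -o value to (mechanism, target)."""
    if name == "-":
        return "console", ""
    if name.startswith("@"):
        # The analysis output is piped into this command.
        return "command", name[1:]
    # Anything else is a file base path: <basename>_<analysis&args>.<output_format>
    return "file", f"{name}_{analysis}.{output_format}"


print(classify_output("-", "gpu_gaps", "csv"))            # ('console', '')
print(classify_output("@grep cuda", "gpu_gaps", "csv"))   # ('command', 'grep cuda')
print(classify_output("out/report1", "gpu_gaps", "csv"))  # ('file', 'out/report1_gpu_gaps.csv')
```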

CLI Cancel Command Switch Options

After choosing the cancel command switch, the following options are available. Usage:

nsys [global-options] cancel [options]

Short |

Long |

Possible Parameters |

Default |

Switch Description |

|---|---|---|---|---|

|

<tag> |

none |

Print the help message. The option can take one optional argument that will be used as a tag. If a tag is provided, only options relevant to the tag will be printed. |

|

|

<session identifier> |

none |

Cancel the collection in the given session. The option argument must represent a valid session name or ID as reported by |

CLI Export Command Switch Options

After choosing the export command switch, the following options are available. Usage:

nsys [global-options] export [options] [nsys-rep-file]
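As a hedged sketch, the helper below derives the default export filename (the input filename with the extension for the chosen format) and builds an nsys export command with the -t and -o options from the table below. The format-to-suffix mapping is an assumption inferred from the result-file list in the profile -f description (.sqlite, .h5, .txt, .json, .arrows, _arwdir, _pqtdir); `default_export_name` and `export_command` are hypothetical, and the info type is not mapped.

```python
from pathlib import PurePath
from typing import List, Optional

# Assumed suffix per export type, inferred from the profile -f result-file list.
EXPORT_SUFFIXES = {
    "sqlite": ".sqlite", "hdf": ".h5", "text": ".txt", "json": ".json",
    "arrow": ".arrows", "arrowdir": "_arwdir", "parquetdir": "_pqtdir",
}


def default_export_name(report: str, export_type: str) -> str:
    """Replace the .nsys-rep extension with the suffix for export_type."""
    base = PurePath(report)
    if base.suffix == ".nsys-rep":
        base = base.with_suffix("")
    return str(base) + EXPORT_SUFFIXES[export_type]


def export_command(report: str, export_type: str, output: Optional[str] = None) -> List[str]:
    """Build an `nsys export` argv; -o is added only when a name is given."""
    cmd = ["nsys", "export", "-t", export_type]
    if output is not None:
        cmd += ["-o", output]
    return cmd + [report]


print(default_export_name("report1.nsys-rep", "hdf"))       # report1.h5
print(" ".join(export_command("report1.nsys-rep", "sqlite")))  # nsys export -t sqlite report1.nsys-rep
```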

Short |

Long |

Possible Parameters |

Default |

Switch Description |

|---|---|---|---|---|

|

This option only applies to “directory of files” output formats with existing export files. If this option is given, an error will not be reported and the existing output files will not be over-written. |

|||

-f |

|

true, false |

false |

If true, overwrite all existing result files with the same output filename (nsys-rep, SQLITE, HDF, TEXT, JSON, ARROW, ARROWDIR, PARQUETDIR). |

|

<tag> |

none |

Print the help message. The option can take one optional argument that will be used as a tag. If a tag is provided, only options relevant to the tag will be printed. |

|

|

true, false |

false |

Controls if NVTX extended payloads are exported as binary data. This option affects SQLite, Arrow, and Arrow/Parquet directory exports only. |

|

|

true, false |

false |

Controls whether repetitive JSON blocks are included in an export.

Some events contain dynamically defined payloads. These payloads are often exported as JSON blocks to preserve their free-form structure. Unfortunately, blocks of JSON text are not an efficient way to represent data, and can cause the export files to become quite large.

To address this, some classes of events (such as GENERIC_EVENT data) were extended to export payload data in the native export format. For those events that have an export-native representation, this flag will enable or disable the export of the equivalent JSON blocks.

Note that this does not suppress all JSON output. Some tables, like

|

|

-l |

|

true, false |

true |

Controls if table creation is lazy or not. When true, a table will only be created when it contains data. This option will be deprecated in the future, and all exports will be non-lazy. This affects SQLite, HDF5, Arrow, and Arrow/Parquet directory exports only. |

-o |

|

<filename> |

<inputfile.ext> |

Set the output filename. The default is the input filename with the extension for the chosen format. |

-q |

|

true, false |

false |

If true, do not display progress bar. |

|

true,false |

false |

Output stored strings and thread names separately, with one value per line. This affects JSON and text output only. |

|

-t |

|

sqlite, hdf, text, json, info, arrow, arrowdir, parquetdir |

sqlite |

Export format type. HDF format is supported only on x86_64 Linux and Windows. |

|

<pattern>[,<pattern>…] |

Value is a comma-separated list of search patterns (no spaces). This option can be given more than once. If set, only tables that match one or more of the patterns will be exported. If not set, all tables will be exported. This feature applies to SQLite, HDF5, Arrow, and Arrow/Parquet directory exports only. The patterns are case-insensitive POSIX basic regular expressions. Note: This is an advanced feature intended for expert users. This option does not enforce any type of dependency or relationship between tables and will truly export only the listed tables. If partial exports are used with analytics features such as

|
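The table-selection rule above can be sketched in Python to show how repeated options, comma-separated patterns, and case-insensitive matching combine. This is a sketch under stated assumptions. Python's `re` module stands in for POSIX basic regular expressions, which behave the same way for simple patterns like these. A pattern is also assumed to match when it occurs anywhere in a table name, as with grep. The table names are only examples.

```python
import re


def select_tables(table_names, pattern_args):
    """Return the table names that match at least one pattern.

    `pattern_args` holds one string per occurrence of the option, each a
    comma-separated list of patterns with no spaces.
    """
    patterns = [p for arg in pattern_args for p in arg.split(",") if p]
    if not patterns:
        return list(table_names)  # option not given: every table is exported
    # Assumption: Python regex syntax approximates POSIX BRE here, and a
    # pattern matches if it occurs anywhere in the name (search, not fullmatch).
    regexes = [re.compile(p, re.IGNORECASE) for p in patterns]
    return [name for name in table_names if any(rx.search(name) for rx in regexes)]


# Example table names, used for illustration only.
tables = ["CUPTI_ACTIVITY_KIND_KERNEL", "CUPTI_ACTIVITY_KIND_MEMCPY", "NVTX_EVENTS", "StringIds"]
print(select_tables(tables, ["kernel,nvtx"]))  # ['CUPTI_ACTIVITY_KIND_KERNEL', 'NVTX_EVENTS']
```

The example also shows why the note warns expert users. If a selected table refers to data in a table that was not selected (for instance a string table such as `StringIds`), that data is simply absent from the export.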

||

|

<timerange>[,<timerange>…] |

Value is a comma-separated list of time ranges (no spaces). This option can be given more than once. If set, only events that fall within at least one of the given ranges will be exported. If not set, all events will be exported. This feature applies to SQLite, HDF5, Arrow, and Arrow/Parquet directory exports only. Note: This is an advanced feature intended for expert users. This option does not enforce any type of dependency or relationship between related events (such as CUDA launch APIs and CUDA kernel executions). If filtered exports are used with analytics features such as generated due to missing data, and unexpected or misleading results may be generated. It is the responsibility of the user to ensure all relevant and interrelated events are exported. The format of a time-range is:

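The following table shows which events are exported for each form of range, depending on where an event lies relative to the START and END of the range (T = exported, F = not exported):

| Event position | S/E | :S/E | S/E: | :S/E: |
|---|---|---|---|---|
| Entirely within the range | T | T | T | T |
| Starts before START, ends within the range | F | T | F | T |
| Starts within the range, ends after END | F | F | T | T |
| Starts before START, ends after END | F | F | F | T |
| Entirely before START or entirely after END | F | F | F | F |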

While many events have both a start and end time, some events only have a single timestamp. These types of events are treated as an event with a start time equal to the end time. If an event’s end time is before the start time, the end time is adjusted to the start time.

If used in conjunction with the |
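The export rule can be modeled in Python to make the four range forms concrete. The sketch assumes the behavior implied by the S/E, :S/E, S/E:, and :S/E: columns above. A leading colon relaxes the START bound, so an event may begin before START. A trailing colon relaxes the END bound, so an event may finish after END. The `TimeRange` type and the function names are hypothetical, and the sketch does not parse the command-line value itself.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class TimeRange:
    start: int
    end: int
    open_start: bool = False  # ":S/E" form: event may begin before START
    open_end: bool = False    # "S/E:" form: event may finish after END


def in_range(ev_start: int, ev_end: Optional[int], r: TimeRange) -> bool:
    # Single-timestamp events are treated as start == end, and an end time
    # earlier than the start time is moved up to the start time.
    if ev_end is None or ev_end < ev_start:
        ev_end = ev_start
    # Closed bound: the event must lie inside it.
    # Open bound: the event only has to overlap the range from that side.
    lower_ok = (ev_end if r.open_start else ev_start) >= r.start
    upper_ok = (ev_start if r.open_end else ev_end) <= r.end
    return lower_ok and upper_ok


def keep_event(ev_start: int, ev_end: Optional[int], ranges: Sequence[TimeRange]) -> bool:
    # With no ranges every event is exported; otherwise one match is enough.
    return not ranges or any(in_range(ev_start, ev_end, r) for r in ranges)


forms = [TimeRange(100, 200), TimeRange(100, 200, open_start=True),
         TimeRange(100, 200, open_end=True), TimeRange(100, 200, True, True)]
print([in_range(50, 150, r) for r in forms])  # [False, True, False, True]
```

The printed row corresponds to an event that starts before START and ends within the range, which matches the second row of the table.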

||

|

true, false |

false |

If true, all timestamp values in the report will be shifted to UTC wall-clock time, as defined by the UNIX epoch. This option can be used in conjunction with the |

|

|

signed integer, in nanoseconds |

0 |

If given, all timestamp values in the report will be shifted by the given amount. This option can be used in conjunction with the |

CLI Launch Command Switch Options

After choosing the launch command switch, the following options are available. Usage:

nsys [global-options] launch [options] <application> [application-arguments]

Short |

Long |

Possible Parameters |

Default |

Switch Description |

|---|---|---|---|---|

-b |

|

auto, fp, lbr, dwarf, none |

Select the backtrace method to use while sampling. The option |

|

|

true, false |

false |

Collect clock frequency changes. Available in Nsight Systems Embedded Platforms Edition only. |

|

|

0x16, 0x17, …, none |

none |

Collect per-cluster Uncore PMU counters. Multiple values can be selected, separated by commas only (no spaces). Use the |
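Several counter options in this table share the same value convention: a list separated by commas only, or `none`. The following Python sketch shows what that convention accepts and rejects. It is a hypothetical helper for illustration and not how Nsight Systems parses its arguments. The event IDs are the placeholder values from the Possible Parameters column.

```python
def parse_event_ids(arg):
    """Parse a comma-only list such as "0x16,0x17"; "none" selects nothing."""
    if arg == "none":
        return []
    if any(ch.isspace() for ch in arg):
        raise ValueError("separate values with commas only (no spaces)")
    # Base 0 lets int() accept the 0x-prefixed hexadecimal IDs shown above.
    return [int(item, 0) for item in arg.split(",")]


print(parse_event_ids("0x16,0x17"))  # [22, 23]
```

A value such as `0x16, 0x17` (with a space) is rejected, because the documentation requires commas only.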

|

|

<filename> |

none |

Open a file that contains profile switches and parse the switches. Note that additional switches on the command line will override switches in the file. This flag can be specified more than once. |

|

|

0x11,0x13,…,none |

none |

Collect per-core PMU counters. Multiple values can be selected, separated by commas only (no spaces). Use the |

|

|

0x2a, 0x2c, …, none |

none |

Collect per-socket Uncore PMU counters. Multiple values can be selected, separated by commas only (no spaces). Use the |

|

|

process-tree, system-wide, none |

process-tree |

Trace OS thread scheduling activity. Select ‘none’ to disable tracing CPU context switches. Depending on the platform, some values may require admin or root privileges. Note: if the |

|

|

milliseconds |

See Description |

Set the interval, in milliseconds, at which buffered CUDA data is automatically saved to storage. CUDA data buffer saves may cause profiler overhead. Buffer save behavior can be controlled with this switch. If the CUDA flush interval is set to 0 on systems running CUDA 11.0 or newer, buffers are saved when they fill. If a flush interval is set to a non-zero value on such systems, buffers are saved only when the flush interval expires. If a flush interval is set and the profiler runs out of available buffers before the flush interval expires, additional buffers will be allocated as needed. In this case, setting a flush interval can reduce buffer save overhead but increase memory use by the profiler. If the flush interval is set to 0 on systems running older versions of CUDA, buffers are saved at the end of the collection. If the profiler runs out of available buffers, additional buffers are allocated as needed. If a flush interval is set to a non-zero value on such systems, buffers are saved when the flush interval expires. A

|
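The buffer-save rules for CUDA data depend on two inputs, the flush interval and the CUDA version. A small Python function may be easier to scan than the prose. It only restates the documented behavior. The function name and the `(major, minor)` version tuple are illustrative, and the sketch does not model buffer allocation.

```python
def cuda_buffer_save_policy(flush_interval_ms, cuda_version):
    """Summarize when buffered CUDA data is saved.

    `cuda_version` is a (major, minor) tuple, e.g. (12, 2).
    """
    if flush_interval_ms != 0:
        # Any CUDA version: buffers are saved when the interval expires.
        # On CUDA 11.0 or newer, running out of buffers before then causes
        # additional buffers to be allocated.
        return "when the flush interval expires"
    if cuda_version >= (11, 0):
        return "when a buffer fills"
    return "at the end of the collection"


print(cuda_buffer_save_policy(0, (12, 2)))  # when a buffer fills
```

With an interval of 0, the result depends on the CUDA version. With any non-zero interval, it does not.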

|

|

true, false |

false |

Track the GPU memory usage by CUDA kernels. Applicable only when CUDA tracing is enabled. Note: This feature may cause significant runtime overhead. |

|

|

true, false |

false |

This switch tracks the page faults that occur when CPU code tries to access a memory page that resides on the device. Note: this feature may cause significant runtime overhead. Not available on Nsight Systems Embedded Platforms Edition. |

|

|

true, false |

false |

This switch tracks the page faults that occur when GPU code tries to access a memory page that resides on the host. Note: this feature may cause significant runtime overhead. Not available on Nsight Systems Embedded Platforms Edition. |

|

|

all, none, kernel, memory, sync, other |

none |

When tracing CUDA APIs, enable the collection of a backtrace when a CUDA API is invoked. Significant runtime overhead may occur. Values may be combined using |

|

|

graph, node |

graph |

If |

|

|

true, false |

false |

The Nsight Systems trace initialization involves creating a D3D device and discarding it. Enabling this flag makes a call to |

|

|

true, false, individual, batch, none |

individual |

If individual or true, trace each DX12 workload’s GPU activity individually. If batch, trace DX12 workloads’ GPU activity in ExecuteCommandLists call batches. If none or false, do not trace DX12 workloads’ GPU activity. Note that this switch is applicable only when |

|

|

true, false |

true |

If true, trace wait calls that block on fences for DX12. Note that this switch is applicable only when

|

|

-e |

|

A=B |

NA |

Set environment variable(s) for the application process to be launched. Environment variables should be defined as A=B. Multiple environment variables can be specified as A=B,C=D. |
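The `A=B,C=D` convention for environment variables can be illustrated with a short Python parser. This is a sketch, not the tool's parser. Splitting each pair on its first `=` is an assumption, and the sketch has no way to express a value that contains a comma, because escaping rules for this option are not described here.

```python
def parse_env_assignments(arg):
    """Parse "A=B,C=D" into {"A": "B", "C": "D"} (illustrative sketch)."""
    env = {}
    for pair in arg.split(","):
        # Assumption: split on the first '=' only, so a value may contain '='.
        name, sep, value = pair.partition("=")
        if not sep or not name:
            raise ValueError(f"expected NAME=VALUE, got {pair!r}")
        env[name] = value
    return env


print(parse_env_assignments("A=B,C=D"))  # {'A': 'B', 'C': 'D'}
```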

|

help, <id1,id2,…>, all, none |

none |

Analyze video devices. |

|

|

<tag> |

none |

Print the help message. The option can take one optional argument that will be used as a tag. If a tag is provided, only options relevant to the tag will be printed. |

|

|

‘F1’ to ‘F12’ |

‘F12’ |

Hotkey to trigger the profiling session. Note that this switch is applicable only when

|

|

-n |

|

true, false |

true |

When true, the current environment variables and the tool’s environment variables will be specified for the launched process. When false, only the tool’s environment variables will be specified for the launched process. |

|

true,false |

true |

Use detours for injection. If false, process injection will be performed by Windows hooks, which allows it to bypass anti-cheat software. |

|

|

true, false |

false |

Trace Interrupt Service Routines (ISRs) and Deferred Procedure Calls (DPCs). Requires administrative privileges. Available only on Windows devices. |

|

|

openmpi,mpich |

openmpi |

When using |

|

-p |

|

range@domain, range, range@* |

none |

Specify the NVTX range and domain to trigger the profiling session. This option is applicable only when used along with |

|

default, <domain_names> |

Choose to exclude NVTX events from a comma-separated list of domains. Note: Only one of |

||

|

default, <domain_names> |

Choose to only include NVTX events from a comma-separated list of domains. ‘default’ filters the NVTX default domain. A domain with this name or commas in a domain name must be escaped with ‘\’.

|

||

|

<json_file> |

Specify the path to the JSON file containing the requested functions to trace. |

||

|

true, false |

true |

If true, trace the OpenGL workloads’ GPU activity. Note that this switch is applicable only when

|

|

|

‘help’ or the end user’s selected events in the format ‘x,y’ |

Select the OS events to sample. Use the Not available on Nsight Systems Embedded Platforms Edition. |

||

|

integer |

24 |

Set the depth for the backtraces collected for OS runtime libraries calls. |

|

|

integer |

6144 |

Set the stack dump size, in bytes, to generate backtraces for OS runtime libraries calls. |

|

|

nanoseconds |

80000 |

Set the duration, in nanoseconds, that all OS runtime libraries calls must execute before backtraces are collected. |

|

|

<nanoseconds> |

1000 ns |

Set the duration, in nanoseconds, that Operating System Runtime (osrt) APIs must execute before they are traced. Values significantly less than 1000 may cause significant overhead and result in extremely large result files. Note: This setting is ignored for APIs that interact with files when |

|

|

true, false |

false |

Collect file access data when tracing Operating System Runtime (osrt) APIs that interact with files. Note: When this setting is set to true, the |

|

|

cuda, none |

none |

Collect Python backtrace events when tracing the selected API’s trigger. This option is supported on Arm server (SBSA) platforms and x86 Linux targets. Note: tracing and backtraces of the selected API and CPU sampling must be enabled. For example, |

|

|

true, false |

false |

Collect Python backtrace sampling events. This option is supported on Arm server (SBSA) platforms, x86 Linux and Windows targets. Note: When profiling Python-only workflows, consider disabling the CPU sampling option to reduce overhead. |

|

|

1 <= integer <= 2000 |

1000 |

Specify the Python sampling frequency. The minimum supported frequency is 1 Hz. The maximum supported frequency is 2 kHz. This option is ignored if the |

|

|

autograd-nvtx, autograd-shapes-nvtx, functions-trace, none |

none |

Enable automatic annotations of PyTorch functions. |

|

|

functions-trace, none |

none |

Enable automatic annotations of Dask functions. |

|

|

class/event,event, class/event:mode, class:mode,help,none |

none |

Multiple values can be selected, separated by commas only (no spaces). See the

|

|

|

system,process,fast,wide |

system:fast |

Values are separated by a colon ( |

|

|

true,false |

true |

Resolve symbols of captured samples and backtraces. |

|

|

true, false |

false |

Retain the ETW files generated by the trace, and merge and move them to the output directory. |

|

|

<username> |

none |

Run the target application as the specified username. If not specified, the target application will be run by the same user as Nsight Systems. Requires root privileges. Available for Linux targets only. |

|

-s |

|

process-tree, system-wide, none |

process-tree |

Select how to collect CPU IP/backtrace samples. If Note

Note If set to |

|

integer <= 32 |

1 |

The number of CPU IP samples collected for every CPU IP/backtrace sample collected. For example, if set to 4, on the fourth CPU IP sample collected, a backtrace will also be collected. Lower values increase the amount of data collected. Higher values can reduce collection overhead and reduce the number of CPU IP samples dropped. If DWARF backtraces are collected, the default is 4, otherwise the default is 1. This option is not available on Nsight Systems Embedded Platforms Edition or on non-Linux targets. |
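The ratio described above can be made concrete with a Python sketch that lists which CPU IP samples also carry a backtrace. The documented upper bound is 32. The lower bound of 1 and the function names are assumptions for illustration.

```python
def backtrace_sample_indices(num_samples, ratio):
    """1-based positions of CPU IP samples that also collect a backtrace."""
    # Documented range is an integer <= 32; the lower bound of 1 is assumed.
    if not 1 <= ratio <= 32:
        raise ValueError("ratio must be an integer from 1 to 32")
    return [i for i in range(1, num_samples + 1) if i % ratio == 0]


def default_ratio(dwarf_backtraces):
    # Documented defaults: 4 when DWARF backtraces are collected, otherwise 1.
    return 4 if dwarf_backtraces else 1


print(backtrace_sample_indices(10, 4))  # [4, 8]
```

With the default of 1, every CPU IP sample is accompanied by a backtrace. With 4, only every fourth one is, which is why higher values reduce overhead.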

|

|

100 <= integer <= 8000 |

1000 |

Specify the sampling/backtracing frequency. The minimum supported frequency is 100 Hz. The maximum supported frequency is 8000 Hz. This option is supported only on QNX, Linux for Tegra, and Windows targets. |

|

|

integer |

determined dynamically |

The number of CPU Cycle events counted before a CPU instruction pointer (IP) sample is collected. If configured, backtraces may also be collected. The smaller the sampling period, the higher the sampling rate. Note that smaller sampling periods will increase overhead and significantly increase the size of the result file(s). Requires |

|

|

integer |

determined dynamically |

The number of events counted before a CPU instruction pointer (IP) sample is collected. The event used to trigger the collection of a sample is determined dynamically. For example, on Intel-based platforms, it will probably be “Reference Cycles” and on AMD platforms, “CPU Cycles.” If configured, backtraces may also be collected. The smaller the sampling period, the higher the sampling rate. Note that smaller sampling periods will increase overhead and significantly increase the size of the result file(s). This option is available only on Linux targets. |
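The inverse relationship between sampling period and sampling rate is plain arithmetic: one sample is taken every `period` counted events. The sketch below assumes that the counted event occurs at a steady rate, for example a core that stays busy at 3 GHz while cycles are counted. Real event rates vary, so the result is only an approximation.

```python
def approx_samples_per_second(events_per_second, sampling_period):
    """Approximate IP sample rate when one sample is taken every `sampling_period` events."""
    if sampling_period <= 0:
        raise ValueError("sampling period must be a positive integer")
    return events_per_second / sampling_period


# Hypothetical example: a core that stays busy at 3 GHz, counting CPU cycles.
print(approx_samples_per_second(3_000_000_000, 1_000_000))  # 3000.0
print(approx_samples_per_second(3_000_000_000, 500_000))    # 6000.0
```

Halving the period doubles the sample rate, which is where the additional overhead and larger result files come from.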

|

|

timer, sched, perf, cuda |

timer,sched |

Specify backtrace collection trigger. Multiple APIs can be selected, separated by commas only (no spaces). Available on Nsight Systems Embedded Platforms Edition targets only. |

|

|

session identifier |

none |

Launch the application in the indicated session. The option argument must represent a valid session name or ID as reported by |

|

|

[a-Z][0-9,a-Z,spaces] |

profile-<id>-<application> |

Name the session created by the command. Name must start with an alphabetical character followed by printable or space characters. Any |
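The session-name rule above can be expressed as a regular expression. In this Python sketch, "printable" is read as printable ASCII (space through tilde), which is an assumption. The sketch does not model any further restriction that the truncated sentence may describe.

```python
import re

# Assumption: "printable" means printable ASCII, i.e. space (0x20) through tilde (0x7E).
_SESSION_NAME = re.compile(r"[A-Za-z][ -~]*")


def is_valid_session_name(name):
    """A letter first, then only printable or space characters."""
    return _SESSION_NAME.fullmatch(name) is not None


print(is_valid_session_name("profile run 1"), is_valid_session_name("1st-run"))  # True False
```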

|

-w |

|

true, false |

true |

If true, send the target process’s stdout and stderr streams both to the console and to stdout/stderr files that are added to the report file. If false, send the target process’s stdout and stderr streams only to the stdout/stderr files that are added to the report file. |

-t |

|

cuda, nvtx, cuda-hw, cublas, cublas-verbose, cusparse, cusparse-verbose, cudnn, cudla, cudla-verbose, cusolver, cusolver-verbose, opengl, opengl-annotations, openacc, openmp, osrt, mpi, nvvideo, vulkan, vulkan-annotations, dx11, dx11-annotations, dx12, dx12-annotations, openxr, openxr-annotations, oshmem, ucx, wddm, tegra-accelerators, python-gil, gds(experimental), none |

cuda, opengl, nvtx, osrt |

Select the API(s) to be traced. The osrt switch controls the OS runtime libraries tracing. Multiple APIs can be selected, separated by commas only (no spaces). Since OpenACC and cuXXX APIs are tightly linked with CUDA, selecting one of those APIs will automatically enable CUDA tracing. cublas, cudla, cusparse, and cusolver all have XXX-verbose options available. Reflex SDK latency markers will be automatically collected when DX or Vulkan API trace is enabled. See information on

Note: cuDNN is not available on Windows targets.

Note: cuda-hw is not available for hardware before Blackwell, nor for Windows before 11.0. |

|

true, false |

false |

If true, trace any child process after fork and before it calls one of the exec functions. Beware: tracing in this interval relies on undefined behavior and might cause your application to crash or deadlock. Note: This option is only available on Linux target platforms. |

|

|

true, false, individual, batch, none |

individual |

If individual or true, trace each Vulkan workload’s GPU activity individually. If batch, trace Vulkan workloads’ GPU activity in This option is not supported on QNX. |

|

|

primary,all |

all |

If primary, the CLI will wait on the application process termination. If all, the CLI will additionally wait on re-parented processes created by the application. |

|

|

true, false |

true |

If true, collect an additional range of ETW events, including context status, allocations, sync wait and signal events, etc. Note that this switch is applicable only when This option is only supported on Windows targets. |

|

|

true, false |

false |

If true, collect backtraces of WDDM events. Disabling this data collection can reduce overhead for certain target applications. Note that this switch is applicable only when This option is only supported on Windows targets. |

CLI Sessions Command Switch Subcommands

After choosing the sessions command switch, the following subcommands are available. Usage:

nsys [global-options] sessions [subcommand]

Subcommand |

Description |

|---|---|

list |

List all active sessions including ID, name, and state information |

CLI Sessions List Command Switch Options

After choosing the sessions list command switch, the following options are available. Usage:

nsys [global-options] sessions list [options]

Short |

Long |

Possible Parameters |

Default |

Switch Description |

|---|---|---|---|---|

|

<tag> |

none |

Print the help message. The option can take one optional argument that will be used as a tag. If a tag is provided, only options relevant to the tag will be printed. |

|

-p |

|

true, false |

true |

Controls whether a header should appear in the output. |

CLI Shutdown Command Switch Options

After choosing the shutdown command switch, the following options are available. Usage:

nsys [global-options] shutdown [options]

Short |

Long |

Possible Parameters |

Default |

Switch Description |

|---|---|---|---|---|

|

<tag> |

none |

Print the help message. The option can take one optional argument that will be used as a tag. If a tag is provided, only options relevant to the tag will be printed. |

|

|

On Linux: none, sigkill, sigterm, signal number. On Windows: true, false |

On Linux: sigterm. On Windows: true |

Send a signal to the target application’s process group when shutting down the session. |

|

|

session identifier |

none |

Shut down the indicated session. The option argument must represent a valid session name or ID as reported by |

CLI Start Command Switch Options

After choosing the start command switch, the following options are available. Usage:

nsys [global-options] start [options]

Short |

Long |

Possible Parameters |

Default |

Switch Description |

|---|---|---|---|---|

|

none,tegra-accelerators |

none |

Collect workload traces of other accelerators from the hardware engine units. Available in Nsight Systems Embedded Platforms Edition only. |

|

-b |

|

auto,fp,lbr,dwarf,none |

Select the backtrace method to use while sampling. The option |

|

-c |

|

none, cudaProfilerApi, hotkey, nvtx |

none |

When Note Hotkey works for graphic applications only. |

|

none, stop, stop-shutdown, repeat[:N], repeat-shutdown:N |

stop-shutdown |

Specify the desired behavior when a capture range ends. Applicable only when used along with

|

|

|

‘help’ or the end user’s selected events in the format ‘x,y’ |

‘2’ (i.e., Instructions Retired) |

Select the CPU Core events to sample. Use the |

|

|

0,1,2,…,none |

none |

Collect metrics on the CPU core. Multiple values can be selected, separated by commas only (no spaces). Use the Note: Only available on Grace. |

|

|

‘help’ or the end user’s selected events in the format ‘x,y’ |

none |

Select the Uncore CPU Socket events to sample. Use the |

|

|

0,1,2,…,none |

none |

Collect Uncore metrics on the CPU socket. Multiple values can be selected, separated by commas only (no spaces). Use the Note: Only available on Grace. |

|

|

process-tree, system-wide, none |

process-tree |

Trace OS thread scheduling activity. Select Note If the |

|

|

<plugin_name>[,arg1,arg2,…] |

NA |

Use the specified plugin. The option can be specified multiple times to enable multiple plugins. Plugin arguments are separated by commas only (no spaces). Commas can be escaped with a backslash |

|

|

<filepath kernel_symbols.json> |

none |

XHV sampling config file. Available in Nsight Systems Embedded Platforms Edition only. |

|

|

“<name>,<guid>”, or path to JSON file |

none |

Add custom ETW trace provider(s). If you want to specify more attributes than Name and GUID, provide a JSON configuration file as outlined below. This switch can be used multiple times to add multiple providers. Note: Only available for Windows targets. |

|

|

system-wide, none |

none |

Use the |

|

|

Integers from 1 to 20 Hz |

3 |

The sampling frequency used to collect event counts. Minimum event sampling frequency is 1 Hz. Maximum event sampling frequency is 20 Hz. Not available in Nsight Systems Embedded Platforms Edition. |

|

|

arrow, arrowdir, hdf, json, parquetdir, sqlite, text, none |

none |

Create additional output file(s) based on the data collected. This option can be given more than once. Warning: If the collection captures a large amount of data, creating the export file may take several minutes to complete. |

|

|

true, false |

true |

If set to true, any call to |

|

-f |

|

true, false |

false |

If true, overwrite all existing result files with the same output filename (.nsys-rep, .sqlite, .h5, .txt, .json, .arrows, _arwdir, _pqtdir). |

|

Collect ftrace events. Argument should list events to collect as: subsystem1/event1,subsystem2/event2. Requires root. No ftrace events are collected by default. |

|||

|

Skip initial ftrace setup and collect already configured events. Default resets the ftrace configuration. |

|||

|

GPU ID, help, all, none |

none |

Collect GPU Metrics from specified devices. Determine GPU IDs by using |

|

|

integer |

10000 |

Specify GPU Metrics sampling frequency. Minimum supported frequency is 10 (Hz). Maximum supported frequency is 200000 (Hz). |

|

|

alias, file:<file name> |

see description |

Specify metric set for GPU Metrics. The argument must be one of the aliases reported by

|

|

|

help, <id1,id2,…>, all, none |

none |

Analyze video devices. |

|

|

true,false |

false |

Trace GPU context switches. Note that this requires driver r435.17 or later and root permission. |

|

|

<tag> |

none |

Print the help message. The option can take one optional argument that will be used as a tag. If a tag is provided, only options relevant to the tag will be printed. |

|

|

<NIC names> |

none |

A comma-separated list of NIC names. The NICs which |

|

|

<file paths> |

none |

A comma-separated list of paths to existing ibdiagnet db_csv files containing network information data. Nsight Systems will read the network information from these files. Don’t use |

|

|

<directory path> |

none |

Sets the path of a directory into which ibdiagnet network discovery data will be written. Use this option together with the |

|

|

<IB switch GUIDs> |

none |

A comma-separated list of InfiniBand switch GUIDs. Collect InfiniBand switch congestion events from switches identified by the specified GUIDs. This switch can be used multiple times. System scope. Use the |

|

|

<NIC name> |

none |

The name of the NIC (HCA) through which InfiniBand switches will be accessed. By default, the first active NIC will be used. One way to find a NIC’s name is via the |

|

|

1 <= integer <= 100 |

50 |

Percent of InfiniBand switch congestion events to be collected. This option enables reducing the network bandwidth consumed by reporting congestion events. |

|

|

1 < integer <= 1023 |

75 |

High threshold percentage for InfiniBand switch egress port buffer size. Before a packet leaves an InfiniBand switch, it is stored at an egress port buffer. The buffer’s size is checked and if it exceeds the given threshold percentage, a congestion event is reported. The percentage can be greater than 100. |

|

|

<IB switch GUIDs> |

none |

A comma-separated list of InfiniBand switch GUIDs. Collect metrics from the specified InfiniBand switches. This switch can be used multiple times. System scope. |

|

|

<NIC name> |

none |

The name of the NIC (HCA) through which InfiniBand switches will be accessed for performance metrics. By default, the first active NIC will be used. One way to find a NIC’s name is via the |

|

|

true, false |

false |

Trace Interrupt Service Routines (ISRs) and Deferred Procedure Calls (DPCs). Requires administrative privileges. Available only on Windows devices. |

|

|

true, false |

false |

Collect metrics from supported NIC/HCA devices. System scope. Not available on Nsight Systems Embedded Platforms Edition. |

|

|

‘help’ or the end user’s selected events in the format ‘x,y’ |

Select the OS events to sample. Use the |

||

-o |

|

<filename> |

report# |

Set report file name. Any %q{ENV_VAR} pattern in the filename will be substituted with the value of the environment variable. Any %h pattern in the filename will be substituted with the hostname of the system. Any %p pattern in the filename will be substituted with the PID of the target process or the PID of the root process if there is a process tree. Any %% pattern in the filename will be substituted with %. Default is report#{.nsys-rep,.sqlite,.h5,.txt,.arrows,_arwdir,_pqtdir,.json} in the working directory. |
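The report-name substitutions above can be sketched in Python to show how the patterns interact, in particular how `%%` differs from `%h` and `%p`. This is an illustration, not the tool's code. Expanding an unset environment variable to an empty string is an assumption, and the sketch does not model the numbered `report#` default.

```python
import os
import re
import socket

# Patterns documented for the report file name.
_TOKEN = re.compile(r"%q\{([^}]*)\}|%h|%p|%%")


def expand_report_name(pattern, pid, env=None, hostname=None):
    """Expand %q{ENV_VAR}, %h, %p and %% in a report file name (sketch)."""
    env = os.environ if env is None else env
    hostname = socket.gethostname() if hostname is None else hostname

    def substitute(match):
        if match.group(1) is not None:
            # Assumption: an unset variable expands to an empty string.
            return env.get(match.group(1), "")
        return {"%h": hostname, "%p": str(pid), "%%": "%"}[match.group(0)]

    # A single left-to-right scan ensures "%%h" becomes "%h" rather than
    # "%" followed by an expanded host name.
    return _TOKEN.sub(substitute, pattern)


print(expand_report_name("run_%h_%p_%q{USER}", 1234, env={"USER": "alice"}, hostname="node01"))
# run_node01_1234_alice
```

Scanning the name in a single pass, rather than running one replacement after another, keeps a literal `%%h` from being turned into a host name.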

|

main, process-tree, system-wide |

main |

Select which process(es) to trace. Available in Nsight Systems Embedded Platforms Edition only. Nsight Systems Workstation Edition will always trace system-wide in this version of the tool. |

|

|

true, false |

false |

Retain the ETW files generated by the trace, and merge and move them to the output directory. |

|

-s |

|

process-tree, system-wide, xhv, xhv-system-wide, none |

process-tree |

Select how to collect CPU IP/backtrace samples. If Note

Note If set to |

|

integer <= 32 |

1 |

The number of CPU IP samples collected for every CPU IP/backtrace sample collected. For example, if set to 4, on the fourth CPU IP sample collected, a backtrace will also be collected. Lower values increase the amount of data collected. Higher values can reduce collection overhead and reduce the number of CPU IP samples dropped. If DWARF backtraces are collected, the default is 4, otherwise the default is 1. This option is not available on Nsight Systems Embedded Platforms Edition or on non-Linux targets. |

|

|

100 <= integer <= 8000 |

1000 |

Specify the sampling/backtracing frequency. The minimum supported frequency is 100 Hz. The maximum supported frequency is 8000 Hz. This option is supported only on QNX, Linux for Tegra, and Windows targets. |

|

|

integer |

determined dynamically |

The number of CPU Cycle events counted before a CPU instruction pointer (IP) sample is collected. If configured, backtraces may also be collected. The smaller the sampling period, the higher the sampling rate. Note that smaller sampling periods will increase overhead and significantly increase the size of the result file(s). Requires |

|

|

integer |

determined dynamically |

The number of events counted before a CPU instruction pointer (IP) sample is collected. The event used to trigger the collection of a sample is determined dynamically. For example, on Intel-based platforms, it will probably be “Reference Cycles” and on AMD platforms, “CPU Cycles.” If configured, backtraces may also be collected. The smaller the sampling period, the higher the sampling rate. Note that smaller sampling periods will increase overhead and significantly increase the size of the result file(s). This option is available only on Linux targets. |

|

|

timer, sched, perf, cuda |

timer,sched |

Specify backtrace collection trigger. Multiple APIs can be selected, separated by commas only (no spaces). Available on Nsight Systems Embedded Platforms Edition targets only. |

|

|

[a-Z][0-9,a-Z,spaces] |

profile-<id>-<application> |

Name the session created by the command. Name must start with an alphabetical character followed by printable or space characters. Any |

|

-w |

|

true, false |

true |

If true, send the target process’s stdout and stderr streams both to the console and to stdout/stderr files that are added to the report file. If false, send the target process’s stdout and stderr streams only to the stdout/stderr files that are added to the report file. |

|

true,false |

false |

Collect SoC Metrics. Available in Nsight Systems Embedded Platforms Edition only. |

|

|

integer |

10000 |

Specify SoC Metrics sampling frequency. Minimum supported frequency is ‘100’ (Hz). Maximum supported frequency is ‘1000000’ (Hz). Available in Nsight Systems Embedded Platforms Edition only. |

|

|

alias, file:<file name> |

see description |

Specify metric set for SoC Metrics. The argument must be one of the aliases reported by

|

|

|

true, false |

false |

Generate summary statistics after the collection. WARNING: When set to true, an SQLite database will be created after the collection. If the collection captures a large amount of data, creating the database file may take several minutes to complete. |

|

-x |

|

true, false |

true |

If true, stop collecting automatically when the launched process has exited or when the duration expires, whichever occurs first. If false, duration must be set and the collection stops only when the duration expires. Nsight Systems does not officially support runs longer than 5 minutes. |

|

process-tree, pid-namespace, none |

none |

Collect system calls. The value defines the collection scope: |

|

|

true, false |

false |

Collect vsync events. If collection of vsync events is enabled, display/display_scanline ftrace events will also be captured. Available in Nsight Systems Embedded Platforms Edition only. |

|

|

<filepath pct.json> |

none |

Collect hypervisor trace. Available in Nsight Systems Embedded Platforms Edition only. |

|

|

all, none, core, sched, irq, trap |

all |

Available in Nsight Systems Embedded Platforms Edition only. |

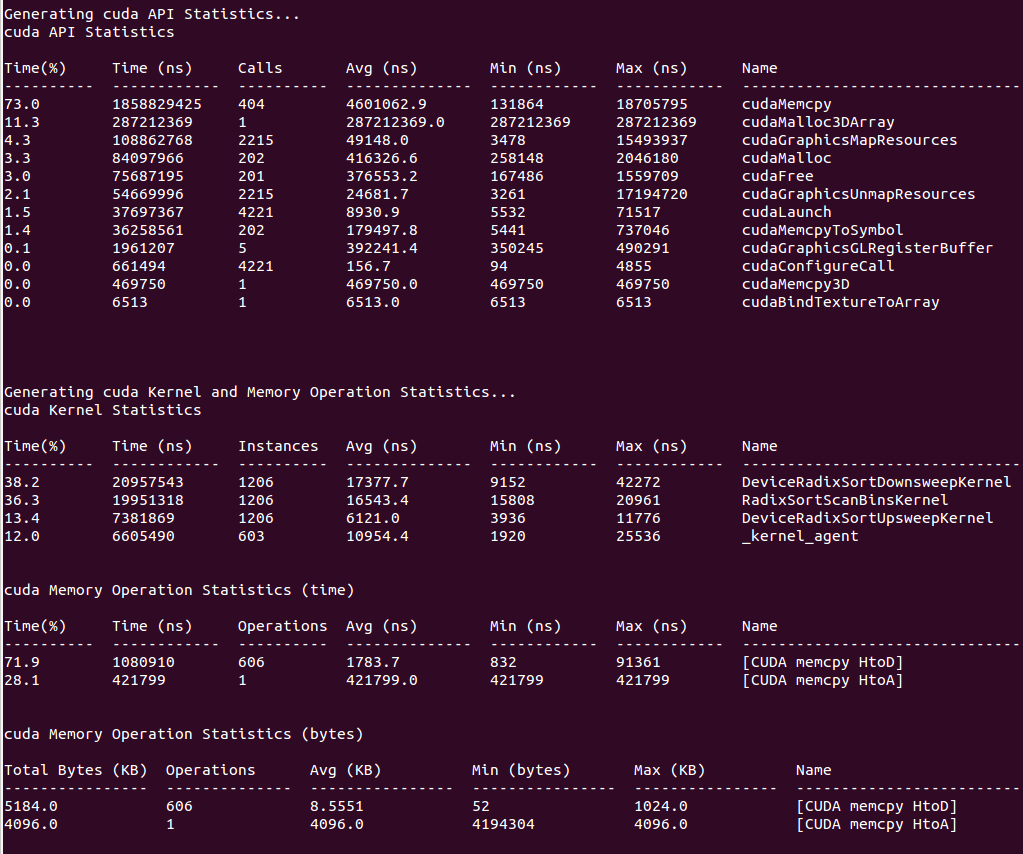

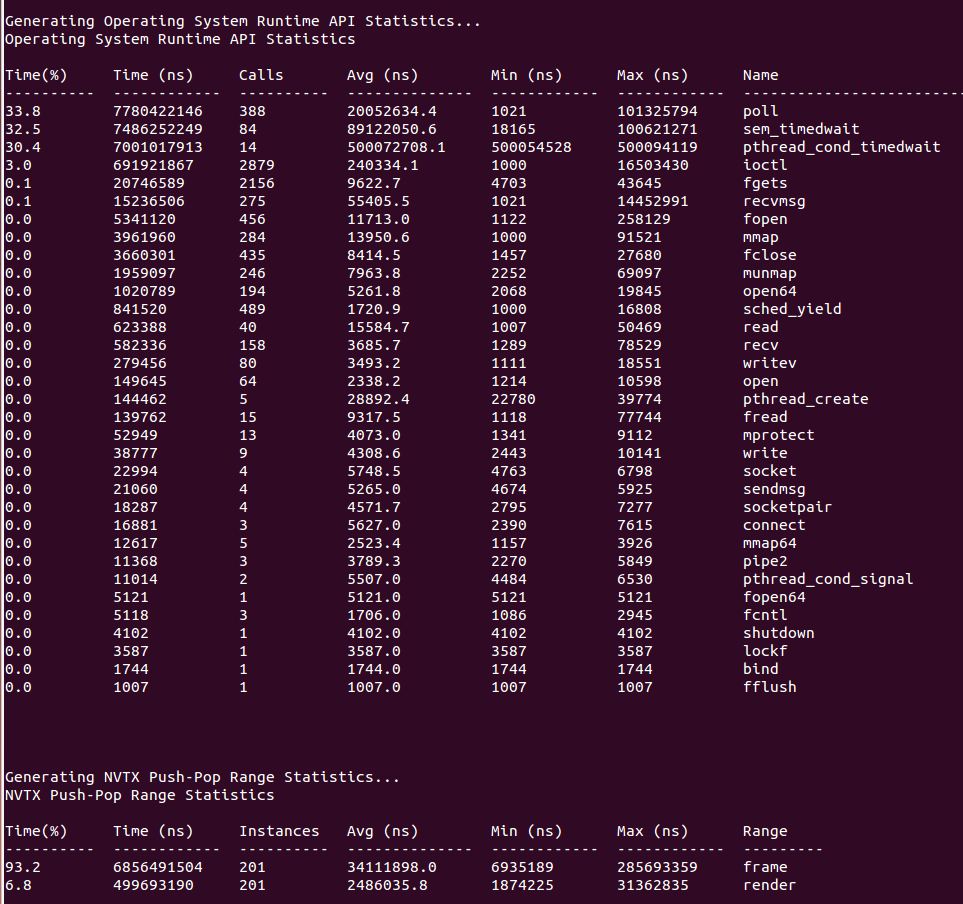

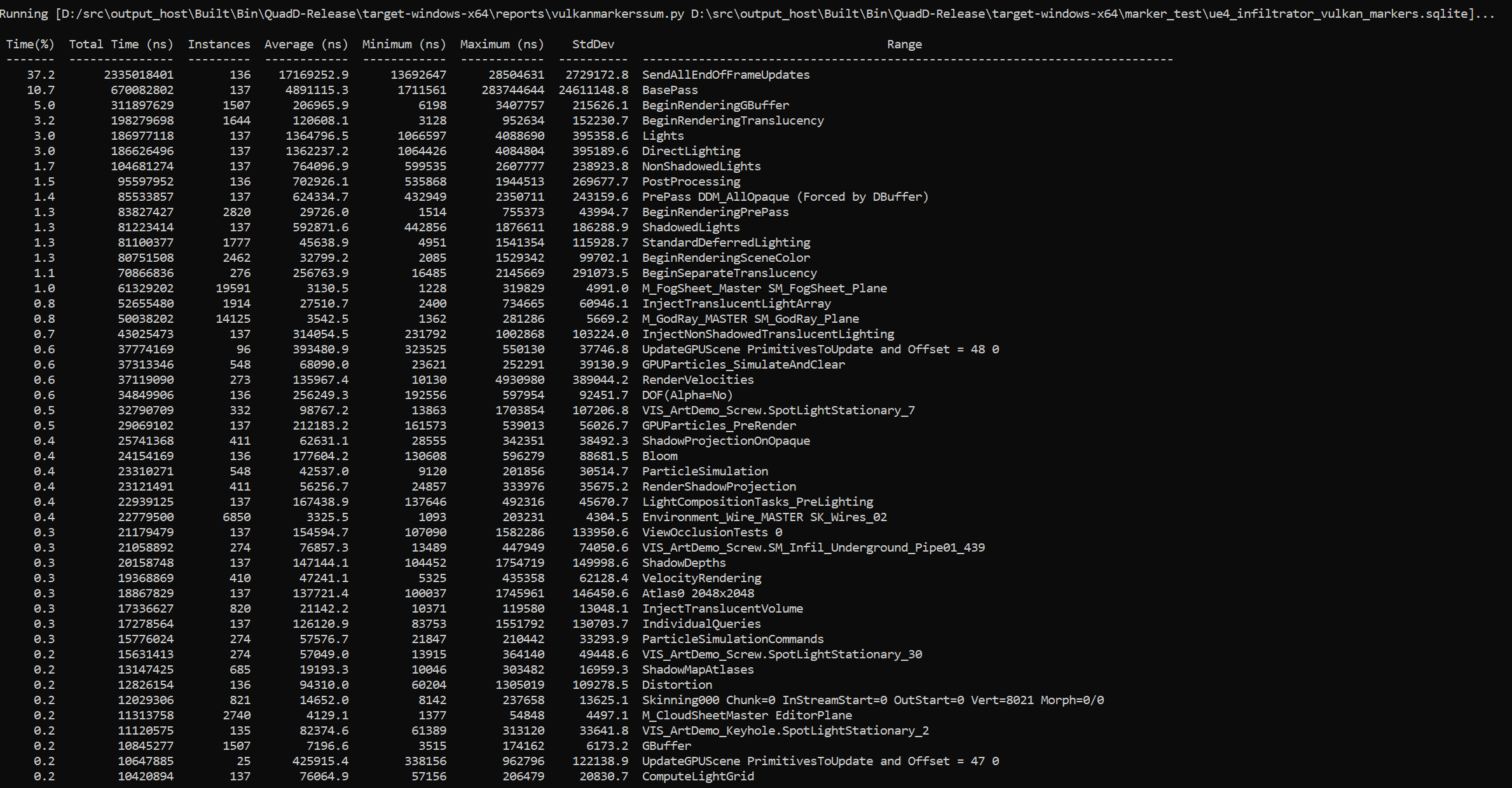

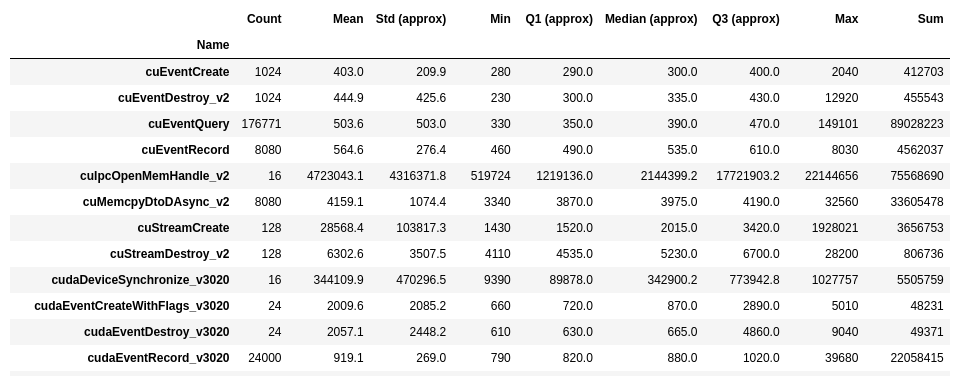

CLI Stats Command Switch Options

The nsys stats command generates a series of summary or trace reports.

These reports can be output to the console or to individual files, or piped to external processes. Reports can be rendered in a variety of output formats, from human-readable columns of text to formats more suited to data exchange, such as CSV.

Reports are generated from an SQLite export of a .nsys-rep file. If a .nsys-rep file is specified, Nsight Systems will look for an accompanying SQLite file and use it. If no SQLite file exists, one will be exported.

Individual reports are generated by calling out to scripts that read data from the SQLite file and return their report data in CSV format. Nsight Systems ingests this data and formats it as requested, then displays the data on the console, writes it to a file, or pipes it to an external process. Adding a new report is as simple as writing a script that can read the SQLite file and generate the required CSV output; see the shipped scripts for examples. Both reports and formatters may take arguments to tweak their processing. For details on the shipped scripts and formatters, see the Report Scripts topic.
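The core idea of a report script, reading rows from SQLite and emitting CSV, fits in a few lines of Python. This is a minimal sketch of that idea only: it does not follow the interface nsys actually uses to invoke report scripts, and the `events` table, its columns, and the function names are hypothetical, not the schema of a real Nsight Systems export.

```python
import csv
import io
import sqlite3


def summarize_events(conn: sqlite3.Connection) -> str:
    """Hypothetical report: per-name count and total duration, returned as CSV text.

    The `events` table and its columns are invented for this sketch; they are
    not the schema of a real Nsight Systems SQLite export.
    """
    rows = conn.execute(
        "SELECT name, COUNT(*), SUM(end_ns - start_ns) "
        "FROM events GROUP BY name ORDER BY name"
    ).fetchall()
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["Name", "Count", "Total Time (ns)"])
    writer.writerows(rows)
    return buf.getvalue()


def demo_connection() -> sqlite3.Connection:
    """Build an in-memory database with a few made-up events."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE events (name TEXT, start_ns INTEGER, end_ns INTEGER)")
    conn.executemany(
        "INSERT INTO events VALUES (?, ?, ?)",
        [("kernel_a", 0, 10), ("kernel_a", 20, 25), ("kernel_b", 5, 8)],
    )
    return conn


if __name__ == "__main__":
    # A real script would open the exported file instead, e.g. sqlite3.connect(path).
    print(summarize_events(demo_connection()), end="")
```

Keeping the script's job limited to producing CSV is what lets the formatting and output stages (console, file, or external command) stay independent of any individual report.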

Reports are processed using a three-tuple that consists of:

The requested report (and any arguments),

The presentation format (and any arguments), and

The output (filename, console, or external process).

The first report specified uses the first format specified, and is presented via the first output specified. The second report uses the second format for the second output, and so forth. If more reports are specified than formats or outputs, the format and/or output list is expanded to match the number of provided reports by repeating the last specified element of the list (or the default, if nothing was specified).
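The pairing rule above can be modeled as positional matching with padding. The Python sketch below also resolves the special format name “.” using the console/file rule from the format option's description. Assumptions: the report names are placeholders, the default output is taken to be “-” (the console), and surplus formats or outputs are simply ignored, since this section does not say what nsys does with them.

```python
from typing import List, Sequence, Tuple

DEFAULT_FORMAT = "."  # "." means "the default format for the given output"
DEFAULT_OUTPUT = "-"  # assumption for this sketch: console when no output is given


def pad_to(values: Sequence[str], n: int, default: str) -> List[str]:
    """Extend values to length n by repeating its last element (or default if empty)."""
    padded = list(values)
    filler = padded[-1] if padded else default
    return padded + [filler] * max(0, n - len(padded))


def resolve_format(fmt: str, output: str) -> str:
    """Replace "." with column for the console and csv for files and commands."""
    if fmt != ".":
        return fmt
    return "column" if output == "-" else "csv"


def plan_stats(reports: Sequence[str], formats: Sequence[str] = (),
               outputs: Sequence[str] = ()) -> List[Tuple[str, str, str]]:
    """Pair each report with a format and an output, positionally."""
    n = len(reports)
    fmts = pad_to(formats, n, DEFAULT_FORMAT)
    outs = pad_to(outputs, n, DEFAULT_OUTPUT)
    # zip stops at the shortest list, so surplus formats/outputs are ignored here.
    return [(r, resolve_format(f, o), o) for r, f, o in zip(reports, fmts, outs)]


if __name__ == "__main__":
    for triple in plan_stats(["rep_a", "rep_b", "rep_c"], ["."], ["-", "@grep x"]):
        print(triple)
```

Here the single format “.” is repeated for all three reports, and the second output is repeated for the third report, so the first report is printed as columns on the console while the other two are piped as CSV.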

nsys stats is a powerful command that can handle complex argument structures; see the Example Stats Command Sequences topic below.

After choosing the stats command switch, the following options are available. Usage:

nsys [global-options] stats [options] [input-file]

| Short | Long | Possible Parameters | Default | Switch Description |
|---|---|---|---|---|
| | | <tag> | none | Print the help message. The option can take one optional argument that will be used as a tag. If a tag is provided, only options relevant to the tag will be printed. |
| -f | | column, table, csv, tsv, json, hdoc, htable, . | | Specify the output format. The special name “.” indicates the default format for the given output. The default format for the console is column, while files and process outputs default to csv. This option may be used multiple times. Multiple formats may also be specified using a comma-separated list ( |
| | | true, false | false | Force a re-export of the SQLite file from the specified .nsys-rep file, even if an SQLite file already exists. |
| | | true, false | false | Overwrite any existing report file(s). |
| | | <format_name>, ALL, [none] | none | With no argument, give a summary of the available output formats. If a format name is given, a more detailed explanation of that format is displayed. If |
| | | <report_name>, ALL, [none] | none | With no argument, list a summary of the available summary and trace reports. If a report name is given, a more detailed explanation of the report is displayed. If |
| -o | | -, @<command>, <basename>, . | | Specify the output mechanism. There are three output mechanisms: print to the console, output to a file, or output to a command. This option may be used multiple times. Multiple outputs may also be specified using a comma-separated list. If the given output name is “-”, the output will be displayed on the console. If the output name starts with “@”, the output designates a command to run: nsys will execute that command and pipe the analysis output into it. Any other output is assumed to be the base path and name for a file. If a file basename is given, the filename used will be: |
| -q | | | | Do not display verbose messages; only display errors. |
| -r | | See Report Scripts | | Specify the report(s) to generate, including any arguments. This option may be used multiple times. Multiple reports may also be specified using a comma-separated list ( |
| | | <path> | | Add a directory to the path used to find report scripts. This is usually only needed if you have one or more directories with personal scripts. This option may be used multiple times. Each use adds a new directory to the end of the path. A search path can also be defined using the environment variable |
| | | <file.sqlite> | | Specify the SQLite export filename. If this file exists, it will be used. If this file doesn’t exist (or if |
| | | nsec, nanoseconds, usec, microseconds, msec, milliseconds, seconds | nanoseconds | Set the basic unit of time. The argument is matched using longest-prefix matching, so it is not necessary to write a whole word as the switch argument. It is similar to passing a |
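One way to read the prefix-matching rule for the time-unit option is that any prefix selecting exactly one unit is accepted. The Python sketch below implements that interpretation; it is an assumption about the behavior, not nsys's own code, and how nsys actually treats an ambiguous prefix such as `mi` (microseconds or milliseconds) is not stated in this section.

```python
# Accepted spellings from the table, each mapped to a canonical unit.
UNIT_NAMES = {
    "nsec": "ns", "nanoseconds": "ns",
    "usec": "us", "microseconds": "us",
    "msec": "ms", "milliseconds": "ms",
    "seconds": "s",
}


def resolve_time_unit(arg: str) -> str:
    """Resolve an abbreviated time-unit argument to a canonical unit."""
    if arg in UNIT_NAMES:  # an exact spelling always wins
        return UNIT_NAMES[arg]
    units = {unit for name, unit in UNIT_NAMES.items() if name.startswith(arg)}
    if len(units) == 1:
        return units.pop()
    if not units:
        raise ValueError(f"unknown time unit: {arg!r}")
    raise ValueError(f"ambiguous time unit {arg!r}: matches {sorted(units)}")


if __name__ == "__main__":
    for arg in ("n", "u", "mic", "ms", "sec"):
        print(arg, "->", resolve_time_unit(arg))
```

Matching against canonical units rather than raw spellings means that a prefix such as `n`, which matches both `nsec` and `nanoseconds`, is still unambiguous because both spellings mean the same unit.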

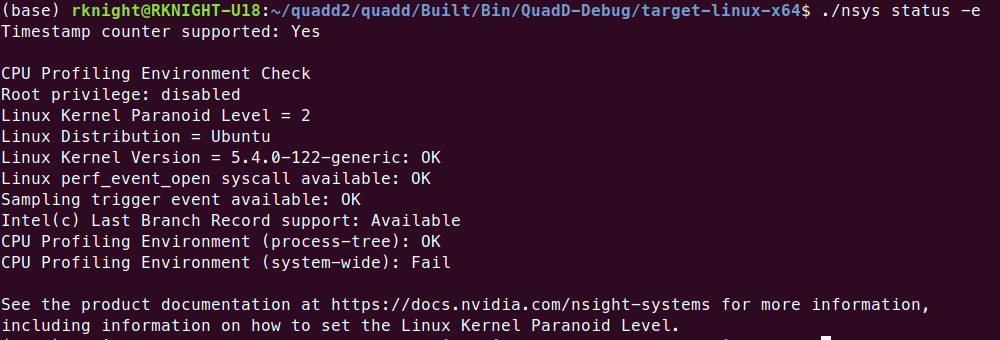

CLI Status Command Switch Options

The nsys status command returns the current state of the CLI. After choosing the status command switch, the following options are available. Usage:

nsys [global-options] status [options]

| Short | Long | Possible Parameters | Default | Switch Description |
|---|---|---|---|---|
| | | | | Prints information for all the available profiling environments. |
| -e | | | | Returns information about the system regarding suitability of the profiling environment. |
| | | <tag> | none | Print the help message. The option can take one optional argument that will be used as a tag. If a tag is provided, only options relevant to the tag will be printed. |
| -n | | | | Returns information about the system regarding suitability of the network profiling environment. |
| | | session identifier | none | Print the status of the indicated session. The option argument must represent a valid session name or ID as reported by |

CLI Stop Command Switch Options

After choosing the stop command switch, the following options are available. Usage:

nsys [global-options] stop [options]

| Short | Long | Possible Parameters | Default | Switch Description |
|---|---|---|---|---|
| | | <tag> | none | Print the help message. The option can take one optional argument that will be used as a tag. If a tag is provided, only options relevant to the tag will be printed. |
| | | time in seconds | 0 | Indicate how many seconds of data collected before the stop command should be retained in the result file. Zero is a special setting that retains all of the data. |
| | | session identifier | none | Stop the indicated session. The option argument must represent a valid session name or ID as reported by |
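The retention time accepted by the stop command can be pictured as a time-window filter over the collected data. The Python sketch below models that idea with a hypothetical `(timestamp, payload)` record; whether nsys trims at exactly this boundary, or at a coarser granularity, is not stated here, so treat it as a model rather than the tool's behavior.

```python
from typing import List, Tuple

# Hypothetical record: (timestamp in seconds since collection start, payload).
Event = Tuple[float, str]


def retain_before_stop(events: List[Event], stop_time: float,
                       seconds: float) -> List[Event]:
    """Keep only events from the last `seconds` before stop_time; 0 keeps everything."""
    if seconds < 0:
        raise ValueError("seconds must be non-negative")
    if seconds == 0:  # special setting: retain all collected data
        return list(events)
    cutoff = stop_time - seconds
    return [e for e in events if cutoff <= e[0] <= stop_time]


if __name__ == "__main__":
    collected = [(1.0, "a"), (5.0, "b"), (9.0, "c")]
    print(retain_before_stop(collected, stop_time=10.0, seconds=3))
    print(retain_before_stop(collected, stop_time=10.0, seconds=0))
```

Treating 0 as "keep everything" rather than "keep nothing" mirrors the special meaning the table gives to the default value.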

Example Single Command Lines

Version Information

nsys -v

Effect: Prints tool version information to the screen.

Run with elevated privilege

sudo nsys profile <app>

Effect: The Nsight Systems CLI (and the target application) will run with elevated privilege. This is necessary for some features, such as FTrace or system-wide CPU sampling. If you don’t want the target application to be elevated, use the --run-as option.

Default analysis run

nsys profile <application> [application-arguments]

Effect: Launch the application using the given arguments. Start collecting immediately and end collection when the application stops. Trace CUDA, OpenGL, NVTX, and OS runtime library APIs. Collect CPU sampling information and thread scheduling information. With Nsight Systems Embedded Platforms Edition, only the single launched process is analyzed; with Nsight Systems Workstation Edition, the whole process tree is traced. Generate the report#.nsys-rep file in the default location, incrementing the report number if needed to avoid overwriting any existing output files.
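The report-numbering behavior can be sketched as a search for the first unused file name. This Python sketch assumes numbering starts at 1 and takes the lowest unused number; nsys's exact rule (for example, whether it fills gaps) is not specified here, and `next_report_path` is a hypothetical helper, not part of Nsight Systems.

```python
import tempfile
from pathlib import Path


def next_report_path(directory: Path, stem: str = "report",
                     suffix: str = ".nsys-rep") -> Path:
    """Return the first reportN.nsys-rep path in directory that does not exist yet."""
    n = 1
    while (directory / f"{stem}{n}{suffix}").exists():
        n += 1
    return directory / f"{stem}{n}{suffix}"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        d = Path(tmp)
        print(next_report_path(d).name)  # report1.nsys-rep
        (d / "report1.nsys-rep").touch()
        print(next_report_path(d).name)  # report2.nsys-rep
```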

Limited trace only run

nsys profile --trace=cuda,nvtx -d 20 --sample=none --cpuctxsw=none -o my_test <application> [application-arguments]

Effect: Launch the application using the given arguments. Start collecting immediately and end collection after 20 seconds or when the application ends. Trace CUDA and NVTX APIs. Do not collect CPU sampling information or thread scheduling information. Profile any child processes. Generate the output file as my_test.nsys-rep in the current working directory.
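The "after 20 seconds or when the application ends" behavior above follows from the stop-on-exit rule in the -x row of the profile options table. The Python sketch below models that rule; `collection_stop_time` is a hypothetical helper, and a return value of None only means that neither condition applies, since other ways of ending a session are not modeled.

```python
from typing import Optional


def collection_stop_time(app_exit: Optional[float], duration: Optional[float],
                         stop_on_exit: bool = True) -> Optional[float]:
    """Seconds after start at which collection ends, or None if neither event occurs."""
    if not stop_on_exit:
        if duration is None:
            raise ValueError("a duration must be set when stop-on-exit is false")
        return duration  # only the duration ends the collection
    ends = [t for t in (app_exit, duration) if t is not None]
    return min(ends) if ends else None  # whichever happens first


if __name__ == "__main__":
    print(collection_stop_time(app_exit=35.0, duration=20.0))  # 20.0: duration expires first
    print(collection_stop_time(app_exit=12.0, duration=20.0))  # 12.0: application ends first
```

With stop-on-exit disabled, an application that exits after 5 seconds under `-d 20` would still be collected until the 20-second mark.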