Red Hat AI Factory with NVIDIA Overview#

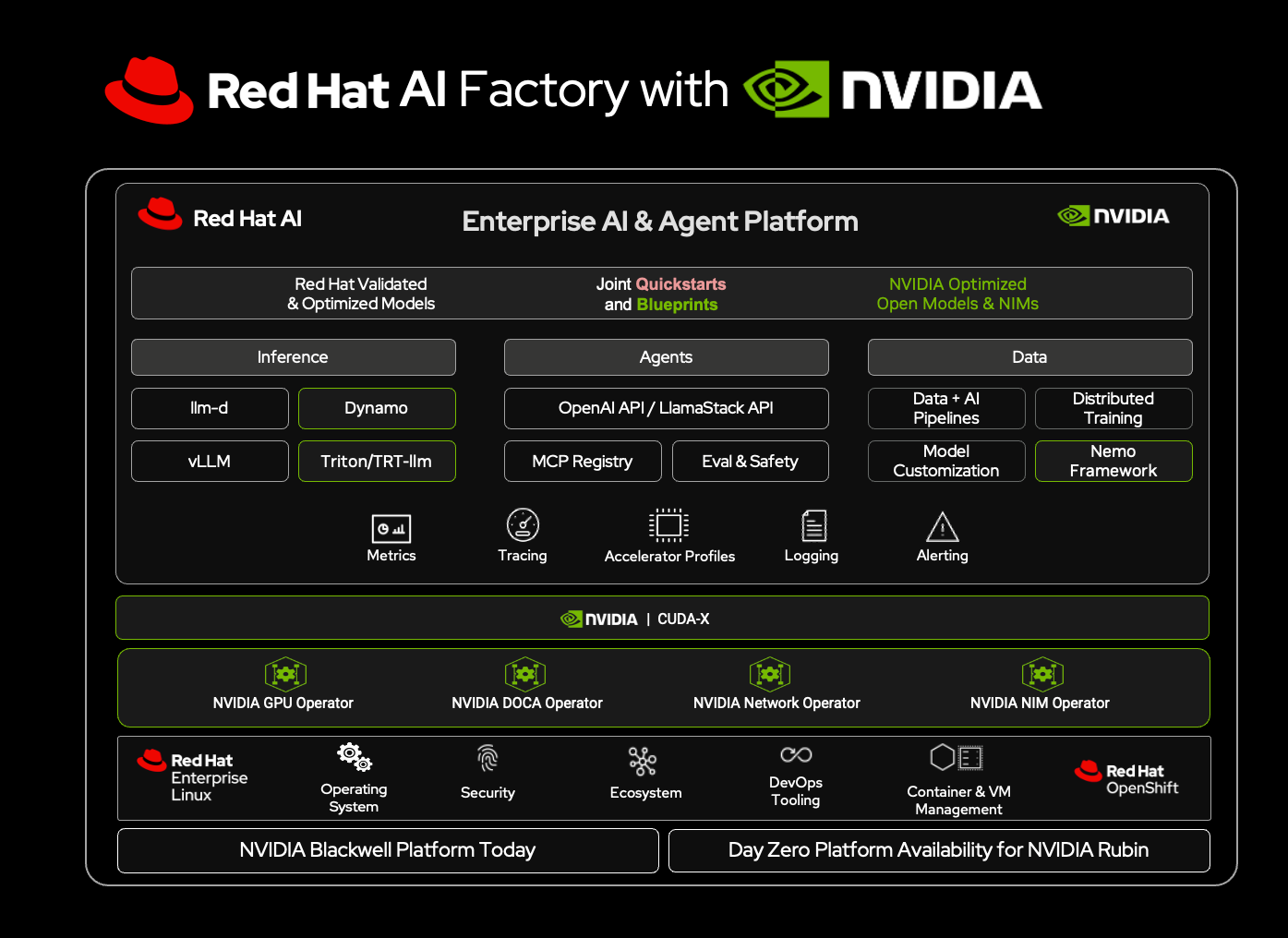

The Red Hat AI Factory with NVIDIA is a joint, co-engineered solution that integrates the full-stack capabilities of NVIDIA AI Enterprise with Red Hat AI Enterprise to transform ad hoc AI development into a repeatable, scalable, and safeguarded “factory” optimized for NVIDIA architectures. By providing a hardened foundation for the entire lifecycle, from data science and fine-tuning to high-throughput inference using distributed frameworks like llm-d and NVIDIA Dynamo. This unified platform delivers on three critical fronts: it accelerates time-to-value via simplified agentic workflows, increases operational efficiency by maximizing infrastructure utilization to reduce costs, and is built for the enterprise to ensure security and compliance across hybrid clouds. This guide provides the technical framework for deploying this architecture to meet production-grade performance standards.

The solution provides developers and AI engineers with purpose-built environments to move from concept to production faster:

Streamlined Agentic AI Innovation: Accelerate the delivery of AI agent developments using validated, co-developed NVIDIA Blueprints and Red Hat AI quickstarts and a unified API layer based on Llama Stack. Developers can also use the NVIDIA NeMo Agent Toolkit, an open source library for building, profiling, and optimizing AI agents across popular frameworks including LangChain, LlamaIndex, CrewAI and more. It includes ready-to-use applications, tool integrations, and Model Context Protocol (MCP) support—providing server acts as a standard interface translator between LLMs and external tools, simplifying the creation of complex, autonomous workflows.

Rapid Customization with Private Data: Bridge the gap between models and enterprise data using Retrieval-Augmented Generation (RAG) and Retrieval-Augmented Fine-Tuning (RAFT) patterns. NVIDIA NeMo enables model fine-tuning and evaluation and the continuous optimization and refinement of AI agents throughout their lifecycle at enterprise scale. The solution also includes open-source tooling for synthetic data generation and full-weight training to reduce the cost and time required to tune models, enabling teams to align models to specific domains without massive manual annotation effort.

Instant Access to Open Validated Models: Jumpstart development with NVIDIA NIM, prebuilt, optimized inference microservices for rapidly deploying the latest AI models, and the Red Hat AI repository, offering quick access to optimized, SafeTensor-weight models. This includes the Granite model family of open-source models that are indemnified and pre-configured for immediate use, and domain-specific AI models such as NVIDIA Nemotron for agentic AI and Cosmos for physical AI.

Purpose-Built Developer Environments: Red Hat AI’s Gen AI Studio and AI Hub provide dedicated dashboards for AI engineers to prototype and tune hyperparameters, while platform engineers manage assets, ensuring a frictionless path from experimentation to deployment.

Increase Operational Efficiency

Maximize infrastructure utilization and reduce inference costs through advanced model compression, distributed serving, and unified orchestration.

Optimized High-Performance Inference: The platform combines the Red Hat AI Inference Server (powered by vLLM) with NVIDIA NIM inference microservices (powered by vLLM, TensorRT-LLM, or SGLang) to maximize throughput and minimize latency. The LLM Compressor allows users to compress models while preserving accuracy, significantly lowering compute requirements.

Scalable Distributed Serving (llm-d): For large-scale workloads, the joint solution provides llm-d, an innovative distributed inference framework. Augmented by NVIDIA Dynamo and NIXL for high-speed GPU-to-GPU communication, llm-d disaggregates the inference pipeline, allowing enterprises to meet strict Service Level Objectives (SLOs) even with the largest models.

Intelligent Resource Management: The solution optimizes GPU utilization through advanced quota management and workload scheduling, creating a highly efficient internal Models-as-a-Service (MaaS) environment. NVIDIA BlueField further improves efficiency by running core networking, data, and security services on a dedicated processor, freeing CPU cores for AI workloads and improving overall performance per watt.

Mitigate Risk

Ensure enterprise security, compliance, and operational stability across hybrid cloud environments.

Hardened Security & Governance: Protect sensitive data with a platform rooted in Red Hat’s hardened OS features (SELinux, FIPS compliance) and NVIDIA’s production-branch and STIG-hardened containers. The solution supports air-gapped deployments, ensuring private data remains secure within your perimeter.

Zero-Trust, Real-Time Workload Protection: Built on defense-in-depth with Red Hat OpenShift and NVIDIA BlueField-powered infrastructure security, the platform enforces strict workload isolation and a distributed security model across distributed environments. With NVIDIA DOCA Argus, it delivers accelerated, real-time threat detection, purpose-built for AI workloads.

Trustworthy AI Operations: Deploy with confidence using built-in MLOps pipelines and observability dashboards that monitor for data drift and bias. Guardrails evaluate inputs and outputs in real-time to ensure reliability, while indemnified Granite models reduce legal exposure.

Hybrid Cloud Consistency: A flexible architecture that offers a consistent experience across bare metal, virtual machines, and public clouds. This ensures that governance policies, security standards, and operational workflows remain uniform regardless of where the workload resides.