Networking#

DGX Grace Blackwell rack-scale systems use a hybrid networking model for high-performance communication both within a rack and across multiple racks. This model combines the bandwidth and memory coherence of NVLink with the scalability of InfiniBand and Ethernet.

NVLink forms the ultra-fast, memory-coherent scale-up fabric within each rack, making 72 GPUs act as one.

InfiniBand provides the high-bandwidth, low-latency scale-out compute fabric between racks, enabling massive multi-node AI training clusters.

Ethernet handles storage, management (in-band and out-of-band), and external connectivity, ensuring all components are integrated and manageable.

NVLink Networking#

NVIDIA NVLink is a high-speed, low-latency interconnect designed to overcome PCIe bandwidth limitations in GPU-to-GPU and GPU-to-CPU communication, which is crucial for HPC and AI platforms. Its key features include very high bandwidth (for example, 1.8 TB/s bidirectional per GPU with NVLink 5.0) and low latency for direct GPU-to-GPU data transfer. NVLink enables unified memory access across GPUs, which simplifies programming. It scales through NVSwitch chips, which act as non-blocking switches between servers and create high-bandwidth fabrics.
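
As a rough worked example of what the per-GPU figure implies at rack scale, the following sketch multiplies the 1.8 TB/s bidirectional NVLink bandwidth by the 72 GPUs in a rack. The variable names are illustrative, and the results are theoretical peaks derived from the numbers quoted in this section, not measured values.

```python
# Back-of-the-envelope NVLink bandwidth for one rack.
# Inputs are the figures quoted in this section; names are illustrative only.
GPUS_PER_RACK = 72
NVLINK_BIDIR_TBPS_PER_GPU = 1.8  # TB/s, bidirectional, NVLink 5.0

# Sum of the per-GPU bidirectional bandwidth across the rack.
aggregate_tbps = GPUS_PER_RACK * NVLINK_BIDIR_TBPS_PER_GPU

# "Bidirectional" counts both directions, so each direction gets half.
per_direction_tbps = NVLINK_BIDIR_TBPS_PER_GPU / 2

print(f"Aggregate per rack: {aggregate_tbps:.1f} TB/s")
print(f"Per GPU, per direction: {per_direction_tbps:.1f} TB/s")
```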

The NVLink fabric connections are provided by the connectors at the back of the trays. These connectors attach to the cable cartridges that link all compute and NVLink switch trays up and down the back of the system. The fabric is managed by NVIDIA Fabric Manager (or NVLSM), which is integrated with the GPU drivers and the CUDA software stack (for example, NCCL), configures the NVSwitch topology, and monitors performance. NVSwitch also incorporates in-network computing (SHARP), which accelerates collective operations in distributed AI training by performing computations within the network.

For more detailed information on NVIDIA NMX, refer to the NVIDIA NMX Documentation.

Network Overview#

A DGX GB rack installation uses several discrete networks:

1. externalnet: This network provides external communication for the headnode to the enterprise network or to the Internet if allowed.
2. internalnet: This network provides provisioning capabilities as well as system management to the control plane nodes.
3. dgxnet: This network is used to provision and manage the compute trays.
4. ipminet: This network provides Base Command Manager and the headnode access to the out-of-band/BMC interfaces of all the components in the rack, including the compute trays, NVLink switch trays, the power shelves, the top-of-rack switches, and the control plane nodes.
5. computenet: This network is used for RDMA communication between nodes across racks (east/west traffic).
6. storagenet: This network provides each node access to storage.
7. failovernet: This network is only used by the headnodes when configured in a redundant failover configuration.

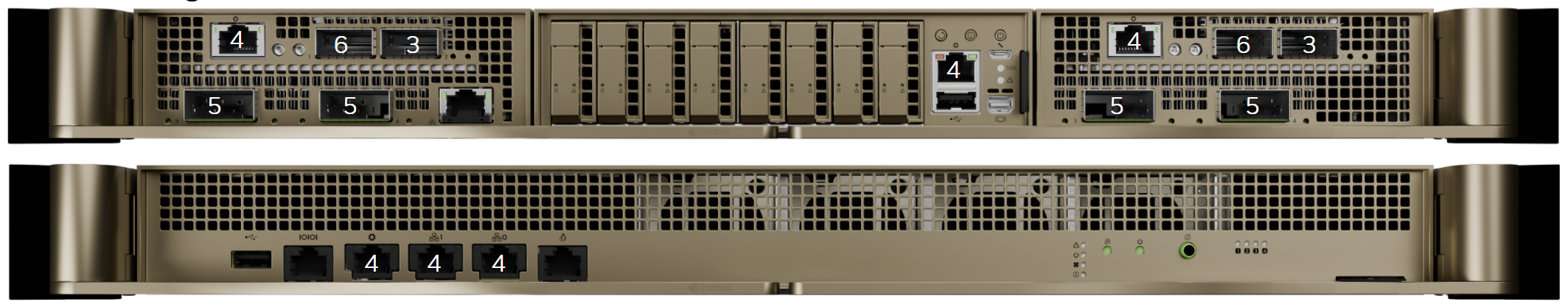

The following images identify the ports on the compute and switch trays that connect to these networks. The number on each port in the image corresponds to the number of the network in the list above.

DGX GB200 Compute and Switch Trays - Identification of network port locations#

DGX GB300 Compute and Switch Trays - Identification of network port locations#

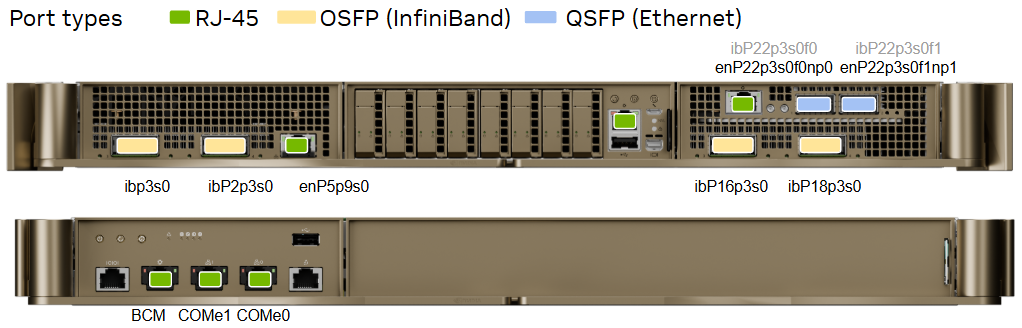

The following image describes the port types available in the compute tray and how DGX OS identifies each port. Port names in black are the default configuration. Because the BlueField-3 card ports can be switched to InfiniBand mode, the names those ports take in InfiniBand mode are shown in light gray.

Compute Tray Network Ports#

The following table describes the function and type of each of the compute tray network ports.

| Port | Port Type | Network / switch |
|---|---|---|
| ConnectX-7 port | OSFP | computenet (InfiniBand) |
| BlueField-3 left port | QSFP | storagenet (Ethernet) |
| BlueField-3 right port | QSFP | dgxnet (Ethernet) |
| BlueField-3 RJ45 port | RJ45 | ipminet (Ethernet) |
| 1 Gb Ethernet port (LAN) | RJ45 | Not connected |
| 1 Gb Ethernet port (BMC) | RJ45 | ipminet (Ethernet) |
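
The port-to-network assignments in the table can also be captured as data, for example to generate a cabling checklist. The following is a minimal sketch: the structure and the `ports_by_network` helper are hypothetical, and the port labels are transcribed from the table with light normalization.

```python
from collections import defaultdict

# Compute tray ports as (label, port type, network), taken from the table above.
# None marks a port that is not connected to any network.
COMPUTE_TRAY_PORTS = [
    ("ConnectX-7 port", "OSFP", "computenet"),
    ("BlueField-3 left port", "QSFP", "storagenet"),
    ("BlueField-3 right port", "QSFP", "dgxnet"),
    ("BlueField-3 RJ45 port", "RJ45", "ipminet"),
    ("1 Gb Ethernet port (LAN)", "RJ45", None),
    ("1 Gb Ethernet port (BMC)", "RJ45", "ipminet"),
]


def ports_by_network(ports):
    """Group port labels by the network they attach to, skipping unconnected ports."""
    grouped = defaultdict(list)
    for label, _port_type, network in ports:
        if network is not None:
            grouped[network].append(label)
    return dict(grouped)


if __name__ == "__main__":
    for network, labels in ports_by_network(COMPUTE_TRAY_PORTS).items():
        print(f"{network}: {', '.join(labels)}")
```

Grouping by network makes it easy to see that two ports on each compute tray land on ipminet, while the LAN port is left uncabled.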

Network Interface Names#

The following table describes the network interface names for each of the ports on the compute tray.

| Port | PCIe Bus | Interface name | RDMA |
|---|---|---|---|
| Left Bay OSFP P3 | 00:03:00.0 | ibp3s0 | mlx5_0 |
| Left Bay OSFP P4 | 02:03:00.0 | ibp2p3s0 | mlx5_1 |
| Right Bay OSFP P3 | 10:03:00.0 | ibp16p3s0 | mlx5_4 |
| Right Bay OSFP P4 | 12:03:00.0 | ibp11p3s0 | mlx5_5 |
| Left Bay BF3 P1 | 06:03:00.0 | enP6p3s0f0np0 | mlx5_2 |
| Left Bay BF3 P2 | 06:03:00.1 | enP6p3s0f1np1 | mlx5_3 |
| Right Bay BF3 P1 | 16:03:00.0 | enP22p3s0f0np0 | mlx5_6 |
| Right Bay BF3 P2 | 16:03:00.1 | enP22p3s0f1np1 | mlx5_7 |
| LAN | | enP5p9s0 | |

| Port | PCIe Bus | Interface name | RDMA |
|---|---|---|---|
| Left Bay OSFP P3 | 00:03:00.0 | ibp3s0 | mlx5_0 |
| Left Bay OSFP P4 | 02:03:00.0 | ibP2p3s0 | mlx5_1 |
| Right Bay OSFP P3 | 10:03:00.0 | ibP16p3s0 | mlx5_2 |
| Right Bay OSFP P4 | 12:03:00.0 | ibP18p3s0 | mlx5_3 |
| Right Bay BF3 P1 | 16:03:00.0 | enP22p3s0f0np0 | mlx5_4 |
| Right Bay BF3 P2 | 16:03:00.1 | enP22p3s0f1np1 | mlx5_5 |
| LAN | 05:09:00.0 | enP5p9s0 | |
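
The interface names in the table directly above appear to follow the systemd predictable naming pattern, in which the PCI domain, bus, and slot are written in decimal as `P<domain>p<bus>s<slot>` and the `P<domain>` part is omitted for domain 0. The following sketch checks that relationship. It assumes the PCIe Bus column is `domain:bus:slot.function` in hexadecimal, which is an inference from the rows and not something this page states; the function names are hypothetical.

```python
import re

# Table-style address: domain:bus:slot.function, all fields assumed hexadecimal.
_ADDRESS = re.compile(r"([0-9a-fA-F]+):([0-9a-fA-F]+):([0-9a-fA-F]+)\.([0-7])")


def expected_name_stem(pci_address: str) -> str:
    """Build the 'P<domain>p<bus>s<slot>' stem from a table-style PCI address."""
    match = _ADDRESS.fullmatch(pci_address)
    if match is None:
        raise ValueError(f"unrecognized PCI address: {pci_address!r}")
    domain, bus, slot = (int(match.group(i), 16) for i in (1, 2, 3))
    # systemd omits the domain part when the domain is 0.
    prefix = f"P{domain}" if domain else ""
    return f"{prefix}p{bus}s{slot}"


def name_matches(pci_address: str, interface_name: str) -> bool:
    """Check that an interface name carries the stem derived from its address."""
    stem = expected_name_stem(pci_address)
    # Drop the two-letter type prefix ("ib" or "en") before comparing.
    return interface_name[2:].startswith(stem)


if __name__ == "__main__":
    rows = [
        ("00:03:00.0", "ibp3s0"),
        ("12:03:00.0", "ibP18p3s0"),
        ("16:03:00.0", "enP22p3s0f0np0"),
    ]
    for address, name in rows:
        print(address, name, name_matches(address, name))
```

For example, address `12:03:00.0` has domain 0x12, which is 18 in decimal, so the expected stem is `P18p3s0`. Any trailing `f<function>` or `np<port>` suffix is ignored by this check.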

NVLink Switch Network Ports#

The following table describes the function and type of each of the NVLink switch network ports.

| Port (from left to right) | Port Type | Network / switch |
|---|---|---|
| USB port | USB | Not applicable |
| RS232 to CPU UART or for BMC | RJ45 | Not connected |
| 1 Gb Ethernet port (BMC) | RJ45 | ipminet (Ethernet) |
| 1 Gb Ethernet for switch management | RJ45 | ipminet (Ethernet) |
| 1 Gb Ethernet for switch management | RJ45 | ipminet (Ethernet) |
| Leakage connector | RJ45 | Not connected |