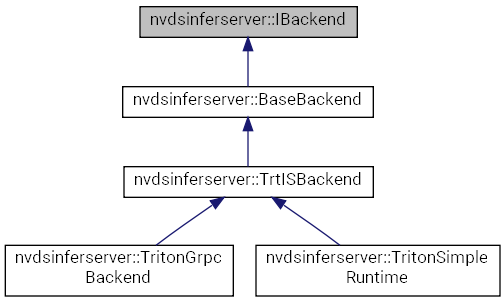

Detailed Description

Definition at line 60 of file sources/libs/nvdsinferserver/infer_ibackend.h.

Public Types

| Kind | Declaration | Description |
|---|---|---|
| enum | { kLTpLayerDesc, kTpLayerNum } | |
| enum | { kInShapeName, kInShapeDims } | |
| using | InferenceDone = std::function< void(NvDsInferStatus, SharedBatchArray)> | Function wrapper for post inference processing. |
| using | InputsConsumed = std::function< void(SharedBatchArray)> | Function wrapper called after the input buffer is consumed. |
| using | LayersTuple = std::tuple< const LayerDescription *, int > | Tuple containing pointer to layer descriptions and the number of layers. |
| using | InputShapeTuple = std::tuple< std::string, InferBatchDims > | Tuple of layer name and dimensions including batch size. |
| using | InputShapes = std::vector< InputShapeTuple > | |

Public Member Functions

| Return type | Member | Description |
|---|---|---|
| | IBackend ()=default | Constructor, default. |
| virtual | ~IBackend ()=default | Destructor, default. |
| virtual NvDsInferStatus | initialize ()=0 | Initialize the backend for processing. |
| virtual NvDsInferStatus | specifyInputDims (const InputShapes &shapes)=0 | Specify the input layers for the backend. |
| virtual bool | isFirstDimBatch () const =0 | Check if the flag for first dimension being batch is set. |
| virtual InferTensorOrder | getInputTensorOrder () const =0 | Get the tensor order set for the input. |
| virtual int32_t | maxBatchSize () const =0 | Get the configured maximum batch size for this backend. |
| virtual uint32_t | getLayerSize () const =0 | Get the number of layers (input and output) for the model. |
| virtual uint32_t | getInputLayerSize () const =0 | Get the number of input layers. |
| virtual const LayerDescription * | getLayerInfo (const std::string &bindingName) const =0 | Get the layer description from the layer name. |
| virtual LayersTuple | getInputLayers () const =0 | Get the LayersTuple for input layers. |
| virtual LayersTuple | getOutputLayers () const =0 | Get the LayersTuple for output layers. |
| virtual NvDsInferStatus | enqueue (SharedBatchArray inputs, SharedCuStream stream, InputsConsumed bufConsumed, InferenceDone inferenceDone)=0 | Enqueue an array of input batches for inference. |

Member Typedef Documentation

◆ InferenceDone

| using nvdsinferserver::IBackend::InferenceDone = std::function<void(NvDsInferStatus, SharedBatchArray)> |

Function wrapper for post inference processing.

Definition at line 66 of file sources/libs/nvdsinferserver/infer_ibackend.h.

◆ InputsConsumed

| using nvdsinferserver::IBackend::InputsConsumed = std::function<void(SharedBatchArray)> |

Function wrapper called after the input buffer is consumed.

Definition at line 70 of file sources/libs/nvdsinferserver/infer_ibackend.h.

◆ InputShapes

| using nvdsinferserver::IBackend::InputShapes = std::vector<InputShapeTuple> |

Definition at line 84 of file sources/libs/nvdsinferserver/infer_ibackend.h.

◆ InputShapeTuple

| using nvdsinferserver::IBackend::InputShapeTuple = std::tuple<std::string, InferBatchDims> |

Tuple of layer name and dimensions including batch size.

Definition at line 83 of file sources/libs/nvdsinferserver/infer_ibackend.h.
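As an illustration of how `InputShapes` entries might be built and read, here is a minimal sketch. It assumes the anonymous enum `{ kInShapeName, kInShapeDims }` is meant to index `InputShapeTuple`, which the names suggest but this page does not state. `InferBatchDims` below is a simplified stand-in, not the library's definition, and `batchElementCount` and `findShape` are hypothetical helpers.

```cpp
#include <cassert>
#include <string>
#include <tuple>
#include <vector>

// Stand-in for nvdsinferserver::InferBatchDims (assumption: the real type
// is defined elsewhere in the library and may have a different layout).
struct InferBatchDims {
    int batchSize;
    std::vector<int> dims;  // per-sample dimensions, e.g. {3, 224, 224}
};

// Mirrors the declarations documented on this page.
enum { kInShapeName, kInShapeDims };
using InputShapeTuple = std::tuple<std::string, InferBatchDims>;
using InputShapes = std::vector<InputShapeTuple>;

// Hypothetical helper: number of elements in one full batch for a shape.
long long batchElementCount(const InputShapeTuple& shape) {
    const InferBatchDims& bd = std::get<kInShapeDims>(shape);
    long long count = bd.batchSize;
    for (int d : bd.dims) count *= d;
    return count;
}

// Hypothetical helper: find a shape entry by layer name; nullptr if absent.
const InputShapeTuple* findShape(const InputShapes& shapes,
                                 const std::string& name) {
    for (const auto& s : shapes) {
        if (std::get<kInShapeName>(s) == name) return &s;
    }
    return nullptr;
}
```

A caller could fill the vector with `shapes.emplace_back("input_0", InferBatchDims{4, {3, 224, 224}})`; the layer name here is a placeholder.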

◆ LayersTuple

| using nvdsinferserver::IBackend::LayersTuple = std::tuple<const LayerDescription*, int> |

Tuple containing pointer to layer descriptions and the number of layers.

Definition at line 77 of file sources/libs/nvdsinferserver/infer_ibackend.h.
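The following sketch shows one way a `LayersTuple` could be unpacked. It assumes that the anonymous enum `{ kLTpLayerDesc, kTpLayerNum }` supplies the tuple indices and that the pointer refers to a contiguous array of descriptions; neither is confirmed on this page. `LayerDescription` is reduced to a stand-in with only a name, and `layerNames` and `makeLayersTuple` are hypothetical helpers.

```cpp
#include <cassert>
#include <string>
#include <tuple>
#include <vector>

// Stand-in for nvdsinferserver::LayerDescription (assumption: the real
// struct carries more fields; only a name is modeled here).
struct LayerDescription {
    std::string name;
};

// Mirrors the declarations documented on this page.
enum { kLTpLayerDesc, kTpLayerNum };
using LayersTuple = std::tuple<const LayerDescription*, int>;

// Collect layer names, treating the tuple as
// (pointer to first description, number of descriptions).
std::vector<std::string> layerNames(const LayersTuple& layers) {
    const LayerDescription* desc = std::get<kLTpLayerDesc>(layers);
    const int num = std::get<kTpLayerNum>(layers);
    std::vector<std::string> names;
    for (int i = 0; desc != nullptr && i < num; ++i) {
        names.push_back(desc[i].name);
    }
    return names;
}

// Hypothetical helper that builds a tuple over a contiguous array.
LayersTuple makeLayersTuple(const std::vector<LayerDescription>& all) {
    return LayersTuple{all.data(), static_cast<int>(all.size())};
}
```

Because the tuple holds a raw pointer, the array it points at has to outlive every use of the tuple.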

Member Enumeration Documentation

◆ anonymous enum

| anonymous enum |

| Enumerator | |
|---|---|
| kLTpLayerDesc | |
| kTpLayerNum | |

Definition at line 72 of file sources/libs/nvdsinferserver/infer_ibackend.h.

◆ anonymous enum

| anonymous enum |

| Enumerator | |
|---|---|
| kInShapeName | |
| kInShapeDims | |

Definition at line 79 of file sources/libs/nvdsinferserver/infer_ibackend.h.

Constructor & Destructor Documentation

◆ IBackend()

| nvdsinferserver::IBackend::IBackend () | default |

Constructor, default.

◆ ~IBackend()

| virtual nvdsinferserver::IBackend::~IBackend () | virtual default |

Destructor, default.

Member Function Documentation

◆ enqueue()

| virtual NvDsInferStatus nvdsinferserver::IBackend::enqueue (SharedBatchArray inputs, SharedCuStream stream, InputsConsumed bufConsumed, InferenceDone inferenceDone) | pure virtual |

Enqueue an array of input batches for inference.

This function adds an input to the inference processing queue of the backend. The caller provides two callbacks: one to be called once the input buffer is consumed, and one for post-inference processing.

Parameters

- [in] inputs: List of input batch buffers.
- [in] stream: The CUDA stream to be used in inference processing.
- [in] bufConsumed: Function to be called once the input buffer is consumed.
- [in] inferenceDone: Function to be called after inference.

Returns

Execution status code.

Implemented in nvdsinferserver::TrtISBackend, nvdsinferserver::TritonGrpcBackend, and nvdsinferserver::TritonSimpleRuntime.
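To make the two callbacks concrete, here is a minimal sketch of an `enqueue`-style call. Every type below is a stand-in defined for illustration: `SharedBatchArray` and `SharedCuStream` are modeled as plain shared pointers, the status enum lists only two values, and `EchoBackend` is a hypothetical class that runs synchronously on the calling thread. The real backends may invoke the callbacks asynchronously and in a different order; this page does not specify either.

```cpp
#include <cassert>
#include <functional>
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Stand-ins for library types (assumptions for illustration only).
enum NvDsInferStatus { NVDSINFER_SUCCESS = 0, NVDSINFER_INVALID_PARAMS };
using SharedBatchArray = std::shared_ptr<std::vector<float>>;
using SharedCuStream = std::shared_ptr<void>;

// Same shapes as the callback typedefs documented on this page.
using InferenceDone = std::function<void(NvDsInferStatus, SharedBatchArray)>;
using InputsConsumed = std::function<void(SharedBatchArray)>;

// Hypothetical synchronous backend: "inference" doubles every input value.
class EchoBackend {
public:
    NvDsInferStatus enqueue(SharedBatchArray inputs, SharedCuStream /*stream*/,
                            InputsConsumed bufConsumed,
                            InferenceDone inferenceDone) {
        if (!inputs || !inferenceDone) return NVDSINFER_INVALID_PARAMS;
        auto outputs = std::make_shared<std::vector<float>>();
        for (float v : *inputs) outputs->push_back(2.0f * v);
        if (bufConsumed) bufConsumed(inputs);  // caller may recycle inputs now
        inferenceDone(NVDSINFER_SUCCESS, outputs);
        return NVDSINFER_SUCCESS;
    }
};

// Drives one enqueue call and records the order in which callbacks fire.
std::vector<std::string> runOnce(EchoBackend& backend, std::vector<float> data,
                                 std::vector<float>* result) {
    std::vector<std::string> events;
    auto inputs = std::make_shared<std::vector<float>>(std::move(data));
    backend.enqueue(
        inputs, nullptr,
        [&events](SharedBatchArray) { events.push_back("consumed"); },
        [&events, result](NvDsInferStatus status, SharedBatchArray out) {
            events.push_back(status == NVDSINFER_SUCCESS ? "done" : "failed");
            if (result && out) *result = *out;
        });
    return events;
}
```

Splitting the notification into two callbacks lets a caller release or reuse input buffers as soon as they are consumed, without waiting for the inference result.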

◆ getInputLayers()

| virtual LayersTuple nvdsinferserver::IBackend::getInputLayers () const | pure virtual |

Get the LayersTuple for input layers.

Implemented in nvdsinferserver::BaseBackend.

◆ getInputLayerSize()

| virtual uint32_t nvdsinferserver::IBackend::getInputLayerSize () const | pure virtual |

Get the number of input layers.

Implemented in nvdsinferserver::BaseBackend.

◆ getInputTensorOrder()

| virtual InferTensorOrder nvdsinferserver::IBackend::getInputTensorOrder () const | pure virtual |

Get the tensor order set for the input.

Implemented in nvdsinferserver::BaseBackend.

◆ getLayerInfo()

| virtual const LayerDescription * nvdsinferserver::IBackend::getLayerInfo (const std::string &bindingName) const | pure virtual |

Get the layer description from the layer name.

Implemented in nvdsinferserver::BaseBackend.

◆ getLayerSize()

| virtual uint32_t nvdsinferserver::IBackend::getLayerSize () const | pure virtual |

Get the number of layers (input and output) for the model.

Implemented in nvdsinferserver::BaseBackend.
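The layer query methods above (`getLayerSize`, `getInputLayerSize`, `getLayerInfo`) can be pictured with a small sketch. `LayerTable` is a hypothetical class, not `BaseBackend`; `LayerDescription` is a stand-in with two fields; and returning `nullptr` for an unknown name is an assumption, since this page does not say what `getLayerInfo` does in that case.

```cpp
#include <cassert>
#include <cstdint>
#include <string>
#include <utility>
#include <vector>

// Stand-in for nvdsinferserver::LayerDescription (assumed fields).
struct LayerDescription {
    std::string name;
    bool isInput;
};

// Hypothetical holder that answers the same queries by scanning a vector.
class LayerTable {
public:
    explicit LayerTable(std::vector<LayerDescription> layers)
        : m_layers(std::move(layers)) {}

    // Total number of layers, inputs and outputs together.
    uint32_t getLayerSize() const {
        return static_cast<uint32_t>(m_layers.size());
    }

    // Number of layers flagged as inputs.
    uint32_t getInputLayerSize() const {
        uint32_t count = 0;
        for (const auto& layer : m_layers) {
            if (layer.isInput) ++count;
        }
        return count;
    }

    // Look up a layer by name; nullptr when no layer matches (assumption).
    const LayerDescription* getLayerInfo(const std::string& bindingName) const {
        for (const auto& layer : m_layers) {
            if (layer.name == bindingName) return &layer;
        }
        return nullptr;
    }

private:
    std::vector<LayerDescription> m_layers;
};
```

Under this assumption, callers would check the returned pointer before dereferencing it.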

◆ getOutputLayers()

| virtual LayersTuple nvdsinferserver::IBackend::getOutputLayers () const | pure virtual |

Get the LayersTuple for output layers.

Implemented in nvdsinferserver::BaseBackend.

◆ initialize()

| virtual NvDsInferStatus nvdsinferserver::IBackend::initialize () | pure virtual |

Initialize the backend for processing.

Returns

Status code of the type NvDsInferStatus.

Implemented in nvdsinferserver::TrtISBackend, nvdsinferserver::TritonGrpcBackend, and nvdsinferserver::TritonSimpleRuntime.

◆ isFirstDimBatch()

| virtual bool nvdsinferserver::IBackend::isFirstDimBatch () const | pure virtual |

Check whether the flag marking the first dimension as the batch dimension is set.

Implemented in nvdsinferserver::BaseBackend.

◆ maxBatchSize()

| virtual int32_t nvdsinferserver::IBackend::maxBatchSize () const | pure virtual |

Get the configured maximum batch size for this backend.

Implemented in nvdsinferserver::BaseBackend.

◆ specifyInputDims()

| virtual NvDsInferStatus nvdsinferserver::IBackend::specifyInputDims (const InputShapes &shapes) | pure virtual |

Specify the input layers for the backend.

Parameters

- shapes: List of names and shapes of the input layers.

Returns

Status code of the type NvDsInferStatus.

Implemented in nvdsinferserver::TrtISBackend and nvdsinferserver::TritonSimpleRuntime.
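A caller-side sketch of `initialize()` followed by `specifyInputDims()` is shown below. It rests on several assumptions: the call order, the batch-size check, and the choice of error code are all illustrative, not taken from the library. `IBackendSubset` is a reduced copy of the interface with only the members used here, `FakeBackend` and `setUp` are hypothetical, and the type definitions are stand-ins.

```cpp
#include <cassert>
#include <cstdint>
#include <string>
#include <tuple>
#include <vector>

// Stand-ins for library types (assumptions for illustration only).
enum NvDsInferStatus { NVDSINFER_SUCCESS = 0, NVDSINFER_INVALID_PARAMS };
struct InferBatchDims {
    int batchSize;
    std::vector<int> dims;
};
enum { kInShapeName, kInShapeDims };
using InputShapeTuple = std::tuple<std::string, InferBatchDims>;
using InputShapes = std::vector<InputShapeTuple>;

// Reduced interface: only the members this sketch exercises.
class IBackendSubset {
public:
    virtual ~IBackendSubset() = default;
    virtual NvDsInferStatus initialize() = 0;
    virtual NvDsInferStatus specifyInputDims(const InputShapes& shapes) = 0;
    virtual int32_t maxBatchSize() const = 0;
};

// Hypothetical backend that rejects shapes exceeding its batch limit.
class FakeBackend : public IBackendSubset {
public:
    explicit FakeBackend(int32_t maxBatch) : m_maxBatch(maxBatch) {}

    NvDsInferStatus initialize() override {
        m_ready = true;
        return NVDSINFER_SUCCESS;
    }

    NvDsInferStatus specifyInputDims(const InputShapes& shapes) override {
        if (!m_ready) return NVDSINFER_INVALID_PARAMS;
        for (const auto& s : shapes) {
            if (std::get<kInShapeDims>(s).batchSize > m_maxBatch) {
                return NVDSINFER_INVALID_PARAMS;
            }
        }
        m_shapes = shapes;
        return NVDSINFER_SUCCESS;
    }

    int32_t maxBatchSize() const override { return m_maxBatch; }

private:
    int32_t m_maxBatch;
    bool m_ready = false;
    InputShapes m_shapes;
};

// Caller: stop at the first non-success status.
NvDsInferStatus setUp(IBackendSubset& backend, const InputShapes& shapes) {
    NvDsInferStatus status = backend.initialize();
    if (status != NVDSINFER_SUCCESS) return status;
    return backend.specifyInputDims(shapes);
}
```

Writing the caller against the abstract interface keeps it independent of which concrete backend is in use.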

The documentation for this class was generated from the following file: