Detailed Description

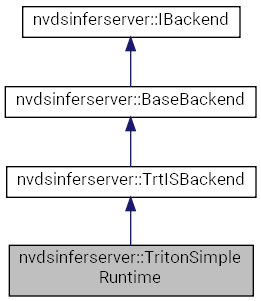

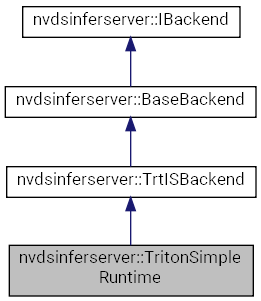

Definition at line 21 of file sources/libs/nvdsinferserver/infer_simple_runtime.h.

Public Types

| Kind | Declaration | Description |
|---|---|---|
| enum | { kLTpLayerDesc, kTpLayerNum } | |
| enum | { kInShapeName, kInShapeDims } | |
| using | InferenceDone = std::function< void(NvDsInferStatus, SharedBatchArray)> | Function wrapper for post inference processing. |
| using | InputsConsumed = std::function< void(SharedBatchArray)> | Function wrapper called after the input buffer is consumed. |
| using | LayersTuple = std::tuple< const LayerDescription *, int > | Tuple containing pointer to layer descriptions and the number of layers. |
| using | InputShapeTuple = std::tuple< std::string, InferBatchDims > | Tuple of layer name and dimensions including batch size. |
| using | InputShapes = std::vector< InputShapeTuple > | |

Public Member Functions

| Return type | Member | Description |
|---|---|---|
| | TritonSimpleRuntime (std::string model, int64_t version) | |
| | ~TritonSimpleRuntime () override | |
| void | setOutputs (const std::set< std::string > &names) | |
| NvDsInferStatus | initialize () override | |
| void | addClassifyParams (const TritonClassParams &c) | Add Triton Classification parameters to the list. |
| void | setTensorMaxBytes (const std::string &name, size_t maxBytes) | Set the maximum size for the tensor; the maximum of the existing size and the new input size is used. |
| InferTensorOrder | getInputTensorOrder () const final | Returns the input tensor order. |
| void | setUniqueId (uint32_t id) | Set the unique ID for the object instance. |
| int | uniqueId () const | Get the unique ID of the object instance. |
| void | setFirstDimBatch (bool flag) | Set the flag indicating that it is a batch input. |
| bool | isFirstDimBatch () const final | Returns boolean indicating if batched input is expected. |
| uint32_t | getLayerSize () const final | Returns the total number of layers (input + output) for the model. |
| uint32_t | getInputLayerSize () const final | Returns the number of input layers for the model. |
| const LayerDescription * | getLayerInfo (const std::string &bindingName) const final | Retrieve the layer information from the layer name. |
| LayersTuple | getInputLayers () const final | Get the LayersTuple for input layers. |
| LayersTuple | getOutputLayers () const final | Get the LayersTuple for output layers. |
| bool | checkInputDims (const InputShapes &shapes) const | Check that the list of input shapes have fixed dimensions and corresponding layers are marked as input layers. |
| const LayerDescriptionList & | allLayers () const | Returns the list of all descriptions of all layers, input and output. |
| void | setKeepInputs (bool enable) | Set the flag indicating whether to keep input buffers. |
| int32_t | maxBatchSize () const final | Returns the maximum batch size set for the backend. |
| bool | isNonBatching () const | Checks if the batch size indicates batched processing or not. |
| void | setOutputPoolSize (int size) | Helper function to access the member variables. |
| int | outputPoolSize () const | |
| void | setOutputMemType (InferMemType memType) | |
| InferMemType | outputMemType () const | |
| void | setOutputDevId (int64_t devId) | |
| int64_t | outputDevId () const | |
| std::vector< TritonClassParams > | getClassifyParams () | |
| const std::string & | model () const | |
| int64_t | version () const | |
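The description of `setTensorMaxBytes` states that the larger of the existing size and the new size is kept. The sketch below illustrates only that rule. `TensorLimits` and its `maxBytes` accessor are hypothetical names introduced for this example and are not part of nvdsinferserver.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <string>
#include <unordered_map>

// Hypothetical holder (not a DeepStream class) that mirrors the documented
// rule: the stored limit is the maximum of the old and the new value.
class TensorLimits {
public:
    void setTensorMaxBytes(const std::string& name, size_t maxBytes) {
        size_t& current = limits_[name];  // value-initialized to 0 if absent
        current = std::max(current, maxBytes);
    }

    // Hypothetical accessor; returns 0 for tensors that were never set.
    size_t maxBytes(const std::string& name) const {
        auto it = limits_.find(name);
        if (it == limits_.end()) {
            return 0;
        }
        return it->second;
    }

private:
    std::unordered_map<std::string, size_t> limits_;
};
```

With this rule, a later call with a smaller value leaves the stored limit unchanged, so callers can register sizes in any order.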

Protected Types

| Kind | Declaration | Description |
|---|---|---|
| enum | { kName, kGpuId, kMemType } | Tuple keys as <tensor-name, gpu-id, memType> |
| using | AsyncDone = std::function< void(NvDsInferStatus, SharedBatchArray)> | Asynchronous inference done function: AsyncDone(Status, outputs). |
| using | PoolKey = std::tuple< std::string, int64_t, InferMemType > | Tuple holding tensor name, GPU ID, memory type. |
| using | PoolValue = SharedBufPool< UniqSysMem > | The buffer pool for the specified tensor, GPU and memory type combination. |
| using | ReorderItemPtr = std::shared_ptr< ReorderItem > | |
| using | LayerIdxMap = std::unordered_map< std::string, int > | Map of layer name to layer index. |

Protected Member Functions

| Return type | Member | Description |
|---|---|---|
| NvDsInferStatus | specifyInputDims (const InputShapes &shapes) override | |
| NvDsInferStatus | enqueue (SharedBatchArray inputs, SharedCuStream stream, InputsConsumed bufConsumed, InferenceDone inferenceDone) override | |
| void | requestTritonOutputNames (std::set< std::string > &names) override | |
| virtual NvDsInferStatus | ensureServerReady () | Check that the Triton inference server is live. |
| virtual NvDsInferStatus | ensureModelReady () | Check that the model is ready, load the model if it is not. |
| NvDsInferStatus | setupReorderThread () | Create a loop thread that calls inferenceDoneReorderLoop on the queued items. |
| void | setAllocator (UniqTritonAllocator allocator) | Set the output tensor allocator. |
| virtual NvDsInferStatus | setupLayersInfo () | Get the model configuration from the server and populate layer information. |
| TrtServerPtr & | server () | Get the Triton server handle. |
| virtual NvDsInferStatus | Run (SharedBatchArray inputs, InputsConsumed bufConsumed, AsyncDone asyncDone) | Create an inference request and trigger asynchronous inference. |
| NvDsInferStatus | fixateDims (const SharedBatchArray &bufs) | Extend the dimensions to include batch size for the buffers in input array. |
| SharedSysMem | allocateResponseBuf (const std::string &tensor, size_t bytes, InferMemType memType, int64_t devId) | Acquire a buffer from the output buffer pool associated with the device ID and memory type. |
| void | releaseResponseBuf (const std::string &tensor, SharedSysMem mem) | Release the output tensor buffer. |
| NvDsInferStatus | ensureInputs (SharedBatchArray &inputs) | Ensure that the array of input buffers are expected by the model and reshape the input buffers if required. |
| PoolValue | findResponsePool (PoolKey &key) | Find the buffer pool for the given key. |
| PoolValue | createResponsePool (PoolKey &key, size_t bytes) | Create a new buffer pool for the key. |
| void | serverInferCompleted (std::shared_ptr< TrtServerRequest > request, std::unique_ptr< TrtServerResponse > uniqResponse, InputsConsumed inputsConsumed, AsyncDone asyncDone) | Call the inputs consumed function and parse the inference response to form the array of output batch buffers and call asyncDone on it. |
| bool | inferenceDoneReorderLoop (ReorderItemPtr item) | Add input buffers to the output buffer list if required. |
| bool | debatchingOutput (SharedBatchArray &outputs, SharedBatchArray &inputs) | Separate the batch dimension from the output buffer descriptors. |
| void | resetLayers (LayerDescriptionList layers, int inputSize) | Set the layer description list of the backend. |
| LayerDescription * | mutableLayerInfo (const std::string &bindingName) | Get the mutable layer description structure for the layer name. |
| void | setInputTensorOrder (InferTensorOrder order) | Set the tensor order for the input layers. |
| bool | needKeepInputs () const | Check if the keep input flag is set. |
| void | setMaxBatchSize (uint32_t size) | Set the maximum batch size to be used for the backend. |

Member Typedef Documentation

◆ AsyncDone [1/2]

|

protected, inherited |

Asynchronous inference done function: AsyncDone(Status, outputs).

Definition at line 169 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ AsyncDone [2/2]

|

protected, inherited |

Asynchronous inference done function: AsyncDone(Status, outputs).

Definition at line 169 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ InferenceDone [1/2]

|

inherited |

Function wrapper for post inference processing.

Definition at line 66 of file sources/libs/nvdsinferserver/infer_ibackend.h.

◆ InferenceDone [2/2]

|

inherited |

Function wrapper for post inference processing.

Definition at line 66 of file 9.0/sources/libs/nvdsinferserver/infer_ibackend.h.

◆ InputsConsumed [1/2]

|

inherited |

Function wrapper called after the input buffer is consumed.

Definition at line 70 of file sources/libs/nvdsinferserver/infer_ibackend.h.

◆ InputsConsumed [2/2]

|

inherited |

Function wrapper called after the input buffer is consumed.

Definition at line 70 of file 9.0/sources/libs/nvdsinferserver/infer_ibackend.h.
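The `InferenceDone` and `InputsConsumed` aliases are ordinary `std::function` wrappers, so any lambda or callable with a matching signature can be passed. The following is a minimal sketch of how such callbacks might be invoked. The `NvDsInferStatus` enum and `BatchArray` struct here are simplified stand-ins, and `fakeEnqueue` is a hypothetical function; none of them reproduce the real DeepStream definitions or the behavior of `enqueue()`.

```cpp
#include <cassert>
#include <functional>
#include <memory>
#include <vector>

// Simplified stand-ins for the real DeepStream types (assumptions).
enum NvDsInferStatus { NVDSINFER_SUCCESS = 0, NVDSINFER_TRITON_ERROR };
struct BatchArray { std::vector<int> buffers; };
using SharedBatchArray = std::shared_ptr<BatchArray>;

// Aliases with the signatures listed in this reference.
using InferenceDone = std::function<void(NvDsInferStatus, SharedBatchArray)>;
using InputsConsumed = std::function<void(SharedBatchArray)>;

// Hypothetical enqueue-like function: reports that the inputs were
// consumed, then hands a result to the inference-done callback.
NvDsInferStatus fakeEnqueue(SharedBatchArray inputs,
                            InputsConsumed bufConsumed,
                            InferenceDone inferenceDone) {
    if (!inputs) {
        return NVDSINFER_TRITON_ERROR;
    }
    if (bufConsumed) {
        bufConsumed(inputs);  // the caller may now recycle the input buffers
    }
    auto outputs = std::make_shared<BatchArray>();
    outputs->buffers = inputs->buffers;  // placeholder for real inference
    if (inferenceDone) {
        inferenceDone(NVDSINFER_SUCCESS, outputs);
    }
    return NVDSINFER_SUCCESS;
}
```

Both callbacks are checked before use because a default-constructed `std::function` is empty and throws `std::bad_function_call` when invoked.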

◆ InputShapes [1/2]

|

inherited |

Definition at line 84 of file sources/libs/nvdsinferserver/infer_ibackend.h.

◆ InputShapes [2/2]

|

inherited |

Definition at line 84 of file 9.0/sources/libs/nvdsinferserver/infer_ibackend.h.

◆ InputShapeTuple [1/2]

|

inherited |

Tuple of layer name and dimensions including batch size.

Definition at line 83 of file 9.0/sources/libs/nvdsinferserver/infer_ibackend.h.

◆ InputShapeTuple [2/2]

|

inherited |

Tuple of layer name and dimensions including batch size.

Definition at line 83 of file sources/libs/nvdsinferserver/infer_ibackend.h.

◆ LayerIdxMap [1/2]

|

protected, inherited |

Map of layer name to layer index.

Definition at line 136 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ LayerIdxMap [2/2]

|

protected, inherited |

Map of layer name to layer index.

Definition at line 136 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.
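A name-to-index map of this shape is typically paired with a list of layer descriptions so that a lookup by name returns a pointer into the list. The sketch below shows that pattern under stated assumptions: `LayerDescription` is a reduced stand-in, and `buildIndex` and `findLayer` are hypothetical helpers, not the library's `getLayerInfo` implementation.

```cpp
#include <cassert>
#include <string>
#include <unordered_map>
#include <vector>

// Reduced stand-in for the real LayerDescription (assumption).
struct LayerDescription {
    std::string name;
    bool isInput;
};
using LayerDescriptionList = std::vector<LayerDescription>;

// Alias with the type listed in this reference.
using LayerIdxMap = std::unordered_map<std::string, int>;

// Hypothetical helper: build the name-to-index map from the layer list.
LayerIdxMap buildIndex(const LayerDescriptionList& layers) {
    LayerIdxMap index;
    for (int i = 0; i < static_cast<int>(layers.size()); ++i) {
        index[layers[i].name] = i;
    }
    return index;
}

// Hypothetical lookup: returns nullptr when the name is unknown.
const LayerDescription* findLayer(const LayerDescriptionList& layers,
                                  const LayerIdxMap& index,
                                  const std::string& name) {
    auto it = index.find(name);
    if (it == index.end()) {
        return nullptr;
    }
    return &layers[it->second];
}
```

The returned pointer stays valid only while the layer list is not resized, which is why the index should be rebuilt whenever the list changes.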

◆ LayersTuple [1/2]

|

inherited |

Tuple containing pointer to layer descriptions and the number of layers.

Definition at line 77 of file 9.0/sources/libs/nvdsinferserver/infer_ibackend.h.

◆ LayersTuple [2/2]

|

inherited |

Tuple containing pointer to layer descriptions and the number of layers.

Definition at line 77 of file sources/libs/nvdsinferserver/infer_ibackend.h.
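The anonymous enumerators `kLTpLayerDesc` and `kTpLayerNum` serve as readable indices for `std::get` on a `LayersTuple`. The sketch below shows that access pattern. `LayerDescription` is a reduced stand-in and `layerNames` is a hypothetical helper added for illustration.

```cpp
#include <cassert>
#include <string>
#include <tuple>
#include <vector>

// Reduced stand-in for the real LayerDescription (assumption).
struct LayerDescription {
    std::string name;
    bool isInput;
};

// Enumerators and alias as listed in this reference.
enum { kLTpLayerDesc, kTpLayerNum };
using LayersTuple = std::tuple<const LayerDescription*, int>;

// Hypothetical helper: collect the layer names referenced by a LayersTuple.
std::vector<std::string> layerNames(const LayersTuple& layers) {
    const LayerDescription* descs = std::get<kLTpLayerDesc>(layers);
    const int count = std::get<kTpLayerNum>(layers);
    std::vector<std::string> names;
    for (int i = 0; i < count; ++i) {
        names.push_back(descs[i].name);
    }
    return names;
}
```

Because the tuple holds a raw pointer plus a count, it does not own the descriptions; the backing storage has to outlive the tuple.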

◆ PoolKey [1/2]

|

protected, inherited |

Tuple holding tensor name, GPU ID, memory type.

Definition at line 224 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ PoolKey [2/2]

|

protected, inherited |

Tuple holding tensor name, GPU ID, memory type.

Definition at line 224 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ PoolValue [1/2]

|

protected, inherited |

The buffer pool for the specified tensor, GPU and memory type combination.

Definition at line 229 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ PoolValue [2/2]

|

protected, inherited |

The buffer pool for the specified tensor, GPU and memory type combination.

Definition at line 229 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ ReorderItemPtr [1/2]

|

protected, inherited |

Definition at line 293 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ ReorderItemPtr [2/2]

|

protected, inherited |

Definition at line 293 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

Member Enumeration Documentation

◆ anonymous enum

|

inherited |

| Enumerator | |

|---|---|

| kLTpLayerDesc | |

| kTpLayerNum | |

Definition at line 72 of file sources/libs/nvdsinferserver/infer_ibackend.h.

◆ anonymous enum

|

inherited |

| Enumerator | |

|---|---|

| kInShapeName | |

| kInShapeDims | |

Definition at line 79 of file sources/libs/nvdsinferserver/infer_ibackend.h.

◆ anonymous enum

|

protected, inherited |

Tuple keys as <tensor-name, gpu-id, memType>

| Enumerator | |

|---|---|

| kName | |

| kGpuId | |

| kMemType | |

Definition at line 220 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ anonymous enum

|

inherited |

| Enumerator | |

|---|---|

| kLTpLayerDesc | |

| kTpLayerNum | |

Definition at line 72 of file 9.0/sources/libs/nvdsinferserver/infer_ibackend.h.

◆ anonymous enum

|

inherited |

| Enumerator | |

|---|---|

| kInShapeName | |

| kInShapeDims | |

Definition at line 79 of file 9.0/sources/libs/nvdsinferserver/infer_ibackend.h.

◆ anonymous enum

|

protected, inherited |

Tuple keys as <tensor-name, gpu-id, memType>

| Enumerator | |

|---|---|

| kName | |

| kGpuId | |

| kMemType | |

Definition at line 220 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

Constructor & Destructor Documentation

◆ TritonSimpleRuntime() [1/2]

nvdsinferserver::TritonSimpleRuntime::TritonSimpleRuntime (std::string model, int64_t version)

◆ ~TritonSimpleRuntime() [1/2]

|

override |

◆ TritonSimpleRuntime() [2/2]

nvdsinferserver::TritonSimpleRuntime::TritonSimpleRuntime (std::string model, int64_t version)

◆ ~TritonSimpleRuntime() [2/2]

|

override |

Member Function Documentation

◆ addClassifyParams() [1/2]

|

inline, inherited |

Add Triton Classification parameters to the list.

Definition at line 58 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ addClassifyParams() [2/2]

|

inline, inherited |

Add Triton Classification parameters to the list.

Definition at line 58 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ allLayers() [1/2]

|

inline, inherited |

Returns the list of all descriptions of all layers, input and output.

Definition at line 113 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ allLayers() [2/2]

|

inline, inherited |

Returns the list of all descriptions of all layers, input and output.

Definition at line 113 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ allocateResponseBuf() [1/2]

|

protected, inherited |

Acquire a buffer from the output buffer pool associated with the device ID and memory type.

Create the pool if it doesn't exist.

- Parameters
  - [in] tensor: Name of the output tensor.
  - [in] bytes: Buffer size.
  - [in] memType: Requested memory type.
  - [in] devId: Device ID for the allocation.
- Returns
  - Pointer to the allocated buffer.

◆ allocateResponseBuf() [2/2]

|

protected, inherited |

Acquire a buffer from the output buffer pool associated with the device ID and memory type.

Create the pool if it doesn't exist.

- Parameters
  - [in] tensor: Name of the output tensor.
  - [in] bytes: Buffer size.
  - [in] memType: Requested memory type.
  - [in] devId: Device ID for the allocation.
- Returns
  - Pointer to the allocated buffer.
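The description says the pool is created if it does not exist, and the `PoolKey` tuple identifies a pool by tensor name, GPU ID, and memory type. A minimal sketch of that find-or-create pattern follows. `InferMemType`, `BufPool`, and `PoolRegistry` are simplified or hypothetical stand-ins (the real `PoolValue` is `SharedBufPool< UniqSysMem >`), so this is an illustration of the idea and not the library's implementation.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <map>
#include <memory>
#include <string>
#include <tuple>

// Simplified stand-ins for the real DeepStream types (assumptions).
enum class InferMemType { kCpu, kGpuCuda };
struct BufPool { size_t bytes; };
using PoolValue = std::shared_ptr<BufPool>;

// Key layout as listed in this reference: <tensor-name, gpu-id, memType>.
enum { kName, kGpuId, kMemType };
using PoolKey = std::tuple<std::string, int64_t, InferMemType>;

// Hypothetical registry showing the find-or-create pattern.
class PoolRegistry {
public:
    // Returns nullptr when no pool exists for the key.
    PoolValue find(const PoolKey& key) const {
        auto it = pools_.find(key);
        if (it == pools_.end()) {
            return nullptr;
        }
        return it->second;
    }

    // Reuses an existing pool or creates one sized for `bytes`.
    PoolValue findOrCreate(const PoolKey& key, size_t bytes) {
        PoolValue pool = find(key);
        if (!pool) {
            pool = std::make_shared<BufPool>(BufPool{bytes});
            pools_[key] = pool;
        }
        return pool;
    }

    size_t size() const { return pools_.size(); }

private:
    std::map<PoolKey, PoolValue> pools_;  // std::tuple supplies operator<
};
```

A `std::map` is used here because `std::tuple` provides lexicographic comparison out of the box, whereas an unordered map would need a custom hash for the tuple key.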

◆ checkInputDims() [1/2]

|

inherited |

Check that the list of input shapes have fixed dimensions and corresponding layers are marked as input layers.

◆ checkInputDims() [2/2]

|

inherited |

Check that the list of input shapes have fixed dimensions and corresponding layers are marked as input layers.
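As a rough illustration of the fixed-dimension half of this check, the sketch below walks an `InputShapes` list and reads each entry through the `kInShapeName` and `kInShapeDims` indices. `InferBatchDims` is a simplified stand-in, `allDimsFixed` is a hypothetical helper, and treating any non-positive dimension as "not fixed" is an assumption of this example. The input-layer half of the check is not shown.

```cpp
#include <cassert>
#include <string>
#include <tuple>
#include <vector>

// Simplified stand-in for the real InferBatchDims (assumption).
struct InferBatchDims {
    int batchSize;
    std::vector<int> dims;
};

// Enumerators and aliases as listed in this reference.
enum { kInShapeName, kInShapeDims };
using InputShapeTuple = std::tuple<std::string, InferBatchDims>;
using InputShapes = std::vector<InputShapeTuple>;

// Hypothetical check: every dimension must be a fixed, positive value.
bool allDimsFixed(const InputShapes& shapes) {
    for (const InputShapeTuple& shape : shapes) {
        const InferBatchDims& batchDims = std::get<kInShapeDims>(shape);
        for (int d : batchDims.dims) {
            if (d <= 0) {
                return false;  // assumed marker for a dynamic dimension
            }
        }
    }
    return true;
}
```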

◆ createResponsePool() [1/2]

|

protected, inherited |

Create a new buffer pool for the key.

- Parameters
  - [in] key: The pool key combination.
  - [in] bytes: Size of the requested buffer.

- Returns

◆ createResponsePool() [2/2]

|

protected, inherited |

Create a new buffer pool for the key.

- Parameters
  - [in] key: The pool key combination.
  - [in] bytes: Size of the requested buffer.

- Returns

◆ debatchingOutput() [1/2]

|

protected, inherited |

Separate the batch dimension from the output buffer descriptors.

- Parameters
  - [in] outputs: Array of output batch buffers.
  - [in] inputs: Array of input batch buffers.
- Returns
  - Boolean indicating success or failure.

◆ debatchingOutput() [2/2]

|

protected, inherited |

Separate the batch dimension from the output buffer descriptors.

- Parameters
  - [in] outputs: Array of output batch buffers.
  - [in] inputs: Array of input batch buffers.
- Returns
  - Boolean indicating success or failure.

◆ enqueue() [1/2]

|

override, protected, virtual |

Implements nvdsinferserver::IBackend.

◆ enqueue() [2/2]

|

override, protected, virtual |

Reimplemented from nvdsinferserver::TrtISBackend.

◆ ensureInputs() [1/2]

|

protected, inherited |

Ensure that the array of input buffers are expected by the model and reshape the input buffers if required.

- Parameters
  - inputs: Array of input batch buffers.
- Returns
  - NVDSINFER_SUCCESS or NVDSINFER_TRITON_ERROR.

◆ ensureInputs() [2/2]

|

protected, inherited |

Ensure that the array of input buffers are expected by the model and reshape the input buffers if required.

- Parameters
  - inputs: Array of input batch buffers.
- Returns
  - NVDSINFER_SUCCESS or NVDSINFER_TRITON_ERROR.

◆ ensureModelReady() [1/2]

|

protected, virtual, inherited |

Check that the model is ready, load the model if it is not.

- Returns

- NVDSINFER_SUCCESS or NVDSINFER_TRITON_ERROR.

Reimplemented in nvdsinferserver::TritonGrpcBackend.

◆ ensureModelReady() [2/2]

|

protected, virtual, inherited |

Check that the model is ready, load the model if it is not.

- Returns

- NVDSINFER_SUCCESS or NVDSINFER_TRITON_ERROR.

Reimplemented in nvdsinferserver::TritonGrpcBackend.

◆ ensureServerReady() [1/2]

|

protected, virtual, inherited |

Check that the Triton inference server is live.

- Returns

- NVDSINFER_SUCCESS or NVDSINFER_TRITON_ERROR.

Reimplemented in nvdsinferserver::TritonGrpcBackend.

◆ ensureServerReady() [2/2]

|

protected, virtual, inherited |

Check that the Triton inference server is live.

- Returns

- NVDSINFER_SUCCESS or NVDSINFER_TRITON_ERROR.

Reimplemented in nvdsinferserver::TritonGrpcBackend.

◆ findResponsePool() [1/2]

Find the buffer pool for the given key.

◆ findResponsePool() [2/2]

Find the buffer pool for the given key.

◆ fixateDims() [1/2]

|

protected, inherited |

Extend the dimensions to include batch size for the buffers in input array.

Do nothing if batch input is not required.

◆ fixateDims() [2/2]

|

protected, inherited |

Extend the dimensions to include batch size for the buffers in input array.

Do nothing if batch input is not required.
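To make "extend the dimensions to include batch size" concrete, the sketch below prepends a batch size to per-sample dimensions and shows the inverse step, which is similar in spirit to what `debatchingOutput` is described as doing. It works on plain `std::vector<int>` shapes. The functions `withBatchDim` and `withoutBatchDim` are hypothetical and do not reflect how the library stores dimensions.

```cpp
#include <cassert>
#include <vector>

// Hypothetical helper: prepend the batch size to per-sample dimensions.
// Returns the dimensions unchanged when batch input is not required.
std::vector<int> withBatchDim(const std::vector<int>& dims,
                              int batchSize,
                              bool firstDimBatch) {
    if (!firstDimBatch) {
        return dims;
    }
    std::vector<int> full;
    full.reserve(dims.size() + 1);
    full.push_back(batchSize);
    full.insert(full.end(), dims.begin(), dims.end());
    return full;
}

// Hypothetical inverse: split the leading batch dimension off again.
// Precondition: fullDims is not empty.
std::vector<int> withoutBatchDim(const std::vector<int>& fullDims,
                                 int& batchSize) {
    assert(!fullDims.empty());
    batchSize = fullDims.front();
    return std::vector<int>(fullDims.begin() + 1, fullDims.end());
}
```

For example, per-sample dimensions of 3 x 224 x 224 with a batch of 8 become 8 x 3 x 224 x 224, and splitting them again yields the original shape plus the batch size.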

◆ getClassifyParams() [1/2]

|

inline, inherited |

Definition at line 71 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ getClassifyParams() [2/2]

|

inline, inherited |

Definition at line 71 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ getInputLayers() [1/2]

|

final, virtual, inherited |

Get the LayersTuple for input layers.

Implements nvdsinferserver::IBackend.

◆ getInputLayers() [2/2]

|

final, virtual, inherited |

Get the LayersTuple for input layers.

Implements nvdsinferserver::IBackend.

◆ getInputLayerSize() [1/2]

|

inline, final, virtual, inherited |

Returns the number of input layers for the model.

Implements nvdsinferserver::IBackend.

Definition at line 83 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ getInputLayerSize() [2/2]

|

inline, final, virtual, inherited |

Returns the number of input layers for the model.

Implements nvdsinferserver::IBackend.

Definition at line 83 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ getInputTensorOrder() [1/2]

|

inline, final, virtual, inherited |

Returns the input tensor order.

Implements nvdsinferserver::IBackend.

Definition at line 49 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ getInputTensorOrder() [2/2]

|

inline, final, virtual, inherited |

Returns the input tensor order.

Implements nvdsinferserver::IBackend.

Definition at line 49 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ getLayerInfo() [1/2]

|

final, virtual, inherited |

Retrieve the layer information from the layer name.

Implements nvdsinferserver::IBackend.

Referenced by nvdsinferserver::BaseBackend::mutableLayerInfo().

◆ getLayerInfo() [2/2]

|

final, virtual, inherited |

Retrieve the layer information from the layer name.

Implements nvdsinferserver::IBackend.

◆ getLayerSize() [1/2]

|

inline, final, virtual, inherited |

Returns the total number of layers (input + output) for the model.

Implements nvdsinferserver::IBackend.

Definition at line 75 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ getLayerSize() [2/2]

|

inline, final, virtual, inherited |

Returns the total number of layers (input + output) for the model.

Implements nvdsinferserver::IBackend.

Definition at line 75 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ getOutputLayers() [1/2]

|

final, virtual, inherited |

Get the LayersTuple for output layers.

Implements nvdsinferserver::IBackend.

◆ getOutputLayers() [2/2]

|

final, virtual, inherited |

Get the LayersTuple for output layers.

Implements nvdsinferserver::IBackend.

◆ inferenceDoneReorderLoop() [1/2]

|

protected, inherited |

Add input buffers to the output buffer list if required.

De-batch and run inference done callback.

- Parameters
  - [in] item: The reorder task.
- Returns
  - Boolean indicating success or failure.

◆ inferenceDoneReorderLoop() [2/2]

|

protected, inherited |

Add input buffers to the output buffer list if required.

De-batch and run inference done callback.

- Parameters
  - [in] item: The reorder task.
- Returns
  - Boolean indicating success or failure.

◆ initialize() [1/2]

|

override, virtual |

Implements nvdsinferserver::IBackend.

◆ initialize() [2/2]

|

override, virtual |

Reimplemented from nvdsinferserver::TrtISBackend.

◆ isFirstDimBatch() [1/2]

|

inline, final, virtual, inherited |

Returns boolean indicating if batched input is expected.

Implements nvdsinferserver::IBackend.

Definition at line 69 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ isFirstDimBatch() [2/2]

|

inline, final, virtual, inherited |

Returns boolean indicating if batched input is expected.

Implements nvdsinferserver::IBackend.

Definition at line 69 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ isNonBatching() [1/2]

|

inline, inherited |

Checks if the batch size indicates batched processing or not.

Definition at line 130 of file sources/libs/nvdsinferserver/infer_base_backend.h.

References INFER_EXPORT_API::isNonBatch(), and nvdsinferserver::BaseBackend::maxBatchSize().

◆ isNonBatching() [2/2]

|

inline, inherited |

Checks if the batch size indicates batched processing or not.

Definition at line 130 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

References INFER_EXPORT_API::isNonBatch(), and nvdsinferserver::BaseBackend::maxBatchSize().
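The reference notes that `isNonBatching()` relies on `isNonBatch()` and `maxBatchSize()`. A plausible reading, sketched below, is that a maximum batch size of 0 marks a model without a batch dimension, which matches the Triton convention for `max_batch_size`. The body of `isNonBatch` here is an assumption and `BackendSketch` is a hypothetical class; neither is taken from the library source.

```cpp
#include <cassert>
#include <cstdint>

// Assumed rule (not confirmed by this reference): a batch size of 0
// marks a model that has no batch dimension.
template <typename T>
inline bool isNonBatch(T batchSize) {
    return batchSize == 0;
}

// Hypothetical backend showing how the two accessors could combine.
class BackendSketch {
public:
    explicit BackendSketch(int32_t maxBatch) : maxBatchSize_(maxBatch) {}

    int32_t maxBatchSize() const { return maxBatchSize_; }
    bool isNonBatching() const { return isNonBatch(maxBatchSize()); }

private:
    int32_t maxBatchSize_;
};
```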

◆ maxBatchSize() [1/2]

|

inline, final, virtual, inherited |

Returns the maximum batch size set for the backend.

Implements nvdsinferserver::IBackend.

Definition at line 125 of file sources/libs/nvdsinferserver/infer_base_backend.h.

Referenced by nvdsinferserver::BaseBackend::isNonBatching().

◆ maxBatchSize() [2/2]

|

inlinefinalvirtualinherited |

Returns the maximum batch size set for the backend.

Implements nvdsinferserver::IBackend.

Definition at line 125 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ model() [1/2]

|

inlineinherited |

Definition at line 73 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ model() [2/2]

|

inlineinherited |

Definition at line 73 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ mutableLayerInfo() [1/2]

|

inlineprotectedinherited |

Get the mutable layer description structure for the layer name.

Definition at line 153 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

References nvdsinferserver::BaseBackend::getLayerInfo().

◆ mutableLayerInfo() [2/2]

|

inlineprotectedinherited |

Get the mutable layer description structure for the layer name.

Definition at line 153 of file sources/libs/nvdsinferserver/infer_base_backend.h.

References nvdsinferserver::BaseBackend::getLayerInfo().

◆ needKeepInputs() [1/2]

|

inlineprotectedinherited |

Check if the keep input flag is set.

Definition at line 167 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ needKeepInputs() [2/2]

|

inlineprotectedinherited |

Check if the keep input flag is set.

Definition at line 167 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ outputDevId() [1/2]

|

inlineinherited |

Definition at line 70 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ outputDevId() [2/2]

|

inlineinherited |

Definition at line 70 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ outputMemType() [1/2]

|

inlineinherited |

Definition at line 68 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ outputMemType() [2/2]

|

inlineinherited |

Definition at line 68 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ outputPoolSize() [1/2]

|

inlineinherited |

Definition at line 66 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ outputPoolSize() [2/2]

|

inlineinherited |

Definition at line 66 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ releaseResponseBuf() [1/2]

|

protectedinherited |

Release the output tensor buffer.

- Parameters

-

[in] tensor Name of the output tensor.

[in] mem Pointer to the memory buffer.

◆ releaseResponseBuf() [2/2]

|

protectedinherited |

Release the output tensor buffer.

- Parameters

-

[in] tensor Name of the output tensor.

[in] mem Pointer to the memory buffer.

◆ requestTritonOutputNames() [1/2]

|

overrideprotectedvirtual |

Reimplemented from nvdsinferserver::TrtISBackend.

◆ requestTritonOutputNames() [2/2]

|

overrideprotectedvirtual |

Reimplemented from nvdsinferserver::TrtISBackend.

◆ resetLayers() [1/2]

|

protectedinherited |

Set the layer description list of the backend.

This function sets the layer description list for the backend and updates the number of input layers and the layer-name-to-index map.

- Parameters

-

[in] layers The list of descriptions for all layers, input followed by output layers.

[in] inputSize The number of input layers in the list.
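The bookkeeping described above (store the list, record the input count, rebuild the name-to-index map) can be sketched as below. All type and member names here (`LayerDesc`, `LayerTableSketch`, `inputLayerCount`) are hypothetical and chosen for illustration; the actual `LayerDescription` structure and backend members may differ.

```cpp
#include <cstddef>
#include <string>
#include <unordered_map>
#include <utility>
#include <vector>

// Hypothetical, reduced layer description.
struct LayerDesc {
    std::string name;
    bool isInput = false;
};

// Hypothetical stand-in for the backend's layer bookkeeping.
class LayerTableSketch {
public:
    // Layers are expected as inputs first, then outputs.
    void resetLayers(std::vector<LayerDesc> layers, int inputSize) {
        m_Layers = std::move(layers);
        m_InputSize = inputSize;
        m_NameToIndex.clear();
        for (std::size_t i = 0; i < m_Layers.size(); ++i) {
            // The first inputSize entries are treated as input layers.
            m_Layers[i].isInput = static_cast<int>(i) < inputSize;
            m_NameToIndex[m_Layers[i].name] = static_cast<int>(i);
        }
    }

    // Look up a layer by name; returns nullptr if the name is unknown.
    const LayerDesc* getLayerInfo(const std::string& name) const {
        auto it = m_NameToIndex.find(name);
        return it == m_NameToIndex.end() ? nullptr : &m_Layers[it->second];
    }

    int inputLayerCount() const { return m_InputSize; }

private:
    std::vector<LayerDesc> m_Layers;
    std::unordered_map<std::string, int> m_NameToIndex;
    int m_InputSize = 0;
};
```

Rebuilding the map on every reset keeps name lookups consistent with the current list, at the cost of one pass over the layers.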

◆ resetLayers() [2/2]

|

protectedinherited |

Set the layer description list of the backend.

This function sets the layer description list for the backend and updates the number of input layers and the layer-name-to-index map.

- Parameters

-

[in] layers The list of descriptions for all layers, input followed by output layers.

[in] inputSize The number of input layers in the list.

◆ Run() [1/2]

|

protectedvirtualinherited |

Create an inference request and trigger asynchronous inference.

serverInferCompleted() is set as the callback function, which in turn calls asyncDone.

- Parameters

-

[in] inputs Array of input batch buffers.

[in] bufConsumed Callback function for releasing input buffer.

[in] asyncDone Callback function for processing response.

- Returns

Reimplemented in nvdsinferserver::TritonGrpcBackend.

◆ Run() [2/2]

|

protectedvirtualinherited |

Create an inference request and trigger asynchronous inference.

serverInferCompleted() is set as the callback function, which in turn calls asyncDone.

- Parameters

-

[in] inputs Array of input batch buffers.

[in] bufConsumed Callback function for releasing input buffer.

[in] asyncDone Callback function for processing response.

- Returns

Reimplemented in nvdsinferserver::TritonGrpcBackend.

◆ server() [1/2]

|

inlineprotectedinherited |

Get the Triton server handle.

Definition at line 164 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ server() [2/2]

|

inlineprotectedinherited |

Get the Triton server handle.

Definition at line 164 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ serverInferCompleted() [1/2]

|

protectedinherited |

Call the inputs consumed function, parse the inference response to form the array of output batch buffers, and call asyncDone on that array.

- Parameters

-

[in] request Pointer to the inference request.

[in] uniqResponse Pointer to the inference response from the server.

[in] inputsConsumed Callback function for releasing input buffer.

[in] asyncDone Callback function for processing response.
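The order of operations described for this completion handler (release the inputs, build the output array, then invoke `asyncDone`) can be sketched as below. This is a simplified, synchronous illustration under stated assumptions: `Batch`, `Status`, `Response` and `inferCompletedSketch` are hypothetical stand-ins for `SharedBatchArray`, `NvDsInferStatus`, the Triton response and the real member function, and "parsing" is reduced to concatenating buffers.

```cpp
#include <functional>
#include <utility>
#include <vector>

using Batch = std::vector<float>;      // hypothetical stand-in for SharedBatchArray
enum class Status { Success, Error };  // hypothetical stand-in for NvDsInferStatus
using InputsConsumed = std::function<void(const Batch&)>;
using InferenceDone = std::function<void(Status, Batch)>;

// Hypothetical response type: one flat buffer per output tensor.
struct Response {
    bool ok = true;
    std::vector<Batch> tensors;
};

void inferCompletedSketch(const Batch& inputs, const Response& response,
                          const InputsConsumed& inputsConsumed,
                          const InferenceDone& asyncDone) {
    // 1. The inputs are no longer needed once the response has arrived.
    inputsConsumed(inputs);

    // 2. "Parse" the response: here, concatenate all output tensors.
    Batch outputs;
    for (const Batch& tensor : response.tensors) {
        outputs.insert(outputs.end(), tensor.begin(), tensor.end());
    }

    // 3. Hand the outputs, with the resulting status, to the completion callback.
    asyncDone(response.ok ? Status::Success : Status::Error, std::move(outputs));
}
```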

◆ serverInferCompleted() [2/2]

|

protectedinherited |

Call the inputs consumed function, parse the inference response to form the array of output batch buffers, and call asyncDone on that array.

- Parameters

-

[in] request Pointer to the inference request.

[in] uniqResponse Pointer to the inference response from the server.

[in] inputsConsumed Callback function for releasing input buffer.

[in] asyncDone Callback function for processing response.

◆ setAllocator() [1/2]

|

inlineprotectedinherited |

Set the output tensor allocator.

Definition at line 148 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ setAllocator() [2/2]

|

inlineprotectedinherited |

Set the output tensor allocator.

Definition at line 148 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ setFirstDimBatch() [1/2]

|

inlineinherited |

Set the flag indicating that it is a batch input.

Definition at line 64 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ setFirstDimBatch() [2/2]

|

inlineinherited |

Set the flag indicating that it is a batch input.

Definition at line 64 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ setInputTensorOrder() [1/2]

|

inlineprotectedinherited |

Set the tensor order for the input layers.

Definition at line 162 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ setInputTensorOrder() [2/2]

|

inlineprotectedinherited |

Set the tensor order for the input layers.

Definition at line 162 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ setKeepInputs() [1/2]

|

inlineinherited |

Set the flag indicating whether to keep input buffers.

Definition at line 118 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ setKeepInputs() [2/2]

|

inlineinherited |

Set the flag indicating whether to keep input buffers.

Definition at line 118 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ setMaxBatchSize() [1/2]

|

inlineprotectedinherited |

Set the maximum batch size to be used for the backend.

Definition at line 174 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ setMaxBatchSize() [2/2]

|

inlineprotectedinherited |

Set the maximum batch size to be used for the backend.

Definition at line 174 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ setOutputDevId() [1/2]

|

inlineinherited |

Definition at line 69 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ setOutputDevId() [2/2]

|

inlineinherited |

Definition at line 69 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ setOutputMemType() [1/2]

|

inlineinherited |

Definition at line 67 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ setOutputMemType() [2/2]

|

inlineinherited |

Definition at line 67 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ setOutputPoolSize() [1/2]

|

inlineinherited |

Helper function to access the member variables.

Definition at line 65 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ setOutputPoolSize() [2/2]

|

inlineinherited |

Helper function to access the member variables.

Definition at line 65 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ setOutputs() [1/2]

|

inline |

Definition at line 26 of file sources/libs/nvdsinferserver/infer_simple_runtime.h.

◆ setOutputs() [2/2]

|

inline |

Definition at line 26 of file 9.0/sources/libs/nvdsinferserver/infer_simple_runtime.h.

◆ setTensorMaxBytes() [1/2]

|

inlineinherited |

Set the maximum size for the tensor. The larger of the existing size and the new input size is used.

The size is rounded up to INFER_MEM_ALIGNMENT bytes.

- Parameters

-

name Name of the tensor.

maxBytes New maximum number of bytes for the buffer.

Definition at line 110 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

References INFER_MEM_ALIGNMENT, and INFER_ROUND_UP.
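The "keep the larger size, rounded up to the alignment" behavior can be sketched as follows. This is an illustration under assumptions: the alignment value `kAlignment` (1024 here) and the helper `roundUp` are hypothetical stand-ins for `INFER_MEM_ALIGNMENT` and `INFER_ROUND_UP`, whose actual definitions are not given on this page, and `TensorSizeSketch` is not the library's class.

```cpp
#include <algorithm>
#include <cstddef>
#include <string>
#include <unordered_map>

// Assumed alignment; the real value of INFER_MEM_ALIGNMENT may differ.
constexpr std::size_t kAlignment = 1024;

// Round value up to the next multiple of align (align must be a power of two).
constexpr std::size_t roundUp(std::size_t value, std::size_t align) {
    return (value + align - 1) & ~(align - 1);
}

// Hypothetical stand-in that tracks the maximum buffer size per tensor.
class TensorSizeSketch {
public:
    void setTensorMaxBytes(const std::string& name, std::size_t maxBytes) {
        const std::size_t aligned = roundUp(maxBytes, kAlignment);
        std::size_t& current = m_MaxBytes[name];  // 0 when first seen
        current = std::max(current, aligned);     // never shrinks
    }

    // Returns 0 for tensors that have no recorded size.
    std::size_t maxBytes(const std::string& name) const {
        auto it = m_MaxBytes.find(name);
        return it == m_MaxBytes.end() ? 0 : it->second;
    }

private:
    std::unordered_map<std::string, std::size_t> m_MaxBytes;
};
```

Because the stored size only grows, calling the setter with a smaller value leaves the existing limit in place.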

◆ setTensorMaxBytes() [2/2]

|

inlineinherited |

Set the maximum size for the tensor. The larger of the existing size and the new input size is used.

The size is rounded up to INFER_MEM_ALIGNMENT bytes.

- Parameters

-

name Name of the tensor.

maxBytes New maximum number of bytes for the buffer.

Definition at line 110 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

References INFER_MEM_ALIGNMENT, and INFER_ROUND_UP.

◆ setUniqueId() [1/2]

|

inlineinherited |

Set the unique ID for the object instance.

Definition at line 54 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ setUniqueId() [2/2]

|

inlineinherited |

Set the unique ID for the object instance.

Definition at line 54 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ setupLayersInfo() [1/2]

|

protectedvirtualinherited |

Get the model configuration from the server and populate layer information.

Set maximum batch size as specified in configuration settings.

- Returns

- NVDSINFER_SUCCESS or NVDSINFER_TRITON_ERROR.

Reimplemented in nvdsinferserver::TritonGrpcBackend.

◆ setupLayersInfo() [2/2]

|

protectedvirtualinherited |

Get the model configuration from the server and populate layer information.

Set maximum batch size as specified in configuration settings.

- Returns

- NVDSINFER_SUCCESS or NVDSINFER_TRITON_ERROR.

Reimplemented in nvdsinferserver::TritonGrpcBackend.

◆ setupReorderThread() [1/2]

|

protectedinherited |

Create a loop thread that calls inferenceDoneReorderLoop on the queued items.

- Returns

- NVDSINFER_SUCCESS or NVDSINFER_TRITON_ERROR.

◆ setupReorderThread() [2/2]

|

protectedinherited |

Create a loop thread that calls inferenceDoneReorderLoop on the queued items.

- Returns

- NVDSINFER_SUCCESS or NVDSINFER_TRITON_ERROR.

◆ specifyInputDims() [1/2]

|

overrideprotectedvirtual |

Implements nvdsinferserver::IBackend.

◆ specifyInputDims() [2/2]

|

overrideprotectedvirtual |

Reimplemented from nvdsinferserver::TrtISBackend.

◆ uniqueId() [1/2]

|

inlineinherited |

Get the unique ID of the object instance.

Definition at line 59 of file sources/libs/nvdsinferserver/infer_base_backend.h.

◆ uniqueId() [2/2]

|

inlineinherited |

Get the unique ID of the object instance.

Definition at line 59 of file 9.0/sources/libs/nvdsinferserver/infer_base_backend.h.

◆ version() [1/2]

|

inlineinherited |

Definition at line 74 of file sources/libs/nvdsinferserver/infer_trtis_backend.h.

◆ version() [2/2]

|

inlineinherited |

Definition at line 74 of file 9.0/sources/libs/nvdsinferserver/infer_trtis_backend.h.

The documentation for this class was generated from the following file: