Introduction

This is the User Manual for Ethernet adapter cards based on the ConnectX®-6 integrated circuit device. ConnectX®-6 connectivity provides the highest-performing, low-latency, and most flexible interconnect solution for PCI Express Gen 3.0/4.0 servers used in enterprise data centers and high-performance computing environments.

ConnectX-6 Virtual Protocol Interconnect® adapter cards provide up to two ports of 200Gb/s for InfiniBand and Ethernet connectivity, sub-600ns latency, and 215 million messages per second, enabling the highest-performance and most flexible solution for the most demanding High-Performance Computing (HPC), storage, and data center applications. ConnectX-6 is a groundbreaking addition to the NVIDIA ConnectX series of industry-leading adapter cards. In addition to all the existing innovative features of past ConnectX versions, ConnectX-6 offers a number of enhancements that further improve the performance and scalability of data center applications. Please refer to Features and Benefits for more details.

Please make sure to install the ConnectX-6 cards in a PCIe slot that is capable of supplying the required power as stated in Specifications.

The following section provides the ordering part number, port speed, number of ports, and PCI Express speed.

ConnectX-6 PCIe x16 Card

In a PCIe x16 slot, ConnectX-6 supports up to 100GbE with PCIe Gen 3.0 or Gen 4.0, and up to 200GbE with PCIe Gen 4.0.

| Part Number | MCX613106A-VDAT |
| --- | --- |
| Form Factor/Dimensions | PCIe Half Height, Half Length / 167.65mm x 68.90mm |
| Data Transmission Rate | Ethernet: 10/25/40/50/100/200 Gb/s |
| Network Connector Type | Dual-port QSFP56 |
| PCIe x16 through Edge Connector | PCIe Gen 3.0 / 4.0 SERDES @ 8.0GT/s / 16.0GT/s |
| RoHS | RoHS Compliant |
| Adapter IC Part Number | MT28908A0-XCCF-HVM |

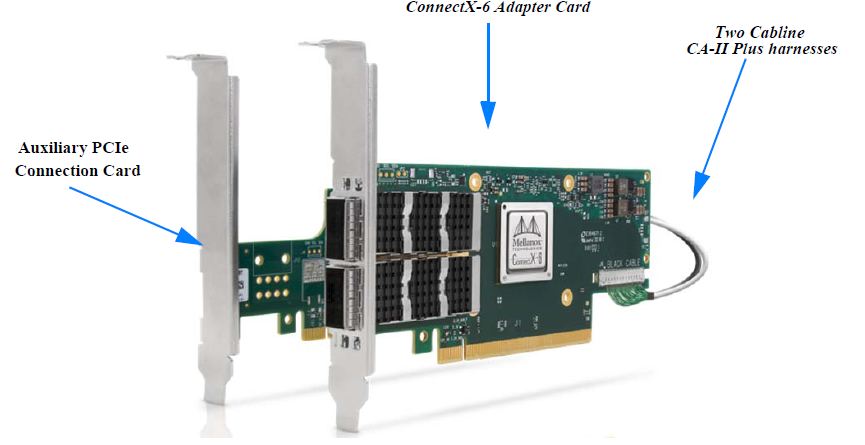

ConnectX-6 Socket Direct Cards (2x PCIe x16)

To obtain 200Gb/s speed, NVIDIA offers ConnectX-6 Socket Direct cards, which enable 200Gb/s connectivity even for servers with only PCIe Gen 3.0 capability. The adapter’s 32-lane PCIe bus is split into two 16-lane buses, with one bus accessible through a PCIe x16 edge connector and the other through an x16 Auxiliary PCIe Connection card. The two cards should be installed into two PCIe x16 slots and connected using two Cabline CA-II Plus harnesses, as shown in the figure below.

ConnectX-6 Ethernet Socket Direct Adapter Cards

| Part Number | MCX614105A-VCAT | MCX614106A-VCAT | MCX614106A-CCAT |
| --- | --- | --- | --- |
| Ethernet Data Rate | 10/25/40/50/100/200 GbE | 10/25/40/50/100/200 GbE | 10/25/40/50/100 GbE |
| Network Connector Type | Single-port QSFP56 | Dual-port QSFP56 | Dual-port QSFP56 |

The following specifications apply to all three part numbers:

| Specification | Value |
| --- | --- |
| Form Factor | PCIe Full Height, ¾ Length |
| Adapter Card Size | 6.6 in. x 2.71 in. (167.65mm x 68.90mm) |
| Auxiliary PCIe Connection Card Size | 5.09 in. x 2.32 in. (129.30mm x 59.00mm) |
| Cabline CA-II Plus Harness Length | 35cm |
| PCI Express Connector: Adapter Card Edge Connector | PCIe Gen 3.0/4.0 SERDES @ 8.0GT/s / 16.0GT/s x16 |
| PCI Express Connector: Auxiliary Connection Card | PCIe Gen 3.0 SERDES @ 8.0GT/s x16 |
| RoHS | RoHS Compliant |
| IC Part Number | MT28908A0-XCCF-HVM |

For more detailed information see Specifications.
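
The slot requirements described above follow from PCIe link arithmetic. The sketch below is only an illustration and is not part of the product specification: it estimates raw one-direction link bandwidth from the per-lane rates (8.0 GT/s for Gen 3.0, 16.0 GT/s for Gen 4.0) and the 128b/130b line encoding both generations use. It ignores packet-level protocol overhead, so usable throughput is somewhat lower. The function and constant names are hypothetical.

```python
"""Rough PCIe link-bandwidth check (illustrative; names are hypothetical)."""

# Per-lane signaling rate in GT/s for each PCIe generation.
GT_PER_SECOND = {"3.0": 8.0, "4.0": 16.0}

# PCIe Gen 3.0 and Gen 4.0 both use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def link_gbps(gen: str, lanes: int = 16, links: int = 1) -> float:
    """Raw one-direction bandwidth in Gb/s, before packet-level overhead."""
    return GT_PER_SECOND[gen] * ENCODING_EFFICIENCY * lanes * links


def can_feed(port_gbps: float, gen: str, lanes: int = 16, links: int = 1) -> bool:
    """True if the raw PCIe bandwidth is at least the Ethernet port rate."""
    return link_gbps(gen, lanes, links) >= port_gbps


if __name__ == "__main__":
    configs = [
        ("Gen 3.0 x16", "3.0", 1),
        ("Gen 4.0 x16", "4.0", 1),
        ("Socket Direct, 2x Gen 3.0 x16", "3.0", 2),
    ]
    for label, gen, links in configs:
        bw = link_gbps(gen, links=links)
        fits = can_feed(200, gen, links=links)
        print(f"{label}: {bw:.1f} Gb/s raw, 200GbE fits: {fits}")
```

A single Gen 3.0 x16 link provides roughly 126 Gb/s of raw bandwidth, enough for 100GbE but not 200GbE. A Gen 4.0 x16 link, or two Gen 3.0 x16 links as in the Socket Direct configuration, provides roughly 252 Gb/s.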

Features and Benefits

This section describes hardware features and capabilities. Please refer to the relevant driver and/or firmware release notes for feature availability.

| Feature | Description |
| --- | --- |
| PCI Express (PCIe) | Uses the following 2x PCIe x16 interfaces: |
| Up to 200 Gigabit Ethernet | NVIDIA adapters comply with the following IEEE 802.3 standards: 200GbE / 100GbE / 50GbE / 40GbE / 25GbE / 10GbE / 1GbE • IEEE 802.3bj, 802.3bm 100 Gigabit Ethernet • IEEE 802.3by, Ethernet Consortium 25, 50 Gigabit Ethernet, supporting all FEC modes • IEEE 802.3ba 40 Gigabit Ethernet • IEEE 802.3by 25 Gigabit Ethernet • IEEE 802.3ae 10 Gigabit Ethernet • IEEE 802.3ap based auto-negotiation and KR startup • IEEE 802.3ad, 802.1AX Link Aggregation • IEEE 802.1Q, 802.1P VLAN tags and priority • IEEE 802.1Qau (QCN) Congestion Notification • IEEE 802.1Qaz (ETS) • IEEE 802.1Qbb (PFC) • IEEE 802.1Qbg • IEEE 1588v2 • Jumbo frame support (9.6KB) |
| Memory | |
| Overlay Networks | To better scale their networks, data center operators often create overlay networks that carry traffic from individual virtual machines over logical tunnels in encapsulated formats such as NVGRE and VXLAN. While this solves network scalability issues, it hides the TCP packet from the hardware offloading engines, placing higher loads on the host CPU. ConnectX-6 addresses this by providing advanced NVGRE and VXLAN hardware offloading engines that encapsulate and decapsulate the overlay protocol. |
| RDMA and RDMA over Converged Ethernet (RoCE) | ConnectX-6, utilizing IBTA RDMA (Remote Direct Memory Access) and RoCE (RDMA over Converged Ethernet) technology, delivers low latency and high performance over Ethernet networks. Leveraging data center bridging (DCB) capabilities as well as ConnectX-6 advanced congestion control hardware mechanisms, RoCE provides efficient low-latency RDMA services over Layer 2 and Layer 3 networks. |
| NVIDIA PeerDirect™ | PeerDirect™ communication provides high-efficiency RDMA access by eliminating unnecessary internal data copies between components on the PCIe bus (for example, from GPU to CPU), and therefore significantly reduces application run time. ConnectX-6 advanced acceleration technology enables higher cluster efficiency and scalability to tens of thousands of nodes. |
| CPU Offload | Adapter functionality reduces CPU overhead, leaving more CPU resources available for computation tasks. • Open vSwitch (OVS) offload using ASAP²™ • Flexible match-action flow tables • Tunneling encapsulation/decapsulation |
| Quality of Service (QoS) | Support for port-based Quality of Service, enabling various application requirements for latency and SLA. |
| Hardware-based I/O Virtualization | ConnectX-6 provides dedicated adapter resources and guaranteed isolation and protection for virtual machines within the server. |
| Storage Acceleration | A consolidated compute and storage network achieves significant cost-performance advantages over multi-fabric networks. Standard block and file access protocols can leverage: • RDMA for high-performance storage access • NVMe over Fabric offloads for target machine • Erasure Coding • T10-DIF Signature Handover |
| SR-IOV | ConnectX-6 SR-IOV technology provides dedicated adapter resources and guaranteed isolation and protection for virtual machines (VMs) within the server. |
| High-Performance Accelerations | • Tag Matching and Rendezvous Offloads • Adaptive Routing on Reliable Transport • Burst Buffer Offloads for Background Checkpointing |
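
To make the Overlay Networks row more concrete, the sketch below shows what VXLAN encapsulation adds in front of a virtual machine's original frame, following the header layout in RFC 7348. It is a software illustration only and does not represent the adapter's offload engine or any NVIDIA API. The function names are hypothetical, and the overhead figure assumes an untagged outer Ethernet header and an outer IPv4 header without options.

```python
import struct

VXLAN_UDP_PORT = 4789   # IANA-assigned UDP destination port for VXLAN
VNI_VALID_FLAG = 0x08   # "I" flag: the VNI field carries a valid value

# Bytes placed in front of the original (inner) Ethernet frame, assuming an
# untagged outer Ethernet header and an outer IPv4 header without options.
OUTER_ETHERNET, OUTER_IPV4, OUTER_UDP, VXLAN_HEADER = 14, 20, 8, 8
ENCAP_OVERHEAD = OUTER_ETHERNET + OUTER_IPV4 + OUTER_UDP + VXLAN_HEADER


def build_vxlan_header(vni: int) -> bytes:
    """Pack the 8-byte VXLAN header: flags, reserved bits, 24-bit VNI."""
    if not 0 <= vni < (1 << 24):
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: 8 flag bits + 24 reserved bits. Word 2: 24-bit VNI + 8 reserved.
    return struct.pack("!II", VNI_VALID_FLAG << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Recover the VNI from the first 8 bytes of a VXLAN payload."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VNI_VALID_FLAG:
        raise ValueError("VNI-valid flag is not set")
    return vni_word >> 8


def encapsulate(inner_frame: bytes, vni: int) -> bytes:
    """Return the outer UDP payload: VXLAN header followed by the inner frame."""
    return build_vxlan_header(vni) + inner_frame
```

Because the inner TCP segment sits behind these outer headers, a NIC that does not parse VXLAN cannot apply its usual TCP offloads to it, which is the problem the hardware offload engines described in the table address.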

Operating Systems/Distributions

• RHEL/CentOS
• Windows
• FreeBSD
• VMware
• OpenFabrics Enterprise Distribution (OFED)
• OpenFabrics Windows Distribution (WinOF-2)

Connectivity

• Interoperable with 1/10/25/40/50/100/200 Gb/s Ethernet switches
• Passive copper cable with ESD protection
• Powered connectors for optical and active cable support

Manageability

ConnectX-6 technology maintains support for manageability through a BMC. A ConnectX-6 PCIe stand-up adapter can be connected to a BMC using MCTP over SMBus or MCTP over PCIe protocols, as if it were a standard NVIDIA PCIe stand-up adapter. To configure the adapter for the specific manageability solution in use by the server, please contact NVIDIA Support.