Advanced GPU configuration (Optional)

Added in version 2.0.

Compute workloads can benefit from running on separate GPU partitions. GPU partitioning lets a single GPU be shared by small, medium, and large workloads, which makes it a valid option for Deep Learning workloads. For example, Deep Learning training and inference workflows may use smaller datasets yet still depend heavily on the size of the data and model, so users may need to decrease batch sizes.

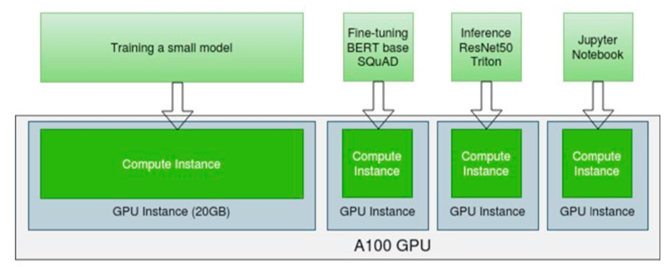

The accompanying graphic illustrates a multi-tenant GPU partitioning use case in which multiple users share a single A100 (40GB). In this use case, a single A100 can serve multiple workloads, such as Deep Learning training, fine-tuning, inference, Jupyter Notebook sessions, and debugging.

GPUs are partitioned using Multi-Instance GPU (MIG) spatial partitioning. More details on MIG can be found in the NVIDIA Multi-Instance GPU User Guide.
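
As a rough illustration of the sharing scenario described above, the following Python sketch checks whether a set of requested MIG profiles stays within the totals of a single A100 (40GB). It assumes the GPU exposes 7 compute slices and 40 GB of memory, and that profile names follow the `<N>g.<M>gb` pattern (for example, `3g.20gb`). The helper names `parse_profile` and `fits_on_gpu` and the workload-to-profile mapping are hypothetical and chosen only for this example. This is a simple capacity check, not a placement validator: actual MIG placement rules are stricter, so refer to the NVIDIA Multi-Instance GPU User Guide for the supported combinations.

```python
import re

# Assumed totals for a single A100 (40GB) in MIG mode.
TOTAL_COMPUTE_SLICES = 7
TOTAL_MEMORY_GB = 40


def parse_profile(name):
    """Split a MIG profile name such as '3g.20gb' into (compute_slices, memory_gb)."""
    match = re.fullmatch(r"(\d+)g\.(\d+)gb", name)
    if match is None:
        raise ValueError(f"unrecognized MIG profile name: {name!r}")
    return int(match.group(1)), int(match.group(2))


def fits_on_gpu(profiles):
    """Return True if the requested profiles stay within the assumed GPU totals.

    This only sums compute slices and memory; it does not model the
    placement constraints that the MIG driver enforces.
    """
    compute = 0
    memory_gb = 0
    for name in profiles:
        slices, gigabytes = parse_profile(name)
        compute += slices
        memory_gb += gigabytes
    return compute <= TOTAL_COMPUTE_SLICES and memory_gb <= TOTAL_MEMORY_GB


# Hypothetical mapping of tenant workloads to MIG profiles.
requests = {
    "training": "3g.20gb",
    "fine-tuning": "2g.10gb",
    "inference": "1g.5gb",
    "notebook": "1g.5gb",
}

if __name__ == "__main__":
    print(fits_on_gpu(requests.values()))
```

A check like this can help when deciding how to size partitions for a mix of tenants before creating the instances on the GPU itself.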