Overview

Added in version 3.0.

This document provides high-level guidance for running NVIDIA AI Enterprise with GPUDirect® Storage (GDS). It focuses on deploying GDS on bare metal servers with upstream Kubernetes.

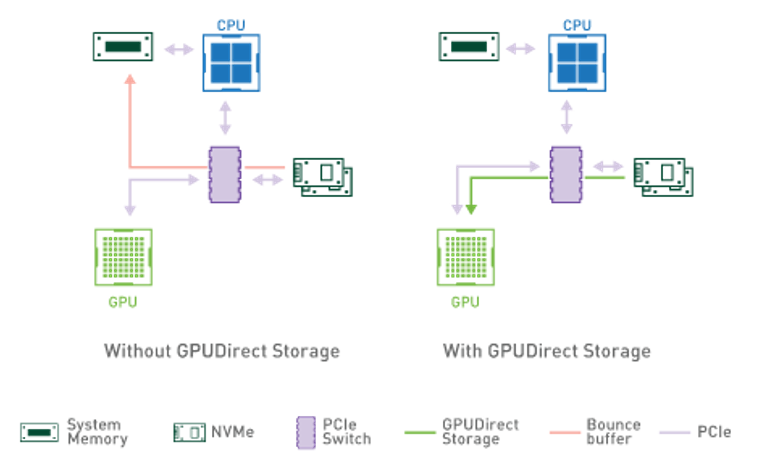

GPUDirect® Storage (GDS) creates a direct data path between GPU memory and local or remote storage, such as NVMe or NVMe over Fabrics (NVMe-oF). It enables a direct memory access (DMA) engine near the network adapter or storage to move data into or out of GPU memory without burdening the CPU and without the extra copies through a bounce buffer in CPU memory.
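The difference between the two data paths can be illustrated with a small, purely conceptual Python model. It does not call GDS or any NVIDIA API. The names `TransferStats`, `read_via_bounce_buffer`, and `read_direct` are hypothetical, and ordinary `bytearray` objects stand in for CPU and GPU memory. It only counts how many copies each path makes and how many bytes are staged in host memory.

```python
from dataclasses import dataclass


@dataclass
class TransferStats:
    copies: int = 0      # buffer-to-buffer copies performed
    host_bytes: int = 0  # bytes staged in (simulated) CPU memory


def read_via_bounce_buffer(storage: bytes, gpu_mem: bytearray,
                           stats: TransferStats) -> None:
    """Conventional path: storage -> CPU bounce buffer -> GPU memory."""
    if len(gpu_mem) < len(storage):
        raise ValueError("GPU buffer is too small")
    bounce = bytearray(len(storage))
    bounce[:] = storage                  # copy 1: storage into host memory
    stats.copies += 1
    stats.host_bytes += len(bounce)
    gpu_mem[:len(bounce)] = bounce       # copy 2: host memory into GPU memory
    stats.copies += 1


def read_direct(storage: bytes, gpu_mem: bytearray,
                stats: TransferStats) -> None:
    """Direct path (modelled): storage -> GPU memory, no host staging."""
    if len(gpu_mem) < len(storage):
        raise ValueError("GPU buffer is too small")
    gpu_mem[:len(storage)] = storage     # single DMA-style transfer
    stats.copies += 1


if __name__ == "__main__":
    payload = bytes(range(256)) * 4      # stand-in for 1 KiB read from storage
    for reader in (read_via_bounce_buffer, read_direct):
        gpu, stats = bytearray(len(payload)), TransferStats()
        reader(payload, gpu, stats)
        print(reader.__name__, stats)
```

Both functions leave the same data in the simulated GPU buffer. The modelled direct path does so with one copy and no bytes staged in host memory, which is the saving the paragraph above describes.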

NVIDIA AI Enterprise includes everything needed to use GPUDirect Storage, so no additional software is required. NVIDIA AI Enterprise 3.0 supports GPUDirect Storage version 1.4 or later. Refer to the NVIDIA GPUDirect Storage Release Notes for the latest release information, and to the GPUDirect Storage Product Documentation for the latest developer and user information.